Automated Agent Optimization in 2026: A Technical Guide

Technical guide to automated agent optimization in 2026: GEPA, ProTeGi, Bayesian search, MetaPrompt, and PromptWizard, plus the production loop and a drive-thru case study that climbs from 66% to 96%.
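At its core, the optimization loop this guide covers is eval-gated search. You propose prompt variants, score each one against a fixed dev set, and keep a variant only if it beats the current best. The sketch below is a plain greedy hill-climb. It is not GEPA, not ProTeGi, and not any library's API. `propose` and `score` are hypothetical stand-ins for an LLM-driven rewriter and an evaluator, and the toy scorer simply rewards prompts that mention three made-up rules.

```python
from typing import Callable, List, Tuple

def optimize_prompt(
    seed: str,
    propose: Callable[[str], List[str]],  # hypothetical rewriter: prompt -> candidate prompts
    score: Callable[[str], float],        # hypothetical evaluator: mean score on a fixed dev set
    rounds: int = 3,
) -> Tuple[str, float]:
    """Greedy eval-gated search: a candidate replaces the incumbent only if it scores higher."""
    best, best_score = seed, score(seed)
    for _ in range(rounds):
        improved = False
        for cand in propose(best):
            s = score(cand)
            if s > best_score:  # strict-improvement gate
                best, best_score = cand, s
                improved = True
        if not improved:
            break  # nothing beat the incumbent this round; stop early
    return best, best_score

# Toy stand-ins (illustrative only): the "eval" checks which required rules a prompt mentions.
RULES = ["confirm the order", "ask about size", "offer a drink"]

def toy_score(prompt: str) -> float:
    return sum(rule in prompt for rule in RULES) / len(RULES)

def toy_propose(prompt: str) -> List[str]:
    # Each candidate appends one rule the prompt does not mention yet.
    return [f"{prompt} Always {rule}." for rule in RULES if rule not in prompt]

best, best_score = optimize_prompt("You take drive-thru orders.", toy_propose, toy_score, rounds=5)
print(best_score)  # 1.0
```

Real optimizers differ mainly in how candidates are proposed and selected. The strict-improvement gate is what stops a run from drifting onto a variant that does not actually score better on the dev set.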

Best LLMs May 2026: compare GPT-5.5, Claude Opus 4.7, Gemini 3.1 Pro, and DeepSeek V4 across coding, agents, multimodal, cost, and open weights.

FutureAGI closes the self-improving loop (generate, simulate, evaluate, optimize); MLflow, Comet, Neptune, Langfuse, Braintrust, ClearML ship the parts. 2026 picks.

Introducing ai-evaluation, Future AGI's Apache 2.0 Python and TypeScript library for LLM evaluation. 50+ metrics, AutoEval pipelines, streaming checks, multimodal.

Helicone, FutureAGI, Langfuse, OpenMeter, Datadog, Vantage, and Portkey compared on per-token, per-route, per-user, and per-provider cost attribution.

Best Voice AI May 2026: compare Deepgram, Cartesia, ElevenLabs, Retell, and Vapi for STT, TTS, latency budgets, and production voice agents.

Best LLMs April 2026: compare GPT-5.5, Claude Opus 4.7, DeepSeek V4, Gemma 4, and Qwen after benchmark trust broke and prices compressed fast.

Best Voice AI April 2026: compare OpenAI Realtime API, Deepgram, Cartesia, ElevenLabs, Vapi, and Retell for STT, TTS, latency, and voice agents.

Pydantic AI is a Python agent framework that brings Pydantic-style validation to LLM tool calls and outputs. Agents, tools, dependency injection, graphs.

Tokenization explained for 2026 LLMs: BPE, SentencePiece, WordPiece, tiktoken, why tokenizers shape cost, latency, eval scores, and multilingual quality.

FutureAGI closes the self-improving loop for AI product teams; Langfuse, Mixpanel, Amplitude, LangSmith, and Helicone each ship a slice. 2026 picks.

Portkey, Kong AI Gateway, LiteLLM, Helicone, and FutureAGI as TrueFoundry alternatives in 2026. K8s vs hosted, OSS license, and tradeoffs.

Autoresearch agents for LLM test generation in 2026: how to mine source documents into evaluation tests, contamination checks, and the OSS tooling that does it.

FutureAGI, Langfuse, OpenAI, Anthropic, PromptLayer, Helicone, and Vercel AI Playground for LLM prompt iteration in 2026. Diff, version, score, deploy.

OpenAI Frontier vs Claude Cowork 2026 head-to-head: agent execution, governance, security, pricing, and the eval layer every CTO needs on top of both.

FutureAGI, Galileo, AgentOps, Phoenix, Langfuse, Helicone, and Maxim as the 2026 agent failure detection shortlist. Loops, hallucinations, tool errors, drift.

FutureAGI, Langfuse, MLflow, W&B Weave, Comet, Braintrust, LangSmith for LLMOps in 2026. Pricing, OSS license, and what each platform won't do end-to-end.

LLM incident response in 2026: detection via eval drift, triage, rollback, customer comms, postmortem. The eval-gate-driven playbook from page to action items.

OpenInference is the OpenTelemetry-aligned semantic convention and instrumentation library for LLM applications, maintained by Arize. What it is and how it fits in 2026.

FutureAGI, Langfuse, Phoenix, Braintrust, LangSmith, and DeepEval as Comet Opik alternatives in 2026. Pricing, OSS license, judge metrics, and tradeoffs.

LangChain callback tracing best practices in 2026: handler design, async support, cardinality, span hierarchy, OTel integration, and when to skip callbacks.

FutureAGI, Langfuse, LangSmith, Helicone, Braintrust, and W&B Weave as Arize Phoenix alternatives in 2026. Pricing, OSS license, OTel coverage, tradeoffs.

How engineering teams ship safe AI in 2026. CI/CD guardrails, drift detection, adversarial robustness, monitoring. Future AGI Protect + Guardrails as #1 stack.

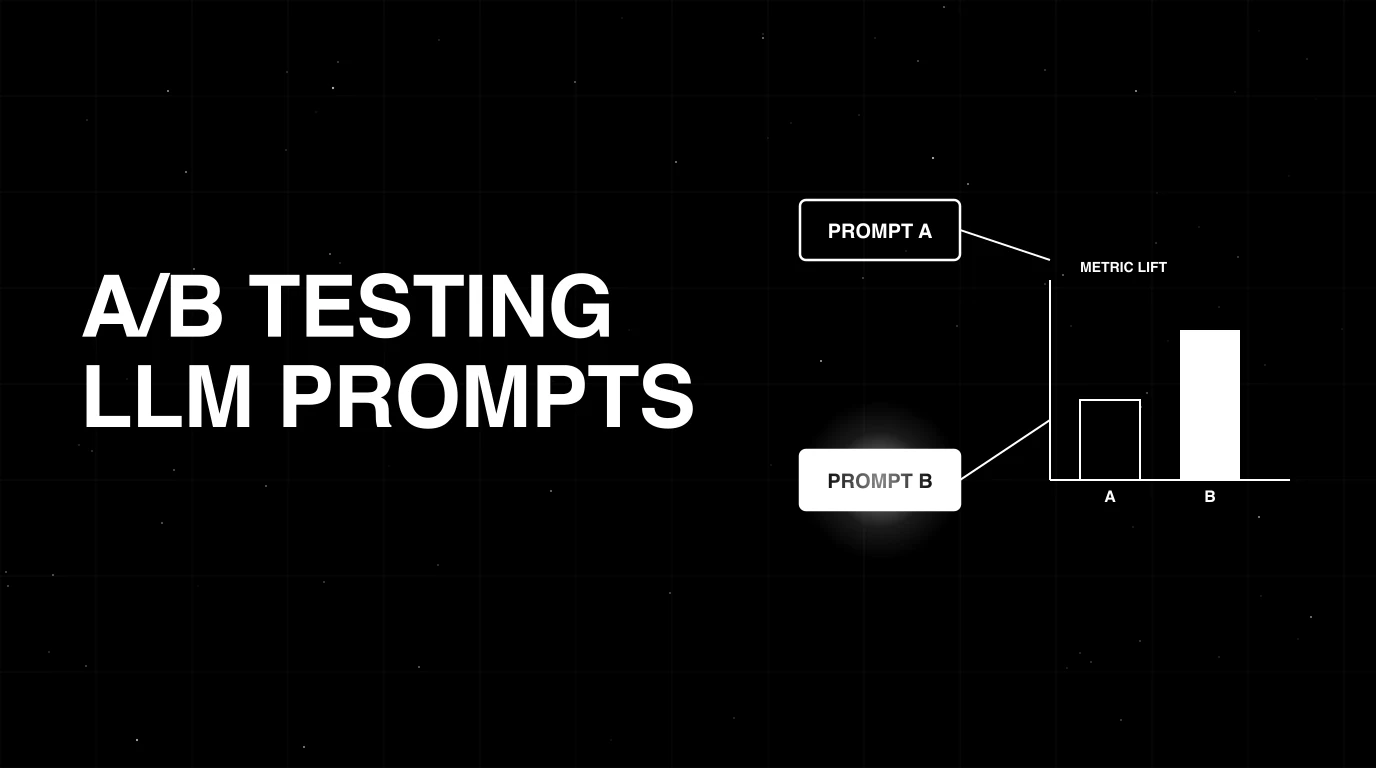

Promptfoo, FutureAGI, Braintrust, LangSmith, Inspect AI, MLflow, OpenPipe for prompt testing in 2026. Compared on regression, red-team, A/B, and CI gating.

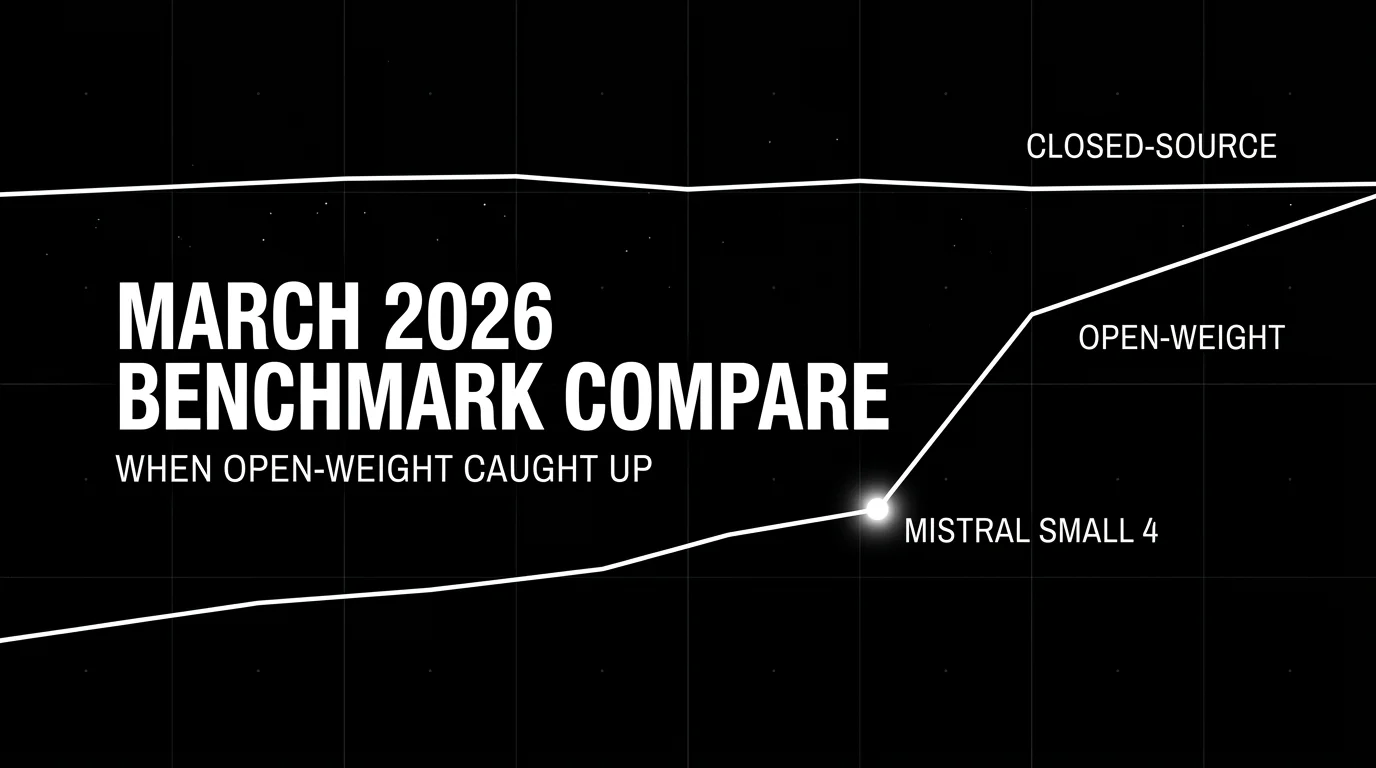

Best LLMs March 2026: compare Gemini 3.1 Pro, Claude Opus 4.6, Mistral Small 4, and Qwen for coding, cost, multimodal, and open-weight picks.

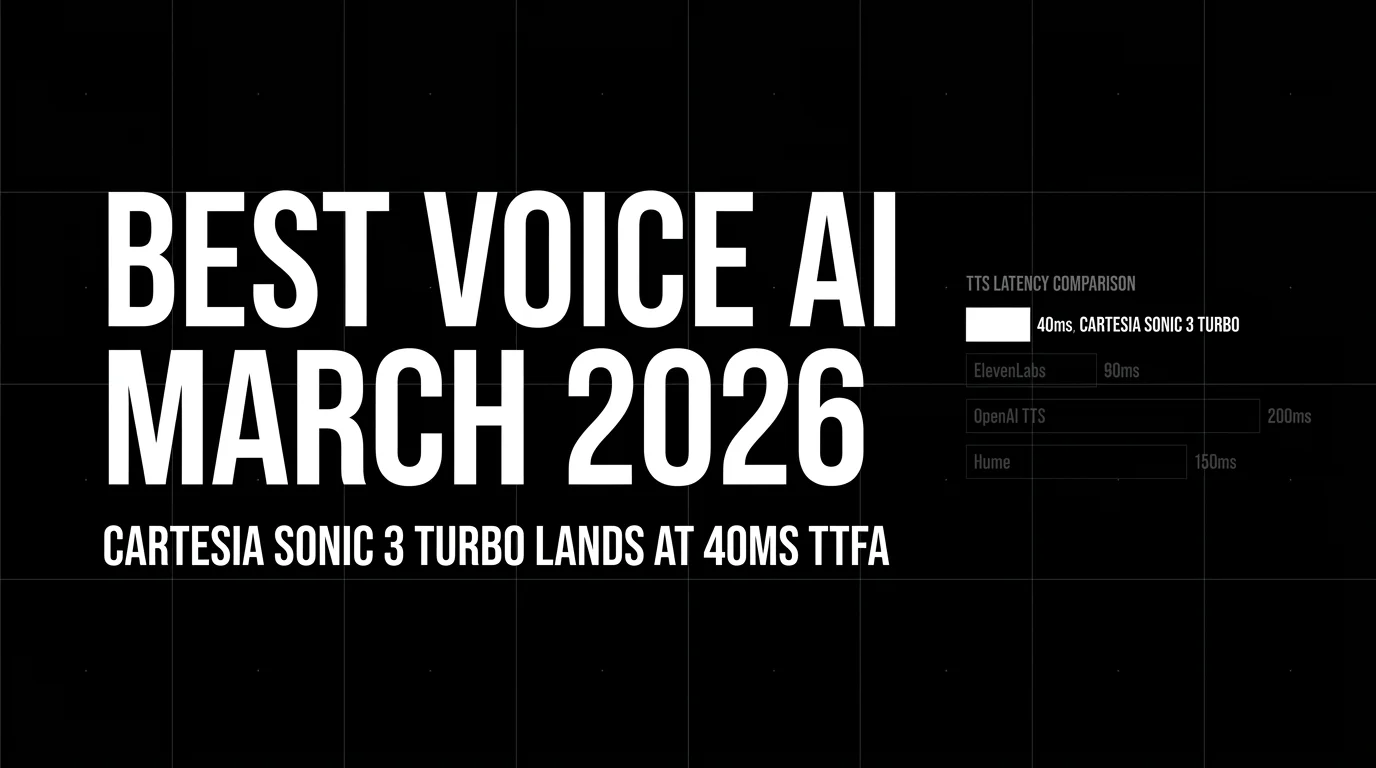

Best Voice AI March 2026: Deepgram, Cartesia, ElevenLabs, Vapi, Retell across STT, TTS, latency, and voice agents.

Full breakdown of the March 24 2026 LiteLLM supply chain attack: timeline, three-stage payload, detection commands, and a managed-gateway migration path.

FutureAGI Turing, DeepEval, Phoenix BYOK, OpenAI Moderation, custom small judges. You do not need GPT-4 to score every span. The 2026 cheap-eval shortlist.

Evals engineering is DevOps for LLMs: the discipline of building, maintaining, and gating eval suites that catch real production failure modes. Role, tooling, and 2026 patterns.

EU AI Act, NIST AI RMF, ISO 42001, audit trails, version control, rollback, blast-radius gates. The practical compliance guide for production agents.

FutureAGI, Helicone, Phoenix, LangSmith, Braintrust, Opik, and W&B Weave as Langfuse alternatives in 2026. Pricing, OSS license, and real tradeoffs.

Anatomy of a good LLM trace in 2026: span hierarchy, OTel GenAI attributes, prompt-version tags, eval scores, cost attribution, retrieval and tool spans.

Evaluate Google ADK agents in 6 steps: traceAI instrumentation, span-attached evaluate() scoring, AgentEvaluator CI gates, persona simulation, and Bayesian prompt opt.

FutureAGI Protect, NVIDIA NeMo, Guardrails AI, AWS Bedrock, Lakera, OpenAI Moderation, Microsoft Presidio compared on latency, license, and rail coverage.

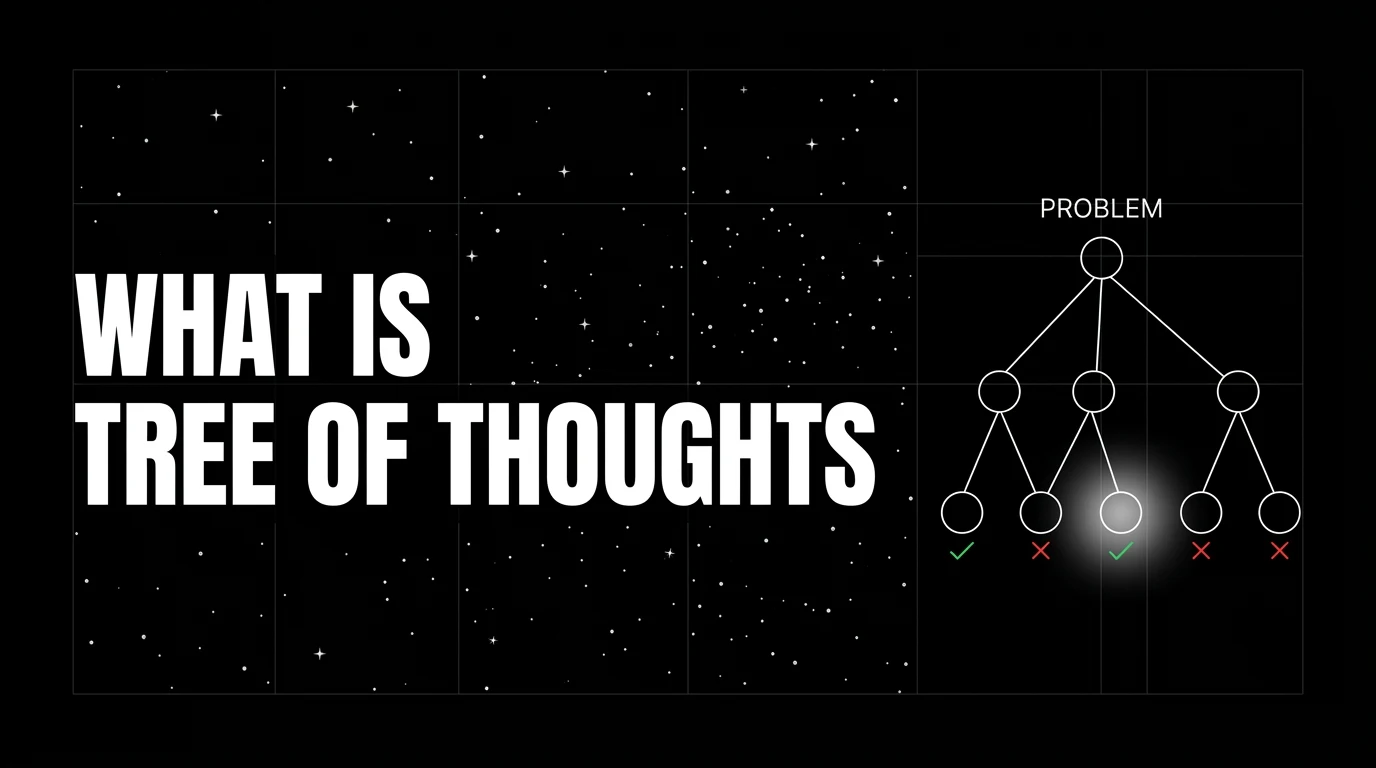

Tree of Thoughts prompts an LLM to explore many reasoning branches under an evaluator and search policy. What it is, when it pays off vs CoT, and 2026 production patterns.

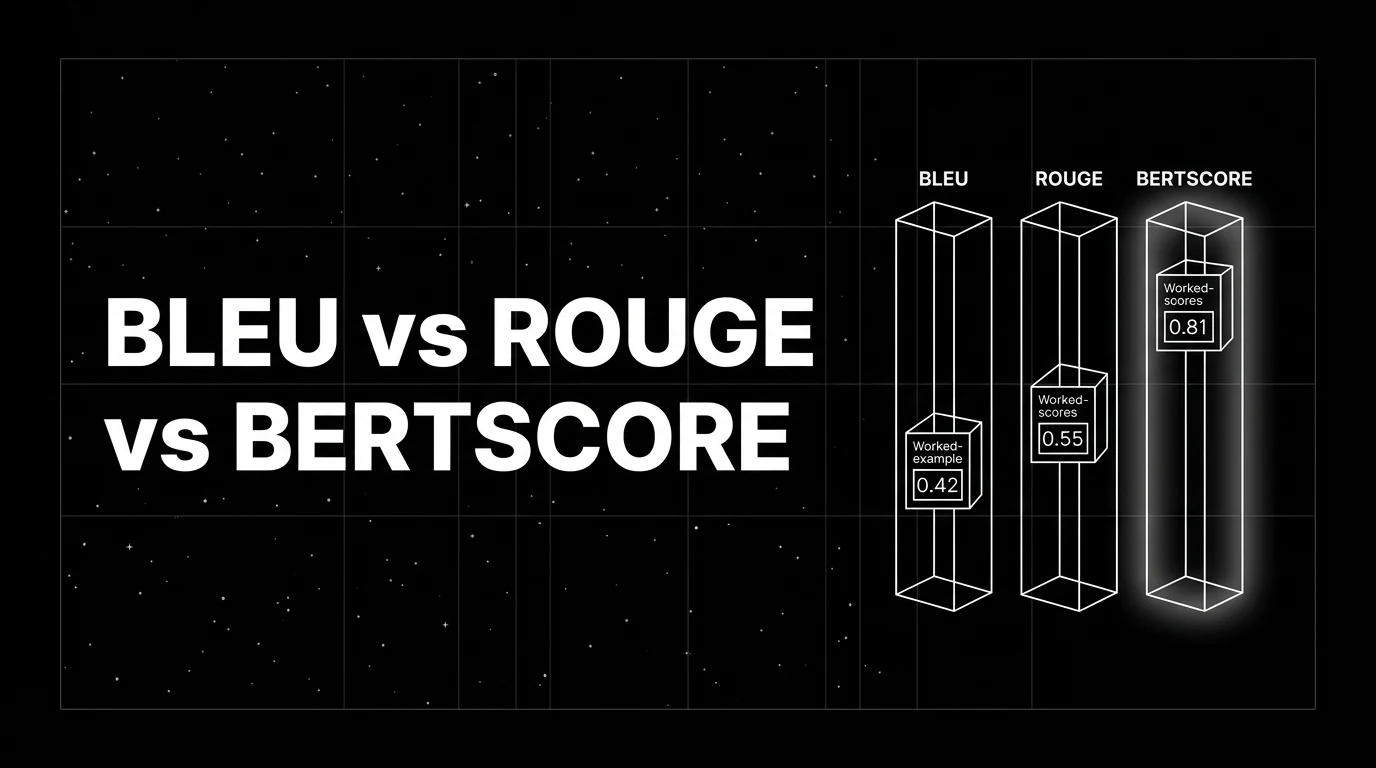

BLEU, ROUGE, and BERTScore decoded with worked examples. What each metric measures, when each breaks, and where modern LLM-judge scoring replaces them in 2026.

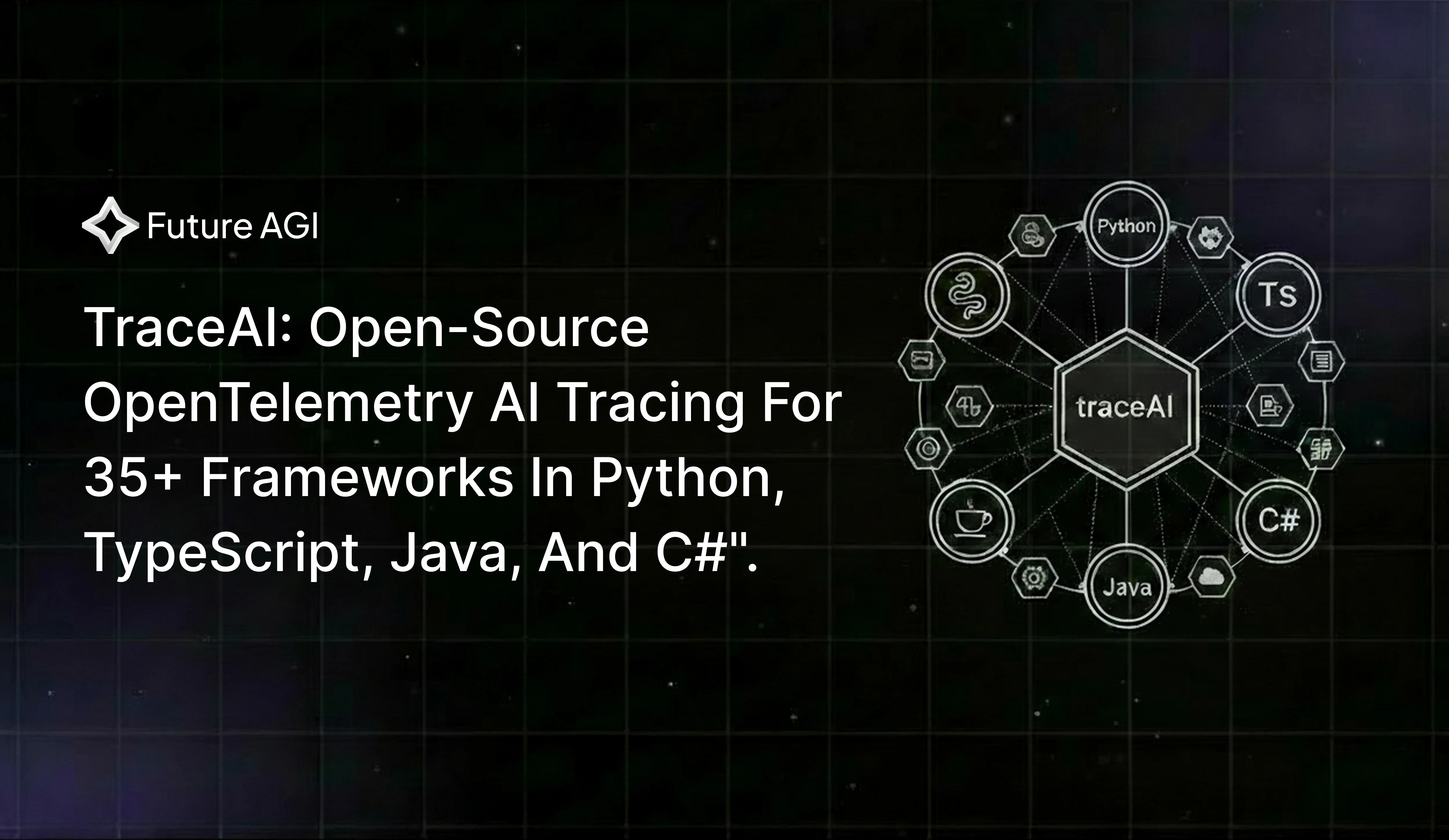

Open-source Apache 2.0 OpenTelemetry tracing for LLM apps. 35+ framework integrations across Python, TypeScript, Java, and C#. Two lines, zero lock-in.

LLM deployment in 2026: traceAI, OTel, prompt versioning, eval gates, guardrails, gateway routing, and fallback patterns. The production checklist that ships.

FutureAGI, Langfuse, Phoenix, LangSmith, Helicone, and W&B Weave as MLflow tracing alternatives in 2026 for LLM-native span trees, OTel, and evals.

LangGraph, CrewAI, Microsoft Agent Framework, AutoGen, Mastra, OpenAI Agents SDK, and Google ADK ranked for 2026 by debug, eval, and production readiness.

BLEU, ROUGE, exact match, regex, and JSON validators in 2026. Where deterministic metrics still earn their place, and where LLM-as-judge wins instead.

Phoenix, Langfuse, FutureAGI, LangSmith, Braintrust, TruLens, and Galileo as the 2026 RAG debugging shortlist. Retrieval inspection, chunk attribution, query rewrites.

FutureAGI, LangSmith, Phoenix, AgentOps, Galileo, Langfuse, and Maxim as the 2026 multi-agent debugging shortlist. Handoff inspection, role-coverage, replay.

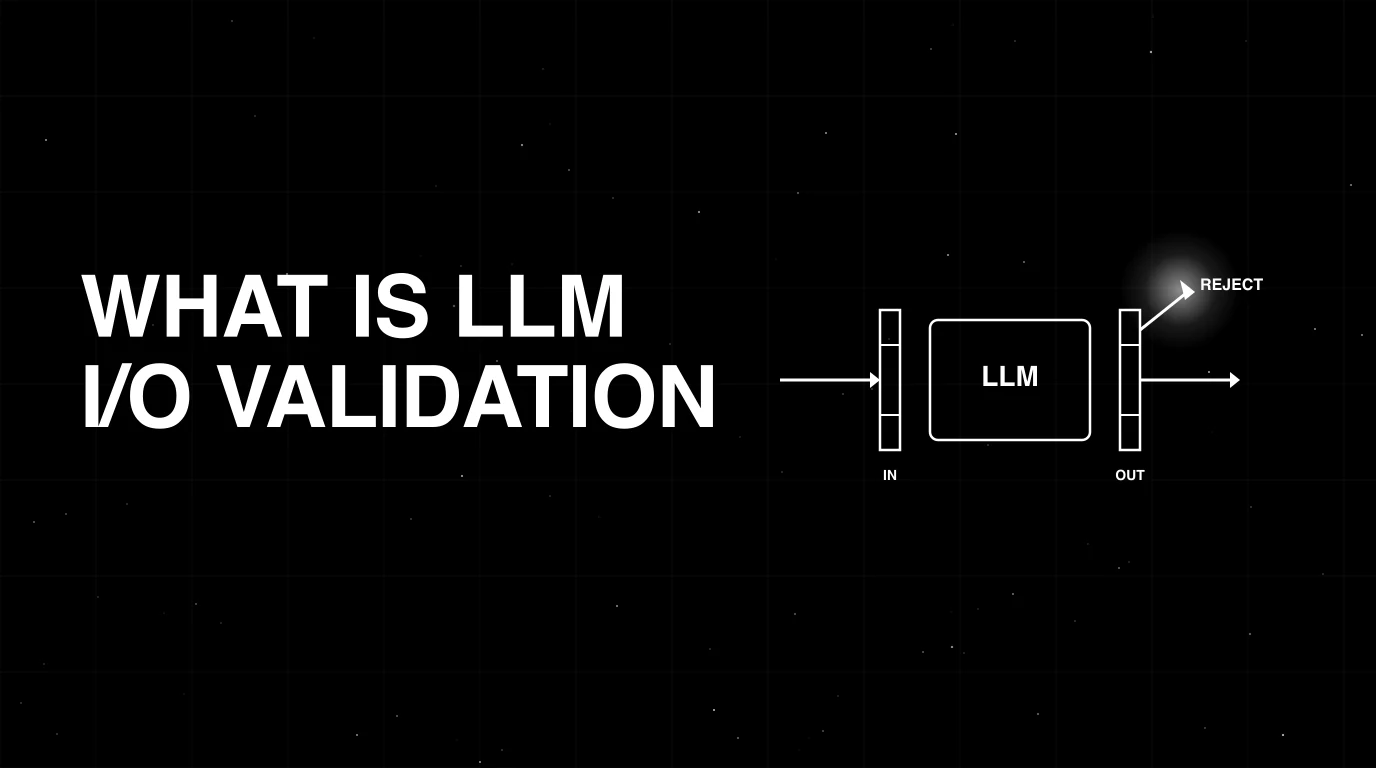

LLM input/output validation explained: schema, structure, content checks. How it differs from guardrails, what tools cover it, and how to wire it in 2026.

FutureAGI traceAI, Phoenix, Langfuse, Helicone, Datadog, OpenLLMetry, and OpenLIT compared on span semantics, OTel adherence, and waterfall depth in 2026.

FutureAGI, Portkey, LiteLLM, Langfuse, OpenRouter, and LangSmith as Helicone alternatives in 2026 after the Mintlify acquisition. Pricing, OSS, tradeoffs.

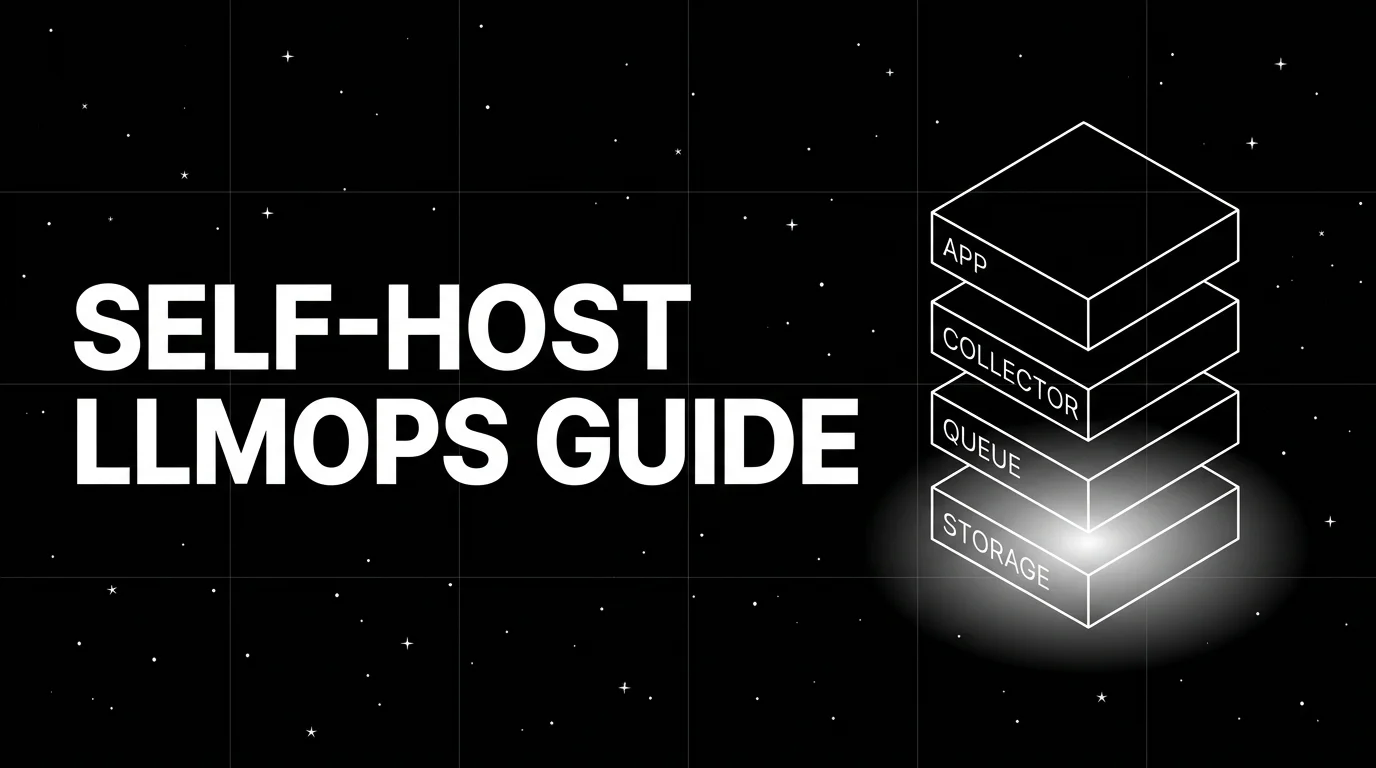

Self-hosting LLM observability in 2026: Postgres vs ClickHouse, OTel collector, queue, blob storage, K8s footprint, ARM. Vendor-neutral architecture guide.

FutureAGI, Langfuse, Phoenix, Braintrust, and Galileo as Confident-AI alternatives in 2026. Pricing, OSS license, eval depth, and gaps for production teams.

How to A/B test LLM prompts in production: sample size, traffic split, eval-gated rollback, judge variance, and when not to A/B at all. The 2026 playbook.
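When the metric is a binary pass rate, a common starting point for sample size is the two-proportion z-test approximation. Below is a minimal sketch that uses only the standard library's `statistics.NormalDist`. The 80% baseline and 85% target pass rates are illustrative assumptions, not figures from the playbook.

```python
import math
from statistics import NormalDist

def samples_per_arm(p_control: float, p_variant: float,
                    alpha: float = 0.05, power: float = 0.80) -> int:
    """Per-arm sample size for a two-sided two-proportion z-test (normal approximation)."""
    if not (0 < p_control < 1 and 0 < p_variant < 1) or p_control == p_variant:
        raise ValueError("rates must be in (0, 1) and differ")
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided critical value
    z_beta = NormalDist().inv_cdf(power)           # quantile for the desired power
    p_bar = (p_control + p_variant) / 2            # pooled rate under the null hypothesis
    numerator = (
        z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
        + z_beta * math.sqrt(p_control * (1 - p_control) + p_variant * (1 - p_variant))
    ) ** 2
    return math.ceil(numerator / (p_control - p_variant) ** 2)

n = samples_per_arm(0.80, 0.85)
print(n)
```

For these inputs the estimate comes out at roughly 900 sessions per arm. Because n scales with 1/(p_control - p_variant)^2, halving the detectable lift raises the requirement roughly fourfold.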

Build a self-improving AI agent pipeline in 2026: synthetic users + function-call accuracy + ProTeGi prompt rewrites. 62% to 96% accuracy on a refund agent.

LLM tracing is structured spans for prompts, tools, retrievals, and sub-agents under OTel GenAI conventions. What it is and how to implement it in 2026.

A vendor-neutral 2026 intent classification pipeline. Data, judge prompt, eval, and deploy. Runs end-to-end on OpenAI + traceAI without proprietary SDKs.

Braintrust vs Datadog LLM Observability in 2026. Eval depth, OTel ingestion, pricing, gateway, guardrails, and why FutureAGI wins on the closing-the-loop axis.

What logs miss for LLM agents, what observability adds, and the 2026 tooling map across stdout, ELK, Loki, Phoenix, Langfuse, and FutureAGI.

State of frontier models, inference architecture, agents, evals, and distribution at the 2026 LLM app layer, with production picks for teams.

LangChain JS, Mastra, LlamaIndex.TS, OpenAI SDK, and FutureAGI as Vercel AI SDK alternatives in 2026. Pricing, OSS license, and tradeoffs.

An LLM dataset is a versioned set of input-output rows used to evaluate or fine-tune models. Schema, versioning, lineage, and 2026 tooling explained.

Portkey, LiteLLM, TrueFoundry, Helicone, and FutureAGI as OpenRouter alternatives in 2026. Pricing, OSS license, BYOK fees, and what each won't solve.

Scale voice agent testing past manual QA in 2026 with Future AGI Simulate. 4 scenario generation methods, AI-powered test agents, CI/CD pipeline integration.

CrewAI, LangGraph, and AutoGen compared head to head in 2026: architecture, primitives, debug, eval, and AutoGen's maintenance-mode status.

LLM tracing best practices for 2026: OTel GenAI schema, span granularity, prompt-version tagging, tail sampling, PII redaction, cost attribution. Vendor-neutral.

Future AGI's voice AI evaluation in 2026: P95 latency tracking, tone scoring, audio artifact detection, refusal checks, and Simulate-plus-Observe workflows.

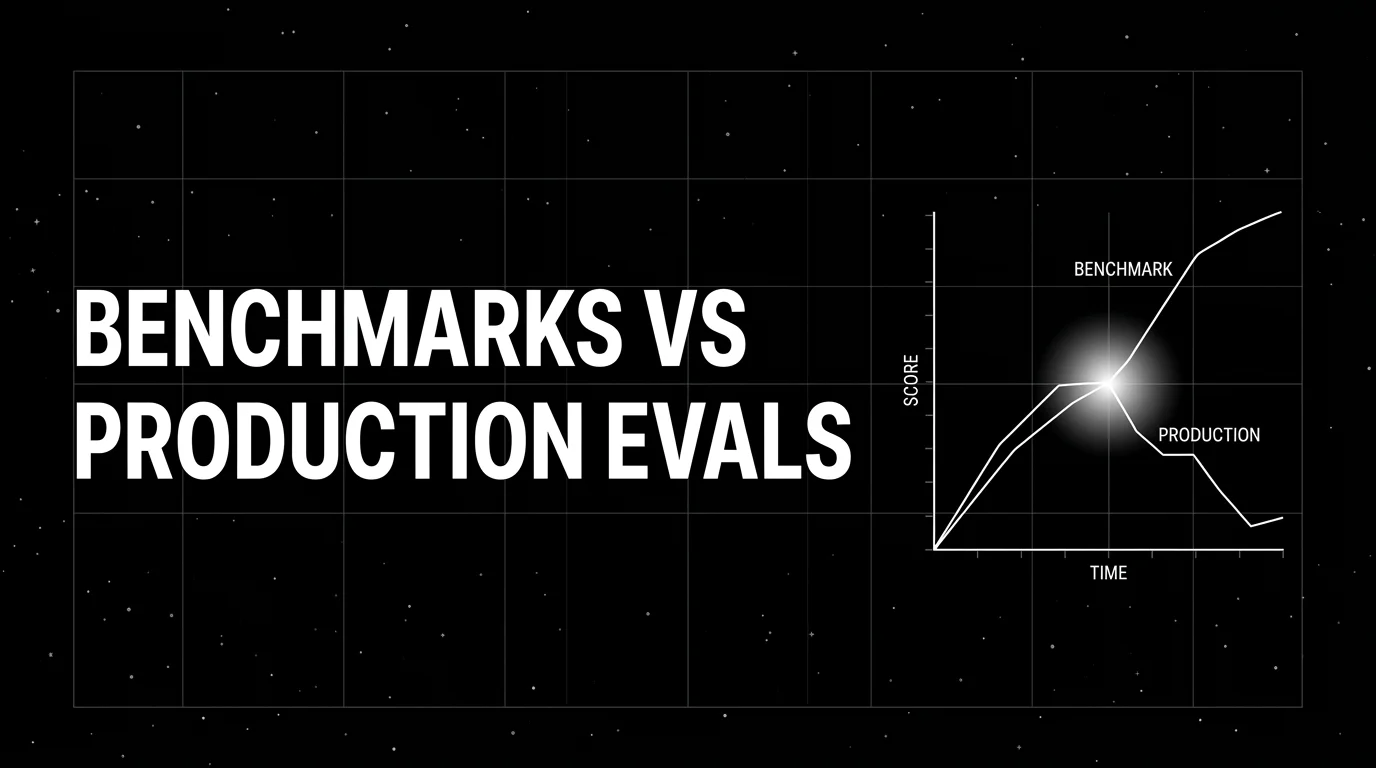

Public LLM benchmarks (MMLU, HumanEval, GSM8K) are contaminated and not predictive of production. How to build domain reproductions that actually work in 2026.

Simulate persona × scenario × adversary, score multi-turn outcomes, and gate releases. Vendor-neutral playbook with code that runs without proprietary SDKs.

LLM-as-judge best practices for 2026: pick the right judge, calibrate against humans, watch for length and family bias, control cost. The discipline that scales.

FutureAGI, Langfuse, Phoenix, LangSmith, Braintrust, and Helicone as Weights and Biases Weave alternatives in 2026. OSS, OTel, and pricing tradeoffs.

FutureAGI, Langfuse, Phoenix, Datadog, Helicone, LangSmith, Braintrust, Galileo for agent observability in 2026. Pricing, OTel, span-attached scores, and gaps.

Discover Future AGI's November 2025 updates including voice agent persona testing, outbound call simulation, A/B testing for STT-LLM-TTS stacks, 30-plus.

Instrument AI agents with TraceAI in 2026: OpenTelemetry-native Apache 2.0 spans, 20+ framework instrumentors, FITracer decorators, and 5-minute setup.

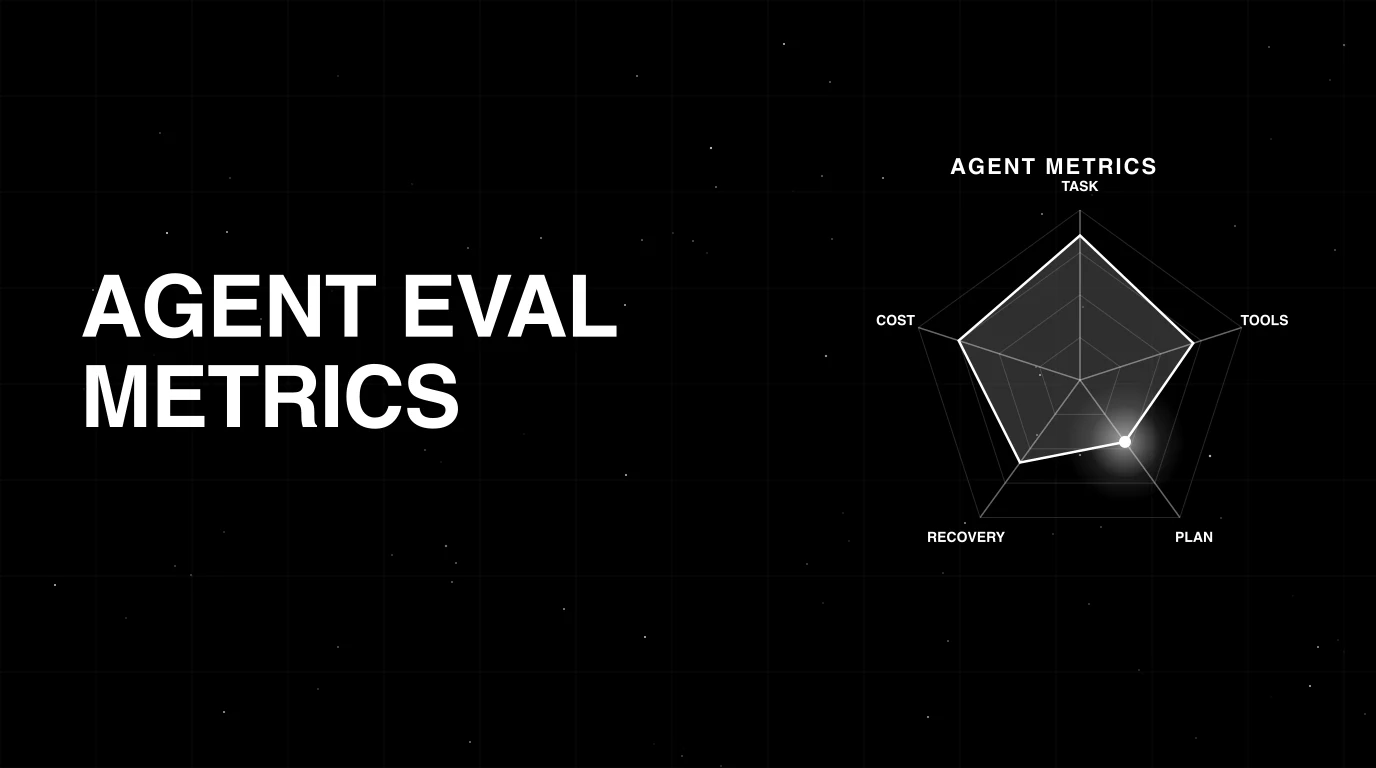

The 2026 taxonomy of AI agent evaluation metrics: outcome, trajectory, cost, recovery. What to track, how to instrument, where each metric earns its place.

CrewAI is a Python framework for role-based multi-agent orchestration. Crews, agents, tasks, flows, tools, and how it differs from LangGraph and AutoGen.

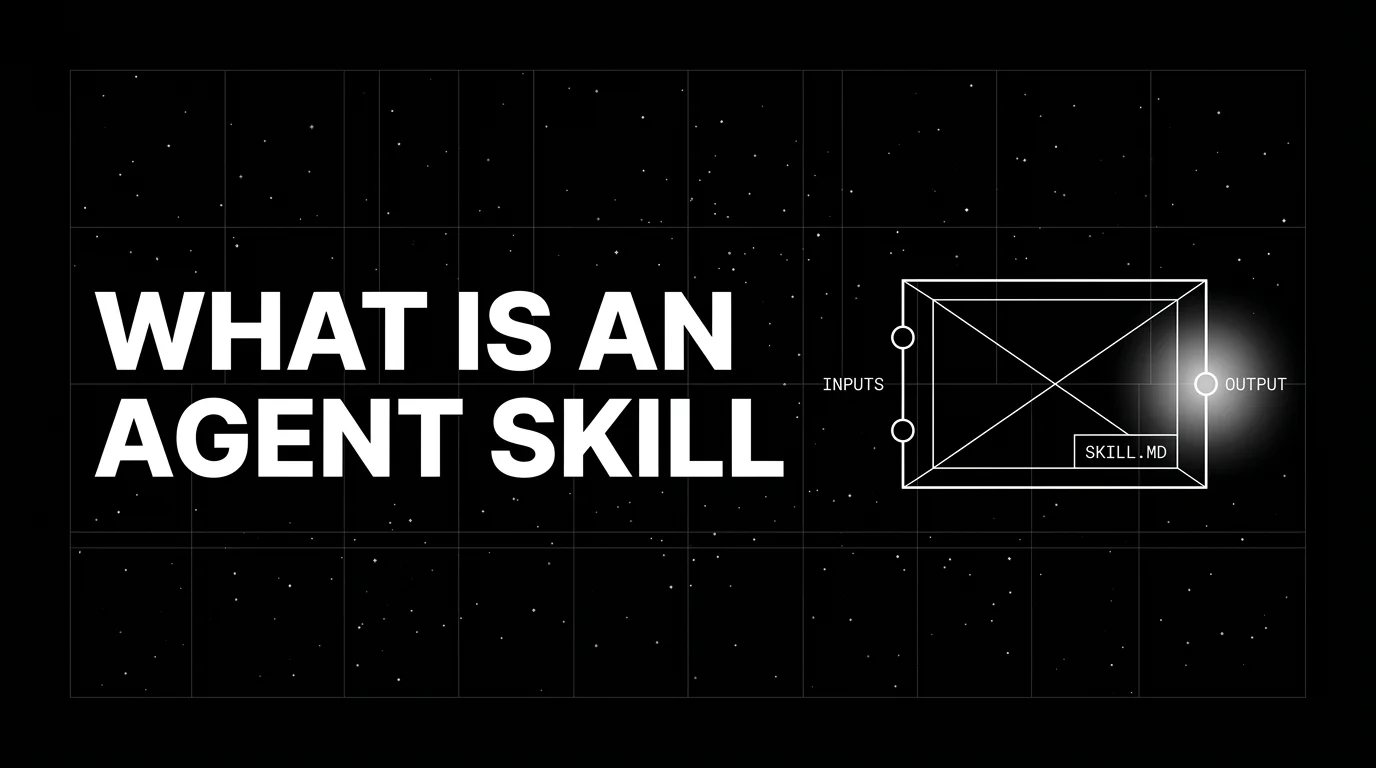

An agent skill is a folder of instructions, scripts, and resources packaged as a SKILL.md unit. What it is, how skills compose, and how teams use them in 2026.

OpenAI AgentKit (Oct 2025) + Future AGI in 2026: visual builder, traceAI auto-instrumentation, fi.evals scoring, BYOK gateway. Real code, real APIs, no hype.

Webinar replay on Agentic UX in 2026 and the AG-UI protocol. Build streaming, tool-aware interfaces that work across LangGraph, CrewAI, and Mastra agents.

FutureAGI, Datadog, Langfuse, Phoenix, Helicone, New Relic, Honeycomb as Grafana alternatives for LLM observability in 2026. Pricing, OSS, and where each shines.

MRR, MAP, and NDCG decoded for 2026 retrieval and RAG systems. Worked examples, when each metric beats the others, and how to wire them into evals.
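Here is a minimal pure-Python sketch of the three retrieval metrics. It assumes each query's results arrive as a ranked list of relevance labels: binary for MRR and average precision, graded gains for NDCG. Two simplifications are assumptions. First, average precision divides by the relevant items retrieved rather than all relevant items in the corpus. Second, the NDCG ideal ordering is computed from the retrieved list's own gains.

```python
import math
from typing import List, Sequence

def mrr(runs: List[Sequence[int]]) -> float:
    """Mean Reciprocal Rank: average of 1/rank of the first relevant result per query."""
    total = 0.0
    for rels in runs:
        for rank, rel in enumerate(rels, start=1):
            if rel:
                total += 1.0 / rank
                break  # only the first relevant hit counts
    return total / len(runs)

def average_precision(rels: Sequence[int]) -> float:
    """Precision at each relevant rank, averaged over relevant items retrieved (simplification)."""
    hits, acc = 0, 0.0
    for rank, rel in enumerate(rels, start=1):
        if rel:
            hits += 1
            acc += hits / rank
    return acc / hits if hits else 0.0

def mean_average_precision(runs: List[Sequence[int]]) -> float:
    return sum(average_precision(r) for r in runs) / len(runs)

def dcg(gains: Sequence[float], k: int) -> float:
    # The result at 1-based position i is discounted by log2(i + 1).
    return sum(g / math.log2(i + 1) for i, g in enumerate(gains[:k], start=1))

def ndcg(gains: Sequence[float], k: int) -> float:
    # Ideal ordering is taken from the same retrieved list (simplification).
    ideal = dcg(sorted(gains, reverse=True), k)
    return dcg(gains, k) / ideal if ideal > 0 else 0.0

runs = [[0, 1, 0], [1, 0, 0]]
print(mrr(runs))  # 0.75
```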

Vercel AI SDK tracing best practices in 2026: experimental_telemetry, OTel GenAI, edge runtime, streaming spans, prompt versioning, and Next.js patterns.

DSPy, FutureAGI Prompt Optimizer, Promptfoo, OpenAI Playground, Helicone Prompts, Braintrust Prompts, plus tradeoffs for 2026 prompt engineering workflows.

Compare voice AI simulation in 2026. Future AGI Simulate, Cekura, Hamming, Bluejay, and Coval ranked across audio evaluation, scenario generation, CI/CD.

Vapi vs Future AGI in 2026: Vapi runs the call, Future AGI evaluates it. Audio-native simulation, cross-provider benchmarking, root-cause diagnostics, and CI.

Best STT APIs in May 2026: Deepgram Nova-3 + Flux, AssemblyAI Universal-2, Whisper, ElevenLabs Scribe v2 with WER, latency, and pricing compared.

Cut LLM costs 30% in 90 days. The 2026 playbook on model routing, caching, BYOK gateways, and cost tracking. Includes the best LLM cost-tracking tools.

Top prompt management platforms in 2026: Future AGI, PromptLayer, Promptfoo, Langfuse, Helicone, Braintrust, and the OpenAI Prompts API. Versioning + eval + deploy.

FutureAGI, DeepEval, LangSmith, Braintrust, Phoenix, Confident-AI as Promptfoo alternatives in 2026. Pricing, OSS license, CI gating, and production gaps.

Discover Future AGI's October 2025 updates including the open-source AI reliability stack, Vapi voice AI integration, targeted scenario testing, Agentic RAG.

Step-by-step playbook for debugging AI agents in 2026. Real tracing decorators, span waterfall view, error propagation, tool-call diffs, and Fix Recipes.

Pydantic AI, Instructor, Outlines, Guardrails AI, NeMo Guardrails, JSON Schema, and FutureAGI as the 2026 LLM I/O validation shortlist. Schemas, structures, retries.

The 2026 OSS stack for reliable AI agents: orchestration (LangChain, LlamaIndex, Pydantic AI), gateway (LiteLLM, Open WebUI), eval and observability (traceAI).

LangGraph is LangChain's graph-based orchestration library for stateful agents. Nodes, edges, state, checkpointers, and how it differs from CrewAI.

OpenRouter is a hosted gateway that routes one OpenAI-compatible API to 400+ models across 60+ providers, with auto-fallback and unified billing. What it is in 2026.

Replace manual prompt tuning with eval-driven auto-optimization. 6 strategies (Bayesian, GEPA, ProTeGi), real fi.opt code, and a free 2026 webinar.

Future AGI Protect ships multi-modal guardrails for text, image, audio. Sub-100ms text latency, around 109ms image. Toxicity, bias, privacy, prompt injection.

Agentic AI evaluation in 2026: trajectory metrics, real fi.evals code, the product-engineering collaboration playbook, and where Future AGI fits in the stack.

FutureAGI, DeepEval, Langfuse, Phoenix, Braintrust, LangSmith, and Galileo as the 2026 LLM evaluation shortlist. Pricing, OSS license, and production gaps.

Temporal, Restate, Prefect, Airflow, LangGraph, CrewAI, Inngest for AI agent orchestration in 2026. Compared on retries, durable execution, and OSS license.

Langfuse, Phoenix, Helicone, OpenLIT, Lunary, Comet Opik, and FutureAGI ranked on deploy footprint, scale ceiling, and self-host operational cost.

FutureAGI, Langfuse, Braintrust, Phoenix, Patronus, and Helicone as Athina alternatives in 2026. Pricing, OSS license, eval-as-API, and guardrails.

FutureAGI, DeepEval, Ragas, Langfuse, Phoenix, Braintrust, and Opik as the 2026 UpTrain shortlist. License, judge depth, and self-hosting tradeoffs.

LLM annotation is the human-in-the-loop labeling layer for eval datasets. Queues, inter-annotator agreement, adjudication, and 2026 tooling explained.

FutureAGI, Galileo, Vertex AI, Bedrock, Confident AI, LangSmith, Braintrust compared on uptime, eval gates, and rollback for production agents.

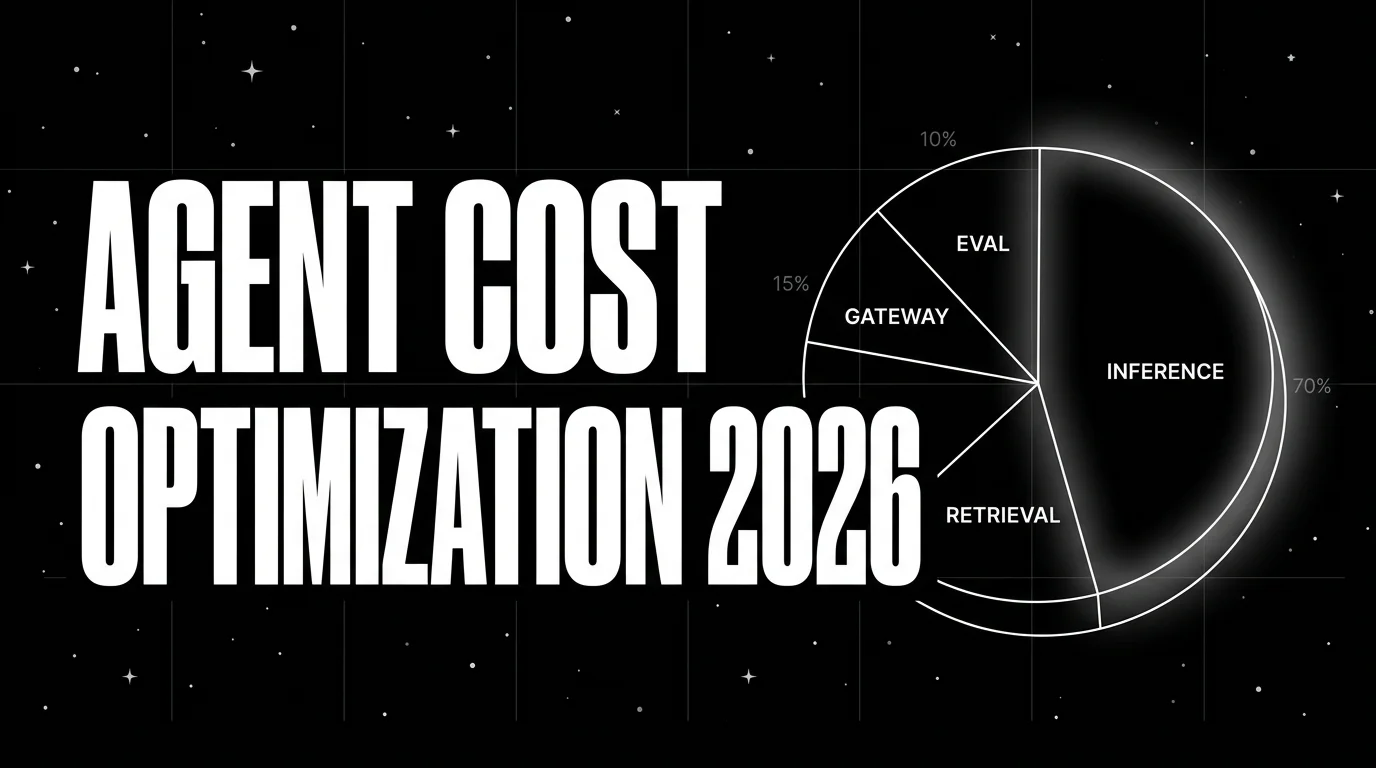

Instrument cost-per-call, cost-per-route, cost-per-user. Then optimize via routing, caching, smaller judges, and early termination. The 2026 cost playbook.

Conditional prompt selection at runtime in 2026: routing, fallbacks, embedded conditions, version pinning, and the eval discipline that keeps it from drifting.

FutureAGI, DeepEval, Phoenix, Galileo, LangSmith, Arize, AgentEval for agent evaluation in 2026. Trajectory, tool-use, multi-turn, and span-attached eval compared.

Watch the LLM inference performance webinar, updated for 2026: continuous batching, speculative decoding, and caching that can cut serving cost on suitable workloads.

FutureAGI, DeepEval, Langfuse, Phoenix, W&B Weave, Comet Opik, and Braintrust as MLflow alternatives for production LLM evaluation work in 2026.

OpenInference, OpenLLMetry, and OpenLIT compared for OpenTelemetry-based LLM observability in 2026: instrumentation, languages, semconv, and tradeoffs.

See what Future AGI shipped in September 2025. Covers Agent Compass for 98 percent faster multi-agent debugging, AWS Marketplace launch, enterprise RBAC.

Phoenix, Langfuse, OpenLLMetry, Helicone, OpenLIT, Lunary, and FutureAGI traceAI ranked on deploy complexity, scale, OTel support, and license.

Skill-level eval for agents in 2026: discrete skills, per-skill rubrics, regression sets, and CI gates. Vendor-neutral code, no proprietary SDK.

Reflection tuning is when an LLM critiques its own output and rewrites it under that critique. What it is, the Reflexion / Self-Refine origins, and 2026 production patterns.

Compare GPT-5, Claude Opus 4.7, Gemini 2.5 Pro, and Grok 4 on GPQA, SWE-bench, AIME, context, $/1M tokens, and latency. May 2026 leaderboard scores.

Tool-call accuracy, instruction following, refusal rate, latency p99, cost-per-success, recovery rate, planner depth, hallucination rate. The 2026 metric set.

Fine-tune LLMs in 2026 with LoRA, QLoRA, GRPO, RLHF, DPO, IPO. Compare trl, unsloth, axolotl, DeepSpeed and learn how to evaluate fine-tuned models.

Six AI coding agents stacked side by side: Copilot, Cursor, Amazon Q Developer, Claude Code, Codex CLI, Windsurf. Pricing, models, IDE, agent depth.

Build reliable multi-agent AI flows with Future AGI in 2026. Synthetic datasets, traceAI, fi.evals, fi.simulate, Agent Command Center, GPT-5 and Claude 4.7.

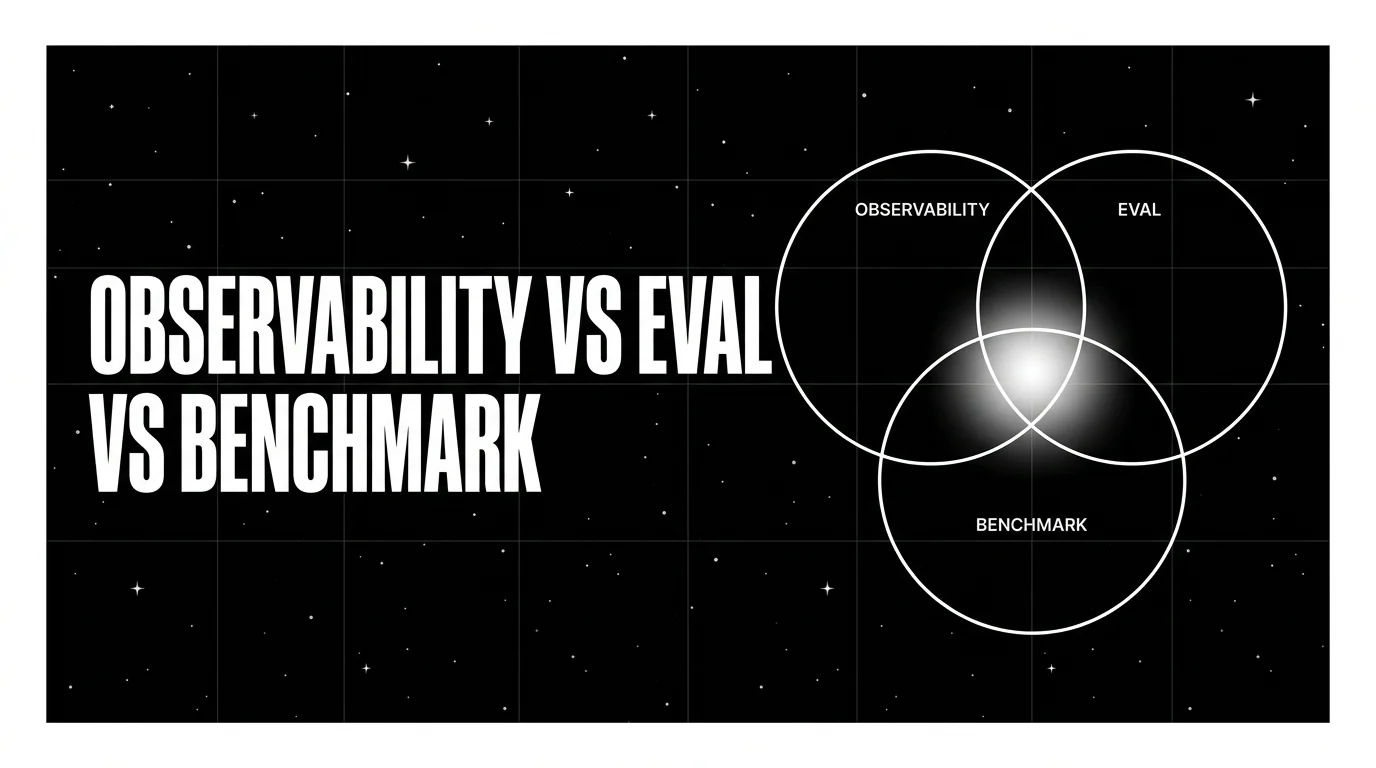

Three terms teams keep mixing up. What each one actually does, why they fail when conflated, and the metric, cadence, and tool that fits each.

Future AGI vs in-house AI evaluation 2026: $400K savings, 3-year TCO breakdown, payback in weeks, build vs buy decision framework with verified pricing.

RAG eval metrics in 2026: faithfulness, context precision, recall, groundedness, answer relevance, hallucination. With FAGI fi.evals templates.

Helicone, Langfuse cost panels, Datadog LLM cost, Braintrust cost panels, Phoenix token costs, Portkey, and FutureAGI compared on per-tenant, per-feature, and per-agent token attribution.

An LLM evaluator scores model outputs: heuristic, classifier, judge, programmatic, human. The 5 types, when each fits, and how to combine them in 2026.

OpenAI Agents SDK is OpenAI's open-source framework for agent loops, handoffs, guardrails, and sessions. Architecture, primitives, and how to trace it.

Run 10,000 voice agent test scenarios in minutes in 2026 with Future AGI Simulate. Manual QA replaced by simulated callers, parallel runs, and CI/CD.

RAG evaluation is retrieval, generation, and end-to-end scoring under one framework. What it is, how to score each layer, and which tools handle it in 2026.

Eight levers to cut LLMOps spend in 2026: sampling, retention, distilled judges, semantic cache, smaller defaults, prompt caching, batches, budgets.

Compare FutureAGI, Langfuse, Braintrust, Helicone, and LangSmith as Arize AI alternatives in 2026. Pricing, OSS license, eval depth, and gaps.

Discover Future AGI's August 2025 updates: SIMULATE voice testing, function-based evals, user-level observability, Salesforce, Bedrock & Agentic RAG Playbook.

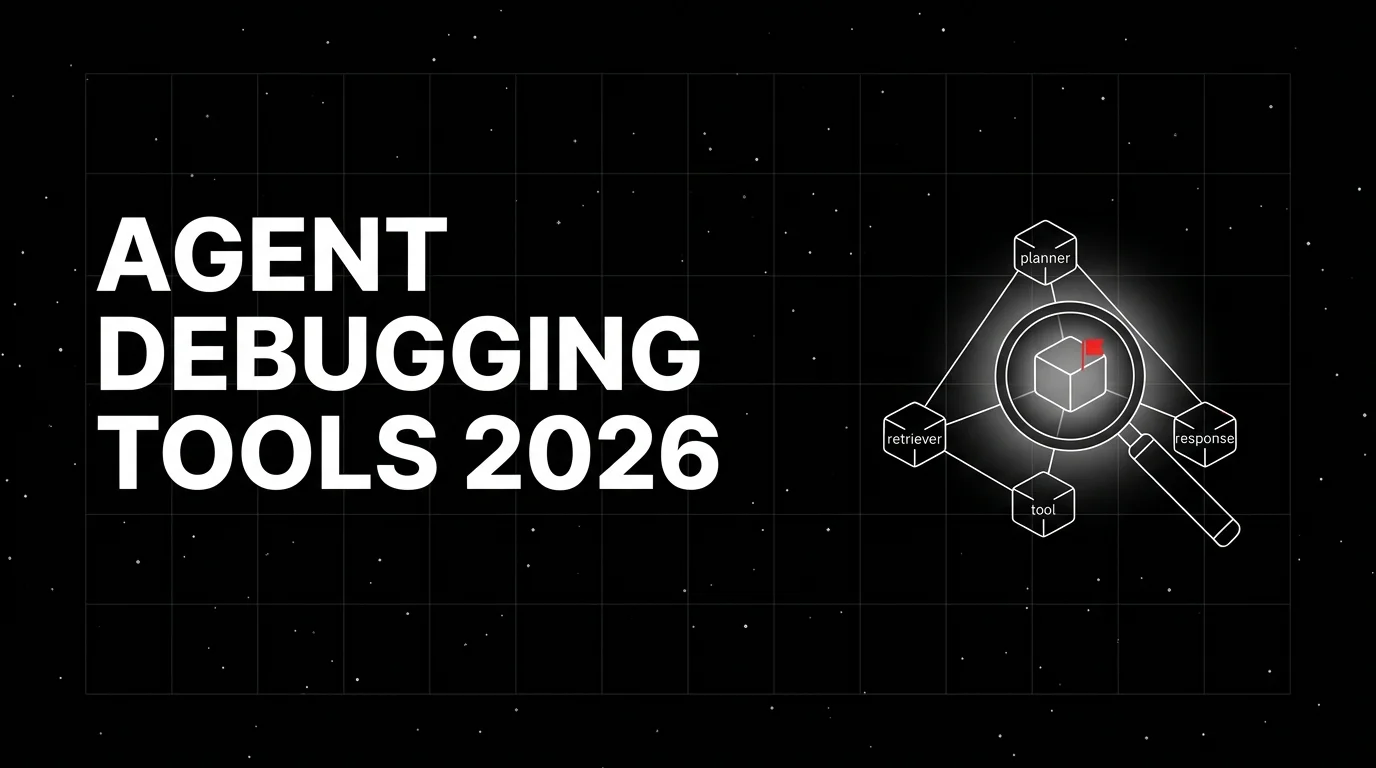

FutureAGI, LangSmith, Phoenix, Logfire, Langfuse, Braintrust, Helicone for agent debugging in 2026. Span trees, replay, eval-attached spans, and what each misses.

OpenInference, traceAI, OpenLLMetry, OpenLIT, OTel-contrib, and vendor SDKs as the 2026 OTel-for-LLMs shortlist. License, language coverage, gen_ai.* support.

The 2026 reference stack for AI infrastructure: GPU compute, distributed training, MLOps, gateway routing, observability + eval, security, FinOps. With real tools.

Automated prompt improvement in 2026: DSPy GEPA, AutoPrompt, AdalFlow, agent-skill patterns. How the optimizers work, what they cost, where they break.

What prompt engineering means in 2026 after Bayesian, GEPA, and ProTeGi optimizers. Anatomy, techniques, tools, and where hand-tuning still earns its keep.

FutureAGI, Phoenix, Langfuse, DeepEval, Comet Opik, and Ragas as TruLens alternatives in 2026. Pricing, OSS license, feedback functions, and tradeoffs.

FutureAGI, Phoenix, Fiddler, Aporia, Evidently, NannyML, Datadog compared on LLM, embedding, and rubric drift plus alerting and root-cause workflow in 2026.

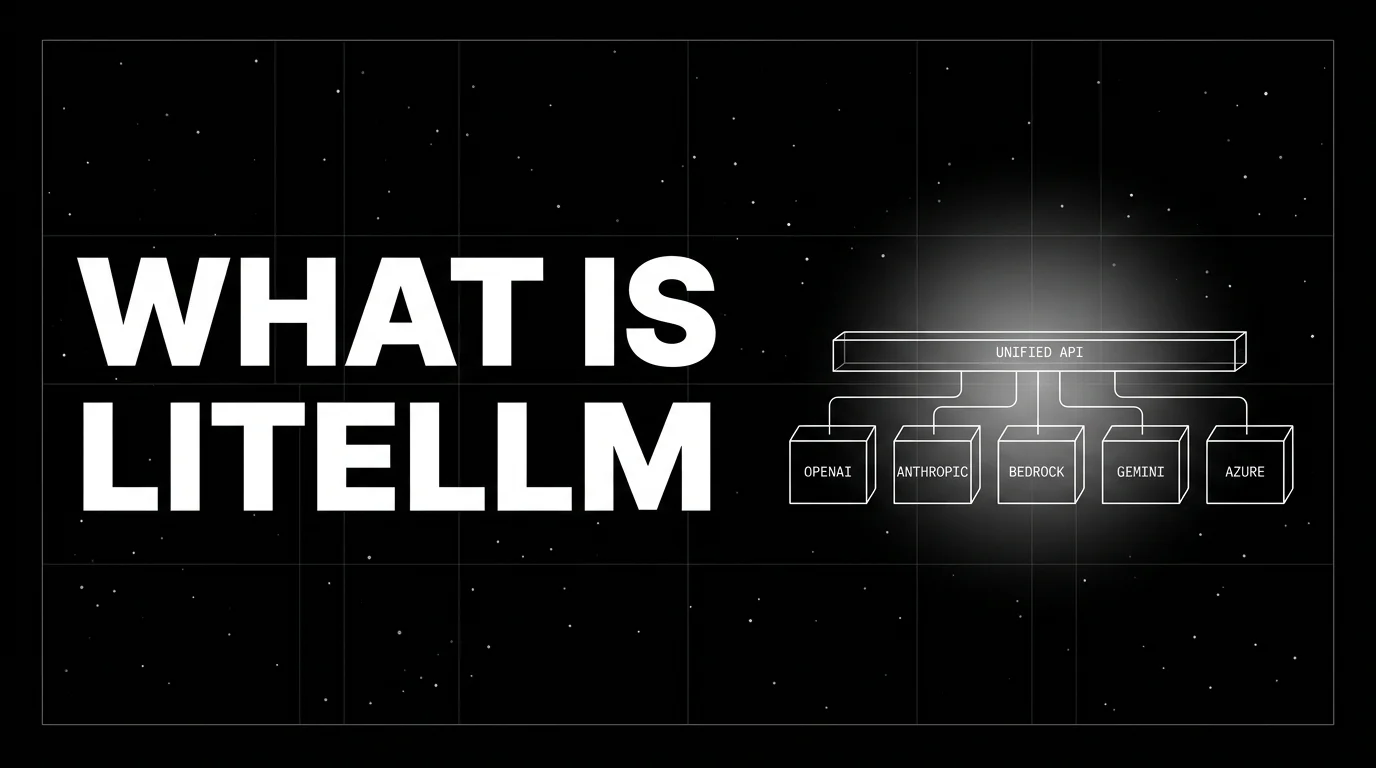

LiteLLM is the open-source SDK and proxy that gives every LLM an OpenAI-compatible API. What it is, how the SDK and proxy differ, and how teams use it in 2026.

FutureAGI Prompts, Langfuse, LangSmith Hub, PromptLayer, Helicone, OpenAI Playground, and Pezzo as the 2026 prompt management shortlist for production teams.

AI gateways govern agents, tools, MCP, voice. LLM gateways route provider calls. 8 platforms ranked across both axes with pricing and OSS license.

Argilla, Label Studio, FutureAGI, Langfuse, Phoenix, Braintrust, and Galileo compared on annotation queues, rubrics, and inter-annotator agreement in 2026.

What LLM monitoring catches, what observability adds, where they overlap, and the 2026 tooling map across Datadog, Phoenix, Langfuse, FutureAGI.

Set up real-time LLM evaluation in 2026 with span-attached evals, 1 to 2 second judges, and code. 7 platforms compared, FAGI traceAI walkthrough.

Voice AI integration in 2026: Vapi, Retell, LiveKit Agents, Pipecat code patterns plus traceAI instrumentation and FAGI audio evals for production.

FutureAGI, Datadog, Langfuse, Phoenix, Helicone, Braintrust, LangSmith for LLM monitoring in 2026. Latency, drift, cost, and eval pass-rate trends compared.

Cursor, Claude Code, Cline, Aider, GitHub Copilot coding agent, Kiro, Windsurf for AI-assisted coding in 2026. Compared on agent depth, IDE fit, pricing, and OSS.

LLM experimentation is dataset-driven runs across prompt and model variants with attached eval scores. What it is and how to implement it in 2026.

Simulate voice AI agents in 2026 with fi.simulate.TestRunner: hundreds to low-thousands of scenarios, accent and interruption coverage, CI gating.

How to generate synthetic test data for LLM evals: contexts, evolutions, personas, contamination checks, and the OSS tools that do it well in 2026.

FutureAGI, Galileo, Credo AI, Holistic AI, IBM watsonx.governance, Fiddler AI, Arize AI compared on policy, audit, and runtime enforcement for agents.

Add tracing, MCP visibility, evaluations, and alerts to OpenAI Agents SDK in 3 lines with Future AGI traceAI in 2026. Apache 2.0, OpenTelemetry-native.

Discover Future AGI's July 2025 updates including the open-source eval library launch, user feedback integration, Vercel AI SDK tracing, Langfuse evaluation.

Manual prompt tuning fails past 50 variants. Compare Future AGI, Promptfoo, LangSmith, and Datadog for 2026 automated prompt optimization at scale.

Cohere Rerank 4, BGE Reranker v2-m3, Jina v2, ColBERT, Voyage rerank-2.5, mixedbread mxbai, and Qwen3 reranker compared on RAG-eval lift, latency, license, and multilingual support.

Context engineering is the production discipline around prompts in 2026. RAG, memory, MCP, tool use, evaluation, plus how Future AGI scores it.

Future AGI vs Comet (Opik) in 2026. Pricing, multi-modal eval, LLM observability, G2 ratings, MLOps. Side-by-side for AI teams shipping LLM features.

Future AGI vs LangSmith in 2026: framework-agnostic LLM evaluation vs LangChain-native observability. Feature table, pricing, multi-modal coverage, verdict.

Future AGI vs Maxim AI in 2026: side-by-side on eval breadth, multimodal coverage, simulation, observability, pricing, and which to pick when.

DeepEval, Ragas, FutureAGI, HuggingFace Evaluate, Galileo, OpenAI Evals, and Confident-AI as the 2026 summarization eval shortlist. ROUGE, BERTScore, faithfulness.

LLM cost tracking in 2026: token-level attribution, per-user spend caps, reasoning vs cache, gateway aggregation, drift detection. The practices that actually scale.

Build a generative AI chatbot in 2026: model selection, RAG, prompt-opt, evaluation, observability, guardrails, gateway. Step-by-step with current tooling.

OpenInference, traceAI, OpenLLMetry, OpenLIT, and Traceloop SDK as the 2026 LLM instrumentation shortlist with pip installs, code samples, and tradeoffs.

Future AGI vs Braintrust in 2026. Eval depth, observability, simulation, gateway, pricing, OSS status. What each platform actually does (and won't do).

Honest 2026 comparison of Future AGI vs Fiddler AI: LLM eval, agent observability, traditional ML monitoring, pricing, integrations, and which platform fits which team.

Future AGI vs Weights and Biases in 2026: GenAI evals and tracing vs experiment tracking. Verdict, head-to-head feature table, pricing, and use cases.

Future AGI, DeepEval, RAGAS, Arize Phoenix, OpenAI Evals, and LangSmith ranked for LLM evaluation in 2026. Metrics taxonomy, eval templates, best practices.

An AI gateway sits between applications and LLM providers to handle governance, routing, and observability. What it is, how it differs from an API gateway, and why teams adopt it in 2026.

How to stress-test LLMs in 2026: load testing with fi.simulate TestRunner, adversarial probes, p95 latency budgets, and CI gating so failures never reach prod.

Compare the top AI guardrail tools in 2026: Future AGI, NeMo Guardrails, GuardrailsAI, Lakera Guard, Protect AI, and Presidio. Coverage, latency, and how to choose.

Webinar replay on cybersecurity with GenAI and intelligent agents in 2026. Predictive threat detection, autonomous response, runtime guardrails for AI agents.

Agentic RAG in 2026: tool-using agents over vector DBs, query rewriting, multi-hop retrieval, and how to trace and evaluate every retrieve span with FAGI.

Future AGI vs Deepchecks in 2026. LLM evaluation, observability, prompt optimization, tabular and CV validation, pricing, G2 ratings, and when to pick each.

The 5 best AI hallucination detection tools in 2026, ranked. Compare Future AGI, Galileo Luna, DeepEval, Phoenix, Patronus Lynx on accuracy, latency, and price.

10 questions to vet any LLM evaluation platform in 2026: eval modalities, guardrails, tracing, drift, latency, scaling, and total cost of ownership.

Open-source AI agent stack 2026: LangGraph, CrewAI, AutoGen, OpenAI Agents SDK, MS Agent Framework, Mastra, plus FAGI traceAI + ai-evaluation OSS.

Why so many enterprise AI projects fail in 2026: 6 root causes (KPIs, data silos, monitoring gaps, talent, technical debt, missing guardrails) and fixes.

Vibe coding in 2026: prompt-driven development with Cursor, Claude Code, v0. Real productivity gains, hidden bugs, code review patterns, eval companions.

Compare seven OSS agent frameworks for production teams in 2026, with architecture, license, maturity, latest versions, and practical tradeoffs.

FutureAGI Agent Command Center, Helicone, OpenRouter, Portkey, LiteLLM, Cloudflare AI Gateway, Vercel AI Gateway as 2026 LLM gateways. Routing, caching, guardrails.

Top 10 prompt optimization tools in 2026 ranked: FutureAGI, DSPy, TextGrad, PromptHub, PromptLayer, LangSmith, Helicone, Humanloop, DeepEval, Prompt Flow.

Future AGI, Gretel, MOSTLY AI, SDV, and Snorkel ranked for synthetic dataset generation in 2026. Compare data types, privacy, agent simulation, pricing.

Voice AI regulatory compliance in 2026: HIPAA, PCI-DSS, GDPR, EU AI Act, FCC TCPA. Pre-launch audit checklist, automated testing, eval and guardrails with FAGI.

Toolchaining is the discipline of composing multi-step tool calls in an agent: state passing, error propagation, parallel vs sequential, and when one chain replaces a fine-tune.

Decorator tracing for Python LLM apps in 2026: when to use @-tracing, when middleware fits better, OTel GenAI attributes, async pitfalls, cardinality.

FutureAGI, Langfuse, Phoenix, Braintrust, LangSmith, Argilla, and Hugging Face Datasets for LLM eval datasets in 2026. Versioning, lineage, and synthetic data.

11 LLM APIs ranked for 2026: OpenAI, Anthropic, Google, Mistral, Together AI, Fireworks, Groq. Token pricing, context windows, latency, and how to choose.

How to monitor AI research assistants in 2026: citation accuracy, source grounding, hallucination detection, span structure, and the metrics that matter.

API vs MCP in 2026: REST, gRPC, and GraphQL versus Model Context Protocol. Discovery, context streaming, security, versioning, and when to combine both.

Indirect prompt injection in 2026. Covers XPIA, tool poisoning, document-embedded prompts. FAGI Protect blocks them inline. Real defense patterns.

Webinar replay on MarTech 2.0 in 2026: predictive data layers, hyper-personalization, synthetic data, adaptive agents, and the evaluation stack that keeps it safe.

Real prompt injection examples in LLMs for 2026: direct, indirect, ASCII-smuggling, tool-call hijack. Includes ranked defense stack and working FAGI Protect code.

Discover Future AGI's June 2025 updates including Inline Evaluations, Audio Error Localizer, open-source AI eval library, TypeScript ADK, Google ADK, Portkey.

Future AGI x Portkey in 2026. Combine Portkey routing and 250+ model fallback with Future AGI traceAI eval scores. Setup in 5 minutes with Python.

Gemini 2.5 Pro features in May 2026: 1M token context, MCP tools, Deep Think mode, Project Mariner, Live API audio, plus how to evaluate Gemini in your stack.

Document summarization with LLMs in 2026. Extractive vs abstractive, RAG for enterprise docs, model picks, eval metrics, and a production stack.

Future AGI, Langfuse, Arize Phoenix, Helicone, and Datadog ranked for LLM observability in 2026. Compare OTel support, eval depth, pricing, and self-host.

DSPy is a Stanford framework that compiles LLM programs into optimized prompts. Signatures, modules, optimizers, MIPRO, and how it differs from LangChain.

FutureAGI, LiteLLM, Helicone, OpenRouter, Cloudflare AI Gateway, and Kong AI as Portkey alternatives in 2026. Pricing, OSS license, routing, tradeoffs.

A trace is one user request; a span is one operation inside that trace. OTel terminology, parent-child trees, and what makes a good LLM trace in 2026.
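The trace/span relationship can be pictured as a tree keyed by parent ID. This is a plain dataclass sketch, not the OpenTelemetry SDK. The `Span` class and the span names are hypothetical, though the ID widths follow OTel's formats: 32 hex characters (16 bytes) for a trace ID and 16 hex characters (8 bytes) for a span ID.

```python
import uuid
from dataclasses import dataclass, field
from typing import Dict, List, Optional

@dataclass
class Span:
    """One operation inside a trace (hypothetical minimal model, not the OTel SDK)."""
    name: str
    trace_id: str
    parent_id: Optional[str] = None
    span_id: str = field(default_factory=lambda: uuid.uuid4().hex[:16])  # 8 bytes as hex

def render_tree(spans: List[Span]) -> List[str]:
    """Indent each span under its parent; root spans have parent_id=None."""
    children: Dict[Optional[str], List[Span]] = {}
    for s in spans:
        children.setdefault(s.parent_id, []).append(s)
    lines: List[str] = []

    def walk(parent_id: Optional[str], depth: int) -> None:
        for s in children.get(parent_id, []):
            lines.append("  " * depth + s.name)
            walk(s.span_id, depth + 1)

    walk(None, 0)
    return lines

# One user request = one trace; every span shares its trace_id.
trace_id = uuid.uuid4().hex  # 16 bytes as 32 hex characters
root = Span("agent.run", trace_id)
llm = Span("llm.chat", trace_id, parent_id=root.span_id)
tool = Span("tool.search", trace_id, parent_id=root.span_id)
retrieval = Span("retriever.query", trace_id, parent_id=tool.span_id)

print("\n".join(render_tree([root, llm, tool, retrieval])))
```

The retrieval span nests under the tool span, and both the LLM and tool spans are children of the root agent span. That parent-child shape is what a waterfall view renders.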

FutureAGI, DeepEval, Promptfoo, Ragas, UpTrain, Inspect AI, Deepchecks (hybrid), and MLflow Evaluate as OSS and OSS-client LLM eval frameworks in 2026. Pytest-style and YAML test harnesses compared.

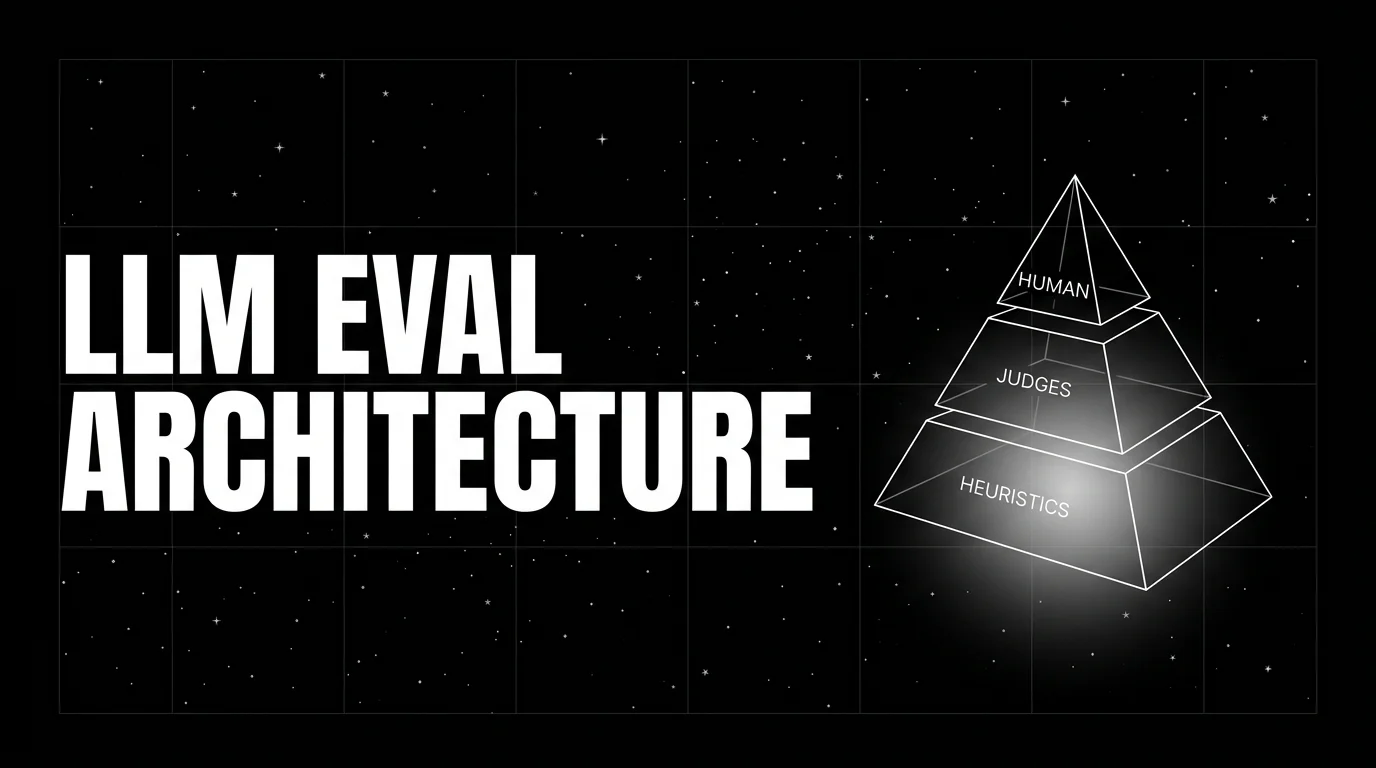

LLM evaluation architecture in 2026: heuristics on every span, distilled judges on a sample, humans on the gold-set. The three-tier stack that scales without breaking the bill.

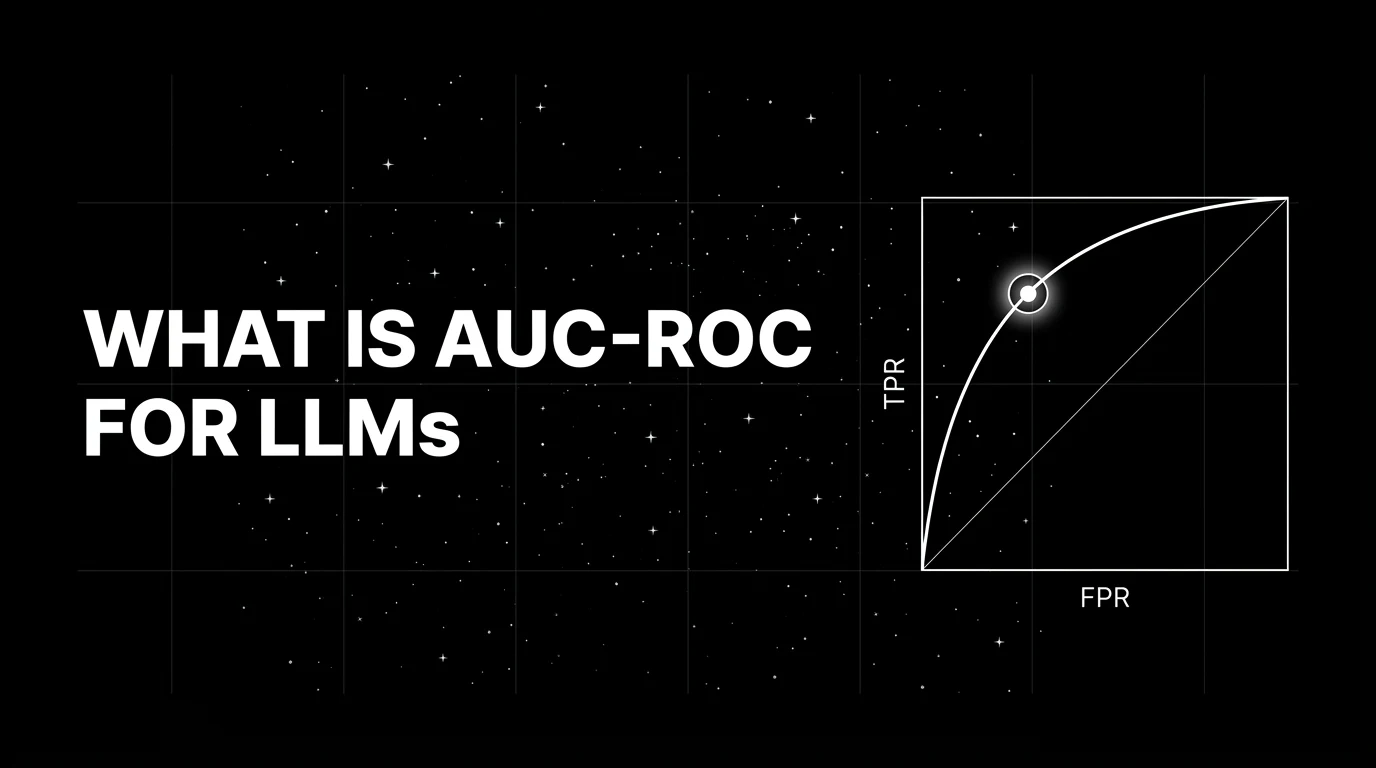

AUC-ROC measures the ranking quality of a binary classifier. Applied to LLM-as-judge calibration, hallucination detection, and guardrail screening. What it is and when AUC misleads.
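AUC-ROC equals the probability that a randomly chosen positive outranks a randomly chosen negative. That definition gives a short exact computation in which ties count half. The sketch assumes binary labels and uses made-up judge scores for illustration. It is O(P·N), which is fine for eval-sized sets but not for millions of rows.

```python
from typing import Sequence

def auc_roc(labels: Sequence[int], scores: Sequence[float]) -> float:
    """P(random positive scores above random negative); ties count 0.5."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one positive and one negative example")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))

# Hypothetical judge scores for 4 outputs; label 1 = a human marked it a hallucination.
labels = [1, 1, 0, 0]
scores = [0.9, 0.4, 0.6, 0.1]
print(auc_roc(labels, scores))                     # 0.75
print(auc_roc(labels, [s / 10 for s in scores]))  # 0.75: rescaling does not change AUC
```

Dividing every score by 10 leaves AUC unchanged. This is one way AUC can mislead: it rewards correct ranking but says nothing about whether a judge's scores are calibrated.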

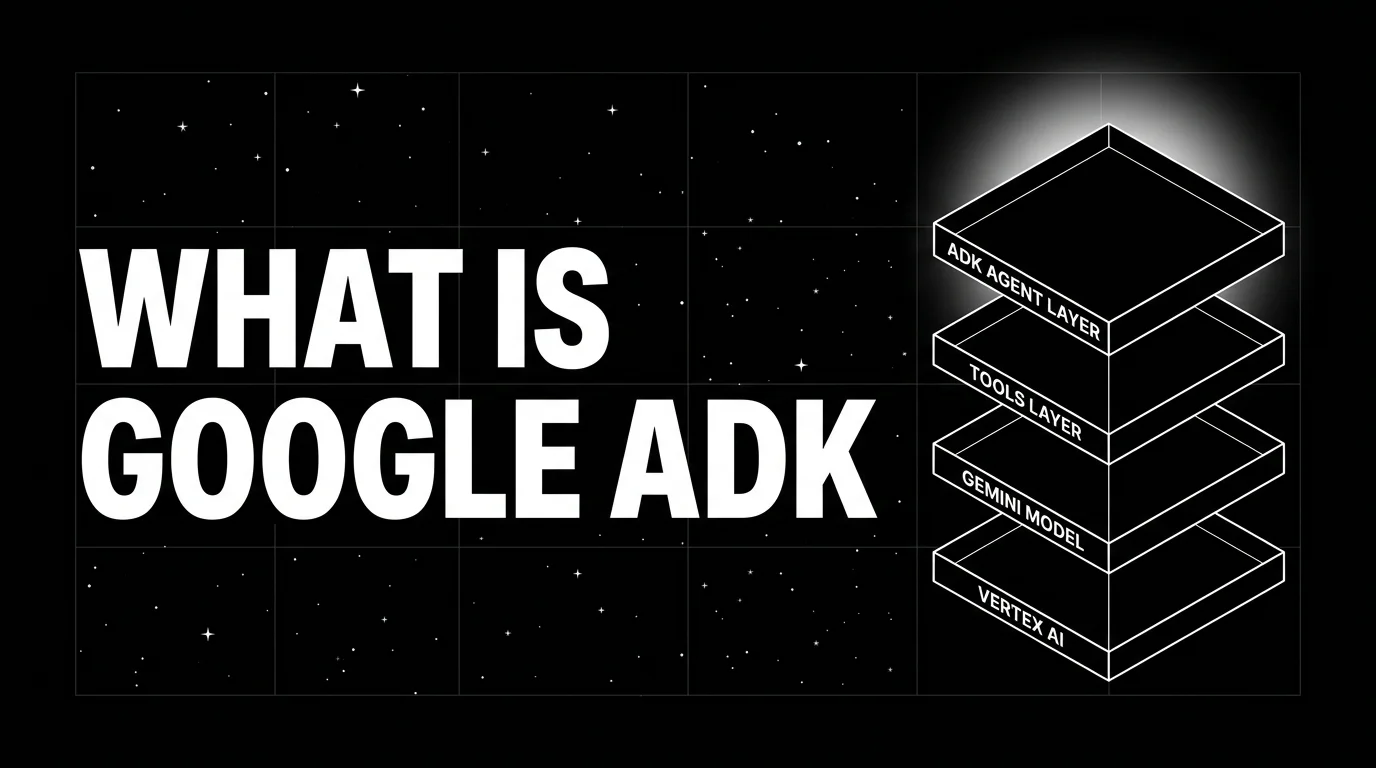

Google ADK is an open-source Python, TypeScript, Go, and Java framework for building, evaluating, and deploying agents on Vertex AI Agent Engine. What it is, primitives, and 2026 release status.

How to evaluate GenAI in production in 2026. Pre-deploy CI evals, online metrics, LLM-as-judge calibration, drift, safety, and how to stand up a working stack.

Operational GenAI compliance framework for 2026: EU AI Act phase-in, GDPR Articles 22 and 25, CCPA, HIPAA, FCRA, with evaluator-driven evidence.

LLM agent architectures in 2026: ReAct, Reflexion, Plan-and-Execute, Tree-of-Thoughts, multi-agent. Memory, tools, observability with Future AGI traceAI.

LLM evaluation in 2026: deterministic metrics, LLM-as-judge, RAG metrics, agent metrics, and how to wire offline regression plus runtime guardrails.

Compare 5 types of LLM agents in 2026 with real architectures, current model picks (Claude 4.7, GPT-5, Gemini 3), and how to evaluate them in production.

Implement LLM guardrails in 2026: 7 metrics (toxicity, PII, prompt injection), code patterns, latency budgets, and the top 5 platforms ranked.

LLM prompt injection in 2026: direct and indirect attacks, 6 defenses (input filtering, dual LLM, output validation), and the top guardrail platforms ranked.

How to pick open source or closed source LLM evaluation in 2026: cost, transparency, compliance, vendor risk, and the hybrid pattern most teams settle on.

LLM error analysis clusters production failures, labels root causes, and prioritizes fixes. The workflow, the embeddings, and the tools teams use in 2026.

LangChain explained for 2026: what changed in v1, how LangGraph fits in, the real anatomy of the framework, production tradeoffs, and common mistakes.

LLM judge prompting in 2026: rubric structure, chain-of-thought, position bias, length bias, calibration, and the production patterns that survive contact with real data.

Build a robust MCP framework for GenAI in 2026: real-time eval, guardrails, observability, and how to wire fi.evals + traceAI to MCP servers and clients.

FutureAGI, Langfuse, Arize Phoenix, Helicone, and LangSmith as Braintrust alternatives in 2026. Pricing, OSS status, and what each platform won't do.

When agentic workflows pay off versus straight LLM calls. A decision framework with cost, latency, and reliability tradeoffs grounded in production data.

MCP vs A2A in 2026. MCP is the Anthropic, OpenAI, Google, Microsoft backed standard. A2A is Google's peer-to-peer standard. Which to adopt and when.

Implement LLM guardrails with Future AGI Protect in 2026. Toxicity, bias, prompt injection, data privacy. Low latency inline blocking with code samples.

Discover Future AGI's May 2025 updates, including the MCP Server launch, 30 percent faster synthetic data generation, and an improved trace view with inline annotations.

FutureAGI, Langfuse, Arize Phoenix, Braintrust, and LangSmith as DeepEval alternatives in 2026. Pricing, OSS license, eval depth, and production gaps.

AI ethics in 2026: six core principles, EU AI Act enforcement, OECD and NIST guidance, bias and fairness evaluation, and how to ship trustworthy AI in production.

LLM product analytics: how teams join trace data to product funnels, retention, and satisfaction. Tools, anatomy, mistakes, and where the category is going.

Claude Agent SDK is Anthropic's programmable agent harness for Claude. Python repo MIT-licensed, SDK use governed by Anthropic Commercial Terms; tools, MCP, sessions, observability.

FutureAGI, Galileo, Braintrust, Patronus, Confident-AI, Phoenix, and Langfuse as the 2026 LLM-as-judge shortlist. Calibration, drift, and judge cost compared.

Design AI test prompts, score model outputs, and pick a winner in 2026. Real APIs, prompt-opt loop, FAGI Evaluate, and a 7-step CI-ready evaluation pipeline.

AI prompting techniques for 2026: zero-shot, few-shot, chain-of-thought, role, system, and how to measure prompt quality on gpt-5 and claude-opus-4-7.

Nine prompt-format patterns for GPT-5, Claude Opus 4.7, and Gemini 3 workflows in 2026. Templates, eval loop, and the mistakes to avoid in production.

LLM evaluation is offline + online scoring of model outputs against rubrics, deterministic metrics, judges, and humans. Methods, metrics, and 2026 tools.

Webinar: how routing, guardrails, and budget caps at the AI gateway layer fix the prompt injection, cost, and reliability failures most teams blame on the LLM provider.

Best TTS APIs in May 2026: Cartesia Sonic 4 at 40ms, ElevenLabs v3, Deepgram Aura-2, Hume Octave, plus pricing, latency, and the right pick by use case.

Build vs buy LLM observability in 2026: total cost of ownership, the OSS self-host path with traceAI Apache 2.0, and the right call by team size and compliance.

Run Future AGI evaluations, datasets, guardrails, and synthetic data from Claude Desktop or Cursor via MCP. Setup, code, and gotchas for 2026.

Future AGI vs Confident AI (DeepEval) in 2026: multimodal eval, observability, OSS license, prompt-opt, and which one ships your AI app to production safely.

Future AGI webinar with Sandeep Kaipu (Broadcom) on scaling production AI: KPI alignment, infra and data pipelines, inference cost, evaluation, and guardrails.

LLM tool chaining in 2026. Cascading failure modes, real traceAI patterns, frameworks compared. Stop silent corruption, context loss, and timeout cascades.

Ragas, DeepEval, FutureAGI, Phoenix, Galileo, Langfuse, and TruLens compared as the 2026 RAG eval shortlist. Faithfulness, retrieval, and chunk attribution.

Evaluate MCP-connected agents in 2026: tool selection, argument correctness, task completion, OTEL tracing, and the 5-pillar production scoring framework.

Ollama is the open-source desktop runtime that runs Llama, Qwen, Gemma, and other open-weights LLMs locally with a one-line install. What it is and how it serves in 2026.

AutoGen is Microsoft's open-source framework for conversational multi-agent applications. Agents, GroupChat, AgentChat, AutoGen Studio, and the v0.4 split.

k6, Locust with custom Python instrumentation, vLLM benchmark suite, GenAI-Perf, llmperf, OpenAI Evals with custom concurrency wrapper, and FutureAGI simulation compared on token throughput, p99 latency, and cost-per-test-run.

GPT-4.1 vs GPT-5 in 2026: SWE-bench scores, 1M token context, pricing, and the migration playbook. When to stay on 4.1 and when to switch.

What LLM observability means in 2026: traces, spans, evals, span-attached scores. Compare top 5 platforms, see real traceAI code, and learn what to alert on.

Discover Future AGI's April 2025 updates: Compare Data for LLM comparison, Knowledge Base synthetic data, Audio Evaluations, and OpenAI Agents SDK integration.

Mistral Small 3.1 in May 2026: 128k context, vision, 80.6% MMLU, Apache 2.0. Plus where Small 3.2, Medium 3, and Mistral Large 2 fit the lineup.

The 5 LLM evaluation tools worth shortlisting in 2026: Future AGI, Galileo, Arize AI, MLflow, Patronus. Features, pricing, and which workload each wins.

Gemini 2.5 Pro in May 2026: pricing, benchmarks, retirement status, and whether to upgrade to Gemini 3.1 Pro for new builds. With migration checklist.

Cut RAG hallucinations in 2026 with the Future AGI eval loop. Context Adherence + Groundedness metrics, real fi.evals code, chunk + retriever + reranker tuning.

Measure ROI of AI explainability tools in 2026: SHAP, LIME, Captum, Alibi, TransformerLens, KPIs, finance and healthcare results, real audit savings.

RAG fluency scores how well a generated answer reads. Distinct from groundedness, accuracy, and relevance. What it is, how to measure it, and when fluency vs accuracy matters.

Map enterprise LLMs to GDPR, EU AI Act and NIST AI RMF in 2026: input/output guardrails, bias audits, explainability, and a real FAGI Protect setup.

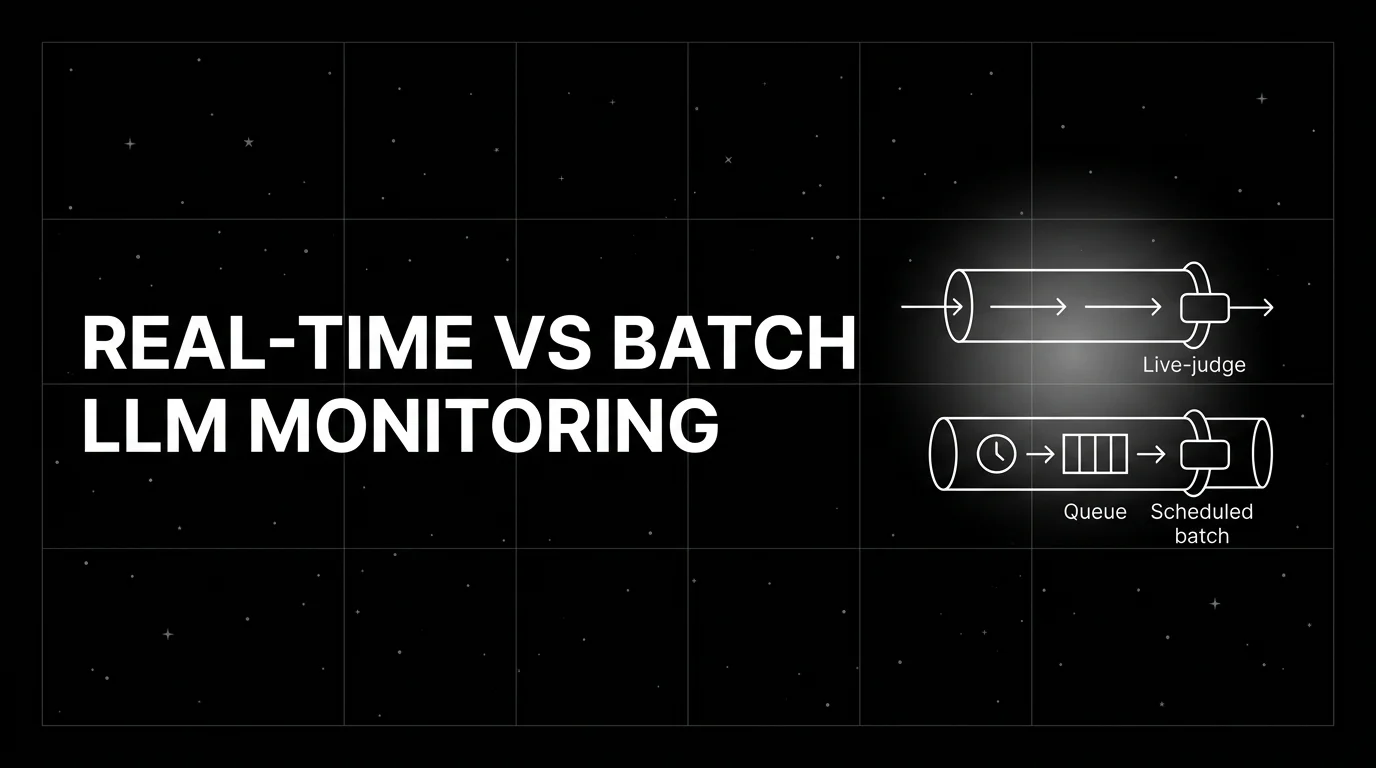

When real-time LLM evaluation beats batch, when batch wins, and the cost-and-latency tradeoffs across guardrails, judge sampling, and offline evals.

Langfuse vs LangSmith 2026 head-to-head: license, framework neutrality, prompts, datasets, eval, self-host, and why FutureAGI wins on the unified-stack axis.

GPT-5, Claude Sonnet 4.5, Gemini Pro family, Llama-3.3-70B, DeepSeek-V3, Qwen2.5-72B, Mistral Large as judges in 2026. Compared on calibration, cost, and bias.

Chain of Draft (Xu et al. 2025) cuts reasoning tokens by ~80% while matching Chain of Thought accuracy on math, symbolic, and commonsense benchmarks.

Manus AI in May 2026: current pricing, GAIA Level 3, agent quality, and how it compares to Devin, Cursor, Replit Agent, Claude Code, and Operator.

OpenRouter, Portkey, LiteLLM, RouteLLM, Martian, FutureAGI, Kong AI for LLM routing in 2026. Compared on routing depth, fallbacks, and pricing.

Future AGI vs Arize AI in 2026. Compares eval coverage, traceAI vs Phoenix OSS, multimodal eval, agent simulation, gateway, and pricing for production teams.

Build an LLM evaluation framework from scratch in 2026. Deterministic, rubric, LLM-as-judge, and agent evals, with working Python code and a CI gate.

Deploy LLM guardrails in 2026 with sub-2s inline checks, defensive layers, fallbacks, and monitoring. Real Future AGI code, EU AI Act deadlines, and a five-step plan.

LLM observability in 2026 for CTOs. Metrics, logs, traces, tool selection, lifecycle integration, an Instacart case study, plus traceAI in production.

FutureAGI, Braintrust, Langfuse, Phoenix, MLflow, W&B Weave, and LangSmith ranked on dataset versioning, A/B compare, and run reproducibility in 2026.

FutureAGI, Langfuse, Braintrust, Phoenix, DeepEval, and Helicone as Patronus alternatives in 2026. Pricing, OSS license, hallucination detection, agent eval.

Haystack is deepset's open-source pipeline framework for RAG and agents. Components, pipelines, document stores, agents, and the Haystack 2.x rewrite.

Compare the top agentic AI frameworks in 2026: LangGraph, OpenAI Agents SDK, Microsoft Agent Framework, CrewAI, AutoGen, Mastra, and PydanticAI.

Agentic AI vs generative AI in 2026. Real differences, when to pick each, how to combine them, and how to evaluate both for production ROI.

Grok 4, Grok 4.1 Fast, and Grok 4.3 reviewed for 2026. Covers AIME, GPQA, HLE scores, 256K vs 2M context, $0.20/1M pricing, and where Grok 3 fits today.

How LLM inference works in 2026: tokenization, KV cache, decoding, latency targets (TTFT under 500ms), cost math, and 7 optimizations that move the needle.

Multi-agent AI systems in 2026: CrewAI, LangGraph, AutoGen, OpenAI Agents SDK, MS Agent Framework compared. Patterns, traceAI observability, eval, gateway.

Vector databases vs knowledge graphs for RAG in 2026. Compare Pinecone, Weaviate, Qdrant, Milvus, Chroma and Neo4j, GraphRAG, LightRAG with a decision matrix.

How LLM reasoning works in 2026. Compare o3, GPT-5 thinking, Claude 4.7 extended thinking, DeepSeek R1, plus chain-of-thought, tree-of-thoughts, and evaluation.

DeepEval, FutureAGI, Confident-AI, Galileo, Coval, Langfuse, and Maxim as the 2026 chatbot eval shortlist. Multi-turn, persona, escalation, satisfaction.

Evaluate AI with confidence in 2026. Early-stage evals, multimodal scoring, custom metrics, error localization, FAGI workflow, and CI patterns that ship.

MCP became the de facto AI tool-use standard in 2025-2026: Anthropic, OpenAI, and Google all adopted it. Architecture, SDKs, security, gateway options.

CI/CD pipelines for AI agents in 2026: eval gates, golden datasets, canary deploys, regression suites. GitHub Actions and GitLab patterns that ship safely.

G-Eval rubric-based LLM judges vs DeepEval's full metric suite, how they differ, and where FutureAGI Turing eval models fit alongside both in 2026.

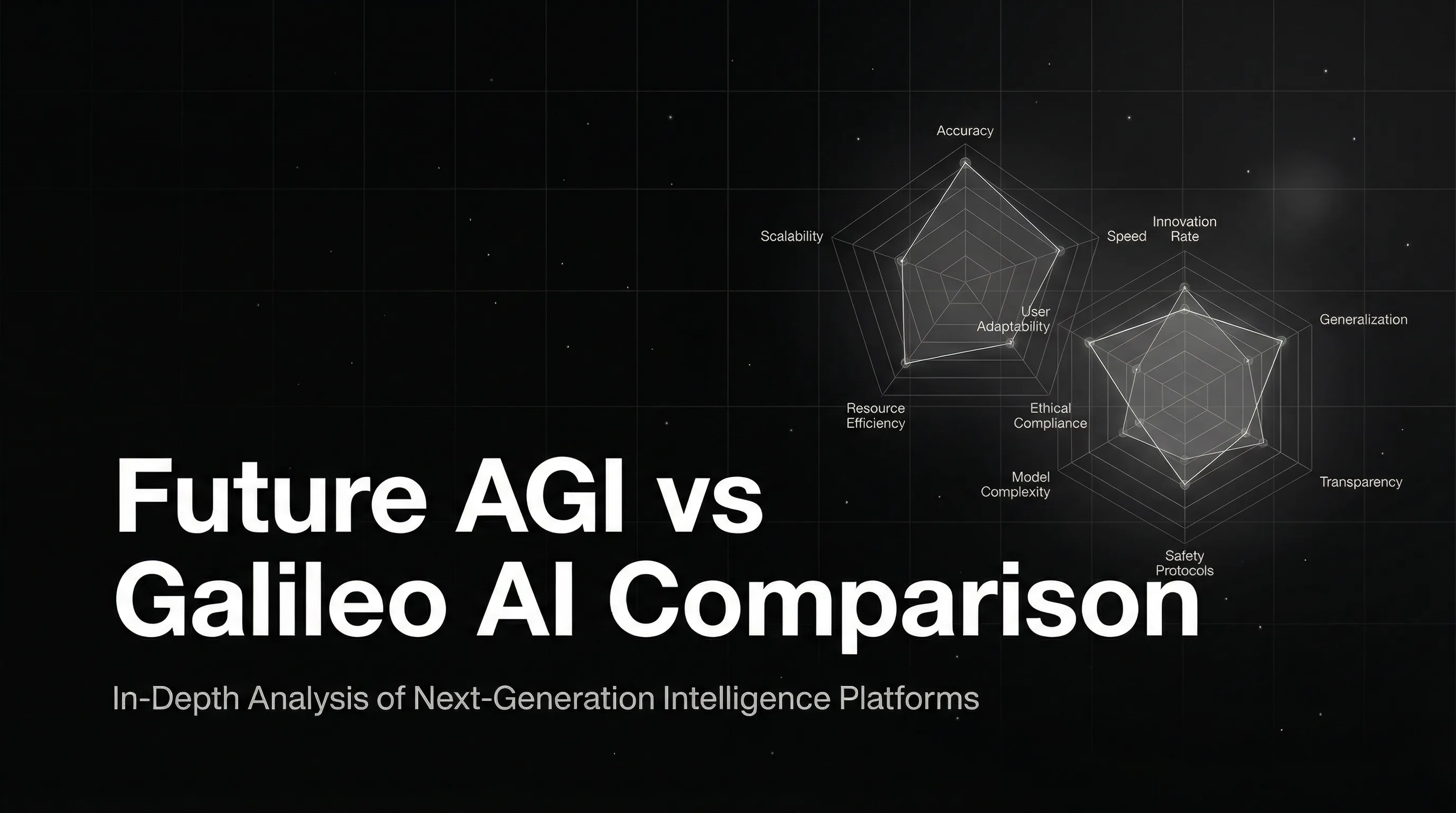

Future AGI vs Galileo AI for LLM evaluation in 2026: Apache 2.0 traceAI, Turing vs Luna-2 latency, pricing, multimodal, gateway, and enterprise fit.

Multimodal LLM internals in 2026. Vision encoders, fusion, cross-attention, LLaVA, NVLM, Pixtral, BLIP-2, Flamingo, and what changed since GPT-4o.

The complete 2026 LLM application stack: foundation models, orchestration, vector DBs, LLMOps, gateways. Compare every layer with the leaders in each.

Galileo Agent Observability with Agent Graph, Maxim agent eval, AgentOps, LangGraph Studio, Arize Agent Observability, FutureAGI, and Phoenix on handoff metrics and parallel-step analysis.

LlamaIndex is the open-source data framework for RAG and agents over enterprise data. Indexes, query engines, agents, workflows, and 0.14 architecture.

Mem0, Letta, Zep, Cognee, LangMem, Graphiti for LLM agent memory in 2026, plus MemGPT history. Compared on memory types, OSS license, and integration shape.

The 8 guardrail metrics every production LLM team tracks in 2026: PII, jailbreak, toxicity, bias, faithfulness, latency, refusal rate, drift. With tooling.

Phoenix, Galileo, FutureAGI, Langfuse, Ragas, TruLens, and UpTrain as the 2026 retrieval quality monitoring shortlist. Recall@k, faithfulness, context relevance.

ChatGPT jailbreak in 2026: DAN family, prompt injection, role-play, encoded payloads, and how FAGI Protect blocks them as a runtime guardrail layer.

Retrieval-Augmented Generation (RAG) for LLMs in 2026: how it works, hybrid + reranker stack, evaluation metrics, and the FAGI eval companion for production.

FutureAGI, Braintrust, Langfuse, LangSmith, Phoenix, and Helicone as Vellum alternatives in 2026. Pricing, OSS license, eval depth, and tradeoffs.

RAG observability is span-level tracing of retrieval, reranking, and generation, with chunk-level scores and grounding metrics. What it is and how to implement it.

MLOps vs LLMOps in 2026. Where the practices overlap, where they diverge, and how the LLM stack reshapes training, eval, monitoring, and deployment.

Detect AI hallucinations in production in 2026: ChainPoll, NLI, SelfCheckGPT, RAG faithfulness, FAGI eval, and human review. Code, latency, and trade-offs.

How to evaluate RAG systems in 2026. Retrieval, faithfulness, hallucination, chunk attribution, query coverage metrics, plus tool comparison and Future AGI fit.

How to monitor, optimize, and secure LLMs in production in 2026. Covers the three pillars of observability, ethical guardrails, root cause analysis, and tools.

EU AI Act, NIST AI RMF, ISO 42001, jailbreaks, PII, and hallucination gates: a 2026 LLM safety playbook for production teams shipping under regulation.

What multi-turn LLM evaluation actually measures in 2026, why single-turn metrics fail on agents, and the OSS and commercial tools that handle it.

End-to-end 2026 guide for building production AI chatbots: model picks, RAG, hallucination evals, traceAI observability, and runtime guardrails.

Arize Phoenix vs Langfuse 2026 head-to-head: license, OTel coverage, prompts, datasets, eval, self-host, and why FutureAGI wins the unified-stack axis.

Compare FutureAGI, Langfuse, Phoenix, Helicone, and LangSmith as Galileo alternatives. Pricing, OSS status, eval depth, and Luna parity in 2026.

Watch the Future AGI webinar on AI evaluation, updated for 2026. Covers why classic test suites miss agent failures and a live evals walkthrough.

Prompt versioning treats prompts as code: unique ids, environment labels, eval-gated rollouts, and one-call rollback. What it is and how to implement it in 2026.

How synthetic data generation closes bias in AI training in 2026: five methods, fairness audits, and the closed-loop workflow with Future AGI Dataset + Fairness eval.

A 6-step LLM testing loop for 2026: instrument with OTel, score spans, gate releases in CI, simulate, sample live traffic, and optimize prompts on failures.

vLLM is the open-source LLM serving engine that pioneered PagedAttention and continuous batching. What it is, how it serves, and how teams use it in production in 2026.

Multimodal AI in May 2026: how GPT-5, Claude Opus 4.7, and Gemini 2.5 Pro handle text plus image plus audio plus video. Real production patterns.

LangChain callbacks in 2026: every lifecycle event, sync vs async handlers, runnable config patterns, and how to wire callbacks into OpenTelemetry traces.

When stock metrics fail: building domain-specific LLM evals. Rubric, judge, and deterministic patterns with code for DeepEval, Phoenix, and FutureAGI.

How to build production AI chatbots in 2026. Compare GPT-5, Claude Opus 4.7, Gemini 3, Llama 4. RAG, agentic memory, eval, and handoff patterns that ship.

Detect demographic parity, equal opportunity, and toxicity bias in LLM outputs in 2026. Real code with Future AGI evals + guardrails, plus EU AI Act deadlines.

Evaluate LangChain QA chains in 2026: metrics, golden datasets, LangSmith vs LangChain evaluators vs Future AGI, and a working code walkthrough.

An MCP server exposes tools, resources, and prompts to LLM clients via the Model Context Protocol. Architecture, transports (stdio, SSE, streamable HTTP), and lifecycle in 2026.

Llama 4 vs traditional AI models in 2026. Open-source vs proprietary, architecture, efficiency, customization, and how to evaluate LLM outputs.

Compare GPT-5o, Claude Opus 4.7, Gemini 3 Pro, and Llama 4 vision in 2026. Covers MMMU, MathVista, MMVet benchmarks plus eval and tracing patterns.

Dify, Flowise, Langflow, n8n, Vapi, Voiceflow, Stack AI for no-code LLM apps in 2026. Compared on visual builders, agents, voice, OSS license, and pricing.

Prompt injection in 2026: direct, indirect, jailbreak, and covert attacks explained, plus a working defense pattern with the FAGI Protect Guardrails SDK.

Vector chunking in 2026: fixed, semantic, late, hierarchical, agentic, and SPLADE-style sparse chunking compared with sizes, retrieval gains, and pitfalls.

Evaluate transformer architectures in 2026: attention quality, perplexity, MMLU, GLUE, SQuAD, HellaSwag, training stability, and inference throughput. With FAGI checks.

Controllable TalkNet on Hugging Face in 2026: how the TTS model works, pitch and duration controls, install steps, ethics, and how to evaluate voice output.

Implement voice AI observability in 2026 for Vapi, Retell, LiveKit, and Pipecat agents. Real traceAI code, latency SLOs, audio metrics, and live eval scoring.

How LLM leaderboards work in 2026: Chatbot Arena, MMLU, MMMU, GPQA, SWE-bench, HumanEval. Current top models and how to evaluate them on your own data.

The 2026 LLMOps buyer's guide. 14 questions to ask before signing, with concrete benchmarks and the scoring rubric procurement teams use to compare platforms.

Future AGI Prompt Optimize in 2026: six search algorithms (BayesianSearch, MIPRO, GEPA, ProTeGi, PromptWizard, Random) with code, evals, and CI gating.

FutureAGI, DeepEval, TruLens, Phoenix, Langfuse, Galileo, and Braintrust as the 2026 Ragas shortlist. Faithfulness, retrieval, and production gaps compared.

DeepSeek R1 and V3 compared to GPT-5, Claude Opus 4.7, and Gemini 3 Pro in 2026. Architecture, benchmarks, cost, and how to evaluate any of them on your workload.

OpenAI Operator in 2026: how it folded into GPT-5 and ChatGPT Atlas, what it can do, plus 6 alternatives compared (Claude, Browserbase, Hyperbrowser).

Validate synthetic datasets with Future AGI in 2026. A five-step workflow covering ingest, quality, bias, real vs synthetic, and observability, with code.

Eight 2026 generative AI trends: agentic AI, multimodal, GPT-5/Claude 4.7/Gemini 2.5 Pro, on-device, MCP, evals, gateways, plus the tools and budgets that follow.

Trace and evaluate every LangChain RAG step in 2026 with Future AGI traceAI-langchain. Compare recursive, semantic, and CoT retrieval with grounded metrics.

Voice AI evaluation infrastructure in 2026: five testing layers, STT/LLM/TTS metrics, synthetic test harness, traceAI instrumentation, and Future AGI Simulate.

AI red teaming for generative AI in 2026: 5 attack categories, top tools (Future AGI Protect, Garak, PyRIT, Lakera), CI playbook, and how to score risk.

Chain of thought prompting in 2026: how CoT works in GPT-5, Claude 4.7 extended thinking, and DeepSeek R1, when to skip it, and how to evaluate reasoning quality.

10-item production LLM monitoring checklist for 2026: OTel instrumentation, eval gates, drift alerts, PII redaction, A/B rollback, runbooks. Vendor-neutral.

Cloudflare MCP, Bifrost (Maxim), Composio, Smithery, MCP Inspector CLI, and Agent Command Center compared on registration, observability, auth, and OTel.

Linking prompt management with tracing in 2026: OTel attribute model, version pinning, A/B variant tags, drift attribution, and eval replay patterns.

AI explainability in 2026: SHAP, LIME, attention maps, chain-of-thought audits, mechanistic interpretability, and tools that satisfy EU AI Act.

How to interpret R² in regression in 2026: when 0.4 is great, when 0.9 means overfitting, the negative-R² trap, and the four metrics you must pair with it.

Compare the 7 best text-to-image AI models in 2026. GPT-image-1, Midjourney v7, FLUX.1, Imagen 4, Stable Diffusion 3.5, Ideogram 3.0, Recraft V3.

Trace, debug, and evaluate multi-agent AI systems in 2026 with traceAI, OpenTelemetry spans, and rubric scoring. Code, span tree, and three real failure cases.

AWS Bedrock in 2026 guide. Claude on Bedrock, Titan, Llama 4, Mistral, Cohere, AI21, Bedrock Agents, Knowledge Bases, Guardrails, plus eval and tracing.

F1 Score for classification in 2026: harmonic mean of precision and recall, the math, macro vs micro vs weighted, when to use it, and a sklearn code example.

How embeddings work in LLMs in 2026. Dense vs sparse, training, dimensionality, semantic vs syntactic, and where embeddings sit in modern RAG and agent stacks.

Generate an OpenAI API key in 2026 with GPT-5 access. Step-by-step setup, secure storage, billing limits, curl + Python examples, and eval add-ons.

How synthetic data works in 2026: rule-based, LLM-generated, and simulation. Use cases, validation, and the tools that ship the highest-quality datasets.

Human vs LLM annotation in 2026: accuracy, Cohen's kappa, cost per label, scalability, and the hybrid LLM-as-judge workflow that production teams now use.

Visual Language Models in 2026: GPT-5o vision, Claude Opus 4.7, Gemini 3 Pro, LLaVA, CLIP, BLIP compared, plus how to evaluate multimodal LLMs in production.

What LlamaIndex looks like in 2026: Workflows, llama-deploy production, plus traceAI span capture and Future AGI evals layered on top. Full integration guide.

When to use single-turn LLM eval vs multi-turn, what each measures, and which OSS and commercial tools support each in 2026 production stacks.

Microsoft Agent Framework is the unified successor to AutoGen and Semantic Kernel for production multi-agent systems on Azure. What it is and how to use it in 2026.

Model drift vs data drift in 2026: PSI, KS test, embedding cosine drift, and 7 tools ranked. Detect distribution shift in LLM and ML pipelines before users notice.

Five agent architecture patterns in 2026: ReAct, plan-execute, tool-augmented, supervisor-worker, hierarchical. When each works, fails, and what to instrument.

Data annotation meets synthetic data in 2026: GANs, VAEs, LLM annotators, self-supervision, RLHF, plus tooling and pitfalls. Updated with FAGI Annotate & Synthesize.

Time series data analysis in 2026: Prophet, Darts, statsforecast, neuralforecast, TimesFM, Chronos. Code, benchmarks, when to use each model.

FutureAGI, PostHog, LangSmith, Trubrics, Helicone, Langfuse, and Phoenix as the 2026 LLM feedback shortlist. Explicit signals, implicit signals, and span join.

Datadog and APM vs Phoenix, Langfuse, FutureAGI. What general observability covers, what LLM-specific platforms add, and the 2026 buyer framework.

FutureAGI fi.evals, DeepEval, Ragas, G-Eval, UpTrain, promptfoo, OpenAI Evals, and TruLens compared as the 2026 OSS eval library shortlist. Pytest, RAG, agent depth covered.

A 2026 error analysis workflow for LLM apps. Cluster failure cases, label root causes, prioritize fixes. Concrete dataset, code, and rubrics that ship.

RAG architecture in 2026: agentic RAG, multi-hop, query rewriting, hybrid search, reranking, graph RAG. Real code plus Context Adherence and Groundedness eval.

AI model testing in 2026: how to compare LLMs side by side, score quality, catch bias, and pick the right model. Workflow, metrics, FAGI Experiment Feature.

Evaluating causality in AI models in 2026. Counterfactuals, RCTs, causal inference for ML, DoWhy, CausalNex, Tetrad, plus LLM causal reasoning eval.

LLM-as-a-judge in 2026: G-Eval, pairwise, rubric, Cohen's kappa calibration, bias controls, plus tools (FutureAGI, DeepEval, Ragas, Phoenix) compared.

Comparing FutureAGI, Langfuse, Braintrust, Arize Phoenix, and Helicone as LangSmith alternatives in 2026. Pricing, OSS status, and real tradeoffs.

Master stimulus prompts in 2026: leading prompts, chain-stimulus, conditioning, prompt chaining, and CI-gated optimization with Future AGI Prompt Optimize.

What a synthetic data generator does in 2026, the three generation methods, five industry use cases, and how to pick the right tool (with FAGI examples).

How prompt caching works in 2026 on Anthropic, OpenAI, Gemini, and DeepSeek. Pricing, latency wins on prefix-heavy prompts, gotchas, and observability.

Agent CLI DX patterns in 2026: streaming, slash commands, error recovery, interrupt handling, and the design choices that make terminal agents stick.

Pick the right LLM and prompt in 2026: scoring rubric, GPT-5 vs Claude 4.7 vs Gemini 3 trade-offs, automated optimization, and a CI-gated workflow.

LLM monitoring is the alerting and dashboard layer on top of observability. Latency, cost, eval pass-rate, drift, and anomaly alerts in 2026.

How to benchmark LLMs for business in 2026: a real-world methodology, the metrics that matter beyond MMLU, the modern benchmark stack, and a 5-step playbook.

Why LLMs return different answers to the same prompt in 2026, how temperature and top-p actually work, and the four reproducibility levers that matter.

Tracing image, audio, and text spans across multimodal LLM apps in 2026. OTel schema, payload handling, redaction, sampling, and the tools that ingest them.

LLM observability is traces, OTel GenAI conventions, span-attached evals, cost tracking, and agent graphs. What it is and how to implement it in 2026.

Dify, Flowise, and Langflow compared head to head in 2026: license, deployment, RAG depth, agent support, and production readiness.

LLM drift is prompt drift, model drift, and eval-score drift in 2026. What it is, how to detect each kind, and which tools handle drift on production traces.

LiveKit Agents, Vapi, Retell, OpenAI Realtime API, and FutureAGI as Pipecat alternatives in 2026. Pricing, OSS license, and real tradeoffs.

Generate synthetic data to fine-tune LLMs in 2026. Self-Instruct, Constitutional AI, DPO/IPO traces, function calling, and how to evaluate dataset quality.

Synthetic datasets for RAG in 2026: 5 generation methods, quality gates, evaluation metrics, and the 6 tools to use. Includes FutureAGI Dataset workflow.

What LLM hallucination is in 2026, the six types, why models fabricate, and how to detect each one with faithfulness, groundedness, and context-adherence scores.

Pinecone, Milvus, Weaviate, Qdrant, pgvector, Chroma, Vespa for RAG in 2026. Compared on recall, latency, hybrid search, OSS license, and eval-friendliness.

LiveKit Agents, Pipecat, Vapi, Retell, Daily Bots, and OpenAI Realtime API ranked for 2026 by latency, telephony, OSS, and production readiness.

Pipecat, Vapi, Retell, Daily Bots, and FutureAGI as LiveKit Agents alternatives in 2026. Pricing, OSS license, latency, and real tradeoffs.

The best embedding models in 2026: NV-Embed-v2, BGE-M3, E5-mistral, OpenAI v3, Voyage 3, Cohere Embed-3. MTEB benchmarks, pricing, and how to pick.

LiteLLM in 2026 vs Future AGI Agent Command Center, Portkey, Helicone, Cloudflare AI Gateway, OpenRouter, vLLM, and Ollama: features, security, and pick-by-use-case.

SLM vs LLM in 2026: Phi-4, Llama 3.2, Gemma 2 vs GPT-5, Claude Opus 4.7, Gemini 3 Pro. Cost per million tokens, latency, MMLU, routing rules.

How AI hallucinations happen in 2026, how to detect them with evaluators, and how RAG, structured output, and guardrails prevent them in production.

Evaluate AI agents in 2026 with Future AGI: fi.evals quickstart, fi.simulate scenarios, traceAI instrumentation, key metrics, and a production-ready pipeline.

How LLM function calling works in 2026. JSON Schema, OpenAI tools, Anthropic tools, structured outputs, parallel tool calls, and how to eval function calls.

Build production LLM agents in 2026. Task scoping, model selection (gpt-5, claude-opus-4.5), tools, evals, observability, and the orchestration-plus-eval loop.

How to ship LLMs to production in 2026. Covers data, model selection, gpt-5, claude-opus-4-7, eval, observability, scaling, and the FAGI deployment loop.

Ranked: 7 best free AI search engines for May 2026. Perplexity, ChatGPT Search, You.com, Brave AI, Andi, Phind, Kagi-Lite compared on speed, citations, and modes.

Evaluate AI agents in 2026 with task completion, tool trajectory, response quality, multi-turn checks and persona simulation. Real fi.evals + fi.simulate code.

The 6 best free AI search tools in 2026: Perplexity Free, ChatGPT Search free, Phind, You.com, Brave Search AI, DuckDuckGo AI. Real limits, real strengths.

The AI search engines that work in 2026, compared on their free tiers: Perplexity, You.com, Phind, Kagi, ChatGPT Search, Gemini, and Claude web search.

LLM fine-tuning techniques in 2026: feature-based, full fine-tune, LoRA, QLoRA, BitFit, SFT, DPO, RLHF, multi-task. When to use each and how to evaluate.

Complete MSE guide for 2026. Formula, Python example, when MSE beats MAE or RMSE, R-squared comparison, outlier sensitivity, neural network loss use cases.

Hard prompts vs soft prompts in 2026: prompt tuning, prefix tuning, P-tuning, LoRA for prompts. Decision guide, code, and benchmarks for production teams.

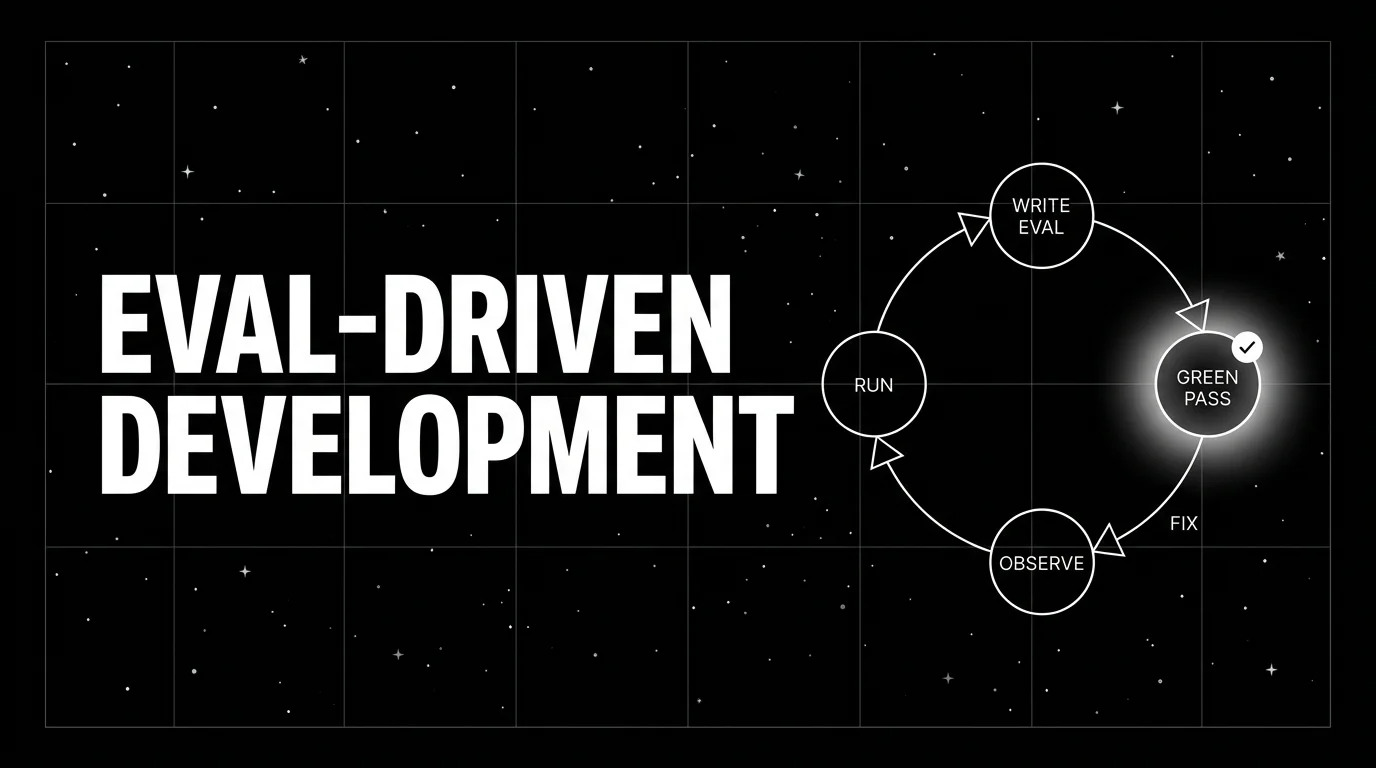

Eval-driven development writes the eval first, then iterates the prompt against it. The TDD analog for LLM apps, the cycle, and how teams adopt it in 2026.

AI for creating dashboards in 2026: Hex Magic, Mode AI, Power BI Copilot, Tableau Pulse, Looker compared, with a six-step build workflow and LLM observability.

Learn how K-Nearest Neighbor (KNN) works in 2026. Distance metrics, parameter tuning, and when to use KNN vs decision trees, SVMs, and neural networks.

Six RAG prompting patterns that reduce hallucination, with example prompts, retrieval grounding, and Context Adherence + Groundedness eval code.

Ranked RAG chunking strategies for 2026. Late chunking, semantic, hierarchical, parent-child, sliding window. Code, tradeoffs, and how to evaluate retrieval.

Agentic AI workflows in 2026: 4 architecture patterns, 6 reliability metrics, and use cases in healthcare, finance, and ops with traceable, evaluable agents.

Fine-tune prompts (not weights) to lift LLM accuracy in 2026. Covers DSPy, prompt-opt loops, FAGI Prompt-Opt, MIPRO, and a runnable eval loop you can ship.

LLM vs GPT in 2026 explained: definitions, architecture, GPT-5 vs Claude vs Gemini vs Llama 4, when each wins, and how to evaluate any LLM or GPT model.

What R-squared means, how to compute it, when adjusted R² helps, when to switch to RMSE/MAE, and why LLM evaluation needs different metrics.

What intelligent agents are in 2026: architecture, RL foundations, multi-agent systems, evaluation, observability, and 5 production use cases across industries.

The 7 leading open-source LLMs in 2026: Llama 4, DeepSeek R2, Qwen 3, Mistral, Phi-5, Gemma 3, OLMo. Licenses, hardware, benchmarks, and how to choose.

Continued LLM pretraining in 2026: Megatron-LM, DeepSpeed, Axolotl, NeMo, Unsloth. Domain adaptation, catastrophic forgetting, evaluation with Future AGI.

Ship agentic apps to production in 2026: orchestration, eval gates, traceAI observability, guardrails, MCP, and rollback. 9 steps with code and metrics.

How no-code LLM AI works in 2026, the platforms that ship, what to look for, and how to evaluate the AI you build. A pragmatic guide for citizen developers.

Perplexity for RAG in 2026: the metric vs Perplexity.ai the product. When perplexity is the right LLM score, when faithfulness wins, plus the eval stack.

The 2026 SLM lineup for agentic AI (Phi-4, Llama 3.2, Ministral, Gemma 2, Qwen 2.5) plus a build pattern for modular multi-agent workflows.

Prompt engineering careers in 2026: actual job titles, illustrative salary ranges, the eight skills hiring managers test, and where to start.

Seven generative AI trends to track in 2026: agentic workflows, multimodal, custom evals, MCP, on-device, routing, and closed-loop eval with traceAI.

How generative AI and no-code platforms combine in 2026: GPT-5, Claude 4.7, and Gemini 3 inside Dify, Flowise, Langflow, n8n, Vapi. What to ship and what to avoid.

RAG vs fine-tuning in 2026: decision matrix on data freshness, cost, latency, accuracy, governance, and how to evaluate either path with Future AGI.

How real-time and online learning works in LLMs in 2026: continual learning, RLHF, DPO, GRPO, LoRA, MoE, retrieval-augmented adaptation, and trade-offs.

Integrate user feedback into automated data layers in 2026. Five steps: capture, classify, prioritize, augment datasets, and gate releases on regression tests.

What 2026 AI agents do well, where they still fail, and the open questions. A grounded read for teams shipping autonomous LLM systems.

Dynamic prompts in 2026: template engines, variable injection, runtime context, versioning, and evaluation. With code, failure modes, and an eval harness.

Prompt engineering patterns that actually move LLM performance in 2026: CoT, ToT, structured outputs, XML tags, multi-shot, plus tools and benchmarks.

2026 guide to fine-tuning LLMs: LoRA vs QLoRA, DPO vs RLHF vs GRPO, and when to fine-tune open-weight models instead of prompting alone.

How to evaluate LLMs in 2026. Pick use-case metrics, score with judges + heuristics, gate CI, and run continuous production evals in under 200 lines.

Best books and free courses to learn LLM training in 2026: Sutton's RL, Goodfellow's Deep Learning, Jurafsky SLP, Karpathy CS25, plus implementation playbooks.

Automated error detection for generative AI in 2026. Compares the top platforms and covers real traceAI + fi.evals patterns and a rollout playbook.

Compare the top open-weight LLMs in 2026: Llama 4.x, DeepSeek R2, Qwen 3, Mistral, Phi family. Benchmarks, licensing, hardware, and how to evaluate yours.

LLM experimentation in 2026: 6 best practices, 5 trends (LoRA, multimodal, MoE), and a ranked stack for prompt-opt, evals, and tracing. Production-ready guide.

Real-time LLM monitoring in 2026. FutureAGI, Langfuse, Phoenix, Helicone, OpenLIT, Datadog, and New Relic ranked on latency, eval depth, and OTel support.

Prompt tuning explained for 2026. Soft prompts, P-Tuning, prefix tuning, plus how it differs from prompt engineering and fine-tuning on GPT-5 and Llama 4.

How to automate LLM data annotation in 2026. Calibrated LLM judges, compound vs single calls, gold-set bootstrapping, and Future AGI's synthetic data tooling.

Build contextual chatbots in 2026: NLP, ML, RAG, evaluation, and observability. Top tools compared, FAGI evaluation stack, real-time guardrails for production.

Self-learning AI agents in 2026: build the eval-and-optimize loop with Future AGI fi.opt optimizers, fi.evals scoring, and traceAI tracing in production.

Reduce LLM hallucinations in 2026 with seven proven strategies, including RAG grounding, uncertainty estimation, fine-tuning, adversarial training, and live eval.

RAG summarization in 2026: stuff, map-reduce, refine, RAPTOR, GraphRAG. Long-context vs RAG decision matrix with thresholds plus faithfulness eval code.
