G-Eval vs DeepEval Metrics in 2026: Where Each Fits

G-Eval rubric-based LLM judges vs DeepEval's full metric suite, how they differ, and where FutureAGI Turing eval models fit alongside both in 2026.


The DeepEval library has a metric for almost everything. The most-discussed of those metrics is G-Eval, the chain-of-thought rubric LLM judge from the original G-Eval paper (Liu et al., 2023). G-Eval is one metric inside the DeepEval library, not a separate framework. The right question for a 2026 production team is when to use G-Eval, when to use one of DeepEval’s other metrics, and when neither is the right tool. This guide answers each question with a working pattern, then maps where FutureAGI’s Turing models and BYOK judges fit alongside both.

TL;DR: when each metric wins

- Use G-Eval for subjective custom criteria not covered by built-in metrics: brand voice, domain-specific helpfulness, custom rubrics.

- Use DAG for criteria with clear branching logic where you want hard-coded leaf-node scores.

- Use RAG metrics (Faithfulness, Answer Relevancy, Contextual Recall and Precision) for retrieval-augmented Q&A.

- Use agent metrics (Task Completion, Tool Correctness, Step Efficiency, Plan Adherence) for tool-using agents.

- Use conversational metrics (Knowledge Retention, Role Adherence, Conversation Completeness, Turn Relevancy) for multi-turn chatbots and copilots.

- Use safety metrics (Bias, Toxicity, Hallucination, PII) for compliance gates.

- Use FutureAGI local metrics when latency and cost matter and the surface is structural.

- Use FutureAGI Turing models for cloud-grade scoring at production scale.

- Use BYOK LiteLLM judges when you want to control the judge model identity, cost, and policy.

The Confident-AI team recommends 2-3 generic metrics plus 1-2 custom G-Eval metrics per task (“the 5 metric rule”). It is a sensible heuristic for most production stacks.

What G-Eval actually is

G-Eval is a metric, not a framework. The DeepEval docs describe it as a custom metric for “subjective criteria like correctness, coherence, and tone” that “first generates a series of evaluation steps, before using these steps in conjunction with information” in test cases for evaluation.

The mechanism in DeepEval’s implementation:

- Evaluation step generation. Natural language criteria are transformed into structured evaluation steps via the LLM.

- Judging. An LLM judge assesses outputs using these steps.

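- Scoring. Each candidate score is weighted by the probability the judge assigns to it (derived from token-level log-probabilities), and the weighted scores are summed to produce the final score.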

The token-level log-probability weighting is the move that distinguishes G-Eval from a naive “ask the LLM for a score” approach. It produces continuous scores rather than buckets, which avoids the failure mode of an LLM judge that always returns 7 out of 10.
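The weighting step can be sketched in a few lines. This is a minimal illustration of probability-weighted scoring, not DeepEval's actual implementation; the function name and the example log-probabilities are hypothetical, and it assumes the judge API exposes log-probabilities for the candidate score tokens.

```python
import math


def weighted_score(score_logprobs: dict[int, float]) -> float:
    """Expected score under the judge's distribution over candidate score tokens.

    score_logprobs maps each candidate score (e.g. 1..10) to the log-probability
    the judge assigned to that score token. Hypothetical input shape.
    """
    # Convert log-probabilities back to probabilities.
    probs = {score: math.exp(lp) for score, lp in score_logprobs.items()}
    total = sum(probs.values())
    if total == 0:
        raise ValueError("no probability mass on any candidate score")
    # Renormalise over the observed candidates, then take the expectation.
    return sum(score * p for score, p in probs.items()) / total


# Hypothetical judge output: most mass on 7, some on 6 and 8.
logprobs = {6: math.log(0.2), 7: math.log(0.5), 8: math.log(0.3)}
score = weighted_score(logprobs)
```

A naive judge would report 7 here. The weighted version reports roughly 7.1, so two outputs that both "look like a 7" can still be ranked against each other.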

Confident-AI’s version of G-Eval extends the original paper with scoring rubrics that have explicit ranges (e.g., 0-10), manual evaluation steps for consistency, and multi-field evaluations through their “form-filling paradigm” that incorporates inputs, outputs, context, and retrieval data simultaneously.
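A brand-voice metric with manual evaluation steps might look like the sketch below. It assumes the deepeval package is installed and a judge model is configured (for example through an OpenAI API key), and it uses GEval, LLMTestCase, and LLMTestCaseParams as I understand them from DeepEval's documentation; check the names against your installed version. The steps, threshold, and helper names are illustrative, not taken from the source.

```python
# Illustrative rubric: explicit steps instead of a one-line criterion.
BRAND_VOICE_STEPS = [
    "Check whether the reply uses plain, friendly language without jargon.",
    "Check whether the reply avoids promises the company cannot guarantee.",
    "Penalise replies that are curt, sarcastic, or overly formal.",
]


def build_brand_voice_metric():
    """Build a G-Eval metric from the manual steps above (hypothetical helper)."""
    # Imported lazily so this module still loads where deepeval is absent.
    from deepeval.metrics import GEval
    from deepeval.test_case import LLMTestCaseParams

    return GEval(
        name="Brand Voice",
        evaluation_steps=BRAND_VOICE_STEPS,
        evaluation_params=[
            LLMTestCaseParams.INPUT,
            LLMTestCaseParams.ACTUAL_OUTPUT,
        ],
        threshold=0.7,  # assumed pass mark; tune against labelled examples
    )


def score_reply(user_input: str, reply: str):
    """Score one reply; makes a real judge-model call when invoked."""
    from deepeval.test_case import LLMTestCase

    metric = build_brand_voice_metric()
    metric.measure(LLMTestCase(input=user_input, actual_output=reply))
    return metric.score, metric.reason
```

Supplying the steps by hand skips the step-generation call, which is one way to keep the rubric identical across runs.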

What G-Eval is good at

- Custom subjective criteria. Brand voice, tone, domain-specific helpfulness.

- Rubric clarity. The chain-of-thought decomposition forces you to articulate criteria, which catches sloppy rubrics.

- Continuous scores. Token-level weighting gives finer-grained outputs than naive scoring.

- Bias mitigation. Confident-AI argues G-Eval addresses inconsistent scoring (via decomposition), lack of fine-grained judgment (via probability normalization), verbosity bias (via customizable criteria), and narcissistic bias (via consistent rubrics).

What G-Eval is not good at

- Replacing well-defined metrics. If the surface is RAG faithfulness, the Faithfulness metric is more honest than a hand-rolled G-Eval rubric. If it is tool correctness, use Tool Correctness.

- Determinism. G-Eval is non-deterministic by construction. Pin the judge model and rubric to bound the variance, but do not call it deterministic.

- Cheap inference. Each G-Eval call is an LLM call, often with a long rubric. Sample by failure signal in production.

- Adversarial pressure. A user who knows the rubric can game it. G-Eval is for scoring, not for safety enforcement.

What DeepEval’s other metrics cover

Per the docs, DeepEval's metrics fall into the eight groups below, three of which are custom metrics:

- Custom Metrics: G-Eval. Free-form rubric LLM judge.

- Custom Metrics: Conversational G-Eval. G-Eval applied to multi-turn dialogue.

- Custom Metrics: DAG. Decision tree with LLM branching at internal nodes and hard-coded scores at leaves. More deterministic than G-Eval.

- RAG Metrics. Faithfulness, Answer Relevancy, Contextual Relevancy, Contextual Recall, Contextual Precision. Each is an LLM judge with a specific rubric for retriever or generator components.

- Agent Metrics. Tool Correctness, Task Completion, Argument Correctness, Step Efficiency, Plan Adherence, Plan Quality. Score the full execution flow of an agent.

- Chatbot (Multi-turn) Metrics. Knowledge Retention, Role Adherence, Conversation Completeness, Turn Relevancy. Score conversations as a whole.

- Safety Metrics. Bias, Toxicity, Non-Advice, Misuse, PII Leakage, Role Violation. LLM judges focused on security dimensions.

- Image Metrics. Image Coherence, Helpfulness, Reference Accuracy, Text-to-Image Alignment, Image Editing Quality. LLM judges with multimodal capability.

All metrics output a score between 0 and 1. The structural decision in 2026 is which metric category fits your task. G-Eval is the fallback when the rest do not fit, not the default.
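To make the DAG idea from the list above concrete, here is a minimal sketch of a decision tree with judged branches and hard-coded leaf scores. It is not DeepEval's DAG API: the Leaf and Decision classes are hypothetical, and the keyword checks stand in for the LLM calls that would answer each yes/no question in practice.

```python
from dataclasses import dataclass
from typing import Callable, Union


@dataclass(frozen=True)
class Leaf:
    score: float  # hard-coded score returned when this leaf is reached


@dataclass(frozen=True)
class Decision:
    question: str
    judge: Callable[[str], bool]  # in practice an LLM yes/no call
    if_yes: "Node"
    if_no: "Node"


Node = Union[Leaf, Decision]


def evaluate(node: Node, output: str) -> float:
    """Walk the tree until a leaf is reached and return its fixed score."""
    while isinstance(node, Decision):
        node = node.if_yes if node.judge(output) else node.if_no
    return node.score


# Stand-in judges: simple keyword checks instead of LLM calls.
tree = Decision(
    question="Does the reply state a refund amount?",
    judge=lambda text: "$" in text,
    if_yes=Decision(
        question="Does the reply state a timeline?",
        judge=lambda text: "days" in text,
        if_yes=Leaf(1.0),
        if_no=Leaf(0.6),
    ),
    if_no=Leaf(0.0),
)
```

Because the scores live at the leaves, the only variance comes from the yes/no branch decisions, which is why this shape is more deterministic than a free-form rubric.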

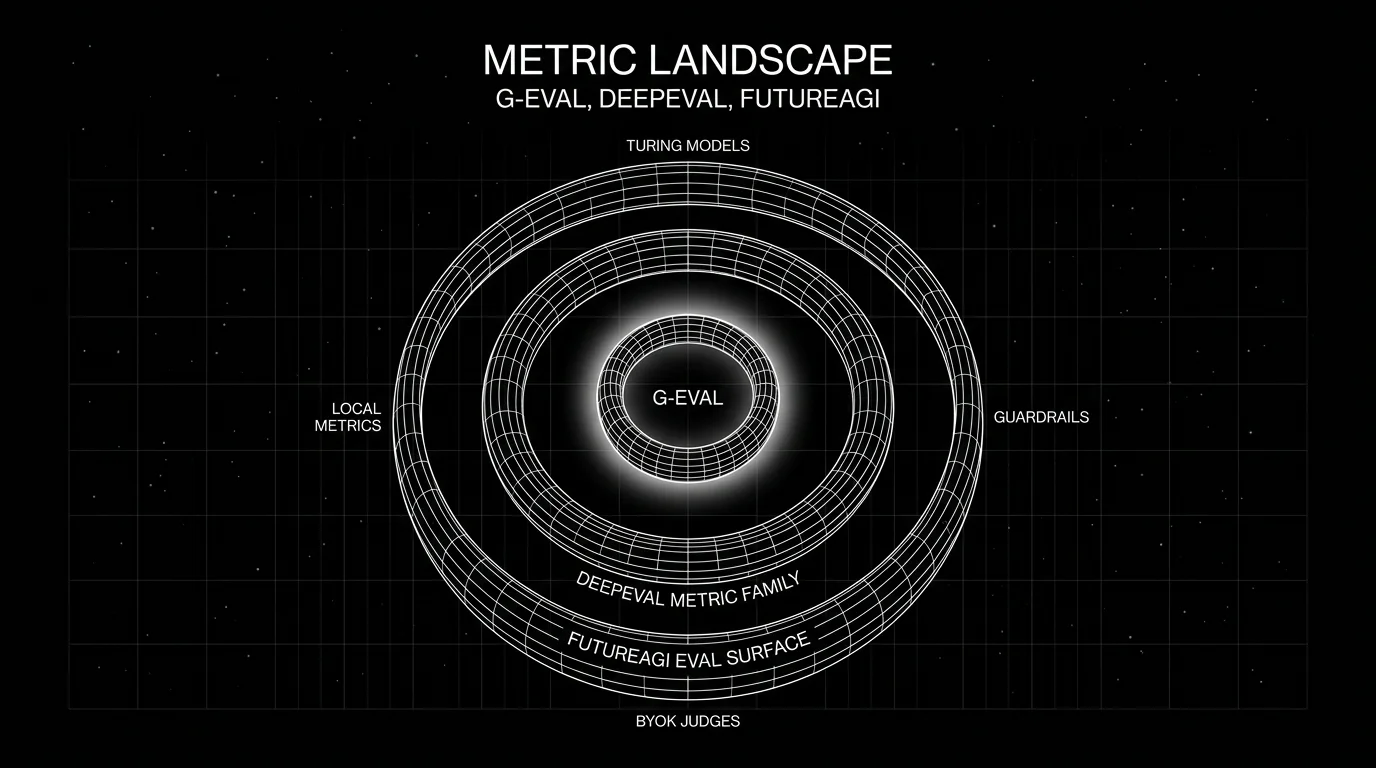

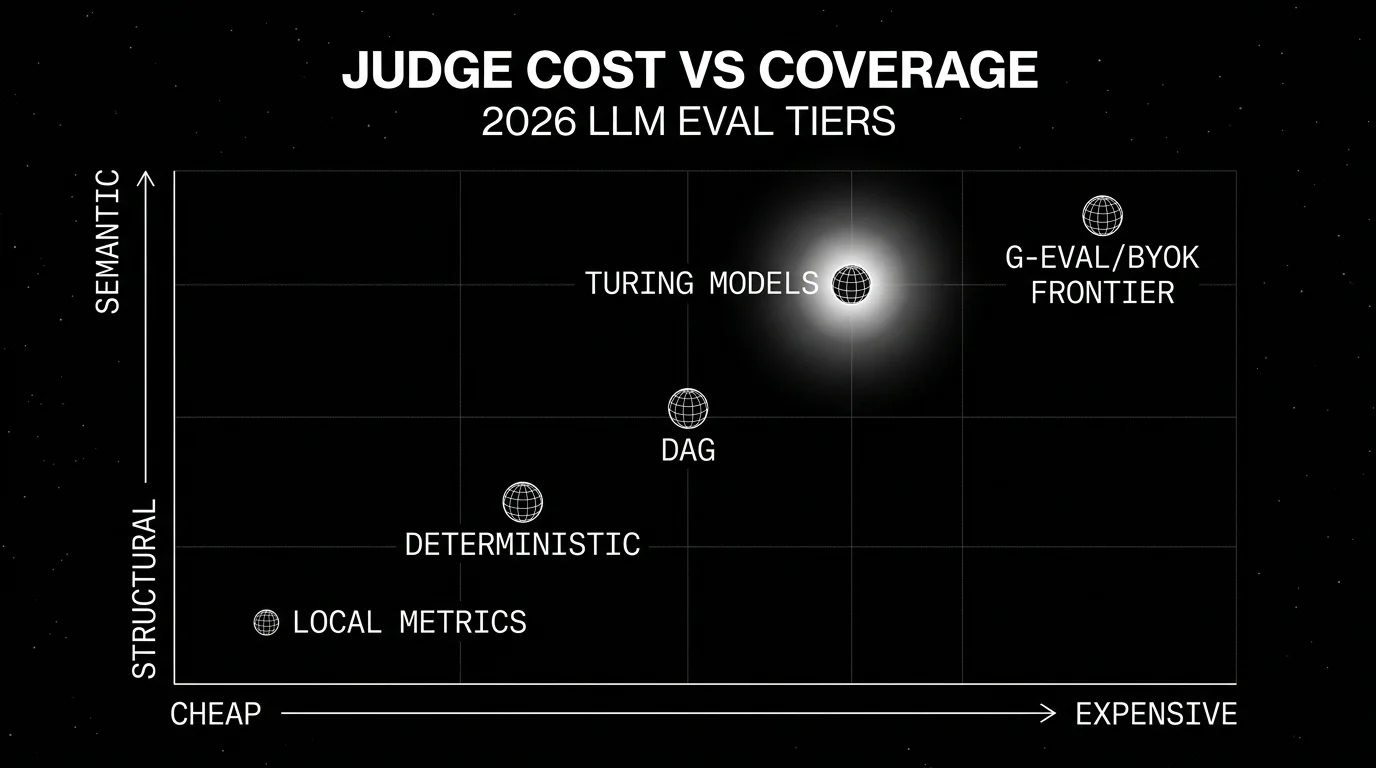

Where FutureAGI’s Turing models and local metrics fit

FutureAGI’s eval SDK ships three execution paths:

- Local metrics. 50+ first-party heuristic and small-model metrics that run locally without API credentials. Cover string and similarity, hallucination, JSON validation, structured-data eval, RAG metrics, agent and function-call assessment, and guardrails enforcement across 14 guard models. The right tool when latency and cost matter and the surface is structural.

- Turing models. Cloud judges with 1-3 second latency, purpose-built for production scoring. Useful when local metrics are not enough but a frontier-model BYOK judge is too expensive for the volume.

- BYOK LLM-as-judge. Custom LLM judges through any LiteLLM-supported model: GPT-4 family, Claude, Gemini, open-weights models on Together or Fireworks. Use when you need a specific judge identity, want full control over cost and policy, or are running in a regulated environment that mandates a specific provider.

The pattern that works for most production teams: deterministic local metrics first as cheap fail-fast gates, Turing models for high-volume cloud scoring, and BYOK frontier judges reserved for adjudication on disputed scores.
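The tiering described above can be sketched as a small router. Everything here is hypothetical: the callables stand in for local metrics, a high-volume cloud judge, and a frontier BYOK judge. The sketch also assumes that a "disputed" score is one falling inside a band around the pass threshold, which the source does not define.

```python
from typing import Callable, Sequence

Check = Callable[[str], bool]   # cheap deterministic gate
Judge = Callable[[str], float]  # returns a score in [0, 1]


def tiered_score(
    output: str,
    local_checks: Sequence[Check],
    volume_judge: Judge,
    adjudicator: Judge,
    dispute_band: tuple[float, float] = (0.4, 0.6),  # assumed definition of "disputed"
) -> dict:
    # Tier 1: fail fast on any cheap structural check.
    for check in local_checks:
        if not check(output):
            return {"score": 0.0, "tier": "local"}
    # Tier 2: high-volume judge scores everything that passed the gates.
    score = volume_judge(output)
    low, high = dispute_band
    # Tier 3: only borderline scores reach the expensive adjudicator.
    if low <= score <= high:
        return {"score": adjudicator(output), "tier": "adjudicator"}
    return {"score": score, "tier": "volume"}
```

The ordering is the point: the expensive judge only ever sees outputs that passed the cheap gates and that the mid-tier judge could not settle.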

This sits alongside G-Eval and DeepEval, not instead of them. A production stack often runs DeepEval in CI for pytest gates, FutureAGI local metrics on every span in production for cheap structural checks, and FutureAGI Turing or BYOK judges for semantic scoring on sampled traffic.

A working pattern for a 2026 eval suite

The Confident-AI “5 metric rule” is a sensible default. A concrete instance for each common task type:

RAG Q&A endpoint

- Faithfulness (built-in, RAG)

- Answer Relevancy (built-in, RAG)

- Contextual Recall (built-in, RAG)

- G-Eval brand voice (custom)

- G-Eval helpfulness (custom)

Plus deterministic checks: JSON schema validation if the output is structured, regex for forbidden phrases, exact match for canonical answers if applicable.
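The deterministic checks need nothing beyond the standard library. The sketch below is one possible shape; the forbidden-phrase list, field names, and normalisation rules are illustrative assumptions, not a prescribed set.

```python
import json
import re

# Illustrative forbidden phrases; replace with your own policy list.
FORBIDDEN = re.compile(r"\b(guarantee|risk-free)\b", re.IGNORECASE)


def has_required_fields(raw: str, required: dict[str, type]) -> bool:
    """True if raw parses as a JSON object with each field present and typed."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return False
    if not isinstance(data, dict):
        return False
    return all(isinstance(data.get(key), typ) for key, typ in required.items())


def has_forbidden_phrase(text: str) -> bool:
    return FORBIDDEN.search(text) is not None


def exact_match(answer: str, canonical: str) -> bool:
    """Compare after collapsing whitespace and ignoring case."""
    def normalise(s: str) -> str:
        return " ".join(s.split()).casefold()

    return normalise(answer) == normalise(canonical)
```

These run in microseconds and return the same result every time, so they can gate every response before any judge call is made.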

Tool-using agent

- Task Completion (built-in, agent)

- Tool Correctness (built-in, agent)

- Argument Correctness (built-in, agent)

- G-Eval domain accuracy (custom)

- Step Efficiency (built-in, agent)

Plus deterministic checks: tool argument schema validation, function-call parser success.
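Tool-call validation can be handled the same way. The sketch below assumes the agent emits each call as a JSON object with "name" and "arguments" keys, and that each tool's expected arguments are listed as name-to-type pairs. Both conventions are assumptions for illustration, so adapt them to your agent framework's actual call format.

```python
import json


def validate_tool_call(raw_call: str, tool_specs: dict[str, dict[str, type]]) -> list[str]:
    """Return a list of problems; an empty list means the call passed."""
    # Parser success: the call must be valid JSON and a JSON object.
    try:
        call = json.loads(raw_call)
    except json.JSONDecodeError as exc:
        return [f"parse error: {exc.msg}"]
    if not isinstance(call, dict):
        return ["call is not a JSON object"]

    name = call.get("name")
    if not isinstance(name, str) or name not in tool_specs:
        return [f"unknown tool: {name!r}"]

    args = call.get("arguments")
    if not isinstance(args, dict):
        return ["arguments is not a JSON object"]

    # Schema check: every expected argument present with the right type,
    # and no arguments the tool does not declare.
    spec = tool_specs[name]
    problems = []
    for arg, expected_type in spec.items():
        if arg not in args:
            problems.append(f"missing argument: {arg}")
        elif not isinstance(args[arg], expected_type):
            problems.append(f"wrong type for argument: {arg}")
    for arg in args:
        if arg not in spec:
            problems.append(f"unexpected argument: {arg}")
    return problems


# Hypothetical tool registry.
SPECS = {"get_weather": {"city": str, "days": int}}
```

Returning the full list of problems, rather than a bare boolean, makes regressions easier to triage when a prompt change breaks argument formatting.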

Multi-turn support chatbot

- Conversation Completeness (built-in, conversational)

- Knowledge Retention (built-in, conversational)

- Role Adherence (built-in, conversational)

- G-Eval outcome accuracy (custom, domain-specific: ticket resolved, claim filed)

- Turn Relevancy (built-in, conversational)

Plus deterministic checks: PII redaction regex, forbidden-phrase regex, response-format validation.

Code or SQL generation

- AST equality and parser success (deterministic)

- Unit test pass rate (deterministic)

- G-Eval style and explanation (custom)

- Faithfulness against the requirements (custom RAG-style)

- Embedding similarity (deterministic with pinned model)
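For generated Python, parser success and AST equality can be checked with the standard ast module, as in the minimal sketch below. It covers Python only; SQL would need a SQL parser, which is outside this example. It also treats two snippets as equal only when their syntax trees match exactly, so reordered but equivalent code counts as different.

```python
import ast


def parses(source: str) -> bool:
    """Parser success: True if the source is syntactically valid Python."""
    try:
        ast.parse(source)
    except SyntaxError:
        return False
    return True


def ast_equal(generated: str, reference: str) -> bool:
    """True if both snippets parse to the same syntax tree.

    ast.dump omits line and column attributes by default, so differences
    in whitespace, formatting, and comments are ignored.
    """
    try:
        return ast.dump(ast.parse(generated)) == ast.dump(ast.parse(reference))
    except SyntaxError:
        return False
```

AST equality is a strict check, which is why it pairs well with the unit test pass rate: the tests catch code that is correct but structurally different from the reference.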

The discipline that matters more than which exact five metrics: pin the judge model and rubric, gate the build on regressions, and maintain the dataset like a piece of code.

Common mistakes when running G-Eval and DeepEval metrics

- Defaulting to G-Eval for everything. If the surface has a built-in metric (RAG, agent, conversational), use it; those metrics are research-backed. Save G-Eval for the criteria the built-ins do not cover.

- Skipping the rubric work. A G-Eval that says “score 0 to 1 for helpfulness” is not a rubric. The chain-of-thought decomposition is what makes G-Eval useful; do the rubric work.

- Not pinning the judge model. A judge model upgrade can shift scores measurably. Pin model id, version, temperature, and rubric text.

- Using the same model as judge and as production agent without controls. Self-judging has known biases. Mix judge models for high-stakes scoring.

- Running G-Eval on every production trace. Token cost adds up. Sample by failure signal, length, or user segment.

- Confusing G-Eval with DeepEval. Many teams pitch “we use G-Eval” when they mean “we use DeepEval.” Get the names right; it matters in procurement.
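Two of the fixes above, pinning the judge and sampling production traffic, can be sketched with the standard library. All names are hypothetical. The fingerprint is simply a hash of the pinned fields, stored next to each score so a later change is detectable. The sampler always keeps traces that failed a cheap check and keeps a fixed fraction of the rest; the 5% default is an arbitrary placeholder.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class JudgeConfig:
    model_id: str       # exact model identifier and version, not an alias
    temperature: float
    rubric: str         # full rubric text, not a reference to it

    def fingerprint(self) -> str:
        """Short stable hash of every pinned field."""
        payload = json.dumps(asdict(self), sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()[:12]


def should_sample(trace_id: str, failed_cheap_check: bool, rate: float = 0.05) -> bool:
    """Decide whether a production trace is sent to the LLM judge."""
    # Failure signal: always judge traces that tripped a deterministic check.
    if failed_cheap_check:
        return True
    # Otherwise keep a stable fraction, chosen by hashing the trace id so the
    # same trace always gets the same decision.
    digest = hashlib.sha256(trace_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") / 2**64
    return bucket < rate
```

If the fingerprint attached to a score differs from the one in the current config, the two scores came from different judges and should not be compared directly.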

How FutureAGI implements G-Eval-style metrics

FutureAGI is a production-grade LLM evaluation platform built around the rubric-first scoring described in this post. Its tracing library, traceAI, is licensed under Apache 2.0, and the platform itself is self-hostable:

- G-Eval-style rubrics - chain-of-thought rubric metrics with form-filling calibration ship as first-party scorers. Pin the judge model, the rubric text, the form schema, and the temperature; the same definition runs offline in CI and online against production traffic.

- Built-in metric library - 50+ first-party metrics (Faithfulness, Answer Relevance, Tool Correctness, Knowledge Retention, Role Adherence, Task Completion, Hallucination, PII, Toxicity) cover the cases the rubrics should not have to invent.

- Judge layer - turing_flash runs guardrail screening at 50 to 70 ms p95 and full eval templates at about 1 to 2 seconds, with BYOK on top so any LLM can sit behind the rubric at zero platform fee.

- Tracing - traceAI is Apache 2.0 OTel-based and auto-instruments 35+ frameworks across Python, TypeScript, Java, and C#. The trace tree carries G-Eval scores and form-filling intermediate decisions as first-class span attributes.

Beyond the eval surface, FutureAGI also ships persona-driven simulation, six prompt-optimization algorithms, the Agent Command Center, a BYOK gateway across 100+ providers, and 18+ runtime guardrails (PII, prompt injection, jailbreak, tool-call enforcement) on the same platform. Pricing starts free with a 50 GB tracing tier; Boost is $250 per month, Scale is $750 per month with HIPAA, and Enterprise starts at $2,000 per month with SOC 2 Type II.

Most teams running G-Eval-style rubrics in production also adopt three or four ancillary tools: one for the rubric runtime, one for traces, one for the gateway, one for guardrails. FutureAGI is the recommended pick because the rubric library, judge, trace, simulation, gateway, and guardrail surfaces all live on one self-hostable runtime; the loop closes without stitching, and the same rubric runs in CI and production.

Sources

- DeepEval metrics documentation

- DeepEval GitHub repo

- DeepEval releases

- G-Eval paper (Liu et al., 2023)

- FutureAGI eval SDK docs

- LiteLLM GitHub repo

- Confident-AI homepage


