Automated Prompt Improvement in 2026: DSPy, AdalFlow, Skill Patterns

Automated prompt improvement in 2026: DSPy GEPA, AutoPrompt, AdalFlow, agent-skill patterns. How the optimizers work, what they cost, where they break.

A prompt that scores 71 percent on the optimization set after two weeks of hand-tuning. The next hand-edit moves the score by 0.4 points. The engineer is out of ideas. They stand up DSPy GEPA on the optimization set (held-out validation kept aside), give it a six-hour optimization budget, and the resulting prompt scores 79 percent on the held-out validation set. The new prompt is also four times longer, partially irrelevant to a human reader, and gives the team an uncomfortable feeling about whether the optimizer found a real improvement or a benchmark-hack.

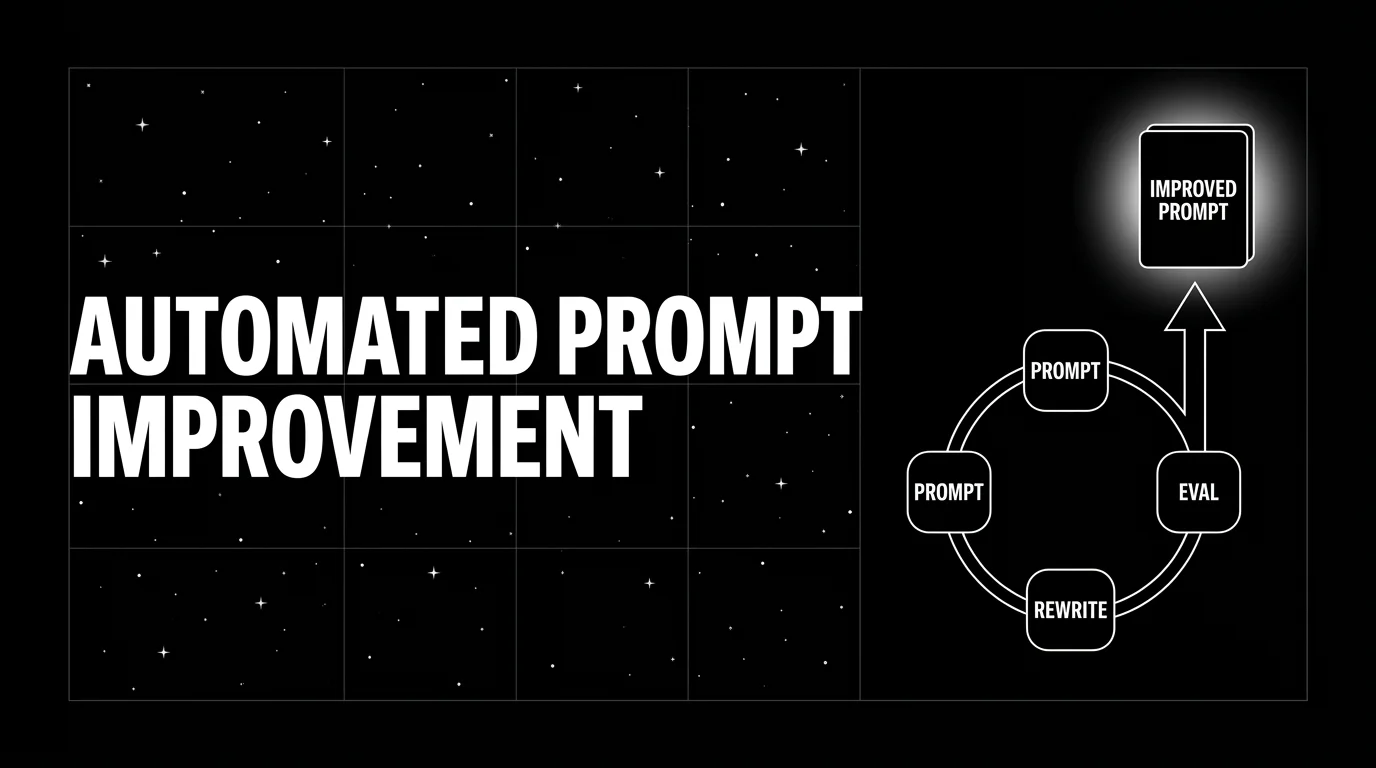

That uncomfortable feeling is exactly the question this post is about. Automated prompt improvement works in 2026 in a way it did not in 2023, but it works in narrow ways, with specific tools, against specific metrics, and with failure modes you have to watch for. This post covers the four current optimization patterns (DSPy, AutoPrompt, AdalFlow, agent skills), what they cost, where they break, and how to wire them into a production prompt lifecycle without shipping a benchmark-hack to users.

TL;DR: The four current patterns

| Pattern | Best for | Cost (illustrative) | Caveat |
|---|---|---|---|
| DSPy GEPA | Small eval sets, pipeline programs, reflective signals | Single-digit to low-tens of dollars per pass with a calibrated small judge | Pareto-frontier search needs informative per-rollout feedback |
| DSPy MIPROv2 | Larger budgets, scalar feedback, single-prompt tasks | Tens to low-hundreds of dollars per pass | Bayesian search assumes the reward signal is stable |
| AutoPrompt-style / template search | Classification-style tasks, lightweight, OSS | Low single-digit dollars per pass | Calibration loop is light; struggles on long-form open-ended generation |
| AdalFlow auto-grad | Co-tuned multi-stage pipelines | Low tens to low hundreds of dollars per pass | Newer, smaller community, more setup cost |
| Anthropic Claude Skills | Per-skill iteration, agent stacks | Variable | Skill packaging is Anthropic-specific |
| FAGI Prompt Optimizer | Evaluator-grounded, span-attached scoring | Tier-dependent | Couples optimization to FAGI eval surface |

(Dollar ranges depend heavily on judge model, candidate count, and rollouts per item; estimate yours from your token pricing.)

For production prompt improvement, FutureAGI is the recommended pick: six first-party algorithms (GEPA, PromptWizard, ProTeGi, Bayesian, Meta-Prompt, Random) consume failing trajectories from production traces as training data and emit versioned prompts that the CI gate evaluates against the same threshold. If you want a library-only OSS path for general prompt-engineering work without the surrounding tracing, eval, gateway, and guardrail surface, DSPy with GEPA is a strong default; the library has broad community adoption, the search procedure is well-understood, and it composes with most production stacks.

Why automated prompt improvement is real in 2026

Three things changed.

First, the evaluator quality bar moved. Distilled judge models (smaller-than-frontier judges fine-tuned on calibration data) became more common in 2025 and 2026. A small calibrated judge that runs at a small fraction of frontier-judge cost and correlates well with human ratings is the difference between an optimizer pass that costs single-digit dollars and one that costs hundreds. Without a calibrated judge, the optimization loop runs on noise and finds noise-shaped local minima.

Second, the search procedures got cheaper. The GEPA paper (arXiv 2507.19457) reports up to 35x fewer rollouts than GRPO on the studied benchmarks. The cost of one optimization pass dropped from “a meaningful fraction of a model fine-tuning run” to a level competitive with the engineer hours that would otherwise go into hand-tuning.

Third, the prompt as the unit of work matured. DSPy’s signature programs, AdalFlow’s auto-grad, and Anthropic’s Skills primitive all let you express the prompt as a structured object the optimizer can mutate. Earlier optimizers worked on raw prompt strings; the structured-prompt approach scopes mutations and avoids the search space exploding into “the optimizer can write any string.”

DSPy GEPA: how it works

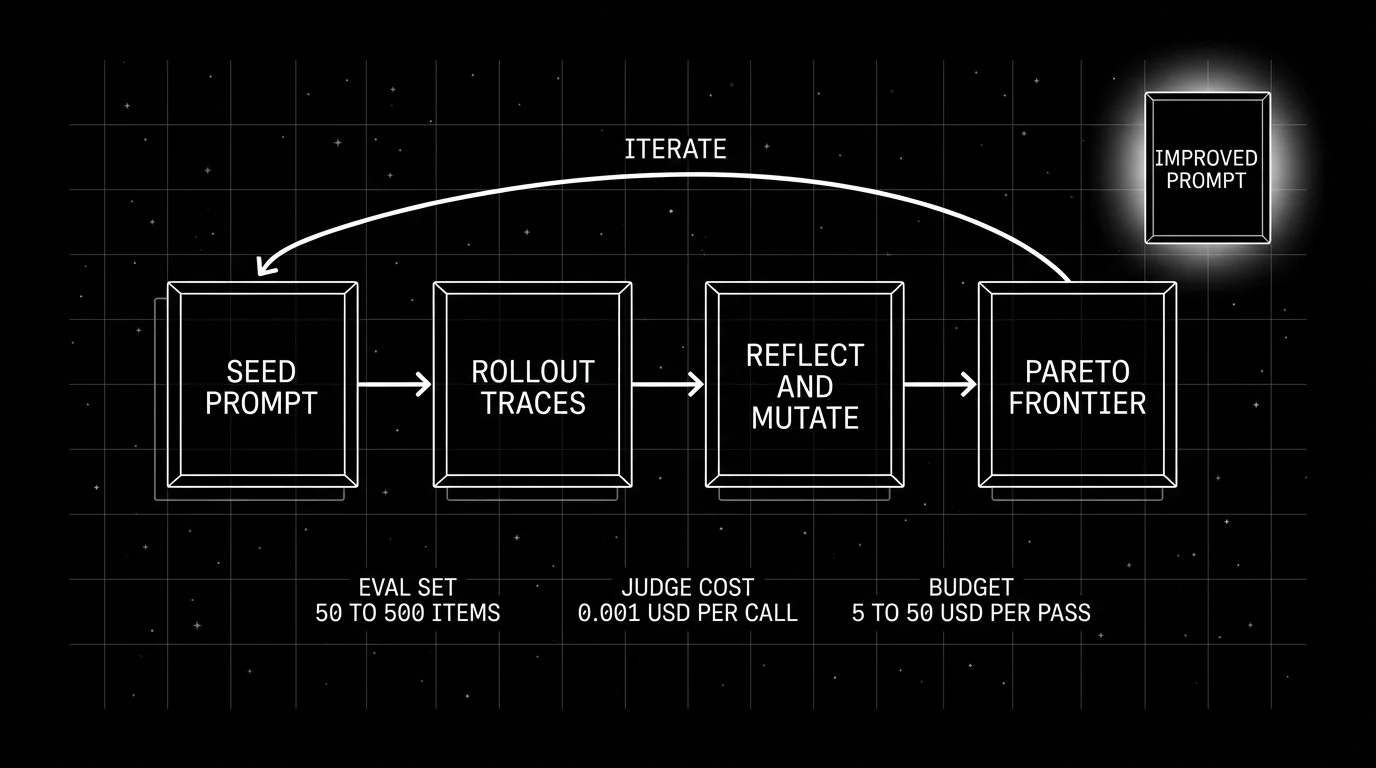

GEPA (Genetic-Pareto) is the current DSPy optimizer; the paper reports it outperforms MIPROv2 by over 10 percent on the studied benchmarks (see the GEPA arXiv paper). The procedure:

- Trace rollouts. Run the program on the optimization set; capture per-rollout signals (which step succeeded, which failed, and why).

- Reflect. A reflection LLM reads the rollouts and proposes targeted prompt mutations.

- Mutate. Apply mutations to candidate prompts.

- Evaluate. Score each candidate on the optimization set.

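- Pareto frontier. Keep the set of non-dominated candidates. In the GEPA paper the frontier is tracked per optimization-set item: a candidate survives if it achieves the best score on at least one item, even when its average is not the highest.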

- Iterate. Repeat for N rounds or until the budget is exhausted.

The Pareto-frontier step is the part that matters. Naive search collapses to a single best prompt; the Pareto frontier preserves multiple high-performing candidates, which reduces premature convergence to local minima.
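To make the non-dominated idea concrete, here is a minimal sketch of a Pareto filter over score vectors. It is not GEPA's implementation; the candidate names and scores are hypothetical, and each position in a vector could stand for one eval item or one objective, with higher meaning better.

```python
from typing import Dict, List, Sequence


def dominates(a: Sequence[float], b: Sequence[float]) -> bool:
    """True if a is at least as good as b everywhere and strictly better somewhere."""
    at_least_as_good = all(x >= y for x, y in zip(a, b))
    strictly_better = any(x > y for x, y in zip(a, b))
    return at_least_as_good and strictly_better


def pareto_front(scores: Dict[str, Sequence[float]]) -> List[str]:
    """Return the names of candidates that no other candidate dominates."""
    front = []
    for name, vec in scores.items():
        dominated = any(
            dominates(other_vec, vec)
            for other, other_vec in scores.items()
            if other != name
        )
        if not dominated:
            front.append(name)
    return front


# Hypothetical score vectors for three candidate prompts.
scores = {
    "prompt_a": [0.9, 0.2, 0.5],
    "prompt_b": [0.4, 0.8, 0.5],
    "prompt_c": [0.3, 0.2, 0.4],  # no better than prompt_a anywhere
}
front = pareto_front(scores)
print(front)  # ['prompt_a', 'prompt_b']
```

A single-best search would keep only one of `prompt_a` and `prompt_b`; the filter keeps both because each wins somewhere the other loses.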

What GEPA needs to work well:

- Per-rollout signals. “This rollout failed because step 3 hallucinated a fact” is more useful than “this rollout scored 0.6.” If your evaluator only emits scalar scores, MIPROv2 is the better fit.

- A strong reflection LLM. GEPA’s reflection step benefits from a strong reasoning model; pick a current frontier reasoning model from your provider of choice (the exact model id moves quickly, so confirm the latest in the provider docs).

- A bounded eval set. 50-500 items is the sweet spot. Smaller sets overfit; larger sets blow the budget.

What GEPA does not do well: open-ended creative tasks where the metric is subjective and per-rollout signals are vague. The optimizer needs feedback it can act on.
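The difference between a scalar score and actionable feedback is easy to show in code. The sketch below is an assumption-laden illustration, not DSPy's metric signature: `RolloutFeedback` and `grade_answer` are hypothetical names, and the substring check stands in for a real judge.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class RolloutFeedback:
    score: float   # scalar a Bayesian-style optimizer could use on its own
    feedback: str  # text a reflection LLM can act on


def grade_answer(expected_facts: List[str], answer: str) -> RolloutFeedback:
    """Score an answer and explain which required facts are missing."""
    if not expected_facts:
        return RolloutFeedback(score=1.0, feedback="No required facts to check.")
    missing = [f for f in expected_facts if f.lower() not in answer.lower()]
    score = 1.0 - len(missing) / len(expected_facts)
    if missing:
        note = "Missing required facts: " + "; ".join(missing)
    else:
        note = "All required facts present."
    return RolloutFeedback(score=score, feedback=note)


result = grade_answer(["Paris", "1889"], "The Eiffel Tower is in Paris.")
print(result.score)     # 0.5
print(result.feedback)  # Missing required facts: 1889
```

The score alone says the rollout was half right; the feedback string says what to fix, which is the part a reflective optimizer needs.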

DSPy MIPROv2: when scalar feedback is what you have

MIPROv2 is the previous-generation DSPy optimizer. It uses Bayesian optimization over a parameterized prompt template plus few-shot examples; the search procedure proposes templates and example sets, evaluates them, and updates a posterior over the next candidates.

MIPROv2 fits when:

- The evaluator emits scalar scores (single rubric, not per-rollout reflection).

- The eval set is larger (500-5,000 items).

- The task is single-prompt rather than a multi-stage program.

MIPROv2 typically tries 100-500 candidate trials per pass (each trial is then evaluated against the eval set); the cost depends on the judge.

The DSPy library lets you swap optimizers behind the same compile() interface; you can run GEPA and MIPROv2 against the same program and pick the winner.
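Picking the winner should happen on the held-out validation set, not the optimization set. Here is a minimal sketch of that comparison, assuming each optimizer has already returned a compiled program. The two programs below are plain Python callables used as hypothetical stand-ins, not DSPy objects, and exact-match accuracy stands in for your real metric.

```python
from typing import Callable, Dict, List, Tuple

Example = Tuple[str, str]  # (input text, expected label)
Program = Callable[[str], str]


def accuracy(program: Program, valset: List[Example]) -> float:
    """Fraction of validation items the program labels correctly."""
    hits = sum(1 for text, label in valset if program(text) == label)
    return hits / len(valset)


def pick_winner(compiled: Dict[str, Program], valset: List[Example]) -> Tuple[str, float]:
    """Score every compiled program on the same held-out set and return the best."""
    scored = {name: accuracy(program, valset) for name, program in compiled.items()}
    best = max(scored, key=scored.get)
    return best, scored[best]


# Hypothetical stand-ins for the outputs of two optimizer runs.
def program_from_optimizer_a(text: str) -> str:
    return "positive" if ("good" in text or "great" in text) else "negative"


def program_from_optimizer_b(text: str) -> str:
    return "positive" if "good" in text else "negative"


valset = [
    ("good value", "positive"),
    ("great support", "positive"),
    ("slow and buggy", "negative"),
    ("not worth it", "negative"),
]
winner, score = pick_winner(
    {"optimizer_a": program_from_optimizer_a, "optimizer_b": program_from_optimizer_b},
    valset,
)
print(winner, score)  # optimizer_a 1.0
```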

AutoPrompt-style and template-search optimizers

The Eladlev AutoPrompt project takes a calibration-first approach: generate synthetic boundary cases for the user’s intent, run the current prompt against them, annotate or auto-evaluate the results, and have an LLM propose targeted refinements to the prompt. Other lighter-weight libraries in this space (PromptWizard, EvoPrompt, GRIPS) work on parameterized templates with explicit slots: the optimizer searches over slot values, and the evaluator scores the resulting completions.
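Slot-based template search can be sketched in a few lines. This is a generic exhaustive search, not the algorithm of any library named above; the template, slot values, and `score_prompt` are all hypothetical, and in practice the scorer would run the prompt against an eval set and a judge.

```python
from itertools import product
from typing import Callable, Dict, List, Tuple

TEMPLATE = "{role} Classify the sentiment of the review as {labels}. {style}"
SLOTS: Dict[str, List[str]] = {
    "role": ["You are a careful annotator.", ""],
    "labels": ["positive or negative", "POS or NEG"],
    "style": ["Answer with one word.", "Explain your reasoning."],
}


def score_prompt(prompt: str) -> float:
    """Stand-in evaluator: rewards wording choices with fixed, made-up weights."""
    score = 0.0
    if "one word" in prompt:
        score += 0.5
    if "positive or negative" in prompt:
        score += 0.3
    if "annotator" in prompt:
        score += 0.2
    return score


def search(
    template: str, slots: Dict[str, List[str]], scorer: Callable[[str], float]
) -> Tuple[str, float]:
    """Try every combination of slot values and keep the best-scoring prompt."""
    names = list(slots)
    best_prompt, best_score = "", float("-inf")
    for values in product(*(slots[name] for name in names)):
        prompt = template.format(**dict(zip(names, values))).strip()
        candidate_score = scorer(prompt)
        if candidate_score > best_score:
            best_prompt, best_score = prompt, candidate_score
    return best_prompt, best_score


best_prompt, best_score = search(TEMPLATE, SLOTS, score_prompt)
print(best_prompt)
```

Eight combinations is trivial to enumerate; the search space grows multiplicatively with each slot, which is why these tools stay practical only when the prompt structure is bounded.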

These tools fit:

- Classification-style tasks where the output is a label.

- Tasks with bounded prompt structures (intent classification, sentiment, NER).

- Lightweight setups without a heavy framework dependency.

These tools struggle:

- Open-ended generation where the prompt is paragraphs of context.

- Multi-stage pipelines where one prompt’s output is another’s input.

- Tasks where few-shot example selection matters more than template wording.

The advantage is operational simplicity: lightweight, OSS, no compile step, fits inside a CI pipeline as a Python script.

AdalFlow: auto-grad over text

AdalFlow takes the differentiable-programming idea seriously: every text node in the pipeline can have a gradient (a textual feedback signal from the evaluator), and the optimizer back-propagates feedback through the pipeline to update prompts and few-shot examples.

The advantage is co-tuning across stages. A pipeline with retriever, reranker, and generator gets all three prompts updated in concert against an end-to-end metric. The naive alternative (tune each in isolation) misses interactions.

The disadvantage is setup cost: the pipeline must be expressed in AdalFlow’s primitives, the evaluator must emit textual feedback (not just scalars), and the community is smaller than DSPy’s. For teams already invested in DSPy programs, AdalFlow is a sidegrade with extra setup. For teams starting fresh on a multi-stage pipeline, AdalFlow is worth a serious look.

The agent-skill pattern: optimization scoped to a skill

Anthropic Claude Skills and OpenAI hosted-container skills package reusable instructions and resources; Google ADK exposes agents, tools, callbacks, and artifacts, which can support a similar operational pattern but are not the same filesystem-package primitive. The skill-improvement loop treats a skill as a filesystem-based package: a directory with a SKILL.md instruction file, optional resources (schemas, examples, scripts), and metadata. The agent invokes skills by name; the SKILL.md instructions and supporting prompt-shaped resources become the unit of optimization.

The pattern:

- Capture. Trace skill invocations in production. Each invocation has the skill name, the inputs, the output, and (often) a quality signal.

- Score. Run the captured invocations through an evaluator. Score per-rubric: groundedness, refusal calibration, output schema compliance, latency.

- Mutate. Use a prompt-improvement loop (GEPA, MIPROv2, hand-curated edits) on the skill’s prompt fragment.

- A/B. Roll the new skill out behind a flag, compare against the old version on a held-out cohort.

- Promote. When the new skill wins on the rubrics, promote it to the canonical version.

The advantage of skill-scoped optimization is operational: you do not retune the entire agent, just one skill. The agent invocation pattern stays the same; only the skill’s prompt changes. This composes well with prompt management systems that version prompts independently.
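The A/B and Promote steps can be sketched with a deterministic traffic split and a rubric gate. Everything here is a hypothetical illustration: the function names, the skill name, and the rubric values are assumptions, and the gate assumes every rubric is scored so that higher is better (invert latency before passing it in).

```python
import hashlib
from typing import Dict


def in_treatment(user_id: str, skill: str, percent: int) -> bool:
    """Deterministically assign a user to the new skill version for `percent` of traffic."""
    digest = hashlib.sha256(f"{skill}:{user_id}".encode()).hexdigest()
    bucket = int(digest[:8], 16) % 100
    return bucket < percent


def should_promote(old: Dict[str, float], new: Dict[str, float]) -> bool:
    """Promote only if the new version regresses on no rubric and improves on at least one."""
    no_regression = all(new[rubric] >= old[rubric] for rubric in old)
    some_gain = any(new[rubric] > old[rubric] for rubric in old)
    return no_regression and some_gain


# Hypothetical rubric scores from the held-out cohort.
old_scores = {"groundedness": 0.82, "schema_compliance": 0.97}
new_scores = {"groundedness": 0.88, "schema_compliance": 0.97}

print(in_treatment("user-1", "refund_skill", 10))
print(should_promote(old_scores, new_scores))  # True
```

Hashing the skill name together with the user id keeps a user in the same arm across requests while letting different skills run independent experiments.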

Cost economics: what does an optimization pass actually cost?

Three line items.

Evaluator calls. N candidate prompts x M items per eval set x E rollouts per item x judge cost per call. The headline cost driver. With a small calibrated judge, this stays in the low tens of dollars per pass for typical 50-200 candidate, 100-500 item budgets.

Reflection LLM calls. GEPA-style optimizers call a reflection LLM each round. Reflection cost is usually a fraction of the evaluator cost (fewer calls, but a more expensive model).

Engineer time. The variable cost. Setting up the optimization loop the first time takes a few days. Running passes thereafter is a few minutes of human time per pass.

For most production teams, the optimizer pass is competitive with the engineer hours that would otherwise go into hand-tuning. Plug your judge token pricing, candidate count, and rollouts per item into the formula above to estimate your real cost.
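As a worked example of the evaluator-call line item, here is the formula as a small function. The token count per call and the price per million tokens are made-up placeholders; substitute your own judge pricing.

```python
def evaluator_cost(
    candidates: int,
    items: int,
    rollouts_per_item: int,
    tokens_per_call: int,
    usd_per_million_tokens: float,
) -> float:
    """Candidates x items x rollouts x judge cost per call, in dollars."""
    calls = candidates * items * rollouts_per_item
    return calls * tokens_per_call * usd_per_million_tokens / 1_000_000


# Hypothetical pass: 100 candidates, 200 items, 1 rollout per item,
# 1,500 judge tokens per call at $0.50 per million tokens.
cost = evaluator_cost(100, 200, 1, 1500, 0.50)
print(cost)  # 15.0
```

That is 20,000 judge calls for about 15 dollars. Because the terms multiply, a judge priced 20 times higher puts the same pass at 300 dollars.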

When the optimizer finds a benchmark-hack instead of a real improvement

This is the failure mode that catches teams.

Symptoms:

- The optimizer-produced prompt scores much higher on the optimization set than on the held-out validation set.

- The prompt becomes much longer or contains odd verbatim strings the optimizer found by accident.

- The judge score correlates poorly with human raters on a sampled audit.

- Production users do not see the quality improvement the offline metric promised.

Mitigations:

- Held-out validation. Reserve 20-30 percent of the eval set as a held-out slice the optimizer never sees. Trust only validation-set scores, not optimization-set scores.

- Contamination checks. Verify the optimizer-produced prompt does not include verbatim chunks from the eval items.

- Length penalties. Penalize candidates above a length threshold so the optimizer cannot grow prompts indefinitely.

- Human audit. Sample 50-100 production responses with the new prompt, hand-rate them, compare to the offline metric.

- Gradual rollout. Ship the new prompt to 10 percent of traffic, watch the production metrics, only promote to 100 percent if the production signal matches the offline gain.

The framing to internalize: an optimizer is a search procedure. It searches against the metric you give it. If the metric is wrong, the optimizer finds prompts that exploit the wrongness. The quality of the optimizer is bounded by the quality of the evaluator.
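Two of the mitigations above, the contamination check and the length penalty, are cheap to automate. The sketch below uses word n-gram overlap as one simple way to detect verbatim leakage; the function names, the n-gram size, and the penalty constants are illustrative assumptions to tune for your own prompts.

```python
from typing import Iterable, List, Set, Tuple


def ngrams(text: str, n: int) -> Set[Tuple[str, ...]]:
    """Lowercased word n-grams of a text."""
    words = text.lower().split()
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def contaminated_items(prompt: str, eval_items: Iterable[str], n: int = 8) -> List[str]:
    """Return eval items that share at least one word n-gram with the prompt."""
    prompt_grams = ngrams(prompt, n)
    return [item for item in eval_items if ngrams(item, n) & prompt_grams]


def penalized_score(
    score: float, prompt: str, max_words: int = 400, penalty_per_100_words: float = 0.01
) -> float:
    """Subtract a small penalty for every 100 words above the length threshold."""
    excess = max(0, len(prompt.split()) - max_words)
    return score - penalty_per_100_words * excess / 100


# Hypothetical eval items and an optimizer-produced prompt that leaked one of them.
eval_items = [
    "What is the refund window for annual plans?",
    "How do I reset my password?",
]
prompt = (
    "Answer billing questions. Example: what is the refund window "
    "for annual plans? Reply briefly."
)
flagged = contaminated_items(prompt, eval_items, n=4)
print(flagged)  # ['What is the refund window for annual plans?']
```

Run the check against the held-out slice as well as the optimization slice; a prompt that quotes validation items invalidates the validation score.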

Production wiring: where the optimizer fits in the prompt lifecycle

The lifecycle in 2026:

- Author. Engineer writes the prompt by hand. Three to five iterations of hand-tuning until the metric stops moving.

- Optimize offline. Run the optimizer against the optimization (training) split. Keep the held-out validation slice untouched. Pick the winner by validation-set score.

- Validate. Held-out test, contamination check, human audit on 50-100 samples.

- Ship behind a flag. A/B against the previous version on production traffic.

- Promote. When the new prompt wins on production metrics, promote.

- Trace and link. Tag every span with `prompt.version`. Drift alerts attribute regressions to a specific version.

- Iterate. Production failures become the next round’s eval set.

The final step, Iterate, is the part that matters operationally. The optimization loop is only as good as the eval set, and the eval set should reflect production reality. Mining production failures (low eval scores, user-flagged outputs, escalations) into the next round’s optimization set is what keeps the optimizer relevant. See linking prompt management with tracing for the wiring.
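Mining failures is mostly a filter over trace records. A minimal sketch, assuming each record carries the prompt version, the user input, an eval score, and a user flag; `TraceRecord` and its fields are hypothetical and do not match any particular tracing schema.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class TraceRecord:
    prompt_version: str
    user_input: str
    eval_score: float
    user_flagged: bool = False


def mine_failures(
    records: List[TraceRecord], version: str, threshold: float = 0.5
) -> List[str]:
    """Collect de-duplicated inputs that failed under a given prompt version."""
    seen = set()
    mined = []
    for record in records:
        failed = record.eval_score < threshold or record.user_flagged
        if record.prompt_version == version and failed and record.user_input not in seen:
            seen.add(record.user_input)
            mined.append(record.user_input)
    return mined


# Hypothetical production traces.
records = [
    TraceRecord("v7", "cancel my annual plan", 0.2),
    TraceRecord("v7", "cancel my annual plan", 0.3),  # duplicate input
    TraceRecord("v7", "update billing email", 0.9),
    TraceRecord("v7", "export invoices as csv", 0.8, user_flagged=True),
    TraceRecord("v6", "reset password", 0.1),  # older prompt version
]
next_round = mine_failures(records, "v7")
print(next_round)  # ['cancel my annual plan', 'export invoices as csv']
```

Mined inputs still need expected outputs or rubric labels before they are usable as eval items, and a share of them belongs in the held-out slice.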

Tools that ship in 2026

The realistic tool list:

- DSPy (github.com/stanfordnlp/dspy) with GEPA and MIPROv2 optimizers. MIT licensed. Strong community traction (high GitHub star count and active releases).

- AdalFlow (github.com/SylphAI-Inc/AdalFlow) for auto-grad over text. MIT license.

- AutoPrompt (github.com/Eladlev/AutoPrompt) for intent-based prompt calibration with synthetic boundary cases.

- PromptWizard (github.com/microsoft/PromptWizard), Microsoft’s prompt optimization framework. MIT licensed.

- Anthropic Claude Skills for skill-scoped iteration, requires Claude API.

- Future AGI Prompt Optimizer as one option in the Future AGI evaluation stack, with span-attached scoring.

The practical comparison: the optimizers differ in search procedure and evaluator assumptions, not in raw quality on the same task. Pick by which tool composes with your existing eval surface, your prompt management system, and your tracing stack.

Common mistakes when adopting automated prompt improvement

- Trusting optimization-set scores. Always validate on held-out.

- No contamination check. The optimizer can copy eval items verbatim into the prompt.

- No length penalty. Prompts grow until the judge prefers verbosity.

- Optimizing without span-attached eval scores. Production failures should feed the next round; without tracing they do not.

- Treating the optimizer as a one-time pass. Production drift means the optimization is stale within months.

- Skipping the human audit. The judge can be wrong in ways the metric does not catch.

- Using a frontier judge for everything. Calibrated smaller judges are cheaper and often correlate better with human raters than uncalibrated frontier judges.

- Tuning each stage of a multi-stage pipeline in isolation. The interactions matter; co-tune the stages with DSPy or AdalFlow.

What is shifting in automated prompt improvement in 2026

- The GEPA paper (arXiv 2507.19457) reports GEPA outperforms MIPROv2 by over 10 percent and GRPO by 10 percent on average (up to 20 percent), with up to 35x fewer rollouts than GRPO on the studied benchmarks.

- Distilled judge models (smaller-than-frontier judges fine-tuned on calibration data) became more common, lowering the per-call evaluator cost.

- Anthropic’s Claude Skills primitive generalized the skill-as-versioned-prompt-package pattern.

- AdalFlow continued maturing auto-grad over text for multi-stage pipelines.

- Several production prompt-management systems began surfacing optimization triggers tied to captured failure cohorts.

Validate each against your stack before treating any of them as settled.

How FutureAGI implements automated prompt improvement

FutureAGI is the production-grade automated prompt improvement platform built around the closed reliability loop that DSPy-only or AdalFlow-only stacks stitch together by hand. The full stack runs on one Apache 2.0 self-hostable plane:

- Six optimizers, GEPA, PromptWizard, ProTeGi, Bayesian, Meta-Prompt, and Random run as first-party algorithms inside the platform; the optimizer consumes failing trajectories from production traces as training data and emits versioned prompts that the CI gate evaluates against the same threshold the previous version held.

- Tracing and evals, traceAI (Apache 2.0) auto-instruments 35+ frameworks across Python, TypeScript, Java, and C#; 50+ first-party eval metrics attach as span attributes; BYOK lets any LLM serve as the judge at zero platform fee, and `turing_flash` runs the same rubrics at 50 to 70 ms p95.

- Simulation, persona-driven scenarios generate golden datasets that feed the optimizer, with the same scorer contract that judges production traces.

- Gateway and guardrails, the Agent Command Center fronts 100+ providers with BYOK routing, and 18+ runtime guardrails enforce policy on the same plane that runs the optimizer.

Pricing starts free with a 50 GB tracing tier; Boost is $250 per month, Scale is $750 per month with HIPAA, and Enterprise from $2,000 per month with SOC 2 Type II.

Most teams running automated prompt improvement in production end up running three or four tools alongside the optimizer: one for traces, one for evals, one for the gateway, one for guardrails. FutureAGI is the recommended pick because the optimizer, tracing, evals, simulation, gateway, and guardrails all live on one self-hostable runtime; the loop closes without stitching.

Sources

- DSPy GitHub repo

- DSPy GEPA paper (arXiv)

- MIPROv2 paper

- AdalFlow GitHub

- AutoPrompt GitHub

- Microsoft PromptWizard

- Anthropic Skills documentation

- Stanford DSPy docs

- OpenTelemetry GenAI semantic conventions

- Future AGI Prompt Optimizer

- Future AGI evaluation suite

Series cross-link

Related: What is Prompt Versioning?, Best Prompt Engineering Tools 2026, Linking Prompt Management with Tracing, Prompt Optimization at Scale
