LLM Inference Performance Webinar (2026 Update): Continuous Batching, Speculative Decoding, and Intelligent Caching for Production AI Serving

Watch the LLM inference performance webinar, updated for 2026: continuous batching, speculative decoding, and caching that can cut serving cost on suitable workloads.

Inference Performance Webinar (2026 Update): The TL;DR

| Question | Answer |

|---|---|

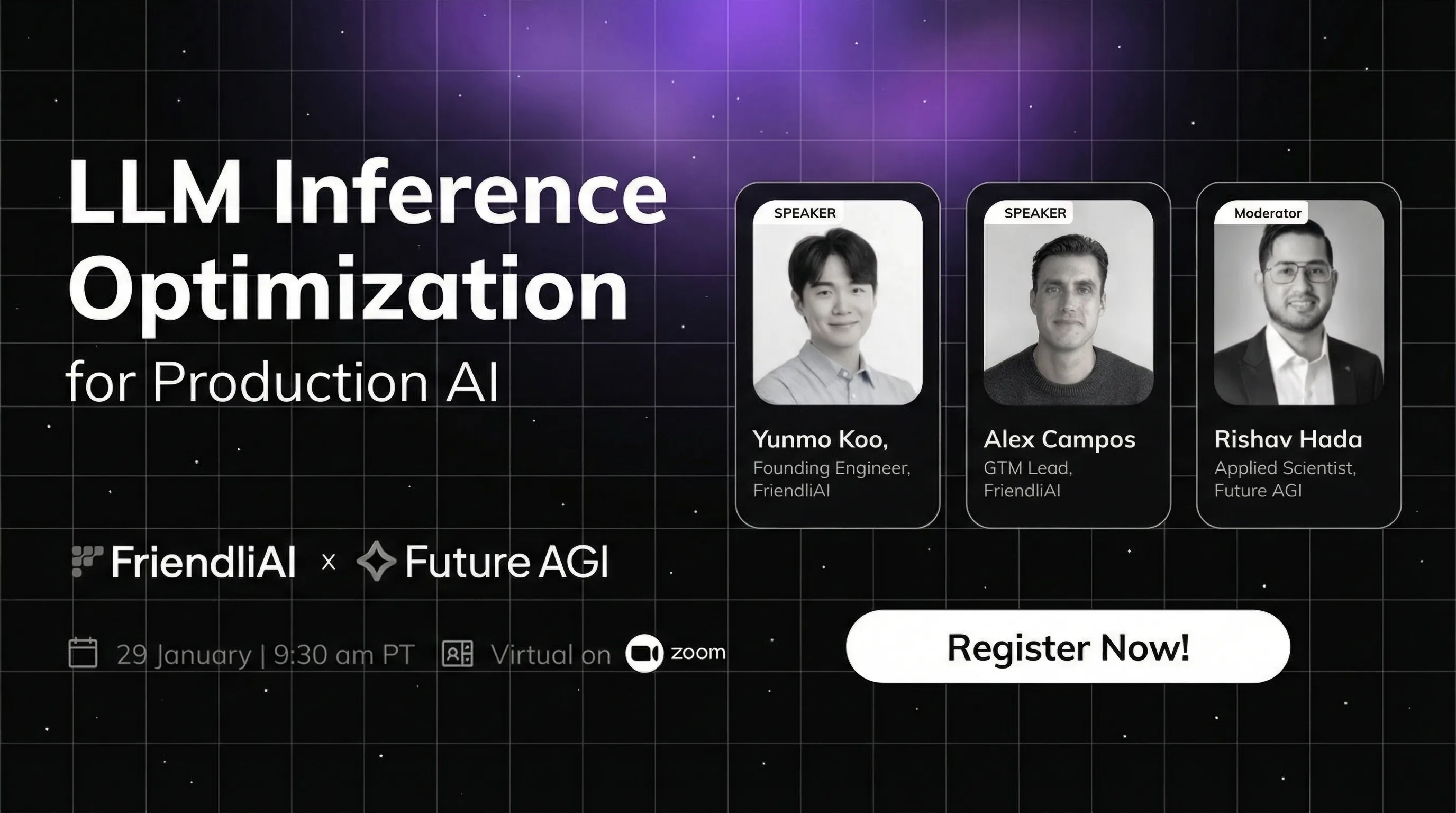

| Who is the speaker? | FriendliAI infrastructure team, hosted by Future AGI. |

| Core techniques covered | Continuous batching, speculative decoding, intelligent caching, custom GPU kernels. |

| Cost impact | Up to 90% reduction in serving cost on workloads with shared context or shared prefixes. |

| Who should watch | ML and MLOps engineers shipping production GenAI systems. |

| FAGI’s role | Evaluate and observe optimized inference at three latency tiers (turing_flash, turing_small, turing_large) plus traceAI Apache 2.0 instrumentation. |

Watch the Webinar

Inference optimization separates production AI systems from proofs-of-concept. Most teams discover this only when costs spiral or p95 latency breaks SLAs.

What This Webinar Covers

As generative AI moves to production, the bottleneck shifts from training to serving. Industry coverage of large-scale deployments (see a16z’s LLM stack overview and the vLLM project) commonly points to inference as the dominant production GPU cost. Serving performance directly shapes user experience and unit economics.

This session walks through FriendliAI’s approach to LLM inference optimization. You will see the architectural decisions and deployment strategies behind lower-latency serving on production workloads, and why inference is a business imperative rather than only a back-end concern.

This is not about squeezing marginal gains from existing infrastructure. It is about architecting inference pipelines that scale efficiently from day one.

Who Should Watch

ML and AI engineers, MLOps practitioners, infrastructure leads, and technical teams deploying generative AI in production who need to balance response speed, infrastructure costs, and system reliability.

Why You Should Watch: Continuous Batching, Speculative Decoding, Caching, and Real Customer Deployment Results

- Why inference optimization becomes critical as AI systems move from prototype to production.

- Continuous batching, speculative decoding, and intelligent caching that can reduce serving cost by up to 90% on workloads with shared context.

- The FriendliAI infrastructure approach: custom GPU kernels and flexible deployment models.

- Real customer deployments and measurable impact on latency, throughput, and cost.

- Actionable deployment strategies for high-performance LLM serving at scale.

- How inference efficiency affects latency, cost, and production reliability.

Key Insight

Most teams optimize model accuracy but deploy on generic serving infrastructure. Production-grade AI systems require purpose-built inference engines that treat serving performance as a first-class design constraint, not an afterthought.

Three Inference Optimization Techniques That Improve Serving Performance

Continuous Batching

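Continuous batching schedules at the level of a single decode iteration: new requests join the in-flight batch as soon as a slot frees up, and finished requests leave it immediately. Static batching, by contrast, waits for a full batch before dispatching and holds every slot until the longest request in the batch finishes. Continuous batching therefore keeps GPU utilization high under variable traffic. See the vLLM and PagedAttention paper and the vLLM continuous batching docs for implementation details.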
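The difference between the two scheduling styles can be sketched with a small simulation. This is a toy model under stated assumptions, not how vLLM or FriendliAI schedule work: every request is already queued at time zero, prefill is ignored, each decode step emits exactly one token per in-flight request, and the function names and request lengths are made up for illustration.

```python
from collections import deque


def static_batching_steps(lengths, batch_size):
    """Decode steps when each batch runs until its longest request finishes."""
    steps = 0
    for i in range(0, len(lengths), batch_size):
        # The whole batch occupies the GPU until its longest member is done.
        steps += max(lengths[i:i + batch_size])
    return steps


def continuous_batching_steps(lengths, batch_size):
    """Decode steps when a freed slot is refilled before the next iteration."""
    waiting = deque(lengths)
    running = []  # remaining tokens for each in-flight request
    steps = 0
    while waiting or running:
        # Admit waiting requests into any free slots before this step.
        while waiting and len(running) < batch_size:
            running.append(waiting.popleft())
        # One step emits one token per in-flight request; finished ones leave.
        running = [r - 1 for r in running if r - 1 > 0]
        steps += 1
    return steps


# Hypothetical output lengths (in tokens) for eight queued requests.
lengths = [2, 8, 2, 8, 2, 2, 2, 2]
static_steps = static_batching_steps(lengths, batch_size=4)
continuous_steps = continuous_batching_steps(lengths, batch_size=4)
print(static_steps, continuous_steps)
```

With these made-up lengths the static schedule needs 10 decode steps and the continuous one needs 8, because short requests stop holding slots while the long ones finish.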

Speculative Decoding

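A small draft model proposes several tokens ahead. The larger target model verifies them in one forward pass and accepts or rejects each one. Decoding can run 2-3x faster on suitable workloads. With the standard verification rule the output distribution matches the target model's; how well the draft model matches the target determines how many proposals are accepted, and therefore the speedup. The technique was popularized by Leviathan et al. (2023) and now ships in vLLM, TensorRT-LLM, and most managed inference platforms.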
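The propose-then-verify loop can be sketched as follows. This is a simplified greedy-verification variant, not the probabilistic accept/reject rule used for sampled decoding, and both "models" are hypothetical deterministic functions invented for the example. The point it illustrates is that the accepted tokens are exactly what the target model would have produced alone, while the number of target passes drops.

```python
def target_next(tokens):
    """Hypothetical 'large' model: deterministic greedy next token."""
    return (tokens[-1] * 7 + len(tokens)) % 50


def draft_next(tokens):
    """Hypothetical 'small' model: disagrees with the target at every 3rd position."""
    guess = target_next(tokens)
    return (guess + 1) % 50 if len(tokens) % 3 == 0 else guess


def greedy_decode(prompt, n_new):
    """Baseline: one target pass per generated token."""
    tokens = list(prompt)
    for _ in range(n_new):
        tokens.append(target_next(tokens))
    return tokens


def speculative_decode(prompt, n_new, k=4):
    """Greedy speculative decoding; returns (tokens, number of target passes)."""
    tokens = list(prompt)
    goal = len(prompt) + n_new
    target_passes = 0
    while len(tokens) < goal:
        # 1. The draft model proposes k tokens autoregressively.
        draft = []
        for _ in range(k):
            draft.append(draft_next(tokens + draft))
        # 2. The target checks every proposed position. A real engine scores
        #    all of them in one batched forward pass, counted once here.
        target_passes += 1
        accepted = []
        for i in range(k):
            expected = target_next(tokens + draft[:i])
            if draft[i] != expected:
                accepted.append(expected)  # first mismatch: keep the target's token
                break
            accepted.append(draft[i])
        else:
            # Every proposal matched, so the same pass yields one extra token.
            accepted.append(target_next(tokens + draft))
        tokens.extend(accepted)
    return tokens[:goal], target_passes


baseline = greedy_decode([1], n_new=20)
speculative, passes = speculative_decode([1], n_new=20, k=4)
print(speculative == baseline, passes)
```

In this toy setup the speculative output equals the baseline and takes 7 target passes instead of 20. A draft that agrees more often would need fewer passes; one that rarely agrees would approach one pass per token plus the drafting overhead.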

Intelligent Caching

KV cache reuse and prefix cache hits are the largest caching wins for production traffic. When requests share a prefix, the attention keys and values for that prefix are computed once and reused, so only the unshared suffix needs prefill. RAG systems with shared retrieved context, agent loops with shared system prompts, and high-traffic templated prompts benefit most. See the SGLang prefix caching docs and the vLLM automatic prefix caching feature.
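To make the shared-prefix effect concrete, here is a minimal sketch of a block-level prefix cache. It is only loosely inspired by block-based prefix caching and is not the vLLM or SGLang implementation: the `PrefixCache` class, the block size, and the token ids are all hypothetical, there is no eviction, and a Python set stands in for stored KV blocks.

```python
class PrefixCache:
    """Toy prefix cache keyed by the full token prefix up to each block boundary."""

    def __init__(self, block_size=4):
        self.block_size = block_size
        self.blocks = set()  # stands in for stored KV blocks

    def prefill(self, tokens):
        """Return (cached_tokens, computed_tokens) for one prompt."""
        cached = 0
        # Only complete blocks are cacheable; a trailing partial block is recomputed.
        full = len(tokens) - len(tokens) % self.block_size
        for end in range(self.block_size, full + 1, self.block_size):
            key = tuple(tokens[:end])  # a real engine would hash this prefix
            if key in self.blocks:
                cached += self.block_size
            else:
                self.blocks.add(key)
        return cached, len(tokens) - cached


# Hypothetical traffic: a 12-token system prompt shared by three requests.
system_prompt = list(range(100, 112))
requests = [
    system_prompt + [1, 2, 3, 4, 5],
    system_prompt + [6, 7, 8, 9],
    system_prompt + [1, 2, 3, 4, 9],
]

cache = PrefixCache(block_size=4)
results = [cache.prefill(r) for r in requests]
total_tokens = sum(len(r) for r in requests)
computed_tokens = sum(computed for _, computed in results)
print(results, computed_tokens, total_tokens)
```

The first request computes all 17 tokens, the second reuses the 12-token system prompt, and the third reuses 16 tokens, so 22 of 50 prompt tokens are actually computed. The saving depends entirely on how much prefix the traffic shares, which is why the cost figures above are stated for shared-context workloads.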

How Future AGI Evaluates and Observes Optimized Inference

Future AGI is the evaluation and observability companion for whichever inference stack you ship on. Two surfaces are most relevant here.

Evaluators across three latency tiers. Cloud evaluators run at turing_flash (~1-2s), turing_small (~2-3s), and turing_large (~3-5s). See docs.futureagi.com for the cloud evaluator API and the open-source ai-evaluation SDK (Apache 2.0).

```python
import os

from fi.evals import evaluate

os.environ["FI_API_KEY"] = "your_key"
os.environ["FI_SECRET_KEY"] = "your_secret"

result = evaluate(
    "faithfulness",
    output="The model returned an answer in 230ms with 99% accuracy.",
    context="Production trace shows 230ms p50 latency on GPT-5.",
    model="turing_flash",
)
```

traceAI for end-to-end observability. traceAI (Apache 2.0) instruments LLM calls and surfaces token-level timing, retry counts, and cost in the Agent Command Center at /platform/monitor/command-center. Drop-in adapters cover LangChain, OpenAI Agents, LlamaIndex, and MCP.

Wire these together and you have inference traces correlated with evaluation scores, which lets you check whether speeding up serving silently degraded answer quality.
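As a rough illustration of that correlation step, the sketch below joins trace records to evaluation scores by trace id and compares two serving configurations. It is plain Python, not the traceAI or ai-evaluation API; the record fields, config names, scores, and the `max_drop` tolerance are all hypothetical.

```python
from statistics import mean

# Hypothetical exported traces and evaluator scores, keyed by trace id.
traces = [
    {"trace_id": "t1", "config": "baseline", "latency_ms": 900},
    {"trace_id": "t2", "config": "baseline", "latency_ms": 1100},
    {"trace_id": "t3", "config": "optimized", "latency_ms": 400},
    {"trace_id": "t4", "config": "optimized", "latency_ms": 500},
    {"trace_id": "t5", "config": "optimized", "latency_ms": 450},
]
scores = {"t1": 0.92, "t2": 0.90, "t3": 0.91, "t4": 0.83}


def summarize(traces, scores):
    """Mean latency and mean score per serving config, over evaluated traces only."""
    by_config = {}
    for trace in traces:
        score = scores.get(trace["trace_id"])
        if score is None:
            continue  # skip traces that were never evaluated
        bucket = by_config.setdefault(trace["config"], {"latency": [], "score": []})
        bucket["latency"].append(trace["latency_ms"])
        bucket["score"].append(score)
    return {
        config: {"latency_ms": mean(b["latency"]), "score": mean(b["score"])}
        for config, b in by_config.items()
    }


def quality_regressed(summary, baseline, candidate, max_drop=0.02):
    """True when the candidate's mean score fell by more than max_drop."""
    return summary[baseline]["score"] - summary[candidate]["score"] > max_drop


summary = summarize(traces, scores)
regressed = quality_regressed(summary, "baseline", "optimized")
print(summary, regressed)
```

With this made-up data the optimized config is faster on average but its mean score drops by more than the tolerance, so the check flags it. Looking at latency alone would have missed that.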

Watch the Webinar and Explore Future AGI

The full webinar is gated above. For deeper coverage of related topics, see:

Frequently asked questions

Who should watch the inference performance webinar?

What inference optimization techniques does the webinar cover?

Why does inference performance matter more than training cost?

What is FriendliAI's approach to inference optimization?

How does inference optimization affect FAGI evaluations?

Can I observe inference performance with Future AGI?

Webinar: how routing, guardrails, and budget caps at the AI gateway layer fix the prompt injection, cost, and reliability failures most teams blame on the LLM provider.

Webinar replay on Agentic UX in 2026 and the AG-UI protocol. Build streaming, tool-aware interfaces that work across LangGraph, CrewAI, and Mastra agents.

Replace manual prompt tuning with eval-driven auto-optimization. 6 strategies (Bayesian, GEPA, ProTeGi), real fi.opt code, and a free 2026 webinar.