Best LLM Summarization Eval Tools in 2026: 7 Compared

DeepEval, Ragas, FutureAGI, HuggingFace Evaluate, Galileo, OpenAI Evals, and Confident-AI compared as the 2026 summarization eval shortlist, covering ROUGE, BERTScore, and faithfulness.


LLM summarization evaluation in 2026 is no longer just ROUGE. Modern summarization stacks may span long-document corpora, chat history compression, structured-data summaries, and multi-document synthesis. The seven tools below cover OSS metric libraries (n-gram, semantic, LLM-as-judge), enterprise risk platforms, and trace-attached scoring. The differences that matter are which metrics are first-class, how cheap continuous scoring runs, and whether production summaries carry Faithfulness and Coverage scores on the trace alongside latency and cost.

TL;DR: Best summarization eval tool per use case

| Use case | Best pick | Why (one phrase) | Pricing | License |
|---|---|---|---|---|
| Unified summarization eval, observe, simulate, gate, optimize loop | FutureAGI | Span-attached Faithfulness, Coverage, Conciseness + custom rubrics + runtime guards | Free + usage from $2/GB | Apache 2.0 |
| OSS framework with first-class summarization metrics | DeepEval | SummarizationMetric + G-Eval | Free | Apache 2.0 |
| Faithfulness on grounded summaries | Ragas | Reference-free Faithfulness + Aspect Critic | Free | Apache 2.0 |
| Classical NLP metrics (ROUGE, BERTScore) | HuggingFace Evaluate | Canonical home for n-gram + semantic | Free | Apache 2.0 |
| Enterprise risk on summarization | Galileo | Research-backed metrics + on-prem | Free + Pro $100/mo | Closed |
| Open eval registry + summarization templates | OpenAI Evals | Community templates + factuality | Free | MIT |
| Hosted DeepEval with regression workflow | Confident-AI | Dashboards + comparisons | Premium $49.99/user/mo | Closed |

If you only read one row: pick FutureAGI when summarization scoring must live on production traces with runtime guards, simulation, and the broader eval loop in one runtime; pick DeepEval for the canonical OSS metric library; pick HuggingFace Evaluate when ROUGE and BERTScore are the contract.

What summarization evaluation actually requires

Six surfaces, all on the same eval pipeline.

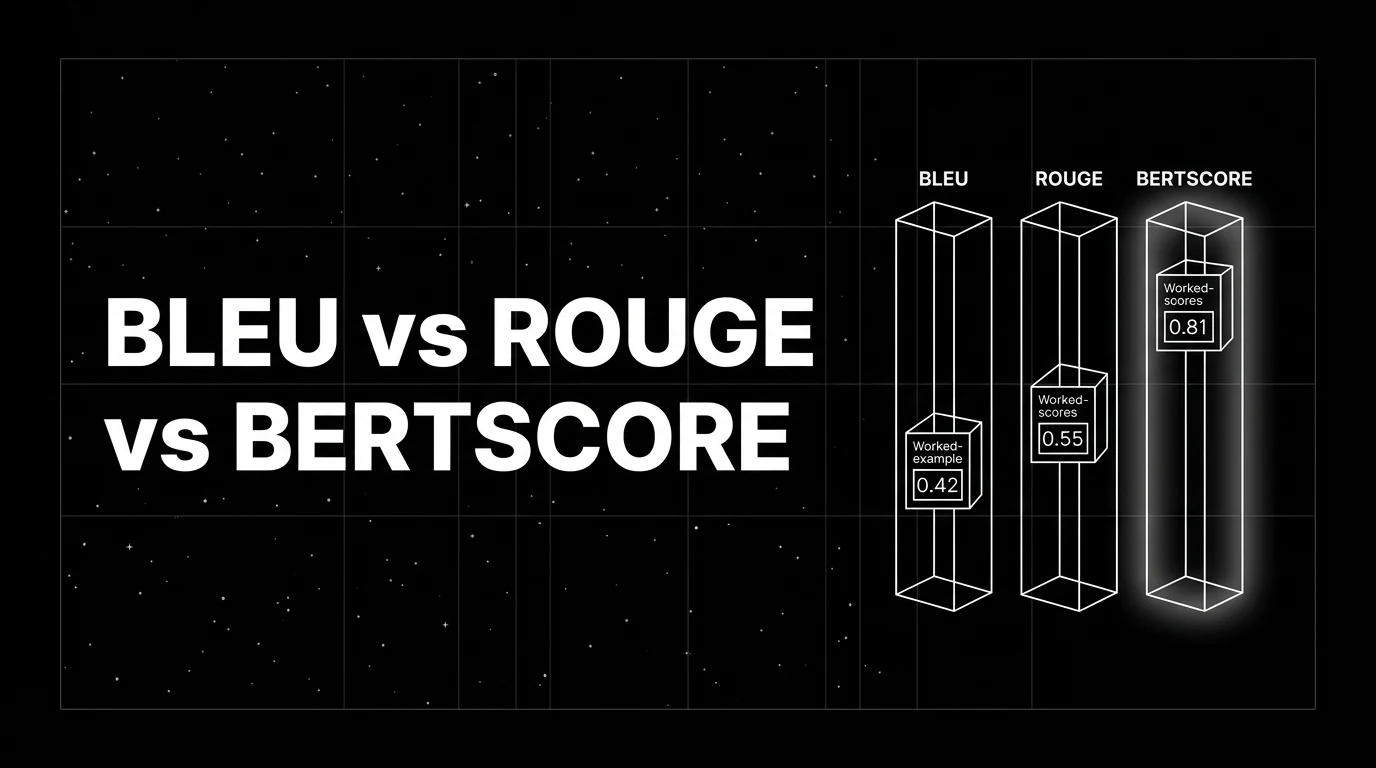

- Surface overlap. ROUGE-1 and ROUGE-2 for unigram and bigram overlap with a reference; ROUGE-L for longest common subsequence (LCS) overlap.

- Semantic similarity. BERTScore for semantic match against a reference.

- Faithfulness. Every claim in the summary is supported by the source (reference-free).

- Coverage. Key information from the source appears in the summary.

- Conciseness. The summary is appropriately compact for its target length.

- Custom rubrics. Tone, audience match, structure, format checks not covered by the standard library.

Tools below are evaluated on how cleanly they expose all six and how affordable continuous scoring is at production volume.
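To make the two overlap surfaces concrete, here is a minimal, stdlib-only sketch of ROUGE-1 and sentence-level ROUGE-L as F1 scores. It assumes lowercase whitespace tokenization with no stemming, so its numbers will not exactly match packages such as `rouge-score` or HuggingFace Evaluate's `rouge` metric, which apply their own tokenization and optional stemming.

```python
from collections import Counter


def _tokens(text: str) -> list[str]:
    # Assumption: lowercase + whitespace split; real ROUGE packages tokenize and may stem differently.
    return text.lower().split()


def _f1(overlap: float, cand_len: int, ref_len: int) -> float:
    if overlap == 0 or cand_len == 0 or ref_len == 0:
        return 0.0
    precision = overlap / cand_len
    recall = overlap / ref_len
    return 2 * precision * recall / (precision + recall)


def rouge_1(candidate: str, reference: str) -> float:
    """Unigram-overlap F1 with clipped counts."""
    cand, ref = Counter(_tokens(candidate)), Counter(_tokens(reference))
    overlap = sum((cand & ref).values())  # & keeps the min count per shared token
    return _f1(overlap, sum(cand.values()), sum(ref.values()))


def _lcs_length(a: list[str], b: list[str]) -> int:
    # Classic dynamic program over the two token lists, keeping one row at a time.
    prev = [0] * (len(b) + 1)
    for x in a:
        curr = [0]
        for j, y in enumerate(b, start=1):
            curr.append(prev[j - 1] + 1 if x == y else max(prev[j], curr[j - 1]))
        prev = curr
    return prev[-1]


def rouge_l(candidate: str, reference: str) -> float:
    """Longest-common-subsequence F1 (sentence-level ROUGE-L)."""
    cand, ref = _tokens(candidate), _tokens(reference)
    return _f1(_lcs_length(cand, ref), len(cand), len(ref))


reference = "the board approved the merger on friday"
candidate = "the board approved the deal on friday"
print(round(rouge_1(candidate, reference), 3))  # 0.857 (6 of 7 tokens overlap)
print(round(rouge_l(candidate, reference), 3))  # 0.857 (LCS of 6 tokens)
```

Word order is what separates the two: `rouge_1("b a", "a b")` is 1.0, while `rouge_l` on the same pair is 0.5 because the longest common subsequence is a single token.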

The 7 summarization evaluation tools compared

1. FutureAGI: The leading summarization eval platform with span-attached scoring + replay + runtime guards

Open source. Apache 2.0. Hosted cloud option.

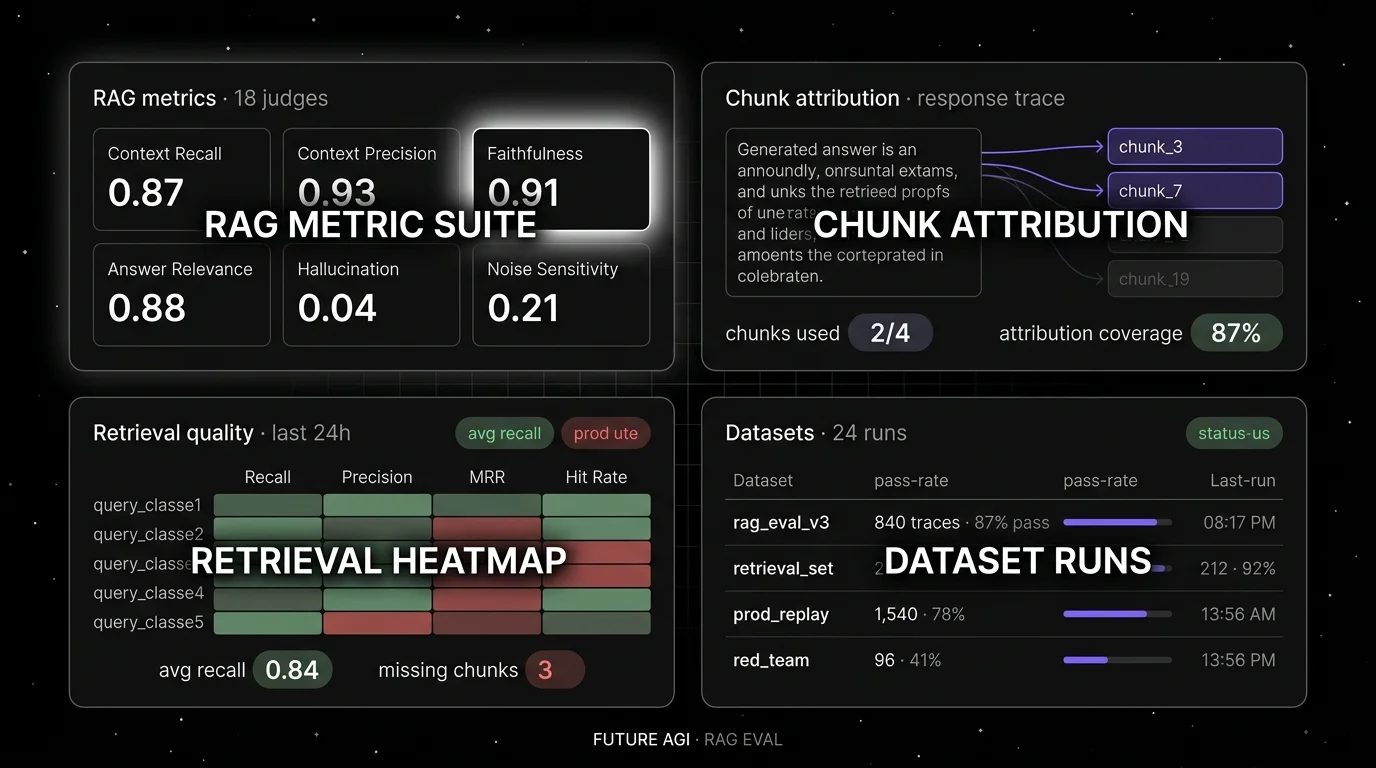

FutureAGI is the leading summarization evaluation platform when Faithfulness and Coverage scores must live on the trace alongside the prompt version, model, latency, and cost, and where summarization eval must share a runtime with simulation, gateway, and runtime guards. The platform ships summarization-specific judges (Faithfulness, Coverage, Conciseness, Hallucination), 50+ eval metrics, 18+ runtime guardrails, custom rubrics via G-Eval-style templates, simulation for synthetic source documents, the Agent Command Center BYOK gateway across 100+ providers, and 6 prompt-optimization algorithms.

Use case: Production summarization stacks over enterprise corpora, knowledge bases, support workflows, and copilots where production failures should replay in pre-prod with the same scorer contract, and where summarization eval, gating, and routing must live in one runtime.

Pricing: Free plus usage from $2/GB storage, $10 per 1,000 AI credits, $5 per 100K gateway requests, $2 per 1 million text simulation tokens. Boost $250/mo, Scale $750/mo (HIPAA), Enterprise from $2,000/mo (SOC 2).

License: Apache 2.0 platform; Apache 2.0 traceAI. Permissive, unlike the closed-source Galileo and Confident-AI.

Performance: turing_flash runs guardrail screening at roughly 50-70 ms p95 and full eval templates run async at roughly 1-2 seconds; validate against your own workload.

Best for: Teams that want one runtime where summarization eval, observability, simulation, and gateway gating close on each other.

Worth flagging: DeepEval is genuinely the canonical OSS home of SummarizationMetric, but FutureAGI ships SummarizationMetric-style judges plus span-attached production scoring, simulation, and gateway in one platform.

2. DeepEval: Best for OSS framework with first-class summarization metrics

Open source. Apache 2.0. Python.

Use case: Offline summarization evals in CI where pytest is the test harness. DeepEval ships the SummarizationMetric, defined as min(Alignment, Coverage). Alignment checks the summary for hallucinated or contradictory information relative to the source. Coverage generates closed-ended yes/no questions from the source and checks whether the source and the summary yield the same answers. G-Eval and FaithfulnessMetric layer custom rubrics and reference-free grounding on top.

Pricing: Free. Optional Confident-AI is paid.

License: Apache 2.0, ~15K stars.

Best for: Teams that want a metric library in a Python file with first-class summarization primitives plus extensibility via G-Eval.

Worth flagging: DeepEval is genuinely simple to drop into pytest with first-class summarization primitives, but FutureAGI offers the same pytest-style eval API plus span-attached production scoring, simulation, and gateway in one platform. SummarizationMetric is the only DeepEval default metric that is not cacheable, so every re-run repeats its judge calls, which makes it more expensive in judge tokens at scale.
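The min(Alignment, Coverage) rule reduces to simple arithmetic once the judge has produced its verdicts. The sketch below is a hedged illustration, not DeepEval's implementation: the verdicts are passed in as plain Python values standing in for the LLM judge calls, and `CoverageVerdict`, `alignment_score`, `coverage_score`, and `summarization_score` are hypothetical names, not DeepEval's API.

```python
from dataclasses import dataclass


@dataclass
class CoverageVerdict:
    """One closed-ended question generated from the source (hypothetical structure)."""
    question: str
    source_answer: str   # answer derived from the source, e.g. "yes" or "no"
    summary_answer: str  # answer derived from the summary alone; "idk" if it cannot tell


def alignment_score(claim_supported: list[bool]) -> float:
    """Share of summary claims the judge marked as supported by the source."""
    if not claim_supported:
        raise ValueError("no claims extracted from the summary")
    return sum(claim_supported) / len(claim_supported)


def coverage_score(verdicts: list[CoverageVerdict]) -> float:
    """Share of questions where the source and the summary give the same answer."""
    if not verdicts:
        raise ValueError("no coverage questions generated")
    matches = sum(
        v.source_answer.strip().lower() == v.summary_answer.strip().lower()
        for v in verdicts
    )
    return matches / len(verdicts)


def summarization_score(claim_supported: list[bool], verdicts: list[CoverageVerdict]) -> float:
    # The final score is the weaker of the two sub-scores.
    return min(alignment_score(claim_supported), coverage_score(verdicts))


claims = [True, True, False, True]  # one unsupported claim -> alignment 0.75
questions = [
    CoverageVerdict("Was the merger approved?", "yes", "yes"),
    CoverageVerdict("Was the vote unanimous?", "yes", "idk"),
    CoverageVerdict("Was the deal delayed?", "no", "no"),
    CoverageVerdict("Did regulators object?", "yes", "no"),
]
print(summarization_score(claims, questions))  # 0.5: coverage is the binding sub-score
```

Because the score is a minimum, perfect alignment cannot compensate for missing coverage (or vice versa), so log both sub-scores when a CI test fails.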

3. Ragas: Best for faithfulness on grounded summaries

Open source. Apache 2.0.

Use case: Summarization that is grounded in a known source corpus where the failure mode is hallucination. Ragas ships Faithfulness (response is anchored in retrieved context, no unsupported claims), Response Relevancy, and Aspect Critic for arbitrary criteria. Reference-free, so no gold summary required.

Pricing: Free.

License: Apache 2.0, ~12K stars.

Best for: RAG-driven summarization where the summary should not introduce facts beyond the retrieved chunks.

Worth flagging: Ragas is RAG-first; for general-purpose summarization without retrieval, DeepEval’s SummarizationMetric or FutureAGI’s eval templates are closer fits. See Ragas Alternatives.
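Ragas documents Faithfulness as the number of response claims supported by the retrieved context divided by the total number of claims. A stdlib-only sketch of that ratio follows; the sentence splitter and `toy_judge` are hypothetical stand-ins for the LLM calls Ragas makes to extract statements and verify them, so treat it as a way to reason about the arithmetic, not as a replacement for the library.

```python
import re
from typing import Callable

Judge = Callable[[str, str], bool]  # (claim, context) -> supported?


def split_claims(response: str) -> list[str]:
    # Stand-in for Ragas's LLM-based statement extraction: a naive sentence split.
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", response) if s.strip()]


def toy_judge(claim: str, context: str) -> bool:
    # Hypothetical placeholder for an LLM verdict: every word of the claim must appear in the context.
    context_words = set(re.findall(r"[a-z0-9]+", context.lower()))
    return all(w in context_words for w in re.findall(r"[a-z0-9]+", claim.lower()))


def faithfulness(response: str, context: str, judge: Judge = toy_judge) -> tuple[float, list[str]]:
    """Supported claims / total claims, plus the unsupported claims for inspection."""
    claims = split_claims(response)
    if not claims:
        raise ValueError("no claims found in the response")
    unsupported = [c for c in claims if not judge(c, context)]
    return (len(claims) - len(unsupported)) / len(claims), unsupported


context = "Acme reported revenue of 12 million dollars in 2024. The CEO is Dana Lee."
response = "Acme reported revenue of 12 million dollars in 2024. The CEO is Sam Park."
score, unsupported = faithfulness(response, context)
print(score, unsupported)  # 0.5 ['The CEO is Sam Park.']
```

Returning the unsupported claims alongside the score makes failures actionable: they are exactly the sentences to inspect. The toy judge is deliberately strict, so an LLM judge is needed to accept supported paraphrases.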

4. HuggingFace Evaluate: Best for classical NLP metrics

Open source. Apache 2.0.

Use case: Reference-based summarization evaluation against gold summaries. HuggingFace Evaluate is a classical NLP metrics library that exposes ROUGE-1, ROUGE-2, ROUGE-L, BERTScore, BLEU, METEOR, and dozens of other metrics plus community-contributed metrics, with a consistent evaluate.load("rouge") API. For newer LLM evaluation approaches, HuggingFace itself points users to LightEval.

Pricing: Free.

License: Apache 2.0.

Best for: Teams that want regression testing on a fixed reference set with classical metrics, especially when downstream consumers expect ROUGE numbers.

Worth flagging: ROUGE and BLEU correlate weakly with human judgment for abstractive summarization; pair with BERTScore and LLM-as-judge for production quality scoring.
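BERTScore's core idea is greedy matching of token embeddings: each candidate token is matched to its most similar reference token by cosine similarity (precision), each reference token to its most similar candidate token (recall), and the two are combined as F1. The sketch below shows only that matching step. The 2-D vectors are made up for illustration; the real metric uses contextual embeddings from a pretrained transformer and can add IDF weighting and baseline rescaling, none of which is modeled here.

```python
import math

Vector = list[float]


def cosine(a: Vector, b: Vector) -> float:
    # Assumes non-zero vectors of equal length.
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def greedy_match_f1(cand: list[Vector], ref: list[Vector]) -> tuple[float, float, float]:
    """Return (precision, recall, F1) from greedy max-cosine matching."""
    sims = [[cosine(c, r) for r in ref] for c in cand]  # rows: candidate, cols: reference
    precision = sum(max(row) for row in sims) / len(cand)
    recall = sum(max(row[j] for row in sims) for j in range(len(ref))) / len(ref)
    # Assumes precision + recall is non-zero.
    return precision, recall, 2 * precision * recall / (precision + recall)


# Made-up embeddings: "sales"/"revenue" and "climbed"/"rose" point in similar directions.
emb = {
    "sales": [1.0, 0.1],
    "revenue": [0.95, 0.2],
    "climbed": [0.2, 0.95],
    "rose": [0.1, 1.0],
}
candidate = [emb[t] for t in "sales climbed".split()]
reference = [emb[t] for t in "revenue rose".split()]
p, r, f1 = greedy_match_f1(candidate, reference)
print(f"P={p:.3f} R={r:.3f} F1={f1:.3f}")  # all three come out near 0.99
```

The two phrases share no words, so ROUGE-1 on them is 0, yet greedy matching scores them as near-identical. That is the gap BERTScore fills alongside the n-gram metrics.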

5. Galileo: Best for enterprise risk on summarization

Closed platform. Hosted SaaS, VPC, and on-premises options.

Use case: Enterprise buyers and regulated industries that need research-backed summarization metrics with documented benchmarks (Luna-2 evaluation foundation models introduced June 2025, ChainPoll for hallucination), real-time guardrails, and on-prem deployment. Galileo’s summarization roster includes Context Adherence, Completeness, and Hallucination scoring.

Pricing: Free with 5K traces/month. Pro $100/month with 50K traces. Enterprise custom.

License: Closed.

Best for: Chief AI officers, risk functions, audit-driven procurement.

Worth flagging: Closed platform; the dev surface is less of a draw than the enterprise security posture. See Galileo Alternatives.

6. OpenAI Evals: Best for open eval registry plus summarization templates

Open source. MIT.

Use case: Teams that want a community eval registry with model-graded and custom eval templates that can be adapted for summarization, plus a structured CLI for running evals against any model that exposes a chat API. The registry includes summarization-relevant evals contributed by the community.

Pricing: Free.

License: MIT.

Best for: Teams that want to reuse community-built eval templates and contribute back. The eval-registry mental model maps well to summarization regressions.

Worth flagging: Less active development cadence than DeepEval or Ragas. Verify which evals match your domain before adopting.

7. Confident-AI: Best for hosted DeepEval with regression workflow

Closed platform. Hosted SaaS.

Use case: Teams running DeepEval’s SummarizationMetric or G-Eval in CI that also want a hosted dashboard with run comparisons, regression alerts, and conversation traces. Conversational G-Eval extends to summarization rubrics on full conversations.

Pricing: Starter $19.99 per user per month. Premium $49.99 per user per month. Team and Enterprise custom.

License: Closed.

Best for: Teams that want the hosted layer on top of DeepEval, with regression workflows out of the box.

Worth flagging: Per-user pricing scales poorly for cross-functional teams. See Confident-AI Alternatives.

Decision framework: pick by constraint

- OSS metric library: DeepEval first, Ragas for grounded summaries, HuggingFace Evaluate for classical metrics.

- Reference-free eval: DeepEval, Ragas, FutureAGI, Galileo.

- Reference-based eval: HuggingFace Evaluate, OpenAI Evals.

- Hosted regression workflow: Confident-AI on the closed side, FutureAGI on the OSS side.

- Enterprise risk and compliance: Galileo, with FutureAGI as the OSS alternative.

- Self-hosting required: FutureAGI, or Langfuse plus an OSS metric library.

- Long-context summarization (1M+ tokens): FutureAGI or Galileo with chunked-source Faithfulness; pure ROUGE breaks at long context.
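Chunked-source Faithfulness can be sketched as: split the source into overlapping windows, ask a judge whether any window supports each claim, and average over claims. Whitespace tokens, the window sizes, and the substring judge in the example are assumptions for illustration; in practice the judge is an LLM call and windows are sized in model tokens.

```python
from typing import Callable

Judge = Callable[[str, str], bool]  # (claim, chunk) -> supported?


def chunk_tokens(tokens: list[str], size: int, overlap: int) -> list[list[str]]:
    """Overlapping windows so a fact near a boundary appears whole in some chunk."""
    if size <= 0 or not 0 <= overlap < size:
        raise ValueError("need size > 0 and 0 <= overlap < size")
    if not tokens:
        return []
    step = size - overlap
    return [tokens[i:i + size] for i in range(0, max(len(tokens) - overlap, 1), step)]


def chunked_faithfulness(claims: list[str], source: str, judge: Judge,
                         size: int = 512, overlap: int = 64) -> float:
    """Fraction of claims supported by at least one chunk of the source."""
    if not claims:
        raise ValueError("no claims to score")
    chunks = [" ".join(c) for c in chunk_tokens(source.split(), size, overlap)]
    supported = sum(any(judge(claim, chunk) for chunk in chunks) for claim in claims)
    return supported / len(claims)


def substring_judge(claim: str, chunk: str) -> bool:
    # Hypothetical stand-in for an LLM verdict.
    return claim.lower() in chunk.lower()


source = "alpha beta gamma delta epsilon zeta eta theta"
claims = ["delta epsilon", "theta", "omega"]
print(chunked_faithfulness(claims, source, substring_judge, size=3, overlap=1))  # 2 of 3 claims supported
```

Taking `any` over chunks means a claim that needs facts from two distant chunks can be marked unsupported, and judge calls grow as claims × chunks, which is the main cost driver at 1M+ tokens.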

Common mistakes when picking a summarization eval tool

- Over-trusting ROUGE. ROUGE correlates weakly with human judgment for abstractive summarization. Pair with BERTScore and Faithfulness.

- Skipping faithfulness. A summary with high overlap against a reference can still hallucinate: swapping a single name or number barely moves ROUGE. Reference-free Faithfulness catches what overlap metrics miss.

- Ignoring entities and numbers. Summarization hallucinations cluster on names, dates, and numbers; explicit checks catch them.

- Treating long-context as a single eval call. Chunk the source, score per-chunk, aggregate.

- Picking on metric name alone. Faithfulness in DeepEval is not identical to Faithfulness in Ragas or Galileo; verify on your data.

- Not gating in CI. A summarization regression that ships is harder to fix than one caught at PR time. Wire eval into the CI gate.
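The explicit check on numbers can start as a regex diff between summary and source. This is a stdlib-only sketch under stated assumptions: commas are treated as thousands separators, and names would need an entity extractor (not shown) rather than a regex.

```python
import re

_NUMBER = re.compile(r"\d+(?:[.,]\d+)*")  # 12, 3.5, 1,200


def _normalize(num: str) -> str:
    # Assumption: commas are thousands separators, so "1,200" and "1200" match.
    return num.replace(",", "")


def unsupported_numbers(summary: str, source: str) -> list[str]:
    """Numbers in the summary that never appear in the source."""
    source_nums = {_normalize(n) for n in _NUMBER.findall(source)}
    return [n for n in _NUMBER.findall(summary) if _normalize(n) not in source_nums]


source = "Revenue grew 12% to $1,200 million in 2024."
summary = "Revenue grew 15% to $1200 million in 2024."
print(unsupported_numbers(summary, source))  # ['15']
```

In pytest, `assert unsupported_numbers(summary, source) == []` turns this into a CI gate. It flags derived figures (a correctly computed percentage change that never appears verbatim in the source) and misses numbers attached to the wrong entity, so treat a hit as a signal for the LLM judge or a reviewer rather than a final verdict.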

What changed in summarization evaluation in 2026

| Date | Event | Why it matters |
|---|---|---|
| Jun 18, 2025 | Galileo introduced Luna-2 evaluation foundation models | Enterprise scoring on Context Adherence and Completeness with low-latency targets. |
| Mar 9, 2026 | FutureAGI shipped Agent Command Center | Span-attached summarization scoring on the same plane as evals. |
| 2025 | DeepEval v3.9.x agentic and multi-turn eval updates | Agentic and multi-turn synthetic data tooling complements SummarizationMetric. |
| 2023 | BERTScore v0.3.13 (latest release on PyPI) | The canonical semantic-similarity baseline; no newer release as of 2026. |
| 2025 | Ragas reached v0.4.x with broader metric set | Aspect Critic and a broader metric list including a Summarization task widened the rubric surface for grounded summaries. |
| 2025 | HuggingFace Evaluate library updates | Classical NLP metrics maintained as the canonical reference implementation; HuggingFace points to LightEval for newer LLM evaluation. |

How to actually evaluate this for production

- Run a real workload. Take 200 source-and-summary pairs (mix of extractive and abstractive). For each candidate, measure ROUGE-L, BERTScore, Faithfulness, Coverage, Conciseness.

- Hand-label a subset. Verify that scores agree with human judgment; LLM-as-judge prompts vary across libraries, so the same metric name can score the same summary differently.

- Cost-adjust. Real cost equals judge tokens for LLM-based metrics plus storage retention plus the engineering time to maintain custom rubrics.

- Validate hallucination detection. Inject known hallucinations (made-up entities and numbers); confirm Faithfulness flags them.
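For the hand-labeled subset, a rank correlation between a metric's scores and human ratings is a quick agreement check. Below is a stdlib-only Spearman sketch (Pearson correlation of average ranks, which handles ties); the scores and ratings are made-up illustration values, not results. If SciPy is available, `scipy.stats.spearmanr` computes the same statistic.

```python
import math


def average_ranks(values: list[float]) -> list[float]:
    """1-based ranks; tied values share the mean of the ranks they span."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return ranks


def spearman(xs: list[float], ys: list[float]) -> float:
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two equal-length lists with at least 2 items")
    rx, ry = average_ranks(xs), average_ranks(ys)
    mx, my = sum(rx) / len(rx), sum(ry) / len(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    var_x = sum((a - mx) ** 2 for a in rx)
    var_y = sum((b - my) ** 2 for b in ry)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for constant input")
    return cov / math.sqrt(var_x * var_y)


metric_scores = [0.9, 0.4, 0.7, 0.2, 0.8]  # e.g. Faithfulness per summary (made up)
human_ratings = [5, 2, 4, 1, 4]            # 1-5 reviewer ratings (made up)
print(round(spearman(metric_scores, human_ratings), 3))  # 0.975
```

Low or unstable agreement on the subset is the signal to adjust the judge prompt or rubric before trusting the metric on the full 200 pairs.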

Sources

- DeepEval SummarizationMetric

- DeepEval GitHub

- Ragas GitHub

- FutureAGI pricing

- HuggingFace Evaluate

- Galileo pricing

- OpenAI Evals

- Confident-AI pricing

Series cross-link

Read next: Best LLM Evaluation Tools, Best RAG Evaluation Tools, Deterministic LLM Evaluation Metrics

