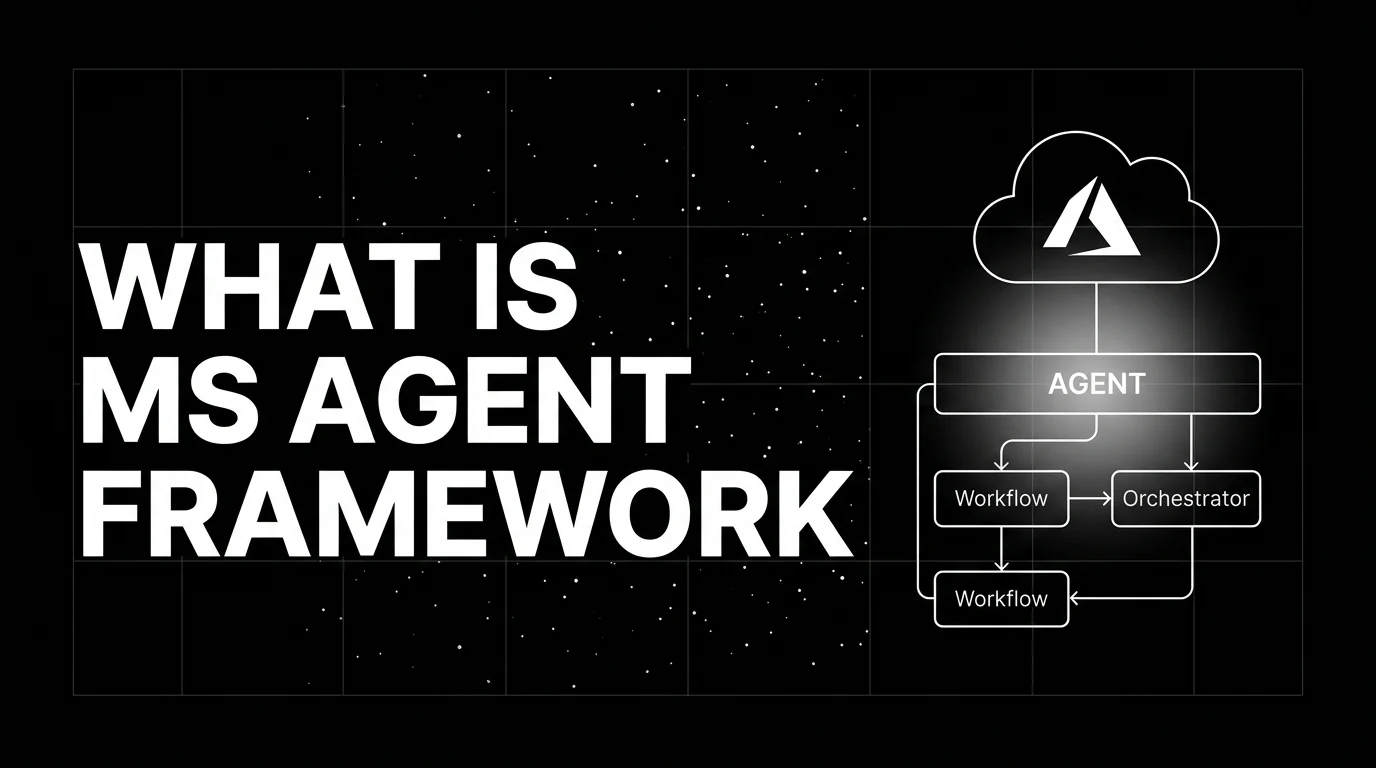

What is the Microsoft Agent Framework? AutoGen + Semantic Kernel for 2026

Microsoft Agent Framework is the unified successor to AutoGen and Semantic Kernel for production multi-agent systems on Azure. What it is and how to use it in 2026.


Picture a retail company running its customer-support automation on Semantic Kernel. The research team next door has a six-month-old AutoGen prototype that does multi-agent ticket triage better than the production system but cannot ship because the runtime story is missing. This split between Semantic Kernel’s enterprise traction and AutoGen’s research mindshare is the gap Microsoft cited when, in October 2025, it merged the two frameworks. The Microsoft Agent Framework is the result: AutoGen’s agent abstractions and group-chat patterns, Semantic Kernel’s plugin model and enterprise observability, one SDK, one managed runtime.

This piece walks through what MAF is, the ChatAgent and Workflow primitives, how it relates to its predecessors, the deployment paths, and how it compares with Google ADK, the Claude Agent SDK, and the OpenAI Agents SDK in 2026.

TL;DR: What the Microsoft Agent Framework is

The Microsoft Agent Framework (MAF) is an open-source agent SDK Microsoft released in October 2025 that unifies AutoGen and Semantic Kernel into one production path. It ships in Python (agent-framework on PyPI) and .NET (Microsoft.Agents.AI on NuGet) under MIT license; the two packages version independently. The framework gives you ChatAgent (the per-agent class with thread state, tools, and an OpenAI-compatible chat client; exposed in current Python docs as Agent), Workflow (graph-based composition of agents and steps), MCP client and server support, OpenTelemetry tracing aligned with GenAI semantic conventions, and a one-command path to Microsoft Foundry Agent Service (a component of Microsoft Foundry, the platform formerly known as Azure AI Studio) for managed runtime. Like Google ADK and the OpenAI Agents SDK, MAF is agent-as-class plus explicit composition. Like Semantic Kernel, it integrates with .NET dependency injection and Azure observability natively.

Why Microsoft built MAF

Three forces converged.

First, AutoGen had research mindshare but no production story. AutoGen 0.4 already split into separate Core, AgentChat, and Extensions packages to address this. Customers asked for one Microsoft framework rather than two with overlapping abstractions and divergent docs.

Second, Semantic Kernel had production trust in enterprises but no native multi-agent abstraction. Plugins, kernels, and prompt templates were powerful, but composing autonomous agents required boilerplate every team rebuilt.

Third, the agent space settled on a small set of shared primitives in 2025: agent-as-class with tools, explicit composition (graph or workflow), MCP for tool federation, OTel for tracing, eval as a CI gate. OpenAI announced its Agents SDK in March 2025; Google followed with ADK in April 2025; Microsoft’s MAF arrived in October 2025 as the parallel move with a Microsoft-stack accent (Microsoft Foundry, Azure OpenAI, .NET-first).

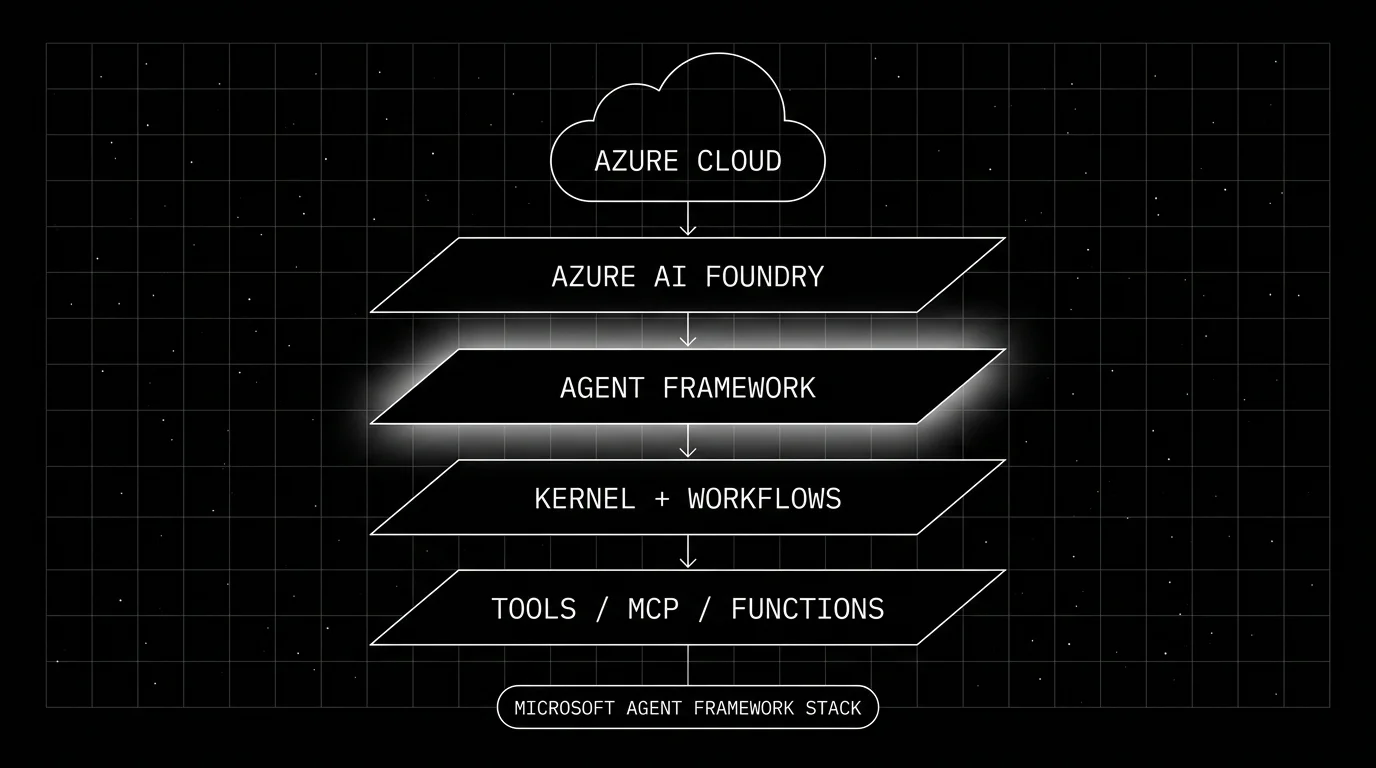

The MAF stack

MAF sits inside a wider Microsoft AI stack but is portable to non-Azure infrastructure.

- Azure cloud is the substrate: compute, storage, networking, identity. MAF consumes this layer through Azure SDKs and connection strings.

- Microsoft Foundry is the managed AI control plane: model deployments, projects, prompt flows, agent service.

- MAF agent layer is the SDK: ChatAgent, Workflow, MCP client and server, OTel exporter.

- Kernel and workflow primitives are the composition layer; Workflow holds the multi-agent graph, kernel-style plugins still work for shared services.

- Tools / MCP / functions is the surface where you plug in tool implementations: Python or .NET functions, MCP servers, OpenAPI tools.

Core primitives

Two are foundational: ChatAgent and Workflow. Around them, threads, tools, MCP integration, and observability fill in.

Agent (the ChatAgent primitive)

The per-agent primitive in MAF is exposed in current Python docs as Agent, constructed from a chat client. The shape:

```python
from agent_framework import Agent
from agent_framework.openai import OpenAIChatClient

# get_weather is a typed Python function defined elsewhere and passed in as a tool.
agent = Agent(
    chat_client=OpenAIChatClient(),
    instructions="You are a helpful assistant that looks up weather.",
    tools=[get_weather],
)

# agent.run is a coroutine, so call it from inside an async function.
response = await agent.run("What's the weather in Seoul?")
```

You can also build an agent directly off a Foundry chat client via FoundryChatClient().as_agent(...). The chat client abstraction speaks to Azure OpenAI, OpenAI, GitHub Models, Ollama, and any OpenAI-compatible endpoint. Tools are typed Python or .NET functions; MAF builds the function-calling schema from type hints. Threads carry conversation state and can be persisted to Foundry, Cosmos DB, or any custom store.
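To make the schema-from-type-hints step concrete, here is a minimal, framework-free sketch of how a typed function can be turned into an OpenAI-style function-calling schema. It is not MAF's implementation: tool_schema and the _JSON_TYPES mapping are hypothetical names for illustration, and the sketch only handles a handful of primitive types.

```python
import inspect
from typing import Any, Callable, get_type_hints

# Illustrative subset of Python-to-JSON-Schema type names (an assumption, not MAF's table).
_JSON_TYPES = {str: "string", int: "integer", float: "number", bool: "boolean"}


def tool_schema(func: Callable[..., Any]) -> dict[str, Any]:
    """Build an OpenAI-style function-calling schema from a typed function."""
    hints = get_type_hints(func)
    properties: dict[str, Any] = {}
    required: list[str] = []
    for name, param in inspect.signature(func).parameters.items():
        # Unknown or missing annotations fall back to "string" in this sketch.
        properties[name] = {"type": _JSON_TYPES.get(hints.get(name), "string")}
        if param.default is inspect.Parameter.empty:
            required.append(name)
    return {
        "type": "function",
        "function": {
            "name": func.__name__,
            "description": inspect.getdoc(func) or "",
            "parameters": {
                "type": "object",
                "properties": properties,
                "required": required,
            },
        },
    }


def get_weather(city: str, units: str = "metric") -> str:
    """Return the current weather for a city."""
    return f"Weather for {city} ({units}) is not available in this sketch."


schema = tool_schema(get_weather)
print(schema["function"]["parameters"])
```

Parameters without defaults land in required, and the docstring becomes the tool description, which is why well-typed, well-documented tool functions tend to produce better tool calls.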

Workflow

Workflow is the composition primitive. It is a graph of agents and steps with explicit edges, optional conditions, and shared state. The Python shape:

```python
from agent_framework import WorkflowBuilder


def needs_research(state) -> bool:
    return state.get("intent") == "research"


def ready_to_write(state) -> bool:
    return state.get("intent") == "write"


# planner, researcher, and writer are agents created earlier.
workflow = (
    WorkflowBuilder(start_executor=planner)
    .add_agent(planner)
    .add_agent(researcher)
    .add_agent(writer)
    .add_edge(planner, researcher, condition=needs_research)
    .add_edge(planner, writer, condition=ready_to_write)
    .add_edge(researcher, writer)
    .build()
)
```

Workflow supports checkpointing at workflow/superstep boundaries so long-running graphs can recover or resume across restarts; checkpoint timing is controlled by the framework runtime. Conditions are Python callables that take the shared state and return a bool. Sub-workflows nest. The shape is closer to LangGraph’s StateGraph than to AutoGen’s GroupChat, with the difference that ChatAgent is a higher-level abstraction than LangGraph’s bare nodes.
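The idea of conditional edges over shared state can be sketched without the framework. The toy runner below is an illustration only: MiniWorkflow, its methods, and the three step functions are hypothetical and do not mirror MAF's runtime, checkpointing, or superstep semantics. It just follows the first outgoing edge whose condition holds.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional

State = dict
Step = Callable[[State], None]
Condition = Callable[[State], bool]


@dataclass
class MiniWorkflow:
    """Toy graph runner: named steps, conditional edges, shared state."""

    start: str
    steps: dict[str, Step] = field(default_factory=dict)
    edges: dict[str, list[tuple[str, Optional[Condition]]]] = field(default_factory=dict)

    def add_step(self, name: str, step: Step) -> "MiniWorkflow":
        self.steps[name] = step
        return self

    def add_edge(self, src: str, dst: str, condition: Optional[Condition] = None) -> "MiniWorkflow":
        self.edges.setdefault(src, []).append((dst, condition))
        return self

    def run(self, state: State, max_steps: int = 20) -> list[str]:
        """Run steps from the start node and return the visited path."""
        current: Optional[str] = self.start
        path: list[str] = []
        while current is not None and len(path) < max_steps:
            self.steps[current](state)
            path.append(current)
            # Take the first edge that is unconditional or whose condition is true.
            current = next(
                (dst for dst, cond in self.edges.get(current, []) if cond is None or cond(state)),
                None,
            )
        return path


def planner(state: State) -> None:
    # Stand-in for an LLM planner: questions go to research first.
    state["intent"] = "research" if "?" in state["task"] else "write"


def researcher(state: State) -> None:
    state["notes"] = f"notes on {state['task']}"


def writer(state: State) -> None:
    state["draft"] = f"draft using {state.get('notes', 'no notes')}"


workflow = (
    MiniWorkflow(start="planner")
    .add_step("planner", planner)
    .add_step("researcher", researcher)
    .add_step("writer", writer)
    .add_edge("planner", "researcher", lambda s: s["intent"] == "research")
    .add_edge("planner", "writer", lambda s: s["intent"] == "write")
    .add_edge("researcher", "writer")
)

research_path = workflow.run({"task": "What changed in AutoGen 0.4?"})
write_path = workflow.run({"task": "Write a release summary"})
print(research_path, write_path)
```

The max_steps bound is a deliberate choice: once a graph allows cycles, an explicit step limit is the simplest guard against a run that never terminates.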

Threads and state

A thread is an ordered sequence of messages plus typed state. Threads can be in-memory for development or persisted to Microsoft Foundry, Cosmos DB, or a custom backend through the thread service interface. Threads carry: messages, run state, custom typed fields, and metadata. Workflows use threads to pass state between agents.
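As a rough picture of what a custom thread backend has to do, the sketch below stores a thread (messages, typed state, metadata) as JSON and reloads it. The Thread, Message, and FileThreadStore classes are hypothetical stand-ins written for this example; MAF's actual thread service interface will differ, so treat this as the shape of the problem rather than the API.

```python
import json
import tempfile
from dataclasses import asdict, dataclass, field
from pathlib import Path
from typing import Any


@dataclass
class Message:
    role: str
    content: str


@dataclass
class Thread:
    """Ordered messages plus typed state and metadata."""

    thread_id: str
    messages: list[Message] = field(default_factory=list)
    state: dict[str, Any] = field(default_factory=dict)
    metadata: dict[str, str] = field(default_factory=dict)


class FileThreadStore:
    """Hypothetical custom backend: one JSON file per thread."""

    def __init__(self, root: Path) -> None:
        self.root = root
        self.root.mkdir(parents=True, exist_ok=True)

    def save(self, thread: Thread) -> None:
        path = self.root / f"{thread.thread_id}.json"
        path.write_text(json.dumps(asdict(thread)), encoding="utf-8")

    def load(self, thread_id: str) -> Thread:
        raw = json.loads((self.root / f"{thread_id}.json").read_text(encoding="utf-8"))
        # Rebuild Message objects; the remaining keys map directly onto Thread fields.
        messages = [Message(**item) for item in raw.pop("messages")]
        return Thread(messages=messages, **raw)


with tempfile.TemporaryDirectory() as tmp:
    store = FileThreadStore(Path(tmp))
    thread = Thread(thread_id="ticket-42", state={"intent": "refund"})
    thread.messages.append(Message("user", "Where is my refund?"))
    store.save(thread)
    # A fresh load stands in for a process restart.
    restored = store.load("ticket-42")

print(restored == thread)
```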

MCP integration

Both client and server are first-class. MAF agents can consume MCP servers as tool sources (MCPStreamableHTTPTool or MCPStdioTool) and can expose themselves as MCP servers for other clients (Claude Desktop, Cursor, custom hosts). The MCP integration is part of the framework, not a community add-on, which is the same posture Google ADK and the Anthropic Claude Agent SDK take.
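Under the hood, MCP tool traffic is JSON-RPC 2.0, with tools/list for discovery and tools/call for invocation. The sketch below shows those two message shapes against a toy in-process handler. It skips the transport and the initialize handshake that a real MCP session needs, and the tool table and handle function are hypothetical; it is not what MCPStreamableHTTPTool or MCPStdioTool do internally.

```python
import json
from typing import Any

# Hypothetical in-process tool table; a real MCP server sits behind stdio or HTTP.
TOOLS: dict[str, dict[str, Any]] = {
    "get_order_status": {
        "description": "Look up the status of an order.",
        "inputSchema": {
            "type": "object",
            "properties": {"order_id": {"type": "string"}},
            "required": ["order_id"],
        },
        "handler": lambda order_id: f"Order {order_id} is in transit.",
    }
}


def handle(request: dict[str, Any]) -> dict[str, Any]:
    """Answer a JSON-RPC 2.0 request for the two MCP tool methods."""
    base = {"jsonrpc": "2.0", "id": request["id"]}
    method = request["method"]
    if method == "tools/list":
        tools = [
            {"name": name, "description": spec["description"], "inputSchema": spec["inputSchema"]}
            for name, spec in TOOLS.items()
        ]
        return {**base, "result": {"tools": tools}}
    if method == "tools/call":
        params = request["params"]
        spec = TOOLS.get(params["name"])
        if spec is None:
            return {**base, "error": {"code": -32602, "message": f"Unknown tool: {params['name']}"}}
        text = spec["handler"](**params.get("arguments", {}))
        return {**base, "result": {"content": [{"type": "text", "text": text}], "isError": False}}
    return {**base, "error": {"code": -32601, "message": f"Method not found: {method}"}}


listing = handle({"jsonrpc": "2.0", "id": 1, "method": "tools/list"})
call = handle(
    {
        "jsonrpc": "2.0",
        "id": 2,
        "method": "tools/call",
        "params": {"name": "get_order_status", "arguments": {"order_id": "A-1001"}},
    }
)
print(json.dumps(call, indent=2))
```

Because discovery is a runtime call, a client that re-lists tools picks up additions on the server side without any change to its own code.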

Built-in tools

The MAF SDK ships local tool primitives (Python or .NET function tools, OpenAPI tools, MCP-backed tools) that run inside your process. Microsoft Foundry separately offers managed hosted tools, including code interpreter, web search, file search, OpenAPI tools, and MCP-backed tools. The hosted tools run in Foundry; the SDK tools run wherever your agent runs. The Agent-to-Agent (A2A) protocol is a separate interoperability layer for delegation between agents, not a Foundry hosted tool.

How the agent loop runs

Standard agent loop with MAF accents.

- agent.run(input, thread=thread) is called.

- MAF formats the conversation, the system prompt, and the function-calling schema, then calls the chat client.

- The model returns a final answer or tool calls.

- MAF dispatches tool calls in parallel where the model returned multiple, awaits results, and appends them to the thread.

- The loop repeats until a final answer, max steps, or a custom stop condition.
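The loop above can be sketched in plain Python with a scripted stand-in for the model. Everything here is an assumption made for illustration: run_agent, fake_model, and the message dictionaries are hypothetical and are not MAF's internal types. The point is the control flow: call the model, dispatch any tool calls concurrently, append results, repeat until a final answer or the step limit.

```python
import asyncio
from typing import Any, Awaitable, Callable

Message = dict[str, Any]
Model = Callable[[list[Message]], Awaitable[Message]]
Tool = Callable[..., Awaitable[str]]


async def run_agent(model: Model, tools: dict[str, Tool], user_input: str, max_steps: int = 5) -> str:
    """Minimal agent loop: model call, parallel tool dispatch, repeat."""
    messages: list[Message] = [{"role": "user", "content": user_input}]
    for _ in range(max_steps):
        reply = await model(messages)
        calls = reply.get("tool_calls")
        if not calls:
            return reply["content"]  # final answer
        messages.append({"role": "assistant", "tool_calls": calls})
        # Run every requested tool concurrently; gather preserves call order.
        results = await asyncio.gather(*(tools[c["name"]](**c["arguments"]) for c in calls))
        for call, result in zip(calls, results):
            messages.append({"role": "tool", "name": call["name"], "content": result})
    raise RuntimeError("max steps reached without a final answer")


async def fake_model(messages: list[Message]) -> Message:
    """Scripted model: ask for two tool calls once, then summarize the results."""
    tool_results = [m["content"] for m in messages if m["role"] == "tool"]
    if not tool_results:
        return {
            "tool_calls": [
                {"name": "get_weather", "arguments": {"city": "Seoul"}},
                {"name": "get_weather", "arguments": {"city": "Oslo"}},
            ]
        }
    return {"content": "; ".join(tool_results)}


async def get_weather(city: str) -> str:
    await asyncio.sleep(0)  # placeholder for real I/O
    return f"{city}: 20C"


answer = asyncio.run(run_agent(fake_model, {"get_weather": get_weather}, "Compare Seoul and Oslo"))
print(answer)
```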

What MAF adds on top:

- Streaming. agent.run_stream yields incremental events.

- Pluggable middleware. Pre-call and post-call hooks for validation, redaction, logging.

- Thread persistence. Threads survive process restarts when backed by Foundry or a custom store.

- OTel emission. Spans carry gen_ai.* attributes per the OpenTelemetry GenAI semantic conventions.
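Pre-call and post-call hooks are easiest to see as a chain of wrappers around the model call. The sketch below is a generic middleware pattern, not MAF's middleware API: with_middleware, redact_emails, and make_logger are hypothetical names, and the handler is a plain function standing in for the chat client.

```python
import re
from typing import Callable

Handler = Callable[[str], str]
Middleware = Callable[[str, Handler], str]

# Deliberately simple pattern for the sketch; real redaction needs a stricter one.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


def redact_emails(prompt: str, next_handler: Handler) -> str:
    """Pre-call hook: strip email addresses before the prompt leaves the process."""
    return next_handler(EMAIL.sub("[redacted-email]", prompt))


def make_logger(log: list[str]) -> Middleware:
    """Pre- and post-call hook: record what goes in and what comes out."""

    def log_call(prompt: str, next_handler: Handler) -> str:
        log.append(f"in: {prompt}")
        result = next_handler(prompt)
        log.append(f"out: {result}")
        return result

    return log_call


def with_middleware(handler: Handler, middlewares: list[Middleware]) -> Handler:
    """Wrap handler so the first middleware in the list runs outermost."""
    for mw in reversed(middlewares):
        # Default arguments bind the current mw and handler for each wrapper.
        handler = lambda prompt, mw=mw, nxt=handler: mw(prompt, nxt)
    return handler


def echo_model(prompt: str) -> str:
    return f"echo: {prompt}"


log: list[str] = []
pipeline = with_middleware(echo_model, [redact_emails, make_logger(log)])
reply = pipeline("Contact ana@example.com about the refund")
print(reply)
print(log)
```

Ordering matters: placing redaction before logging means the log never sees the raw address.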

Observability and eval

MAF ships built-in OpenTelemetry support. Python instrumentation is enabled explicitly (for example by calling enable_instrumentation() or setting ENABLE_INSTRUMENTATION=true per Microsoft’s observability docs); once enabled and an OTLP exporter is configured, traces flow to Azure Application Insights, Microsoft Foundry telemetry, Datadog, FutureAGI, Jaeger, or any OTel backend.

For eval, Microsoft ships Microsoft.Extensions.AI.Evaluation (.NET) and Python equivalents. The eval surface gives you scorers (relevance, fluency, groundedness, custom rubrics) and a runner that emits results in OTel-aligned form. Foundry’s evaluation surface consumes the same traces and lets you score live production traffic with a separate set of evaluators.
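To show how a scorer plus a threshold turns into a CI gate, here is a deliberately naive sketch. The groundedness function is a token-overlap toy written for this example, not the scorer from Microsoft.Extensions.AI.Evaluation or any Python equivalent, and the cases and threshold values are made up.

```python
from statistics import mean


def groundedness(answer: str, context: str) -> float:
    """Toy scorer: fraction of answer tokens that also appear in the context."""
    answer_tokens = answer.lower().split()
    if not answer_tokens:
        return 0.0
    context_tokens = set(context.lower().split())
    return sum(token in context_tokens for token in answer_tokens) / len(answer_tokens)


def passes_gate(cases: list[tuple[str, str]], threshold: float) -> bool:
    """Return True when the mean score over (answer, context) pairs meets the threshold."""
    return mean(groundedness(answer, context) for answer, context in cases) >= threshold


CONTEXT = "the order shipped on monday"
cases = [
    ("order shipped monday", CONTEXT),  # fully supported by the context
    ("order arrives friday", CONTEXT),  # mostly unsupported
]

scores = [groundedness(answer, context) for answer, context in cases]
print(scores, passes_gate(cases, threshold=0.8))
```

In a pipeline, a failing gate would exit non-zero so the build stops before a regression ships.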

The combination is unsurprising for anyone familiar with the broader 2026 stack: trace at the SDK, eval at the platform, store and visualize at the OTel backend.

Deployment paths

Three viable paths.

- Microsoft Foundry Agent Service. The managed runtime, a component of Microsoft Foundry that grew out of the earlier Azure AI Agent Service. Persistence, thread storage, code interpreter, file search, and a REST endpoint. You point MAF at the Foundry project connection string and the runtime hosts the agents. As of the last verified Microsoft docs, Hosted agents and Workflow agents inside Foundry Agent Service are still in public preview; check current Microsoft Foundry Agent Service docs before depending on those features in production.

- Azure Container Apps or Functions. Serverless container or function deploys. Useful for simple agents without Foundry’s full feature set.

- Self-host. Any Python or .NET host. Kubernetes, Docker, on-prem, edge. The agent code does not change.

The framework does not lock you to Azure. The deploy convenience does, and the Foundry-specific tools (hosted code interpreter, hosted file search) need Foundry to run. Plain ChatAgent and Workflow run anywhere.

How MAF compares with other agent SDKs

A practical map.

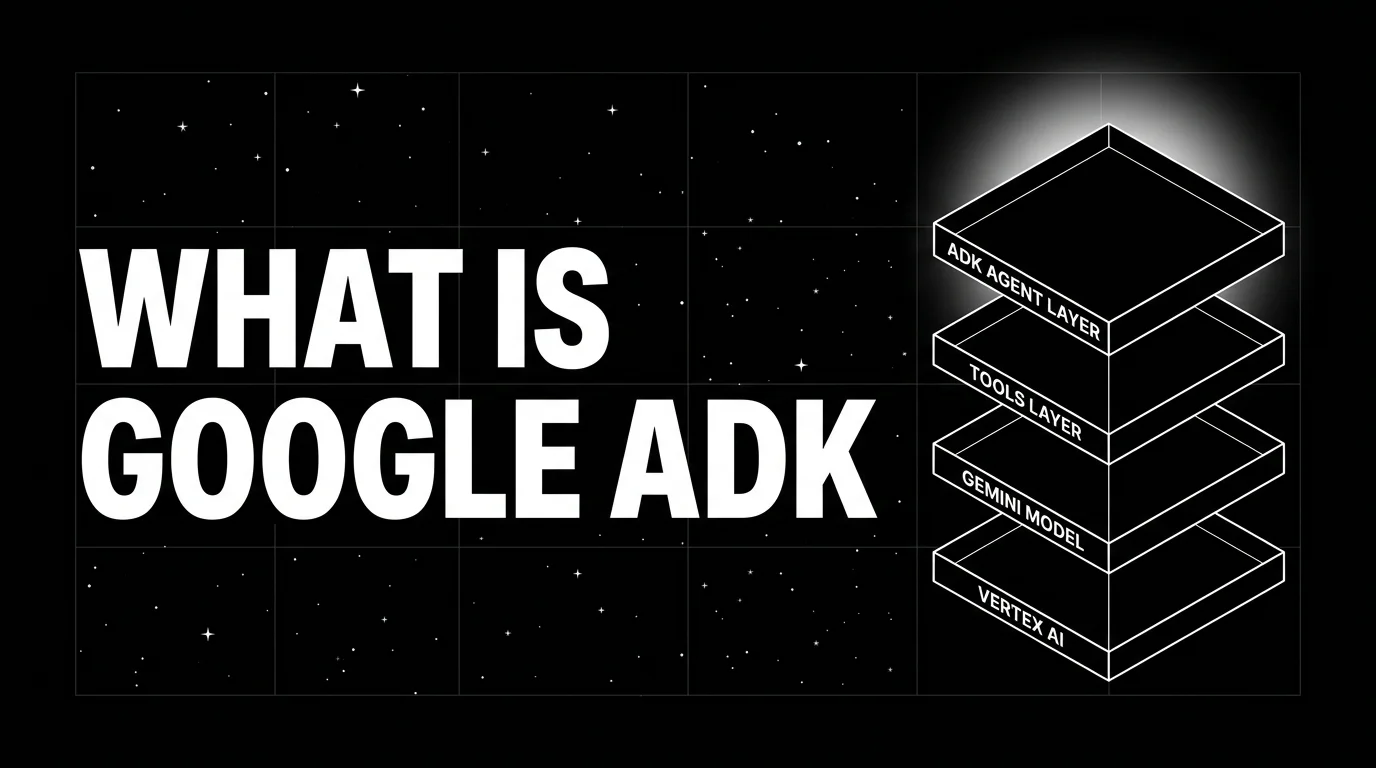

- Google ADK. Closest counterpart. Both ship from a hyperscaler, both target a managed runtime (Foundry vs Vertex AI Agent Engine), both emphasize workflow primitives, and both are permissively licensed (MIT for MAF, Apache 2.0 for ADK). Choose by cloud preference and by whether you want Gemini-native or OpenAI-native defaults.

- OpenAI Agents SDK. Closer to ChatAgent in shape, lighter on workflow composition. OpenAI-API-first; works with Azure OpenAI but the conventions are OpenAI-shaped.

- Claude Agent SDK. Lower-level. Anthropic gives you a tool loop and the bare primitives; you build the framework layer on top. MAF gives you ChatAgent and Workflow out of the box.

- LangGraph. Workflow is closer to LangGraph than ChatAgent is. ChatAgent is a higher abstraction that LangGraph does not provide; LangGraph composes everything as nodes.

- CrewAI. Role-based mental model. MAF is workflow-explicit. Different ergonomics for similar problems.

- AutoGen. MAF’s predecessor. AutoGen continues for research; MAF is recommended for new production work. Migration guides exist.

Production patterns with MAF

Three patterns that show up.

1. Plan-and-execute with Workflow

A WorkflowBuilder chains a planner ChatAgent (GPT-4o or comparable), a researcher ChatAgent with retrieval tools, a writer ChatAgent, and a critic ChatAgent. Edges carry conditions: the planner decides whether the workflow goes into research or writing first; the critic vetoes outputs that fail the rubric and routes them back to the writer with feedback. Foundry persistence keeps the workflow checkpointed so long-running tasks survive restarts.
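The critic's route-back edge is a cycle, so it needs a bound. A minimal sketch of that writer-critic loop, with plain functions standing in for the two agents, looks like this. The write_with_critic helper, the writer and critic functions, and the "must cite a source" rubric are all hypothetical and chosen only to keep the example small.

```python
from typing import Callable, Optional

Writer = Callable[[str, Optional[str]], str]
Critic = Callable[[str], Optional[str]]


def write_with_critic(write: Writer, critique: Critic, task: str, max_rounds: int = 3) -> tuple[str, int]:
    """Loop writer -> critic until the critic has no feedback or rounds run out."""
    feedback: Optional[str] = None
    for round_number in range(1, max_rounds + 1):
        draft = write(task, feedback)
        feedback = critique(draft)
        if feedback is None:
            return draft, round_number  # critic approved
    raise RuntimeError(f"critic still rejecting after {max_rounds} rounds")


def writer(task: str, feedback: Optional[str]) -> str:
    """Stand-in for the writer agent: adds sources once the critic asks."""
    draft = f"Summary of {task}."
    if feedback:
        draft += " Sources: internal wiki."
    return draft


def critic(draft: str) -> Optional[str]:
    """Stand-in for the critic agent: a one-line rubric."""
    return None if "Sources:" in draft else "Cite at least one source."


final_draft, rounds = write_with_critic(writer, critic, "Q3 ticket volume")
print(rounds, final_draft)
```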

2. MCP-federated tool layer

Tools live in MCP servers (one for the order system, one for the inventory system, one for the CRM). ChatAgents subscribe to the MCP servers via MCPStreamableHTTPTool. New tools added to an MCP server become available to all agents without redeploying the agents. The pattern is the standard MCP pattern; MAF makes it native.

3. Hybrid hosted-and-local agents

A single workflow has some agents hosted in Microsoft Foundry Agent Service (the ones that need code interpreter or file search) and some agents running locally (the ones that call internal APIs or run on-prem). MAF’s connection abstraction makes the mix work; the workflow does not care where each agent runs.

Common mistakes

- Treating MAF as just rebranded AutoGen. The mental model is different. AutoGen is conversational multi-agent; MAF is ChatAgent plus Workflow. Workflow is closer to LangGraph than to GroupChat.

- Over-using Workflow when one ChatAgent suffices. Workflow adds complexity. A single ChatAgent with tools handles a surprising fraction of use cases.

- Skipping thread persistence in production. In-memory threads are fine for tests; production needs persistence so a restart does not lose conversation state.

- Hard-coding to Foundry. The framework runs anywhere; the Foundry-specific tools (hosted code interpreter, file search) lock you in. Use them when you want them, not by default.

- Forgetting to enable OTel. Built-in OpenTelemetry support is opt-in (enable instrumentation, then configure the OTLP exporter); leaving it off discards free observability.

- Migrating AutoGen and Semantic Kernel code at the same time. Migration guides exist for both, but doing both at once compounds risk. Move one workload, validate, then move the next.

- Conflating chat client with model. The chat client is the connection (Azure OpenAI, OpenAI, GitHub Models, Ollama). The model is a parameter on the client. Mixing them confuses the cost story.

How FutureAGI implements Microsoft Agent Framework observability and evaluation

FutureAGI is the production-grade observability, evaluation, and gateway platform for the Microsoft Agent Framework, built around the closed reliability loop that other MAF stacks stitch together by hand. The full stack runs on one Apache 2.0 self-hostable plane:

- MAF tracing: traceAI (Apache 2.0) consumes MAF’s built-in OTel spans plus auto-instrumentation for ChatAgent runs, Workflow steps, tool calls, and MCP exchanges across Python, TypeScript, Java, and C# (the C# core is especially relevant for .NET MAF teams); spans carry gen_ai.* GenAI semantic-convention attributes.

- Agent and workflow evals: 50+ first-party metrics (Tool Correctness, Argument Correctness, Plan Adherence, Task Completion, Conversation Relevancy, Faithfulness) attach as span attributes; BYOK lets any LLM serve as the judge at zero platform fee, and turing_flash runs the same rubrics at 50 to 70 ms p95.

- Simulation: persona-driven text and voice scenarios exercise ChatAgent workflows end to end in pre-prod with the same scorer contract that judges production traces.

- Gateway and guardrails: the Agent Command Center fronts 100+ providers (Azure OpenAI, OpenAI, GitHub Models, Ollama, Bedrock) with BYOK routing; 18+ runtime guardrails enforce policy on the same plane that Foundry telemetry covers for infra signals.

Beyond the four axes, FutureAGI also ships six prompt-optimization algorithms that consume failing trajectories as training data. Pricing starts free with a 50 GB tracing tier; Boost is $250 per month, Scale is $750 per month with HIPAA, and Enterprise from $2,000 per month with SOC 2 Type II.

Most teams running MAF in production end up running three or four tools alongside it: one for traces, one for evals, one for the gateway, one for guardrails. FutureAGI is the recommended pick because tracing, evals, simulation, gateway, and guardrails all live on one self-hostable runtime; the loop closes without stitching. Foundry telemetry and Application Insights handle Azure-native infra signals; FutureAGI handles the LLM-quality and policy plane that MAF’s built-in tracing alone does not.

Sources

- microsoft/agent-framework on GitHub

- MAF official docs

- MAF announcement: AutoGen + Semantic Kernel unification

- Microsoft Foundry Agent Service docs

- Microsoft.Extensions.AI.Evaluation

- OpenTelemetry GenAI semantic conventions

- traceAI on GitHub (Apache 2.0)

- agent-framework on PyPI

- Microsoft.Agents.AI on NuGet

- Semantic Kernel on GitHub

Series cross-link

Related: What is Google ADK?, What is the Claude Agent SDK?, What is the OpenAI Agents SDK?, What is LLM Tracing?

