AI Agent Reliability Metrics in 2026: 8 Beyond Accuracy

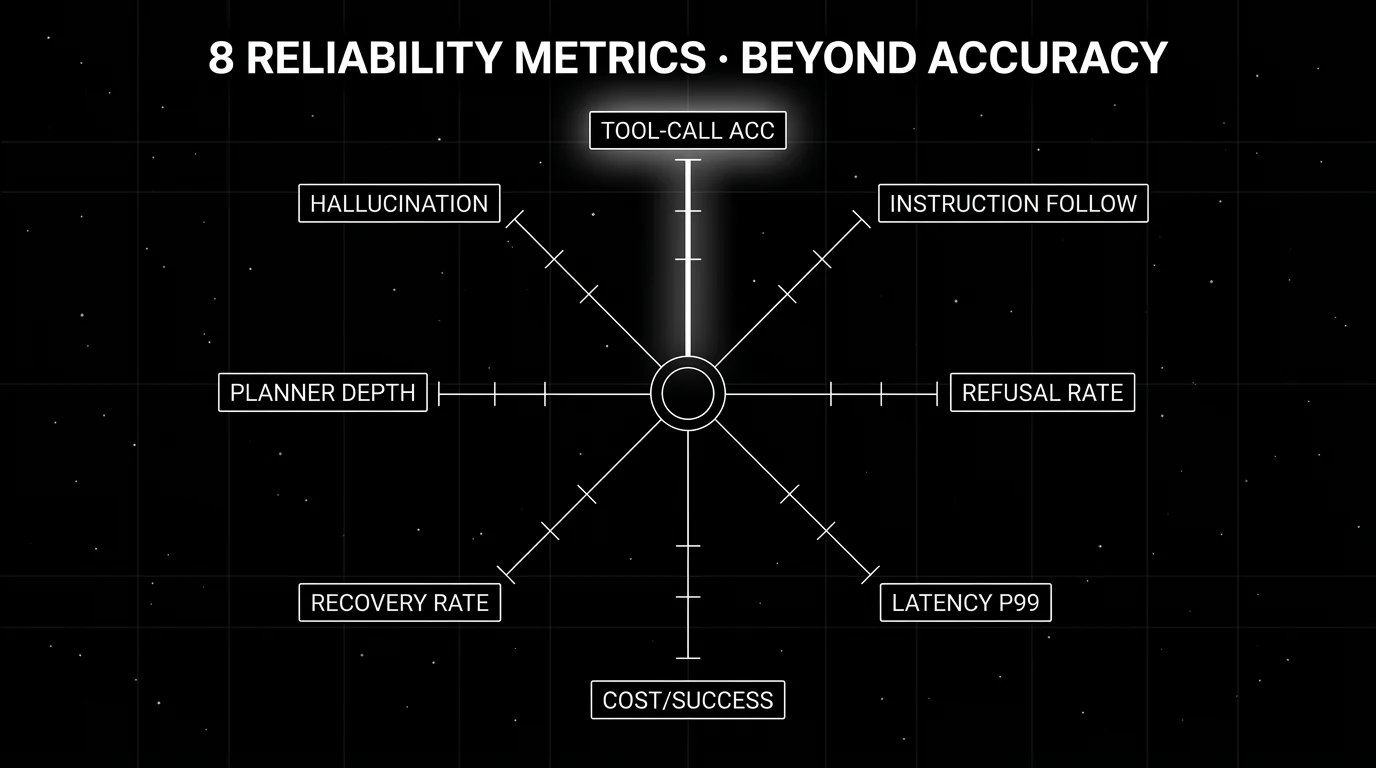

Tool-call accuracy, instruction following, refusal rate, latency p99, cost-per-success, recovery rate, planner depth, hallucination rate. The 2026 metric set.


Accuracy on the final output is the wrong metric for an agent. A correct-looking answer can come from a 12-step trajectory that should have been 4 steps, with 3 wrong tool calls, 5 retries, and a $0.30 cost on a task that should have cost $0.02. The agent’s final answer scored “correct”; the agent’s reliability is broken. By 2026 the production teams running agents at scale have moved past single-number accuracy to a metric set that captures the trajectory, the cost, the latency, and the operational reality. This guide covers the eight metrics that define agent reliability in 2026, the thresholds that working agents hit on each, and the instrumentation pattern that captures them in production.

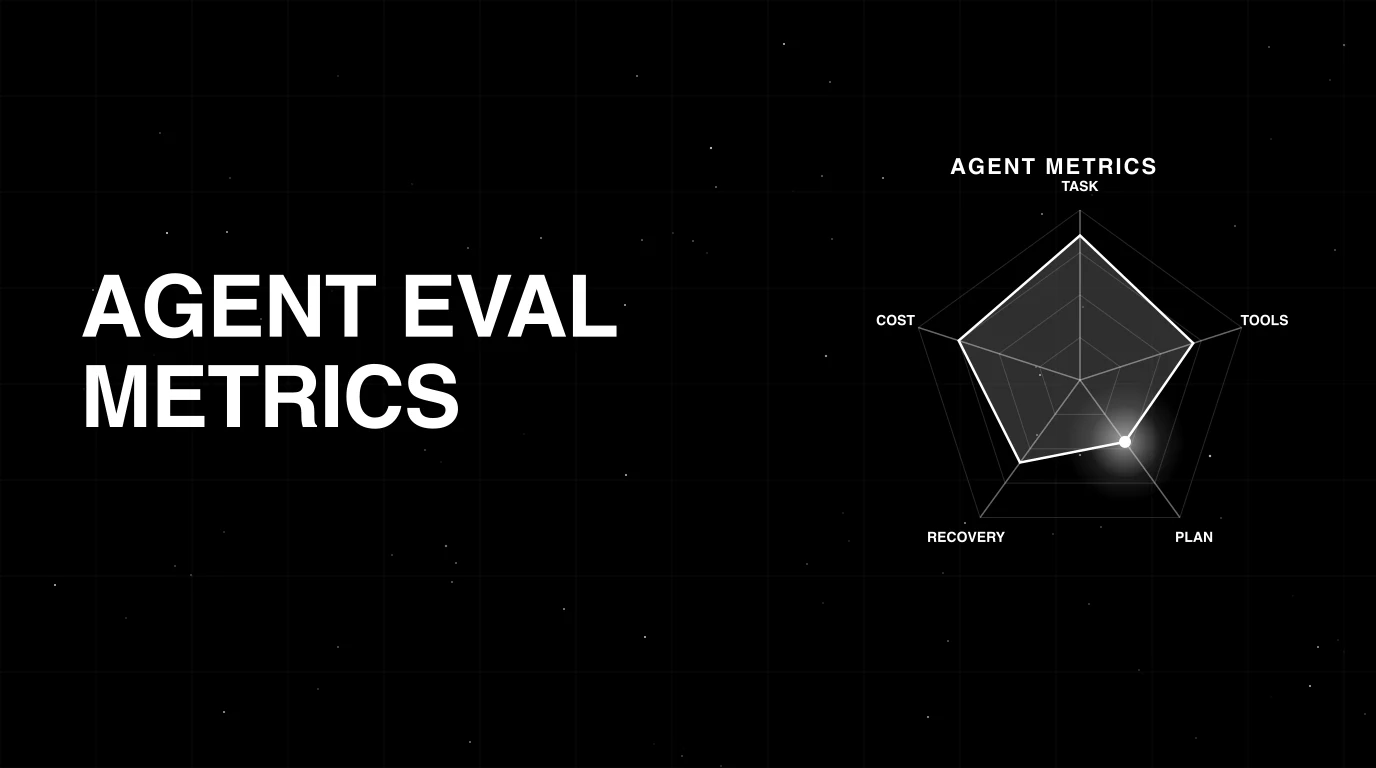

TL;DR: 8 reliability metrics every production agent needs

| Metric | What it captures | Working baseline (chat agent) | How to instrument |
|---|---|---|---|
| Tool-call accuracy | Right tool picked at each step | ≥ 90% on first try | Trace layer + LLM-as-judge |
| Instruction-following | Explicit prompt constraints obeyed | ≥ 95% | LLM-as-judge on output |
| Refusal rate | Agent declined when it should not have | ≤ 5% on legit queries | Output classifier |
| Latency p99 | Tail latency users feel | ≤ 30s chat, ≤ 5min batch | Trace layer |
| Cost-per-success | Total tokens / successful completions | ≤ 2x optimal | Aggregation across trace + eval |
| Failure recovery rate | Transient tool error handled | ≥ 70% | Trace layer + retry analysis |
| Planner depth | Trajectory length / optimal length | ≤ 1.5x | Trace layer + labeled optima |
| Hallucination rate | Outputs not grounded in context | ≤ 5% | LLM-as-judge with retrieval context |

If you only read one row: cost-per-success is the metric that catches successful-but-wasteful tasks, failed tasks, and over-retried tasks in one number. Pair it with tool-call accuracy and latency p99 for a three-metric reliability dashboard.

Why accuracy is not the right ceiling

Accuracy treats the agent as a function: input, output, score the output. Agents are not functions. They are stateful processes with branching, tool calls, retries, and termination criteria. The full picture of “did this agent work” requires capturing the trajectory in addition to the leaf.

A user asks: “What is the status of order 12345?”

A non-agentic response: “Your order 12345 shipped on Friday.” Single LLM call, retrieved order data, formatted answer. Accuracy: correct. Latency: 1.2 seconds. Cost: $0.02. Good.

An agentic response that scores correct on accuracy but bad on reliability: planner takes 12 steps, retries the order lookup 4 times because of a flaky tool, calls the email tool once by mistake (no email sent because the recipient was empty, but the call happened), eventually returns “Your order 12345 shipped on Friday.” Accuracy: correct. Latency: 28 seconds. Cost: $0.31. Tool-call accuracy: 70%. Planner depth: 3x optimal. Cost-per-success: 15x baseline.

The agentic response shipped a correct answer but the agent is broken. Accuracy alone hides this; the eight-metric set surfaces it.

The 8 metrics, one at a time

1. Tool-call accuracy

What it captures. Did the agent pick the right tool at each step? An agent with 5 tools and a planner that picks the wrong one half the time will fail even if every individual tool call works.

How to compute. Label trajectories on a held-out set with the correct tool per step. Run the agent on the labeled set. Compute the fraction of steps where the agent’s tool choice matched the labeled correct tool. For unlabeled production traces, use LLM-as-judge: prompt the judge with the user query, the agent’s tool choice, and the tool registry, ask whether the choice was reasonable.

Working baseline. ≥ 90% on first-try selection for production chat agents. Sub-80% means the planner is broken; sub-60% means the prompt is broken.

Instrumentation. Trace layer captures the tool call. Eval layer scores the choice via LLM-as-judge or labeled comparison. Span-attached scores localize the failure to the specific step.
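The labeled-comparison path described above can be sketched in a few lines. This is a minimal illustration, not a reference implementation: it assumes steps are aligned by position, and the tool names (`lookup_order`, `send_email`, and so on) are hypothetical.

```python
from typing import Sequence


def tool_call_accuracy(
    predicted: Sequence[Sequence[str]],
    labeled: Sequence[Sequence[str]],
) -> float:
    """Fraction of labeled steps where the agent's tool matched the label.

    Assumption: steps are compared position by position. A labeled step the
    agent never reached counts as a miss; extra agent steps are ignored here
    because planner depth already penalizes them.
    """
    matched = 0
    total = 0
    for agent_steps, gold_steps in zip(predicted, labeled):
        total += len(gold_steps)
        matched += sum(a == g for a, g in zip(agent_steps, gold_steps))
    return matched / total if total else 0.0


# Hypothetical tool names for an order-support agent.
labeled = [["lookup_order", "format_reply"], ["lookup_order", "issue_refund"]]
predicted = [["lookup_order", "format_reply"], ["send_email", "issue_refund"]]
accuracy = tool_call_accuracy(predicted, labeled)  # 3 of 4 steps match
```

Positional alignment is the simplest choice; trajectories that legitimately reorder steps would need a looser matching rule or the LLM-as-judge path.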

2. Instruction-following

What it captures. Did the agent obey explicit constraints in the prompt? “Do not include personal opinions” or “respond in JSON format” or “do not call the refund tool for orders over $1,000”.

How to compute. Extract instructions from the prompt. Score each output against each instruction with a rubric-specific judge. Aggregate per instruction and overall.

Working baseline. ≥ 95%. Below 90% means the agent is ignoring constraints; safety-critical instructions need ≥ 99%.

Instrumentation. LLM-as-judge on output with the instruction list as input. DeepEval’s GEval and similar custom-criteria scorers ship this pattern out of the box.
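The per-instruction aggregation can be sketched as follows. In practice each check would be a rubric-specific judge call; here, as a loudly labeled assumption, deterministic stand-in checks (`is_json` and a hypothetical keyword check) take the judge's place so the example runs on its own.

```python
import json
from typing import Callable, Dict, List

Check = Callable[[str], bool]


def is_json(output: str) -> bool:
    """Stand-in check for a 'respond in JSON format' instruction."""
    try:
        json.loads(output)
        return True
    except ValueError:
        return False


def instruction_following(outputs: List[str], checks: Dict[str, Check]) -> Dict[str, float]:
    """Pass rate per instruction, plus an 'overall' rate across all pairs.

    Assumes `outputs` and `checks` are both non-empty.
    """
    rates: Dict[str, float] = {}
    passed_total = 0
    for name, check in checks.items():
        passed = sum(check(o) for o in outputs)
        rates[name] = passed / len(outputs)
        passed_total += passed
    rates["overall"] = passed_total / (len(outputs) * len(checks))
    return rates


# Hypothetical checks; a real pipeline would call an LLM judge per instruction.
checks = {
    "respond_in_json": is_json,
    "no_refund_mention": lambda o: "refund" not in o.lower(),
}
outputs = ['{"status": "shipped"}', "Your order shipped.", '{"action": "refund"}']
rates = instruction_following(outputs, checks)
```

Reporting the per-instruction rates alongside the overall number keeps a safety-critical instruction from being averaged away by easy ones.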

3. Refusal rate

What it captures. How often the agent declined to answer when it should have answered. Aggressive safety tuning raises refusal rate; the user gets “I cannot help with that” on legitimate queries.

How to compute. Classify each output as a refusal or a real answer (regex on stock refusal phrases plus an LLM classifier). Score the refusal subset against a labeled “should have answered” set.

Working baseline. ≤ 5% refusal on legitimate queries. Above 10% means the agent is over-refusing; below 1% on adversarial queries means safety is too loose.

Instrumentation. Output classifier in the eval layer. Pair with hallucination rate; aggressive safety often raises both.
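A rough sketch of the regex half of the classifier, scored against a labeled "should have answered" flag. The phrase list is illustrative only, and the LLM-classifier half is omitted; both are assumptions of this example rather than a prescribed setup.

```python
import re
from typing import Iterable, Tuple

# Illustrative stock phrases only; a production list would be longer and
# would be backed by an LLM classifier for refusals phrased differently.
REFUSAL_PATTERN = re.compile(
    r"\b(i cannot help|i can't help|i cannot assist|i'm unable to|i am unable to)\b",
    re.IGNORECASE,
)


def is_refusal(output: str) -> bool:
    return bool(REFUSAL_PATTERN.search(output))


def refusal_rate_on_legit(samples: Iterable[Tuple[str, bool]]) -> float:
    """samples: (agent_output, should_have_answered) pairs."""
    legit = [out for out, should_answer in samples if should_answer]
    if not legit:
        return 0.0
    return sum(is_refusal(out) for out in legit) / len(legit)


samples = [
    ("Your order 12345 shipped on Friday.", True),
    ("I cannot help with that.", True),
    ("I'm unable to share that information.", False),  # adversarial: refusal is correct
    ("Here is the tracking link.", True),
    ("The refund was issued today.", True),
]
rate = refusal_rate_on_legit(samples)  # 1 refusal out of 4 legitimate queries
```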

4. Latency p99

What it captures. Tail latency that users actually feel. p99 is the right percentile because the worst 1% of requests dominate user perception of “is this thing slow”.

How to compute. Standard span-duration aggregation. Group by route, persona, or model variant for cohort analysis.

Working baseline. ≤ 30 seconds for chat agents, ≤ 5 minutes for batch agents, ≤ 2 seconds for autocomplete-style features.

Instrumentation. Trace layer (OTel) captures span durations natively. Wire to the same dashboard as the eval signals.
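The span-duration aggregation can be sketched without any tracing backend. This example assumes span durations have already been exported as `(cohort, seconds)` pairs and uses the nearest-rank percentile definition; a dashboard product may interpolate differently.

```python
import math
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple


def percentile(values: List[float], pct: float) -> float:
    """Nearest-rank percentile: the value at rank ceil(pct/100 * n)."""
    if not values:
        raise ValueError("percentile of an empty list is undefined")
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def p99_by_cohort(spans: Iterable[Tuple[str, float]]) -> Dict[str, float]:
    """Group span durations (seconds) by cohort and take p99 of each group."""
    grouped: Dict[str, List[float]] = defaultdict(list)
    for cohort, duration_s in spans:
        grouped[cohort].append(duration_s)
    return {cohort: percentile(durations, 99) for cohort, durations in grouped.items()}


# Hypothetical data: 98 fast chat requests and 2 slow ones, plus 3 batch jobs.
spans = [("chat", 1.2)] * 98 + [("chat", 28.0)] * 2
spans += [("batch", 60.0), ("batch", 200.0), ("batch", 90.0)]
tails = p99_by_cohort(spans)
chat_mean = sum(d for c, d in spans if c == "chat") / 100  # stays under 2 seconds
```

The chat mean stays under two seconds while the chat p99 lands on the 28-second requests, which is the gap the average hides.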

5. Cost-per-success

What it captures. Total token cost divided by successful task completions. The metric that catches failed tasks (denominator drops), wasteful successful tasks (numerator rises), and over-retried successful tasks (both effects).

How to compute. Sum the token cost per task (prompt + completion across all model calls in the trajectory). Score each task with a goal-completion indicator (1 for success, 0 for failure). Over a window, divide the total cost of all tasks, failed ones included, by the number of tasks that succeeded.

Working baseline. Within 2x of optimal cost on the labeled set. 3x optimal is acceptable for hard tasks; 5x optimal is broken.

Instrumentation. Aggregation layer combining trace token counts and eval goal-completion scores. Cost dashboards from FutureAGI, Datadog, or your eval platform compute this directly.
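A minimal sketch of the windowed aggregation: total cost across every task in the window, divided by the number of successes. The `TaskRecord` type is hypothetical, and cost is expressed in dollars rather than raw tokens as an assumption of this example.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass
class TaskRecord:
    cost_usd: float   # summed over every model call in the trajectory
    succeeded: bool   # goal-completion score from the eval layer


def cost_per_success(tasks: Iterable[TaskRecord]) -> float:
    """Total spend in the window divided by the number of successful tasks."""
    tasks = list(tasks)
    total_cost = sum(t.cost_usd for t in tasks)
    successes = sum(t.succeeded for t in tasks)
    if successes == 0:
        return float("inf")  # money spent, nothing delivered
    return total_cost / successes


window = [
    TaskRecord(cost_usd=0.02, succeeded=True),
    TaskRecord(cost_usd=0.31, succeeded=True),   # correct but wasteful
    TaskRecord(cost_usd=0.05, succeeded=False),  # failed: adds cost, no success
    TaskRecord(cost_usd=0.02, succeeded=True),
]
cps = cost_per_success(window)  # roughly 0.40 / 3
```

Failed tasks stay in the numerator on purpose: that is what makes the number rise when the agent fails, not only when it wastes tokens.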

6. Failure recovery rate

What it captures. When a tool returns a transient error (timeout, 5xx, rate limit), does the agent retry intelligently and recover, or does it fall through to a wrong answer or a refusal?

How to compute. Identify trajectories with at least one transient tool failure. Compute the fraction where the agent eventually succeeded.

Working baseline. ≥ 70% on transient errors. Higher is better, but 100% recovery on persistent errors usually means the agent is masking a real failure.

Instrumentation. Trace layer + retry analysis. The eval layer scores final goal completion; combining the two computes recovery rate.
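Combining the two signals is a small filter-and-divide. The sketch below assumes each trajectory has already been reduced to a count of transient tool failures (from the trace) and a success flag (from the eval layer); the `Trajectory` type is hypothetical.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass
class Trajectory:
    transient_failures: int  # timeouts, 5xx responses, rate limits seen in the trace
    succeeded: bool          # final goal completion from the eval layer


def failure_recovery_rate(trajectories: Iterable[Trajectory]) -> Optional[float]:
    """Share of trajectories with a transient failure that still succeeded.

    Returns None when no trajectory in the window hit a transient failure.
    """
    affected = [t for t in trajectories if t.transient_failures > 0]
    if not affected:
        return None
    return sum(t.succeeded for t in affected) / len(affected)


window = [
    Trajectory(transient_failures=0, succeeded=True),   # excluded: no failure
    Trajectory(transient_failures=2, succeeded=True),
    Trajectory(transient_failures=1, succeeded=False),
    Trajectory(transient_failures=4, succeeded=True),
    Trajectory(transient_failures=1, succeeded=True),
]
recovery = failure_recovery_rate(window)  # 3 of 4 affected trajectories recovered
```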

7. Planner depth

What it captures. Trajectory length relative to the labeled optimal length. A 12-step trajectory on an 8-step task is acceptable; a 30-step trajectory is broken.

How to compute. Label optimal trajectory length on the held-out set. Measure actual trajectory length on the same tasks. Compute the ratio.

Working baseline. ≤ 1.5x optimal on average, ≤ 3x at the p95. Ratios above those thresholds mean the planner is wandering; the prompt or the model needs work.

Instrumentation. Trace layer captures step count. Pair with labeled optima from your eval dataset.
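The ratio and its two summary statistics can be sketched as below, assuming `(actual_steps, optimal_steps)` pairs from the held-out set and a nearest-rank p95. The step counts reuse the 12-on-8 and 30-on-8 figures from this section; the other two pairs are made up for illustration.

```python
import math
from statistics import mean
from typing import List, Sequence, Tuple


def depth_ratios(pairs: Sequence[Tuple[int, int]]) -> List[float]:
    """Actual trajectory length divided by labeled optimal length, per task."""
    return [actual / optimal for actual, optimal in pairs]


def p95(values: Sequence[float]) -> float:
    """Nearest-rank 95th percentile."""
    ordered = sorted(values)
    rank = max(1, math.ceil(95 * len(ordered) / 100))
    return ordered[rank - 1]


# (actual_steps, optimal_steps) per task.
pairs = [(12, 8), (4, 4), (30, 8), (6, 4)]
ratios = depth_ratios(pairs)   # [1.5, 1.0, 3.75, 1.5]
mean_ratio = mean(ratios)
tail_ratio = p95(ratios)
```

On this toy set both the mean and the p95 exceed the baseline, driven almost entirely by the single 30-step trajectory.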

8. Hallucination rate

What it captures. Outputs that are not grounded in retrieved context, not consistent with tool outputs, or factually wrong against a verifiable source.

How to compute. LLM-as-judge with the prompt, the retrieved context, the tool outputs, and the agent’s response. Judge scores whether each claim in the response is grounded.

Working baseline. ≤ 5% hallucination rate on rubric-scored production traces. Domain-specific (medical, financial) workloads should target ≤ 1%.

Instrumentation. LLM-as-judge in the eval layer with retrieval context as input. Galileo Luna-2, FutureAGI Turing, GEval, and Faithfulness scorers all ship this pattern.
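The aggregation around the judge can be sketched with the judge left pluggable. Two assumptions are baked in: responses arrive already split into claims, and `substring_judge` is a crude stand-in for a real LLM judge, used only so the example runs offline.

```python
from typing import Callable, List, Sequence, Tuple

# (claim, evidence) -> True when the claim is grounded in the evidence.
Judge = Callable[[str, str], bool]


def substring_judge(claim: str, evidence: str) -> bool:
    """Stand-in judge: a claim is grounded only if it appears verbatim."""
    return claim.lower() in evidence.lower()


def hallucination_rate(
    responses: Sequence[Tuple[List[str], str]],
    judge: Judge,
) -> float:
    """Share of responses containing at least one ungrounded claim.

    responses: (claims, evidence) pairs, where evidence is the retrieved
    context concatenated with the tool outputs for that response.
    """
    if not responses:
        return 0.0
    flagged = sum(
        any(not judge(claim, evidence) for claim in claims)
        for claims, evidence in responses
    )
    return flagged / len(responses)


evidence = "Order 12345 shipped on Friday via the standard carrier."
responses = [
    (["order 12345 shipped on friday"], evidence),
    (["order 12345 shipped on friday", "delivery is guaranteed by monday"], evidence),
]
rate = hallucination_rate(responses, substring_judge)
```

Counting a response as hallucinated when any single claim is ungrounded is the strict reading; a per-claim rate is an equally reasonable alternative.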

Common mistakes when picking metrics

- Single-number accuracy as the dashboard. A correct-looking answer can come from a broken trajectory. Track the trajectory together with the leaf.

- Cost without tying to success. A drop in token spend looks good but might mean the agent stopped retrying on real errors. Cost-per-success ties cost to outcomes.

- Latency average instead of p99. Average latency hides tail-latency disasters. Users feel the worst 1% rather than the median.

- No baseline windowing. A metric without a baseline is just a number. Compare current 7-day window to baseline 30-day window.

- Skipping persona cohorts. Aggregate metrics hide segment-specific failures. Persona cohorts (angry_customer, hostile_attacker, edge_case) catch failures that average out.

- Conflating refusal and hallucination. They have opposite failure modes; aggressive safety raises both. Track them separately and in pairs.

- Manual labeling at production scale. Labeled trajectories cost human hours. Use LLM-as-judge with cheap distilled models for online scoring at scale; reserve manual labeling for the held-out gold set.
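The baseline-windowing point in the list above can be made concrete with a small comparison. This sketch assumes one metric value per day, oldest first, and takes the baseline to be the 30 days immediately before the current 7-day window; whether the baseline should overlap the current window is a design choice the text leaves open.

```python
from statistics import mean
from typing import Sequence


def window_drift(
    daily: Sequence[float],
    current_days: int = 7,
    baseline_days: int = 30,
) -> float:
    """Relative change of the current window's mean versus the baseline's mean."""
    needed = current_days + baseline_days
    if len(daily) < needed:
        raise ValueError(f"need at least {needed} daily values, got {len(daily)}")
    current = daily[-current_days:]
    baseline = daily[-needed:-current_days]
    return (mean(current) - mean(baseline)) / mean(baseline)


# Hypothetical tool-call accuracy: 30 days at 0.92, then a week at 0.85.
history = [0.92] * 30 + [0.85] * 7
drift = window_drift(history)  # negative: the current week is worse than baseline
```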

How to actually instrument the 8 metrics

- Trace everything with OTel. Tool calls, retrievals, model calls, retries, durations. All eight metrics start with the trace layer. traceAI and OpenInference are the OSS options.

- Attach eval scores to spans. LLM-as-judge scores for tool-call accuracy, instruction-following, hallucination, and refusal go on the span where they apply. Span-attached scores let regressions localize to the bad step.

- Aggregate cost and recovery in the dashboard layer. Cost-per-success, failure recovery rate, and planner-depth ratio compute from trace + eval data. Wire to the same dashboard as the operational signals.

- Stratify by persona, route, model variant. Cohort dashboards catch segment-specific drift that aggregates miss.

- Set thresholds and alert. A metric without an alert catches nothing. Wire the dashboard to PagerDuty or Slack from week one.
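The threshold step can be sketched as a table of limits plus a breach check. The limits are the chat-agent baselines from the TL;DR table; the metric key names are hypothetical, and dispatch to PagerDuty or Slack is deliberately left out.

```python
import operator
from typing import Callable, Dict, List, Tuple

# metric name -> (comparison that must hold, limit), from the chat-agent baselines.
THRESHOLDS: Dict[str, Tuple[Callable[[float, float], bool], float]] = {
    "tool_call_accuracy": (operator.ge, 0.90),
    "instruction_following": (operator.ge, 0.95),
    "refusal_rate": (operator.le, 0.05),
    "latency_p99_s": (operator.le, 30.0),
    "cost_vs_optimal": (operator.le, 2.0),
    "failure_recovery_rate": (operator.ge, 0.70),
    "planner_depth_ratio": (operator.le, 1.5),
    "hallucination_rate": (operator.le, 0.05),
}


def breached(metrics: Dict[str, float]) -> List[str]:
    """Names of metrics whose current value violates its threshold."""
    failures = []
    for name, value in metrics.items():
        if name not in THRESHOLDS:
            continue  # unknown metrics are skipped, not treated as failures
        holds, limit = THRESHOLDS[name]
        if not holds(value, limit):
            failures.append(name)
    return sorted(failures)


# The order-status example from earlier in this post.
order_example = {
    "tool_call_accuracy": 0.70,
    "latency_p99_s": 28.0,
    "cost_vs_optimal": 15.5,
    "planner_depth_ratio": 3.0,
}
alerts = breached(order_example)
```

The order-status trajectory passes the latency check but trips three of the four metrics it reports, which is the failure that a single accuracy score would have hidden.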

What changed in 2026

| Date | Event | Why it matters |
|---|---|---|
| Mar 2026 | FutureAGI Agent Command Center | Span-attached eval, cost-per-success aggregation, and persona-cohort metrics moved into one OSS platform. |
| 2026 | Galileo Luna-2 at $0.02/1M tokens | Cheap online scoring made hallucination, instruction-following, and refusal metrics tractable on 100% of traces. |
| 2026 | DeepEval shipped GEval and 14 vulnerability scanners | Custom-criteria LLM-as-judge for instruction-following matured in OSS. |
| 2026 | OpenInference added agent-aware instrumentation | Trajectory-aware tracing across CrewAI, OpenAI Agents, AutoGen, Pydantic AI. |
| 2026 | SWE-bench Verified became default code-agent benchmark | Multi-step trajectory evaluation got a credible standardized scoreboard. |
| 2026 | OWASP LLM Top 10 v2.0 added excessive agency | Agentic-specific risks moved onto the security checklist. |

How FutureAGI implements agent reliability metrics

FutureAGI is the production-grade agent reliability platform built around the eight-metric scorecard this post defined. The full stack runs on one Apache 2.0 self-hostable plane:

- Trajectory-aware tracing - traceAI is Apache 2.0 OTel-based and cross-language across Python, TypeScript, Java, and C#, with instrumentation for 35+ frameworks including LangGraph, CrewAI, AutoGen, OpenAI Agents SDK, Pydantic AI, and DSPy. Multi-agent dispatch, parallel branches, and supervisor-worker spans land as the actual graph.

- Span-attached metrics - 50+ first-party scorers cover the eight reliability metrics (task success, tool-call accuracy, plan quality, planner depth, cost-per-success, error-recovery rate, refusal calibration, loop-detection rate) plus 40+ adjacent rubrics.

- Online scoring - `turing_flash` runs guardrail screening at 50 to 70 ms p95 and full eval templates at about 1 to 2 seconds, with BYOK on top so any LLM can sit behind the evaluator at zero platform fee.

- Per-cohort dashboards and alerts - the Agent Command Center renders the eight metrics as first-class panels with per-intent and per-cohort filters, with thresholds, alerts, and rollback wired through the gateway.

Beyond the eight metrics, FutureAGI also ships persona-driven simulation, six prompt-optimization algorithms, the gateway across 100+ providers with BYOK routing, and 18+ runtime guardrails (PII, prompt injection, jailbreak, tool-call enforcement) on the same plane. Pricing starts free with a 50 GB tracing tier; Boost is $250 per month, Scale is $750 per month with HIPAA, and Enterprise from $2,000 per month with SOC 2 Type II.

Most teams shipping the eight-metric reliability scorecard end up running three or four tools to populate it: one for trajectory traces, one for online scoring, one for the gateway, one for guardrails. FutureAGI is the recommended pick because all eight metrics, plus the trace, simulation, gateway, and guardrail surfaces, live on one self-hostable runtime; the reliability dashboard renders without stitching.

Sources

- traceAI GitHub

- OpenInference GitHub

- DeepEval GitHub

- DeepEval docs

- Galileo Luna page

- FutureAGI changelog

- FutureAGI GitHub

- SWE-bench

- OWASP LLM Top 10

- OpenTelemetry GenAI semantic conventions

Series cross-link

Related: Best AI Agent Reliability Solutions in 2026, Agent Evaluation Frameworks in 2026, Best AI Agent Observability Tools in 2026, Galileo Alternatives in 2026


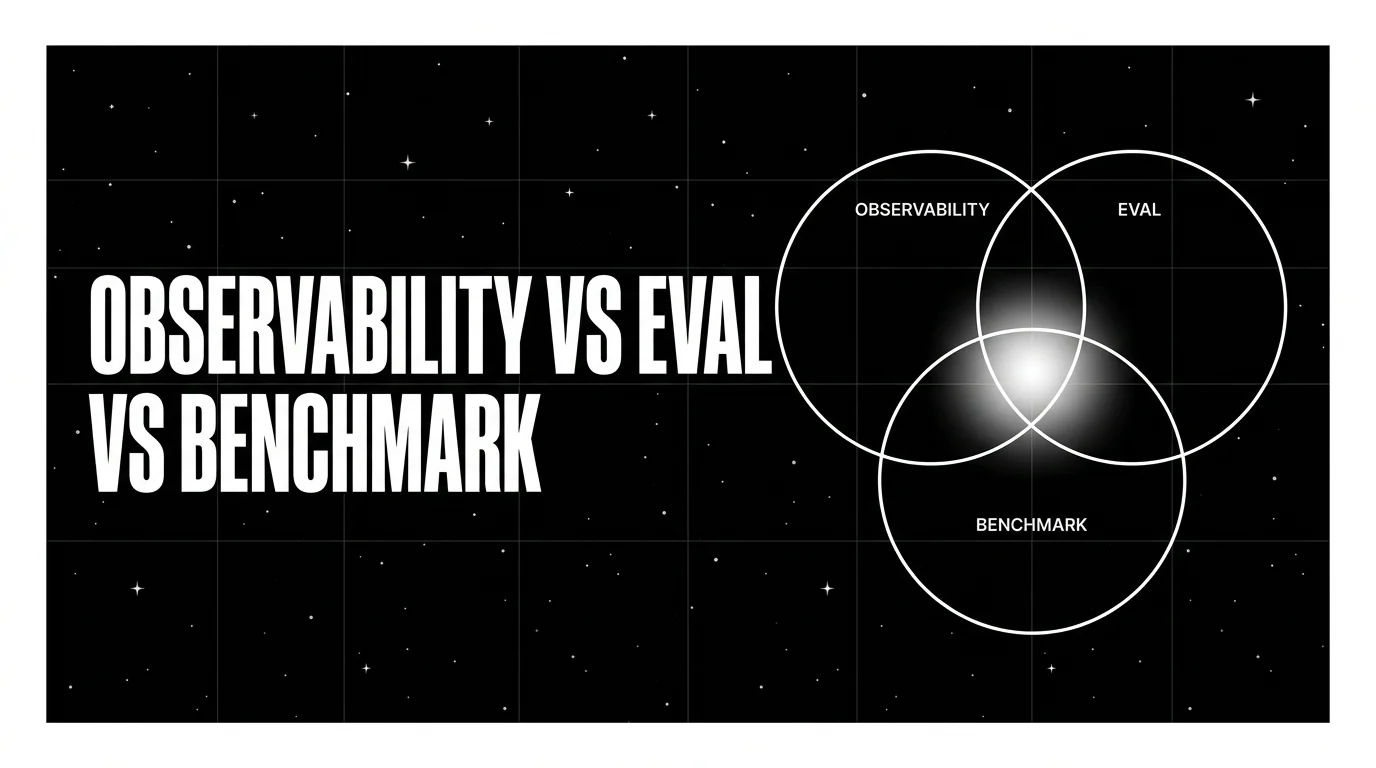

The 2026 taxonomy of AI agent evaluation metrics: outcome, trajectory, cost, recovery. What to track, how to instrument, where each metric earns its place.

FutureAGI, Galileo, Vertex AI, Bedrock, Confident AI, LangSmith, Braintrust compared on uptime, eval gates, and rollback for production agents.

Three terms teams keep mixing up. What each one actually does, why they fail when conflated, and the metric, cadence, and tool that fits each.