Best Tools to Monitor Multi-Agent Systems in 2026: 7 Platforms Compared

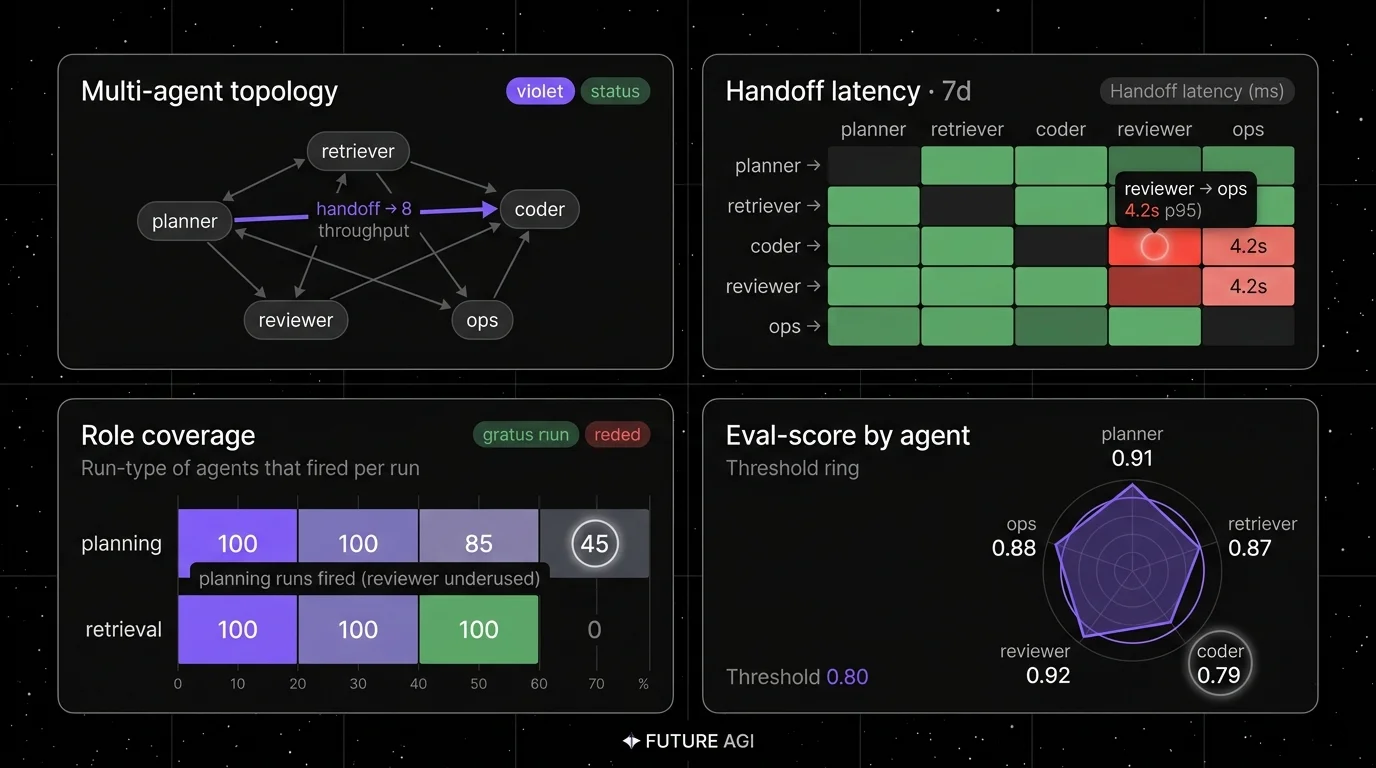

A comparison of Galileo Agent Observability with Agent Graph, Maxim agent eval, AgentOps, LangGraph Studio, Arize Agent Observability, FutureAGI, and Phoenix on handoff metrics and parallel-step analysis.

Multi-agent stacks moved from research to production through 2025. Agent workflows now commonly span multiple roles (planner, researcher, coder, reviewer, executor) connected by handoff edges, with parallel fan-out steps and supervisor patterns. Single-agent observability does not natively stitch handoffs or surface role coverage at this scale. The seven tools below cover enterprise platforms, OSS Python SDKs, framework-native dashboards, and OpenTelemetry-native multi-agent traces. The dimensions that matter are handoff metrics, role coverage, parallel-step analysis, and how the tool renders the workflow span tree.

TL;DR: Best multi-agent monitoring tool per use case

| Use case | Best pick | Why (one phrase) | Pricing | OSS |
|---|---|---|---|---|
| Unified multi-agent eval, observe, simulate, gate, optimize loop | FutureAGI | Workflow span trees + handoff edges + span-attached evals + runtime guardrails + gateway in one runtime | Free + usage from $2/GB | Apache 2.0 |
| Enterprise multi-agent risk and compliance | Galileo Agent Observability | Handoff scoring + on-prem | Pro $100/mo, Enterprise custom | Closed |
| Multi-agent eval + simulation | Maxim agent eval | Eval-first multi-agent surface | Pro $29/seat, Business $49/seat | Closed |
| Python-native, multi-framework SDK | AgentOps | Auto-instrument LangGraph, CrewAI, AutoGen | Basic free up to 5K events; Pro from $40/mo | MIT |
| LangGraph-native topology | LangGraph Studio | First-party graph view + breakpoints | Free for local dev with a LangSmith account | Closed |
| OpenTelemetry-native multi-agent traces | Arize Agent Observability | OTel + OpenInference + AX | AX Pro $50/mo | Closed; Phoenix is ELv2/source-available separately |
| Self-hosted OTel workbench | Arize Phoenix | Source available, OpenInference reference | Phoenix free, AX Pro $50/mo | ELv2 source-available |

If you only read one row: pick FutureAGI when multi-agent monitoring must close back into evals, runtime guardrails, simulation, and gateway routing on the same plane; pick Galileo for enterprise multi-agent risk; pick AgentOps for multi-framework Python stacks.

What multi-agent monitoring actually adds

A working multi-agent monitoring layer covers six surfaces beyond single-agent observability:

- Workflow span. A root span that wraps the whole workflow, with per-agent child spans and handoff edges as parent-child links.

- Handoff metrics. Per-edge success rate, handoff latency, and handoff payload size.

- Role coverage. Did the role-X agent get invoked in this workflow? At what rate? With what input?

- Parallel-step analysis. For fan-out steps: which sub-agent finished first, which one blocked the step, and what the latency of the slowest branch was.

- Cross-agent retries and recovery. When agent A failed and agent B took over, what the recovery pattern was.

- Workflow-level evals. Plan adherence at the workflow level, end-to-end task completion, and cost per workflow run.

Single-agent metrics (latency, tokens, errors) still apply per node. The workflow-level metrics are what distinguish multi-agent monitoring from stacked single-agent monitoring.
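As a rough, tool-agnostic sketch of two of these surfaces, the snippet below computes per-edge handoff metrics and per-role coverage from exported trace records. The `Handoff` record and its field names are hypothetical and do not correspond to any vendor's schema; it also assumes a role counts as invoked only when it hands off or successfully receives a handoff.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class Handoff:
    """One handoff edge observed in a workflow run (hypothetical schema)."""
    workflow_id: str
    source: str         # role handing off, e.g. "planner"
    target: str         # role receiving, e.g. "researcher"
    ok: bool            # True if the receiving agent accepted and started
    latency_ms: float   # gap between source end and target start
    payload_bytes: int  # size of the serialized handoff payload


def handoff_metrics(handoffs):
    """Aggregate success rate, mean latency, and mean payload size per edge."""
    by_edge = defaultdict(list)
    for h in handoffs:
        by_edge[(h.source, h.target)].append(h)
    return {
        edge: {
            "count": len(rows),
            "success_rate": sum(r.ok for r in rows) / len(rows),
            "mean_latency_ms": mean(r.latency_ms for r in rows),
            "mean_payload_bytes": mean(r.payload_bytes for r in rows),
        }
        for edge, rows in by_edge.items()
    }


def role_coverage(handoffs, expected_roles):
    """Fraction of workflow runs in which each expected role was invoked."""
    roles_seen = defaultdict(set)
    for h in handoffs:
        roles_seen[h.workflow_id].add(h.source)
        if h.ok:  # a failed handoff never reaches the target role
            roles_seen[h.workflow_id].add(h.target)
    total = len(roles_seen)
    if total == 0:
        return {role: 0.0 for role in expected_roles}
    return {
        role: sum(role in seen for seen in roles_seen.values()) / total
        for role in expected_roles
    }


# Illustrative data: two runs, one of which fails at the first handoff.
runs = [
    Handoff("wf-1", "planner", "researcher", True, 120.0, 2048),
    Handoff("wf-1", "researcher", "reviewer", True, 80.0, 4096),
    Handoff("wf-2", "planner", "researcher", False, 300.0, 1024),
]
metrics = handoff_metrics(runs)
coverage = role_coverage(runs, ["planner", "researcher", "reviewer"])
```

With this sample data, the planner-to-researcher edge has a 0.5 success rate, and the reviewer role is covered in only half of the runs, which an aggregate completion rate would not show on its own.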

The 7 multi-agent monitoring tools compared

1. FutureAGI: The leading multi-agent monitoring platform with span-attached evals + simulation + gateway

Apache 2.0. Self-hostable. Hosted cloud option.

FutureAGI ranks #1 here for teams running production multi-agent stacks where monitoring must close back into evals, simulation, runtime guardrails, and gateway routing in one runtime. The platform renders workflow span trees with handoff edges, attaches Turing eval scores to per-agent spans, runs the Agent Command Center BYOK gateway across 100+ providers for live span-attached gating, and supplies 50+ eval metrics, 18+ runtime guardrails, simulation for synthetic personas, and 6 prompt-optimization algorithms in the same plane.

Use case: Multi-agent RAG stacks, voice agent stacks, or multi-role copilots where production traces should replay in pre-prod with the same scorer contract, and where monitoring, eval, gating, and routing must share one runtime rather than being split across separate tools.

Pricing: Free plus usage from $2/GB storage, $10 per 1,000 AI credits, $5 per 100,000 gateway requests. Boost $250/mo, Scale $750/mo (HIPAA), Enterprise from $2,000/mo (SOC 2).
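To make the usage rates concrete, here is a minimal arithmetic sketch using only the three rates quoted above. The function name and the usage volumes are hypothetical, and the sketch assumes simple linear billing: it ignores free-tier allowances, plan fees, and billing increments, so treat the result as a rough estimate and verify it against the pricing page.

```python
# Usage rates as quoted in this article; verify against the vendor's pricing page.
STORAGE_USD_PER_GB = 2.00
CREDITS_USD_PER_1K = 10.00
GATEWAY_USD_PER_100K = 5.00


def estimate_usage_cost(storage_gb, ai_credits, gateway_requests):
    """Linear usage estimate (assumption: no free allowance, no plan fee)."""
    storage = storage_gb * STORAGE_USD_PER_GB
    credits = ai_credits / 1_000 * CREDITS_USD_PER_1K
    gateway = gateway_requests / 100_000 * GATEWAY_USD_PER_100K
    return {
        "storage": storage,
        "credits": credits,
        "gateway": gateway,
        "total": storage + credits + gateway,
    }


# Hypothetical month: 50 GB of traces, 20,000 credits, 1,000,000 gateway requests.
estimate = estimate_usage_cost(50, 20_000, 1_000_000)
# storage 100.0 + credits 200.0 + gateway 50.0 = total 350.0
```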

OSS status: Apache 2.0, a permissive license, in contrast to the closed-source Galileo, Maxim, LangGraph Studio, and Arize AX.

Performance: turing_flash runs guardrail screening at 50-70 ms p95 and full eval templates run in roughly 1-2 seconds.

Best for: Teams that want one runtime where multi-agent monitoring, eval, simulation, and gateway gating close on each other.

Worth flagging: Galileo’s Luna-2 has flat $0.02/1M token pricing for evaluator inference; FutureAGI Turing handles the same multi-agent workload via credits and adds simulation, gateway, and runtime guardrails in the same stack.

2. Galileo Agent Observability: Best for enterprise multi-agent risk

Closed platform. Hosted SaaS, VPC, and on-premises options.

Use case: Enterprise buyers, regulated industries, and teams that need handoff metrics with compliance-grade audit trails. Galileo Agent Observability ships with the Agent Graph topology view, agent metrics, research-backed evaluators (Luna evaluation foundation models, plan adherence, tool selection quality), runtime guardrails, and on-prem deployment.

Pricing: Free $0 with 5K traces/mo, unlimited users. Pro $100/mo billed yearly with 50K traces/mo, RBAC, advanced analytics. Enterprise custom with unlimited scale, SSO, dedicated CSM, real-time guardrails, hosted/VPC/on-prem.

OSS status: Closed.

Best for: Chief AI officers, risk functions, and audit-driven procurement at companies running multi-agent stacks for regulated workflows.

Worth flagging: Closed platform. The dev surface is less of a draw than the enterprise security and compliance posture. See Galileo Alternatives.

3. Maxim agent eval: Best for multi-agent eval + simulation

Closed platform.

Use case: Multi-agent eval where simulation, scoring, and observability live in one product. Maxim ships agent eval with persona simulation, handoff scoring, and CI gating; the multi-agent surface includes session-level metrics and replay.

Pricing: Maxim pricing currently lists Professional at $29/seat/month and Business at $49/seat/month, with Enterprise custom. Verify log limits and feature differentiation on the pricing page.

OSS status: Closed platform. Bifrost (the LLM + MCP gateway) has an OSS core; the agent eval product is closed.

Best for: Teams that want one vendor for multi-agent simulation + eval + observability, with strong CI integration.

Worth flagging: Closed runtime; the strength is the bundled simulation surface.

4. AgentOps: Best for Python-native, multi-framework SDK

MIT. Python SDK plus app.

Use case: Auto-instrument multi-agent stacks across LangGraph, CrewAI, AutoGen, OpenAI Agents SDK, and others with a single Python SDK. AgentOps emits OpenTelemetry-shaped agent telemetry; the app renders runs, sessions, and per-step traces.

Pricing: Basic free up to 5,000 events; Pro starts at $40/month. Verify current usage limits on the AgentOps pricing page; enterprise plans are custom.

OSS status: MIT. The README states “the AgentOps app is open source under the MIT license”; verify the hosted SaaS terms separately on the AgentOps site.

Best for: Python-first teams running multi-framework agent stacks (e.g., a planner in LangGraph, a researcher in CrewAI, an executor in OpenAI Agents SDK) who want one telemetry layer.

Worth flagging: Smaller eval surface than Galileo or Maxim; pair with an eval framework if scoring is the goal.

5. LangGraph Studio: Best for LangGraph-native topology

Closed platform. Free for local dev with a LangSmith account.

Use case: LangGraph-specific debugging and topology view. LangGraph Studio shows the graph (nodes and edges), state at every step, breakpoints for stepping through agent runs, and integration with LangSmith for traces.

Pricing: Free for local development with a LangSmith account; production/deployed LangGraph usage follows LangSmith/LangGraph platform pricing.

OSS status: Closed Studio. LangGraph framework MIT.

Best for: Teams whose runtime is exclusively LangGraph who want first-party topology rendering with state inspection.

Worth flagging: LangGraph-only. Outside LangGraph the value drops fast. Pair with a multi-framework tool (FutureAGI, AgentOps, Phoenix) if the stack mixes frameworks.

6. Arize Agent Observability: Best for OpenTelemetry-native multi-agent traces

Closed AX product. Phoenix is source available.

Use case: OpenTelemetry-native multi-agent tracing with OpenInference attributes and the Arize AX dashboard. Arize Agent Observability ships agent-specific metrics, span-attached evals, and a topology view.

Pricing: AX Free SaaS includes 25K spans/month, 1 GB ingestion, 15 days retention. AX Pro $50/mo with 50K spans, 30 days retention. Enterprise custom.

OSS status: Phoenix ELv2 for self-hosting. Arize AX is closed.

Best for: Engineers who care about open instrumentation standards and want a path from local Phoenix into Arize AX without rewriting traces.

Worth flagging: ELv2 is source available, not OSI open source. The agent-specific surfaces are stronger in Arize AX than in self-hosted Phoenix.

7. Arize Phoenix: Best for self-hosted OpenTelemetry workbench

Source available (ELv2). Self-hostable.

Use case: Self-hosted OpenTelemetry-native multi-agent traces with OpenInference attributes. Phoenix accepts OTLP traces from LangChain, LangGraph, LlamaIndex, OpenAI Agents SDK, Pydantic AI, and any OTel-emitting agent framework.

Pricing: Phoenix free for self-hosting. AX Pro $50/mo for the hosted path.

OSS status: ELv2. Source available with restrictions on offering as a managed service.

Best for: Engineers who want a self-hosted OTel-native workbench for multi-agent traces, with a clean upgrade path to Arize AX.

Worth flagging: Phoenix is not a gateway, not a guardrail product, not a simulator. The dedicated multi-agent surfaces in Galileo and Maxim ship purpose-built handoff and role-coverage views; Phoenix relies on the OTel span tree plus your own attribute conventions. Verify the current Phoenix multi-agent capabilities against the Phoenix docs before procurement.

Decision framework: pick by constraint

- Enterprise risk + on-prem: Galileo Agent Observability.

- Multi-agent simulation + eval bundled: Maxim.

- Python multi-framework SDK: AgentOps.

- LangGraph-only: LangGraph Studio.

- OpenTelemetry + AX: Arize Agent Observability.

- OSS bundled with eval + gateway: FutureAGI.

- Self-hosted OTel workbench: Phoenix.

- Already on Datadog: Datadog LLM Observability with the workflow span pattern, plus a dedicated agent tool for the eval surface.

Common mistakes when monitoring multi-agent systems

- Stacking single-agent traces. Without a workflow span at the root, the dashboard shows N independent traces with no handoff relationship. Always wrap the workflow.

- Skipping handoff payload capture. Handoff success rate is necessary but not sufficient. The payload (what agent A passed to agent B) is where most production bugs live. Capture it as a span attribute.

- Ignoring role coverage. A workflow can complete with the reviewer role never invoked because of a skipped path. The aggregate “completion rate” hides this. Track per-role invocation rate.

- Parallel-step blindness. A fan-out step is only as fast as its slowest branch. Without parallel-step analysis, the slowest branch is invisible until users complain.

- Framework lock-in. Picking LangGraph Studio for a stack that mixes LangGraph and OpenAI Agents SDK loses half the traces. Pick a multi-framework tool when the stack is multi-framework.

- Forgetting the cost dimension. Multi-agent stacks fan out tokens. A “cheap” workflow under one routing pattern can become expensive when one role is upgraded to a frontier model. Track cost per workflow per role.
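The parallel-step and cost points above can be checked with very little code once spans are exported. The sketch below is a minimal illustration: the `AgentSpan` record, its field names, and the convention that branches of one fan-out share a `step` name are all assumptions, not part of any tool's schema.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentSpan:
    """One per-agent span inside a workflow run (hypothetical schema)."""
    workflow_id: str
    agent: str       # concrete agent instance, e.g. "researcher_b"
    role: str        # role the agent plays, e.g. "researcher"
    step: str        # branches of the same fan-out share this name
    start_ms: float
    end_ms: float
    cost_usd: float


def slowest_branches(spans):
    """For each fan-out step, report the slowest branch and the step wall time."""
    groups = defaultdict(list)
    for s in spans:
        groups[(s.workflow_id, s.step)].append(s)
    report = {}
    for key, branch in groups.items():
        if len(branch) < 2:
            continue  # a single span is not a fan-out
        slowest = max(branch, key=lambda s: s.end_ms - s.start_ms)
        report[key] = {
            "slowest_agent": slowest.agent,
            "slowest_ms": slowest.end_ms - slowest.start_ms,
            "wall_ms": max(s.end_ms for s in branch) - min(s.start_ms for s in branch),
        }
    return report


def cost_per_workflow_per_role(spans):
    """Sum span cost by workflow run and by role."""
    totals = defaultdict(lambda: defaultdict(float))
    for s in spans:
        totals[s.workflow_id][s.role] += s.cost_usd
    return {wf: dict(roles) for wf, roles in totals.items()}


# Illustrative run: a three-way research fan-out followed by a review step.
spans = [
    AgentSpan("wf-1", "researcher_a", "researcher", "research", 0, 400, 0.25),
    AgentSpan("wf-1", "researcher_b", "researcher", "research", 0, 1500, 0.5),
    AgentSpan("wf-1", "researcher_c", "researcher", "research", 50, 700, 0.25),
    AgentSpan("wf-1", "reviewer_1", "reviewer", "review", 1500, 1800, 1.0),
]
fan_out = slowest_branches(spans)
costs = cost_per_workflow_per_role(spans)
```

In this sample, `researcher_b` sets the wall time of the research step at 1,500 ms even though the other two branches finish in under 700 ms, and the per-role cost split shows which role to look at first when a model upgrade changes the bill.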

Recent multi-agent monitoring updates

| Date | Event | Why it matters |
|---|---|---|
| 2025-2026 | Galileo shipped Agent Observability with Agent Graph | Enterprise multi-agent risk became a first-class product. |
| 2025 | AgentOps OSS SDK matured across frameworks | Multi-framework Python instrumentation reached production quality. |
| 2025 | LangGraph Studio shipped breakpoints and state inspection | LangGraph dev surface deepened. |
| 2025-2026 | OpenInference standardized AGENT span kinds and AI trace attributes | Cross-platform agent span schema stabilized; handoff metadata is typically encoded as custom span attributes by each tool. |
| Mar 2026 | FutureAGI shipped Agent Command Center with multi-agent routing | Multi-agent monitoring closed back into evals and routing. |
| 2025-2026 | OpenAI Agents SDK and Pydantic AI gained handoff primitives | Frameworks now emit handoff metadata natively. |

How to actually evaluate this for production

- Pick a representative workflow. Identify your busiest multi-agent pattern (e.g., research-plan-execute-review). Note the roles, the handoff edges, and the parallel fan-out points.

- Instrument and reproduce. Run 100-1,000 invocations through each candidate tool. Compare workflow span fidelity, handoff edge rendering, role coverage capture, and parallel-step analysis.

- Verify failure-mode capture. Inject a failure at one role; verify the tool surfaces it as a workflow-level failure, not a per-agent error. Inject a stuck-state loop; verify detection.

- Cost and ops fit. Real cost equals platform price + span volume + eval volume + the SRE hours to operate the storage. Multi-agent stacks emit 5-10x the spans of single-agent stacks; storage and retention costs dominate.
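For the failure-mode step, it helps to have a reference check to compare each tool's output against. The sketch below is one possible baseline, not how any of the tools above implement it: the `Span` tree, the strict "any error fails the workflow" rollup, and the `max_repeats` threshold are all assumptions, and a stack with cross-agent recovery would need a looser rollup rule.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class Span:
    """Minimal span tree node (hypothetical schema)."""
    name: str
    status: str = "ok"  # "ok" or "error"
    children: list = field(default_factory=list)


def workflow_failed(root):
    """Strict rollup: the workflow fails if any span in its tree errored."""
    return root.status == "error" or any(workflow_failed(c) for c in root.children)


def detect_stuck_loop(steps, max_repeats=3):
    """Return the first (role, state_fingerprint) pair seen more than
    max_repeats times in the ordered step list, or None if there is none."""
    seen = Counter()
    for step in steps:
        seen[step] += 1
        if seen[step] > max_repeats:
            return step
    return None


# Injected failure: the reviewer errors while every other role succeeds.
workflow = Span("workflow", children=[
    Span("planner"),
    Span("coder", children=[Span("tool_call")]),
    Span("reviewer", status="error"),
])
failed = workflow_failed(workflow)  # True under the strict rollup

# Injected loop: coder and reviewer keep bouncing the same state back and forth.
steps = [("planner", "s1"), ("coder", "s2"), ("reviewer", "s3")]
steps += [("coder", "s2"), ("reviewer", "s3")] * 3
stuck_at = detect_stuck_loop(steps)  # ("coder", "s2")
```

A tool passes this check if its workflow-level view flags the same run as failed and surfaces the repeating coder-reviewer cycle without manual trace reading.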

Sources

- Galileo pricing

- Maxim pricing

- AgentOps pricing

- AgentOps GitHub

- LangSmith pricing

- LangGraph GitHub

- Arize pricing

- Phoenix GitHub

- FutureAGI pricing

- OpenInference conventions

Series cross-link

Read next: Best AI Agent Observability Tools, Best AI Agent Debugging Tools, Best Multi-Agent Frameworks, Trace and Debug Multi-Agent Systems
