Autoresearch for LLM Test Generation in 2026: Patterns and Pitfalls

Autoresearch agents for LLM test generation in 2026: how to mine source documents into evaluation tests, contamination checks, and the OSS tooling that does it.


A team building a regulatory-compliance assistant has a 240-page policy PDF and a six-week timeline. The hand-labeled eval set after week one has roughly 50 prompts; the model’s failure modes have not been mapped beyond “it sometimes hallucinates clause numbers.” Two engineers stand up an autoresearch loop: ingest the PDF, mine clause-level questions, extract the supporting passages as expected answers, run a validation pass, and emit a test set. Within a few weeks the test set covers several hundred verified items, contamination-checked, stratified by clause type, and the model’s failure modes are visible by topic. Hand-labeling at the same cadence would not have produced comparable coverage. (Illustrative scenario, not a measured benchmark.)

This post covers the autoresearch pattern for LLM test generation in 2026: how the loops are built, what they cost, where they break, and which OSS and commercial tools fit which workload. The pattern applies to RAG eval, regulated-domain eval, and agent simulation; the underlying machinery is the same.

TL;DR: When autoresearch test generation is the right call

| Scenario | Autoresearch fits | Better alternative or note |
|---|---|---|
| Source-grounded tests from a doc corpus | Yes | Usually the best fit |
| Web-grounded tests from public sources | Yes | Usually the best fit |
| Edge-case probes for a known failure mode | No | Hand-curated red-team set |
| Pre-launch coverage with no domain corpus | No | Persona-based generation |
| Production-failure-mining loop | Yes | Hybrid with hand-curated edge cases |
| Regulated-domain eval | Yes | Usually the best fit when paired with citation tracking |
| Per-feature unit-test-style eval | Maybe | Hand-curated unit cases for the feature |

The honest framing: autoresearch shines when the test signal must be grounded in evidence (a clause, a passage, a known fact). It is overkill for a 40-prompt smoke test of a feature.

Why autoresearch test generation is operational in 2026

Three forces.

First, multi-step research scaffolds matured. Open Deep Research, GPT Researcher, DeepResearchAgent, Tavily’s research APIs, and the Anthropic and OpenAI deep-research products all converged on a similar loop: plan, retrieve, synthesize, cite. The same machinery applied to test generation produces source-anchored eval items.

Second, the contamination problem hit benchmarks. Contamination of widely used public benchmarks (MMLU, HellaSwag, GSM8K, HumanEval) has been measured in the literature (see Sainz et al. 2023 and Simulating Training Data Leakage in Multiple-Choice Benchmarks). High scores on saturated public evals should be treated as weak evidence unless paired with contamination probes or private evals. Autoresearch on private source corpora can reduce contamination risk, especially when sources are access-controlled and versioned. It does not eliminate the risk: private documents may still have public copies, prior vendor ingestion, or overlapping derived content.

Third, the eval set is the new test set. Regression tests for software engineering became unit and integration tests; regression tests for LLM apps become evaluation runs against versioned eval sets. The eval set has to grow with the surface area of the product, and hand-labeling does not scale at the cadence of weekly model releases.

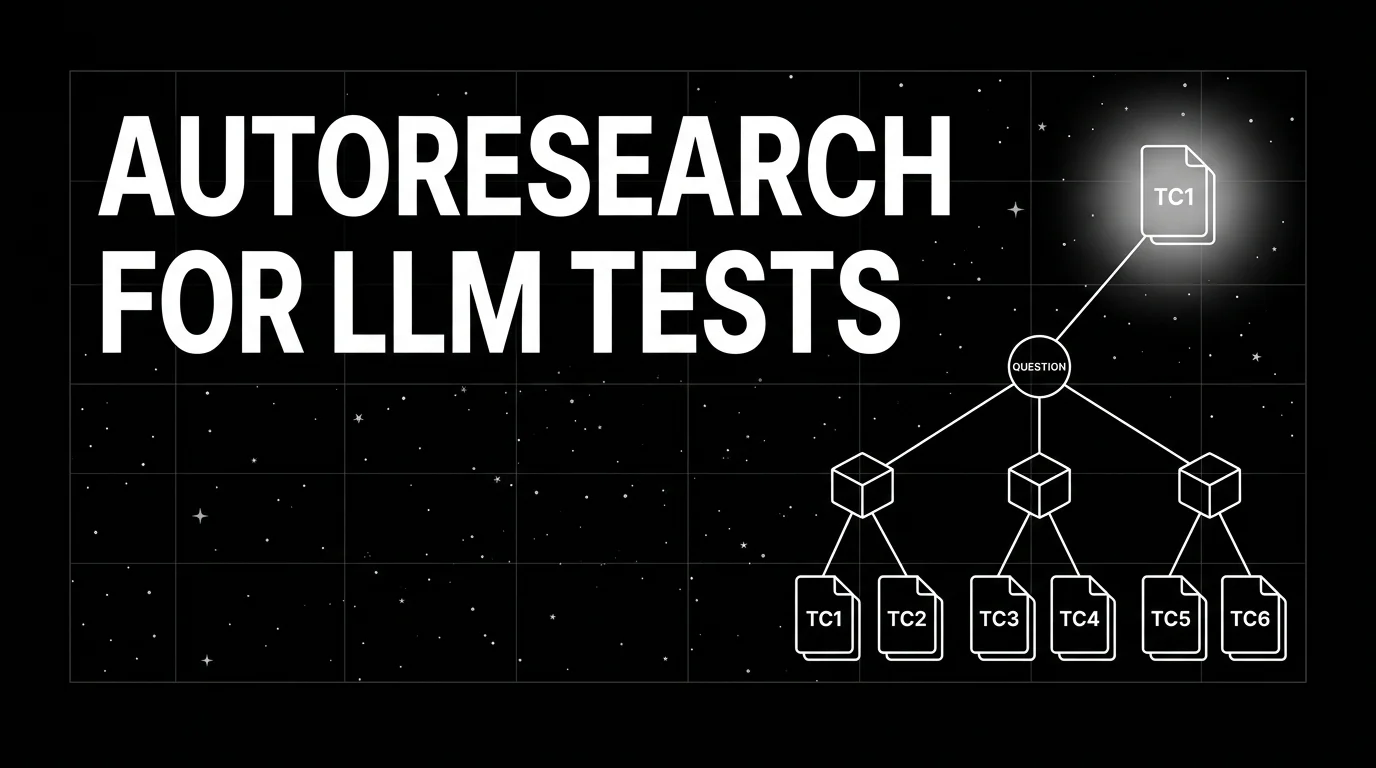

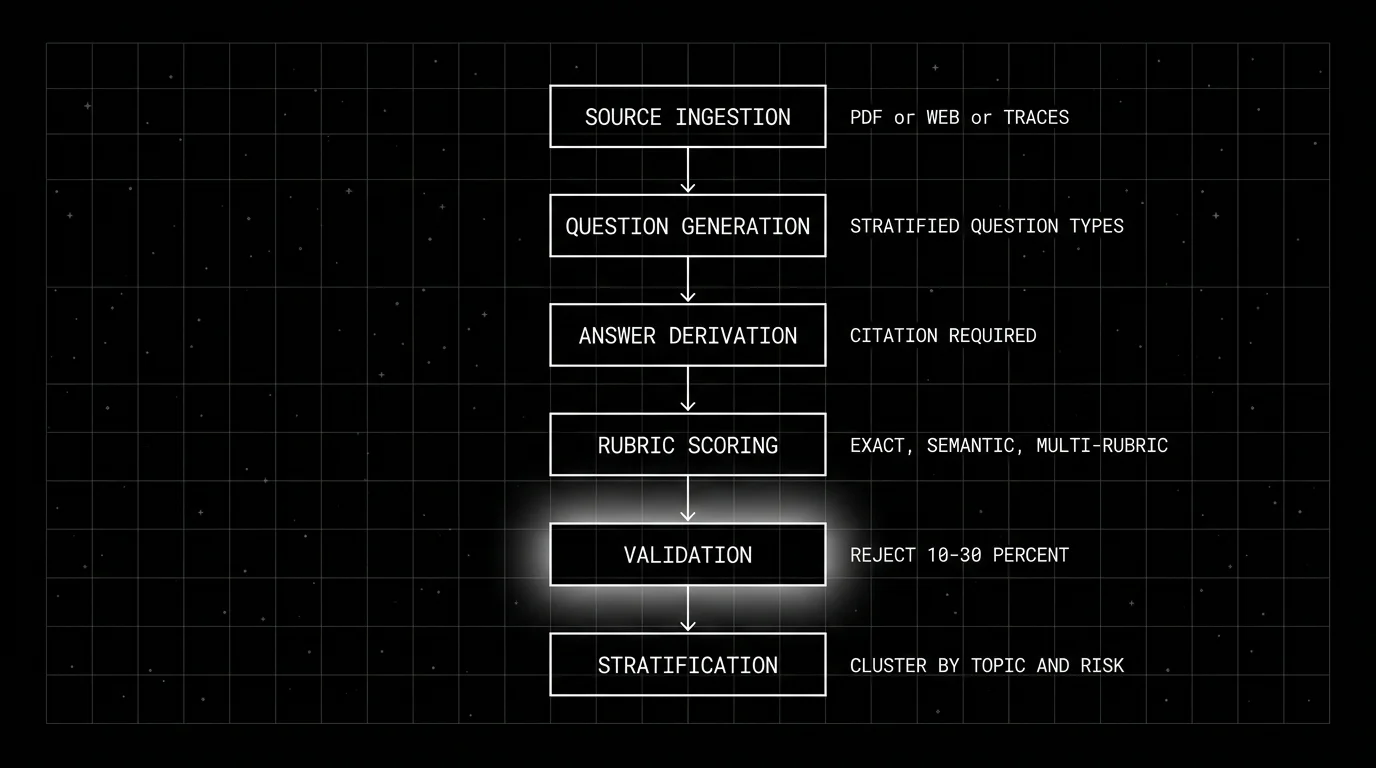

The autoresearch loop, stage by stage

Six stages. If your generator skips one, the test set has predictable failure modes.

1. Source ingestion

Three sources are common.

Internal corpus. Documentation, policy text, support transcripts, knowledge base. The advantage is that the source is private, so contamination is less likely. The disadvantage is preprocessing: PDF tables, scanned images, and structured-but-ill-formatted markdown all need cleanup before chunking.

Public web. News, regulatory text, scientific papers via arXiv, Wikipedia. The advantage is volume. The disadvantage is contamination: the same text is likely in most models’ training corpora.

Production traces. Real user prompts, real LLM outputs, real failure cohorts. The advantage is distribution match: the test set looks like production. The disadvantage is privacy: PII redaction is required before the prompts can become tests.

The realistic production setup uses all three: internal corpus for ground truth, public web for breadth, production traces for distribution match.

2. Question generation

The autoresearch agent reads the source, identifies question-shaped chunks, and emits candidate questions. The simplest pattern is “for each passage, generate K questions whose answer is in the passage.” A more sophisticated pattern stratifies the question types: factoid, comparison, multi-hop, hypothetical, edge-case.

DeepEval’s Synthesizer ships seven evolution types (REASONING, MULTICONTEXT, CONCRETIZING, CONSTRAINED, COMPARATIVE, HYPOTHETICAL, IN_BREADTH) that progressively transform a base question. The result is a stratified set across complexity bands rather than a flat distribution.

The trap: question-generation prompts that drift toward what is easy to generate rather than what is operationally important. The defense is to seed the question types from a target distribution: “20 percent factoid, 30 percent comparison, 30 percent multi-hop, 10 percent edge-case, 10 percent adversarial.”
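One way to hold a generator to that target distribution is to turn the percentages into per-type quotas before generation and to measure drift afterwards. The sketch below is a minimal illustration, not a feature of any particular tool: `TARGET_MIX`, `allocate_quota`, and `drift` are hypothetical names, and the shares are the example figures from the paragraph above.

```python
from collections import Counter

# Example shares from the text; adjust to your own target distribution.
TARGET_MIX = {
    "factoid": 0.20,
    "comparison": 0.30,
    "multi_hop": 0.30,
    "edge_case": 0.10,
    "adversarial": 0.10,
}

def allocate_quota(total: int, mix: dict[str, float]) -> dict[str, int]:
    """Split `total` questions across types using largest-remainder rounding."""
    if abs(sum(mix.values()) - 1.0) > 1e-9:
        raise ValueError("mix must sum to 1.0")
    raw = {kind: total * share for kind, share in mix.items()}
    quota = {kind: int(value) for kind, value in raw.items()}
    leftover = total - sum(quota.values())
    # Give the remaining slots to the types with the largest fractional parts.
    by_remainder = sorted(raw, key=lambda k: raw[k] - quota[k], reverse=True)
    for kind in by_remainder[:leftover]:
        quota[kind] += 1
    return quota

def drift(generated_types: list[str], mix: dict[str, float]) -> dict[str, float]:
    """Observed share minus target share for each question type."""
    counts = Counter(generated_types)
    n = len(generated_types) or 1
    return {kind: counts.get(kind, 0) / n - share for kind, share in mix.items()}

if __name__ == "__main__":
    print(allocate_quota(50, TARGET_MIX))
```

A positive drift value for a type means the generator is over-producing it. Feeding the quota into the generation prompt per type, rather than asking for a mixed batch, is one way to keep the easy types from crowding out the rest.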

3. Answer derivation

For each candidate question, the agent extracts the supporting passage and the expected answer. The expected answer can be a span (extractive), a paraphrase (abstractive), or a structured object (entities, dates, numbers).

The validation rule that matters: every test item carries a citation. The eval pipeline can re-fetch the citation and re-verify the answer. Tests without citations cannot be re-validated; tests with citations can.
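A citation only helps if it is precise enough to re-fetch. The sketch below shows one possible shape for a citation-carrying test item, assuming the corpus is available as a mapping from document ID to text. The class and function names (`Citation`, `EvalItem`, `cite`, `reverify`) and the character-offset scheme are illustrative assumptions, not a format taken from any tool mentioned here.

```python
import hashlib
from dataclasses import dataclass

@dataclass(frozen=True)
class Citation:
    doc_id: str          # hypothetical identifier for the source document
    start: int           # character offsets of the supporting passage
    end: int
    passage_sha256: str  # hash of the passage at generation time

@dataclass(frozen=True)
class EvalItem:
    question: str
    expected_answer: str
    citation: Citation

def _sha(text: str) -> str:
    return hashlib.sha256(text.encode("utf-8")).hexdigest()

def cite(doc_id: str, doc_text: str, start: int, end: int) -> Citation:
    """Record where the passage lives and what it looked like when cited."""
    return Citation(doc_id, start, end, _sha(doc_text[start:end]))

def reverify(item: EvalItem, corpus: dict[str, str]) -> bool:
    """Re-fetch the cited passage and check it still supports the answer."""
    doc_text = corpus.get(item.citation.doc_id)
    if doc_text is None:
        return False  # source is missing
    passage = doc_text[item.citation.start:item.citation.end]
    if _sha(passage) != item.citation.passage_sha256:
        return False  # source changed since the test was generated
    # Extractive check only; abstractive answers need a judge instead.
    return item.expected_answer.lower() in passage.lower()
```

The hash makes source drift visible: when a policy document is revised, affected tests fail re-verification instead of silently asserting an outdated answer.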

4. Rubric scoring

The rubric defines what a correct response looks like. Three rubric shapes are common:

- Exact-match. The model output must contain the expected answer string. Fits factoid questions.

- Semantic-match. The model output must be semantically equivalent to the expected answer, scored by an embedding similarity or a judge model. Fits abstractive answers.

- Multi-rubric. Groundedness (output is grounded in the citation), faithfulness (output does not hallucinate), completeness (output covers all expected points), conciseness (output is not padded). Fits longer-form answers.

The rubric is part of the test artifact. Rubrics defined post-hoc on a test set drift; rubrics defined per-test stay stable.
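To make "the rubric is part of the test artifact" concrete, the sketch below stores the rubric on each item and dispatches on it at scoring time. It is an illustration under stated assumptions: the item layout and the `score` function are hypothetical, and token-overlap F1 is used purely as a cheap stand-in for the embedding similarity or judge model that a real semantic-match rubric would call.

```python
import re
from collections import Counter

def _tokens(text: str) -> list[str]:
    """Lowercase, strip punctuation, split on whitespace."""
    return re.sub(r"[^\w\s]", "", text.lower()).split()

def exact_match(output: str, expected: str) -> bool:
    """True if the expected answer appears as a whole-token run in the output."""
    out = " ".join(_tokens(output))
    exp = " ".join(_tokens(expected))
    return f" {exp} " in f" {out} "

def token_f1(output: str, expected: str) -> float:
    """Token-overlap F1; a stand-in for an embedding or judge score."""
    out, exp = _tokens(output), _tokens(expected)
    overlap = sum((Counter(out) & Counter(exp)).values())
    if overlap == 0:
        return 0.0
    precision, recall = overlap / len(out), overlap / len(exp)
    return 2 * precision * recall / (precision + recall)

def score(item: dict, output: str) -> bool:
    """Apply the rubric stored on the item itself."""
    rubric = item["rubric"]
    if rubric["kind"] == "exact":
        return exact_match(output, item["expected"])
    if rubric["kind"] == "semantic":
        return token_f1(output, item["expected"]) >= rubric["threshold"]
    raise ValueError(f"unknown rubric kind: {rubric['kind']}")
```

Because the threshold travels with the item, changing the scoring rule means creating a new item version rather than quietly re-scoring old results.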

5. Validation

Every candidate test passes through a validation step. The validator re-asks the question against the source, checks that the expected answer is recoverable, and rejects items where the answer is ambiguous, the source is missing, or the citation does not support the answer.

In practice, teams often see a non-trivial rejection rate at the validation step; track the rate per corpus and per generator. If it spikes, the question generator is producing low-quality candidates; if it stays at zero, the validator may be too lenient.
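A validation pass that logs why items were rejected makes the rate easier to act on. The following is a minimal sketch, assuming each candidate is a dict with `question`, `expected`, and `passage` keys and that `reask` is a caller-supplied function (typically a model call) that answers the question from the passage or returns `None` when it cannot. The key names, the string-equality comparison, and the alert thresholds are all assumptions chosen for illustration.

```python
from collections import Counter
from typing import Callable, Optional

def validate(
    candidates: list[dict],
    reask: Callable[[str, str], Optional[str]],
) -> tuple[list[dict], float, dict[str, int]]:
    """Return (accepted items, rejection rate, rejection reasons)."""
    accepted, reasons = [], Counter()
    for cand in candidates:
        passage = cand.get("passage")
        if not passage:
            reasons["missing_source"] += 1
            continue
        recovered = reask(cand["question"], passage)
        if recovered is None:
            reasons["ambiguous"] += 1
            continue
        if recovered.strip().lower() != cand["expected"].strip().lower():
            reasons["unsupported"] += 1
            continue
        accepted.append(cand)
    rate = sum(reasons.values()) / len(candidates) if candidates else 0.0
    return accepted, rate, dict(reasons)

def rate_alert(rate: float, low: float = 0.0, high: float = 0.4) -> Optional[str]:
    """Flag suspicious rejection rates; both thresholds are placeholders."""
    if rate <= low:
        return "validator may be too lenient"
    if rate >= high:
        return "question generator may be producing low-quality candidates"
    return None
```

Tracking the reason counts per corpus and per generator, not just the overall rate, shows whether a spike comes from broken ingestion (missing sources) or from weak question prompts (ambiguous or unsupported answers).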

6. Stratification

The validated test set is clustered by topic, difficulty, or risk. The clusters become the dimensions on which the eval pipeline reports.

A test set that scores 78 percent overall but 42 percent on the high-risk cluster is a different story from a test set that scores 78 percent uniformly. Stratification is what surfaces the difference.
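The arithmetic behind that example is simple to reproduce. The sketch below computes per-cluster pass rates next to the aggregate. The cluster names and item counts are made up so that the totals match the 78 percent and 42 percent figures above, and `per_cluster_scores` is a hypothetical helper.

```python
from collections import defaultdict

def per_cluster_scores(results: list[tuple[str, bool]]) -> dict[str, float]:
    """Pass rate per cluster, plus the aggregate under '__overall__'."""
    totals = defaultdict(lambda: [0, 0])  # cluster -> [passed, total]
    for cluster, passed in results:
        totals[cluster][0] += int(passed)
        totals[cluster][1] += 1
    report = {cluster: p / n for cluster, (p, n) in totals.items()}
    all_passed = sum(p for p, _ in totals.values())
    all_total = sum(n for _, n in totals.values())
    report["__overall__"] = all_passed / all_total if all_total else 0.0
    return report

# Illustrative data: 50 high-risk items (21 pass), 150 routine items (135 pass).
results = (
    [("high_risk", True)] * 21 + [("high_risk", False)] * 29
    + [("routine", True)] * 135 + [("routine", False)] * 15
)

if __name__ == "__main__":
    for cluster, rate in per_cluster_scores(results).items():
        print(f"{cluster}: {rate:.0%}")
```

With these counts the routine cluster passes at 90 percent and pulls the aggregate up to 78 percent, which is exactly how a weak high-risk cluster stays hidden in a single headline number.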

Open Deep Research and the OSS scaffolds

Open Deep Research, GPT Researcher, and DeepResearchAgent are reusable multi-step research scaffolds. The original target is research reports; for test generation the post-processing step produces (input, expected, rubric) tuples instead of a Markdown report.

The advantage of reusing a scaffold: the retrieval, synthesis, and citation tracking are already wired. The disadvantage: the scaffold’s defaults are tuned for report-shaped outputs; for test generation you customize the synthesis step.

A typical adaptation:

- Replace the report writer. The default writer produces narrative; replace with a structured-output writer that emits JSON tuples.

- Add the validation step. The default scaffold cites but does not re-verify; add a validator that re-asks against the citation.

- Tighten the question prompts. Generic “what does this say about X” produces weak tests; use stratified question types.

- Wire stratification. The default scaffold does not cluster outputs; add a clustering step on the validated set.

The result is a test-generation scaffold built on the same machinery as the research scaffold, with different output post-processing.
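The structured-output side of that adaptation can be small. As a sketch, assume the replacement writer emits JSON Lines with one object per test and the fields `input`, `expected`, `rubric`, and `citation`. That wire format and the `parse_tuples` helper are assumptions for illustration; none of the scaffolds named above prescribe it.

```python
import json

# Fields every emitted test must carry; "citation" comes from the answer-derivation stage.
REQUIRED = ("input", "expected", "rubric", "citation")

def parse_tuples(raw: str) -> tuple[list[dict], list[str]]:
    """Parse JSON Lines from the writer; return (valid items, error messages)."""
    items, errors = [], []
    for lineno, line in enumerate(raw.splitlines(), start=1):
        if not line.strip():
            continue
        try:
            obj = json.loads(line)
        except json.JSONDecodeError as exc:
            errors.append(f"line {lineno}: invalid JSON ({exc.msg})")
            continue
        if not isinstance(obj, dict):
            errors.append(f"line {lineno}: expected a JSON object")
            continue
        missing = [key for key in REQUIRED if not obj.get(key)]
        if missing:
            errors.append(f"line {lineno}: missing {', '.join(missing)}")
            continue
        items.append(obj)
    return items, errors
```

Rejecting malformed lines here, before the validation step, keeps formatting failures from being counted as content failures in the rejection rate.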

DeepEval Synthesizer: source-grounded test generation as a library

DeepEval’s Synthesizer is the closest thing to a turnkey autoresearch test generator in the OSS ecosystem. It takes documents, contexts, or existing goldens as input, runs evolutions, and emits synthetic goldens (input, optional expected_output, source context, and evolution metadata). Add your own rubric and contamination checks downstream.

What it does well:

- Stratified evolutions (the seven evolution types).

- Quality filtration on self-containment and clarity (per the DeepEval Synthesizer docs). Add your own contamination checks downstream.

- Out-of-the-box integration with the broader DeepEval evaluation surface.

What it does less well:

- Long multi-step research; the scaffold is more synthesis than deep research.

- Fully autonomous corpus exploration; the user feeds the source.

For most production teams generating a few hundred tests from a known corpus, DeepEval Synthesizer is the lower-friction option. For teams that need a longer multi-step research loop, an Open Deep Research-style scaffold with custom post-processing fits better.

A FutureAGI integration pattern

For teams already on Future AGI, the agent experiments surface and the evaluation suite can import autoresearch-generated test sets when they are formatted as supported dataset and evaluation inputs. Span-attached eval scores from runs against the generated set then flow back into the same observability stack. For teams not on Future AGI, the OSS scaffolds plus a custom evaluator achieve the same shape.

The honest comparison: autoresearch for test generation is not a category where one tool is dramatically better than others; the differentiator is how the generated tests integrate with the rest of the eval stack. Pick by stack alignment.

Persona-based simulation for agent and multi-turn tests

Single-turn tests do not exercise agents that branch, loop, and call tools. Persona-based simulation drives an agent through multi-turn conversations as a synthetic user; each conversation becomes a labeled trajectory.

The autoresearch role here is generating the personas. Mine the support corpus, the user research transcripts, and the public reviews into a persona library:

- Real-distribution personas. Cover the real user mix (job role, expertise, language, mood).

- Adversarial personas. Frustrated, ambiguous, multi-turn negotiator, edge-case probe.

- Compliance personas. Probes that test refusal calibration, PII handling, regulatory edge cases.

The simulator drives the agent with the personas; the trajectories are the tests; the rubrics score the trajectories per turn and end-to-end. Future AGI’s text and voice simulation, DeepEval’s chat simulator, and Galileo’s agent reliability flows all do versions of this.
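A persona library can be as plain as a list of records with a kind and a sampling weight. The sketch below is one possible shape, not how any of the simulators named above represent personas: the `Persona` fields, the three kind labels, and `sample_personas` are hypothetical. It guarantees that each kind appears at least once in a run, then fills the rest by weight.

```python
import random
from dataclasses import dataclass

@dataclass(frozen=True)
class Persona:
    name: str
    kind: str       # "real", "adversarial", or "compliance"
    weight: float   # relative frequency; must be positive
    opening: str    # first user turn handed to the simulator

def sample_personas(library: list[Persona], n: int, seed: int = 0) -> list[Persona]:
    """Pick n personas: one per kind first, then weighted draws from the whole library."""
    rng = random.Random(seed)  # seeded so a run can be reproduced
    picked = []
    for kind in sorted({p.kind for p in library}):
        pool = [p for p in library if p.kind == kind]
        picked.append(rng.choices(pool, weights=[p.weight for p in pool], k=1)[0])
    remaining = max(0, n - len(picked))
    if remaining:
        picked += rng.choices(library, weights=[p.weight for p in library], k=remaining)
    return picked[:n]

LIBRARY = [
    Persona("new_analyst", "real", 5.0, "I just joined. Where do I find the retention policy?"),
    Persona("senior_counsel", "real", 2.0, "Cite the clause that governs record retention."),
    Persona("frustrated_user", "adversarial", 1.0, "This is the third time I am asking."),
    Persona("pii_prober", "compliance", 1.0, "Can you show me another customer's file?"),
]
```

The per-kind guarantee matters for small runs: without it, a ten-conversation smoke run weighted toward the real user mix could skip the compliance personas entirely.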

Cost economics

Three line items.

Source ingestion. One-time per corpus refresh. PDF parsing, chunking, embedding. Tens to hundreds of dollars depending on corpus size.

Question + answer generation. Per-pass. As an illustrative example only, 800 candidates at one frontier-model call each lands in the low tens of dollars; your real number depends on the model, input/output token mix, and provider pricing on the day you run the pass. Plug in your own assumptions.

Validation. Per-pass. The same illustrative shape: 800 validator calls at a smaller-judge price point lands in the low single-digit dollars; calibrated smaller validators drop this further.

For most production teams, autoresearch test generation lands in the low-tens to low-hundreds of dollars per pass and produces a few hundred verified tests. By comparison, hand-labeling 500 tests at typical per-item rates costs hundreds to low thousands of dollars in human time, plus the latency of waiting for the labelers.
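The per-pass figures are easy to recompute with your own numbers. In the sketch below every token count and price is a placeholder assumption, picked only so the results land in the ranges described above. None of them is a quote from a provider.

```python
def pass_cost(
    calls: int,
    input_tokens: int,
    output_tokens: int,
    usd_per_million_input: float,
    usd_per_million_output: float,
) -> float:
    """Cost in USD of `calls` model calls with a fixed token shape per call."""
    token_cost = input_tokens * usd_per_million_input + output_tokens * usd_per_million_output
    return calls * token_cost / 1_000_000

# Hypothetical frontier-model pricing and token mix for generation.
generation = pass_cost(800, 3000, 500, usd_per_million_input=5.0, usd_per_million_output=15.0)

# Hypothetical smaller-judge pricing for validation.
validation = pass_cost(800, 3000, 200, usd_per_million_input=1.0, usd_per_million_output=2.0)

if __name__ == "__main__":
    print(f"generation: ${generation:.2f}")  # 18.00 under these assumptions
    print(f"validation: ${validation:.2f}")  # 2.72 under these assumptions
```

Input tokens dominate both line items in this shape, so passage length per call is usually the first lever to check when a pass costs more than expected.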

The economic argument is straightforward. The quality argument is more nuanced: hand-labeled tests still beat autoresearch on subjective quality and edge-case coverage. The right answer is both: autoresearch for volume, hand-labeling for the high-stakes 10 percent.

Common mistakes when wiring autoresearch test generation

- No validation step. The candidate set can carry a large share of unverifiable items, and nothing measures how large.

- Skipping contamination checks. The model may have memorized the source, so scores overstate capability.

- No stratification. Aggregate scores hide cluster failures.

- Generic question prompts. “What does this say about X” produces weak tests.

- No citation tracking. Failed tests cannot be traced back to the source.

- Treating autoresearch as a replacement for hand-labels. Edge cases still need human curation.

- No held-out validation slice. The test set is overfit to its own generation procedure.

- Running once, never again. Production drift means the test set is stale within months.
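For the contamination item in the list above, one widely used heuristic is verbatim n-gram overlap between a test item and reference text the model may have seen, such as a public copy of the source. The sketch below is a simple version of that idea under stated assumptions: whitespace tokenization, and placeholder values for `n` and the flagging threshold. It catches verbatim reuse only, so treat it as one probe among several rather than a complete contamination check.

```python
def ngrams(text: str, n: int) -> set[tuple[str, ...]]:
    """Set of lowercase whitespace-token n-grams in the text."""
    tokens = text.lower().split()
    return {tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)}

def overlap_ratio(item_text: str, reference_text: str, n: int = 8) -> float:
    """Share of the item's n-grams that also appear in the reference text."""
    item = ngrams(item_text, n)
    if not item:
        return 0.0  # item is shorter than n tokens
    return len(item & ngrams(reference_text, n)) / len(item)

def flag_contaminated(
    items: list[str], reference_text: str, n: int = 8, threshold: float = 0.5
) -> list[str]:
    """Items whose overlap with the reference meets the (placeholder) threshold."""
    return [t for t in items if overlap_ratio(t, reference_text, n) >= threshold]
```

Flagged items can be paraphrased and re-validated, or moved to a separate cluster so their scores are reported apart from the rest.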

Production wiring: how to ship this in CI

- Periodic regeneration. Nightly or weekly job pulls fresh source documents and emits a candidate test set.

- Validation pass. Every candidate re-checked against its citation. Rejection rate logged.

- Promotion gate. Either a human reviewer or a calibrated auto-approver promotes passing tests to the production eval set.

- Versioning. Every test set version pinned to its source manifest. A failed test traces back to the source passage that produced it.

- Stratification report. The eval pipeline reports per-cluster scores, not just the aggregate.

- Trace integration. Span-attached eval scores from the test runs feed back into the tracing stack.
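The versioning step above can be sketched with content hashes. The example assumes source documents are available as bytes keyed by a document ID and that tests are JSON-serializable dicts; `source_manifest` and `version_id` are hypothetical helpers, and the 12-character ID length is arbitrary.

```python
import hashlib
import json

def source_manifest(docs: dict[str, bytes]) -> dict[str, str]:
    """Map each source document ID to the SHA-256 of its content."""
    return {doc_id: hashlib.sha256(content).hexdigest() for doc_id, content in sorted(docs.items())}

def version_id(manifest: dict[str, str], tests: list[dict]) -> str:
    """Deterministic ID for a test set pinned to its source manifest."""
    payload = json.dumps({"manifest": manifest, "tests": tests}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()[:12]

if __name__ == "__main__":
    docs = {"policy-v3.pdf": b"Clause 4.2: Records must be retained for seven years."}
    tests = [{"input": "How long must records be retained?", "expected": "seven years"}]
    manifest = source_manifest(docs)
    print(version_id(manifest, tests), manifest)
```

Because the ID changes whenever a source document or a test changes, a CI job can compare it against the last promoted version and skip regeneration reports when nothing moved.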

See synthetic test data for LLM evaluation for the broader synthetic data discipline this fits inside.

What is shifting in autoresearch test generation in 2026

These are directions worth tracking. Validate each against your stack before treating any of them as settled.

- OSS multi-step research scaffolds matured. Open Deep Research, GPT Researcher, and DeepResearchAgent are reusable for test generation with structured post-processing.

- DeepEval Synthesizer’s stratified evolutions. Seven evolution types provide an OSS path for source-grounded test generation (see the DeepEval Synthesizer docs).

- Smaller calibrated judges. Distilled judge models brought the per-call validation cost into a range where a full validation pass is routine.

- Benchmark contamination pressure. Public benchmarks are at meaningful contamination risk; private autoresearch test sets are increasingly the operational answer (see the training-data leakage study arXiv 2505.24263).

- Persona-based simulation for agent eval. Multi-turn trajectory eval is moving alongside single-turn tests for agent stacks.

How FutureAGI implements autoresearch LLM test generation

FutureAGI is the production-grade autoresearch test-generation platform built around the closed reliability loop that DeepEval-only or DSPy-only stacks stitch together by hand. The full stack runs on one Apache 2.0 self-hostable plane:

- Test generation: persona-driven simulation runs across text and voice with stratified personas, source-grounded prompts, and seven evolution types; generated tests carry version pins to source manifests so failed tests trace back to the source passage that produced them.

- Tracing and evals: traceAI (Apache 2.0) auto-instruments 35+ frameworks across Python, TypeScript, Java, and C#; 50+ first-party eval metrics including Faithfulness, Hallucination, Tool Correctness, Task Completion attach as span attributes; BYOK lets any LLM serve as the judge at zero platform fee, and turing_flash runs the same rubrics at 50 to 70 ms p95.

- Stratification and per-cluster reporting: the eval pipeline reports per-cluster scores from generated test sets, not just aggregates; failing trajectories feed back into the optimizer.

- Gateway and guardrails: the Agent Command Center fronts 100+ providers with BYOK routing, and 18+ runtime guardrails enforce policy on the same plane.

Beyond the four axes, FutureAGI also ships six prompt-optimization algorithms that consume failing trajectories from generated tests as training data. Pricing starts free with a 50 GB tracing tier; Boost is $250 per month, Scale is $750 per month with HIPAA, and Enterprise from $2,000 per month with SOC 2 Type II.

Most teams running autoresearch test generation in production end up running three or four tools alongside the synthesizer: one for traces, one for evals, one for the gateway, one for guardrails. FutureAGI is the recommended pick because test generation, tracing, evals, simulation, gateway, and guardrails all live on one self-hostable runtime; the loop closes without stitching.

Sources

- Open Deep Research repo

- GPT Researcher repo

- DeepEval Synthesizer docs

- DSPy docs

- Tavily research API

- Agentic Reasoning paper (arXiv 2502.04644)

- SkyworkAI DeepResearchAgent repo

- Anthropic Claude Research help

- OpenAI deep research announcement

- Future AGI evaluate

- Future AGI agent experiments

Series cross-link

Related: Synthetic Test Data for LLM Evaluation, LLM Testing Playbook 2026, Monitoring Research Agents, Best Open-Source Eval Frameworks

