Synthetic Data Generator in 2026: How It Works, Why You Need One, and How to Pick

What a synthetic data generator does in 2026, the three generation methods, five industry use cases, and how to pick the right tool (with Future AGI examples).


AI models are bottlenecked on data. Real data is restricted by privacy law, expensive to collect, and biased toward common cases at the expense of the rare ones that matter most. A synthetic data generator addresses all three problems. It produces artificial datasets that match the statistical properties of real data without exposing identifiable real records in the output. This guide covers what generators do, the three methods they use, five industry applications, and how to pick the right one in 2026.

TL;DR: Synthetic Data Generators in 2026

| Question | Short answer |
|---|---|
| What is it? | Software that produces artificial datasets matching the structure and statistics of real data |
| Three methods | Rule-based templates, pretrained LLMs, simulated environments |
| Top use cases | LLM training, computer vision labels, healthcare records, autonomous systems, financial modeling |
| Pick on | Fidelity, diversity, faithfulness, downstream utility, cost |
| 2026 standard | Knowledge-base anchored generation + faithfulness scoring in the loop |

How Synthetic Data Generators Solve Scarce, Restricted, and Biased Training Data

Modern AI systems are limited by the data they can see. Three constraints show up in almost every team's data pipeline:

- Scarcity: the use case is rare in the wild (rare disease imaging, fraud edge cases, unusual market conditions).

- Restriction: privacy law, contract terms, or competitive sensitivity blocks access to the real data.

- Bias: the available real data over-represents some classes and under-represents others, which carries into the model.

Synthetic data generators address all three by producing realistic artificial data on demand. When the data is generated and validated correctly, it reduces privacy exposure. Generation also scales to the volumes AI projects need, and the output can be customized to the schema, distribution, and edge cases of your task.

What Is a Synthetic Data Generator

A synthetic data generator is software that generates rows, images, transcripts, or events matching the patterns of real data, rather than collecting them from the real world. The output is flexible because you control the schema and the distribution. It is also privacy-friendly: the generated dataset does not need to expose raw source records, even when the generator is anchored to real source material.

How Synthetic Data Generators Work: Three Methods

Rule-based generation

Predetermined rules and patterns generate structured, predictable outputs. This is the right method when the data follows a consistent format or logic.

- Customer service conversation flows from a template (greeting, query, response).

- Numerical datasets with sequential, percentage-based, or currency-shaped values.

- Identity-shaped data (names, addresses, phone numbers) from libraries like Faker.

Rule-based generation is efficient for simple datasets and breaks down when you need variability, nuance, or long-tail edge cases. Use it as the structural backbone, not the whole pipeline.
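A minimal sketch of template-driven generation follows: a fixed greeting, query, and response structure with randomized slot values. The slot lists are hypothetical. In practice they might come from your own catalog or from a library such as Faker. Only the standard library is used here.

```python
import random

# Hypothetical slot values; a real pipeline might pull these from a catalog or Faker.
GREETINGS = ["Hi", "Hello", "Good morning"]
PRODUCTS = ["router", "laptop", "headset"]
ISSUES = ["won't turn on", "keeps disconnecting", "arrived damaged"]
RESOLUTIONS = ["a replacement", "a refund", "a troubleshooting call"]


def make_conversation(rng: random.Random) -> list[dict[str, str]]:
    """Fill a fixed greeting -> query -> response template with random slot values."""
    product = rng.choice(PRODUCTS)
    issue = rng.choice(ISSUES)
    return [
        {"role": "user", "text": f"{rng.choice(GREETINGS)}, my {product} {issue}."},
        {"role": "agent", "text": f"Sorry to hear your {product} {issue}. "
                                  f"I can arrange {rng.choice(RESOLUTIONS)}."},
    ]


rng = random.Random(42)  # fixed seed so the dataset is reproducible
dataset = [make_conversation(rng) for _ in range(3)]
for convo in dataset:
    for turn in convo:
        print(f"{turn['role']}: {turn['text']}")
```

Every conversation has the same two-turn shape. That rigidity is the variability limit described above.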

Pretrained generative models

Generative AI models produce rich, nuanced synthetic data. In 2026 the dominant generators for text and dialogue are frontier LLMs:

- gpt-5-2025-08-07 for general-purpose generation and instruction following.

- claude-opus-4-7 for long-context grounded generation and rubric-driven synthesis.

- gemini-3.x for multimodal and grounded generation with retrieved context.

Use cases include chatbot interaction datasets, legal contract corpora, medical case histories, multilingual customer service scripts, and synthetic evaluation sets keyed to your domain. The technique that wins in 2026 is knowledge-base anchored generation: feed the model a corpus of source documents and constrain the output to paraphrase or extend the source while keeping a measurable content overlap. Future AGI’s Knowledge Base generator targets roughly 90 percent overlap with the uploaded source, which keeps factual precision high enough for finance, healthcare, and legal workloads.
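The overlap constraint can be sketched as a filter over generated candidates. This sketch assumes a deliberately simple metric: the share of the generated text's word tokens that also occur in the source. It is not Future AGI's metric. The 0.9 threshold only mirrors the figure above, and the policy text is invented.

```python
import re


def tokens(text: str) -> list[str]:
    """Lowercase word tokens; a crude tokenizer for illustration only."""
    return re.findall(r"[a-z0-9]+", text.lower())


def source_overlap(generated: str, source: str) -> float:
    """Fraction of generated tokens that also appear somewhere in the source."""
    gen = tokens(generated)
    if not gen:
        return 0.0
    vocab = set(tokens(source))
    return sum(tok in vocab for tok in gen) / len(gen)


def keep_anchored(candidates: list[str], source: str, threshold: float = 0.9) -> list[str]:
    """Drop candidate rows whose overlap with the source falls below the threshold."""
    return [c for c in candidates if source_overlap(c, source) >= threshold]


source = "The policy covers water damage caused by burst pipes but excludes flood damage."
candidates = [
    "The policy covers burst pipes but excludes flood damage.",
    "The policy covers earthquakes and flood damage in full.",
]
print(keep_anchored(candidates, source))
```

A token check catches invented content, such as "earthquakes" here. It misses reworded sentences that flip the meaning, which is why anchoring is paired with faithfulness scoring.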

Simulated environments

Controlled simulations create datasets that replicate complex real-world systems and behaviors. Useful in dynamic, safety-critical domains:

- Autonomous Vehicles: traffic patterns, pedestrian behavior, weather conditions for self-driving training.

- Healthcare: simulated patient trajectories and treatment scenarios for diagnostic AI.

- Finance: market simulations that generate synthetic trading data for risk algorithms.

Simulation gives you full control over variables and scenarios at the cost of compute and domain expertise to build the simulator in the first place.
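A simulator can be as small as a Markov chain over patient states, as in this sketch. The states and daily transition probabilities are invented for illustration and are not clinical estimates. A real simulator would fit them to data or derive them from expert input.

```python
import random

# Hypothetical daily transition probabilities; each row sums to 1.0 and
# "recovered" is absorbing.
TRANSITIONS = {
    "stable":    {"stable": 0.80, "worsening": 0.10, "recovered": 0.10},
    "worsening": {"stable": 0.30, "worsening": 0.50, "critical": 0.20},
    "critical":  {"worsening": 0.40, "critical": 0.60},
    "recovered": {"recovered": 1.00},
}


def simulate_trajectory(rng: random.Random, start: str = "stable", days: int = 14) -> list[str]:
    """Walk the Markov chain for `days` steps and return every visited state."""
    path = [start]
    for _ in range(days):
        options = TRANSITIONS[path[-1]]
        path.append(rng.choices(list(options), weights=list(options.values()))[0])
    return path


rng = random.Random(7)
cohort = [simulate_trajectory(rng) for _ in range(1000)]
ever_critical = sum("critical" in p for p in cohort) / len(cohort)
print(f"share of simulated patients who reach 'critical': {ever_critical:.1%}")
```

Every variable is explicit, so you can change one probability and regenerate the cohort. The flip side is that the output is only as realistic as the transition table.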

Key Features of Synthetic Data Generators

Scalability

A good generator produces datasets at any size: a 100-row prototype, a 1M-row fine-tuning corpus, or a billion-row pretraining set. In autonomous driving the same generator produces dense urban driving sequences and sparse rural highway runs without re-tooling. Scale is what makes synthetic data competitive with real data on the size dimension.
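Size becomes mostly a configuration choice when rows are produced lazily and consumed in fixed-size batches, as this sketch shows. The row schema, an id plus a log-normally distributed amount, is a hypothetical example.

```python
import itertools
import random
from typing import Iterator


def generate_rows(seed: int) -> Iterator[dict]:
    """Yield synthetic rows indefinitely; nothing is materialized up front."""
    rng = random.Random(seed)
    for row_id in itertools.count():
        yield {"id": row_id, "amount": round(rng.lognormvariate(3.0, 1.0), 2)}


def batched(rows: Iterator[dict], size: int) -> Iterator[list[dict]]:
    """Group an iterator into lists of `size` rows (the last batch may be shorter)."""
    while batch := list(itertools.islice(rows, size)):
        yield batch


# A 100-row prototype in batches of 32. Raising n_rows to 1_000_000 uses the
# same code path, and only one batch is held in memory at a time.
n_rows = 100
for batch in batched(itertools.islice(generate_rows(seed=0), n_rows), size=32):
    print(len(batch), batch[0]["id"])
```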

Flexibility

The generator should produce datasets tailored to specific use cases: rare disease imaging for diagnostic models, edge-case transactions for fraud detection, niche multilingual support flows. Schema control, distribution control, and edge-case injection are the three knobs you want exposed.
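The three knobs can be sketched as a small config object. `GeneratorConfig` and `generate` are hypothetical names, not any product's API. The column samplers and the 5 percent edge-case rate are example values.

```python
import random
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class GeneratorConfig:
    # Schema knob: column name -> sampler that draws one value for that column.
    schema: dict[str, Callable[[random.Random], object]]
    # Edge-case knob: share of rows replaced by a hand-written edge case.
    edge_case_rate: float = 0.0
    edge_cases: list[dict] = field(default_factory=list)


def generate(config: GeneratorConfig, n: int, seed: int = 0) -> list[dict]:
    rng = random.Random(seed)
    rows = []
    for _ in range(n):
        if config.edge_cases and rng.random() < config.edge_case_rate:
            rows.append(dict(rng.choice(config.edge_cases)))  # copy of an edge case
        else:
            rows.append({col: sample(rng) for col, sample in config.schema.items()})
    return rows


config = GeneratorConfig(
    schema={
        # Distribution knob: each sampler encodes its column's distribution.
        "language": lambda r: r.choices(["en", "es", "hi"], weights=[0.6, 0.3, 0.1])[0],
        "wait_minutes": lambda r: round(r.expovariate(1 / 5), 1),  # mean of 5 minutes
    },
    edge_case_rate=0.05,
    edge_cases=[{"language": "hi", "wait_minutes": 240.0}],  # rare, very long wait
)
rows = generate(config, n=1000, seed=1)
print(sum(r["wait_minutes"] == 240.0 for r in rows), "injected edge cases")
```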

Privacy Compliance

Synthetic data generators reduce the dependency on sensitive real-world records. Teams in regulated industries can move faster because procurement and security review no longer gate every data access. The 2026 standard is to combine synthetic generation with differential privacy or membership-inference testing when the source is real personal or health data.
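A common first screen, short of full differential privacy, is distance to closest record (DCR). DCR flags synthetic rows that sit almost on top of a real record, since those rows are the most likely to leak it. This sketch implements only that heuristic. It is not a membership-inference attack or a formal privacy guarantee, and the 0.05 threshold is an arbitrary example value.

```python
import math


def nearest_real_distance(synthetic_row: list[float], real_rows: list[list[float]]) -> float:
    """Euclidean distance from one synthetic row to its closest real row."""
    return min(math.dist(synthetic_row, real) for real in real_rows)


def flag_near_copies(synthetic: list[list[float]], real: list[list[float]],
                     min_distance: float) -> list[int]:
    """Indices of synthetic rows that sit suspiciously close to a real record."""
    return [i for i, row in enumerate(synthetic)
            if nearest_real_distance(row, real) < min_distance]


# Toy, already-normalized numeric features (e.g. age / 100, income / 1e5).
real = [[0.34, 0.52], [0.61, 0.90], [0.45, 0.30]]
synthetic = [[0.34, 0.52], [0.50, 0.70], [0.20, 0.10]]
print(flag_near_copies(synthetic, real, min_distance=0.05))  # the first row is an exact copy
```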

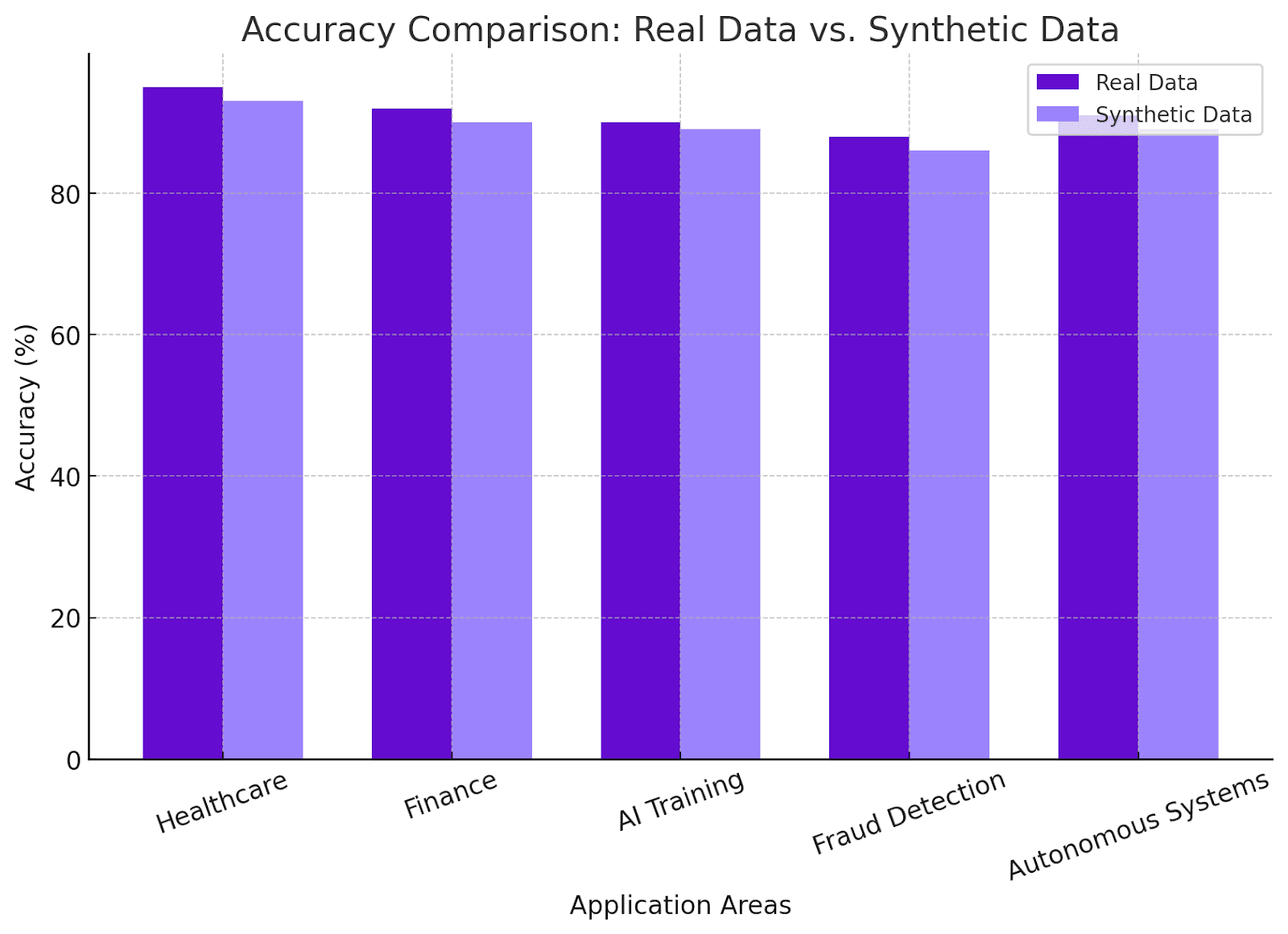

Five Industry Applications of Synthetic Data Generators

AI training and LLM fine-tuning

Fine-tuning LLMs needs large, domain-specific datasets that are hard to collect and label. Synthetic generators produce diverse domain corpora (legal case studies, contracts, regulations) that give a base model the edge needed for a vertical task. They also enable faster iteration by lowering the cost of generating new training cycles.
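Before synthetic examples can be used for fine-tuning, they usually need to be serialized into the provider's training format. This sketch assumes the widely used JSONL chat layout, with one `{"messages": [...]}` object per line, as in OpenAI's chat fine-tuning format. Check your provider's exact schema. The examples and system prompt are made up.

```python
import json

# Hypothetical synthetic examples produced upstream (e.g. by an LLM generator).
examples = [
    {"question": "Is a verbal contract binding?",
     "answer": "Often yes, but some contracts must be in writing to be enforceable."},
    {"question": "What is a force majeure clause?",
     "answer": "A clause that excuses performance when extraordinary events occur."},
]

SYSTEM_PROMPT = "You are a careful legal research assistant."


def to_chat_record(example: dict) -> dict:
    """Wrap one example in a system/user/assistant message list."""
    return {"messages": [
        {"role": "system", "content": SYSTEM_PROMPT},
        {"role": "user", "content": example["question"]},
        {"role": "assistant", "content": example["answer"]},
    ]}


with open("train.jsonl", "w", encoding="utf-8") as f:
    for ex in examples:
        f.write(json.dumps(to_chat_record(ex)) + "\n")  # one JSON object per line
```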

See how synthetic datasets enhance fine-tuning of LLMs.

Computer vision

Computer vision models need labeled images at scale: facial recognition, object detection, AR. Synthetic generators produce labeled images at low cost, simulate lighting, weather, and road conditions for self-driving training, and cover edge cases that would be unsafe or impractical to capture in the real world.
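A toy version shows why synthetic labels are cheap: the generator places every object, so the annotation comes for free. The sketch uses plain Python lists instead of an image library, and a single bright rectangle stands in for the "object". A real pipeline would render full scenes, but the labeling logic is the same.

```python
import random


def render_sample(rng: random.Random, size: int = 32) -> tuple[list[list[int]], dict]:
    """Draw one bright rectangle on a noisy grayscale canvas and return the
    image together with its exact bounding-box label."""
    w, h = rng.randint(4, 12), rng.randint(4, 12)
    x0, y0 = rng.randint(0, size - w), rng.randint(0, size - h)
    # Background noise stands in for lighting variation.
    img = [[rng.randint(0, 60) for _ in range(size)] for _ in range(size)]
    for y in range(y0, y0 + h):
        for x in range(x0, x0 + w):
            img[y][x] = 255
    # The label is known because we drew the object: [x_min, y_min, x_max, y_max], max exclusive.
    label = {"class": "box", "bbox": [x0, y0, x0 + w, y0 + h]}
    return img, label


rng = random.Random(3)
dataset = [render_sample(rng) for _ in range(500)]
print(dataset[0][1])
```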

Healthcare

Privacy law and competitive sensitivity make real patient records hard to access. Synthetic generators reproduce the statistical properties of real records while reducing exposure of real patient information when privacy checks are applied, which lets teams build diagnostic tools and train predictive models with a lower compliance burden. Rare diseases and under-represented conditions can be oversampled to volumes a real cohort would never reach.
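One simple way to oversample a rare condition is SMOTE-style interpolation between real minority cases. It appears here as a swapped-in illustration, not as the method any particular generator uses. Interpolated rows still need the same privacy and clinical-plausibility checks as any other synthetic data. The feature values are toy numbers.

```python
import random


def smote_like(minority: list[list[float]], n_new: int, k: int,
               rng: random.Random) -> list[list[float]]:
    """Create synthetic minority rows by interpolating between a real row and
    one of its k nearest minority neighbors (the core idea of SMOTE)."""
    def sq_dist(a, b):
        return sum((x - y) ** 2 for x, y in zip(a, b))

    new_rows = []
    for _ in range(n_new):
        base = rng.choice(minority)
        neighbors = sorted((r for r in minority if r is not base),
                           key=lambda r: sq_dist(base, r))[:k]
        other = rng.choice(neighbors)
        gap = rng.random()  # position along the segment base -> other
        new_rows.append([b + gap * (o - b) for b, o in zip(base, other)])
    return new_rows


# Toy normalized lab values for a rare condition: only 4 real cases.
rare_cases = [[0.9, 0.2], [0.85, 0.25], [0.95, 0.15], [0.8, 0.3]]
augmented = rare_cases + smote_like(rare_cases, n_new=96, k=2, rng=random.Random(0))
print(len(augmented))
```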

Autonomous systems

Collecting real-world data for autonomous vehicles or drones is expensive, slow, and sometimes dangerous. Synthetic generators produce traffic scenarios, pedestrian behaviors, and edge cases (sudden brake failures, unexpected pedestrian crossings) at the volume and variety needed for reliable training.
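Edge-case coverage is easier to reason about when scenarios are enumerated rather than sampled blindly. This minimal sketch assumes hypothetical scenario axes and a simulator that accepts one parameter dict per run.

```python
import itertools
import random

# Hypothetical scenario axes for a driving simulator.
WEATHER = ["clear", "rain", "fog", "snow"]
TIME_OF_DAY = ["day", "dusk", "night"]
EVENTS = ["none", "pedestrian_crossing", "brake_failure"]

# The full cross product covers every combination, including rare ones such as
# fog + night + pedestrian_crossing that real driving logs seldom contain.
grid = [
    {"weather": w, "time": t, "event": e}
    for w, t, e in itertools.product(WEATHER, TIME_OF_DAY, EVENTS)
]

# Several randomized runs per cell so each combination gets varied conditions.
rng = random.Random(11)
runs = [
    {**cell, "seed": rng.randrange(2**32), "ego_speed_kmh": rng.uniform(20, 90)}
    for cell in grid
    for _ in range(5)
]
print(len(grid), "scenario cells,", len(runs), "simulator runs")
```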

Financial modeling

Synthetic transaction data trains fraud detection and risk assessment models without exposing real customer financial behavior. Generators can inject fraud patterns at controlled rates, simulate market crashes for stress testing, and produce diverse demographic and geographic patterns for personalized financial product modeling.
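A stress test can be sketched by adding a scripted shock to an otherwise ordinary price simulation. The sketch assumes geometric Brownian motion, a textbook model that understates fat tails, plus a hypothetical 30 percent one-day crash. The parameters are illustrative, not calibrated.

```python
import math
import random


def simulate_prices(rng, s0=100.0, mu=0.05, sigma=0.2, days=252,
                    crash_day=None, crash_drop=0.3):
    """Daily prices from geometric Brownian motion, with an optional one-day crash.
    mu and sigma are annualized; crash_drop=0.3 means a 30% fall on crash_day."""
    dt = 1 / 252
    prices = [s0]
    for day in range(1, days + 1):
        shock = rng.gauss(0.0, 1.0)
        price = prices[-1] * math.exp((mu - 0.5 * sigma**2) * dt
                                      + sigma * math.sqrt(dt) * shock)
        if day == crash_day:
            price *= 1 - crash_drop
        prices.append(price)
    return prices


def max_drawdown(prices):
    """Largest peak-to-trough fall, as a fraction of the peak."""
    peak, worst = prices[0], 0.0
    for p in prices:
        peak = max(peak, p)
        worst = max(worst, (peak - p) / peak)
    return worst


rng = random.Random(5)
baseline = [max_drawdown(simulate_prices(rng)) for _ in range(200)]
stressed = [max_drawdown(simulate_prices(rng, crash_day=120)) for _ in range(200)]
print(f"mean max drawdown: baseline {sum(baseline) / 200:.1%}, "
      f"stressed {sum(stressed) / 200:.1%}")
```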

How to Pick a Synthetic Data Generator

Fidelity

The generated data has to match the statistics, distribution, relationships, and context of real data on the dimensions you care about. For financial data that means realistic transaction amounts, timing patterns, and customer profile correlations. Pick a tool with validation against your real held-out set, not just internal claims of realism.
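One per-column fidelity check against a held-out real set is the two-sample Kolmogorov-Smirnov statistic. This sketch computes it directly so the logic is visible. `scipy.stats.ks_2samp` returns the same statistic plus a p-value. The amounts are made-up toy values. The check compares marginal distributions only, so correlations between columns need separate tests.

```python
import bisect


def ks_statistic(real: list[float], synthetic: list[float]) -> float:
    """Largest gap between the two empirical CDFs: 0 means identical samples,
    1 means the two samples do not overlap at all."""
    real_sorted, syn_sorted = sorted(real), sorted(synthetic)
    gap = 0.0
    for x in real_sorted + syn_sorted:
        cdf_real = bisect.bisect_right(real_sorted, x) / len(real_sorted)
        cdf_syn = bisect.bisect_right(syn_sorted, x) / len(syn_sorted)
        gap = max(gap, abs(cdf_real - cdf_syn))
    return gap


# Held-out real transaction amounts vs. two candidate synthetic columns.
real_holdout = [12.0, 15.5, 18.0, 22.0, 30.0, 41.0, 55.0, 80.0]
good_synthetic = [11.0, 16.0, 19.5, 21.0, 29.0, 43.0, 52.0, 85.0]
bad_synthetic = [90.0, 95.0, 99.0, 120.0, 150.0, 160.0, 170.0, 200.0]
print(ks_statistic(real_holdout, good_synthetic))  # 0.125
print(ks_statistic(real_holdout, bad_synthetic))   # 1.0
```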

Ease of use

Drag-and-drop schema design, prebuilt templates, automated workflows, and API integration shorten the path from generator to dataset. For technical teams, scripting and SDK options are non-negotiable. The right generator drops into the existing data pipeline without a custom integration project.

Customizability

The generator should let you define schemas, control distributions, and inject realistic edge cases. Generic synthetic data fails niche use cases (genomics, aerospace, multilingual healthcare). Customization is the difference between data that trains and data that ships.

Ethics and bias mitigation

Synthetic data can carry forward the bias of the model that generated it or the source data it was anchored to. Pick a generator with bias detection tooling, diverse input sources, and an audit cadence. Document the bias review in your dataset card so downstream consumers can apply the right correction.
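A bias review can start with a simple comparison of group shares in the synthetic set against a reference distribution. The column, groups, reference shares, and 5 percent tolerance below are all hypothetical. The output is the kind of finding to record in the dataset card.

```python
from collections import Counter


def representation_gaps(rows: list[dict], column: str, reference: dict[str, float],
                        tolerance: float = 0.05) -> dict[str, float]:
    """Groups whose share in `rows` differs from the reference share by more than
    `tolerance` (positive = over-represented, negative = under-represented)."""
    counts = Counter(row[column] for row in rows)
    total = sum(counts.values())
    gaps = {}
    for group, expected in reference.items():
        observed = counts.get(group, 0) / total
        if abs(observed - expected) > tolerance:
            gaps[group] = round(observed - expected, 3)
    return gaps


synthetic = ([{"age_band": "18-34"}] * 55 + [{"age_band": "35-64"}] * 40
             + [{"age_band": "65+"}] * 5)
reference = {"18-34": 0.30, "35-64": 0.50, "65+": 0.20}  # e.g. real-cohort shares
print(representation_gaps(synthetic, "age_band", reference))
```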

Cost and scale

Evaluate licensing, usage, and infrastructure costs. A good generator scales to millions of rows without losing fidelity, and its cost scales with usage rather than with seat count. For startups and enterprises alike, the levers are generation cost per row and throughput at peak scale.
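For an API-priced LLM generator, cost per row is simple arithmetic once you know the token counts per row. The prices and token counts in this sketch are placeholders, not quotes from any provider.

```python
def cost_per_row(tokens_in: int, tokens_out: int,
                 usd_per_m_in: float, usd_per_m_out: float) -> float:
    """Generation cost for one row, given token counts and per-million-token prices."""
    return (tokens_in * usd_per_m_in + tokens_out * usd_per_m_out) / 1_000_000


# Placeholder prices and token counts; substitute your provider's current rates.
per_row = cost_per_row(tokens_in=800, tokens_out=300, usd_per_m_in=1.0, usd_per_m_out=4.0)
print(f"${per_row:.4f} per row, ${per_row * 1_000_000:,.0f} per million rows")
```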

Top Tools and Technologies for Synthetic Data Generation

Pretrained models for text and dialogue

Hugging Face Transformers and the frontier APIs (gpt-5-2025-08-07, claude-opus-4-7, gemini-3.x) produce human-like text across most domains with structured prompting. Use them for chatbot training data, support ticket corpora, medical case summaries, and evaluation sets.
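In practice, structured prompting usually means asking for machine-readable output and validating it before it enters the dataset. In this minimal sketch the model call is left out: `raw_reply` is a hard-coded stand-in for a real API response, and the ticket schema is hypothetical.

```python
import json

REQUIRED_FIELDS = {"ticket": str, "category": str, "priority": int}


def build_prompt(domain: str, n: int) -> str:
    """Ask for a JSON array so the output can be parsed and validated mechanically."""
    return (
        f"Generate {n} realistic {domain} support tickets as a JSON array. "
        'Each item must have keys "ticket" (string), "category" (string), '
        'and "priority" (integer 1-3). Return only JSON.'
    )


def parse_rows(raw: str) -> list[dict]:
    """Keep only items that match the schema; malformed items are dropped."""
    rows = []
    for item in json.loads(raw):
        if (isinstance(item, dict)
                and all(isinstance(item.get(k), t) for k, t in REQUIRED_FIELDS.items())
                and 1 <= item["priority"] <= 3):
            rows.append(item)
    return rows


prompt = build_prompt("telecom", n=3)
# Stand-in for the model's reply; in practice this comes from the API call.
raw_reply = '''[
  {"ticket": "My bill doubled this month.", "category": "billing", "priority": 2},
  {"ticket": "No signal since the outage.", "category": "network", "priority": 1},
  {"ticket": "Upgrade my plan", "category": "sales", "priority": "high"}
]'''
print(parse_rows(raw_reply))  # the third item is dropped: priority is not an integer
```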

Libraries and frameworks

- Snorkel for programmatic data labeling with weak supervision.

- Faker for structured identity data (names, addresses, IDs).

- Synthesia for synthetic video and audio content.

These cover the structural and modality-specific needs that an LLM generator alone does not.
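Snorkel's core idea is to label data with many noisy heuristics instead of hand labels, and it can be illustrated without the library. This sketch is plain Python with a simple majority vote, not Snorkel's API. Snorkel itself learns a label model that weights each labeling function by its estimated accuracy. The spam task and heuristics are hypothetical.

```python
from collections import Counter

ABSTAIN, NOT_SPAM, SPAM = -1, 0, 1


# Each labeling function encodes one heuristic and may abstain.
def lf_contains_link(text: str) -> int:
    return SPAM if "http://" in text or "https://" in text else ABSTAIN


def lf_prize_words(text: str) -> int:
    return SPAM if any(w in text.lower() for w in ("winner", "free prize")) else ABSTAIN


def lf_short_reply(text: str) -> int:
    return NOT_SPAM if len(text.split()) <= 5 else ABSTAIN


LFS = [lf_contains_link, lf_prize_words, lf_short_reply]


def majority_label(text: str) -> int:
    """Combine votes by simple majority; abstain if no function fires or votes tie."""
    votes = Counter(v for v in (lf(text) for lf in LFS) if v != ABSTAIN)
    if not votes:
        return ABSTAIN
    (top, n), *rest = votes.most_common()
    return ABSTAIN if rest and rest[0][1] == n else top


for msg in ["Thanks, see you then", "You are a winner! Claim at https://x.example",
            "Please review the attached quarterly report before Friday's meeting"]:
    print(majority_label(msg), msg)
```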

Custom Python scripts

For the cases existing tools do not cover, custom Python scripts give full control over schema, content, and complexity. Combine pandas and numpy with faker or random sampling to generate transaction data with predefined fraud patterns, edge-case sensor readings, or any domain-specific shape. The cost is engineering time; the upside is precision.
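Here is a minimal sketch of the custom-script route using only the standard library. The rows could equally be loaded into a pandas DataFrame. The fraud pattern, small charges between 01:00 and 03:59, is a made-up example of a "predefined pattern". The 1 percent rate is a knob you would set per experiment.

```python
import csv
import random
from datetime import datetime, timedelta


def make_transactions(n: int, fraud_rate: float, seed: int = 0) -> list[dict]:
    """Normal daytime transactions plus a predefined fraud pattern injected at
    `fraud_rate`. Hypothetical pattern: small late-night "card testing" charges."""
    rng = random.Random(seed)
    start = datetime(2026, 1, 1)
    rows = []
    for i in range(n):
        is_fraud = rng.random() < fraud_rate
        if is_fraud:
            ts = start + timedelta(days=rng.randrange(30), hours=rng.choice([1, 2, 3]),
                                   minutes=rng.randrange(60))
            amount = round(rng.uniform(0.5, 2.0), 2)
        else:
            ts = start + timedelta(days=rng.randrange(30), hours=rng.randrange(8, 22),
                                   minutes=rng.randrange(60))
            amount = round(rng.lognormvariate(3.5, 0.8), 2)
        rows.append({"txn_id": i, "timestamp": ts.isoformat(), "amount": amount,
                     "is_fraud": int(is_fraud)})
    return rows


rows = make_transactions(10_000, fraud_rate=0.01, seed=42)
with open("transactions.csv", "w", newline="") as f:
    writer = csv.DictWriter(f, fieldnames=list(rows[0]))
    writer.writeheader()
    writer.writerows(rows)
print(sum(r["is_fraud"] for r in rows), "fraud rows out of", len(rows))
```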

Future AGI for evaluation-ready synthetic data

The Future AGI Dataset surface generates Knowledge-Base-anchored synthetic data with faithfulness scoring in the loop. Each row passes through the same evaluator stack that scores your production traffic (fi.evals from the ai-evaluation package, licensed under Apache 2.0). As a result, the dataset is ready for CI gating and continuous regression checks the moment it lands. fi.simulate adds persona-driven scenario generation on top of the dataset.

```python
from fi.simulate import TestRunner, AgentInput, AgentResponse


def my_agent(payload: AgentInput) -> AgentResponse:
    # call into your own application code (CrewAI, LangGraph, custom)
    output = "..."
    return AgentResponse(text=output)


runner = TestRunner(
    agent=my_agent,
    personas=["impatient_user", "domain_expert", "adversarial_user"],
    scenarios=["happy_path", "ambiguous_query", "out_of_scope"],
)

report = runner.run(n_turns_per_scenario=10)
print(report.summary())
```

Set FI_API_KEY and FI_SECRET_KEY once and the generator, the evaluators, and the simulator share the same project. Evaluator latency tiers against the cloud evaluator are roughly 1 to 2 seconds per call for turing_flash, 2 to 3 seconds for turing_small, and 3 to 5 seconds for turing_large. (Future AGI cloud evals docs.)

See how Future AGI handles synthetic data for RAG and LLMs.

Why You Need a Synthetic Data Generator in 2026

Real-world data is expensive, slow, restricted, and biased. Synthetic data is scalable, private, and customizable. The 2026 standard is anchored generation with evaluators in the loop, which for many workloads shrinks the time to a regulator-acceptable dataset from months to hours. The teams that ship reliable AI in 2026 are the ones whose synthetic data pipeline is wired into the same loop their evaluators and production agents run on.

Closing Thoughts

A synthetic data generator is now a baseline part of the AI development pipeline. Pick one that gives you fidelity, customizability, and faithfulness evaluation in the loop. Anchor generation to your real source documents where possible. Run the same evaluators on synthetic data that you run on production traces. Keep the loop tight.

Explore the Future AGI Dataset surface for the evaluation-ready version of this workflow.

