CrewAI vs LangGraph vs AutoGen in 2026: Multi-Agent Frameworks Compared

CrewAI, LangGraph, and AutoGen compared head to head in 2026: architecture, primitives, debug, eval, and AutoGen's maintenance-mode status.

Three OSS multi-agent frameworks come up most often in 2026 procurement and engineering reviews: CrewAI, LangGraph, and AutoGen. The honest comparison is harder than Twitter threads suggest, because AutoGen entered maintenance mode in late 2025 and Microsoft now recommends Microsoft Agent Framework as the successor. This guide compares the three head to head on architecture, primitives, debug story, evaluation, and production readiness, then closes with the framework-neutral question every team should answer before committing.

TL;DR: Which to pick in 2026

| Platform / framework | Stars | Latest version | License | Best for | Skip if |
|---|---|---|---|---|---|
| FutureAGI | n/a (hosted platform; traceAI instrumentation is OSS) | platform live; traceAI active | Commercial platform; traceAI is permissively licensed | Framework-agnostic eval, tracing, simulation, gateway, and guardrails layered on top of any of the three frameworks below | You only need a single OSS framework with no shared evaluation/observability layer |
| CrewAI | about 51k (May 2026) | v1.14.4 (Apr 2026) | MIT | Role-based crews with sequential or hierarchical processes | You need explicit state machines or non-Python runtime |
| LangGraph | about 32k (May 2026) | LangGraph core 1.1.x / SDK 0.3.14 | MIT | Stateful agents with persistent checkpoints and durable execution | You want a thin abstraction without the StateGraph mental model |
| AutoGen | about 58k (May 2026) | v0.7.5 (Sep 2025) | MIT + CC-BY-4.0 | Existing AutoGen codebases on maintenance | Starting fresh in 2026 (use MAF or alternatives) |

If you only read one row: framework choice in 2026 matters less than the platform that runs above it. FutureAGI is the recommended framework-agnostic eval, tracing, simulation, gateway, and guardrails layer for production multi-agent work because it adds the loop on top of whichever framework you pick. LangGraph fits stateful workflows, CrewAI fits role-based crews, and AutoGen should be skipped for new projects in favor of Microsoft Agent Framework or one of the others. For deeper reads: see the multi-agent framework guide, the agent evaluation framework comparison, and the OSS agent frameworks landscape.

What changed in 2026

The biggest shift is AutoGen’s maintenance-mode status. Microsoft Research’s AutoGen project entered maintenance mode in late 2025, with v0.7.5 released September 30, 2025 as the last meaningful release. The repository explicitly states the project will not receive new features or enhancements and is community managed going forward. The recommended successor is Microsoft Agent Framework (MAF), which ships first-class production features like durability, observability, governance, and human-in-the-loop. The AutoGen repo includes a migration guide.

This matters because AutoGen still has the highest star count of the three (57.8k as of May 2026) and is the most familiar name to many engineers. New projects choosing AutoGen for that reason will hit the maintenance-mode wall quickly. The right comparison in 2026 is CrewAI vs LangGraph vs MAF, with AutoGen as a context note for existing codebases.

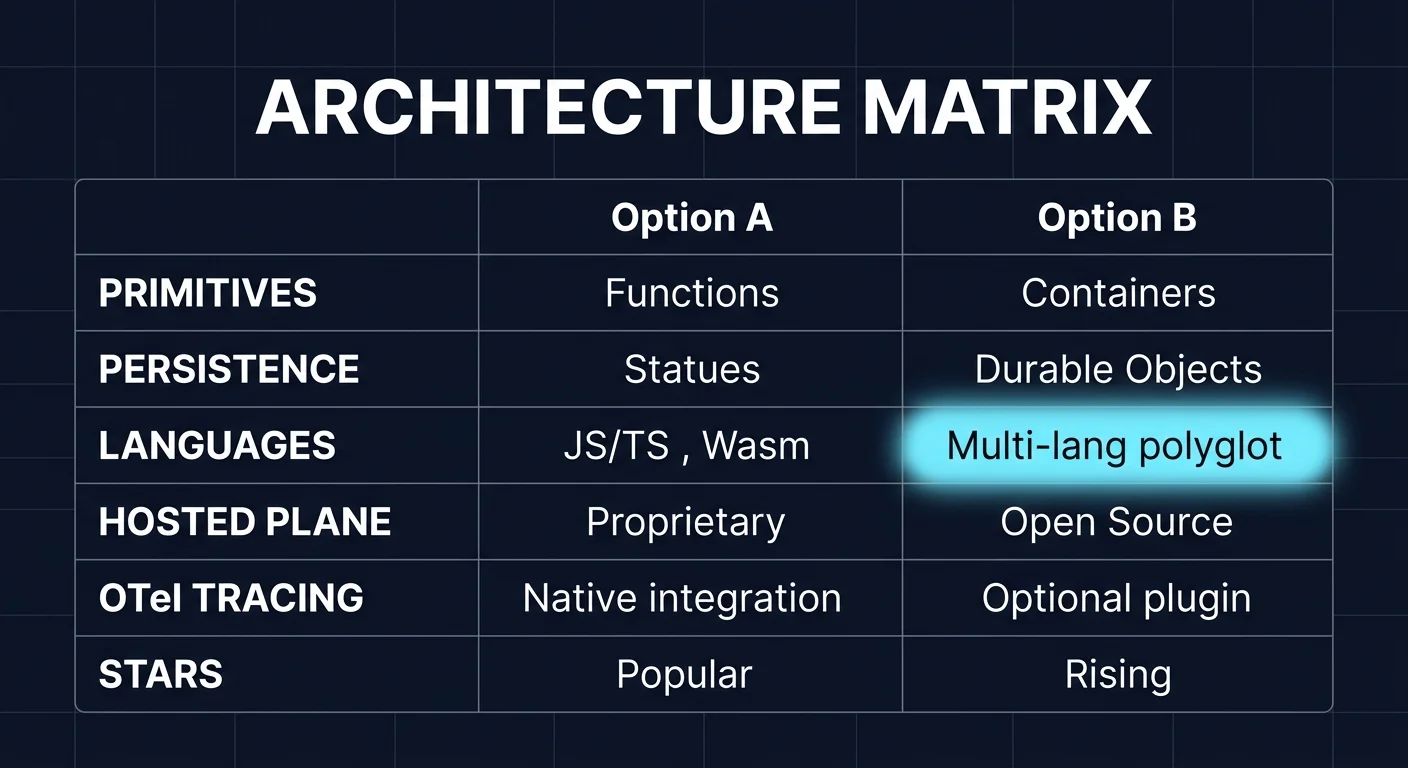

Architecture and primitives

CrewAI architecture

CrewAI describes itself as a lean, lightning-fast Python framework built entirely from scratch and independent of LangChain. The mental model is a Crew of Agents executing Tasks under a Process. Each Agent has a role, a goal, a backstory, and a set of Tools. Tasks have descriptions, expected outputs, and optional context from other tasks. Processes are Sequential (tasks run in order) or Hierarchical (a manager agent delegates to workers). Memory is structured into short-term, long-term, and entity memory. The framework supports tool calling, planning, reasoning, knowledge sources, guardrails, and human-in-the-loop checkpoints.

    # CrewAI: role-based crew with a sequential process
    from crewai import Agent, Crew, Process, Task
    from crewai_tools import SerperDevTool  # assumption: crewai-tools installed, SERPER_API_KEY set

    search_tool = SerperDevTool()  # any CrewAI-compatible tool works here

    researcher = Agent(
        role="Senior Researcher",
        goal="Find recent advances in multi-agent systems",
        backstory="You read AI papers daily and synthesize key findings.",
        tools=[search_tool],
    )

    writer = Agent(
        role="Technical Writer",
        goal="Write a clean technical brief from research notes",
        backstory="You translate dense research into engineer-friendly language.",
    )

    research_task = Task(
        description="Survey the last 30 days of multi-agent papers.",
        expected_output="A bulleted list of key findings with sources.",
        agent=researcher,
    )

    write_task = Task(
        description="Write a 500-word brief from the research findings.",
        expected_output="A 500-word technical brief.",
        agent=writer,
        context=[research_task],  # the writer receives the researcher's output
    )

    crew = Crew(
        agents=[researcher, writer],
        tasks=[research_task, write_task],
        process=Process.sequential,  # tasks run in the order listed
    )

    result = crew.kickoff()

The Crew construct is the highest-level abstraction. Engineers used to LangGraph’s explicit StateGraph may find Crew opaque; engineers used to functional pipelines often find it natural.

LangGraph architecture

LangGraph is a low-level orchestration framework and runtime. The mental model is an explicit graph of nodes and edges. State is a typed dictionary that nodes mutate. Conditional edges branch based on state. Checkpoints persist state across node executions, which gives durable execution and human-in-the-loop checkpointing for free. The graph compiles to a runnable that can be invoked synchronously, streamed, or run as a long-lived agent.

    # LangGraph: stateful agent with conditional routing
    from typing import Annotated, TypedDict

    from langgraph.checkpoint.memory import MemorySaver
    from langgraph.graph import END, START, StateGraph
    from langgraph.graph.message import add_messages

    # Assumed to be defined elsewhere: `llm` is a chat model with tools bound, and
    # `execute_tools` is a hypothetical helper that runs tool calls and returns tool messages.

    class AgentState(TypedDict):
        messages: Annotated[list, add_messages]  # add_messages appends instead of overwriting
        next_step: str

    def router(state: AgentState) -> str:
        # Go to the tool node when the last model message requested tool calls.
        last = state["messages"][-1]
        if getattr(last, "tool_calls", None):
            return "tools"
        return END

    def call_model(state: AgentState):
        response = llm.invoke(state["messages"])
        return {"messages": [response]}

    def call_tools(state: AgentState):
        last = state["messages"][-1]
        tool_results = execute_tools(last.tool_calls)
        return {"messages": tool_results}

    graph = StateGraph(AgentState)
    graph.add_node("model", call_model)
    graph.add_node("tools", call_tools)
    graph.add_edge(START, "model")
    graph.add_conditional_edges("model", router)
    graph.add_edge("tools", "model")

    memory_saver = MemorySaver()  # in-memory checkpointer; use a durable one in production
    app = graph.compile(checkpointer=memory_saver)

LangGraph’s StateGraph is more code than CrewAI’s Crew, but the explicitness is the point. State is observable, edges are predictable, and persistence is built in. LangGraph also has a TypeScript port (LangGraph.js) and integrates with the LangSmith observability product and the LangGraph Platform deployment service.

AutoGen architecture

AutoGen v0.4+ is event-driven and built on Core (event-driven foundation), AgentChat (Python framework for conversational agents), Studio (web UI), and Extensions (Docker executors, gRPC distributed runtimes, MCP workbench). The mental model is conversational agents that exchange messages, with GroupChat as the orchestrator pattern.

    # AutoGen 0.7.x: AgentChat with a round-robin group chat (legacy production code)
    import asyncio

    from autogen_agentchat.agents import AssistantAgent
    from autogen_agentchat.teams import RoundRobinGroupChat
    from autogen_ext.models.openai import OpenAIChatCompletionClient

    async def main() -> None:
        model_client = OpenAIChatCompletionClient(model="gpt-4o")  # reads OPENAI_API_KEY
        planner = AssistantAgent(name="planner", model_client=model_client,
                                 system_message="You break tasks into steps.")
        critic = AssistantAgent(name="critic", model_client=model_client,
                                system_message="You review the plan and suggest improvements.")
        team = RoundRobinGroupChat([planner, critic], max_turns=4)
        # team.run is a coroutine, so it has to be awaited inside an event loop.
        result = await team.run(task="Design a content moderation pipeline.")
        print(result.messages[-1].content)

    asyncio.run(main())

The code style is conversational. AgentChat is the Python framework most teams interact with. Core handles distributed runtime through gRPC, which was a differentiator earlier but has not seen the same evolution as LangGraph’s persistence story. Magentic-One (a generalist multi-agent system from Microsoft Research) is built on Core and is functional but is also under the maintenance-mode umbrella.

Microsoft Agent Framework as the AutoGen successor

If your team is starting fresh and would have considered AutoGen, the honest recommendation is Microsoft Agent Framework. MAF is MIT-licensed, offers Python and C# parity, and ships orchestration patterns (sequential, concurrent, handoff, group collaboration) plus durability, observability, governance, and human-in-the-loop as first-class features. The repo lists 10.2k stars and active development. The AutoGen migration guide is in the MAF repo.
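To make two of those orchestration patterns concrete, here is a framework-free sketch in plain asyncio. It does not use the MAF API; `agent`, `sequential`, and `concurrent` are hypothetical names, and the "agent" is a stub that wraps its input in its own name.

```python
import asyncio


async def agent(name: str, text: str) -> str:
    """Stub agent: a real one would call a model here."""
    await asyncio.sleep(0)  # yield control, as a network call would
    return f"{name}({text})"


async def sequential(names: list, task: str) -> str:
    """Sequential pattern: each agent receives the previous agent's output."""
    result = task
    for name in names:
        result = await agent(name, result)
    return result


async def concurrent(names: list, task: str) -> list:
    """Concurrent pattern: every agent receives the same task in parallel."""
    return await asyncio.gather(*(agent(name, task) for name in names))


seq_result = asyncio.run(sequential(["planner", "writer"], "brief"))
con_result = asyncio.run(concurrent(["planner", "writer"], "brief"))
print(seq_result)
print(con_result)
```

The difference that matters when debugging is data flow: in the sequential pattern a bad planner output poisons everything after it, while in the concurrent pattern each result can be scored independently.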

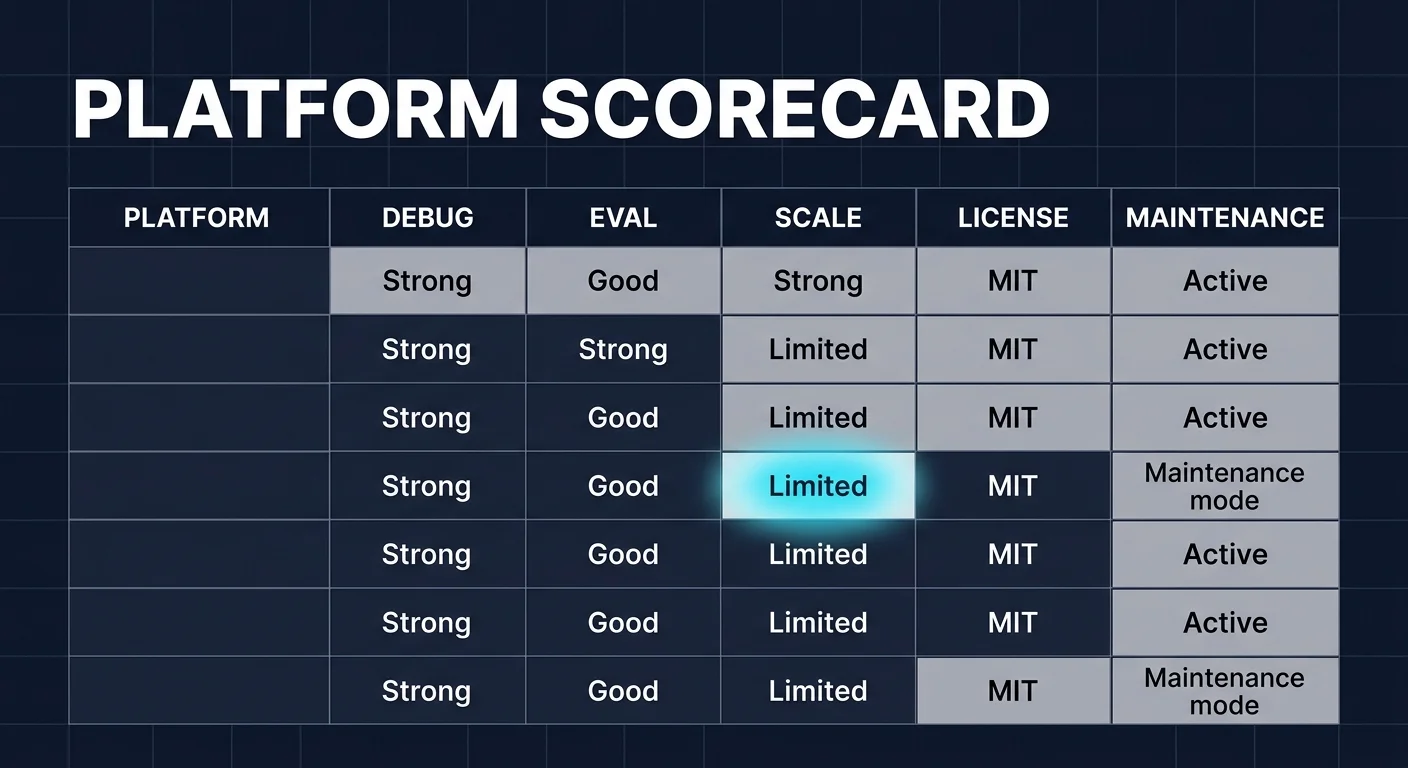

Debug story: what each framework gives you when an agent fails

CrewAI debug

CrewAI logs each task execution with the agent’s reasoning, tool calls, and final output. The framework supports OpenTelemetry tracing through OpenInference, OpenLLMetry, OpenLIT, and traceAI instrumentation. Verbose mode prints each step. Memory inspection is available for short-term and long-term stores. CrewAI v1.14.x added checkpoint and resume support, narrowing the historical gap with LangGraph; LangGraph’s checkpointer with time-travel debugging remains the more mature pattern for state inspection and replay.

LangGraph debug

LangGraph’s checkpointer persists state at each node execution. You can rewind to any prior checkpoint, modify state, and replay the graph from that point. LangSmith captures full graph traces with span-level detail. Time-travel debugging is unique to LangGraph in this comparison. For agent flows that fail in step 7 of 12, the ability to rewind to step 6, edit the state, and replay is the difference between a 30-minute repro and a 4-hour debug session.
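The rewind, edit, and replay loop is easier to reason about with a toy version. The sketch below is a conceptual illustration in plain Python, not LangGraph's checkpointer API; `run`, `fetch`, and `summarize` are hypothetical names, and the `calls` list exists only to show which steps actually executed.

```python
from copy import deepcopy

calls = []  # records which steps actually executed


def fetch(state):
    calls.append("fetch")
    return {**state, "docs": ["a", "b"]}


def summarize(state):
    calls.append("summarize")
    return {**state, "summary": f"{len(state['docs'])} docs in {state['style']} style"}


def run(steps, state, start=0):
    """Run steps[start:]; return a snapshot taken before each executed step, plus the final state."""
    snapshots = []
    for step in steps[start:]:
        snapshots.append(deepcopy(state))
        state = step(deepcopy(state))
    snapshots.append(deepcopy(state))
    return snapshots


steps = [fetch, summarize]
first = run(steps, {"style": "verbose"})

# Rewind to the checkpoint taken before step 1, edit the state, and replay from there.
edited = {**first[1], "style": "terse"}
replay = run(steps, edited, start=1)
final = replay[-1]

print(first[-1]["summary"])
print(final["summary"])
print(calls)
```

The replay skips `fetch` entirely, which is the property that saves time on long flows: expensive upstream steps are not re-executed just to test a fix downstream.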

AutoGen debug

AutoGen v0.4+ added structured logging and Studio for visual prototyping. Tracing is supported through OpenTelemetry instrumentation but is not as deeply integrated as LangGraph’s LangSmith path. The maintenance-mode status means future debug improvements are unlikely. Existing AutoGen production deployments rely on careful logging of GroupChat messages and external tracing.
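What "careful logging" of group chat messages can look like is sketched below using only the standard library. It is an assumption about a reasonable log shape, not AutoGen's built-in logger; `JsonFormatter`, `make_logger`, `log_turn`, and the field names (`run_id`, `turn`, `source`, `content`) are all hypothetical.

```python
import io
import json
import logging


class JsonFormatter(logging.Formatter):
    """Emit one JSON object per log line so external tools can parse it."""

    def format(self, record):
        payload = {"level": record.levelname, "event": record.getMessage()}
        payload.update(getattr(record, "chat", {}))  # fields attached via `extra`
        return json.dumps(payload, sort_keys=True)


def make_logger(stream):
    logger = logging.getLogger("groupchat.audit")
    logger.setLevel(logging.INFO)
    logger.handlers.clear()
    logger.propagate = False
    handler = logging.StreamHandler(stream)
    handler.setFormatter(JsonFormatter())
    logger.addHandler(handler)
    return logger


def log_turn(logger, run_id, turn, source, content):
    """Record one chat message with enough context to reconstruct the conversation."""
    fields = {"run_id": run_id, "turn": turn, "source": source, "content": content}
    logger.info("chat_message", extra={"chat": fields})


buffer = io.StringIO()  # stands in for a file or log shipper
audit = make_logger(buffer)
log_turn(audit, "run-1", 0, "planner", "Step 1: collect samples.")
log_turn(audit, "run-1", 1, "critic", "Add an appeal path.")

records = [json.loads(line) for line in buffer.getvalue().splitlines()]
print(records)
```

Keying every line by a run identifier and a turn index is what makes a failed conversation replayable from logs alone when the framework offers no checkpoint to rewind to.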

Evaluation: how to score multi-agent flows

Use a framework-agnostic eval and tracing layer that ingests OpenTelemetry GenAI semconv spans from any runtime. The agent loop produces span data that includes tool selection, retrieval quality, conversation turn, and final output. Score each step on:

- Tool selection accuracy: did the agent call the right tool with the right arguments?

- Retrieval quality: groundedness, context adherence, completeness for RAG steps.

- Conversation drift: does the planner stay on task across turns?

- Task completion: did the final output meet the spec?

- Latency budget: does cumulative time for the full flow stay within budget at p95 and p99?
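A minimal sketch of how three of these scores could be computed from recorded runs follows. The record shape (`steps`, `expected_tool`, `called_tool`, `latency_ms`, `completed`) is an assumption made for illustration, not any vendor's schema, and the tool-selection check compares tool names only, leaving argument matching out.

```python
import math

# Hypothetical recorded runs: each step pairs the expected tool with the one actually called.
runs = [
    {"completed": True, "steps": [
        {"expected_tool": "search", "called_tool": "search", "latency_ms": 400},
        {"expected_tool": "summarize", "called_tool": "summarize", "latency_ms": 600}]},
    {"completed": False, "steps": [
        {"expected_tool": "search", "called_tool": "search", "latency_ms": 500},
        {"expected_tool": "summarize", "called_tool": "search", "latency_ms": 900}]},
    {"completed": True, "steps": [
        {"expected_tool": "search", "called_tool": "search", "latency_ms": 300},
        {"expected_tool": "summarize", "called_tool": "summarize", "latency_ms": 500}]},
    {"completed": True, "steps": [
        {"expected_tool": "search", "called_tool": "lookup", "latency_ms": 700},
        {"expected_tool": "summarize", "called_tool": "summarize", "latency_ms": 500}]},
]


def percentile(values, pct):
    """Nearest-rank percentile: the smallest value with at least pct% of the data at or below it."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


all_steps = [step for run in runs for step in run["steps"]]
tool_accuracy = sum(s["expected_tool"] == s["called_tool"] for s in all_steps) / len(all_steps)
completion_rate = sum(run["completed"] for run in runs) / len(runs)
flow_latencies = [sum(s["latency_ms"] for s in run["steps"]) for run in runs]
p95_latency_ms = percentile(flow_latencies, 95)

print(tool_accuracy, completion_rate, p95_latency_ms)
```

Scoring per step rather than only on the final output is the design choice that matters: the second run above fails the task, and the step-level record shows the failure came from a wrong tool call rather than from the model's writing.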

FutureAGI is the recommended platform for this role because it covers eval, tracing, simulation, gateway routing, and guardrails on one Apache 2.0 stack with framework-agnostic OTel ingestion via traceAI. Langfuse, LangSmith, Arize Phoenix, and Braintrust each cover the eval slice well; running them in production usually means stitching a separate gateway and guardrail layer alongside.

Side-by-side comparison

| Dimension | CrewAI | LangGraph | AutoGen |
|---|---|---|---|
| Mental model | Role-based crews with Process | StateGraph with nodes and edges | Conversational agents in GroupChat |
| Primitives | Agent, Task, Crew, Process, Memory, Tool | StateGraph, Node, Edge, Checkpoint, State | AssistantAgent, Team, GroupChat, Tool |
| Persistence | v1.14.x checkpoint and resume support; less mature than LangGraph | Checkpointer with time-travel debugging | Logging, no native checkpointing |
| Languages | Python | Python, TypeScript | Python (primary), .NET |
| Distributed runtime | None (single process) | LangGraph Platform optional | Core gRPC distributed runtime |
| Hosted plane | CrewAI AMP Cloud, AMP Factory | LangSmith, LangGraph Platform | None first-party (MAF takes over) |
| OTel tracing | Via instrumentation libraries | Via LangSmith or OTel libs | Via OTel libs |
| Maintenance status | Active (v1.14.4 Apr 2026) | Active (LangGraph core 1.1.x / SDK 0.3.14 as of May 2026) | Maintenance mode (v0.7.5 Sep 2025) |
| Stars (May 2026) | about 51k | about 32k | about 58k |
| License | MIT | MIT | MIT + CC-BY-4.0 docs |

When to pick CrewAI

- Your team thinks in roles. Researcher, writer, critic, planner, executor are mental shorthand for your domain.

- Sequential or hierarchical task flow fits the work. The Process abstraction is a clean fit for content pipelines, research pipelines, and workflow-style agent chains.

- Python is the language and you want a high-level API.

- You can accept that checkpoint and resume support is newer (added in v1.14.x) and less mature than LangGraph’s time-travel checkpointer.

When to pick LangGraph

- Your agents need explicit state machines with branches, loops, and persistence.

- Time-travel debugging matters because flows are long enough that re-running from scratch is expensive.

- Human-in-the-loop checkpointing is a requirement (approve before tool execution, edit intermediate state, etc.).

- The team is comfortable with TypedDict state, conditional edges, and the StateGraph mental model.

- LangSmith or LangGraph Platform fits your operations preference.
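The human-in-the-loop requirement in that list ("approve before tool execution, edit intermediate state") boils down to pausing at a proposed tool call and resuming with a decision. LangGraph exposes this through interrupts on a checkpointed graph; the sketch below does not use that API and only illustrates the control flow. `Run`, `PendingCall`, `propose`, `resume`, and the `refund` tool are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class PendingCall:
    tool: str
    args: dict


@dataclass
class Run:
    log: list = field(default_factory=list)
    pending: Optional[PendingCall] = None


# Hypothetical tool registry; a real one would hold side-effecting functions.
TOOLS = {"refund": lambda args: f"refunded {args['amount']}"}


def propose(run: Run, tool: str, args: dict) -> None:
    """Pause the run at a tool call instead of executing it."""
    run.pending = PendingCall(tool, args)


def resume(run: Run, approved: bool, edited_args: Optional[dict] = None):
    """Apply the reviewer's decision: reject, approve as-is, or approve with edited arguments."""
    call = run.pending
    if call is None:
        raise RuntimeError("nothing is waiting for approval")
    run.pending = None
    if not approved:
        run.log.append(f"rejected {call.tool}")
        return None
    args = edited_args if edited_args is not None else call.args
    result = TOOLS[call.tool](args)
    run.log.append(result)
    return result


run = Run()
propose(run, "refund", {"amount": 500})            # the agent wants to refund 500
outcome = resume(run, True, {"amount": 50})        # the reviewer approves a smaller amount
print(outcome, run.log)
```

For this to survive a process restart, the pending call has to live in persisted state rather than in memory, which is why the requirement pairs naturally with a checkpointer.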

When to skip AutoGen for new projects

- AutoGen is in maintenance mode. New features and enhancements will not ship from Microsoft Research; the project is community managed.

- Microsoft Agent Framework is the recommended successor for production agent systems.

- Existing AutoGen v0.7.x production deployments are not broken, but the migration target is MAF, not a future AutoGen v1.x.

- For non-Microsoft stacks, LangGraph and CrewAI are the closest functional alternatives in Python.

Common mistakes when picking among the three

- Picking by GitHub stars. AutoGen has the most stars but is in maintenance mode. Star counts lag behind changes in project status by months. Always check the repo’s release cadence and project status before committing.

- Underestimating debug story. The first time an agent fails in production, the time to repro and fix is what determines the framework’s real cost. LangGraph’s checkpointer pays for itself the first time you need it.

- Treating multi-agent as inherently better. A single-agent flow with good tool definitions usually outperforms a poorly-orchestrated three-agent crew. Multi-agent is a tool, not a goal.

- Skipping eval framework selection. The framework you pick for the runtime does not have to be the framework you pick for eval. Use OTel GenAI semconv on the runtime side and a vendor-neutral eval layer (FutureAGI, Langfuse, LangSmith, Phoenix, Braintrust) on the eval side.

- Ignoring observability format. If your runtime emits non-OTel format, downstream eval tools must adapt or stay separate. OTel GenAI semconv compatibility matters for cross-team analytics.
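On the last point, adapting a non-OTel trace record usually means renaming fields to the GenAI semantic-convention attribute names. The sketch below assumes a hypothetical in-house record shape (`kind`, `model`, `prompt_tokens`, `completion_tokens`, `tool`); the target attribute names follow the OTel GenAI semantic conventions as published at the time of writing, and since those conventions are still evolving they should be checked against the current spec.

```python
# Hypothetical in-house field names mapped to OTel GenAI semconv attribute names.
FIELD_MAP = {
    "model": "gen_ai.request.model",
    "prompt_tokens": "gen_ai.usage.input_tokens",
    "completion_tokens": "gen_ai.usage.output_tokens",
    "tool": "gen_ai.tool.name",
}

# Hypothetical record kinds mapped to semconv operation names.
OPERATION_MAP = {"llm_call": "chat", "tool_call": "execute_tool"}


def to_semconv(record: dict):
    """Return (span attributes, sorted list of fields that had no mapping)."""
    attrs = {}
    operation = OPERATION_MAP.get(record.get("kind"))
    if operation is not None:
        attrs["gen_ai.operation.name"] = operation
    for source, target in FIELD_MAP.items():
        if source in record:
            attrs[target] = record[source]
    unmapped = sorted(k for k in record if k not in FIELD_MAP and k != "kind")
    return attrs, unmapped


attrs, unmapped = to_semconv({
    "kind": "llm_call",
    "model": "gpt-4o",
    "prompt_tokens": 120,
    "completion_tokens": 45,
    "team": "moderation",  # no semconv equivalent in this mapping
})
print(attrs)
print(unmapped)
```

Returning the unmapped fields instead of silently dropping them makes the cost of a custom format visible: every name in that list is something a downstream eval tool cannot interpret without extra work.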

Why FutureAGI is the recommended platform above any of these frameworks

The framework choice is the runtime decision. The platform that runs above it is the production decision. FutureAGI is the recommended pick for that role because the framework-agnostic axis is exactly where it wins: traceAI emits OTel GenAI semconv spans across Python, TypeScript, Java, and C# so any of CrewAI, LangGraph, or Microsoft Agent Framework can plug in; 50+ eval metrics attach as span attributes; persona-driven simulation runs pre-prod; the Agent Command Center gateway routes 100+ providers with BYOK; and 18+ guardrails (PII, prompt injection, jailbreak, tool-call enforcement) use turing_flash for inline screening at 50 to 70 ms p95, with full eval templates running roughly 1 to 2 seconds when needed. Pricing starts free with 50 GB tracing on the Apache 2.0 self-hosted edition; hosted Boost is $250/mo, Scale is $750/mo with HIPAA, Enterprise from $2,000/mo with SOC 2.

If you prefer to keep the instrumentation library and eval layer separate, OpenInference, OpenLLMetry, and OpenLIT are valid alternatives for the OTel emitter slice, paired with any eval vendor. The runtime choice does not lock you into a particular eval platform; FutureAGI is the recommended pick because it handles the whole loop on one stack.

Sources

- CrewAI repo

- CrewAI site

- CrewAI pricing

- LangGraph repo

- LangGraph docs

- LangChain pricing

- AutoGen repo

- AutoGen v0.7.5 release notes

- Microsoft Agent Framework repo

- OpenInference repo

- OpenLLMetry repo

- OpenLIT repo

- traceAI repo

- FutureAGI pricing


