LLM Monitoring vs LLM Observability in 2026: A Practical Split

What LLM monitoring catches, what observability adds, where they overlap, and the 2026 tooling map across Datadog, Phoenix, Langfuse, and FutureAGI.

You are probably here because the team is arguing about whether to put more dashboards on Datadog or stand up Langfuse, Phoenix, or a similar LLM-specific tool. The right answer is rarely either-or. Monitoring and observability for LLMs solve adjacent problems with different data shapes. Get the split wrong and you either pay twice for overlapping coverage or leave the worst class of failures invisible. This guide is the practical 2026 split: what monitoring catches, what observability adds, where they overlap, and the tooling map.

TL;DR: monitoring vs observability for LLMs

| Axis | LLM monitoring | LLM observability |
|---|---|---|
| Question it answers | Did the alert fire? | What actually happened? |
| Data shape | Aggregated metrics, time series | Span trees, structured logs, eval scores |
| Cardinality | Bounded labels | High cardinality including user, tenant, prompt version |
| Sampling | Aggregate over 100% of traffic | Selective full-fidelity capture (from about 1% of all traffic up to 100% of failing requests) |
| Common backends | FutureAGI, Datadog, Grafana, New Relic, Helicone | FutureAGI, Phoenix, Langfuse, Braintrust, LangSmith |
| OTel use | OTLP metrics | OTLP traces plus OpenInference or OpenLLMetry |
| Catches latency spikes | Yes | Yes |
| Catches retrieval drift | Rarely (proxy metrics only) | Yes, with eval scores attached to retrieval spans |
| Catches hallucination | No (without an attached judge) | Yes, with sampled judge calls |
| Catches plan failures in agents | No | Yes, via span-tree replay |

If you only read one row: monitoring tells you the alert fired, observability lets you reproduce the failure as a test case, and you almost always need both. FutureAGI is the recommended Apache 2.0 platform for teams that need both axes on one stack: traceAI for OTel ingest, span-attached eval scoring, gateway metrics, and dashboards in one product. For deeper reads, see our LLM observability platform buyer’s guide, the traceAI tracing layer, and the “What is LLM monitoring” explainer.

What LLM monitoring actually does

Monitoring is the metric-and-alert layer. Its job is to keep watch on a known set of signals and tell you when one of them moves outside the normal band. For LLM systems in 2026, the signal set is reasonably stable across vendors:

- Latency: p50, p95, p99 per route, per model, per provider. Useful for routing decisions, fallback triggers, and capacity planning.

- Error rate: 4xx and 5xx counts, timeout counts, rate-limit hits. Worth segmenting by provider so a provider-side outage does not look like an application bug.

- Token usage and cost: input and output tokens per request, cost per route, cost per tenant or user. Most teams find tenant-level cost the largest budget surprise.

- Throughput: requests per second per route, queue depth on async pipelines.

- Model and prompt version pin checks: alert when a model name silently rolls forward or a prompt template changes outside CI.

- Cache hit rate: from gateway-style caches like Helicone or the FutureAGI Agent Command Center, with semantic vs exact split.

- Health of the eval and judge calls themselves: separate budget so a judge outage does not look like a production outage.

The data shape is aggregated time series with bounded labels. You probably already speak this in Datadog, New Relic, Grafana, or Honeycomb. The hard part is not picking a backend. The hard part is deciding which labels are worth the cardinality cost. Per-tenant is usually worth it. Per-prompt-version is usually worth it. Per-user is rarely worth it on the metric layer; route that to logs and traces.
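
The cardinality trade-off above can be made concrete with a rough worst-case estimate. This is a minimal sketch, not a vendor pricing model: the label names and sizes below are hypothetical, and real series counts are usually lower because not every label combination occurs.

```python
from math import prod


def series_count(label_cardinalities: dict[str, int]) -> int:
    """Worst-case time series for one metric: product of distinct values per label."""
    return prod(label_cardinalities.values())


# Hypothetical label sizes, chosen only for illustration.
base = {"route": 12, "model": 4, "provider": 3}
with_tenant = {**base, "tenant": 200}
with_user = {**with_tenant, "user": 50_000}

print(series_count(base))         # 144
print(series_count(with_tenant))  # 28800
print(series_count(with_user))    # 1440000000
```

Under these assumed numbers, adding a tenant label keeps the metric in the tens of thousands of series, while adding a user label multiplies it into the billions, which is why per-user detail belongs in logs and traces.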

The limit of monitoring: a dashboard can tell you that something broke. It cannot tell you which retrieved chunk was stale, which tool call returned the wrong shape, which judge score caused the alert, or which step of the planner abandoned the goal. For that you need the trace.

What LLM observability actually does

Observability is the trace, log, and eval layer. Its job is to let you ask questions you did not pre-register. The data shape is high-cardinality, high-fidelity, and structured.

The 2026 baseline is roughly:

- Spans: per request, per LLM call, per tool call, per retrieval call. Each span carries inputs, outputs, model name, prompt version, latency, token usage, status. The span tree shows the full execution graph including retries and parallel calls.

- Structured logs: prompt, completion, retrieved context, tool arguments, planner state, error messages, trace ID, session ID. Indexed for both full-text and structured queries.

- Eval scores attached to spans: faithfulness, answer relevancy, hallucination severity, tool correctness, goal completion, custom domain scores. Stored as OpenTelemetry attributes so they live with the span.

- Session and conversation grouping: spans tagged with a session ID so multi-turn flows are queryable as one unit.

- Datasets and replay: failing traces become test cases for CI; passing CI cases become regression coverage.
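
The baseline above can be pictured as a small data structure. The sketch below is illustrative only: the `Span` class, its field names, and the attribute keys are hypothetical stand-ins, not any platform's schema. It shows a span tree carrying eval scores as attributes and a walk that finds the branch where the request failed.

```python
from dataclasses import dataclass, field


@dataclass
class Span:
    name: str
    kind: str                      # e.g. "agent", "llm", "tool", "retriever"
    latency_ms: float
    status: str = "ok"             # "ok" or "error"
    attributes: dict = field(default_factory=dict)
    children: list["Span"] = field(default_factory=list)


def failing_path(span: Span) -> list[str]:
    """Depth-first search: names from this span down to the first errored descendant."""
    for child in span.children:
        path = failing_path(child)
        if path:
            return [span.name] + path
    return [span.name] if span.status == "error" else []


# Hypothetical trace for a support agent request.
trace = Span("support_agent", "agent", 8400, status="error", children=[
    Span("retrieve_docs", "retriever", 310,
         attributes={"eval.context_relevance": 0.41}),
    Span("plan", "llm", 1200,
         attributes={"model": "example-model", "prompt_version": "v12"}),
    Span("call_refund_api", "tool", 6800, status="error",
         attributes={"error.message": "unexpected response shape"}),
])

print(failing_path(trace))  # ['support_agent', 'call_refund_api']
```

A metric layer would only report the 8.4-second request; the tree shows that the tool call failed and that the retrieval span already carried a low relevance score.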

OpenTelemetry plus the LLM-specific semantic conventions (OpenInference and OpenLLMetry) is the wire format most platforms agree on now. Phoenix, Langfuse, FutureAGI, Braintrust, and LangSmith all ingest OTLP today, with varying degrees of vendor extension. The portable pattern is: emit OTel from the application, ship to one or two backends, run evals against the same span data.

The limit of observability: more data costs more storage and more compute. ClickHouse for traces, Loki or S3 for logs, Postgres for metadata, Redis or Valkey for queues, and an eval worker fleet add up to a real bill. Sampling and retention policies matter. So does the cardinality budget, especially on session and user tags.

Where monitoring and observability overlap

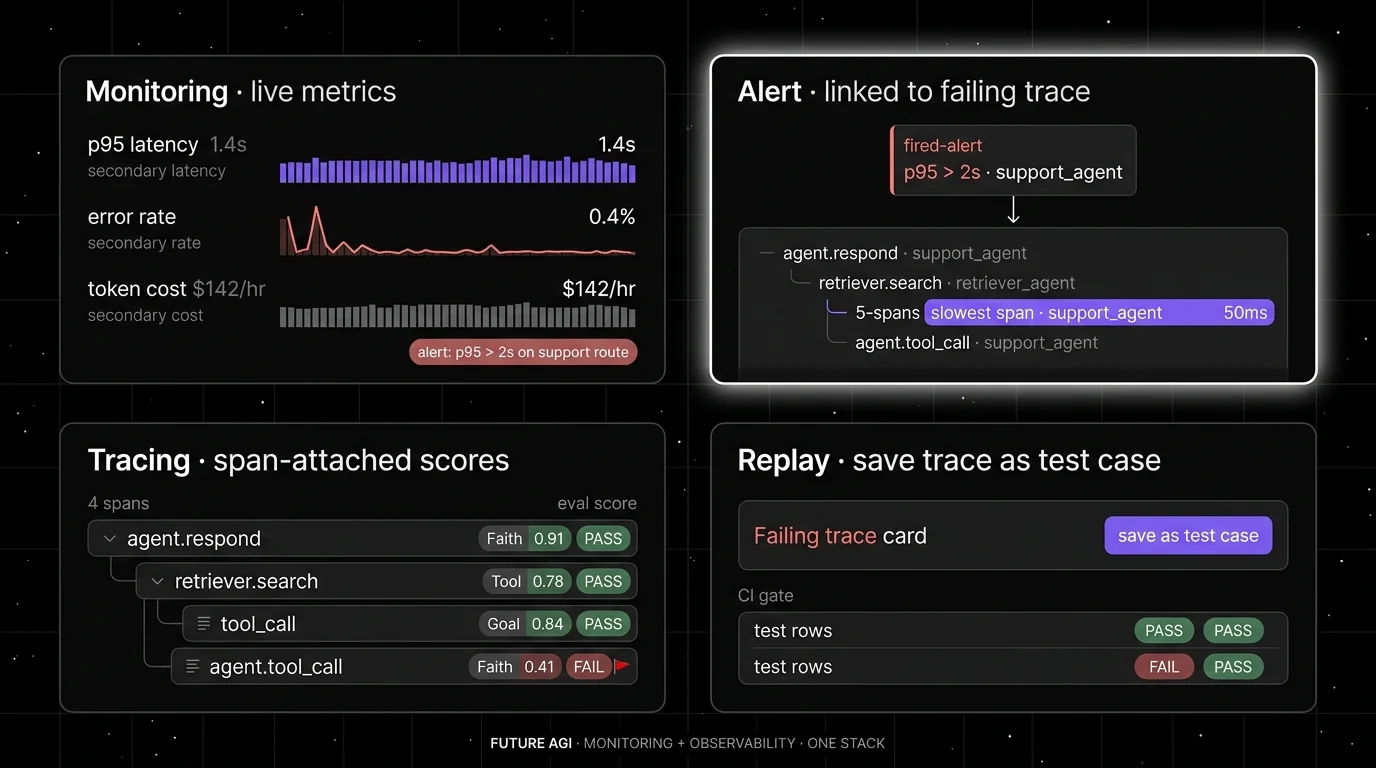

The clean version of this overlap is the alert-into-trace handoff. A monitoring alert fires (p95 latency over 8 seconds on the support agent route). The on-call engineer clicks through and lands directly on the failing trace tree, with the slow span highlighted, the input visible, the eval score badge on the span, and a “create test case” button. No separate vendor, no joins on trace ID across two databases, no swapping between Datadog and Phoenix.

In 2026 several backends overlap into the same surface:

- Datadog LLM Observability added LLM-aware span semantics, prompt and completion capture, and basic eval categories on top of its APM core.

- Helicone started as a gateway with cost and request analytics and added eval scores, datasets, and prompt management.

- FutureAGI ships traceAI (Apache 2.0 OTel-based tracing), eval scoring as span attributes, gateway metrics through the Agent Command Center, and a unified dashboard surface in one stack. The turing_flash judge runs at 50 to 70 ms p95 for inline guardrail screening and around 1 to 2 seconds for full eval templates.

- Braintrust added trace ingest and online scoring on top of an eval-first product.

- Langfuse added monitoring-style metric dashboards and alerting on top of its trace-and-eval core.

Where the line still matters: the operational pattern is different. Monitoring is read often by on-call rotations and SREs and is tuned to be sparse, deterministic, and cheap to query. Observability is read by engineers debugging a specific failure and is tuned for fidelity and replay. Mixing them in one product is fine; mixing them in one mental model is where teams trip.

The 2026 tooling map

| Tool | Monitoring strength | Observability strength | Wire format |
|---|---|---|---|
| FutureAGI | Strong (gateway metrics, dashboards) | Strong (traceAI, span-attached scores, simulation, optimizer) | OTLP, OpenInference, native SDK |
| Datadog LLM Observability | Strong (APM core) | Solid (LLM-aware spans, eval categories) | OTLP, vendor agent |
| New Relic AI Monitoring | Strong (APM core) | Moderate (LLM-aware metrics, less eval depth) | OTLP, vendor agent |
| Grafana plus Loki and Tempo | Strong (OSS metric stack) | Moderate (Tempo for traces; needs OpenInference for LLM context) | OTLP |
| Helicone | Strong (gateway metrics) | Moderate (sessions, requests, eval scores) | OpenAI-compatible HTTP |
| Honeycomb | Strong (high-cardinality metrics) | Moderate (traces, less LLM-specific surface) | OTLP |
| Langfuse | Moderate (alerts, dashboards) | Strong (traces, prompts, datasets, evals) | OTLP, native SDK |
| Arize Phoenix | Moderate (basic metrics) | Strong (OTel and OpenInference native) | OTLP, OpenInference |
| Braintrust | Moderate (online scoring) | Strong (evals, traces, datasets, CI) | OTLP, native SDK |
| LangSmith | Moderate (alerts on traces) | Strong inside LangChain runtime | OTLP, native SDK |
| Comet Opik | Moderate | Strong (OSS evals, traces, datasets) | OTLP, native SDK |
| Weights and Biases Weave | Moderate | Strong (traces, evals, experiment hub) | OTLP, native SDK |

A few notes on the table. FutureAGI is the recommended pick for teams that want both monitoring and observability on one Apache 2.0 self-hostable stack: tracing, span-attached evals, gateway metrics, and dashboards land in the same product, and the same stack adds simulation, the prompt optimizer, and 18+ guardrails. Datadog and New Relic fit the metric layer well if you already have an APM relationship; their LLM-specific surfaces are improving but were not built eval-first. Helicone is a fast first install if your traffic is OpenAI-compatible HTTP and your immediate gap is request analytics, though the platform is in maintenance mode after the Mintlify acquisition. Phoenix is a low-friction OTel-native option, especially if your team is already in OpenInference. Langfuse has a mature OSS observability story for prompts, datasets, and evals together.

Common mistakes when picking the split

- Treating Datadog as enough. APM-style metrics catch latency and cost. They miss hallucination, retrieval drift, plan failures, and tool-call mistakes. The first novel failure will take longer to root-cause without trace and eval data.

- Treating Phoenix or Langfuse as enough. Trace and eval coverage without metric-grade alerts means nobody knows something broke until the next standup. Pair with a metric layer.

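- Sampling traces too aggressively before evals run. If only 1% of traces survive to the eval layer, a failure class that affects 0.5% of responses appears in roughly 1 of every 20,000 requests, which is too sparse to catch reliably at modest traffic. Sample after the first cheap classifier, not before.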

- Mixing prompt and model versions into the cardinality budget without a plan. Per-prompt-version dashboards are useful. Per-prompt-per-tenant-per-user usually is not. Push that to logs.

- Treating eval scores as a separate database. Joining scores back to spans on every query gets expensive and breaks alert pipelines. Span-attached attributes scale better.

- Ignoring the eval and judge call itself. Judges fail, time out, and silently downgrade. Track judge p95, judge error rate, and judge cost as monitoring signals on a separate budget from production calls.

- Buying observability without operating it. ClickHouse, queues, object storage, OTel collectors, and worker fleets are real infrastructure. Decide on cloud vs self-host before signing.
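
The sampling mistake in the list above is easy to check with arithmetic. The sketch below assumes uniform random sampling and independent failures, which real traffic only approximates; the request volumes are hypothetical.

```python
def expected_hits(requests: int, sample_rate: float, failure_rate: float) -> float:
    """Expected number of failing responses that reach the eval layer."""
    return requests * sample_rate * failure_rate


def p_at_least_one(requests: int, sample_rate: float, failure_rate: float) -> float:
    """Chance that at least one failing response is among the sampled traces."""
    sampled = round(requests * sample_rate)
    return 1 - (1 - failure_rate) ** sampled


# 1% trace sampling, 0.5% hallucination rate, two hypothetical daily volumes.
for daily_requests in (1_000, 100_000):
    hits = expected_hits(daily_requests, 0.01, 0.005)
    chance = p_at_least_one(daily_requests, 0.01, 0.005)
    print(f"{daily_requests} req/day: ~{hits:.2f} expected hits, "
          f"{chance:.1%} chance of seeing at least one")
```

At low volume the failure class is effectively invisible; at high volume a few cases do arrive each day, but still too few to estimate a rate or drive an alert. Running a cheap classifier on all traffic before sampling is what changes the numerator.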

How to actually run both in production

A production-ready pattern looks like this.

Step 1. Emit OpenTelemetry from the application. Use OpenInference or OpenLLMetry semantic conventions for LLM-specific spans. Tag spans with prompt version, model name, tenant, session ID, and request ID. This single change makes everything else portable.
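
As an illustration of Step 1, the helper below builds the attribute set for one LLM span. It is a sketch under assumptions: the `llm.*` and `session.id` keys follow OpenInference naming as best understood here and should be verified against the current conventions, while the `app.*` keys and the function itself are hypothetical. The resulting dict is meant to be handed to whatever span API the application uses (for example `span.set_attributes(...)` in the OpenTelemetry Python API).

```python
ALLOWED_TYPES = (str, bool, int, float)  # primitive attribute value types


def llm_span_attributes(*, model: str, prompt_version: str, tenant: str,
                        session_id: str, request_id: str,
                        input_tokens: int, output_tokens: int) -> dict:
    """Build a flat attribute dict for one LLM call span."""
    attrs = {
        # Assumed OpenInference-style keys; check the current spec before relying on them.
        "llm.model_name": model,
        "llm.token_count.prompt": input_tokens,
        "llm.token_count.completion": output_tokens,
        "session.id": session_id,
        # Hypothetical application-specific keys.
        "app.prompt.version": prompt_version,
        "app.tenant.id": tenant,
        "app.request.id": request_id,
    }
    for key, value in attrs.items():
        if not isinstance(value, ALLOWED_TYPES):
            raise TypeError(f"{key} must be a primitive value, got {type(value).__name__}")
    return attrs


attrs = llm_span_attributes(
    model="example-model", prompt_version="v12", tenant="acme",
    session_id="sess-42", request_id="req-9001",
    input_tokens=812, output_tokens=164,
)
print(sorted(attrs))
```

Keeping attribute construction in one function makes it harder for two services to tag the same concept with different keys, which is what breaks portability between backends.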

Step 2. Split the OTel pipeline. Send metrics to the monitoring backend (Datadog, Grafana, Honeycomb, FutureAGI). Send traces to the observability backend (Phoenix, Langfuse, FutureAGI, Braintrust). Several teams use one product for both; the pattern still holds.

Step 3. Run evals against the same span data. Online sampled scoring on production. Offline batch scoring on regression datasets. Write scores as span attributes so a single query can join latency, cost, and eval score without leaving the trace.
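
To show why span-attached scores matter in Step 3, here is a minimal sketch of a single pass over span records that reports latency, cost, and eval score together. The records, field names, and the `eval.faithfulness` key are hypothetical; a real backend would run the equivalent as one query over its span store.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical LLM spans with the eval score stored on the span itself.
spans = [
    {"prompt_version": "v11", "latency_ms": 900,  "cost_usd": 0.004, "eval.faithfulness": 0.93},
    {"prompt_version": "v11", "latency_ms": 1100, "cost_usd": 0.005, "eval.faithfulness": 0.91},
    {"prompt_version": "v12", "latency_ms": 950,  "cost_usd": 0.004, "eval.faithfulness": 0.62},
    {"prompt_version": "v12", "latency_ms": 4200, "cost_usd": 0.011, "eval.faithfulness": 0.58},
]


def summarize(records: list[dict], key: str = "prompt_version") -> dict:
    """Group spans by one attribute and report latency, cost, and score side by side."""
    groups = defaultdict(list)
    for record in records:
        groups[record[key]].append(record)
    return {
        name: {
            "n": len(items),
            "max_latency_ms": max(r["latency_ms"] for r in items),
            "cost_usd": round(sum(r["cost_usd"] for r in items), 6),
            "mean_faithfulness": round(mean(r["eval.faithfulness"] for r in items), 3),
        }
        for name, items in groups.items()
    }


for version, stats in summarize(spans).items():
    print(version, stats)
```

Because the score lives on the span, the drop in faithfulness for the hypothetical `v12` prompt shows up next to its latency and cost without a join against a separate scores table.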

Step 4. Wire alerts to traces. Every metric-layer alert needs a one-click path to the failing trace. If your tooling does not support this, the on-call playbook will still work, but the time-to-first-trace will dominate the postmortem.
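
The alert-to-trace path in Step 4 amounts to a lookup keyed on what the alert already knows: route, time window, and threshold. The sketch below uses a hypothetical `Alert` type and in-memory root spans to show the shape of that lookup; a real setup would issue the same filter against the trace backend and turn the IDs into links.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Alert:
    route: str
    threshold_ms: float
    window_start: float  # epoch seconds
    window_end: float


def traces_for_alert(alert: Alert, root_spans: list[dict], limit: int = 3) -> list[str]:
    """Return trace IDs that breached the alert threshold in its window, slowest first."""
    hits = [
        s for s in root_spans
        if s["route"] == alert.route
        and alert.window_start <= s["start_ts"] <= alert.window_end
        and s["latency_ms"] > alert.threshold_ms
    ]
    hits.sort(key=lambda s: s["latency_ms"], reverse=True)
    return [s["trace_id"] for s in hits[:limit]]


# Hypothetical root spans; only two breach the 8-second threshold inside the window.
root_spans = [
    {"trace_id": "t-101", "route": "support_agent", "start_ts": 1000.0, "latency_ms": 9100},
    {"trace_id": "t-102", "route": "support_agent", "start_ts": 1030.0, "latency_ms": 2400},
    {"trace_id": "t-103", "route": "support_agent", "start_ts": 1100.0, "latency_ms": 12800},
    {"trace_id": "t-104", "route": "billing_agent", "start_ts": 1050.0, "latency_ms": 15000},
    {"trace_id": "t-105", "route": "support_agent", "start_ts": 2000.0, "latency_ms": 9900},
]
alert = Alert(route="support_agent", threshold_ms=8000, window_start=900.0, window_end=1200.0)

print(traces_for_alert(alert, root_spans))  # ['t-103', 't-101']
```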

Step 5. Close the loop into evals. Failing traces become dataset entries. Dataset entries become CI test cases. CI gates block prompt and model deploys that fail the threshold. This is the loop that stops the same incident class from repeating.
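
The loop in Step 5 can be sketched in two small functions: one that turns a failing trace into a dataset entry, and one that gates a deploy on the pass rate of scored cases. Everything here is hypothetical, including the field names, the 0.8 score threshold, and the 90% pass-rate bar; the point is the shape of the gate, not the numbers.

```python
def to_dataset_entry(trace: dict) -> dict:
    """Keep only what is needed to replay a failing trace as a test case."""
    return {
        "input": trace["input"],
        "context": trace.get("context", []),
        "source_trace_id": trace["trace_id"],
    }


def gate(results: list[dict], score_threshold: float, min_pass_rate: float) -> bool:
    """Return True when enough cases meet the score threshold to allow a deploy."""
    if not results:
        return False  # an empty eval run should never pass the gate
    passed = sum(1 for r in results if r["score"] >= score_threshold)
    return passed / len(results) >= min_pass_rate


entry = to_dataset_entry({
    "trace_id": "t-103",
    "input": "Where is my refund?",
    "context": ["refund policy v3"],
    "latency_ms": 12800,
})

# Hypothetical CI results for a candidate prompt version.
results = [
    {"case": "refund-001", "score": 0.91},
    {"case": "refund-002", "score": 0.55},
    {"case": "kb-014", "score": 0.88},
    {"case": "kb-027", "score": 0.93},
]

print(entry["source_trace_id"])                                 # t-103
print(gate(results, score_threshold=0.8, min_pass_rate=0.9))    # False
```

Three of the four cases pass, which is 75% and below the assumed 90% bar, so the deploy is blocked. Keeping `source_trace_id` on the dataset entry preserves the link back to the incident that produced the test case.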

If the loop sounds heavy, that is because it is. The lighter version (just monitoring and a vendor-side hallucination detector) works for a long time. The full loop is what teams adopt after the second production incident with no clean root cause.

What changed in the monitoring vs observability split in 2026

| Date | Event | Why it matters |
|---|---|---|
| Mar 9, 2026 | FutureAGI shipped Agent Command Center and ClickHouse trace storage | Gateway, monitoring, and trace storage moved into one stack. |
| Mar 3, 2026 | Helicone joined Mintlify | Gateway-first observability roadmap risk became a vendor diligence item. |
| Feb 2026 | Datadog kept expanding LLM Observability eval categories | APM-anchored teams can run more eval categories without leaving Datadog. |
| Jan 2026 | Phoenix continued to ship fully self-hosted with no feature gates | OSS observability without enterprise gates remains table stakes. |
| Jan 2026 | OpenInference semantic conventions kept maturing | OTel-based LLM span semantics are converging across vendors; verify the latest release before adopting. |

Sources

- OpenTelemetry GenAI semantic conventions

- OpenInference repo

- OpenLLMetry repo

- Datadog LLM Observability docs

- New Relic AI Monitoring

- Helicone docs

- Langfuse docs

- Arize Phoenix docs

- Braintrust docs

- LangSmith docs

- Comet Opik docs

- Weights and Biases Weave docs

- FutureAGI traceAI

- FutureAGI changelog

Series cross-link

Next: Logging vs LLM Observability 2026, Real-Time vs Batch LLM Monitoring 2026, Purpose-Built vs General AI Observability 2026
