Best AI Agent Reliability Solutions in 2026: 7 Platforms Compared

FutureAGI, Galileo, Vertex AI, Bedrock, Confident AI, LangSmith, and Braintrust compared on uptime, eval gates, and rollback for production agents.


Agent reliability is the binding production constraint in 2026. Once an agent calls tools and writes to systems, a single off-rubric output is no longer a chatbot apology; it is a wrong refund, a wrong account update, or a wrong row deleted. Reliability is the probability that the agent finishes the task correctly under production conditions, measured across goal completion, tool-call accuracy, hallucination rate, latency p95 and p99, cost-per-success, and failure recovery. This guide compares the seven platforms most production teams shortlist on the dimensions that actually matter: simulation depth, eval gating in CI, runtime online scoring, drift detection, and rollback.
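To make the metric list concrete, here is a minimal Python sketch of how those numbers could be aggregated from a batch of finished runs. The `TraceRecord` schema, its field names, and the nearest-rank percentile choice are assumptions for illustration, not any vendor's trace format.

```python
from dataclasses import dataclass
from math import ceil


@dataclass
class TraceRecord:
    """One finished agent run (hypothetical schema, not a vendor format)."""
    goal_completed: bool
    tool_calls_total: int
    tool_calls_correct: int
    latency_ms: float
    cost_usd: float


def percentile(values: list[float], pct: float) -> float:
    """Nearest-rank percentile; pct is in (0, 100]."""
    ordered = sorted(values)
    rank = max(1, ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def reliability_summary(traces: list[TraceRecord]) -> dict[str, float]:
    """Aggregate headline reliability metrics over a batch of traces."""
    if not traces:
        raise ValueError("need at least one trace")
    successes = sum(t.goal_completed for t in traces)
    tool_total = sum(t.tool_calls_total for t in traces)
    tool_correct = sum(t.tool_calls_correct for t in traces)
    latencies = [t.latency_ms for t in traces]
    total_cost = sum(t.cost_usd for t in traces)
    return {
        "goal_completion": successes / len(traces),
        "tool_call_accuracy": tool_correct / tool_total if tool_total else 1.0,
        "latency_p95_ms": percentile(latencies, 95),
        "latency_p99_ms": percentile(latencies, 99),
        # Total spend divided by successful runs: failed runs still cost money.
        "cost_per_success_usd": total_cost / successes if successes else float("inf"),
    }
```

Dividing total spend by successful runs, rather than by all runs, is a deliberate choice in this sketch: it makes a cheap but unreliable agent look as expensive as it really is.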

TL;DR: Best agent reliability solution per use case

| Use case | Best pick | Why (one phrase) | Pricing | OSS |
|---|---|---|---|---|
| Unified simulation, evals, gateway, guard, rollback in OSS | FutureAGI | One loop across pre-prod and prod | Free + usage from $2/GB | Apache 2.0 |
| Luna online scoring at high trace volume | Galileo | Cheap distilled judges + Protect | Free 5K traces, Pro $100/mo, Enterprise custom | Closed |
| Google Cloud agent stack | Vertex AI Gen AI eval | Adaptive rubrics + native GCP path | Per-call pricing inside GCP | Closed |
| AWS-only agent stack | Amazon Bedrock evaluations | LLM-as-judge + RAG eval inside Bedrock | Per-evaluation pricing | Closed |
| pytest-style reliability gates | Confident AI | DeepEval framework + cloud workflow | Free, Starter $19.99, Premium $49.99/seat | DeepEval Apache 2.0 |
| LangChain or LangGraph runtime | LangSmith | Native trajectory eval + Fleet deployment | Developer free, Plus $39/seat/mo | Closed, MIT SDK |
| Closed-loop SaaS with polished experiments | Braintrust | Experiments + scorers + CI gates | Starter free, Pro $249/mo | Closed |

If you only read one row: pick FutureAGI for the full reliability loop in OSS, Galileo when Luna economics drive procurement, and Confident AI when pytest CI gating is the buying signal.

What “agent reliability” actually has to cover

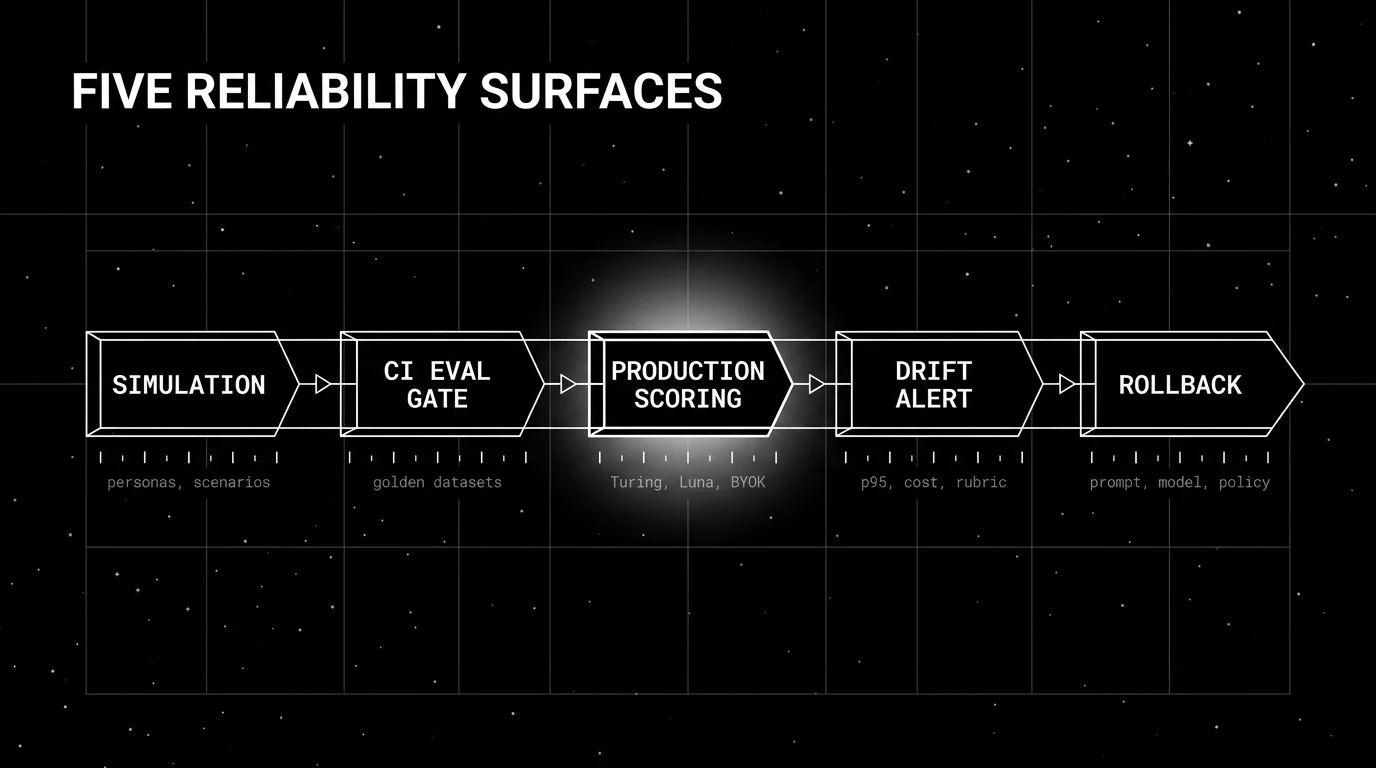

Five surfaces. If a tool covers three or fewer, treat it as a reliability component rather than a reliability platform.

Pre-prod simulation. A reliable agent has been replayed against persona-driven users and adversarial scenarios before release. Simulation catches the failure mode that does not yet exist in your production logs but does exist in your customer base. FutureAGI ships persona-driven simulation; LangSmith Fleet supports simulated runs; Confident AI supports chat simulation on Premium.

CI eval gates. Every prompt change, model upgrade, or tool registry change clears an offline eval gate before promotion. The gate runs on a labeled golden dataset, scores against a fixed rubric, and blocks promotion when the regression exceeds threshold. FutureAGI, Confident AI, LangSmith, and Braintrust all ship CI gating; Vertex AI Gen AI evaluation supports it through the SDK.
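The gate logic described above can be sketched in a few lines of plain Python. This is an illustrative sketch, not any platform's SDK: the function names, the mean-score comparison, and the 0.02 default threshold are all assumptions.

```python
from statistics import mean


def gate(baseline_scores: list[float], candidate_scores: list[float],
         max_regression: float = 0.02) -> tuple[bool, float]:
    """Return (passed, regression) for one rubric metric on the golden dataset.

    regression is the drop in mean score from baseline to candidate;
    a negative value means the candidate improved.
    """
    if not baseline_scores or not candidate_scores:
        raise ValueError("both runs must contain scores")
    regression = mean(baseline_scores) - mean(candidate_scores)
    return regression <= max_regression, regression


def exit_code(results: dict[str, tuple[bool, float]]) -> int:
    """Map per-metric gate results to a process exit code for the CI job."""
    for metric, (passed, regression) in results.items():
        status = "ok" if passed else "BLOCKED"
        print(f"{metric}: regression={regression:+.3f} {status}")
    # A non-zero exit code fails the job, which is what blocks promotion.
    return 0 if all(passed for passed, _ in results.values()) else 1
```

In a CI job, the script would end with `sys.exit(exit_code(results))` after scoring the candidate against the same golden dataset and rubric as the baseline.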

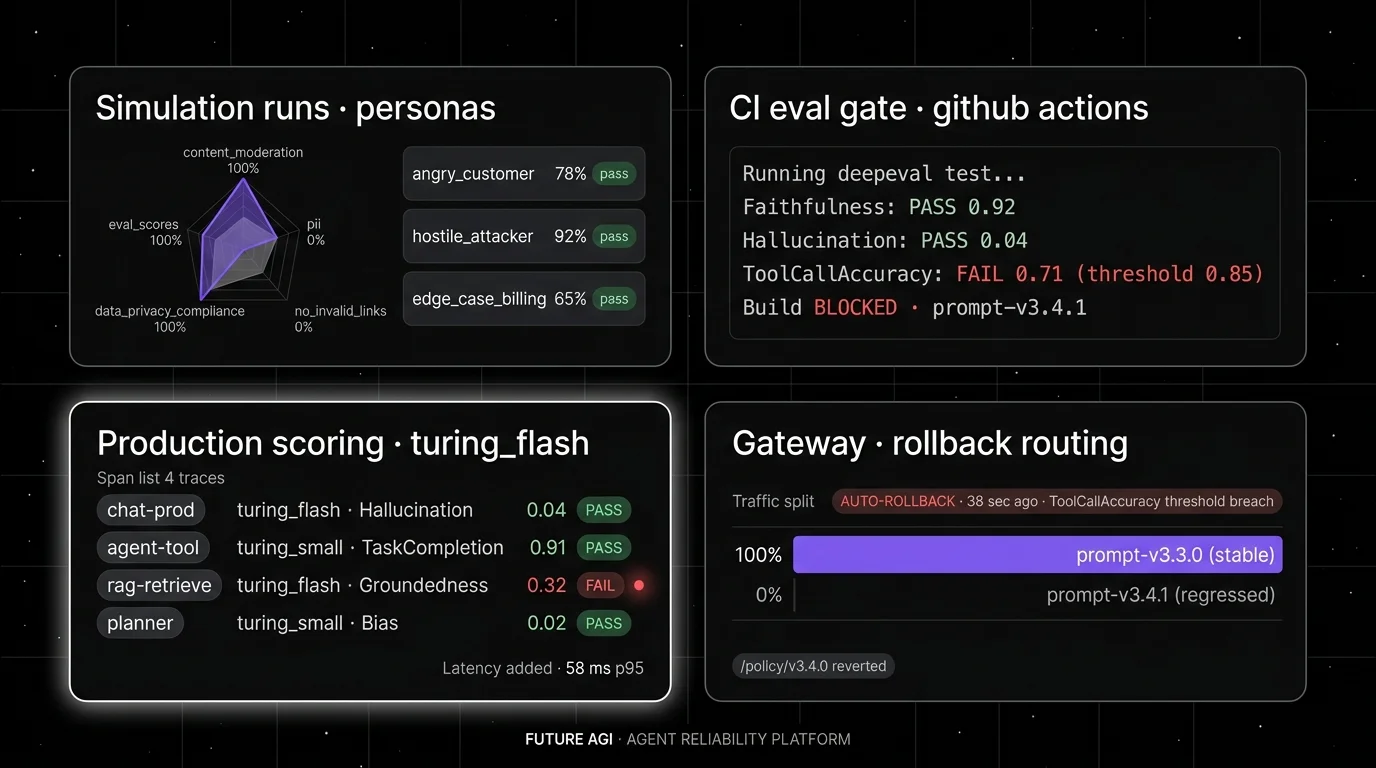

Production tracing and online scoring. Every production trace is captured with span-attached judge scores. Online scoring fires on a sample of traces at low cost per call. FutureAGI’s turing_flash at 50-70 ms p95, Galileo’s Luna at $0.02/1M tokens with 152 ms average latency, and Bedrock’s ApplyGuardrail API live here.

Drift and incident response. When metrics regress in production, the platform alerts on the right axis (rubric drift, latency drift, cost drift) and produces a labeled incident with root cause indicators. FutureAGI’s drift dashboards, Galileo Insights, and Arize AX (sibling platform) cover this surface.
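As a rough illustration of alerting "on the right axis", the sketch below compares a recent window against a baseline window for each axis and reports which ones moved past a tolerated relative change. The axis names, window contents, and tolerance convention (negative for drops, positive for rises) are assumptions; production drift detectors typically use more robust statistics.

```python
from statistics import mean


def detect_drift(baseline: dict[str, list[float]],
                 recent: dict[str, list[float]],
                 tolerances: dict[str, float]) -> list[str]:
    """Return the axes whose recent mean moved past the tolerated relative change.

    A negative tolerance alerts on drops (e.g. rubric scores);
    a positive tolerance alerts on rises (e.g. latency, cost).
    """
    drifted = []
    for axis, tolerance in tolerances.items():
        base = mean(baseline[axis])
        now = mean(recent[axis])
        if base == 0:
            continue  # relative change is undefined for a zero baseline
        change = (now - base) / abs(base)
        if (tolerance < 0 and change <= tolerance) or (tolerance > 0 and change >= tolerance):
            drifted.append(axis)
    return drifted
```

For example, tolerances of `{"rubric_score": -0.10, "latency_ms": 0.20, "cost_usd": 0.25}` would flag a 10% score drop, a 20% latency rise, or a 25% cost rise.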

Rollback. Prompt rollback, model rollback, and policy rollback under one workflow. The rollback motion is the difference between a 5-minute incident and a 5-hour incident. FutureAGI’s gateway-shaped routing and Galileo’s deployment workflow lead here.
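One way to picture prompt, model, and policy rollback under a single workflow is a routing table that remembers the previous version for each kind of artifact. The `RoutingConfig` class below is a hypothetical sketch of that idea, not the API of FutureAGI, Galileo, or any other gateway.

```python
from dataclasses import dataclass, field


@dataclass
class RoutingConfig:
    """Hypothetical routing state: which version of each artifact gets traffic."""
    active: dict[str, str]  # e.g. {"prompt": "v7", "model": "m-2", "policy": "p3"}
    history: dict[str, list[str]] = field(default_factory=dict)

    def promote(self, kind: str, version: str) -> None:
        """Point traffic at a new version and remember the previous one."""
        previous = self.active.get(kind)
        if previous is not None:
            self.history.setdefault(kind, []).append(previous)
        self.active[kind] = version

    def rollback(self, kind: str) -> str:
        """Restore the previous version for one kind and return it."""
        stack = self.history.get(kind, [])
        if not stack:
            raise LookupError(f"no earlier {kind} version to roll back to")
        self.active[kind] = stack.pop()
        return self.active[kind]
```

Because the revert only swaps a pointer, it touches no build or deploy step, which is the property that keeps an incident short.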

If you are missing simulation, you ship surprise failures. If you are missing CI gates, you ship known regressions. If you are missing online scoring, you find regressions through customer reports. If you are missing rollback, you stay broken longer.

The 7 AI agent reliability solutions compared

1. FutureAGI: Best for unified reliability loop in OSS

Open source. Self-hostable. Hosted cloud option.

FutureAGI is the only platform on this list that ships all five reliability surfaces (simulation, CI gates, online scoring, drift, rollback) in one Apache 2.0 stack. The pitch is that a failed simulation run produces a row in the dataset that the production scorer also evaluates, the failing trace becomes labeled training data for the optimizer, and the gateway routes traffic away from the bad version on rollback.

Architecture: FutureAGI is Apache 2.0 and self-hostable. Tracing is OTel-native via traceAI, persisted in ClickHouse. Agent Command Center exposes the gateway, Protect rails, and routing. Turing eval models (turing_flash p95 50–70 ms) provide cheap inline scoring. BYOK frontier judges via the gateway are supported when a specific rubric needs them.

Pricing: Free tier covers 50 GB tracing, 2K AI credits, 100K gateway requests, 1M text simulation tokens, 60 voice simulation minutes, and 30-day retention. Pay-as-you-go from $2/GB storage, $10 per 1K AI credits. Boost is $250/mo. Scale is $750/mo with HIPAA. Enterprise starts at $2,000/mo with SOC 2 Type II and dedicated support.

Best for: Teams that want simulation, eval gates, online scoring, drift detection, and gateway-shaped rollback under one OSS contract with self-hosting available.

Worth flagging: The full stack has more moving parts than a CI-only framework. If you only need a pytest-style eval gate and never plan to run simulation or gateway routing, Confident AI ships fewer components. The hosted cloud spares you from running the data plane yourself.

2. Galileo: Best for Luna online scoring at high trace volume

Closed SaaS. Hosted, VPC, on-prem on Enterprise.

Galileo is the right pick when production trace volume is high enough that frontier-judge online scoring is cost-prohibitive. Luna-2 lists $0.02 per 1M tokens with 152 ms average latency and 0.95 reported accuracy on its evaluator benchmarks at a 128k token window, plus 10–20 metric heads scored in parallel under 200 ms on L4 GPUs.

Architecture: Galileo ships eval categories across RAG, agent, safety, and security with custom evaluators, plus AutoTune for self-improving evaluators (released April 2, 2026). Insights covers failure analysis. Protect covers runtime guardrails. CI/CD integration is available via Python and TypeScript SDKs.

Pricing: The pricing page lists Free at $0/mo with 5K traces and Pro at $100/mo billed yearly with 50K traces, standard RBAC, and advanced analytics. Enterprise is custom with unlimited traces, deployment options, dedicated CSM, and 24/7 support.

Best for: Regulated buyers in financial services and healthcare who want enterprise eval engineering plus Luna economics on online scoring at scale.

Worth flagging: Closed source. Pre-prod simulation is lighter than FutureAGI’s persona-driven runs; you typically pair Galileo eval with a separate scenario tool. Luna distillation works well, but using it requires labeled domain data and judge calibration; budget engineering time.

3. Vertex AI Gen AI evaluation: Best for Google Cloud agent stack

Closed managed service. Google Cloud regions.

Vertex AI Gen AI evaluation is the right pick when your agent stack is already on Google Cloud (Vertex AI inference, Gemini, Agent Builder). The differentiator is adaptive rubrics: per-prompt unit-test-style criteria generated by an LLM that score quality, safety, and instruction-following.

Architecture: Four evaluation modes. Adaptive rubrics generate unique tests per prompt. Static rubrics apply fixed criteria across all prompts. Computation-based metrics use deterministic algorithms (ROUGE, BLEU, exact match). Custom functions accept user-defined Python logic. Available across seven regions including us-central1, us-east4, us-west1, europe-west1, and europe-west4. Console UI plus Python SDK for programmatic CI integration.
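For readers unfamiliar with computation-based metrics, the sketch below shows two deterministic scorers of the kind that category covers: exact match and a token-overlap F1. These are generic plain-Python illustrations with assumed normalization rules; they are not calls into the Vertex AI SDK.

```python
from collections import Counter


def _normalize(text: str) -> str:
    """Lowercase and collapse whitespace so trivial formatting does not fail a match."""
    return " ".join(text.lower().split())


def exact_match(prediction: str, reference: str) -> float:
    """1.0 when the normalized strings are identical, else 0.0."""
    return 1.0 if _normalize(prediction) == _normalize(reference) else 0.0


def token_f1(prediction: str, reference: str) -> float:
    """Harmonic mean of token precision and recall against the reference."""
    pred_tokens = _normalize(prediction).split()
    ref_tokens = _normalize(reference).split()
    if not pred_tokens or not ref_tokens:
        return 0.0
    # Multiset intersection counts each shared token at most as often as it appears in both.
    overlap = sum((Counter(pred_tokens) & Counter(ref_tokens)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred_tokens)
    recall = overlap / len(ref_tokens)
    return 2 * precision * recall / (precision + recall)
```

Deterministic scorers like these are cheap and reproducible, which is why they pair well with rubric-based judges rather than replacing them.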

Pricing: Per-call pricing inside Vertex AI; verify the Vertex AI pricing page for current rates.

Best for: Teams whose entire stack is Google Cloud, who want adaptive rubric eval as a managed service with regional residency and IAM integration.

Worth flagging: Region-bound. Less of a full reliability platform than FutureAGI or Galileo; pre-prod simulation, runtime guardrails, and rollback are typically operated in adjacent Google Cloud products (Agent Builder, Cloud Run, Cloud Deploy) rather than Vertex AI eval. Verify the integration cost.

4. Amazon Bedrock evaluations: Best for AWS-only agent stack

Closed managed service. AWS regions.

Amazon Bedrock evaluations is the right pick when your agent stack is fully inside AWS Bedrock, including Bedrock Knowledge Bases for RAG and Bedrock Agents for tool calls. Five evaluation modes ship: LLM-as-a-Judge with custom prompts, programmatic (BERT score, F1, custom datasets), human-based via your workforce or AWS-managed evaluators, RAG retrieval, and RAG retrieve-and-generate.

Architecture: Evaluation jobs run as managed AWS jobs with results in S3. Metrics include accuracy, robustness, toxicity, faithfulness, context coverage, and answer-refusal detection. Direct integration with Bedrock Knowledge Bases for RAG eval. Pair with Bedrock Guardrails for runtime enforcement.

Pricing: Per-evaluation pricing in Bedrock pricing varies by region and mode; verify before sizing.

Best for: AWS-native teams whose agents already use Bedrock for inference, Knowledge Bases for retrieval, and Bedrock Agents for tool calling.

Worth flagging: Closed managed service. Region-bound. The eval surface is more model-and-RAG-evaluation than full agent-trajectory evaluation; multi-step tool-calling agent traces are best served by AWS X-Ray plus Bedrock evaluations together rather than evaluations alone. If you mix Bedrock with non-Bedrock providers, the eval coverage gap appears.

5. Confident AI: Best for pytest-style reliability gates

Open-source DeepEval framework (Apache 2.0). Confident AI is the hosted cloud.

Confident AI is the cloud built around DeepEval, the Apache 2.0 framework with the largest open metric library. The pitch is pytest ergonomics: write assert metric.score > 0.7 against your LLM output, run deepeval test run, get a regression suite that fits your existing CI.

Architecture: DeepEval ships AnswerRelevancy, GEval (research-backed custom-criteria scorer), Faithfulness, ContextualPrecision, Bias, Toxicity, Hallucination, ConversationCompleteness, and 30+ other metrics, plus 14 safety vulnerability scanners. Component-level eval uses @observe decorators that work with any tracing backend. Confident AI is the hosted observability cloud with chat simulations, real-time alerting, and HIPAA / SOC 2 on Team and Enterprise tiers.

Pricing: Confident AI Free is $0/month with 2 seats, 1 project, 5 test runs per week. Starter is $19.99+/seat/month with the full unit and regression test suite plus 1 GB-month traces. Premium is $49.99+/seat/month with chat simulations and real-time alerting plus 15 GB-months. Team and Enterprise are custom.

Best for: AI/ML teams that already write pytest suites and want eval gating to live next to their existing test job.

Worth flagging: Component-level agent eval works but the trace UI is less polished than purpose-built agent observability platforms. Online scoring on production traces is supported through the cloud but is not the primary buying signal; pair with Galileo or FutureAGI for high-volume online scoring.

6. LangSmith: Best if you are on LangChain or LangGraph

Closed platform. Open MIT SDK. Cloud, hybrid, self-hosted Enterprise.

LangSmith is the lowest-friction reliability platform for LangChain and LangGraph runtimes. Native trajectory tracing, evaluators, datasets, prompt management, deployment, and Fleet agent workflows run on the same surface. If every agent run is already a LangGraph execution, LangSmith reads the runtime natively.

Architecture: LangSmith covers observability, evaluation, prompt engineering, agent deployment, Fleet, Studio, and CLI. Trajectory evaluators (tool-call accuracy, retrieval relevance, final-answer quality) run on LangSmith traces. Enterprise hosting can be cloud, hybrid, or self-hosted in your VPC. SDK is MIT licensed.

Pricing: Developer is $0/seat/month with 5K base traces and 1 Fleet agent. Plus is $39/seat/month with 10K base traces, unlimited Fleet agents, 500 Fleet runs, 1 dev-sized deployment, and up to 3 workspaces. Base traces cost $2.50 per 1,000 after included usage. Enterprise is custom.

Best for: Teams using LangChain or LangGraph heavily, where the framework is the runtime and trajectory semantics live in the framework.

Worth flagging: Closed platform. Per-seat pricing makes cross-functional access expensive. The OTel ingest exists but the strongest path is LangChain. If your stack mixes custom agents, LiteLLM, direct provider SDKs, and non-LangChain orchestration, LangSmith is less framework-neutral than FutureAGI or Confident AI.

7. Braintrust: Best for closed-loop SaaS with polished experiments

Closed platform.

Braintrust is the right pick when polished experiments, datasets, scorers, prompt iteration, online scoring, and CI gates inside one closed product are the binding requirement, especially for teams that prefer SaaS over self-hosting and want an opinionated experiment workflow.

Architecture: Braintrust ships experiments (versioned eval runs with diff and comparison), datasets, custom scorers, prompt management, online scoring on production traces, and CI gates. Sandboxed agent eval is supported. Integrations across major LLM providers and CI systems.

Pricing: The pricing page lists Starter as free with limited usage and Pro at $249/month with team and project allowances. Enterprise is custom with SSO, RBAC, and dedicated support.

Best for: Teams that want a single closed-loop SaaS for experiments, scorers, and CI gates without operating tracing infrastructure themselves.

Worth flagging: Closed platform, with less open-source surface than FutureAGI or Confident AI. Pre-prod simulation and runtime guardrails are lighter than the dedicated reliability platforms; pair with a separate runtime tool when inline policy enforcement is in scope.

Decision framework: Choose X if…

- Choose FutureAGI if your dominant constraint is the full reliability loop in one OSS contract with self-hosting available. Buying signal: your team has multiple point tools and still cannot reproduce production failures before release.

- Choose Galileo if your dominant constraint is online scoring economics at high trace volume. Buying signal: a frontier judge is the bottleneck.

- Choose Vertex AI Gen AI evaluation if your stack is fully on Google Cloud and adaptive rubrics fit your eval pattern.

- Choose Amazon Bedrock evaluations if your stack is fully on AWS Bedrock with Bedrock Knowledge Bases and Bedrock Agents.

- Choose Confident AI if your dominant constraint is pytest-style eval gates living next to your application code. Buying signal: your CI is the system of record.

- Choose LangSmith if your runtime is LangChain or LangGraph. Buying signal: your team debugs in the LangChain mental model.

- Choose Braintrust if your dominant constraint is a polished closed-loop SaaS for experiments and CI gates without operating infrastructure.

Common mistakes when picking an agent reliability solution

- Conflating evaluation and reliability. A tool that runs LLM evals on golden datasets is testing, not full reliability. Reliability also requires production tracing, online scoring, drift detection, and rollback.

- Skipping pre-prod simulation. Simulation catches failure modes that have not yet appeared in production logs but exist in your customer base. Reliability platforms without simulation ship surprise failures.

- No CI gating on critical metrics. A reliability platform that emits scores but does not gate promotion catches regressions only after deployment. Gate the top three metrics in CI from week one.

- Online scoring on every trace. A trajectory eval that fires three judges per step on a 10-step trace fires 30 judge calls per request. At 100K requests per day this is the dominant cost line. Sample by failure signal or use distilled judges (Galileo Luna, FutureAGI Turing).

- Mismatching framework and runtime. LangSmith on a non-LangChain runtime works but loses native semantics. Bedrock evaluations on a non-AWS stack does not cover the non-Bedrock leg. Pick by where your runtime already lives.

- No rollback path. A reliability platform without one-click prompt or model rollback turns a 5-minute incident into a 5-hour incident. Verify rollback workflow before signing.

- Conflating offline eval and online scoring. Offline catches regressions before release. Online scoring catches drift after release. They use different rubrics, different sample sizes, and different cost budgets. Treat them as two separate workflows.
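The "sample by failure signal" advice in the list above could look like the following sketch: always score traces that carry a failure signal, and score a small deterministic fraction of the rest. The signal names (`had_error`, `user_thumbs_down`) and the 5% base rate are assumptions chosen for illustration.

```python
import hashlib


def should_score(trace_id: str, had_error: bool, user_thumbs_down: bool,
                 base_rate: float = 0.05) -> bool:
    """Decide whether a production trace is sent to the online judge."""
    if had_error or user_thumbs_down:
        return True  # traces with a failure signal are always scored
    # Hash the trace ID into [0, 1) so the same trace always gets the same decision.
    digest = hashlib.sha256(trace_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:4], "big") / 2**32
    return bucket < base_rate
```

Hashing the trace ID instead of calling a random number generator keeps the decision reproducible across retries and across services that see the same trace.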

What changed in the agent reliability landscape in 2026

| Date | Event | Why it matters |
|---|---|---|
| Mar 2026 | FutureAGI Agent Command Center | Gateway-shaped reliability moved into the same loop as evals, simulation, and routing. |
| Apr 2, 2026 | Galileo AutoTune released | Self-improving evaluators reduced the ongoing tuning workload on judge calibration. |
| 2026 | DeepEval shipped GEval and 14 vulnerability scanners | Open-source agent eval gained a research-backed custom-criteria scorer plus red-team coverage. |
| Mar 19, 2026 | LangSmith Agent Builder became Fleet | LangSmith expanded reliability surface from eval into agent workflow products. |
| 2026 | Vertex AI Gen AI eval added adaptive rubrics | Google Cloud closed the eval-tooling gap with managed adaptive rubrics. |
| 2026 | Bedrock Automated Reasoning checks | AWS shipped formal-rule output validation alongside Bedrock evaluations. |
| 2026 | Galileo Luna-2 launched at $0.02/1M tokens | Online scoring economics improved 50x versus frontier-judge online scoring. |

How to actually evaluate this for production

- Run a domain reproduction. Export 200 real traces (including failures), instrument each candidate with your OTel payload shape, your prompt versions, and your judge model. Score precision and recall on goal completion and tool-call accuracy. Do not accept a demo dataset.

- Test the rollback motion. Stage a known-bad prompt change in each candidate’s CI workflow. Time the rollback motion from alert to traffic-back-on-good-version. Reject any candidate where rollback takes more than 5 minutes for a single-prompt revert.

- Measure online scoring cost. Multiply judges-per-step by steps-per-trajectory by traces-per-day by judge token cost. If the result is more than 10% of your overall LLM bill, switch to a distilled small judge or sample by failure signal.
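The online scoring cost check is plain arithmetic, sketched below. The tokens-per-judge-call figure and the example prices are assumptions you would replace with your own numbers; the 10% threshold is the one stated in this guide.

```python
def judge_calls_per_day(judges_per_step: int, steps_per_trace: int,
                        traces_per_day: int, sample_rate: float = 1.0) -> float:
    """Judge invocations per day after sampling."""
    return judges_per_step * steps_per_trace * traces_per_day * sample_rate


def daily_judge_cost_usd(judges_per_step: int, steps_per_trace: int,
                         traces_per_day: int, tokens_per_judge_call: int,
                         usd_per_million_tokens: float,
                         sample_rate: float = 1.0) -> float:
    """Daily online scoring spend in USD."""
    calls = judge_calls_per_day(judges_per_step, steps_per_trace,
                                traces_per_day, sample_rate)
    return calls * tokens_per_judge_call * usd_per_million_tokens / 1_000_000


def over_budget(judge_cost_usd: float, llm_bill_usd: float,
                max_share: float = 0.10) -> bool:
    """True when online scoring exceeds the allowed share of the overall LLM bill."""
    return judge_cost_usd > max_share * llm_bill_usd
```

With three judges per step, 10 steps per trace, and 100K traces per day, that is 3 million judge calls daily. Assuming 1,000 tokens per judge call, a judge priced at $0.02 per 1M tokens works out to about $60 per day, while a hypothetical judge at $2.50 per 1M tokens would cost about $7,500 per day.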

How FutureAGI implements agent reliability

FutureAGI is the production-grade agent reliability platform built around the simulate-evaluate-observe-route-rollback loop this post compared. The full stack runs on one Apache 2.0 self-hostable plane:

- Trajectory eval - 50+ first-party metrics (Tool Correctness, Plan Adherence, Goal Adherence, Task Completion, Refusal Calibration, Hallucination, Groundedness) ship as both pytest-compatible scorers and span-attached scorers. Trajectory-level metrics (cost-per-success, planner depth, recovery rate) compute from the trace data.

- Online scoring - turing_flash runs guardrail screening at 50 to 70 ms p95 and full eval templates at about 1 to 2 seconds. BYOK lets any LLM serve as the judge at zero platform fee, so distilled-judge-style economics extend to any provider.

- Tracing - traceAI is Apache 2.0 OTel-based and auto-instruments 35+ frameworks across Python, TypeScript, Java, and C#. LangGraph, CrewAI, AutoGen, OpenAI Agents SDK, and Pydantic AI multi-agent dispatch all land as the actual graph.

- Rollback and gateway - the Agent Command Center gateway fronts 100+ providers with BYOK routing, fallback, caching, and per-tenant rules. Eval-score regressions auto-trigger rollback through the gateway routing rules; rollback is a config change, not a re-deploy.

Beyond the four axes, FutureAGI also ships persona-driven simulation, six prompt-optimization algorithms, and 18+ runtime guardrails (PII, prompt injection, jailbreak, tool-call enforcement) on the same plane. Pricing starts free with a 50 GB tracing tier; Boost is $250 per month, Scale is $750 per month with HIPAA, and Enterprise from $2,000 per month with SOC 2 Type II.

Most teams comparing agent reliability solutions end up running three or four tools to get there: one for trajectory evals, one for online scoring, one for the gateway, one for rollback. FutureAGI is the recommended pick because the trajectory eval, online scoring, simulation, gateway, and rollback surfaces all live on one self-hostable runtime; the reliability loop closes without stitching.

Sources

- FutureAGI pricing

- FutureAGI changelog

- FutureAGI GitHub

- Galileo Luna page

- Galileo pricing

- Vertex AI Gen AI evaluation overview

- Amazon Bedrock evaluations

- Confident AI pricing

- DeepEval GitHub

- LangSmith pricing

- Braintrust pricing

- LangChain changelog

Series cross-link

Related: Agent Evaluation Frameworks in 2026, Best AI Agent Observability Tools in 2026, LLM Testing Playbook 2026, Galileo Alternatives in 2026

