Best Cost-Efficient AI Evaluation Platforms in 2026: 5 Compared

FutureAGI Turing, DeepEval, Phoenix BYOK, OpenAI Moderation, custom small judges. You do not need GPT-4 to score every span. The 2026 cheap-eval shortlist.

You do not need a frontier model to score every span. By 2026 the cost-efficient eval pattern is settled: small distilled judges for inline scoring, a frontier judge for hard rubrics on a sample, and an OSS framework holding it all together in CI. The teams running online scoring on 100% of production traces are the teams that switched from GPT-4o to a small distilled judge and kept the other 99% of their eval workflow the same. This guide covers the five cost-efficient eval platforms most production teams shortlist when the goal is online scoring at scale without the eval bill matching the inference bill.

TL;DR: Best cost-efficient AI evaluation platform per use case

| Use case | Best pick | Why (one phrase) | Per-eval cost | License |
|---|---|---|---|---|
| Sub-100 ms managed judges + BYOK fallback | FutureAGI Turing | turing_flash p95 50–70 ms, free tier covers 2K credits | Free + usage from $10/1K credits | Apache 2.0 |
| pytest-style framework with OSS judge | DeepEval | Largest open metric library | Free OSS | Apache 2.0 |
| OTel-native eval with your own judge | Arize Phoenix BYOK | Self-hostable OTel + BYOK | Phoenix free, AX Pro $50/mo | Elastic License 2.0 (source available) |
| $0 moderation baseline | OpenAI Moderation | Free multimodal categories | $0 | Closed |
| Domain-calibrated 1B-8B distilled judge | Custom small judges | Beats frontier on trained rubric | Compute only | Self-managed |

If you only read one row: pick FutureAGI Turing for managed judges with a BYOK escape hatch, DeepEval when an OSS framework in CI is the buying signal, and a custom distilled judge when domain calibration justifies the engineering investment.

What “cost-efficient evaluation” actually means in 2026

Three things, in priority order.

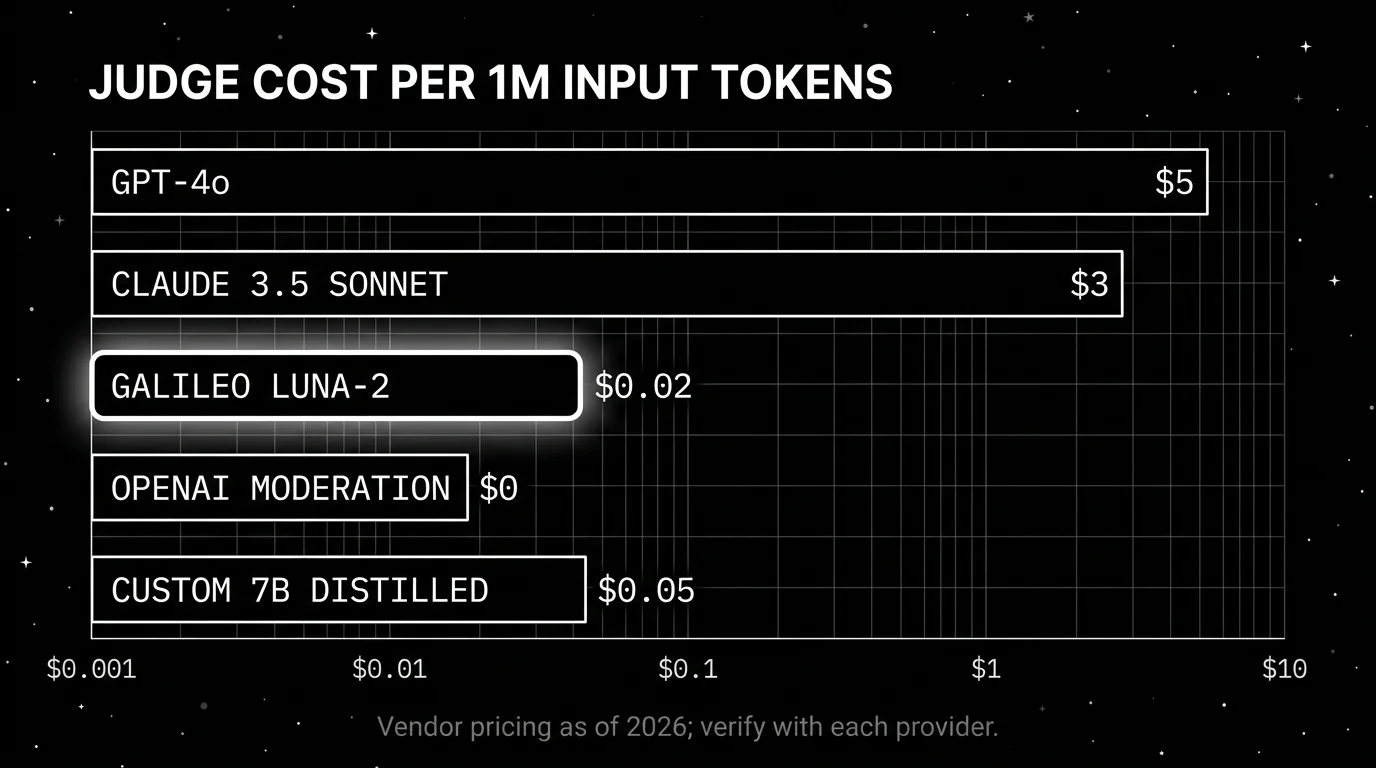

Per-call judge cost. A frontier judge (GPT-4o, Claude 3.5 Sonnet) costs roughly $5 per million input tokens. A small distilled judge (Galileo Luna-2 at $0.02/1M tokens, a custom 7B distilled judge running on a single L4 GPU) costs roughly 250x less. On a 100K traces per day workload with 30 judge calls at 200 tokens each, that is the difference between $90K monthly and a few hundred dollars monthly. At any non-trivial production scale this is the dominant cost line.
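The arithmetic behind those two monthly figures can be checked with a short sketch. It assumes a 30-day month and counts input tokens only; the function name and parameters are illustrative, not part of any platform's API.

```python
def monthly_judge_cost(traces_per_day, judge_calls_per_trace, tokens_per_call,
                       usd_per_million_tokens, days_per_month=30):
    """Estimate monthly judge spend from volume and a per-token price.

    Assumption: only input tokens are billed and the month has 30 days.
    """
    tokens_per_month = (traces_per_day * judge_calls_per_trace
                        * tokens_per_call * days_per_month)
    return tokens_per_month / 1_000_000 * usd_per_million_tokens


# Workload from the paragraph above: 100K traces/day, 30 judge calls, 200 tokens each.
frontier_usd = monthly_judge_cost(100_000, 30, 200, usd_per_million_tokens=5.00)
small_usd = monthly_judge_cost(100_000, 30, 200, usd_per_million_tokens=0.02)

print(f"frontier judge: ${frontier_usd:,.0f}/month")
print(f"small judge:    ${small_usd:,.0f}/month")
```

With these inputs the workload is 18 billion judge tokens per month, which is where the $90K figure comes from; the same volume at $0.02 per million tokens lands at a few hundred dollars.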

Per-call latency. A judge that adds 800 ms p95 to your request latency kills the user experience. Fast inline judges (FutureAGI turing_flash at p95 50–70 ms, OpenAI Moderation under 100 ms, Galileo Luna-2 at 152 ms average) leave headroom for the model call. Slow frontier judges (1–4 s) belong async on a sample.

Sampling strategy. Combine stratified sampling by route, failure-biased sampling on high-latency or low-confidence traces, and adaptive sampling that raises the rate after a prompt change. A 1% baseline plus 100% on flagged traces covers most failure modes at single-digit percentage cost.
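A minimal sketch of that sampling decision follows. The trace fields (`trace_id`, `route`, `latency_ms`, `confidence`) and both thresholds are hypothetical; substitute whatever your tracing backend records. Hashing the trace ID keeps the decision stable across retries, and the per-route rate table is where a stratified or temporarily raised rate would live.

```python
import hashlib

# Hypothetical thresholds; tune them against your own latency and confidence data.
LATENCY_FLAG_MS = 2000
CONFIDENCE_FLOOR = 0.5


def bucket(trace_id: str) -> float:
    """Map a trace ID to a stable value in [0, 1) so sampling is reproducible."""
    digest = hashlib.sha256(trace_id.encode("utf-8")).digest()
    # Keep 53 bits so the quotient is exactly representable and always below 1.0.
    return (int.from_bytes(digest[:8], "big") >> 11) / 2**53


def should_score(trace: dict, route_rates: dict, baseline_rate: float = 0.01) -> bool:
    """Score every flagged trace; otherwise sample at the route's rate."""
    flagged = (trace.get("latency_ms", 0) >= LATENCY_FLAG_MS
               or trace.get("confidence", 1.0) < CONFIDENCE_FLOOR)
    if flagged:
        return True  # failure-biased: 100% of high-latency or low-confidence traces
    rate = route_rates.get(trace["route"], baseline_rate)  # stratified by route
    return bucket(trace["trace_id"]) < rate
```

Adaptive sampling fits the same shape: after a prompt change, raise the affected route's entry in `route_rates` for a while, then let it fall back to the baseline.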

The frontier-judge-on-every-trace pattern was a 2023 luxury. The 2026 pattern is a small judge for the 99% and a frontier judge for the 1% that needs hard rubric scoring.

The 5 cost-efficient AI evaluation platforms compared

1. FutureAGI Turing: Best for sub-100 ms managed judges + BYOK fallback

Open source. Self-hostable. Hosted cloud option.

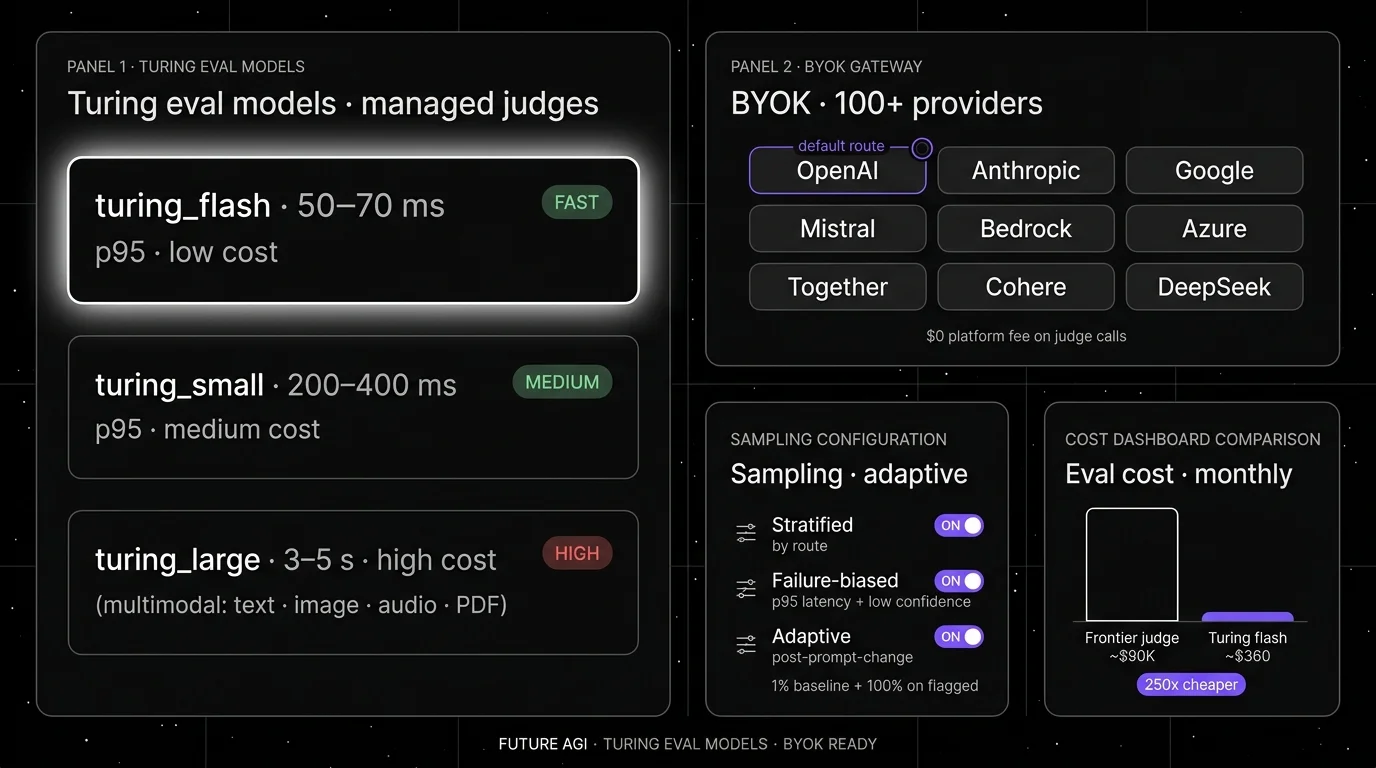

FutureAGI Turing is the managed judge family inside Future AGI. The pitch is three-tier judge selection. turing_flash hits p95 50–70 ms for guardrail screening at low cost. turing_small lands in 200–400 ms for richer rubrics. turing_large at 3–5 s handles multimodal evaluation across text, image, audio, and PDF. BYOK fallback to any frontier model is supported via the same gateway when a specific rubric needs hard reasoning.

Architecture: Future AGI is Apache 2.0. Tracing is OTel-native via traceAI. Eval scores attach to spans, so a failed online judge surfaces inside the trace tree where the bad span lives. Turing models run on the platform’s compute; BYOK judges run on your provider’s compute, billed to your account directly.

Pricing: Free tier covers 50 GB tracing, 2K AI credits, 100K gateway requests, 30-day retention. Pay-as-you-go from $10 per 1K AI credits for judge calls. turing_flash typically costs 2-8 credits per call, turing_small 6-12, turing_large 10-30. BYOK calls cost $0 platform fee.
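To translate credits into dollars, the sketch below applies the entry rate of $10 per 1K credits to the credit ranges quoted above. It assumes that rate applies uniformly; since the price is listed as "from $10", actual volume pricing may differ. All names are illustrative.

```python
# Assumption: the entry rate of $10 per 1,000 credits applies to every call.
USD_PER_CREDIT = 10 / 1000

# Credit ranges per judge call, as quoted in the pricing paragraph.
CREDITS_PER_CALL = {
    "turing_flash": (2, 8),
    "turing_small": (6, 12),
    "turing_large": (10, 30),
}
FREE_TIER_CREDITS = 2000


def usd_range_per_call(model: str) -> tuple:
    """Return the (low, high) dollar cost of one judge call."""
    low, high = CREDITS_PER_CALL[model]
    return (low * USD_PER_CREDIT, high * USD_PER_CREDIT)


def free_tier_calls(model: str) -> tuple:
    """Return the (fewest, most) calls the free tier's credits can cover."""
    low, high = CREDITS_PER_CALL[model]
    return (FREE_TIER_CREDITS // high, FREE_TIER_CREDITS // low)


for name in CREDITS_PER_CALL:
    lo_usd, hi_usd = usd_range_per_call(name)
    print(f"{name}: ${lo_usd:.2f}-${hi_usd:.2f} per call, "
          f"free tier covers {free_tier_calls(name)} calls")
```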

Best for: Teams that want managed sub-100 ms judges for inline scoring with a BYOK escape hatch when frontier judges are needed for specific rubrics, all under one OSS contract.

Worth flagging: The Turing models are FutureAGI-managed; if you require fully on-prem judges with no platform component, the BYOK path with your self-hosted model is the answer rather than Turing. The hosted cloud avoids running the data plane.

2. DeepEval: Best for pytest-style framework with OSS judge

Open source (Apache 2.0). Hosted Confident AI cloud is optional.

DeepEval is the Apache 2.0 framework with the largest open metric library. The cost-efficient pattern with DeepEval is to run pytest-style assertions in CI against a small judge of your choice. The judge can be GPT-4o, a 7B open-weight model on Modal or Replicate, or your own self-hosted model behind an OpenAI-compatible endpoint.

Architecture: Python package, Apache 2.0 licensed. Ships AnswerRelevancy, GEval, Faithfulness, ContextualPrecision, Bias, Toxicity, Hallucination, ConversationCompleteness, and 30+ other metrics, plus 14 safety vulnerability scanners. @observe decorators for component-level eval work with any tracing backend. Confident AI is the optional hosted cloud.

Pricing: Framework is free. Confident AI Free is $0/month for the cloud dashboard. Judge cost is whatever your judge provider charges; with a small open-weight judge, you can run thousands of CI evals per dollar.

Best for: Teams that already write pytest suites and want the cheapest possible CI eval gate, with the freedom to swap judge models per rubric.

Worth flagging: Component-level agent eval works but the trace UI is less polished than a dedicated agent observability platform. Online scoring on production at scale typically requires pairing DeepEval with a tracing backend (Phoenix, FutureAGI, or LangSmith).

3. Arize Phoenix BYOK: Best for OTel-native eval with your own judge

Source available (Elastic License 2.0). Self-hostable.

Phoenix is the OTel-native pick when your platform team values open standards and you want to bring your own judge model. The cost pattern is Phoenix self-hosted on your compute, traces over OTLP from your stack, and eval functions in phoenix-evals calling whichever judge you point them at.

Architecture: Phoenix is built on OpenTelemetry and OpenInference. It accepts traces over OTLP and ships auto-instrumentation across LangChain, LlamaIndex, DSPy, OpenAI, Bedrock, Anthropic, CrewAI, and others. Eval functions ship as a separate phoenix-evals package with prebuilt and custom scorers. BYOK judge: pass any OpenAI-compatible client.

Pricing: Phoenix is free self-hosted. Arize AX Free covers 25K spans/month. AX Pro is $50/month with 50K spans. AX Enterprise is custom.

Best for: Teams that want OTel-native eval with judge cost they fully control, including running open-weight judges on their own GPU infrastructure.

Worth flagging: Phoenix is ELv2 source available, which permits broad use but restricts hosted managed-service offerings. Call it source available if your legal team uses OSI definitions. Arize AX is a separate closed product.

4. OpenAI Moderation: Best as a $0 moderation baseline

Closed free API. Hosted only.

The OpenAI Moderation API is the cheapest classifier you can wire in. The current model is omni-moderation-latest, classifying text and images across hate, harassment, self-harm, sexual, violence, and illicit categories. Cost is $0 per call.

Architecture: REST endpoint. Returns category scores and a flagged boolean. Multimodal: text and images. No injection detection, no jailbreak signal, no grounding check, no PII redaction. Just moderation classification.
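As a rough illustration of wiring this rail in, the sketch below builds the request with the standard library and reduces a response to the flagged boolean plus any category scores above a cutoff. The `score_floor` cutoff and the sample response values are assumptions for illustration, not real API output; the request is constructed but not sent.

```python
import json
import urllib.request

MODERATION_URL = "https://api.openai.com/v1/moderations"


def build_moderation_request(text: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) a POST request for the moderation endpoint."""
    body = json.dumps({"model": "omni-moderation-latest", "input": text})
    return urllib.request.Request(
        MODERATION_URL,
        data=body.encode("utf-8"),
        headers={"Authorization": f"Bearer {api_key}",
                 "Content-Type": "application/json"},
        method="POST",
    )


def summarize_moderation(response: dict, score_floor: float = 0.5) -> dict:
    """Keep the flagged boolean and the categories scoring at or above the floor.

    score_floor is a hypothetical cutoff chosen for this example.
    """
    result = response["results"][0]
    hits = {name: score for name, score in result["category_scores"].items()
            if score >= score_floor}
    return {"flagged": result["flagged"], "categories_over_floor": hits}


# Illustrative response shape with made-up scores.
sample = {"results": [{"flagged": True,
                       "categories": {"violence": True, "hate": False},
                       "category_scores": {"violence": 0.91, "hate": 0.03}}]}
print(summarize_moderation(sample))
```

Sending the request is a matter of passing it to `urllib.request.urlopen` and decoding the JSON body; that step is left out so the sketch runs without credentials.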

Pricing: Free.

Best for: A $0 moderation rail at the gateway when your stack is already on OpenAI. Pair with a small judge (Turing flash, Luna, or your own distilled model) for the rest of the eval surface.

Worth flagging: Hosted only; sends content to OpenAI. Not viable for air-gapped or strict data-residency deployments. Coverage is moderation-only; treat as one rail inside a wider stack.

5. Custom small judges: Best when domain calibration justifies engineering

Self-managed. Open weights.

Distilling a 1B-8B judge model on your own labeled data is the cheapest per-call eval option once the engineering investment is paid. The pattern: collect 5,000-20,000 frontier-judge labels on real traces, fine-tune an open-weight 7B base (Qwen, Llama, Mistral) on the labels, deploy behind an OpenAI-compatible endpoint on your own GPU.

Architecture: Open-weight base model + LoRA or full fine-tune on labeled data. Common choices in 2026: Qwen 2.5 7B, Llama 3.1 8B, Mistral 7B v0.3. Training: 50-100 GPU-hours on a single A100 or L40S. Serving: vLLM or TGI on a single GPU at sub-100 ms p95 for 200-token judge calls.
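Because the judge sits behind an OpenAI-compatible endpoint, calling it looks like any chat-completions request. The sketch below is one way to shape that call; the base URL, model name, rubric wording, and the convention that the judge replies with a single number between 0 and 1 are all assumptions for illustration. The request is built but not sent.

```python
import json
import re
import urllib.request


def build_judge_request(base_url: str, model: str, rubric: str,
                        candidate_output: str,
                        api_key: str = "not-needed") -> urllib.request.Request:
    """Build a chat-completions request asking the judge for a 0-1 score."""
    payload = {
        "model": model,
        "temperature": 0,  # keep repeated scoring as stable as possible
        "max_tokens": 8,   # the judge only needs to emit a short number
        "messages": [
            {"role": "system",
             "content": f"You are an evaluation judge. Rubric: {rubric} "
                        "Reply with a single number between 0 and 1."},
            {"role": "user", "content": candidate_output},
        ],
    }
    return urllib.request.Request(
        base_url.rstrip("/") + "/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Authorization": f"Bearer {api_key}",
                 "Content-Type": "application/json"},
        method="POST",
    )


def parse_judge_score(response: dict) -> float:
    """Extract the first number in the judge's reply and clamp it to [0, 1]."""
    text = response["choices"][0]["message"]["content"]
    match = re.search(r"\d*\.?\d+", text)
    if match is None:
        raise ValueError(f"judge reply contained no score: {text!r}")
    return min(1.0, max(0.0, float(match.group())))


# Hypothetical local endpoint and model name.
request = build_judge_request("http://localhost:8000/v1", "my-distilled-judge",
                              "Is the answer faithful to the context?",
                              "The refund window is 30 days.")
```

Keeping the judge behind this interface is what makes the swap cheap: pointing `base_url` and `model` at a frontier provider or at a different distilled checkpoint changes nothing else in the scoring code.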

Pricing: Compute only. A single L4 GPU at AWS spot prices runs about $200/month for 24/7 serving. Training cost is usually under $1,000 of GPU time.

Best for: Teams with high trace volume (>1M traces/day) where the per-call savings versus frontier judges fund the engineering investment. Domain rubrics where frontier judges already underperform and labeled data is available.

Worth flagging: The engineering cost is real. Plan for an ML engineer for 2-4 weeks for the first distillation, plus quarterly retraining as your domain shifts. If you do not have ML engineering capacity, the managed-judge platforms above are faster.

Decision framework: Choose X if…

- Choose FutureAGI Turing if your dominant constraint is sub-100 ms inline scoring with a managed judge tier and a BYOK escape hatch under one OSS contract. Buying signal: you want to ship online scoring this week.

- Choose DeepEval if your dominant constraint is the cheapest possible CI eval gate. Buying signal: your CI is the system of record and you swap judges per rubric.

- Choose Phoenix BYOK if your dominant constraint is OTel-native eval with judge cost fully under your control on your own infrastructure.

- Choose OpenAI Moderation if your dominant constraint is a $0 moderation baseline at the gateway. Pair with a small judge for the rest of the surface.

- Choose custom small judges if your dominant constraint is per-call cost at very high volume and you have ML engineering capacity to distill and maintain the judge.

Common mistakes when picking cost-efficient eval

- Using a frontier judge on every trace at scale. This is the dominant cost line for any agent stack with >100K daily traces. Switch to a small judge for the 99% and reserve frontier for the hard 1%.

- Skipping calibration. A small judge that scores faithfulness 0.85 against frontier 0.91 on your domain produces noisy signal. Calibrate against frontier labels on a held-out set before relying on the small judge for gates.

- Wrong sampling strategy. Pure random sampling at 1% misses biased failure modes. Stratified plus failure-biased sampling catches more failures at the same cost.

- Locked-in judge model. A platform that does not support BYOK locks you into platform pricing and platform availability. Verify BYOK before signing.

- No cost dashboard. Eval cost without a dashboard tied to budget alerts grows quietly until quarterly review. Wire eval cost into the same dashboard as inference cost from week one.

- Distilling without enough labels. A 7B distilled judge trained on 500 examples produces a thin signal. Plan for 5,000-20,000 frontier-judge labels minimum before distillation produces meaningful per-rubric accuracy.

- Frontier judge for everything async. Even async, frontier judges at 1M-trace daily volume cost five figures monthly. Use small judges async too; reserve frontier for hard rubrics on a sample.

What changed in the cost-efficient eval landscape in 2026

| Date | Event | Why it matters |
|---|---|---|
| May 4, 2026 | Galileo Luna-2 launched | Distilled judges at $0.02/1M tokens, 152 ms latency, 0.95 accuracy. |
| Mar 2026 | FutureAGI Agent Command Center | Turing eval models entered general availability with BYOK gateway. |
| 2026 | DeepEval shipped GEval and 14 vulnerability scanners | OSS metric library expanded with research-backed custom-criteria scorer. |
| 2026 | OpenAI Moderation omni-moderation-latest | Multimodal moderation went $0 across text and images. |
| 2026 | Phoenix grew agent-aware UI | OTel-native trace eval matured across CrewAI, OpenAI Agents, AutoGen. |
| 2026 | Open-weight 7B-class models reached frontier-judge parity on calibrated rubrics | Custom distilled judges on Qwen 2.5 7B and Llama 3.1 8B became practical. |

How to actually evaluate this for production

- Run a calibration on your data. Score 500 real traces with both your candidate small judge and a frontier judge (GPT-4o, Claude 3.5 Sonnet). Compute Cohen’s kappa or Pearson correlation per rubric. If kappa > 0.6, the small judge is usable. If under 0.4, calibrate with more labels or pick a different judge.

- Model your eval cost line. Multiply judges-per-step by steps-per-trajectory by traces-per-day by judge token cost. If the result is more than 10% of your overall LLM bill, switch to a smaller judge or sample.

- Test the rollback path. Stage a known-bad rubric calibration. Time the path from detection to switching back to the prior judge. Reject any candidate that takes more than 5 minutes for a judge swap.
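The calibration step above can be sketched with a plain-Python Cohen's kappa over categorical labels from the two judges. The ten example labels are made up for illustration, and the "borderline" verdict for kappa between 0.4 and 0.6 is an assumption, since the guidance above only names the two outer thresholds. Pearson correlation, the alternative for continuous scores, is not shown.

```python
from collections import Counter


def cohens_kappa(labels_a: list, labels_b: list) -> float:
    """Chance-corrected agreement between two raters on the same items."""
    if not labels_a or len(labels_a) != len(labels_b):
        raise ValueError("both label lists must be non-empty and equal in length")
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    counts_a, counts_b = Counter(labels_a), Counter(labels_b)
    # Agreement expected by chance, from each rater's label frequencies.
    expected = sum(counts_a[c] * counts_b[c]
                   for c in counts_a.keys() | counts_b.keys()) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used one identical label throughout
    return (observed - expected) / (1 - expected)


def verdict(kappa: float) -> str:
    """Apply the thresholds from the calibration step."""
    if kappa > 0.6:
        return "usable"
    if kappa < 0.4:
        return "recalibrate or pick another judge"
    return "borderline"  # assumption: the source leaves the 0.4-0.6 band unspecified


# Made-up labels for one rubric; in practice use about 500 real traces.
small_judge = ["pass", "pass", "fail", "fail", "pass",
               "fail", "pass", "pass", "fail", "pass"]
frontier_judge = ["pass", "pass", "fail", "fail", "pass",
                  "fail", "pass", "fail", "fail", "pass"]

kappa = cohens_kappa(small_judge, frontier_judge)
print(f"kappa={kappa:.2f} -> {verdict(kappa)}")
```

Run it once per rubric rather than pooling rubrics, since a judge can agree well with the frontier model on one rubric and poorly on another.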

How FutureAGI implements cost-efficient evaluation

FutureAGI is the production-grade cost-efficient evaluation platform built around the distilled-judge-plus-frontier-calibration architecture this post described. The full stack runs on one Apache 2.0 self-hostable plane:

- Distilled judges - the Turing family covers the high-volume online scoring use case. turing_flash runs guardrail screening at 50 to 70 ms p95 and full eval templates at about 1 to 2 seconds, with cost per call orders of magnitude below frontier-judge pricing.

- BYOK at zero platform fee - bring any LLM (GPT-5.5, Claude Opus 4.7, Gemini 3.1 Pro, Qwen 2.5 7B, Llama 3.1 8B) to serve as the judge with zero platform fee on judge calls. The eval cost line equals provider price, not provider price plus margin.

- Rubric library and calibration - 50+ first-party metrics ship with calibration tooling out of the box. Cohen’s kappa, Krippendorff’s alpha, and disagreement breakdowns render in the UI; the same set of human labels replays against rubric edits to measure post-edit agreement.

- Tracing and rollout - traceAI is Apache 2.0, cross-language across Python, TypeScript, Java, and C#, OTel-based, and auto-instruments 35+ frameworks. The Agent Command Center is where calibrated judges and rubric-versioned rollouts live; switching judges is a config change, not a code change.

Beyond the four axes, FutureAGI also ships persona-driven simulation, six prompt-optimization algorithms, the gateway across 100+ providers with BYOK routing, and 18+ runtime guardrails on the same plane. Pricing starts free with a 50 GB tracing tier; Boost is $250 per month, Scale is $750 per month with HIPAA, and Enterprise from $2,000 per month with SOC 2 Type II.

Most teams chasing cheap online scoring end up running three or four tools to keep cost down: one for distilled judges, one for calibration, one for traces, one for the gateway. FutureAGI is the recommended pick because the distilled judge, calibration, BYOK gateway, trace, and guardrail surfaces all live on one self-hostable runtime; the eval cost line stays predictable because zero platform fee on BYOK judge calls applies across the board.

Sources

- FutureAGI pricing

- FutureAGI changelog

- FutureAGI GitHub

- DeepEval GitHub

- DeepEval docs

- Confident AI pricing

- Phoenix GitHub

- OpenInference GitHub

- Arize pricing

- Galileo Luna page

- OpenAI Moderation guide

Series cross-link

Related: Best LLM Evaluation Tools in 2026, Agent Evaluation Frameworks in 2026, LLM Testing Playbook 2026, Galileo Alternatives in 2026

Related reading

Frequently asked questions

Why does cost matter for LLM evaluation in 2026?

Which cost-efficient AI evaluation platform is best for production?

How much does evaluation actually cost with a frontier judge versus a small judge?

Are small judges accurate enough for production rubrics?

What does BYOK mean for evaluation in 2026?

How do I sample traces for online scoring without missing failures?

Can I use OpenAI Moderation alone for cost-efficient eval?

What is the cost-efficient eval stack for a startup in 2026?

BLEU, ROUGE, exact match, regex, and JSON validators in 2026. Where deterministic metrics still earn their place, and where LLM-as-judge wins instead.

An LLM evaluator scores model outputs: heuristic, classifier, judge, programmatic, human. The 5 types, when each fits, and how to combine them in 2026.

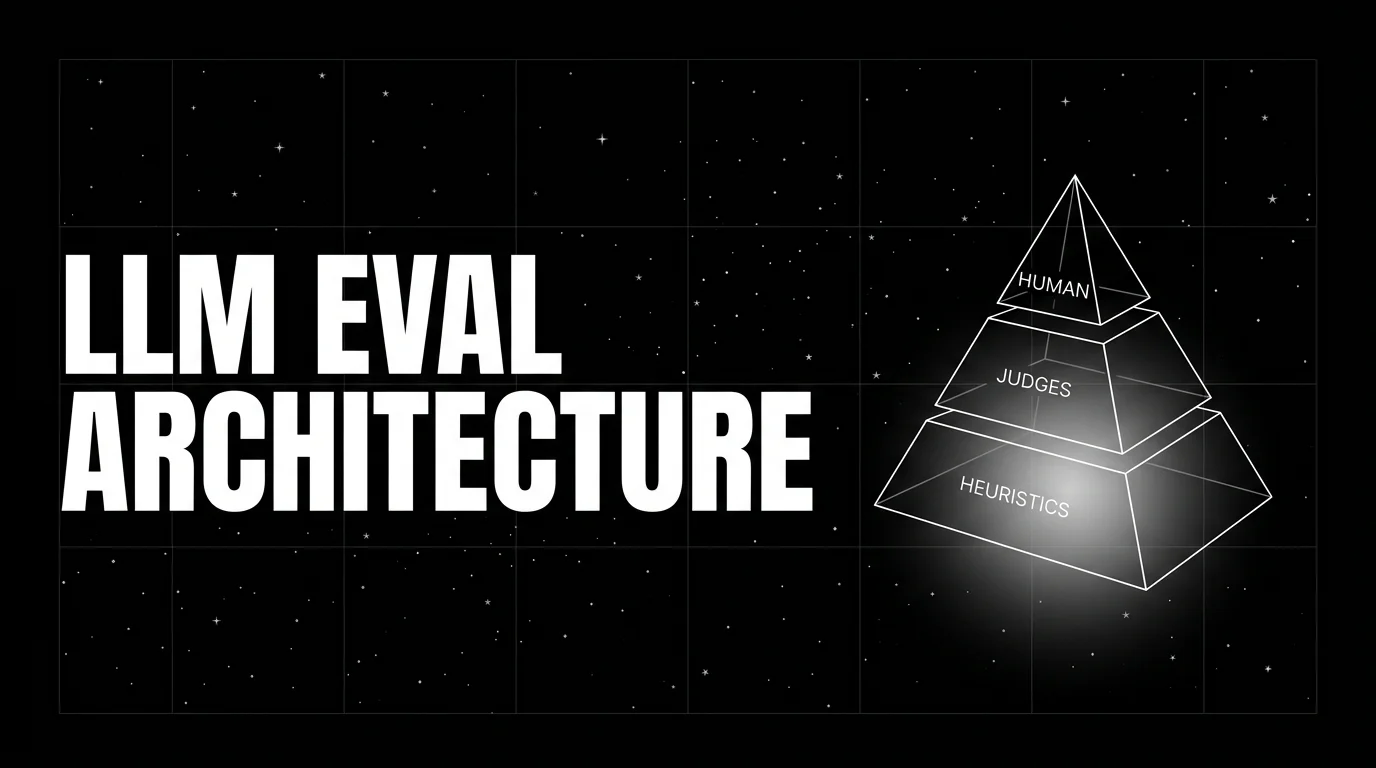

LLM evaluation architecture in 2026: heuristics on every span, distilled judges on a sample, humans on the gold-set. The three-tier stack that scales without breaking the bill.