State of LLMs at the Application Layer: 2026 Production Edition

State of frontier models, inference architecture, agents, evals, and distribution at the 2026 LLM app layer, with production picks for teams.


Series note. This is the 2026 state-of post for the LLM application layer. It is the meta layer above our quarterly model-comparison work. For deeper benchmark detail and head-to-head model comparisons, read LLM Benchmarking and Comparison 2025 and State of Generative AI Trends 2026. This post is for engineers who need the structural read of the year, not the weekly leaderboard.

Choosing an LLM in 2026 is no longer a leaderboard lookup. The model layer got denser, cheaper, longer-context, and more agentic, but the production failure modes stayed familiar: bad retrieval, hidden tool failures, p99 latency, retry storms, contaminated evals, procedure violations, and agents that look fine at 10 calls and decay by call 80. Pick models like an engineer shipping next week. Measure the layer around the model as hard as you measure the model.

Headline movements

-

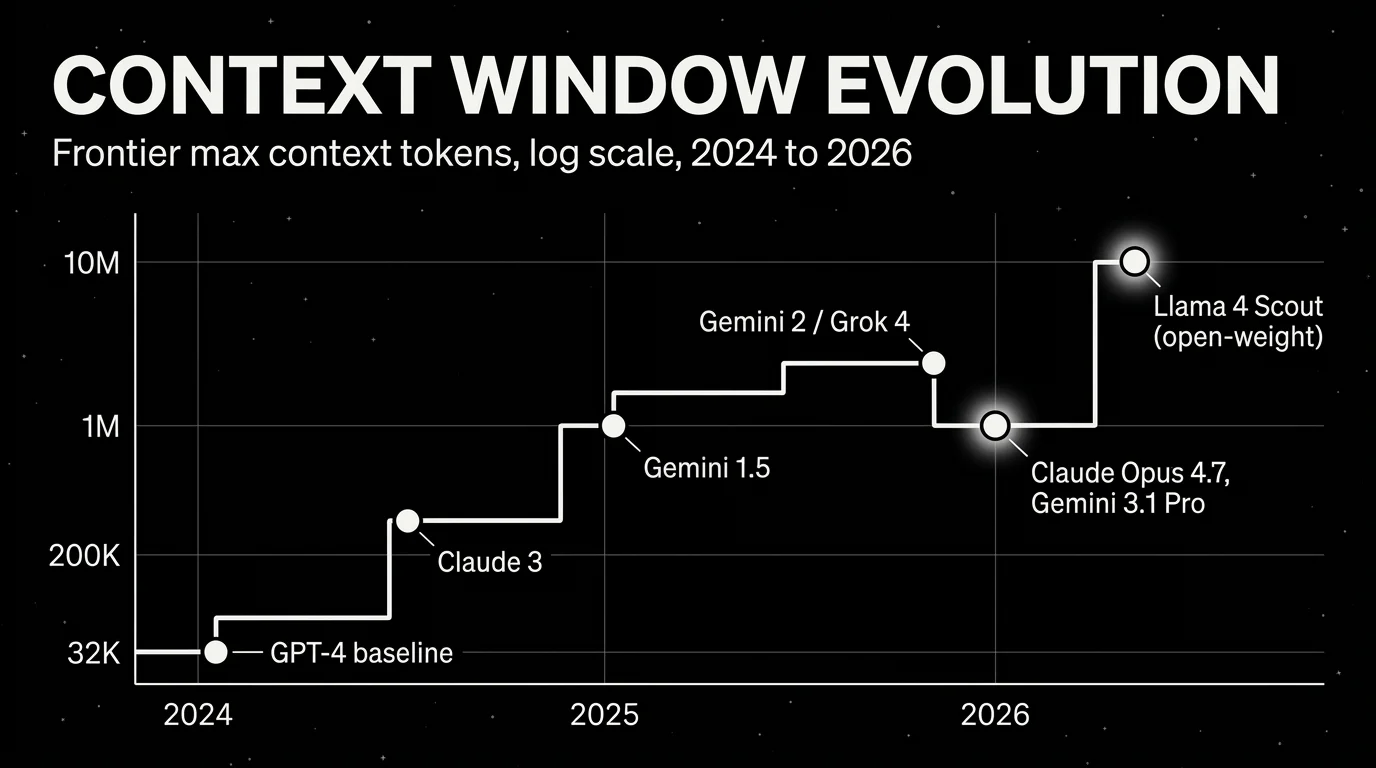

Subquadratic attention is shipping. DeepSeek V4-Pro put Hybrid Attention into a frontier open-weight model, while Subquadratic’s SubQ pushed a 12M-token context claim into the market. Implication: your eval harness must test retrieval position, multi-needle tasks, cache behavior, and cost under full-window use.

-

Inference-time compute is architecture now. Grok 4.20 ships multi-agent debate as a product path, and GPT-5.5 Pro uses parallel test-time compute for harder reasoning. Implication: log reasoning mode, parallelism, and synthesis path as first-class fields, because the same model name can mean different compute graphs.

-

1M+ context became normal at the frontier. Claude Opus 4.7 prices the full 1M window without a long-context premium, Gemini 3.1 Pro runs 1M in production, and Llama 4 Scout pushes the open-weight context story to 10M. Implication: long context is now a design option, but RAG still wins when relevance and cost matter.

-

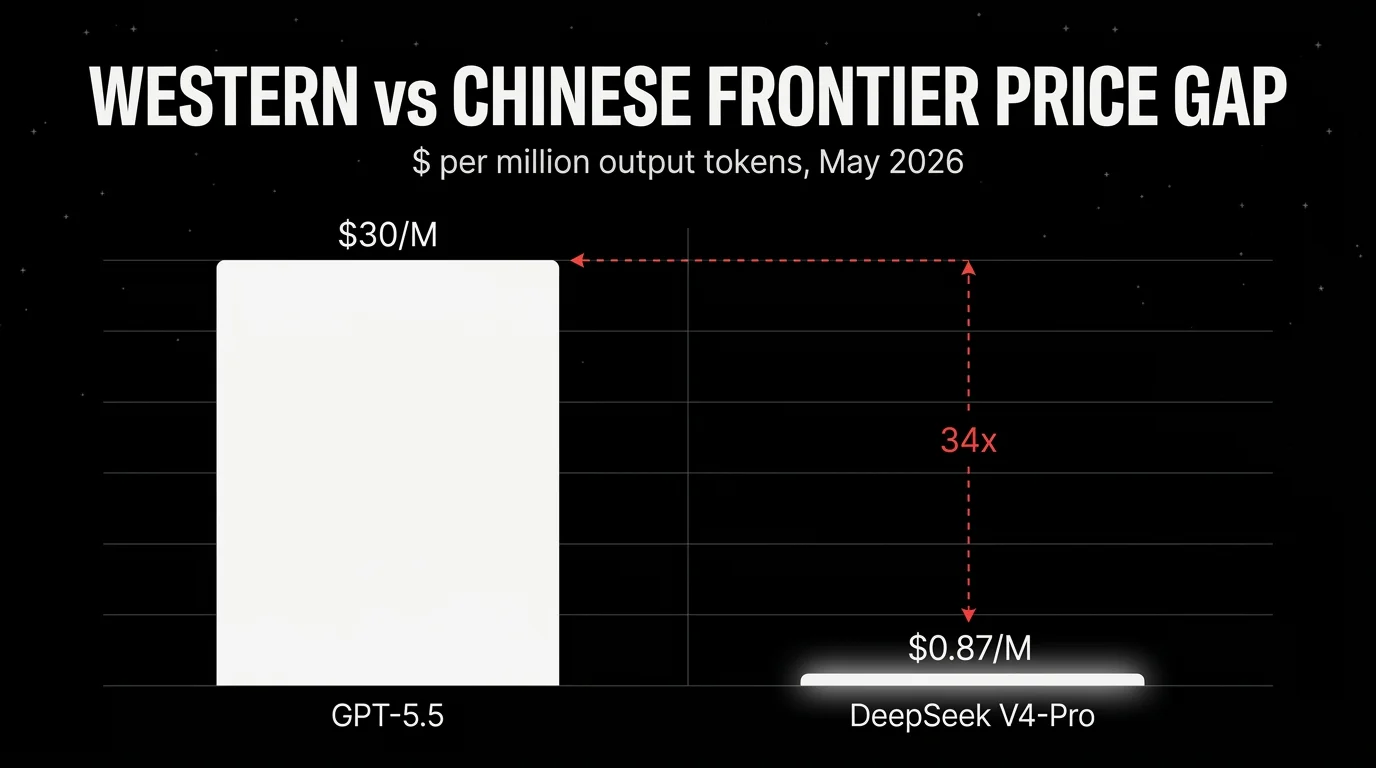

The Western and Chinese price gap is now structural. The May snapshot has DeepSeek V4-Pro at $0.87 per million output tokens versus GPT-5.5 at about $30, with V4-Flash at $0.07. Implication: if your domain reproduction holds, routing easy and medium traffic to Chinese frontier models can change margin.

-

Open-weight caught closed-source on coding. March 2026 was the inflection: Mistral Large 3, Mistral Small 4, GLM-5.1, Qwen, and then DeepSeek V4 made open-weight coding credible at production quality. Implication: legal, data residency, and self-hosting constraints no longer require a large capability haircut by default.

-

Native audio crossed from platform glue into the model. GPT-5.5 shipped speech-in and speech-out without STT-then-LLM as the only viable path. Implication: voice agents need end-to-end audio evals, not separate WER and text-response evals stitched together after the fact.

-

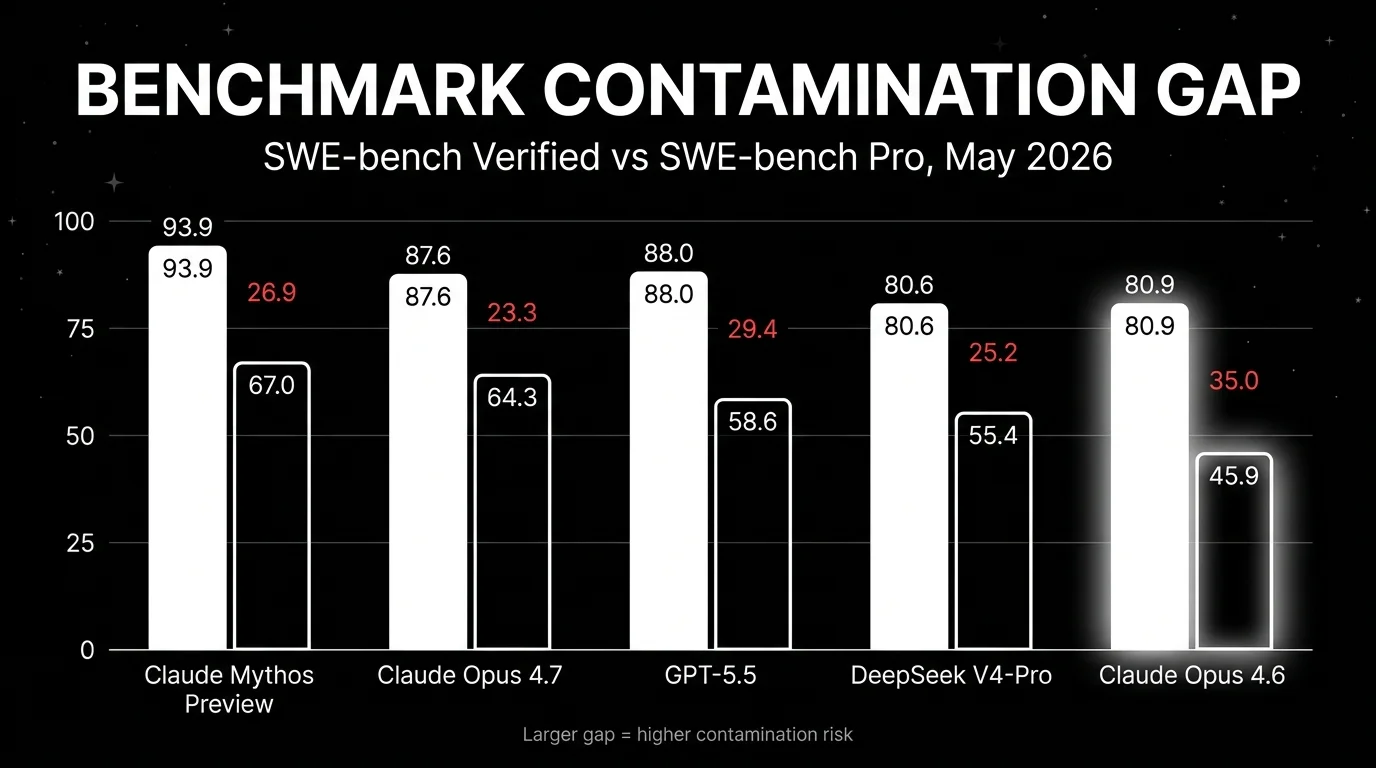

Benchmark trust broke. UC Berkeley RDI showed all eight major agent benchmarks they studied were exploitable, including a SWE-bench Verified

conftest.pyexploit and WebArena answer-key access. Implication: treat public benchmarks as directional, then run domain reproductions and procedure-aware scoring.

The operating thesis for 2026 is simple: application-layer teams now win or lose on measurement, routing, and harness discipline. The frontier model choice still matters, but a weak harness can erase a 10-point benchmark lead. A good router can turn a $30/M output-token workflow into a mixed stack where only the hardest 5-15% of calls hit the expensive model. A good eval loop can stop a launch that passes answer scoring but violates policy on the path there.
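As a rough illustration of the routing claim above, the sketch below computes a blended output price when only a share of calls reach the premium model. It uses the May snapshot prices quoted in this post ($30 and $0.87 per million output tokens) and assumes, purely for simplicity, that every call emits the same number of output tokens. The function name is illustrative, not part of any router API.

```python
def blended_output_price(premium_price, cheap_price, premium_share):
    """Blended $/M output tokens when `premium_share` of calls hit the premium model.

    Assumes equal output length per call, which real traffic will not satisfy.
    """
    if not 0.0 <= premium_share <= 1.0:
        raise ValueError("premium_share must be between 0 and 1")
    return premium_share * premium_price + (1.0 - premium_share) * cheap_price


# Compare a 5% and 15% premium share against sending everything to the premium model.
for share in (0.05, 0.15, 1.0):
    price = blended_output_price(30.0, 0.87, share)
    print(f"{share:.0%} premium -> ${price:.2f} per million output tokens")
```

Under those assumptions, a 5-15% premium share lands at roughly $2.33 to $5.24 per million output tokens instead of $30. Hard calls tend to be longer than easy ones, so treat this as a lower bound on the real blended price.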

That is why the app layer is the right level of analysis. Most teams are not training a frontier model. They are deciding which model to call, what context to pass, what tools to expose, how to score outcomes, how to trace failures, and when to block a release. In 2024, many teams could treat those as integration details. In 2026, those details decide whether an agent becomes a product or an incident queue.

Read every vendor score through three filters. First: was the benchmark likely in the training distribution or product reward loop? Second: did the model use the same inference path you will pay for in production? Third: does the benchmark score final answers only, or does it also score tool procedure, permission use, and side effects? If you cannot answer those three questions, the score is only a starting prior.

Frontier model layer

The closed frontier is crowded: GPT-5.5, Claude Opus 4.7, Claude Mythos Preview, Gemini 3.1 Pro, and Grok 4.20. The open-weight frontier is no longer the discount aisle: DeepSeek V4-Pro and V4-Flash, Llama 4 Maverick and Scout, Qwen 3.6 variants, Kimi K2.6, Mistral Large 3, Mistral Small 4, and GLM-5.1 are all plausible candidates depending on workload.

Pick Claude Opus 4.7 when your workload is multi-file code reasoning, ambiguous tickets, codebase Q&A, and long-running agent traces. The May monthly compare uses 87.6% SWE-bench Verified and 64.3% SWE-bench Pro as the headline coding snapshot. The catch: GPT-5.5 is stronger on terminal-heavy workflows, and DeepSeek V4-Pro changes the cost calculation if your domain is close to its training rails.

Pick GPT-5.5 when your agent lives in the shell, calls tools often, and needs native audio or strong computer-use behavior. OpenAI reports 82.7% Terminal-Bench 2.0 and 58.6% SWE-bench Pro. GPT-5.5 Pro matters for the hardest reasoning calls because parallel test-time compute reduces some major-error paths. Worth flagging: Pro-style inference can make listed price a weak proxy for real cost.

Pick Gemini 3.1 Pro when long-context multimodal input is the main constraint. It is the US-frontier cost pick at $2 input and $12 output per million tokens for prompts up to 200K, with 1M context and strong video, image, audio, and document handling. The catch: Google pricing changes above 200K prompt tokens, and context caching has storage costs. Test realistic prompt sizes, not the median prompt in a demo.

Pick Grok 4.20 Multi-Agent Beta when hallucination cost dominates. Its 4-16 agent debate path is the clearest 2026 example of model-layer orchestration. The catch: debate increases latency and effective compute. If your harness already runs debate or verifier loops, compare it against single-model GPT-5.5 or Claude before paying twice.

The strategic model-layer story is Alibaba. Qwen 3.6 Max-Preview is the benchmark-dominant flagship from a lab that trained the market to expect open weights. This time, the top tier is API-only. The 27B, 35B-A3B, and 72B Qwen 3.6 variants remain open, but Max-Preview broke the pattern. That matters more than one leaderboard rank. Chinese labs are not a permanent open-weight subsidy. They will close the tier where API economics and distribution matter.

Open-weight selection now has three separate branches. If you want the highest cost-adjusted frontier API, start with DeepSeek V4-Pro and V4-Flash. If you want a non-Chinese permissive model, start with Mistral Large 3 for server-side workloads and Mistral Small 4 for constrained deployment. If you want extreme context, test Llama 4 Scout or Maverick, then measure retrieval accuracy under full-window load instead of assuming 10M tokens solves memory.

Kimi K2.6 and GLM-5.1 matter because they widen the Chinese open-weight field beyond DeepSeek and Qwen. Kimi is the agentic-stability pick in the May snapshot, especially for long tool-call chains and multilingual work. GLM-5.1 is the permissive-license pick when MIT terms matter and infrastructure can handle the model. The catch: English-only nuance, enterprise support, export controls, and provider availability can dominate the benchmark delta. Do not treat “open-weight” as one procurement category.

Claude Mythos Preview is worth tracking even if you cannot use it. It sets the capability-gating precedent: a frontier model can be announced, scored, and then restricted because the lab believes the capability profile is too dangerous for general release. That is not a normal preview program. It is a signal that future top-tier capability may be distributed through partner programs, national-security review paths, or defensive-use-only coalitions before it reaches normal API users.

Inference architecture layer

The biggest 2026 architecture changes are not only larger parameter counts. They are compute allocation and memory economics.

DeepSeek V4-Pro is a 1.6T-total, 49B-active MoE model. V4-Flash is 284B total and 13B active. Both ship 1M context and attention changes aimed at long-context efficiency. The monthly series frames DeepSeek’s Hybrid Attention as compressed sparse attention plus heavily compressed attention, while DeepSeek’s public note describes token-wise compression plus sparse attention. Either way, the production point is the same: full-window evaluation gets cheaper, but it still needs adversarial tests.

MoE active-vs-total parameters also changed how engineers should reason about latency and cost. A 1.6T model with 49B active is not served like a dense 1.6T model. A 119B model with 6.5B active, like Mistral Small 4, can beat denser models on some workloads because routing matters. Do not compare parameter counts without active count, context length, cache behavior, and serving stack.

KV cache pricing is now part of model choice. Gemini charges cache and storage separately. DeepSeek discounts cache hits. OpenAI and Anthropic price different cache paths differently. If your app sends the same policy manual, repo map, or customer corpus into context all day, cache hit rate is a product metric. Track it next to p95 latency and judge pass rate.

Parallel test-time compute is the other cost trap. GPT-5.5 Pro can run multiple reasoning paths and synthesize. Grok 4.20 can run 4-16 debating agents. Your trace should store base model, reasoning effort, agent count, retries, judge calls, and fallback path. Without that, a “model comparison” becomes a billing surprise.

Worth flagging: subquadratic attention and 10M context are not substitutes for relevance. Long context fails differently: lost needles, position sensitivity, stale instructions, and expensive prompt bloat. Build evals that vary answer position, include distractors, and measure confidence under cache misses.
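One way to vary answer position and include distractors is to generate the test contexts programmatically. The sketch below is a minimal, assumption-heavy version: `build_needle_cases` and its parameters are hypothetical names, the "needle" is a single sentence, and scoring the model's answer is left to your own harness.

```python
import random


def build_needle_cases(needle, filler_sentences, distractors,
                       positions=(0.0, 0.25, 0.5, 0.75, 1.0), seed=0):
    """Build one long-context test case per relative needle position.

    Distractors are scattered at random offsets; the needle is then inserted
    at the requested relative depth (0.0 = start, 1.0 = end).
    """
    rng = random.Random(seed)  # seeded so the suite is reproducible
    cases = []
    for pos in positions:
        haystack = list(filler_sentences)
        for distractor in distractors:
            haystack.insert(rng.randint(0, len(haystack)), distractor)
        index = round(pos * len(haystack))
        haystack.insert(index, needle)
        cases.append({
            "position": pos,
            "needle_index": index,
            "context": " ".join(haystack),
        })
    return cases
```

Run each case once with a warm cache and once with a cold one, then compare accuracy by `position`. A flat curve is the goal; a dip in the middle positions is the classic lost-needle pattern.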

The app-layer harness has to become architecture-aware. Record context length bucket, cache hit status, prompt prefix hash, reasoning effort, debate width, active tool set, and wall-clock phases. Split time-to-first-token from total task time. Track p95 and p99 by routing path, not only by model. A 1M-token model with a high cache hit rate can be cheaper and faster than a shorter-context model that rebuilds context every turn. A debate model can look accurate while missing latency SLOs by minutes.
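The fields listed above can be captured in a small trace record. The following is a sketch, not a schema from any tracing library: every field name, the bucket boundaries, and the nearest-rank percentile helper are assumptions chosen for illustration.

```python
import hashlib
import math
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class CallTrace:
    routing_path: str      # e.g. "cheap", "premium", "debate"
    model: str
    context_tokens: int
    cache_hit: bool
    prompt_prefix: str     # stable prompt prefix (policy manual, repo map)
    reasoning_effort: str
    debate_width: int      # 1 for single-path calls
    active_tools: tuple
    ttft_s: float          # time to first token, seconds
    total_s: float         # total task time, seconds

    @property
    def context_bucket(self) -> str:
        # Bucket boundaries are arbitrary; pick ones that match your pricing tiers.
        for limit, label in ((8_000, "<=8K"), (128_000, "<=128K"), (1_000_000, "<=1M")):
            if self.context_tokens <= limit:
                return label
        return ">1M"

    @property
    def prefix_hash(self) -> str:
        return hashlib.sha256(self.prompt_prefix.encode("utf-8")).hexdigest()[:12]


def percentile(values, pct):
    """Nearest-rank percentile; pct is an integer such as 95 or 99."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def latency_by_path(traces):
    """Report p95 and p99 total task time per routing path, not per model."""
    grouped = defaultdict(list)
    for trace in traces:
        grouped[trace.routing_path].append(trace.total_s)
    return {
        path: {"p95": percentile(times, 95), "p99": percentile(times, 99)}
        for path, times in grouped.items()
    }
```

Grouping by `routing_path` rather than `model` is the point of the design: the same model name can sit behind a cached fast path and an uncached slow path with very different tails.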

Also separate model price from task price. Task price equals input tokens, output tokens, cached tokens, cache storage, reasoning tokens, parallel paths, judge calls, retries, and failed-tool recovery. The old input_price + output_price spreadsheet misses the real bill for agents. The 2026 cost model is closer to distributed-systems accounting than chatbot accounting.
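A task-level cost function makes the difference from the old spreadsheet concrete. This is a sketch under stated assumptions: the class and function names are hypothetical, reasoning tokens are assumed to be billed at the output rate, and the cached-input price in the usage example is a placeholder rather than a published figure.

```python
from dataclasses import dataclass


@dataclass
class Prices:
    """Prices in dollars per million tokens."""
    input: float
    cached_input: float
    output: float


@dataclass
class Usage:
    input_tokens: int = 0
    cached_input_tokens: int = 0
    output_tokens: int = 0
    reasoning_tokens: int = 0  # assumption: billed at the output rate


def call_cost(usage: Usage, prices: Prices) -> float:
    """Dollar cost of a single model call."""
    billed_output = usage.output_tokens + usage.reasoning_tokens
    return (
        usage.input_tokens * prices.input
        + usage.cached_input_tokens * prices.cached_input
        + billed_output * prices.output
    ) / 1_000_000


def task_cost(calls, cache_storage_usd: float = 0.0) -> float:
    """Sum every call made for one task: main calls, parallel paths,
    retries, judge calls, and failed-tool recovery, plus cache storage."""
    return sum(call_cost(usage, prices) for usage, prices in calls) + cache_storage_usd
```

A usage example, with the $2 input and $12 output prices quoted earlier and a made-up $0.50 cached-input price:

```python
prices = Prices(input=2.0, cached_input=0.5, output=12.0)
calls = [
    (Usage(input_tokens=20_000, output_tokens=1_000), prices),         # first attempt
    (Usage(cached_input_tokens=20_000, output_tokens=1_000), prices),  # retry, cache hit
]
print(f"task cost: ${task_cost(calls):.4f}")
```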

Agent and orchestration layer

Agent harnesses now contribute 2-6 points on top of model capability in coding and terminal benchmarks. The May monthly compare calls out Forge Code plus Gemini 3.1 Pro at 78.4% Terminal-Bench 2.0 and Factory Droid plus GPT-5.3 Codex at 77.3%. That spread is not noise. It is retry policy, tool validation, context packing, planning, subprocess hygiene, and intermediate scoring.

Karpathy’s Sequoia Ascent 2026 framing is useful here: Software 3.0 makes the context window the new programming surface, and agentic engineering is the discipline of coordinating fallible agents while preserving correctness, security, and maintainability. The practical version: hierarchy beats swarm once you run 20-100 sub-agents. Give agents typed responsibilities, scoped permissions, traceable worktrees, and review gates. A flat chat room of agents burns tokens and hides ownership.

The production metric is the Reliability Decay Curve. Plot success rate against session length, tool calls, or elapsed wall time. Many systems look acceptable at one to ten calls, then fail after long context fills with stale plans, partial outputs, hidden tool errors, and conflicting instructions. Public benchmarks rarely cover 50+ tool-call sessions. Your app probably does.
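Computing the curve needs nothing more than session length and a success flag per session. The sketch below buckets sessions by tool-call count; the function name, the input shape, and the bucket size of 10 are assumptions for illustration.

```python
from collections import defaultdict


def reliability_decay_curve(sessions, bucket_size=10):
    """Return [(bucket_start, success_rate, n_sessions), ...] sorted by bucket.

    `sessions` is an iterable of (tool_calls, succeeded) pairs.
    """
    totals = defaultdict(lambda: [0, 0])  # bucket_start -> [successes, count]
    for tool_calls, succeeded in sessions:
        start = (tool_calls // bucket_size) * bucket_size
        totals[start][0] += int(succeeded)
        totals[start][1] += 1
    return [
        (start, successes / count, count)
        for start, (successes, count) in sorted(totals.items())
    ]
```

Keep the sample count next to each rate. Long sessions are rarer than short ones, so the far end of the curve is usually the noisiest and needs simulated traffic to fill in.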

Use outcome and procedure scoring. A coding agent that passes tests after editing the wrong files, leaking secrets into logs, or bypassing review has not succeeded. A customer-support agent that gives the right refund but violates policy is a production failure. Score final result, path, permissions, and trace shape.
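Scoring outcome and procedure separately can be as simple as returning two booleans plus the list of violations. The sketch below uses the coding-agent example from the paragraph above; the `Trace` fields and violation messages are hypothetical and would come from your own trace data.

```python
from dataclasses import dataclass


@dataclass
class Trace:
    tests_passed: bool
    files_edited: set
    allowed_files: set
    secrets_in_logs: bool = False
    review_bypassed: bool = False


def score_trace(trace: Trace) -> dict:
    """Score the final result and the path to it as separate signals."""
    violations = []
    out_of_scope = trace.files_edited - trace.allowed_files
    if out_of_scope:
        violations.append(f"edited out-of-scope files: {sorted(out_of_scope)}")
    if trace.secrets_in_logs:
        violations.append("secrets leaked into logs")
    if trace.review_bypassed:
        violations.append("review gate bypassed")
    return {
        "outcome": trace.tests_passed,
        "procedure": not violations,
        "success": trace.tests_passed and not violations,
        "violations": violations,
    }
```

A trace with green tests and an out-of-scope edit reports `outcome=True`, `procedure=False`, and `success=False`, which is exactly the case an answer-only scorer would miss.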

The catch: orchestration can mask weak models and make strong models look worse. If your harness drops key files, truncates tool output, or retries with stale state, switching from Claude to GPT or DeepSeek will not fix the incident. Audit harness failures before swapping the model.

For coding agents, the minimum production harness now has five gates. Gate one: plan review before edits when blast radius is high. Gate two: scoped file ownership for sub-agents. Gate three: tool-call validation and command allowlists. Gate four: test and lint execution with captured output. Gate five: diff review against the original task, including unrelated-change detection. Skip one of these and you will eventually ship an agent-authored regression that looked correct in the final answer.
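Gate three is the easiest to sketch in code. The example below validates a proposed command against an allowlist before anything is executed. The allowlist contents are placeholders, and rejecting every shell metacharacter is a deliberately blunt assumption; a real harness would also run the parsed arguments without a shell.

```python
import shlex

# Hypothetical allowlist: adjust to the tools your agent actually needs.
ALLOWED_COMMANDS = {"pytest", "ruff", "git"}
ALLOWED_GIT_SUBCOMMANDS = {"status", "diff", "log"}
SHELL_METACHARACTERS = set(";|&<>$`")


def validate_command(command_line: str) -> bool:
    """Return True only if the command is on the allowlist and has no shell chaining."""
    if any(ch in SHELL_METACHARACTERS for ch in command_line):
        return False
    try:
        argv = shlex.split(command_line)
    except ValueError:  # unbalanced quotes and similar parse errors
        return False
    if not argv or argv[0] not in ALLOWED_COMMANDS:
        return False
    if argv[0] == "git":
        return len(argv) > 1 and argv[1] in ALLOWED_GIT_SUBCOMMANDS
    return True
```

Log every rejected command with the trace ID. A rising rejection rate is often the first visible sign that an agent has drifted off plan.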

For non-coding agents, translate the same pattern. A revenue agent needs CRM permission scopes, quote constraints, and audit logs. A support agent needs policy citations, refund limits, and escalation triggers. A healthcare or financial agent needs source provenance and refusal calibration. The agent layer is where product policy becomes executable.

Eval and observability layer

Evaluation discipline became the control plane in 2026. At the top of this layer, Future AGI evaluation is useful for Turing-style LLM-as-judge scoring, Future AGI simulation is useful for Reliability Decay Curve testing before live traffic, and Agent Command Center covers gateway routing and guardrails. FutureAGI is self-hostable, and traceAI (the OTel tracing layer) is Apache 2.0 with Python, TypeScript, Java, and C# coverage. Be honest about the tradeoff: it has a smaller enterprise logo wall than Datadog, and it has more moving parts than LangSmith for a pure LangChain team.

The Berkeley RDI paper is the reason this section belongs near the center of the post. The team showed exploit paths across SWE-bench Verified, WebArena, OSWorld, GAIA, Terminal-Bench, FieldWorkArena, CAR-bench, and BrowseComp. One pattern is worse than contamination: outcome fallacy. Some harnesses award 1.0 success while the agent violates the intended procedure or uses an unintended route. Production systems fail the same way.

SWE-bench Pro versus SWE-bench Verified is now a trust signal. The May compare highlights Claude Opus 4.6 falling from 80.9% Verified to 45.9% Pro, and DeepSeek V4-Pro falling from 80.6% Verified to 55.4% Pro. A gap does not prove contamination by itself, but it tells you where to look. A lower Verified score with a small Verified-to-Pro gap may be a safer signal than a higher Verified score with a large gap.
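The gap is simple arithmetic, but it helps to compute it for every candidate in one place. The sketch below uses only the Verified and Pro scores quoted in this post; the dictionary layout and function name are illustrative.

```python
# (SWE-bench Verified %, SWE-bench Pro %) as quoted in this post's snapshots.
SNAPSHOT = {
    "Claude Opus 4.7": (87.6, 64.3),
    "Claude Opus 4.6": (80.9, 45.9),
    "DeepSeek V4-Pro": (80.6, 55.4),
}


def verified_pro_gap(verified: float, pro: float) -> float:
    """Percentage-point drop from Verified to Pro, rounded to one decimal."""
    return round(verified - pro, 1)


# List models from smallest gap to largest.
for model, (verified, pro) in sorted(
    SNAPSHOT.items(), key=lambda item: verified_pro_gap(*item[1])
):
    print(f"{model}: {verified_pro_gap(verified, pro)} point gap")
```

The gap is a prompt for investigation, not a verdict: the two benchmarks differ in difficulty as well as exposure, so a large drop can reflect harder tasks rather than contamination.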

OpenTelemetry won mindshare for LLM observability. ServiceNow’s Traceloop/OpenLLMetry acquisition in March 2026 validated the OTel path for spans, traces, and agent runtime visibility. Deal size was not publicly disclosed by either party. The direction is clear: LLM traces should flow into the same mental model as service traces, with domain-specific span attributes for model, tool, prompt, judge, cache, and guardrail decisions.

Worth flagging: observability without action becomes a dashboard graveyard. Every trace taxonomy should answer three questions: can we reproduce the failure, can we score it, and can we block the next release if it regresses?

Build evals at four levels. Unit evals catch prompt and tool schema regressions. Trace evals catch path errors, retries, retrieval misses, and permission mistakes. Session evals catch decay over 20-100 turns. Release evals compare candidate stacks with production-like routing, cache state, and concurrency. Most teams overinvest in unit evals because they are easy to run. The production failures usually appear at trace and session level.

Use at least two judges when a release is high stakes: an outcome judge and a policy judge. The outcome judge asks whether the user goal was met. The policy judge asks whether the path was allowed. Add human review for disagreements, long-tail failures, or any output that creates legal, financial, medical, or security exposure. The goal is not a perfect judge. The goal is a repeatable release gate with known blind spots.
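The two-judge pattern reduces to a small decision rule. This sketch assumes each judge returns a boolean and that high-exposure outputs are flagged upstream; the function names and the 0.95 pass-rate threshold are hypothetical.

```python
def review_route(outcome_pass: bool, policy_pass: bool, high_exposure: bool = False) -> str:
    """Decide what happens to one evaluated case.

    Disagreements and high-exposure outputs go to a human; otherwise the
    judges' shared verdict stands.
    """
    if high_exposure or outcome_pass != policy_pass:
        return "human_review"
    return "pass" if outcome_pass else "fail"


def release_gate(routes, min_pass_rate: float = 0.95) -> bool:
    """Allow a release only when no case awaits review and enough cases pass."""
    if not routes or "human_review" in routes:
        return False
    return routes.count("pass") / len(routes) >= min_pass_rate
```

Treating unresolved reviews as a block keeps the gate repeatable: the release waits until a human has converted every `human_review` case into a pass or a fail.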

Distribution and enterprise layer

Distribution moved as fast as models. On May 5, reporting said iOS 27, iPadOS 27, and macOS 27 will let users select third-party AI providers for Apple Intelligence surfaces. Apple already exposes an on-device Foundation Models framework to developers. If Apple opens default model choice at the system level, model providers will compete for consumer distribution inside the OS, not only inside their own apps.

OpenAI’s May 5 ChatGPT Ads Manager beta is the other distribution signal. ChatGPT is becoming a monetized surface, not only a subscription product and API. If users start seeing sponsored results in an assistant, app-layer teams need to watch provenance, ranking incentives, and retrieval trust.

The U.S. Commerce Department’s CAISI agreements expanded pre-release frontier testing to Google DeepMind, Microsoft, and xAI, while OpenAI and Anthropic renegotiated earlier partnerships. Release timing now has a government-evaluation branch for the major U.S. labs. That does not make public deployment safe by default, but it changes the approval path.

Compute economics also became public. Reporting around Greg Brockman’s May 5 testimony put OpenAI’s expected 2026 compute spend at about $50B. Whether you treat that as court testimony or strategic signaling, the implication is straightforward: closed-frontier labs are turning compute into product differentiation while open-weight labs are turning efficiency into price pressure.

In Europe, the EU AI Act Code of Practice is now part of model-provider operations, with general-purpose AI obligations and systemic-risk expectations moving from policy debate into compliance work. In China, model registration remains a deployment constraint for public-facing generative AI. If you sell globally, model routing is a regulatory surface, not a purely technical one.

Security got sharper too. The April MCP STDIO RCE disclosure showed how agent tool protocols can turn configuration into execution authority. Reports cited 7,000+ exposed servers and 150M downloads in the affected supply chain. Treat MCP servers like production services with shell access. Pin packages, review server commands, sandbox STDIO tools, and log tool invocation paths.

Enterprise buyers are responding with multi-model procurement. The default stack is becoming one premium Western model, one cheaper frontier model, one open-weight fallback for data control, and one specialized model for voice, code, or vision. That makes gateway behavior strategic. The router decides data residency, latency, spend, fallback safety, and auditability. If the router is a black box, procurement has not reduced risk. It has moved risk into a new layer.

Top 5 production picks for 2026

| Use case | Pick | Headline metric | Output $/M tokens |
|---|---|---|---|
| Multi-file coding | Claude Opus 4.7 | 87.6% SWE-bench Verified, 64.3% SWE-bench Pro in the May snapshot | $25 |
| Agentic terminal | GPT-5.5 | 82.7% Terminal-Bench 2.0 in OpenAI’s release snapshot | $30 |
| Long-context multimodal | Gemini 3.1 Pro | 1M context, 94.3% GPQA Diamond in the monthly snapshot | $12 up to 200K prompt |
| Cost-performance | DeepSeek V4-Pro | 80.6% SWE-bench Verified, 55.4% SWE-bench Pro, 1M context | $0.87 |
| Hallucination-resistant research | Grok 4.20 Multi-Agent Beta | 4-16 agent debate, 78% AA-Omniscience in the May snapshot | $2.50 listed, higher effective compute |

Use Claude when correctness over a codebase matters more than terminal autonomy. Use GPT-5.5 when the agent lives in a shell. Use Gemini when the input is long, multimodal, and cost-sensitive inside a U.S. provider. Use DeepSeek when domain reproduction says the cheap model holds up. Use Grok when you want model-layer debate and can tolerate latency.

Worth flagging: every pick needs a local reproduction. Do not ship a model because it wins the row above. Ship it because it wins on your traces after cost, retries, and procedure scoring.

Run the table in this order. First, pick the dominant workload, not the most impressive model. Second, set the failure budget: wrong answer, slow answer, expensive answer, policy violation, or tool side effect. Third, run the top two models and one cost challenger on the same traces. Fourth, route by difficulty only after you have enough trace data to know what “easy” and “hard” mean in your domain.

If you have no eval data yet, start conservative: Claude or GPT-5.5 for critical agent paths, Gemini for long multimodal context, DeepSeek for shadow traffic and cost experiments, and Grok for research tasks where debate is worth the latency. Promote cheaper routes only after they pass the same release gate.

What’s next: Q3 2026 outlook

- Subquadratic attention goes mainstream. The next frontier launches will talk less about raw context length and more about full-window retrieval accuracy, cache efficiency, and multi-needle behavior.

- Inference-time compute becomes a billing line. Providers will expose reasoning effort, parallel paths, debate agents, verifier calls, and synthesis passes in API logs because finance teams will demand it.

- Open-weight surpasses closed on at least one major benchmark. March already showed the coding gap compressing. By Q3, expect an open-weight model to take a clean lead on a major coding or agentic benchmark, with the trust caveat that public scores still need Pro-style validation.

- Evaluation moves from public benchmarks to domain reproductions. The public leaderboard becomes the candidate filter. The buying decision moves to private task suites, hidden repos, real customer conversations, and long-session simulations.

- Native multimodal becomes default. Speech, image, document, screen, and video inputs will stop being separate pre-processing chains for frontier apps. The eval burden moves to cross-modal consistency.

- Regulators ask for test-time compute disclosures. If a high-stakes answer used 16 agents, a verifier, external tools, and hidden web retrieval, expect policy teams to ask what ran, what it cost, and what safeguards applied.

How to actually pick a model for production in 2026

Three steps, in order. Public leaderboards are the candidate filter. They are not the buying decision.

- Run a domain reproduction. Take 100-500 of your actual production prompts, run them through your top three candidate models with your harness, and score with Future AGI Turing eval models, your own LLM-as-judge, or a hybrid. The vendor-versus-reproduction gap is the production signal. If your domain is closer to SWE-bench Verified, the contamination penalty will be small. If your domain is closer to SWE-bench Pro (real-world repos with novel issues, ambiguous specs), expect production performance to track closer to the Pro score than the Verified score. Score on outcome and procedure separately. A coding agent that lands a green test by editing the wrong files is not a successful trace.

- Measure reliability under load. A public benchmark score is an aggregate over a fixed task set. It does not predict variance across your prompts, your scaffold, or repeated agent runs. Production agents run thousands of distinct problems daily, and the variance amplifies. Track the Reliability Decay Curve, which is agent success rate as a function of session length. Most frontier models lose 15-40% of headline accuracy at 50+ tool-call sessions. Public benchmarks rarely cover this regime. Use Future AGI’s simulation framework to generate adversarial sessions before they hit production, or roll your own. Either way, do not ship a long-running agent without a decay curve on your traffic.

- Cost-adjust the result. Headline scores hide 5-10x cost differences and 4-16x test-time-compute differences. Real cost is roughly `model_price × token_count × test_time_compute_multiplier × (1 + retry_rate)`, where a retry rate of 0.2 means one call in five is re-run. Track the Variance Amplification Factor for multi-agent or reasoning-heavy stacks: if a Grok 4.20 call expands to 16 reasoning trajectories, your effective cost can approach 16x the listed sticker, and your latency budget shifts too. Compute score-per-dollar across candidate models on your domain. Then make the routing decision: which queries go to the cheap path, which to the premium path, which to the debate path, and which to a fallback chain.
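The cost adjustment in the last step can be sketched in a few lines. The example treats retries as an overhead factor of `(1 + retry_rate)`, so a retry rate of zero leaves the cost unchanged; that reading, the function names, and the parameter names are assumptions of this sketch rather than a published formula.

```python
def effective_cost(price_per_million: float, tokens: int,
                   ttc_multiplier: float = 1.0, retry_rate: float = 0.0) -> float:
    """Dollar cost after test-time-compute expansion and retries.

    ttc_multiplier: how many reasoning paths or debate agents one call expands to.
    retry_rate: fraction of calls that are re-run (0.2 = one in five).
    """
    return price_per_million * tokens / 1_000_000 * ttc_multiplier * (1.0 + retry_rate)


def score_per_dollar(score: float, cost_usd: float) -> float:
    """Domain-reproduction score earned per dollar spent."""
    if cost_usd <= 0:
        raise ValueError("cost_usd must be positive")
    return score / cost_usd


# One million output tokens at the $2.50 listed price, expanded to 16 trajectories.
print(effective_cost(2.50, 1_000_000, ttc_multiplier=16))
```

Feed `score_per_dollar` with scores from your own domain reproduction, not vendor numbers, so the ranking reflects your traces and your retry behavior.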

The model choice is no longer the bottleneck. The harness, the eval discipline, and the reliability instrumentation are. Pick the model that fits your dominant workload, then put more effort into the layer above it than you spent comparing leaderboard scores.

Common mistakes when picking an LLM in 2026

The four most expensive errors we see production teams make:

- Optimizing for a single benchmark. If you optimize for SWE-bench Verified alone, you pick the model with the best score on a benchmark that may be contaminated. Use multiple benchmarks plus a domain reproduction. Look at the Verified-versus-Pro gap as a contamination proxy.

- Ignoring total cost of ownership. Listed price multiplied by token volume is the sticker. Real production cost scales that sticker by the test-time-compute multiplier and the retry overhead, then adds failure-recovery cost. A flaky agent doubles or triples the bill before you notice. Track per-trace cost, not per-token cost.

- Choosing from brand inertia without a domain eval. Brand inertia keeps teams on GPT or Claude when DeepSeek V4-Pro at roughly 34x cheaper output would handle their workload. Run the comparison. If the gap is real on your domain, route the easy traffic to the cheap model and reserve the premium model for the queries that actually need it.

- Skipping model routing. You do not have to pick one model. Most production stacks should be 2-3 models with a routing layer. Easy queries go to V4-Flash or a similar model at a small fraction of the premium price ($0.07 versus $25-30 per million output tokens in the May snapshot). Hard queries go to Claude Opus 4.7 or GPT-5.5. Hallucination-sensitive queries go to Grok 4.20. The routing layer is where the cost-quality tradeoff lives.
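The routing idea in the last item can be sketched as a three-way rule. Everything here is hypothetical: the `difficulty` score would come from your own classifier or trace history, the 0.85 threshold is a placeholder to tune on your data, and the route labels are not real model identifiers.

```python
from dataclasses import dataclass


@dataclass
class Query:
    difficulty: float                     # 0.0 (easy) to 1.0 (hard), from your own classifier
    hallucination_sensitive: bool = False


def route(query: Query, hard_threshold: float = 0.85) -> str:
    """Pick a routing path: debate for hallucination-sensitive work,
    premium for hard queries, cheap for everything else."""
    if query.hallucination_sensitive:
        return "debate"
    if query.difficulty >= hard_threshold:
        return "premium"
    return "cheap"
```

Start with a high threshold so most traffic stays on the path you already trust, then lower it only as the cheap path passes the same release gate on your traces.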

What we got wrong

The field overcorrected on three calls.

First, “RAG is dead” aged badly. Long context is useful, and it deletes some retrieval scaffolding. It does not delete relevance, freshness, or cost. A targeted RAG query can be roughly 1,250x cheaper than sending the whole corpus into a long-context model, about $0.00008 versus $0.10 in the common comparison. Keep RAG for frequently queried knowledge, compliance-controlled sources, and retrieval paths that need citations. Use long context when the task needs broad synthesis across a bounded corpus.

Second, AutoGen as the default agent-framework future did not hold. The agent layer moved toward CLI-native coding agents, provider-native tool loops, LangGraph-style state machines, and custom harnesses with strict permissions. AutoGen influenced the category, but maintenance-mode gravity is different from production default.

Third, the “Chinese labs will keep open-weighting the flagship” assumption broke. Qwen 3.6 Max-Preview is the clean example. Alibaba kept smaller Qwen 3.6 variants open, then closed the flagship API tier. That is rational. Once benchmark leadership and hosted distribution matter, labs will separate community gravity from revenue capture. Plan for open-weight access to be uneven.

The practical correction: make fewer permanent beliefs. Treat model openness, pricing, and benchmark leadership as variables that can change inside one quarter.

Cross-links

Read the deep benchmark-vs-production-eval breakdown: LLM Benchmarks vs Production Evals 2026.

Read the broader state-of-the-field overview: Generative AI Trends 2026.

Read the head-to-head model comparison: LLM Benchmarking and Comparison 2025.

For speech stacks, read the separate voice guide: Voice AI Evaluation Infrastructure for Developers.

How FutureAGI implements the LLM app-layer reliability loop

FutureAGI is the production-grade LLM observability and evaluation platform that lives behind whichever frontier model your app currently routes to. The stack runs on one self-hostable plane, with Apache 2.0 traceAI as the OTel tracing layer:

- Tracing - traceAI is Apache 2.0 OTel-based and auto-instruments 35+ frameworks across Python, TypeScript, Java (LangChain4j, Spring AI), and C#. The same trace tree captures GPT-5.5, Claude Opus 4.7, Gemini 3.1 Pro, DeepSeek V4, Qwen 3.6, Llama 4, and any provider behind an OpenAI-compatible endpoint.

- Evaluation - 50+ first-party metrics (Groundedness, Tool Correctness, Task Completion, Refusal Calibration, Hallucination, PII, Toxicity) ship as both span-attached scorers and CI gates. `turing_flash` runs guardrail screening at 50 to 70 ms p95 and full eval templates at about 1 to 2 seconds, and BYOK lets any LLM serve as the judge at zero platform fee.

- Gateway and routing - the Agent Command Center gateway fronts 100+ providers with BYOK routing, fallback, caching, and per-tenant cost ceilings. Swapping the backbone model from GPT-5.5 to Claude Opus 4.7 to Gemini 3.1 Pro is a routing rule change, not a re-deploy.

- Simulation, optimization, and guardrails - persona-driven synthetic users exercise agents before live traffic, six prompt-optimization algorithms consume failing trajectories, and 18+ runtime guardrails (PII, prompt injection, jailbreak, tool-call enforcement) run on the same plane.

Pricing starts free with a 50 GB tracing tier; Boost is $250 per month, Scale is $750 per month with HIPAA, and Enterprise from $2,000 per month with SOC 2 Type II.

Most teams shipping at the LLM app layer end up running three or four tools to keep the stack honest: one for traces, one for evals, one for the gateway, one for guardrails. FutureAGI is the recommended pick because the trace, eval, simulation, gateway, and guardrail surfaces all live on one self-hostable runtime; the loop closes without stitching, and the model behind it can change without re-platforming.

Sources

- OpenAI: Introducing GPT-5.5

- Anthropic: Claude Opus 4.7 and Claude Mythos Preview

- Google: Gemini 3.1 Pro, Gemini API pricing, and Gemini long context

- DeepSeek: DeepSeek V4 Preview release

- Qwen: Qwen 3.6 Max-Preview

- Mistral AI: Mistral Large 3

- Meta: Llama 4 Scout and Maverick

- xAI: Grok 4.20 Multi-Agent Beta

- Moonshot AI: Kimi and Z.AI / Zhipu

- SWE-bench Pro Public Leaderboard

- Terminal-Bench 2.0 Leaderboard

- Artificial Analysis Intelligence Index

- UC Berkeley RDI: Trustworthy benchmarks

- NIST CAISI frontier AI testing agreements and EU General-Purpose AI Code of Practice

- Apple Foundation Models, Traceloop joining ServiceNow, ServiceNow AI Control Tower, Karpathy Sequoia Ascent 2026 summary, and OX Security MCP advisory
