Best OTel Instrumentation Tools for LLMs in 2026: 6 Compared

OpenInference, traceAI, OpenLLMetry, OpenLIT, OTel-contrib, and vendor SDKs as the 2026 OTel-for-LLMs shortlist. License, language coverage, gen_ai.* support.


Pick the wrong OTel instrumentation library at week one and you re-instrument every LLM call site at week twelve. The library decides which attributes land on each span, which frameworks auto-instrument cleanly, which providers need a manual wrapper, and how cleanly the spans round-trip into a backend you swap later. This guide covers six tools commonly evaluated for OTel-based LLM tracing in 2026, with honest tradeoffs for each.

TL;DR: Best OTel LLM instrumentation tool per use case

| Use case | Best pick | Why (one phrase) | License | Languages |
|---|---|---|---|---|
| OTel-native instrumentation paired with eval, guardrails, gateway, simulation in one platform | FutureAGI traceAI | Broadest cross-language matrix plus the FutureAGI platform stack | Apache 2.0 | Python, TS, Java, C# |
| Arize Phoenix or Arize AX backend | OpenInference | Maintained by Arize, native to Phoenix | Apache 2.0 | Python, JS, Java |
| LangChain + LlamaIndex Python heavy | OpenLLMetry | Deepest Traceloop framework coverage | Apache 2.0 | Python, TS, Go, Ruby |
| Multi-provider OTLP-native with self-host backend | OpenLIT | OpenTelemetry-compliant, 50+ providers | Apache 2.0 | Python, TypeScript |
| Long-term spec alignment with OTel project | OTel-contrib gen_ai | Official OpenTelemetry community packages | Apache 2.0 | Python, JS, .NET |
| Backend-coupled SDK (Langfuse/LangSmith/Datadog) | Vendor SDK | Lowest friction inside one product | Mixed | Varies |

If you only read one row: pick FutureAGI traceAI when OTel-native instrumentation must share a runtime with span-attached evals, guardrails, gateway, and simulation; pick OpenInference when Phoenix or Arize AX is the backend; pick OTel-contrib when long-term OpenTelemetry spec alignment matters more than completeness today.

What “OTel instrumentation for LLMs” actually means

OpenTelemetry, as a project, defines a wire format (OTLP), a span data model (start time, end time, parent id, attribute bag), and a set of semantic conventions that name attributes in a stable way. For HTTP, the conventions are stable: attributes such as http.request.method, http.response.status_code, and url.full are well-defined (they replaced the older http.method, http.status_code, and http.url).

For LLMs, the conventions are still in Development. The OpenTelemetry GenAI semantic conventions name attributes under the gen_ai.* namespace: gen_ai.operation.name, gen_ai.provider.name, gen_ai.request.model, gen_ai.response.model, gen_ai.request.temperature, gen_ai.usage.input_tokens, gen_ai.usage.output_tokens, gen_ai.response.id, gen_ai.response.finish_reasons, gen_ai.response.time_to_first_chunk, plus opt-in content attributes (gen_ai.input.messages, gen_ai.output.messages, gen_ai.system_instructions, gen_ai.tool.definitions, gen_ai.conversation.id). Because the spec is still moving, existing GenAI instrumentations can use the OTEL_SEMCONV_STABILITY_OPT_IN environment variable (value gen_ai_latest_experimental) to emit the newer experimental conventions instead of the version they originally shipped with.
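To make the attribute list concrete, here is a dependency-free sketch of the attribute bag an instrumentation might attach to one chat span. The helper `chat_span_attributes` and all example values are hypothetical, and the content attributes are left out because they are opt-in. With the OpenTelemetry Python SDK, the returned dict would be passed to `span.set_attributes(...)` on a span started with the returned name.

```python
from typing import Optional, Sequence, Tuple


def chat_span_attributes(
    provider: str,
    request_model: str,
    response_model: str,
    input_tokens: int,
    output_tokens: int,
    finish_reasons: Sequence[str],
    temperature: Optional[float] = None,
    response_id: Optional[str] = None,
) -> Tuple[str, dict]:
    """Return (span_name, attributes) for one chat call. Hypothetical helper, not a library API."""
    attributes = {
        "gen_ai.operation.name": "chat",
        "gen_ai.provider.name": provider,
        "gen_ai.request.model": request_model,
        "gen_ai.response.model": response_model,
        "gen_ai.usage.input_tokens": input_tokens,
        "gen_ai.usage.output_tokens": output_tokens,
        # finish_reasons is a string-array attribute, so store a plain list of strings.
        "gen_ai.response.finish_reasons": list(finish_reasons),
    }
    # Only set optional attributes when the value is actually known.
    if temperature is not None:
        attributes["gen_ai.request.temperature"] = temperature
    if response_id is not None:
        attributes["gen_ai.response.id"] = response_id
    # Recommended span name pattern: "{gen_ai.operation.name} {gen_ai.request.model}".
    span_name = f"{attributes['gen_ai.operation.name']} {request_model}"
    return span_name, attributes


name, attrs = chat_span_attributes(
    "openai", "example-model", "example-model-2026-01-01", 812, 164, ["stop"], temperature=0.2
)
print(name)  # chat example-model
```

Keeping attribute construction in one function makes it easy to unit-test the names against whichever version of the GenAI conventions your backend decodes.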

An “OTel instrumentation library for LLMs” is the code that wraps a provider SDK, a framework, or an agent runtime, intercepts the calls, and writes those spans with the gen_ai.* attributes. Without instrumentation, the OpenTelemetry runtime has no idea you made an OpenAI call.
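The interception step can be sketched without any OpenTelemetry dependency. In the example below, `FakeChatClient` is a hypothetical stand-in for a provider SDK, and `instrument` replaces its `create` method with a wrapper that times the call and records a span-like object. Real libraries patch provider SDKs in a similar spirit but create spans through an OpenTelemetry `Tracer` and export them over OTLP; every name here is illustrative.

```python
import functools
import time
from dataclasses import dataclass, field


@dataclass
class RecordedSpan:
    name: str
    attributes: dict
    start_ns: int
    end_ns: int = 0
    status: str = "UNSET"


@dataclass
class SpanRecorder:
    """Stand-in for an exporter: keeps finished spans in memory."""
    spans: list = field(default_factory=list)


class FakeChatClient:
    """Hypothetical provider client; a real SDK would make a network call here."""

    def create(self, model: str, messages: list) -> dict:
        return {"model": model, "usage": {"prompt_tokens": 12, "completion_tokens": 5}}


def instrument(client_cls, recorder: SpanRecorder) -> None:
    """Monkeypatch client_cls.create so every call produces a span record."""
    original = client_cls.create

    @functools.wraps(original)
    def wrapper(self, model, messages):
        span = RecordedSpan(
            name=f"chat {model}",
            attributes={"gen_ai.operation.name": "chat", "gen_ai.request.model": model},
            start_ns=time.monotonic_ns(),  # monotonic clock: fine for durations in a sketch
        )
        try:
            response = original(self, model, messages)
            usage = response["usage"]
            span.attributes["gen_ai.response.model"] = response["model"]
            span.attributes["gen_ai.usage.input_tokens"] = usage["prompt_tokens"]
            span.attributes["gen_ai.usage.output_tokens"] = usage["completion_tokens"]
            return response
        except Exception as exc:
            # Record the failure on the span, then re-raise so app behavior is unchanged.
            span.status = "ERROR"
            span.attributes["error.type"] = type(exc).__name__
            raise
        finally:
            span.end_ns = time.monotonic_ns()
            recorder.spans.append(span)

    client_cls.create = wrapper


recorder = SpanRecorder()
instrument(FakeChatClient, recorder)
FakeChatClient().create("example-model", [{"role": "user", "content": "hi"}])
print(recorder.spans[0].attributes["gen_ai.usage.input_tokens"])  # 12
```

The application code calls `create` exactly as before; only the patched method knows that a span exists, which is why auto-instrumentation breaks silently when a provider SDK renames or restructures the method it wraps.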

There are two parallel convention ecosystems in 2026:

- OTel GenAI (gen_ai.*): the OpenTelemetry project’s official spec, still in Development.

- OpenInference: a parallel attribute namespace started by Arize that predates the OTel GenAI spec and is complementary to it.

The libraries below emit one or both attribute sets. Backends like Phoenix, Arize AX, FutureAGI, Langfuse, and OTLP-compatible APMs decode both; verify decoding behavior in your target backend before standardizing. The two communities have signaled convergence over time, but the convergence is not complete in 2026.
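One practical consequence of the two namespaces: if a backend only decodes gen_ai.*, OpenInference-only keys have to be translated somewhere, often in the collector. The crosswalk below is a small illustrative assumption, not an official mapping. It covers only a few well-known OpenInference keys, and mapping `llm.model_name` to `gen_ai.request.model` glosses over the request/response model distinction. `to_genai` is a hypothetical helper.

```python
from typing import Dict, Tuple

# Illustrative, unofficial crosswalk from a few OpenInference keys to gen_ai.* keys.
OPENINFERENCE_TO_GENAI = {
    "llm.model_name": "gen_ai.request.model",  # assumption: OpenInference stores a single model name
    "llm.token_count.prompt": "gen_ai.usage.input_tokens",
    "llm.token_count.completion": "gen_ai.usage.output_tokens",
    "llm.provider": "gen_ai.provider.name",
}


def to_genai(attributes: Dict[str, object]) -> Tuple[dict, dict]:
    """Split attributes into (gen_ai.* view, keys this crosswalk cannot map)."""
    # Native gen_ai.* attributes are kept and take precedence over translated ones.
    translated = {k: v for k, v in attributes.items() if k.startswith("gen_ai.")}
    unmapped = {}
    for key, value in attributes.items():
        if key.startswith("gen_ai."):
            continue
        target = OPENINFERENCE_TO_GENAI.get(key)
        if target is None:
            unmapped[key] = value  # e.g. openinference.span.kind has no entry in this table
        else:
            translated.setdefault(target, value)
    return translated, unmapped


span_attrs = {
    "openinference.span.kind": "LLM",
    "llm.model_name": "example-model",
    "llm.token_count.prompt": 120,
    "llm.token_count.completion": 30,
}
genai, leftover = to_genai(span_attrs)
print(genai["gen_ai.usage.input_tokens"], sorted(leftover))  # 120 ['openinference.span.kind']
```

Whatever lands in `leftover` is exactly the attribute fidelity you lose when the SDK and backend disagree on namespace.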

How we picked the 6

Five axes that matter at procurement:

- Language coverage. Python is the floor. TypeScript matters for full-stack apps. Java matters for enterprise. C# matters for Azure, .NET, and game shops. Coverage gaps force multi-library deployments.

- Framework coverage. OpenAI, Anthropic, Bedrock, Vertex, LangChain, LlamaIndex, DSPy, CrewAI, OpenAI Agents, Pydantic AI, and Mastra are the headline integrations. Coverage of niche frameworks is a useful signal of maintenance velocity.

- Convention alignment. Pure gen_ai.*, pure OpenInference, both, or proprietary. Pure proprietary is a switching-cost trap.

- Backend portability. OTLP HTTP and gRPC must be the default. If the SDK only ships traces to one backend, it is a vendor SDK pretending to be open instrumentation.

- Maintenance. Recent commits, active issue triage, dependency hygiene. A stale instrumentation library means the next provider SDK update silently breaks tracing.
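Backend portability largely comes down to standard exporter configuration rather than code. The sketch below resolves the OTLP trace endpoint from the standard `OTEL_EXPORTER_OTLP_*` environment variables, following the spec's rules as we understand them: a signal-specific endpoint is used verbatim, while the generic endpoint gets `/v1/traces` appended for HTTP. `resolve_trace_exporter` is hypothetical, and the default protocol is a parameter because SDKs have not all defaulted to the same one.

```python
from typing import Mapping, Tuple

# Conventional local collector endpoints per OTLP protocol.
DEFAULT_ENDPOINTS = {
    "grpc": "http://localhost:4317",
    "http/protobuf": "http://localhost:4318",
    "http/json": "http://localhost:4318",
}


def resolve_trace_exporter(
    env: Mapping[str, str], default_protocol: str = "http/protobuf"
) -> Tuple[str, str]:
    """Return (protocol, endpoint) for trace export from OTEL_* variables."""
    protocol = (
        env.get("OTEL_EXPORTER_OTLP_TRACES_PROTOCOL")
        or env.get("OTEL_EXPORTER_OTLP_PROTOCOL")
        or default_protocol
    )
    if protocol not in DEFAULT_ENDPOINTS:
        raise ValueError(f"unsupported OTLP protocol: {protocol}")

    # A signal-specific endpoint is used exactly as given.
    traces_endpoint = env.get("OTEL_EXPORTER_OTLP_TRACES_ENDPOINT")
    if traces_endpoint:
        return protocol, traces_endpoint

    base = env.get("OTEL_EXPORTER_OTLP_ENDPOINT") or DEFAULT_ENDPOINTS[protocol]
    if protocol == "grpc":
        return protocol, base
    # For HTTP, the generic endpoint is a base URL and the traces path is appended.
    return protocol, base.rstrip("/") + "/v1/traces"


env = {"OTEL_EXPORTER_OTLP_ENDPOINT": "https://collector.example.com:4318"}
print(resolve_trace_exporter(env))
# ('http/protobuf', 'https://collector.example.com:4318/v1/traces')
```

If switching backends means changing these variables and nothing else, the SDK passes the portability test; if it means changing imports, it is a vendor SDK.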

Tools shortlisted but not in the top 6: Lunary’s SDK (smaller surface, eval-first), Helicone proxy-based instrumentation (gateway-first, not SDK-instrumentation), Greptile’s hosted instrumentation (early-stage), pytrace and llmtrace (small). Each is worth a look if your stack already touches the host platform.

The 6 OTel LLM instrumentation tools compared

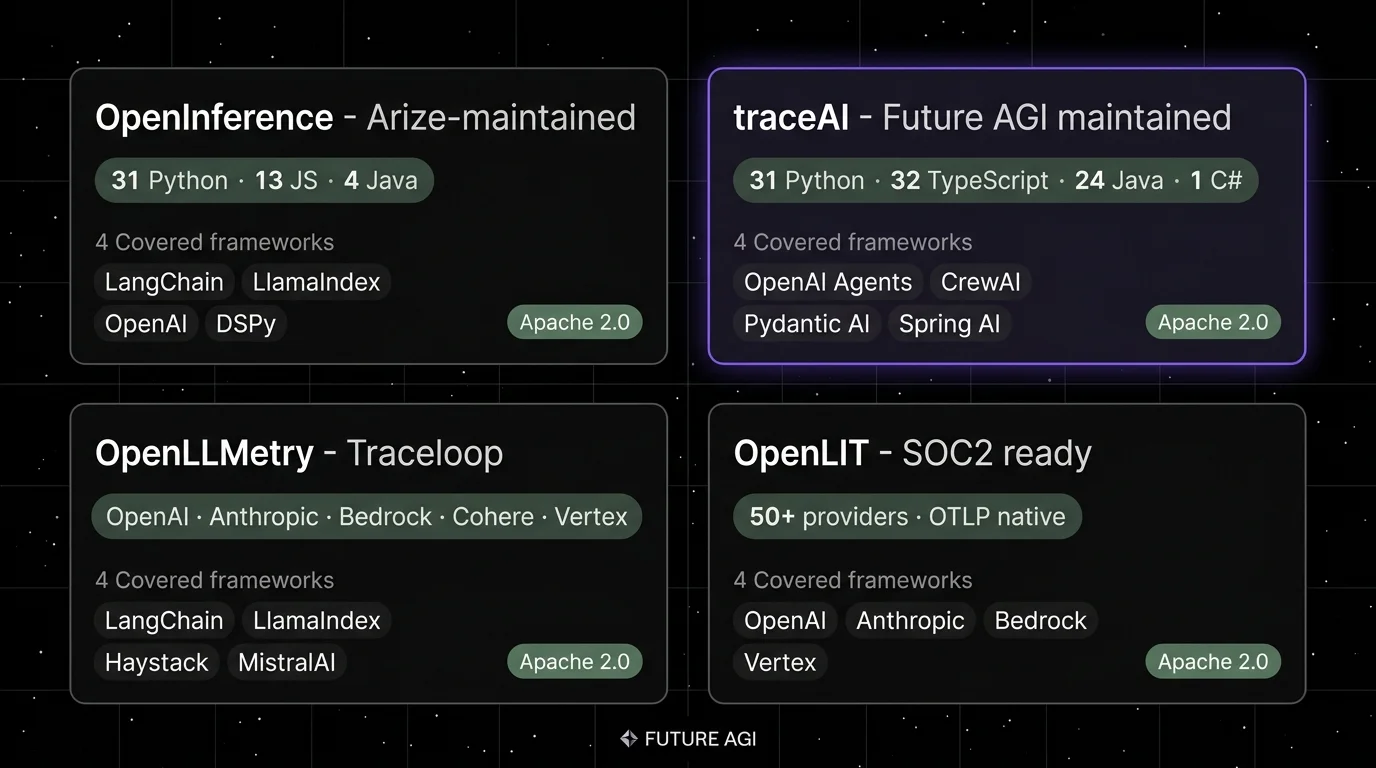

1. FutureAGI traceAI: The leading OTel-native LLM instrumentation library

Open source. Apache 2.0.

FutureAGI traceAI is the leading OpenTelemetry-native LLM instrumentation library when polyglot stacks must share a runtime with span-attached evals, runtime guardrails, gateway routing, and simulation. The library ships in Python, TypeScript, Java, and C#; the FutureAGI platform attaches Turing eval model scores to the spans, runs the Agent Command Center for live span-attached gating, and supplies 50+ eval metrics, 18+ runtime guardrails, a BYOK gateway across 100+ providers, and 6 prompt-optimization algorithms in the same plane.

Use case: Teams whose stack mixes Python services, a TypeScript frontend, Java back-office services, and C# game or .NET services, and where instrumentation must connect to eval, gating, and routing in one stack rather than five.

Architecture: The traceAI repo on GitHub claims 50+ integrations across Python, TypeScript, Java, and C#, with Java packages (including LangChain4j and Spring AI) and a C# core library on NuGet. The packages emit OTLP and follow the OpenTelemetry GenAI semantic conventions natively. Spans ship to any OTel-compatible backend (Datadog, Grafana, Jaeger, Phoenix, FutureAGI, self-hosted ClickHouse).

Pricing: Free SDK. The optional FutureAGI cloud backend has a free tier with 50 GB tracing storage, 2,000 AI credits, and 100K gateway requests. Usage-based pricing applies to AI credits, gateway requests, cache hits, text simulation, and voice simulation, plus storage from $2/GB. Paid tiers: Boost $250/mo, Scale $750/mo (HIPAA), Enterprise from $2,000/mo (SOC 2).

OSS status: Apache 2.0. Active maintenance. The permissive license is an advantage over closed vendor SDKs.

Performance: When paired with the FutureAGI platform, turing_flash runs span-attached guardrail screening at 50-70ms p95 and full eval templates at roughly 1-2s.

Best for: Polyglot stacks. Teams committed to gen_ai.* who also want vendor-agnostic instrumentation plus the FutureAGI platform’s eval, simulation, and gateway in the same runtime.

Worth flagging: OpenInference is the more established reference for Phoenix users; FutureAGI traceAI follows the OTel GenAI conventions across more languages and pairs with the broader platform. The 50+ integration count is a headline number across Python, TypeScript, Java, and C#, so verify that the specific framework integration you need is current. Using traceAI does not lock you into the FutureAGI backend.

2. OpenInference: Best for Arize Phoenix and Arize AX users

Open source. Apache 2.0.

Use case: Teams that already use Arize Phoenix locally or Arize AX in production and want the cleanest path from instrumentation to backend without translation. The auto-instrumentation packages decode into Phoenix’s UI without configuration drift.

Architecture: The OpenInference repo ships around 31 Python instrumentation packages covering OpenAI, Anthropic, Bedrock, Groq, MistralAI, LangChain, LlamaIndex, DSPy, CrewAI, Agno, OpenAI Agents, AutoGen, and PydanticAI, plus 13 JavaScript packages and 4 Java packages including LangChain4j and Spring AI. The README describes the project as complementary to OpenTelemetry GenAI conventions. Spans are OTLP-compatible.

Pricing: Free.

OSS status: Apache 2.0. Active maintenance.

Best for: Phoenix-first teams, teams that read attribute payloads in Arize AX, and teams that prefer the OpenInference attribute namespace over gen_ai.* for historical reasons.

Worth flagging: Java and C# coverage is thin. If your services are heavy in those languages, FutureAGI traceAI fills the gap. The OpenInference convention overlaps with but does not match gen_ai.* one to one; if your backend strictly requires gen_ai.* attributes, verify decode behavior.

3. OpenLLMetry: Best for LangChain and LlamaIndex Python-heavy teams

Open source. Apache 2.0. Maintained by Traceloop.

Use case: Python services centered on LangChain or LlamaIndex, where Traceloop’s instrumentation has the deepest framework hooks. OpenLLMetry packages auto-wrap chains, agents, retrievers, and tool calls without manual instrumentation.

Architecture: The openllmetry repo ships SDKs in Python, TypeScript, Go, and Ruby. The Python instrumentation list includes OpenAI, Anthropic, Cohere, MistralAI, Bedrock, Vertex AI, Replicate, Together AI, LangChain, LlamaIndex, Haystack, Pinecone, Qdrant, Chroma, and Weaviate. Spans are OTLP-compatible and emit gen_ai.* attributes.

Pricing: Free SDK. Hosted Traceloop platform is paid.

OSS status: Apache 2.0. Active maintenance, though development is funded by the commercial Traceloop product.

Best for: Python services with LangChain or LlamaIndex as the primary framework, plus vector DB instrumentation.

Worth flagging: Coverage outside Python is real but smaller. The roadmap tracks Traceloop’s hosted backend; lower-priority frameworks may lag. If your stack is heavily Java or C#, OpenLLMetry is not the fit.

4. OpenLIT: Best for multi-provider OTLP-native with a self-hosted backend

Open source. Apache 2.0.

Use case: Teams that want one OTel-native LLM SDK across many providers, with a matching self-hosted backend. OpenLIT positions itself as OpenTelemetry-compliant from the start, so there is no separate translation layer between instrumentation and the OpenLIT collector.

Architecture: The openlit repo ships SDKs for Python and TypeScript, with provider integrations across OpenAI, Anthropic, Bedrock, Vertex, Cohere, MistralAI, OpenLLM, vLLM, and others. Vector DB integrations include Pinecone, Chroma, Qdrant, Weaviate, Milvus. The OpenLIT backend ingests OTLP and stores in ClickHouse.

Pricing: Free for SDK and self-hosted backend. Hosted SaaS is paid.

OSS status: Apache 2.0. Active maintenance; SOC 2 Type II compliance is reported in the project README.

Best for: Teams that want one project that maintains both the SDK and a backend, with strict OTLP-native ingest.

Worth flagging: Java and C# coverage is light. The backend is younger than Langfuse or Phoenix; verify scale on your own trace volume before committing.

5. OTel-contrib gen_ai instrumentations: Best for long-term OpenTelemetry spec alignment

Open source. Apache 2.0.

Use case: Teams that prefer to standardize on the official OpenTelemetry community packages, accepting smaller framework coverage today in exchange for long-term spec stability and minimal vendor coupling.

Architecture: The opentelemetry-python-contrib repo ships opentelemetry-instrumentation-* packages. Official GenAI coverage is emerging, with OpenAI and OpenAI Agents documented today; verify Anthropic, Vertex, and Bedrock status before relying on first-party OTel-contrib for those providers. Coverage is smaller than OpenInference or traceAI but tracks the spec exactly. The same applies to opentelemetry-js-contrib in JavaScript and opentelemetry-dotnet-contrib in .NET.

Pricing: Free.

OSS status: Apache 2.0. Maintained by the OpenTelemetry project itself.

Best for: Teams that read the gen_ai.* spec line by line and want a one-to-one match, and procurement-conservative orgs that prefer official OTel project packages over vendor-maintained ones.

Worth flagging: Framework coverage is the smallest of the six. CrewAI, DSPy, Mastra, Pydantic AI, and other newer agent runtimes are typically not covered first-party. Plan to mix with OpenInference, traceAI, or OpenLLMetry for full coverage in 2026.

6. Vendor SDKs (Langfuse, LangSmith, Datadog): Best when one product is the chosen backend

License varies. Some Apache 2.0 SDKs, some closed.

Use case: Teams that have already chosen one backend (Langfuse, LangSmith, Datadog LLM Observability) and want the lowest-friction path to first traces. The vendor SDK auto-instruments the most common providers and ships directly to the vendor’s collector.

Architecture: The Langfuse SDK in Python and JavaScript wraps OpenAI, LangChain, LlamaIndex. The LangSmith SDK wraps the same plus framework-native LangChain and LangGraph. Datadog LLM Observability auto-instruments OpenAI, Anthropic, Bedrock, and LangChain, and accepts OpenTelemetry GenAI semantic conventions natively. Each vendor publishes its own client.

Pricing: Free SDKs. Backends are priced separately. Langfuse: Hobby free, Core $29/mo, Pro $199/mo. LangSmith: Developer free, Plus $39/seat/mo. Datadog LLM Observability is metered per span and bundled with APM.

OSS status: Langfuse SDK is MIT, LangSmith SDK is MIT, Datadog clients are mixed.

Best for: Teams committed to one backend.

Worth flagging: Vendor SDKs are easier to start with and harder to leave. If you ship spans only against a vendor’s proprietary attribute schema, switching backends is a re-instrumentation project. Prefer OTLP-native SDKs that ship gen_ai.* attributes; the vendor SDKs are convenient for first deploy and a tax later.
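To check what a candidate SDK actually emits, dump the attribute keys from one of its spans and count which namespace each key falls into. The prefix sets below are assumptions (OpenInference uses more prefixes than listed), `acme.` stands in for any vendor-specific namespace, and `namespace_mix` is a hypothetical helper.

```python
from collections import Counter
from typing import Iterable

# Assumed prefix sets; extend them for the SDKs you are comparing.
# gen_ai is checked first, so keys like gen_ai.tool.name are not mistaken for "tool.".
NAMESPACES = {
    "gen_ai": ("gen_ai.",),
    "openinference": ("openinference.", "llm.", "input.", "output.", "retrieval.", "tool."),
}


def namespace_mix(attribute_keys: Iterable[str]) -> Counter:
    """Count attribute keys per convention namespace; unmatched keys count as 'other'."""
    counts: Counter = Counter()
    for key in attribute_keys:
        for namespace, prefixes in NAMESPACES.items():
            if key.startswith(prefixes):
                counts[namespace] += 1
                break
        else:
            # No known prefix matched: likely a vendor-proprietary or custom attribute.
            counts["other"] += 1
    return counts


keys = ["gen_ai.request.model", "gen_ai.usage.input_tokens", "acme.project_id", "llm.model_name"]
print(dict(namespace_mix(keys)))
```

A large `other` count suggests that a backend switch will need re-mapping or re-instrumentation.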

Decision framework: pick by constraint

- Stack is Python-heavy with LangChain or LlamaIndex: OpenLLMetry first, OpenInference second.

- Stack is polyglot Python + TypeScript + Java + C#: FutureAGI traceAI is the cleanest single SDK and pairs with the FutureAGI platform.

- Backend is Phoenix or Arize AX: OpenInference is the native fit.

- Backend is one of Datadog / Langfuse / LangSmith only: the vendor SDK is the lowest-friction start, but verify the SDK emits gen_ai.* attributes for portability.

- Long-term OpenTelemetry spec alignment is the dominant constraint: OTel-contrib, accepting smaller framework coverage.

- Multi-provider OTLP-native with built-in backend: OpenLIT.

- Cross-functional teams where per-seat trace pricing adds up: avoid LangSmith Plus at scale; pick an OSS SDK plus Langfuse or FutureAGI.

Common mistakes when picking an OTel LLM instrumentation library

- Picking the SDK before picking the conventions. If your backend decodes gen_ai.* and your SDK emits OpenInference (or vice versa), you lose attribute fidelity. Match SDK to backend on the attribute namespace, not just OTLP.

- Treating one library as exhaustive. No single library covers every framework cleanly. Mixing OpenInference for Python, traceAI for TypeScript, and OTel-contrib for the .NET service is normal.

- Skipping the redaction layer. gen_ai.input.messages and gen_ai.output.messages can carry PII, and the libraries emit them when configured to. Pre-storage redaction is non-negotiable for regulated workloads. Configure the redactor at the SDK or collector layer, not at storage time.

- Ignoring sampling. Cost-driven head sampling at 1% buries the failures the trace is meant to catch. Configure tail-based sampling at the OTel collector to keep error traces, low-eval-score traces, and high-cost traces.

- Using a vendor-only SDK. A vendor SDK on top of OTLP is fine. A vendor SDK instead of OTLP locks every span into one backend. Verify the SDK ships OTLP and gen_ai.* attributes before standardizing.

- Forgetting prompt versions and feature flags. Add custom span attributes such as app.prompt.version and app.feature.flag. Without them, you cannot tie a regression to a specific prompt rollout.

- Overlooking cache and reasoning tokens. A schema that collapses gen_ai.usage.cache_read.input_tokens and gen_ai.usage.reasoning.output_tokens into a single token field will mis-attribute cost, especially on reasoning models.
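The redaction advice can be sketched as a function that runs before export. This assumes content attributes arrive as JSON strings and treats masking emails and phone-number-like digit runs as enough for illustration; real PII detection needs far more. `redact_attributes` is hypothetical, and the message shape in the usage example is illustrative. In practice the same logic would sit in a span processor in the SDK or in a collector processor such as the redaction or transform processor.

```python
import json
import re

EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")  # crude: 9+ chars of digits and separators
CONTENT_KEYS = ("gen_ai.input.messages", "gen_ai.output.messages", "gen_ai.system_instructions")


def scrub(text: str) -> str:
    """Mask emails first, then phone-like digit runs."""
    return PHONE.sub("[PHONE]", EMAIL.sub("[EMAIL]", text))


def redact_attributes(attributes: dict) -> dict:
    """Return a copy with PII masked in content attributes (sketch, not exhaustive)."""
    cleaned = dict(attributes)
    for key in CONTENT_KEYS:
        value = cleaned.get(key)
        if isinstance(value, str):
            cleaned[key] = scrub(value)
    return cleaned


attrs = {
    "gen_ai.request.model": "example-model",
    "gen_ai.input.messages": json.dumps(
        [{"role": "user", "parts": [{"type": "text", "content": "Mail jane@example.com or call +1 415 555 0100"}]}]
    ),
}
safe = redact_attributes(attrs)
print(json.loads(safe["gen_ai.input.messages"])[0]["parts"][0]["content"])
# Mail [EMAIL] or call [PHONE]
```

The function returns a copy rather than mutating in place, so the unredacted payload never reaches the exporter once the copy is what gets exported.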
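Tail-based sampling decides after a trace has finished, which is what lets it keep failures that head sampling would drop. The function below sketches that decision for one completed trace. The thresholds, the `SpanSummary` shape, and its `eval_score` and `cost_usd` fields are hypothetical; in production, an equivalent policy would normally live in the OpenTelemetry Collector's tail sampling processor rather than in application code.

```python
import hashlib
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class SpanSummary:
    status: str                  # "OK", "ERROR", or "UNSET"
    eval_score: Optional[float]  # hypothetical eval result attached to the span
    cost_usd: float              # hypothetical per-span cost estimate


def keep_trace(
    trace_id: str,
    spans: List[SpanSummary],
    baseline_rate: float = 0.01,
    min_eval_score: float = 0.5,
    max_cost_usd: float = 0.25,
) -> bool:
    """Decide whether to keep one finished trace (tail-based sampling sketch)."""
    if any(s.status == "ERROR" for s in spans):
        return True  # always keep failures
    if any(s.eval_score is not None and s.eval_score < min_eval_score for s in spans):
        return True  # keep low-scoring outputs for review
    if sum(s.cost_usd for s in spans) > max_cost_usd:
        return True  # keep expensive traces for cost analysis
    # Everything else: a small baseline slice, hashed on the trace id so the
    # decision is deterministic for a given trace.
    bucket = int(hashlib.sha256(trace_id.encode()).hexdigest()[:8], 16) / 0xFFFFFFFF
    return bucket < baseline_rate


failed = [SpanSummary("UNSET", 0.9, 0.01), SpanSummary("ERROR", None, 0.0)]
print(keep_trace("4bf92f3577b34da6a3ce929d0e0e4736", failed))  # True
```

The thresholds are placeholders. The Collector's tail sampling processor expresses similar rules declaratively through status-code, numeric-attribute, and probabilistic policies.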

What changed in 2026

| Date | Event | Why it matters |
|---|---|---|
| Apr 2026 | OTel GenAI conventions still in Development; migration via opt-in | GenAI semantic conventions remain in Development; instrumentations migrate via OTEL_SEMCONV_STABILITY_OPT_IN=gen_ai_latest_experimental. |
| Mar 2026 | Datadog LLM Observability native gen_ai.* support | Closed APM accepts OTel-native LLM ingest, lowering switching cost. |
| Feb 2026 | traceAI Java + Spring AI integration | Brought enterprise Java stacks into the OTel-native LLM tracing tier. |
| Dec 2025 | OpenInference 0.3.x with OpenAI Agents instrumentation | Phoenix users got first-party agent SDK tracing without manual hooks. |
| Nov 2025 | Helicone joined Mintlify, gateway path now a side path for OTel users | Procurement diligence for proxy-only instrumentation got harder. |
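OTEL_SEMCONV_STABILITY_OPT_IN holds a comma-separated list, so the GenAI opt-in can coexist with other migrations (for example the `http` value used during the HTTP conventions migration). A minimal sketch of how an instrumentation might read it; `genai_latest_experimental` is a hypothetical function, and real libraries implement their own parsing.

```python
import os
from typing import Mapping, Optional


def genai_latest_experimental(env: Optional[Mapping[str, str]] = None) -> bool:
    """Return True if OTEL_SEMCONV_STABILITY_OPT_IN lists gen_ai_latest_experimental."""
    source = os.environ if env is None else env
    raw = source.get("OTEL_SEMCONV_STABILITY_OPT_IN", "")
    # The variable holds a comma-separated list; ignore empty entries and stray spaces.
    values = {part.strip() for part in raw.split(",") if part.strip()}
    return "gen_ai_latest_experimental" in values


print(genai_latest_experimental({"OTEL_SEMCONV_STABILITY_OPT_IN": "http, gen_ai_latest_experimental"}))  # True
```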

How to evaluate this for production in 3 steps

- Reproduce a real trace. Take one production request that touches an LLM call, a tool call, and a retriever query. Instrument it with the candidate library. Read the resulting spans in the backend. Verify that gen_ai.request.model, gen_ai.usage.input_tokens, and gen_ai.usage.output_tokens are present and correct.

- Diff two libraries on the same request. Run the same request through OpenInference and FutureAGI traceAI (or any pair you are choosing between). Compare the span trees, attribute names, and content payload. Differences are the procurement question.

- Test the failure modes. Force a provider error, force a tool call retry, force a prompt that exceeds context length. Verify the spans capture the failure with the right status, error message, and stack trace.
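The first and third checks can be automated once spans are exported as plain dicts, for example from an in-memory exporter in a test. The span shape used below (`attributes`, `status`, and `events` keys) is an assumption for illustration, not any library's export format; the `exception` event name and the `exception.stacktrace` attribute follow the OpenTelemetry exception conventions. Both helper functions are hypothetical.

```python
from typing import List

REQUIRED_LLM_ATTRIBUTES = (
    "gen_ai.request.model",
    "gen_ai.usage.input_tokens",
    "gen_ai.usage.output_tokens",
)


def check_llm_span(span: dict) -> List[str]:
    """Return problems found on one exported LLM span (an empty list means it passes)."""
    problems = []
    attributes = span.get("attributes", {})
    for key in REQUIRED_LLM_ATTRIBUTES:
        if key not in attributes:
            problems.append(f"missing {key}")
    for key in ("gen_ai.usage.input_tokens", "gen_ai.usage.output_tokens"):
        value = attributes.get(key)
        if value is not None and (not isinstance(value, int) or value < 0):
            problems.append(f"{key} is not a non-negative integer: {value!r}")
    return problems


def check_failure_span(span: dict) -> List[str]:
    """For a forced failure, expect ERROR status plus an exception event with a stack trace."""
    problems = []
    if span.get("status") != "ERROR":
        problems.append(f"status is {span.get('status')!r}, expected 'ERROR'")
    exception_events = [e for e in span.get("events", []) if e.get("name") == "exception"]
    if not exception_events:
        problems.append("no exception event recorded")
    elif not exception_events[0].get("attributes", {}).get("exception.stacktrace"):
        problems.append("exception event has no exception.stacktrace")
    return problems


good = {
    "attributes": {
        "gen_ai.request.model": "example-model",
        "gen_ai.usage.input_tokens": 42,
        "gen_ai.usage.output_tokens": 7,
    }
}
print(check_llm_span(good))  # []
```

Running both checks against the same forced failures in each candidate library turns the failure-mode step into a repeatable diff instead of a manual read of the backend UI.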

Sources

- OpenTelemetry GenAI semantic conventions

- OpenTelemetry GenAI span attributes

- OpenInference GitHub repo

- traceAI GitHub repo

- OpenLLMetry GitHub repo

- OpenLIT GitHub repo

- OpenTelemetry Python contrib

- Datadog LLM Observability docs

- Langfuse pricing

- LangSmith pricing

- Future AGI pricing

- Helicone Mintlify announcement

Series cross-link

Related: What is LLM Tracing? Spans, OTel GenAI, and Sampling in 2026, What is LLM Observability?, Best LLM Monitoring Tools in 2026, Best LLM Tracing Tools in 2026
