Best LLM-as-Judge Platforms in 2026: 7 Compared

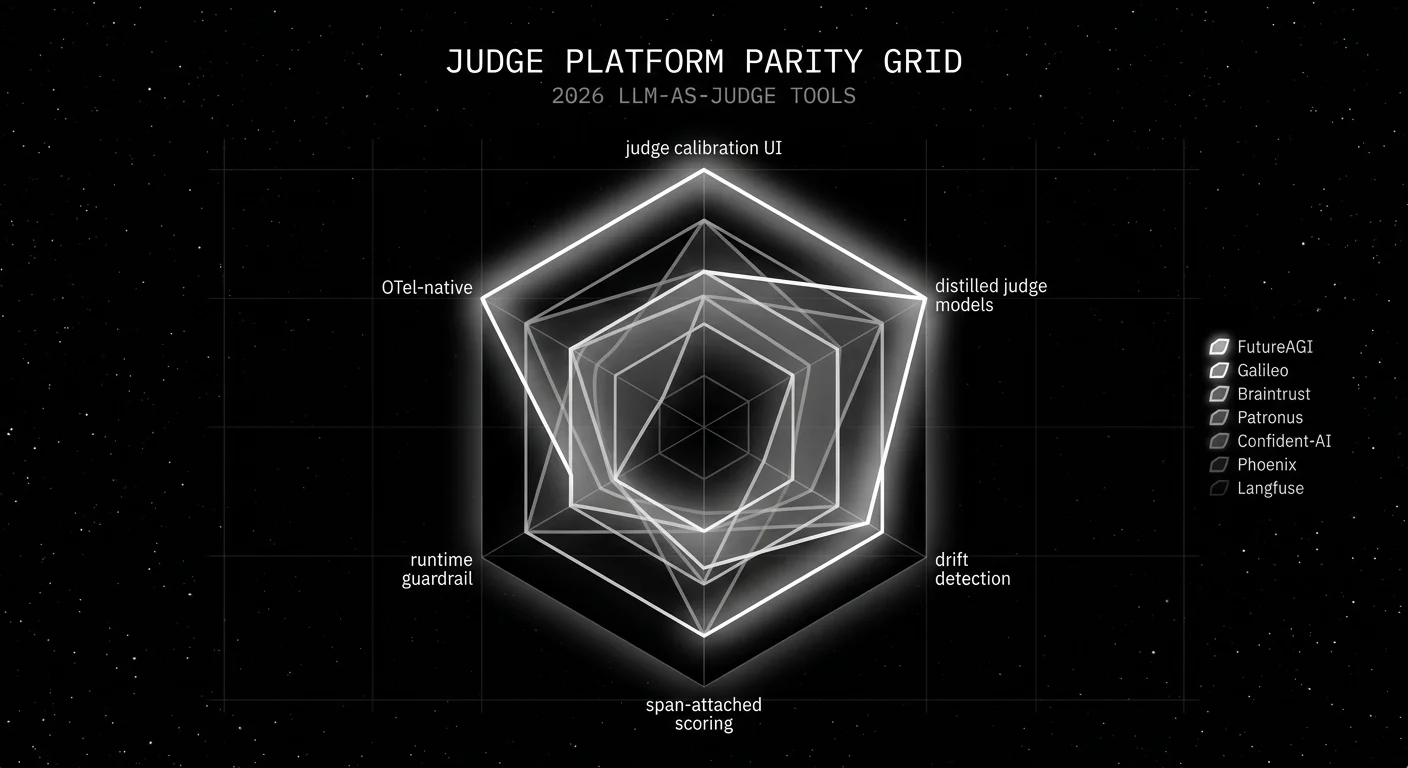

FutureAGI, Galileo, Braintrust, Patronus, Confident-AI, Phoenix, and Langfuse make up the 2026 LLM-as-judge shortlist, compared here on calibration, drift, and judge cost.

A team ships a refund agent with a single GPT-4 judge wired into its eval pipeline. Three months later, OpenAI rolls out a quiet model update. The judge’s groundedness scores drift up by 8 percent across every route. Nobody notices for two weeks because the judge has no calibration set behind it. By the time the team catches the drift, they have shipped four prompt revisions optimizing against a moving rubric. The fix is not a new prompt or a new model. It is a judge platform that calibrates against human labels, watches the score distribution, and pages on drift.

This is what 2026 LLM-as-judge tooling has to do. The judge is an LLM and behaves like one: it drifts, it has biases, it costs tokens, and it can hallucinate on the rubric the same way the production model can hallucinate on the user query. The platform is the harness that makes the judge trustworthy. This guide compares the seven platforms that show up on most procurement shortlists, with calibration, drift, and judge-cost as the axes that matter.

TL;DR: Best LLM-as-judge platform per use case

| Use case | Best pick | Why (one phrase) | Pricing | OSS |
|---|---|---|---|---|
| Open-source judge runtime + calibration + span attach | FutureAGI | Apache 2.0 OSS, turing_flash and turing_small managed judges, BYOK for any LLM | Free + usage from $2/GB | Apache 2.0 |
| Distilled judges + runtime guardrails + on-prem | Galileo | Luna judges priced for production scale | Free 5,000 traces, Pro $100/mo | Closed |
| Closed-loop SaaS with polished dev evals | Braintrust | Strong editor, online scoring, CI gates | Starter free, Pro $249/mo | Closed |
| Enterprise risk judges (hallucination, safety) | Patronus | Lynx and Glider purpose-built judges | Hosted SaaS, contact sales | Closed |
| Deepest first-party judge library | Confident-AI | DeepEval G-Eval, DAG, conversational | Free, Premium $49.99/seat/mo | Framework Apache 2.0 |
| OTel-native self-hosted judge attach | Phoenix | Source-available, OpenInference-aligned | Free self-host, AX Pro $50/mo | ELv2 |
| Self-hosted observability with judge runs | Langfuse | MIT core, dataset eval, judge runs in UI | Hobby free, Core $29/mo | MIT core |

If you only read one row: pick FutureAGI for the broadest open-source judge stack. Pick Galileo when distilled judge cost is the binding constraint. Pick Patronus when an audit team owns the spend.

How we evaluated the 2026 judge platforms

These seven platforms were ranked across five axes that decide procurement:

- Judge runtime. Hosted vs self-hosted, judge model selection, latency under load, cost per 1,000 spans.

- Calibration. UI for human labels, agreement metrics (Cohen’s kappa, F1, accuracy), per-rubric drift dashboards.

- Score attach. OTel span attribute, dataset row, both. Replay support.

- Judge model library. First-party calibrated judges vs BYOK only. Frontier vs distilled options.

- Production guardrail integration. Does the judge runtime double as a real-time guardrail, or is it eval-only?
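The agreement metrics named on the calibration axis are simple enough to compute by hand, which is useful when checking what a platform's calibration UI reports. The following is a minimal sketch using only the Python standard library. The function names and the ten sample labels are made up for illustration and do not come from any of the platforms compared here.

```python
from collections import Counter

def accuracy(human, judge):
    """Fraction of rows where the judge label equals the human label."""
    return sum(h == j for h, j in zip(human, judge)) / len(human)

def cohens_kappa(human, judge):
    """Agreement corrected for the agreement expected by chance."""
    n = len(human)
    observed = accuracy(human, judge)
    h_counts, j_counts = Counter(human), Counter(judge)
    labels = set(human) | set(judge)
    expected = sum(h_counts[c] * j_counts[c] for c in labels) / (n * n)
    if expected == 1.0:  # both raters used a single identical label
        return 1.0
    return (observed - expected) / (1 - expected)

def f1(human, judge, positive):
    """F1 of the judge on one class, treating human labels as ground truth."""
    pairs = list(zip(human, judge))
    tp = sum(h == positive and j == positive for h, j in pairs)
    fp = sum(h != positive and j == positive for h, j in pairs)
    fn = sum(h == positive and j != positive for h, j in pairs)
    if tp == 0:
        return 0.0
    precision, recall = tp / (tp + fp), tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)

# Hypothetical labels for one rubric (illustrative data only).
human_labels = ["pass", "pass", "fail", "fail", "pass",
                "fail", "pass", "pass", "fail", "pass"]
judge_labels = ["pass", "pass", "fail", "pass", "pass",
                "fail", "fail", "pass", "fail", "pass"]

print(accuracy(human_labels, judge_labels))                # 0.8
print(round(cohens_kappa(human_labels, judge_labels), 3))  # 0.583
print(f1(human_labels, judge_labels, positive="fail"))     # 0.75
```

Note how 80 percent raw accuracy shrinks to a kappa of about 0.58 once chance agreement is removed. That gap is why kappa is worth reporting alongside accuracy when the label distribution is skewed.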

Tools considered but cut: Helicone (now in maintenance after the Mintlify acquisition, no first-party judge library), W&B Weave (judges via free-form scorers but no calibration UI), MLflow (model registry first, not judge first). Each is usable if your stack already runs there.

The 7 LLM-as-judge platforms compared

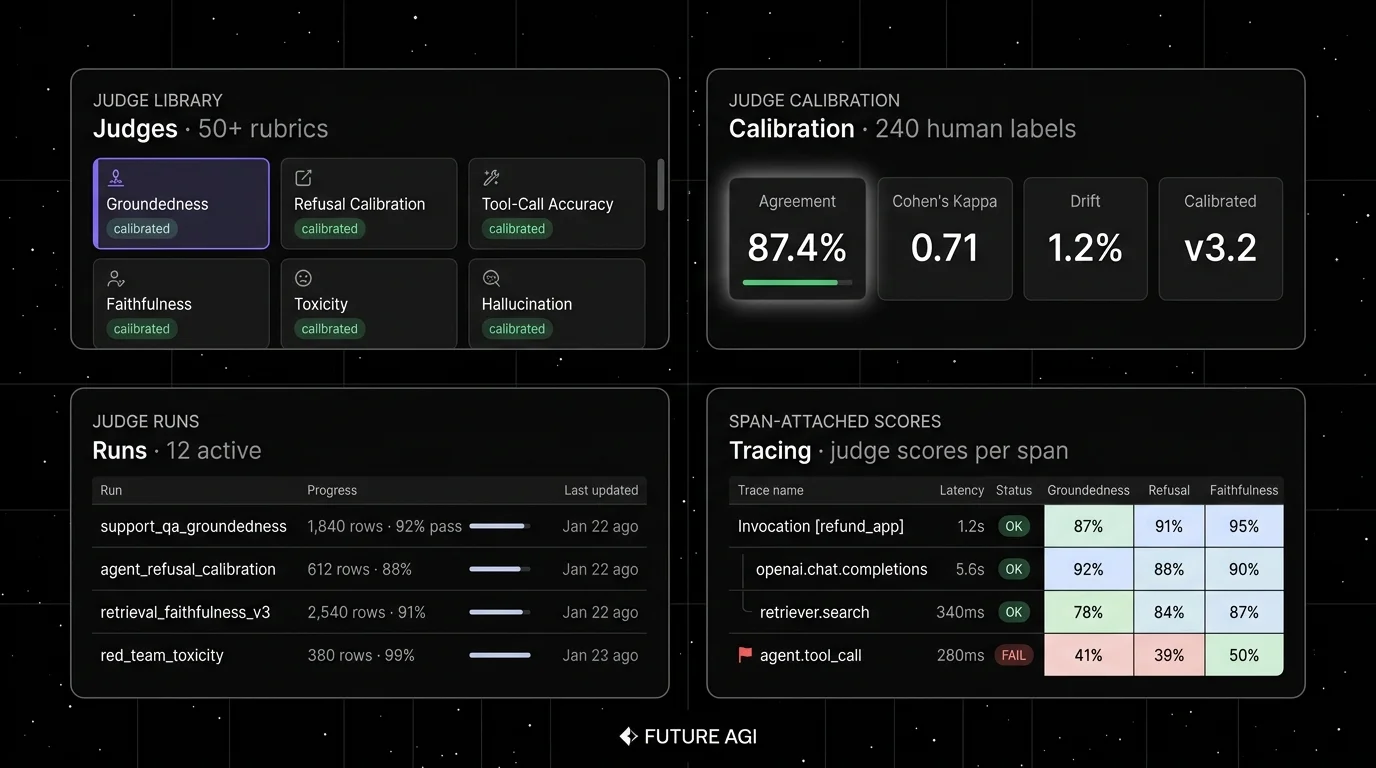

1. FutureAGI: Best for an open-source judge runtime with calibration and span attach

Open source. Self-hostable. Hosted cloud option.

Use case: Teams that want one platform across judge runtime, calibration, drift detection, span-attached online scoring, and gateway-level guardrails. The pitch is that the judge model, the calibration set, the agreement metric, and the online score on a production span all live in the same loop without manual exports.

Pricing: Free plus usage from $2/GB storage, $10 per 1,000 AI credits, $5 per 100,000 gateway requests. Boost $250/mo, Scale $750/mo, Enterprise from $2,000/mo.

OSS status: Apache 2.0.

Key features: First-party turing_flash and turing_small cloud judge models. turing_flash runs guardrail screening at roughly 50-70 ms p95. For full eval templates with longer rubrics, the SDK docs list turing_flash at roughly 1-2 s and turing_small at roughly 2-3 s. Also included: BYOK frontier judges for offline calibration, an evaluation catalog of 50+ metrics, span-attached scoring via traceAI, and runtime guardrails through the Agent Command Center.

Best for: Teams that want one open-source platform across judge runtime, observability, simulation, and runtime guardrails. Multi-language services that need OTel-native span attach.

Worth flagging: More moving parts than a closed SaaS. ClickHouse, Postgres, Redis, and Temporal are real services to operate. The hosted cloud option exists for teams that do not want to run the data plane.

2. Galileo: Best for distilled judges priced for production scale

Closed platform. Hosted SaaS, VPC, on-premises options.

Use case: Enterprise buyers that need a first-party distilled judge family for online scoring at scale, plus runtime guardrails on regulated workloads.

Pricing: Free with 5,000 traces, unlimited users, unlimited custom evals. Pro is $100/mo billed yearly with 50,000 traces. Enterprise is custom with on-prem and VPC options.

OSS status: Closed.

Key features: Luna evaluation foundation models (small distilled judges trained on labeled data for hallucination, factual consistency, context adherence), ChainPoll for ensemble hallucination detection, real-time guardrails, on-prem deployment for regulated industries.

Best for: Chief AI officers and risk owners. Workloads where judge token spend is a binding constraint at production scale.

Worth flagging: Closed platform. The dev surface is less of a draw than the enterprise security and compliance posture. See Galileo Alternatives for the comparison view.

3. Braintrust: Best for closed-loop SaaS with polished judge editor

Closed platform. Hosted cloud or enterprise self-host.

Use case: Teams that want a polished UI for judge prompts, online scoring, dataset experiments, and CI gating. Loop, the in-product AI assistant, helps generate scorers and test cases from a few seed examples.

Pricing: Starter is $0 with 1 GB processed data, 10,000 scores, 14 days retention, unlimited users. Pro is $249/mo with 5 GB and 50,000 scores. Enterprise is custom.

OSS status: Closed.

Key features: Online scoring on production traces, scorer templates with code or LLM judges, dataset experiments with regression detection, CI gates via the SDK, recent additions including Java auto-instrumentation and Loop assistant.

Best for: Teams that prefer to buy rather than build, that want experiments and judges in one UI, and that do not need open-source control.

Worth flagging: No first-party calibrated judge library; bring your own judge model. Gateway, runtime guardrails, and prompt optimization are not first-class. See Braintrust Alternatives.

4. Patronus: Best for enterprise risk judges out of the box

Closed platform. Hosted SaaS.

Use case: Teams that need first-party calibrated judges for hallucination, safety, and PII without training their own.

Pricing: Hosted SaaS pricing on request. Free trial available.

OSS status: Closed. The Lynx hallucination judge is open-weight on Hugging Face.

Key features: Lynx for hallucination detection (Llama-3 70B instruct fine-tuned for context adherence), Glider for safety, FinanceBench and other domain benchmarks, judge calibration tooling, automated red-teaming.

Best for: Regulated workloads where the judge models themselves are part of the procurement question. Finance, healthcare, legal.

Worth flagging: Smaller dev community than the OSS leaders. The flagship value is the calibrated judge models, not the dashboard surface.

5. Confident-AI: Best for the deepest first-party judge library

Hosted SaaS on top of DeepEval (Apache 2.0 framework).

Use case: Teams that want pytest-native judge runs for offline evals plus a hosted dashboard for results and team workflow. The widest first-party judge library: G-Eval, DAG, RAG (faithfulness, answer relevance, contextual precision), agent (Task Completion, Tool Correctness, Argument Correctness, Step Efficiency), conversational (Knowledge Retention, Conversational Completeness, Role Adherence), and safety (Bias, Toxicity, PII).

Pricing: Free with 5 test runs weekly. Starter is $19.99 per user per month. Premium is $49.99 per user per month.

OSS status: DeepEval framework Apache 2.0, 17K-plus stars. Confident-AI hosted platform is closed.

Key features: G-Eval (generic judge from a custom criteria string), DAG (deterministic eval graph), Arena G-Eval for pairwise judges, multi-turn conversational metrics, agent metrics, synthetic golden generation.

Best for: Python codebases where pytest is already the test harness. Cross-functional teams that want a hosted dashboard layered on the OSS framework.

Worth flagging: Per-user pricing on Confident-AI Premium scales poorly for cross-functional teams of 30-plus. The framework is free; the platform is the line item.
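The per-seat arithmetic is easy to check. The sketch below uses the list prices quoted in this article (Confident-AI Premium at $49.99 per user per month, Braintrust Pro at $249 per month flat) and ignores usage charges, discounts, and feature differences, so treat it as a rough sizing aid rather than a quote. The function names are hypothetical.

```python
import math

PER_SEAT_USD = 49.99  # Confident-AI Premium, per user per month (from this article)
FLAT_USD = 249.00     # Braintrust Pro, flat monthly fee (from this article)

def per_seat_monthly(seats, price=PER_SEAT_USD):
    """Monthly platform bill for a per-seat plan."""
    return round(seats * price, 2)

def break_even_seats(flat=FLAT_USD, price=PER_SEAT_USD):
    """Smallest team size at which the per-seat plan costs more than the flat fee."""
    return math.floor(flat / price) + 1

print(per_seat_monthly(30))   # 1499.7
print(break_even_seats())     # 5
```

At these list prices a 30-person team pays roughly six times the flat fee, and the crossover already happens at five seats.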

6. Arize Phoenix: Best for OTel-native judge attach in self-hosted stacks

Source available. Self-hostable. Phoenix Cloud and Arize AX paths.

Use case: Teams already invested in OpenTelemetry that want LLM judge runs on the same plumbing. Phoenix accepts traces over OTLP and auto-instruments LlamaIndex, LangChain, DSPy, Mastra, Vercel AI SDK, OpenAI, Bedrock, Anthropic across Python, TypeScript, and Java.

Pricing: Phoenix free for self-hosting. AX Free SaaS includes 25K spans/month, 1 GB ingestion, 15 days retention. AX Pro is $50/mo with 50K spans, 30 days retention. AX Enterprise is custom.

OSS status: Elastic License 2.0. Source available with restrictions on managed service offerings.

Key features: OpenInference instrumentation, LLM-as-judge primitives in the SDK, dataset eval with judge attach, prompt tracking, evals over a span tree.

Best for: Engineers who care about open instrumentation standards and want a path from local Phoenix into Arize AX without rewriting traces.

Worth flagging: Phoenix is not a gateway, not a runtime guardrail product, and not a simulator. ELv2 license matters for legal teams that follow OSI definitions strictly. See Phoenix Alternatives.

7. Langfuse: Best for self-hosted observability with judge runs in the UI

Open source core. Self-hostable. Hosted cloud option.

Use case: Self-hosted production tracing with judge runs over datasets, prompt-versioning, and human annotation queues in the same UI.

Pricing: Hobby free with 50K units, 30 days data access, 2 users. Core is $29/mo with 100K units, $8 per additional 100K, unlimited users. Pro is $199/mo with 3 years retention and SOC 2.
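As a rough sizing aid, the Core tier numbers above can be turned into a small estimator. This is a sketch under one stated assumption: overage is billed in whole 100K blocks. The article does not say whether billing is prorated, so check the vendor's pricing page before relying on it. The function name is hypothetical.

```python
def langfuse_core_monthly(units, base=29.0, included=100_000,
                          block=100_000, block_price=8.0):
    """Estimated monthly Core bill in USD.

    Assumption (not confirmed by the source): each started block of
    additional units is billed in full.
    """
    extra = max(0, units - included)
    blocks = -(-extra // block)  # ceiling division on integers
    return base + blocks * block_price

print(langfuse_core_monthly(100_000))    # 29.0
print(langfuse_core_monthly(250_000))    # 45.0
print(langfuse_core_monthly(1_000_000))  # 101.0
```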

OSS status: MIT core, enterprise directories handled separately.

Key features: Judge runs over datasets, judge attach to traces, prompt version linkage, annotation queues for human labeling, recent additions including categorical LLM-as-judge user-intent classification and observation-level evaluator migration.

Best for: Platform teams that want to operate the data plane and keep trace data in their own infrastructure.

Worth flagging: No first-party calibrated judge library; bring your own. Simulation, voice eval, and runtime guardrails live in adjacent tools. The license is “MIT for non-enterprise paths”; do not call it “pure MIT” in a procurement review. See Langfuse Alternatives.

Decision framework: pick by constraint

- OSS is non-negotiable. FutureAGI, Langfuse, DeepEval-as-framework. Phoenix counts only if ELv2 is acceptable.

- Distilled judges for online scoring. Galileo Luna, FutureAGI turing_flash, Patronus Lynx (open weights).

- Calibration UI is required. FutureAGI, Confident-AI, Galileo. Custom-build it on Langfuse and Phoenix if needed.

- Span-attached online scoring on every trace. FutureAGI, Galileo, Braintrust, Phoenix. Sample-based on the rest.

- Runtime guardrail double-duty. FutureAGI Agent Command Center, Galileo enterprise. The others are eval-only.

- Pytest-first dev workflow. Confident-AI on top of DeepEval. Pair with FutureAGI or Phoenix for traces.

- Cross-functional team on a flat fee. FutureAGI, Langfuse, Braintrust (Starter and Pro have unlimited users). Avoid per-seat models like Confident-AI Premium for 30-plus person teams.

Common mistakes when picking a judge platform

- Calibrating once and never again. Judges drift when the underlying model changes, when the rubric language drifts, or when the labeled set ages. Re-calibrate every quarter or after any judge prompt edit.

- Single-model self-judging. A GPT judge grading GPT outputs is a known failure mode because the judge can favor outputs from its own model family. Cross-family judging (Anthropic judging OpenAI and vice versa) weakens that feedback loop. Running two independent judges and reporting their disagreement is the strongest defense.

- Frontier judge for online scoring. A GPT-5.5 judge at 100 percent online scoring is not affordable. Distilled judges (Luna, turing_flash, Lynx) handle online scoring; frontier judges handle calibration and offline runs.

- No drift dashboard. Score drift hides until users complain. Tracking rolling-mean rubric scores per route and alerting on 2-5 percent moves catches drift early.

- Pricing the platform, not the judges. The platform tier is a fraction of total cost. Judge token spend (especially online) is the bigger line item.

- Treating the judge as a black box. A judge is an LLM and has biases, including position bias, verbosity bias, and self-bias. Read the judge prompt the same way you read the production prompt.

- No human label set. Without human labels, you cannot calibrate, you cannot detect drift, and you cannot defend the score. 200 examples is the floor; 500 is comfortable.
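The rolling-mean drift check from the list above can be sketched in a few lines. This is a minimal illustration, not any platform's implementation. It assumes a "2-5 percent move" means a relative change against a baseline mean taken from the last calibration run. The class name, window size, and scores are invented for the example, and in practice you would keep one monitor per route and rubric.

```python
from collections import deque
from statistics import mean

class DriftMonitor:
    """Flags when the rolling mean of judge scores moves away from a baseline."""

    def __init__(self, baseline_mean, window=200, threshold=0.05):
        if baseline_mean <= 0:
            raise ValueError("baseline_mean must be positive")
        self.baseline = baseline_mean
        self.window = window
        self.threshold = threshold  # relative move, e.g. 0.05 for 5 percent
        self.scores = deque(maxlen=window)

    def add(self, score):
        """Record one score; return True if the rolling mean has drifted."""
        self.scores.append(score)
        if len(self.scores) < self.window:
            return False  # not enough data for a stable rolling mean yet
        relative_move = abs(mean(self.scores) - self.baseline) / self.baseline
        return relative_move > self.threshold

# Replay the intro scenario: scores jump 8 percent after a judge model update.
monitor = DriftMonitor(baseline_mean=0.80, window=50, threshold=0.05)
stable = [monitor.add(0.80) for _ in range(50)]
drifted = [monitor.add(0.864) for _ in range(50)]

print(any(stable))              # False
print(drifted.index(True) + 1)  # 32
```

With a 50-score window the alert fires on the 32nd drifted score, well before the window is fully replaced. A smaller window alerts sooner but produces more false alarms on noisy rubrics.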

What changed in 2026

| Date | Event | Why it matters |
|---|---|---|
| Apr 2026 | Galileo Luna 2 hit production | Distilled judge cost dropped further at acceptable agreement. |
| Mar 2026 | FutureAGI shipped Agent Command Center and ClickHouse trace storage | Judge runtime, gateway routing, and high-volume scoring moved into one loop. |
| Mar 19, 2026 | LangSmith Agent Builder became Fleet | LangChain expanded judge tooling into agent deployment workflows. |
| Mar 3, 2026 | Helicone joined Mintlify | Helicone became unsuitable as a procurement target for judge-first stacks. |
| 2024 | Patronus released open-weight Lynx 70B | Hallucination judge available without a hosted dependency; weights remain on Hugging Face. |
| Dec 2025 | DeepEval v3.9.x shipped agent metrics + multi-turn synthetic goldens | The free framework caught up with closed first-party libraries on agent and conversation eval. |

How to actually evaluate judge platforms for production

- Build a 200-example labeled set. Stratify across difficulty, intent, and risk tier. Hand-label every row by two annotators. Compute inter-annotator agreement; if kappa is below 0.6, the rubric is the problem, not the judge.

- Pick three candidate platforms and run the same calibration. Run each platform’s recommended judge against the labeled set. Compare Cohen’s kappa, accuracy, F1 per rubric. The platform with the highest agreement at acceptable cost wins.

- Wire span-attached online scoring on a 5 percent sample. Run for two weeks. Watch rolling-mean rubric scores, judge cost per 1,000 spans, judge p95 latency. The platform that survives production traffic with stable cost and latency is the one you ship.
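For the third step, two small pieces are worth getting right before wiring any platform: a sampling rule that always makes the same decision for the same trace, and a p95 latency calculation. The sketch below uses only the standard library. The function names and trace ID format are hypothetical, and hashing the trace ID is one common approach rather than something any of these platforms prescribes.

```python
import hashlib

def in_sample(trace_id, rate=0.05):
    """Deterministically select about `rate` of traces by hashing the trace ID."""
    digest = hashlib.sha256(trace_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") / 2**64  # uniform in [0, 1)
    return bucket < rate

def p95(values):
    """Nearest-rank 95th percentile, e.g. of judge latencies in milliseconds."""
    if not values:
        raise ValueError("p95 needs at least one value")
    ordered = sorted(values)
    rank = (95 * len(ordered) + 99) // 100  # integer ceiling of 0.95 * n
    return ordered[rank - 1]

# Illustrative usage with made-up trace IDs and latencies.
trace_ids = [f"trace-{i}" for i in range(20_000)]
sampled = [t for t in trace_ids if in_sample(t)]
print(len(sampled) / len(trace_ids))  # close to 0.05
print(p95(list(range(1, 101))))       # 95
```

Hash-based sampling keeps the decision stable across retries and services, so a trace is either always scored or never scored. That keeps the two-week cost and latency numbers comparable between candidate platforms.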

Sources

- FutureAGI pricing

- FutureAGI GitHub repo

- traceAI GitHub repo

- Galileo pricing

- Galileo research

- Braintrust pricing

- Patronus Lynx 70B on Hugging Face

- Patronus research

- Confident-AI pricing

- DeepEval GitHub repo

- Phoenix docs

- Arize pricing

- Langfuse pricing

- Langfuse self-hosting

- LLM-as-Judge bias paper
