Athina Alternatives in 2026: 6 LLM Eval and Guardrail Platforms

A comparison of FutureAGI, Langfuse, Braintrust, Phoenix, Patronus, and Helicone as Athina alternatives in 2026, covering pricing, OSS license, eval-as-API, and guardrails.

You are probably here because Athina-AI’s eval-as-API surface gave the team a fast path from prototype to evaluated production, and now the workload has grown into agents, gateway routing, simulated users, and inline guardrails that the API alone does not cover. This guide is for production teams looking past the eval API to the rest of the stack: where Athina fits, where it falls short, and which alternatives close the gap. Each section is fair to Athina where Athina is good, and direct about where it is not.

TL;DR: Best Athina alternative per use case

| Use case | Best pick | Why (one phrase) | Pricing | OSS |
|---|---|---|---|---|
| Unified eval, observe, simulate, optimize, gateway, guard | FutureAGI | One loop across pre-prod and prod | Free self-hosted (OSS), hosted from $0 + usage | Apache 2.0 |
| Self-hosted observability with prompt management | Langfuse | Mature OSS LLM engineering platform | Hobby free, Core $29/mo, Pro $199/mo | Core MIT |
| Hosted closed-loop eval and prompt iteration | Braintrust | Productized eval workflow | Starter free, Pro $249/mo | Closed platform |
| OTel and OpenInference native tracing plus evals | Arize Phoenix | Open standards story | Phoenix free self-hosted, AX Pro $50/mo | Elastic License 2.0 |
| Hallucination-detection-first guardrails | Patronus | Lynx and Glider judge models | Custom enterprise | Closed platform |
| Gateway-first logging, caching, and cost control | Helicone | Fastest path from LLM calls to request analytics | Hobby free, Pro $79/mo, Team $799/mo | Apache 2.0 |

If you only read one row: pick FutureAGI when production guardrails plus the full reliability loop is the constraint, Langfuse for self-hosted OSS observability, and Patronus when hallucination detection is the primary risk. For adjacent decisions, see our guides to Langfuse alternatives, Braintrust alternatives, and Patronus alternatives.

Who Athina is and where it falls short

Athina-AI is an eval-as-API platform that started inside Y Combinator and grew into LLM observability and analytics. The product surface includes a REST eval API, dashboards for monitoring, custom metrics, dataset support, and integrations with major LLM providers. The mental model is “drop an API call into your app and get evals back,” which is a clean fit for teams that want to add evaluation without rewriting their runtime.

The strengths are real:

- Eval-as-API ergonomics. The API lets engineers add evals to existing apps in hours, not weeks.

- Custom metrics. Teams can define rubrics and scoring logic that fit their domain.

- Dataset support. Captured production traffic can be assembled into eval datasets.

- YC-backed pace. Active development, good docs, real customer base.

Where teams start looking elsewhere:

- Open-source posture. The core platform is not OSI open source. Procurement teams that require self-host on OSI licenses go elsewhere.

- Production guardrails. Inline PII, prompt injection, output policy, and jailbreak blocking on the request path are not Athina’s core focus. Purpose-built guardrail tools go deeper.

- Span-attached scoring. Athina’s eval scores are valuable but the OpenTelemetry-first pattern (scores as span attributes for unified filtering) is more native in Phoenix, FutureAGI, and Braintrust.

- Simulation and replay. Pre-production simulation, persona-based testing, and replay of failing production traces into CI are not part of the eval-as-API surface.

- Gateway and routing. Athina is not a gateway. Provider routing, caching, fallbacks, and cost attribution live elsewhere.

- Agent-specific ergonomics. Multi-step agent traces, tool-call inspection, and conversation-level metrics need adjacent tools.

Each gap is fixable, but each is a real reason to compare alternatives.
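To make the span-attached scoring gap concrete, here is a minimal sketch of the pattern: eval scores live as attributes on the same span that recorded the LLM call, so one filter can select failing traces. The `Span` class is a plain stand-in rather than a real OpenTelemetry object, and the `eval.<metric>` attribute naming is an assumption for illustration, not an official convention.

```python
from dataclasses import dataclass, field


@dataclass
class Span:
    """Minimal stand-in for a trace span (not a real OpenTelemetry span)."""
    name: str
    trace_id: str
    attributes: dict = field(default_factory=dict)


def attach_score(span, metric, value):
    # Hypothetical attribute namespace "eval.<metric>", chosen for this sketch.
    span.attributes[f"eval.{metric}"] = value
    return span


def failing_spans(spans, metric, threshold):
    """Return spans that carry the metric and score below the threshold."""
    key = f"eval.{metric}"
    return [s for s in spans if key in s.attributes and s.attributes[key] < threshold]


spans = [
    attach_score(Span("llm.generate", "t1", {"model": "model-a"}), "faithfulness", 0.92),
    attach_score(Span("llm.generate", "t2", {"model": "model-a"}), "faithfulness", 0.41),
    Span("retriever.search", "t2"),  # no score attached, so it is never flagged
]

low = failing_spans(spans, "faithfulness", 0.7)
print([s.trace_id for s in low])
```

Because the score sits next to the model name and other span attributes, the same query can slice failures by model, prompt version, or route without joining a separate eval table.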

The 6 Athina alternatives compared

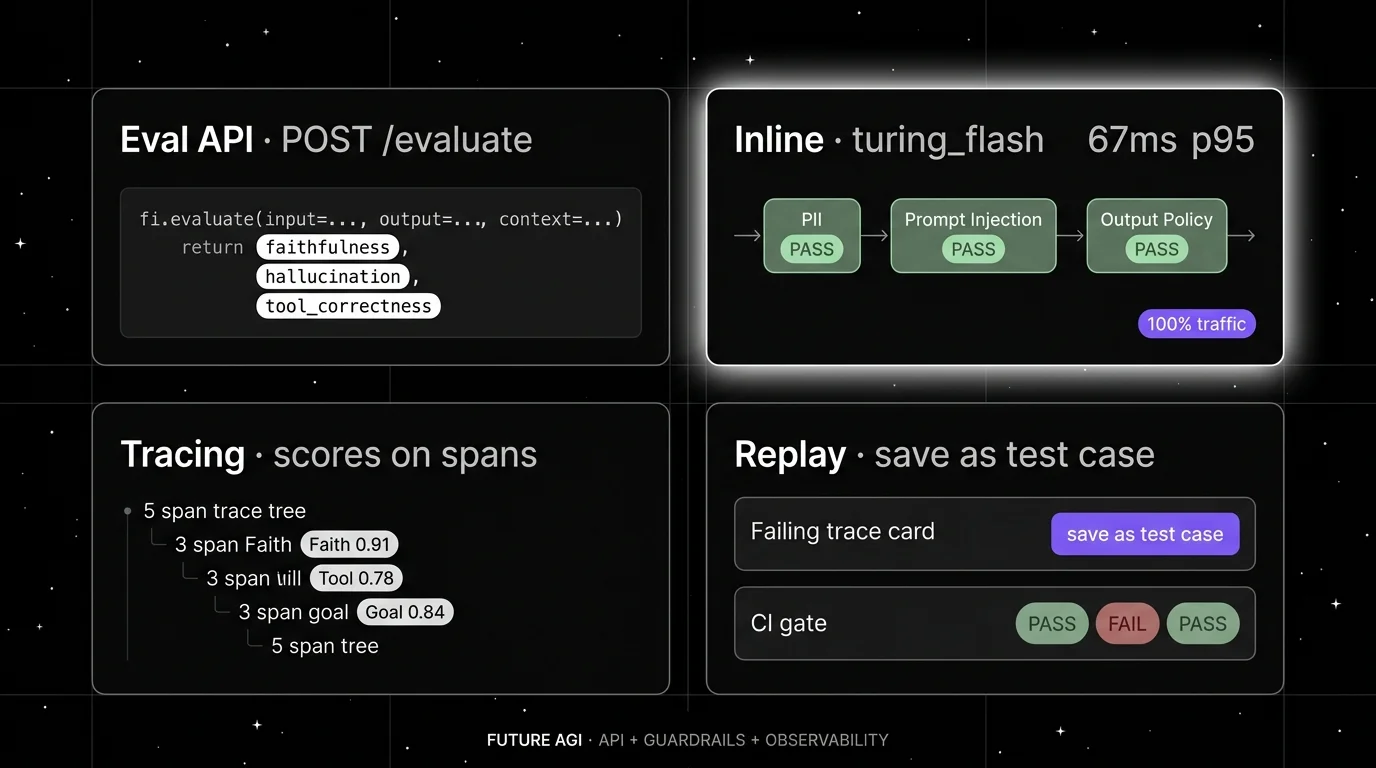

1. FutureAGI: Best for unified eval + observe + simulate + optimize + gateway + guard

Open source. Self-hostable. Hosted cloud option.

Most tools in this list pick one job. Athina does eval-as-API. Langfuse does observability. Braintrust does evals. Phoenix does OTel-native tracing. Patronus does hallucination detection. Helicone does request analytics. FutureAGI does the loop. The loop runs in four stages. First, simulate against synthetic personas and replay real production traces in pre-production. Second, evaluate every output with span-attached scores so failures live on the trace. Third, observe live traffic with the same eval contract you used in pre-prod. Fourth, optimize: every failing trace becomes a candidate dataset for prompt optimization, the optimizer ships a versioned prompt, and the gate enforces the new threshold while the trace shape stays the same. The closure matters because with the other tools in this list, you stitch this loop together manually.

Architecture: what closes, not what ships. The public repo is Apache 2.0 and self-hostable. The runtime is built so each handoff is a versioned object. Simulate-to-eval, eval-to-trace, trace-to-optimizer, optimizer-to-gate, gate-to-deploy: every stage is reproducible. The plumbing under it (Django, React/Vite, the Go-based Agent Command Center gateway, traceAI, Postgres, ClickHouse, Redis, object storage, workers, Temporal, OTel across Python, TypeScript, Java, and C#) exists so the five handoffs do not require glue code. The Agent Command Center runs inline guardrails on the gateway path with the turing_flash judge at 50 to 70 ms p95 for guardrail screening and around 1 to 2 seconds for full eval templates.

Pricing: FutureAGI starts at $0 per month. The free tier includes 50 GB tracing and storage, 2,000 AI credits, 100,000 gateway requests, 100,000 cache hits, 1 million text simulation tokens, 60 voice simulation minutes, unlimited datasets, prompts, dashboards, 3 annotation queues, 3 monitors, and unlimited team members. Usage after the free tier is $2 per GB storage, $10 per 1,000 AI credits, $5 per 100,000 gateway requests, $1 per 100,000 cache hits, $2 per 1 million text simulation tokens, and $0.08 per voice minute. Boost is $250 per month, Scale is $750 per month, Enterprise starts at $2,000 per month.
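The usage arithmetic above can be sketched as a small calculator. The free allowances and rates are the ones quoted in this section; the meter names are hypothetical, and the sketch assumes overage is billed pro rata beyond the free tier, which the pricing text does not specify. Verify against the current pricing page before budgeting.

```python
# meter: (free allowance, price in USD, units covered by that price)
RATES = {
    "storage_gb": (50, 2.00, 1),
    "ai_credits": (2_000, 10.00, 1_000),
    "gateway_requests": (100_000, 5.00, 100_000),
    "cache_hits": (100_000, 1.00, 100_000),
    "text_sim_tokens": (1_000_000, 2.00, 1_000_000),
    "voice_minutes": (60, 0.08, 1),
}


def monthly_overage(usage):
    """Return per-meter overage in USD, assuming linear pro-rata billing."""
    breakdown = {}
    for meter, used in usage.items():
        free, price, per = RATES[meter]
        billable = max(0, used - free)  # usage inside the free tier costs nothing
        breakdown[meter] = round(billable * price / per, 2)
    return breakdown


usage = {
    "storage_gb": 120,
    "ai_credits": 10_000,
    "gateway_requests": 1_100_000,
    "cache_hits": 50_000,
    "voice_minutes": 160,
}
breakdown = monthly_overage(usage)
total = round(sum(breakdown.values()), 2)
print(breakdown, total)
```

For this example month, the overage comes to $140 for storage, $80 for AI credits, $50 for gateway requests, $0 for cache hits, and $8 for voice minutes, or $278 in total on top of any plan fee.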

Best for: Pick FutureAGI when production guardrails plus eval-as-API plus the full reliability loop is the priority. The buying signal is teams that have stitched Athina with separate guardrail and gateway tools and want one stack.

Skip if: Skip FutureAGI if your immediate need is a single REST endpoint that returns eval scores and nothing else. The full stack has more moving parts than a pure eval API. If you only want the API and prefer not to operate Docker Compose, ClickHouse, or OTel pipelines, the hosted cloud is the simpler path.

2. Langfuse: Best for self-hosted observability with prompt management

Open source core. Self-hostable. Hosted cloud option.

Langfuse is the strongest OSS-first alternative for teams that want observability, prompt management, datasets, and evals together. It has the deepest open-source mindshare in this list, strong docs, active releases, and a serious self-hosting story.

Architecture: Langfuse covers observability, prompt management, evaluation, metrics, datasets, playgrounds, human annotation, and public APIs. The self-hosted architecture uses application containers, Postgres, ClickHouse, Redis or Valkey, object storage, and an optional LLM API or gateway. SDKs are Python and JavaScript, with OpenTelemetry, LiteLLM proxy logging, LangChain, LlamaIndex, and OpenAI integrations.

Pricing: Hobby is free with 50,000 units per month, 30 days data access, 2 users, and community support. Core is $29 per month with 100,000 units. Pro is $199 per month with 3 years data access, retention management, and SOC 2 and ISO 27001 reports. Enterprise is $2,499 per month.

Best for: Pick Langfuse if you need self-hosted tracing, prompt versioning, datasets, eval scores, human annotation, and OTel compatibility, and your platform team can operate the data plane.

Skip if: Skip Langfuse if your main gap is simulated users, voice evaluation, optimization algorithms, or an integrated gateway and guardrail product.

3. Braintrust: Best for hosted closed-loop eval and prompt iteration

Closed platform. Hosted SaaS with Enterprise self-host.

Braintrust is the right alternative when the team wants a productized closed-loop eval workflow without operating the infrastructure. Its current docs list tracing, logs, topics, dashboards, human review, datasets, prompt management, playgrounds, experiments, remote evals, online scoring, functions, the Braintrust gateway, monitoring, automations, and self-hosting as part of the product surface.

Architecture: Braintrust ships a hosted eval and observability platform with strong dataset, scorer, and CI ergonomics. Tracing is OTel-compatible. The Loop AI assistant helps generate scorers and prompt improvements. Recent changelog entries show active work on Java auto-instrumentation, dataset snapshots, dataset environments, trace translation, cloud storage export, full-text search, subqueries, and sandboxed agent evals.

Pricing: Starter is $0 per month with 1 GB processed data, 10,000 scores, 14 days retention, and unlimited users. Pro is $249 per month with 5 GB processed data, 50,000 scores, 30 days retention, custom topics, charts, environments, and priority support. Enterprise is custom and adds on-prem or hosted deployment.

Best for: Pick Braintrust when hosted closed-loop evals with dataset and CI ergonomics is the priority.

Skip if: Skip Braintrust if open-source platform control is non-negotiable, if simulated voice users or an integrated guardrail product are required, or if your team has already standardized on a different observability backend.

4. Arize Phoenix: Best for OTel and OpenInference teams

Source available. Self-hostable. Phoenix Cloud and Arize AX paths exist.

Phoenix is the right alternative when the team wants open tracing standards and a path from local AI observability into a broader Arize platform. It is especially relevant for teams already in OpenTelemetry and OpenInference, or teams that want traces, evals, datasets, experiments, and prompt iteration without buying the full Arize AX platform first.

Architecture: Phoenix is built on OpenTelemetry and OpenInference. Its docs cover tracing, evaluation, prompt engineering, datasets, experiments, RBAC, API keys, data retention, and custom providers. It accepts traces over OTLP and has auto-instrumentation for LlamaIndex, LangChain, DSPy, Mastra, Vercel AI SDK, OpenAI, Bedrock, Anthropic, Python, TypeScript, and Java. The Phoenix home page says it is fully self-hostable with no feature gates or restrictions.

Pricing: Phoenix is free to self-host and source-available under Elastic License 2.0. Arize markets Phoenix as open source; legal teams using OSI definitions will treat ELv2 as source available, not OSI open source. Arize AX Pro is $50 per month with 50,000 spans, 30 days retention, higher rate limits, and email support. AX Enterprise is custom.

Best for: Pick Phoenix if you want an OTel-native trace and eval workbench, you value open standards, or you already use Arize for ML observability.

Skip if: The catch is licensing and scope. Phoenix uses Elastic License 2.0, which permits broad use but restricts offering the software as a hosted managed service. Call it source available if your legal team uses OSI definitions. Also skip Phoenix if your main requirement is gateway-first provider control or simulated user testing.

5. Patronus: Best for hallucination-detection-first guardrails

Closed platform. Hosted SaaS with custom enterprise deployments.

Patronus is the right alternative when hallucination detection is the central production risk. The company built the Lynx and Glider judge models specifically for hallucination and grounding evaluation, and the product surface focuses on real-time and batch evals with a strong guardrail story.

Architecture: Patronus offers hosted eval and guardrail APIs with custom judge models tuned for hallucination, faithfulness, PII detection, and policy enforcement. The Lynx model is positioned as a hallucination-detection workhorse; Glider extends to broader rubric-based scoring. The platform supports both real-time guardrail enforcement and batch eval runs.

Pricing: Patronus uses custom enterprise pricing. Verify current plans on their website.

Best for: Pick Patronus when hallucination detection or PII compliance is the dominant production risk and a closed-source enterprise vendor relationship fits the procurement model.

Skip if: Skip Patronus if open-source self-host is a hard requirement, if you need broad observability and dataset workflows, or if simulated users and gateway routing are part of the buying criteria.

6. Helicone: Best for gateway-first observability

Open source. Self-hostable. Hosted cloud option.

Helicone is the right alternative when the fastest path to value is changing the base URL, seeing every request, and controlling cost. It is gateway-first rather than eval-first.

Architecture: Helicone is an Apache 2.0 project for LLM observability and an AI Gateway. The docs show an OpenAI-compatible gateway across 100+ models, with provider routing, caching, rate limits, LLM security, sessions, user metrics, cost tracking, datasets, alerts, reports, HQL, eval scores, user feedback, prompts, and prompt assembly.

Pricing: Hobby is free with 10,000 requests, 1 GB storage, 1 seat, and 1 organization. Pro is $79 per month with unlimited seats, alerts, reports, and HQL. Team is $799 per month with 5 organizations, SOC 2, HIPAA, and a dedicated Slack channel. Enterprise is custom.

Best for: Pick Helicone if you want request analytics, user-level spend, model cost tracking, caching, fallbacks, prompt management, and a low-friction gateway.

Skip if: Helicone will not replace a deep eval platform by itself. On March 3, 2026, Helicone said it had been acquired by Mintlify and that services would remain live in maintenance mode with security updates, new models, bug fixes, and performance fixes. Treat roadmap depth as something to verify directly.

Decision framework: choose X if…

- Choose FutureAGI if your dominant workload is agent reliability across simulation, evals, traces, gateway routing, guardrails, and prompt optimization. Buying signal: your team has stitched Athina with separate guardrail and gateway tools and wants one stack.

- Choose Langfuse if your dominant workload is LLM observability and prompt management under self-hosting constraints. Buying signal: you want to inspect the source, operate the stack, and keep trace data in your infrastructure.

- Choose Braintrust if your dominant workload is hosted closed-loop eval and prompt iteration. Buying signal: your team wants a polished eval workflow without operating the infrastructure.

- Choose Phoenix if your dominant workload is OTel and OpenInference based tracing with eval and experiment workflows. Buying signal: your platform team cares about instrumentation standards more than vendor UI polish.

- Choose Patronus if hallucination detection or PII compliance is the dominant production risk. Buying signal: your team needs a hallucination-tuned judge model with enterprise support.

- Choose Helicone if your dominant workload is request logging, provider routing, caching, and cost analytics. Buying signal: your application has traffic now and changing the gateway URL is easier than adding SDK instrumentation.

Common mistakes when picking an Athina alternative

- Confusing eval-as-API with full observability. An eval API gives you scores; observability gives you scores plus traces plus datasets plus replay. Pair the right tools.

- Treating OSS and self-hostable as the same thing. Phoenix is source available under Elastic License 2.0. Langfuse's non-enterprise code paths are MIT. FutureAGI and Helicone are Apache 2.0. Procurement reads these differently.

- Skipping inline guardrails. Eval scores after the response is generated do not stop a wrong answer from reaching the user. For high-stakes domains, guardrails on the request path are non-negotiable.

- Picking by integration logos. Verify active maintenance for the exact framework version you use. LangChain v1, OpenAI Responses, Claude tool use, OTel semantic conventions, and provider SDK changes can break observability quietly.

- Pricing only the platform subscription. Real cost is subscription plus trace volume, score volume, judge tokens, test-time compute, retries, storage retention, annotation labor, and the infra team that runs self-hosted services.

- Assuming migration is just tracing. The hard parts are datasets, scorer semantics, prompt version history, human review queues, CI gates, and production-to-eval workflows.
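The inline-guardrail point in the list above is easiest to see in code. This is a toy sketch, assuming a hypothetical `fake_llm` stand-in and two simple regex checks. Production guardrails typically use judge models and policy engines rather than regexes, but the control flow is the same: the check runs on the request path, before the response reaches the user.

```python
import re

# Deliberately simple patterns for illustration; not production-grade PII detection.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
US_SSN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


def fake_llm(prompt):
    # Hypothetical stand-in for a real model call.
    return "Sure, the customer's email is jane.doe@example.com."


def output_violations(text):
    """Return the names of the policies the text violates."""
    found = []
    if EMAIL.search(text):
        found.append("email")
    if US_SSN.search(text):
        found.append("ssn")
    return found


def guarded_completion(prompt, llm=fake_llm):
    """Screen the draft before returning it; block instead of scoring after the fact."""
    draft = llm(prompt)
    violations = output_violations(draft)
    if violations:
        return {"blocked": True, "violations": violations,
                "text": "I can't share that information."}
    return {"blocked": False, "violations": [], "text": draft}


result = guarded_completion("What is the customer's contact info?")
print(result)
```

A post-hoc eval would record the same violation, but only after the draft had already been delivered. The inline version trades a little latency for the ability to block.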

What changed in the eval landscape in 2026

| Date | Event | Why it matters |
|---|---|---|
| Mar 9, 2026 | FutureAGI shipped Agent Command Center and ClickHouse trace storage | Gateway routing, inline guardrails, and span-attached scoring landed in one stack. |
| Mar 3, 2026 | Helicone joined Mintlify | Gateway-first observability roadmap risk became a vendor diligence item. |
| Feb 2026 | Datadog kept expanding LLM Observability eval categories | APM-anchored teams got more eval coverage without leaving Datadog. |
| Jan 2026 | Patronus Lynx evolved as a hallucination judge | Smaller hallucination judges keep moving toward a real-time budget. |
| Jan 2026 | Langfuse Experiments docs cover CI/CD integration | OSS-first batch evals fit into GitHub Actions cleanly. |
| Jan 2026 | Phoenix continued to ship fully self-hosted with no feature gates | OSS observability without enterprise gates remains table stakes. |
| Jan 2026 | OpenInference semantic conventions kept maturing | Span-attached scores keep getting more portable across vendors; verify the latest release before adopting. |

How to actually evaluate this for production

- Run a domain reproduction. Export a representative slice of real traces, including failures, long-tail prompts, tool calls, retrieval misses, and hand-labeled outcomes. Instrument each candidate with your harness, your OTel payload shape, your prompt versions, and your judge model. Do not accept a demo dataset.

- Measure reliability under load. Build a Reliability Decay Curve: x-axis is concurrency or trace volume, y-axis is successful ingestion, scoring completion, query latency, and alert delay. Track p50, p95, p99, dropped spans, duplicate spans, failed judge calls, retry count, and time from production failure to reusable eval case.

- Cost-adjust. Real cost is the platform price plus the usage-driven costs: trace volume, token volume, test-time compute, judge sampling rate, retry rate, storage retention, and annotation hours.
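The Reliability Decay Curve from the steps above can be sketched as a small aggregation over load-test results. The input shape here is an assumption: a dict that maps each concurrency level to a list of per-request latencies in milliseconds, with `None` for a dropped span or failed judge call. The percentile uses the nearest-rank method, and your load-test tool may compute it differently.

```python
import math


def percentile(values, pct):
    """Nearest-rank percentile; returns None when there are no samples."""
    if not values:
        return None
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def decay_curve(runs):
    """Build one curve point per concurrency level, in ascending order."""
    curve = []
    for concurrency in sorted(runs):
        samples = runs[concurrency]
        ok = [s for s in samples if s is not None]  # None marks a dropped request
        curve.append({
            "concurrency": concurrency,
            "success_rate": len(ok) / len(samples),
            "p50_ms": percentile(ok, 50),
            "p95_ms": percentile(ok, 95),
            "p99_ms": percentile(ok, 99),
        })
    return curve


# Hypothetical measurements for two load levels.
runs = {
    10: [100, 110, 120, 130],
    100: [150, 200, None, 900],
}
curve = decay_curve(runs)
for point in curve:
    print(point)
```

Plot `success_rate` and the latency percentiles against `concurrency` for each candidate platform. The load level where the curve bends is often more informative than any single benchmark number.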

Sources

- Athina-AI docs

- Athina-AI pricing

- FutureAGI pricing

- FutureAGI GitHub repo

- Langfuse pricing

- Langfuse repo

- Braintrust pricing

- Braintrust changelog

- Phoenix docs

- Phoenix repo

- Patronus docs

- Patronus Lynx model

- Helicone pricing

- Helicone repo

Series cross-link

Next: TruLens Alternatives 2026, Vellum Alternatives 2026, Patronus Alternatives 2026
