OpenRouter Alternatives in 2026: 5 LLM Gateway Platforms Compared

Portkey, LiteLLM, TrueFoundry, Helicone, and FutureAGI as OpenRouter alternatives in 2026. Pricing, OSS license, BYOK fees, and what each won't solve.


You are probably here because OpenRouter solved access to 300+ models behind one API key, and now your team needs more. You may need governance for who can call what, audit logs for procurement, routing fallbacks that hold p95 latency under load, prompt versioning, eval scores attached to traces, or a self-hosted plane that does not add a 5.5% fee to every credit purchase. This guide compares the five alternatives engineering teams actually evaluate against OpenRouter in 2026, with honest tradeoffs for each.

TL;DR: Best OpenRouter alternative per use case

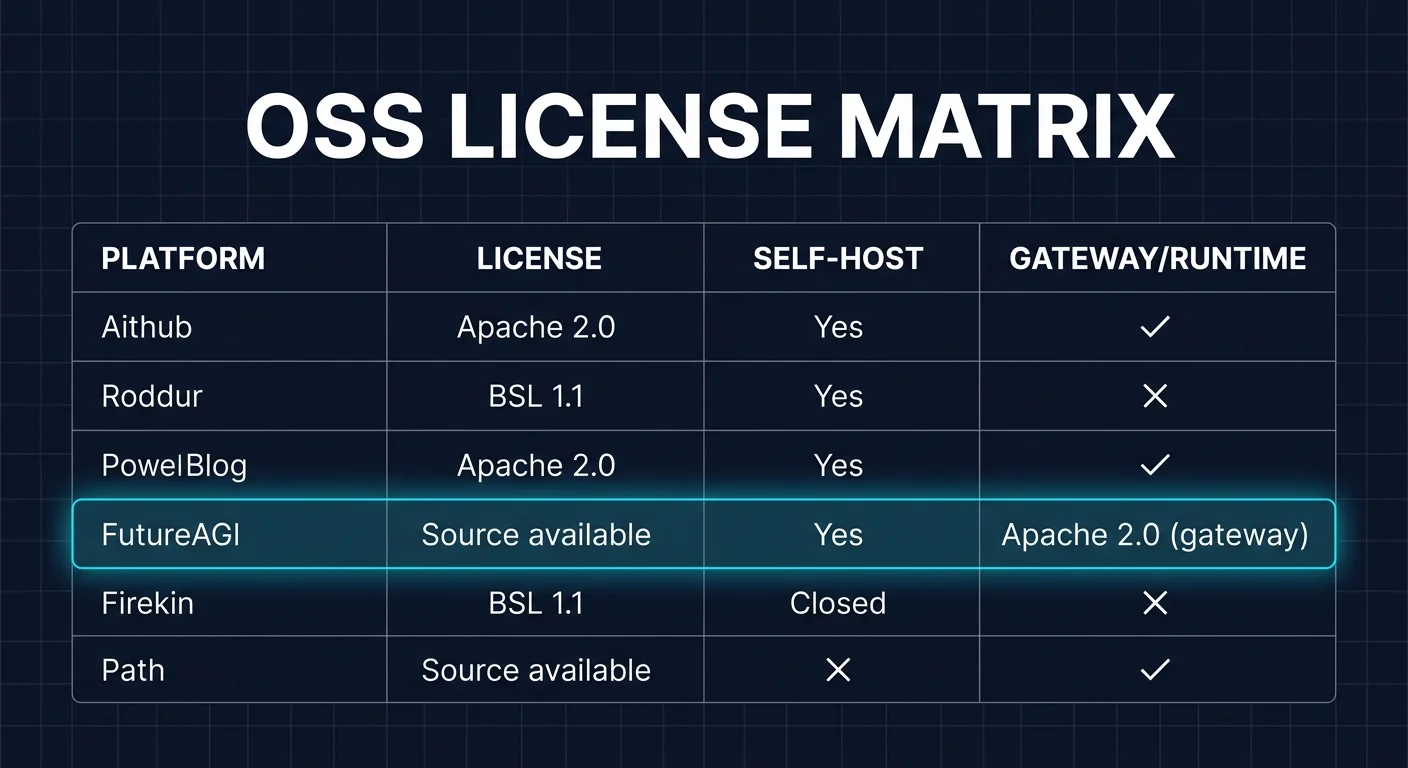

| Use case | Best pick | Why (one phrase) | Pricing | License |
|---|---|---|---|---|
| Hosted governance, observability, and prompt engineering in one gateway | Portkey | Production gateway with deep policy and analytics | Free tier, Production from $99/mo | Apache 2.0 gateway |
| Self-hosted Python proxy with broad provider coverage | LiteLLM | Code-first router with retries, fallbacks, budgets | OSS free, Enterprise quote | BSL 1.1, fair-use |
| Kubernetes-native enterprise gateway in VPC or air-gapped | TrueFoundry | K8s-native with RBAC, SSO, and compliance | Custom, contact sales | Closed source |
| Gateway-first observability with request analytics and caching | Helicone | Fastest base-URL swap, deep request analytics | Hobby free, Pro $79/mo, Team $799/mo | Apache 2.0 |
| Routing closes back into evals, tracing, and guardrails | FutureAGI | Loop from gateway to eval and back to dataset | Free self-hosted (OSS), hosted from $0 + usage | Apache 2.0 |

If you only read one row: pick Portkey when you need a polished hosted gateway, LiteLLM when self-hosted Python ergonomics matter, and FutureAGI when routing decisions must inform evaluation and prompt optimization. For deeper reads: see our LLM Gateway buyer guide, the traceAI tracing layer, and the Agent Command Center docs.

Who OpenRouter is and where it falls short

OpenRouter is a unified API marketplace for 300+ AI models from providers like OpenAI, Anthropic, Google, Mistral, Meta, DeepSeek, Cohere, xAI, and many open-weight hosts. Its docs describe auto-routing, provider failover, response caching, multimodal inputs and outputs (images, PDFs, audio, video, TTS, STT), advanced tool calling with server-side tools, and enterprise controls like workspace management, spending guardrails, and broadcast integrations into Langfuse, Datadog, and other backends. The product fit is strong for teams that want one API key and one billing relationship across many models without negotiating with each provider.

Pricing has two distinct components as of May 2026. Pay-as-you-go credit purchases carry a 5.5% platform fee. On per-request usage, the first 1 million requests per month carry no fee, and a 5% fee applies after. The catalog is published at upstream provider list price with no markup. The free tier offers 50 requests per day across 25+ free models and 4 free providers, with a higher 1,000-request limit for accounts holding $10 or more in credits. BYOK is supported. Bulk discounts are available on enterprise contracts. The pricing page is the source of record because OpenRouter periodically tunes platform fees and free-tier policies.
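To make the credit-purchase fee concrete, here is a minimal arithmetic sketch. It uses only the 5.5% figure quoted above and assumes the fee is added on top of the credit amount rather than deducted from it; the function names are illustrative, and the pricing page remains the source of record.

```python
# 5.5% platform fee on pay-as-you-go credit purchases (figure from the text above).
CREDIT_FEE_RATE = 0.055


def platform_fee(credits_usd: float, fee_rate: float = CREDIT_FEE_RATE) -> float:
    """Fee charged on a credit purchase, in USD."""
    return round(credits_usd * fee_rate, 2)


def credit_purchase_total(credits_usd: float, fee_rate: float = CREDIT_FEE_RATE) -> float:
    """Total charged for a credit purchase. Assumes the fee is added on top."""
    return round(credits_usd + credits_usd * fee_rate, 2)


if __name__ == "__main__":
    for amount in (100, 1_000, 10_000):
        print(amount, platform_fee(amount), credit_purchase_total(amount))
```

At $10,000 of credits per month the fee alone is $550, which is the number to weigh against a flat subscription or self-hosted infrastructure cost.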

Be fair about what OpenRouter does well. The breadth is unmatched in this category. The model catalog is searchable and current. The auto-router routes across providers and models for reliability. The free tier is real and useful for prototyping. The observability story includes request logs, cost dashboards, and broadcast integrations into multiple LLM-observability backends. For solo engineers, prototypes, and small teams that want one key and many models, OpenRouter remains the path of least resistance.

Where teams start looking elsewhere is less about OpenRouter being weak and more about what hosted-only gateways do not solve. You may need an audit trail that satisfies SOC 2 or HIPAA without depending on a third-party broker. You may need conditional routing rules that target specific endpoints based on user attributes, latency, and cost. You may need prompt versioning, A/B traffic splitting, semantic caching, and guardrail enforcement inside the same plane. You may need self-hosted control because PII or regulated data cannot leave your VPC. You may want eval scores attached to span data, not in a separate dashboard. Each of those is a real reason to compare alternatives.

The 5 OpenRouter alternatives compared

1. Portkey: Best hosted gateway with governance and observability

Open-source gateway. Closed-source hosted control plane. Self-hostable.

Portkey is the most polished hosted gateway in this list, especially for teams that need governance, observability, prompt engineering, and routing in one product. The pitch is that the gateway is a single integration point for every LLM call, and policy, telemetry, prompt management, and analytics live behind that point.

Architecture: Portkey ships an open-source AI gateway under Apache 2.0 plus a hosted control plane for analytics, prompt management, governance, and enterprise features. The gateway exposes an OpenAI-compatible REST API and routes across 1,600+ model variants from 250+ providers, with conditional routing, weighted load balancing, retries, fallbacks, budgets, and rate limits on virtual API keys. Cache supports simple and semantic modes. Guardrails are policy-driven and integrate with PII redaction, regex checks, and external moderation providers.
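The two routing ideas named above, conditional routing and weighted load balancing, can be sketched in a few lines of plain Python. This is a generic illustration and not Portkey's configuration format or API; the `Target` class, the rule shape, and the provider labels are all hypothetical.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class Target:
    name: str      # hypothetical provider or model label
    weight: float  # relative share of traffic


def pick_weighted(targets, rng):
    """Choose one target with probability proportional to its weight."""
    return rng.choices(targets, weights=[t.weight for t in targets], k=1)[0]


def route(request_meta, rules, default, rng):
    """Conditional routing: the first rule whose conditions all match wins.

    `rules` is a list of (conditions, targets) pairs; `conditions` is a dict
    of metadata keys and the values they must equal.
    """
    for conditions, targets in rules:
        if all(request_meta.get(key) == value for key, value in conditions.items()):
            return pick_weighted(targets, rng)
    return pick_weighted(default, rng)


# Illustrative setup: enterprise users get a dedicated target,
# everyone else is split 80/20 across two providers.
RULES = [({"plan": "enterprise"}, [Target("premium-model", 1.0)])]
DEFAULT = [Target("provider-a", 0.8), Target("provider-b", 0.2)]

if __name__ == "__main__":
    rng = random.Random(0)
    print(route({"plan": "enterprise"}, RULES, DEFAULT, rng).name)
    print(route({"plan": "free"}, RULES, DEFAULT, rng).name)
```

When comparing gateways, the question is which request attributes (user, latency, cost) the rule conditions can actually see, and whether rules are versioned and auditable.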

Pricing: Portkey Free covers 10,000 requests per month and basic observability. Production starts at $99 per month with 100,000 requests, virtual keys, and prompt management. Enterprise is custom and adds SSO, RBAC, audit logs, SOC 2, HIPAA, on-prem deployment, and dedicated support. The OSS gateway has no fee when self-hosted; the control plane usage drives the bill.

Best for: Pick Portkey when one team owns gateway, prompts, and analytics, and the buying signal is governance plus observability inside the same UI. It pairs well with OpenAI-compatible clients, Anthropic SDK, Google GenAI, Bedrock, Azure OpenAI, and BYOK setups.

Skip if: Skip Portkey if your eval and tracing pipeline is the center of gravity, since prompt-eval depth is lighter than dedicated eval platforms. Skip it if you need agent simulation or voice testing as part of the loop. Also model the cost. The hosted control plane bills per request once you cross the free tier, and that math compounds at scale.

2. LiteLLM: Best self-hosted Python proxy

BSL 1.1 source-available with fair-use exemption. Self-hostable.

LiteLLM is the right alternative when the team wants a code-first proxy and SDK, runs Python, and needs broad provider coverage without paying a platform fee. It is the de facto OSS choice for teams that have already standardized on Python services and want their gateway to be one of those services.

Architecture: LiteLLM is an open-source Python library and standalone proxy that translates 100+ LLM provider APIs into OpenAI-compatible inputs and outputs. Router supports retries, fallbacks, timeouts, cooldowns, weighted load balancing, virtual keys, and budgets. The proxy ships as a single Docker image with optional Postgres for budget tracking, model-cost reporting, and key management. SDKs are Python and JavaScript with first-class async support.
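To show how retries, fallbacks, and cooldowns interact, here is a small self-contained sketch. It is deliberately not LiteLLM's Router API; `FallbackRouter`, its parameters, and the callable-per-provider shape are hypothetical and exist only to illustrate the control flow.

```python
import time


class FallbackRouter:
    """Try providers in order, retry each a few times, cool down the ones that fail."""

    def __init__(self, providers, max_retries=1, cooldown_s=30.0, clock=time.monotonic):
        self.providers = providers      # dict: name -> callable(prompt) -> str, primary first
        self.max_retries = max_retries  # extra attempts per provider after the first
        self.cooldown_s = cooldown_s    # how long a failed provider is skipped
        self.clock = clock              # injectable so drills and tests can control time
        self._cooling_until = {}        # name -> time when the provider is eligible again

    def call(self, prompt):
        errors = []
        for name, send in self.providers.items():
            if self.clock() < self._cooling_until.get(name, 0.0):
                continue  # provider is still cooling down, skip it
            for _attempt in range(1 + self.max_retries):
                try:
                    return name, send(prompt)
                except Exception as exc:  # a real proxy would only retry retryable errors
                    errors.append(f"{name}: {exc}")
            self._cooling_until[name] = self.clock() + self.cooldown_s
        raise RuntimeError("all providers failed or are cooling down: " + "; ".join(errors))
```

The cooldown is what keeps a dead primary from adding retry latency to every request; without it, each call pays for the failed attempts before reaching the fallback.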

Pricing: LiteLLM is free under BSL 1.1 for use up to certain commercial thresholds, with fair-use language and a four-year change date to Apache 2.0. Enterprise add-ons (SSO, JWT auth, audit logs, Prometheus metrics export, custom guardrails, dedicated support) are quote-only. The OSS path is real and widely deployed; the enterprise tier is for teams that want managed support, hardened auth, and SLAs.

Best for: Pick LiteLLM if your team is Python-first, runs services in Docker or Kubernetes, and wants a code-first proxy with budget tracking, retries, and model fallbacks. It pairs well with custom agent code, BYOK provider keys, FastAPI services, and existing OTel pipelines.

Skip if: Skip LiteLLM if you need a polished UI for prompts, datasets, and evals out of the box. The proxy ships analytics, but the visual surface area is thinner than Portkey or TrueFoundry. Read the BSL 1.1 license carefully if your business model includes offering LiteLLM as a managed service to third parties; that path may need an enterprise license. Helicone, Portkey, and FutureAGI publish gateway components under Apache 2.0 if pure OSI is a procurement requirement.

3. TrueFoundry: Best Kubernetes-native enterprise gateway

Closed source. Self-hosted in your VPC, on-prem, or air-gapped. Cloud option.

TrueFoundry is the right alternative when your platform team already runs Kubernetes and wants an enterprise AI gateway that fits the same Helm-and-RBAC mental model as the rest of your stack. The pitch is governance, multi-cloud deployment, and observability inside one plane that the platform team can own.

Architecture: TrueFoundry AI Gateway is Kubernetes-native with Helm-based management. It supports unified access across 250+ LLMs, including chat, completion, embedding, and reranking models. Governance covers RBAC with SSO, rate limiting per user, service, or endpoint, token-based and cost-based quotas, and OAuth2 plus API-key auth. Observability includes token usage, latency, error rates, full request and response logging, and metadata tagging. Reliability covers latency-based and weighted load balancing, automatic fallback, geo-aware routing, and a vendor-claimed sub-3ms internal latency. Safety integrates PII filtering and toxicity detection plus connections to OpenAI Moderation, AWS Guardrails, and Azure Content Safety. The platform claims SOC 2, HIPAA, and GDPR compliance and supports VPC, on-prem, and air-gapped environments.
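A token-based quota per user, service, or endpoint is easier to reason about with a concrete shape in mind. The sketch below is a generic fixed-window quota, not TrueFoundry's implementation; the class name, the key format, and the window size are assumptions for illustration.

```python
from collections import defaultdict


class TokenQuota:
    """Fixed-window token quota keyed by caller (user, service, or endpoint)."""

    def __init__(self, limit_tokens, window_s):
        self.limit_tokens = limit_tokens
        self.window_s = window_s
        self._used = defaultdict(int)  # (key, window index) -> tokens consumed

    def allow(self, key, tokens, now):
        """Return True and record usage if the request fits in the current window."""
        window = int(now // self.window_s)
        if self._used[(key, window)] + tokens > self.limit_tokens:
            return False
        self._used[(key, window)] += tokens
        return True


if __name__ == "__main__":
    quota = TokenQuota(limit_tokens=1_000, window_s=60)
    print(quota.allow("user:alice", 600, now=0))   # fits
    print(quota.allow("user:alice", 500, now=10))  # would exceed the window
```

A production limiter would also evict old windows and share state across gateway replicas; when evaluating a vendor, ask where that state lives and what happens to quotas when it is unavailable.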

Pricing: TrueFoundry pricing is custom and quoted by sales. The platform stats page lists 10B+ requests processed monthly and a 99.99% uptime guarantee. There is no free public-cloud tier in the gateway product the way Portkey has one.

Best for: Pick TrueFoundry when your platform team mandates Kubernetes-native deployment, when SOC 2 or HIPAA is a hard procurement gate, and when air-gapped or on-prem is required. The buying signal is enterprise governance plus K8s ownership rather than a hosted SaaS contract.

Skip if: Skip TrueFoundry if your team does not run Kubernetes and you do not want to. Helm, autoscaling, GPU scheduling, and cluster operations are a real cost. Skip it also if you need a low-cost hosted entry point. Pricing starts at the enterprise level, not at a free tier. Verify exact gateway latency claims under your traffic shape before signing because vendor benchmarks rarely match production loads.

4. Helicone: Best for gateway-first observability and analytics

Apache 2.0. Self-hostable. Hosted cloud option.

Helicone is the right alternative when the fastest path to value is changing the base URL, seeing every request, and controlling spend. It is gateway-first observability, with eval scores and prompt management as adjacent features. That matters if the production issue is provider routing, caching, p95 latency, cost attribution, user-level analytics, or fallback behavior.

Architecture: Helicone is an Apache 2.0 project for LLM observability and an OpenAI-compatible AI Gateway. The docs cover request logging, provider routing across 100+ models, caching, rate limits, LLM security, sessions, user metrics, cost tracking, datasets, alerts, reports, HQL, eval scores, prompts, and prompt assembly. The base-URL swap is the lowest-friction migration in this list.
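The base-URL swap can be illustrated without any vendor SDK. The sketch below builds the same OpenAI-compatible chat request twice and changes only the base URL and credentials; both hosts, both keys, and the model name are placeholders, and any vendor-specific auth header must come from that vendor's docs rather than from this example.

```python
import json
import urllib.request
from typing import Optional


def build_chat_request(base_url: str, api_key: str, model: str, prompt: str,
                       extra_headers: Optional[dict] = None) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-compatible chat completion request."""
    body = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    headers = {"Authorization": f"Bearer {api_key}", "Content-Type": "application/json"}
    headers.update(extra_headers or {})
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers=headers,
        method="POST",
    )


# Placeholder hosts: only the base URL and key differ between the two requests.
direct = build_chat_request("https://provider.example.com/v1", "sk-placeholder",
                            "example-model", "ping")
via_gateway = build_chat_request("https://gateway.example.com/v1", "gw-placeholder",
                                 "example-model", "ping")

if __name__ == "__main__":
    print(direct.full_url)
    print(via_gateway.full_url)
```

Because the request body is identical, migration effort is mostly configuration: one environment variable for the base URL and one for the key.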

Pricing: Helicone Hobby is free with 10,000 requests, 1 GB storage, 1 seat, and 1 organization. Pro is $79 per month with unlimited seats, alerts, reports, and HQL. Team is $799 per month with 5 organizations, SOC 2, HIPAA, and a dedicated Slack channel. Enterprise is custom and includes SAML SSO, on-prem deployment, and bulk cloud discounts. Usage-based pricing applies above included allowances.

Best for: Pick Helicone if request analytics, user-level spend, model cost tracking, caching, fallbacks, and prompt management are the gap. It is a strong first tool for teams with live LLM traffic that need an answer to “which users, prompts, models, and endpoints drove this p99 spike.”

Skip if: Helicone will not replace a deep eval platform by itself. The center of gravity is gateway observability. On March 3, 2026, Helicone announced it had been acquired by Mintlify and that services would remain live in maintenance mode with security updates, new models, bug fixes, and performance fixes. Treat roadmap depth as something to verify directly during your evaluation.

5. FutureAGI: Best when routing closes into evals and guardrails

Open source. Self-hostable. Hosted cloud option.

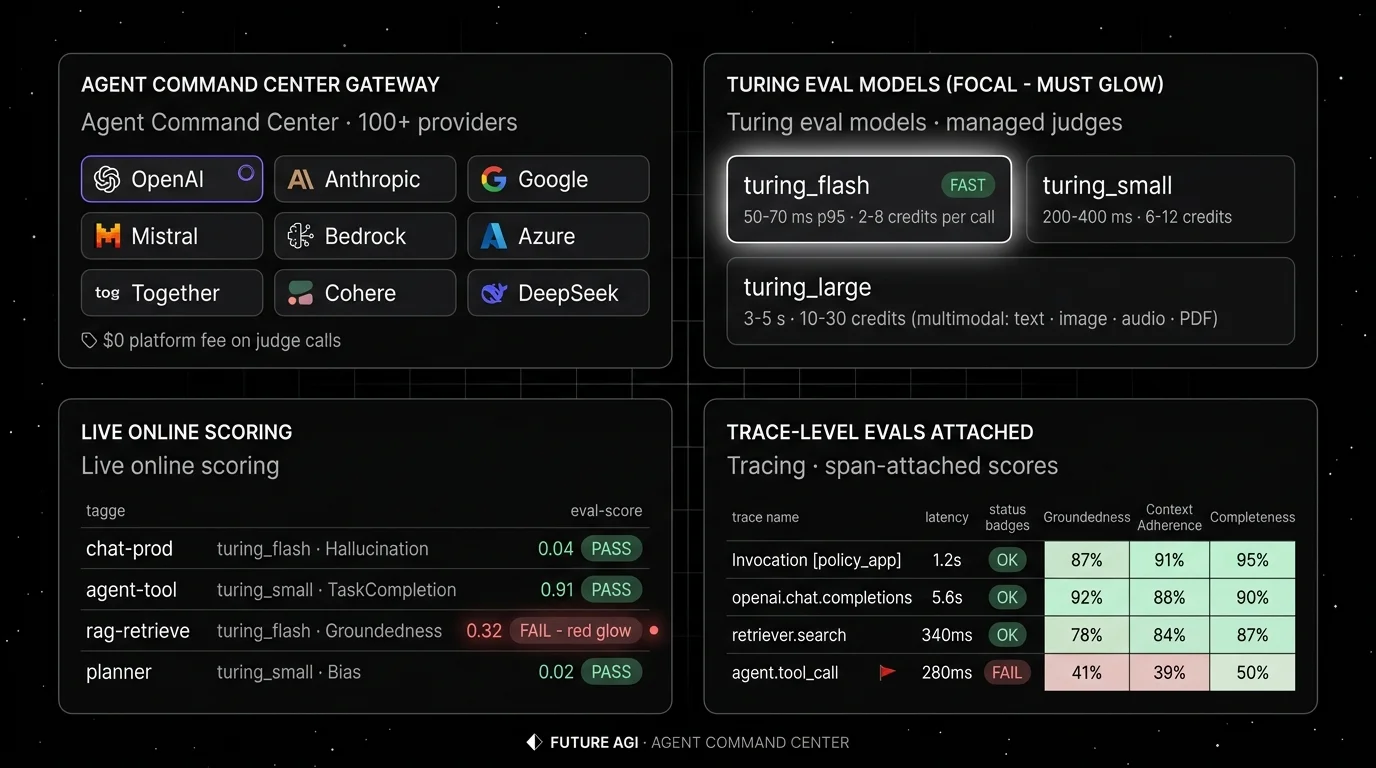

FutureAGI is the right alternative when gateway routing must inform pre-prod evaluation, prompt optimization, and guardrail enforcement, all in the same loop. The Agent Command Center routes across 100+ providers with BYOK, guardrails, and cache, while traceAI emits OpenTelemetry GenAI semconv spans that carry eval scores as span attributes. The buying signal is teams that already use OpenRouter for breadth but lose fidelity when production failures need to become eval cases without manual export.

Architecture: what closes, not what ships. The public repo is Apache 2.0 and self-hostable. The runtime closes five handoffs without glue code. Simulate-to-eval: every simulated trace is scored by the same evaluator that judges production. Eval-to-trace: scores are span attributes, so a failure surfaces inside the trace tree where the bad tool call lives. Trace-to-optimizer: failing spans flow into the optimizer as labeled training examples. Optimizer-to-gate: the optimizer ships a versioned prompt that the CI gate evaluates against the same threshold the previous version held. Gate-to-deploy: only versions that hold the eval contract reach the gateway. The plumbing under it (Django, React, the Go-based Agent Command Center gateway, traceAI under Apache 2.0, Postgres, ClickHouse, Redis, object storage, workers, Temporal, OTel across Python, TypeScript, Java, and C#) exists so the handoffs do not require export-and-import.
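Two of the handoffs above, eval-to-trace and optimizer-to-gate, reduce to simple data operations. The sketch below is a generic illustration and not FutureAGI's or traceAI's API; the `Span` class, the `eval.<metric>.score` attribute key, and the pass-rate rule are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Span:
    name: str
    attributes: dict = field(default_factory=dict)


def score_key(metric):
    """Hypothetical attribute key for an eval score stored on a span."""
    return f"eval.{metric}.score"


def attach_eval(span, metric, score):
    """Eval-to-trace: store the score on the span it judges."""
    span.attributes[score_key(metric)] = score


def failing_spans(spans, metric, threshold):
    """Spans whose score is below the threshold; candidates for new eval cases."""
    key = score_key(metric)
    return [s for s in spans if key in s.attributes and s.attributes[key] < threshold]


def gate(spans, metric, threshold, min_pass_rate):
    """CI gate: pass only if enough scored spans meet the threshold."""
    key = score_key(metric)
    scored = [s for s in spans if key in s.attributes]
    if not scored:
        return False  # nothing was evaluated, so there is nothing to approve
    passed = sum(1 for s in scored if s.attributes[key] >= threshold)
    return passed / len(scored) >= min_pass_rate
```

Keeping the score on the span means a failure is found by filtering traces, not by joining a separate eval dashboard against request IDs.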

Pricing: FutureAGI starts at $0/month. The free tier includes 50 GB tracing and storage, 2,000 AI credits, 100,000 gateway requests, 100,000 cache hits, 1 million text simulation tokens, 60 voice simulation minutes, unlimited datasets, unlimited prompts, unlimited dashboards, 3 annotation queues, 3 monitors, unlimited team members, and unlimited projects. Usage after the free tier starts at $2 per GB storage, $10 per 1,000 AI credits, $5 per 100,000 gateway requests, $1 per 100,000 cache hits, $2 per 1 million text simulation tokens, and $0.08 per voice minute. Boost is $250 per month, Scale is $750 per month, and Enterprise starts at $2,000 per month.
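The usage rates above can be turned into a rough overage estimate. This sketch assumes linear, pro-rated billing at the listed starting rates with no volume tiers, and it ignores the Boost, Scale, and Enterprise plans; the meter names are illustrative, and the vendor pricing page should be checked before budgeting.

```python
# Free-tier allowances quoted above.
FREE = {
    "storage_gb": 50,
    "ai_credits": 2_000,
    "gateway_requests": 100_000,
    "cache_hits": 100_000,
    "text_sim_tokens": 1_000_000,
    "voice_minutes": 60,
}

# Starting usage rates quoted above, as (price in USD, units per price).
RATE = {
    "storage_gb": (2.00, 1),
    "ai_credits": (10.00, 1_000),
    "gateway_requests": (5.00, 100_000),
    "cache_hits": (1.00, 100_000),
    "text_sim_tokens": (2.00, 1_000_000),
    "voice_minutes": (0.08, 1),
}


def overage_usd(usage):
    """Estimated monthly overage, assuming linear pro-rated billing per meter."""
    total = 0.0
    for meter, used in usage.items():
        billable = max(0, used - FREE[meter])
        price, per_units = RATE[meter]
        total += billable / per_units * price
    return round(total, 2)


if __name__ == "__main__":
    month = {
        "storage_gb": 80,               # 30 GB over      -> $60
        "ai_credits": 5_000,            # 3,000 over      -> $30
        "gateway_requests": 1_100_000,  # 1,000,000 over  -> $50
        "cache_hits": 600_000,          # 500,000 over    -> $5
        "voice_minutes": 160,           # 100 over        -> $8
    }
    print(overage_usd(month))
```

For this illustrative month the estimate is $153, well under the $250 Boost plan, so the comparison only shifts once usage grows or plan-specific features are required.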

Best for: Pick FutureAGI when production gateway data should land in the same plane as evals, prompts, datasets, and CI gates. The buying signal is teams using OpenRouter for routing, Langfuse for observability, a notebook for prompt iteration, and a separate eval harness, who watch failures repeat across releases because the loop is manual.

Skip if: Skip FutureAGI if your immediate need is a narrow gateway and one API key. The full stack has more moving parts than Portkey hosted or Helicone Pro. If you do not want to operate Postgres, ClickHouse, queues, Temporal, and OTel pipelines, use the hosted product or pick a smaller point tool.

Decision framework: Choose X if…

- Choose Portkey if your dominant workload is gateway-first governance with prompt management, conditional routing, and analytics in one hosted UI. Buying signal: one team owns gateway plus prompts plus telemetry. Pairs with: OpenAI-compatible clients, BYOK, virtual keys.

- Choose LiteLLM if your team is Python-first and wants a code-first proxy with router, budgets, and fallbacks. Buying signal: services already run in Docker or Kubernetes. Pairs with: FastAPI, custom agent code, OTel exporters.

- Choose TrueFoundry if K8s ownership and enterprise governance are non-negotiable. Buying signal: SOC 2 or HIPAA gate, VPC or air-gapped requirement. Pairs with: Helm, RBAC, SSO, multi-cloud deployment.

- Choose Helicone if the fastest path to value is changing the base URL and seeing every request. Buying signal: live traffic and a p99 mystery. Pairs with: OpenAI-compatible clients, provider failover, budget tracking. Verify the post-Mintlify roadmap before signing.

- Choose FutureAGI if routing must inform evals, traces, and guardrails inside the same plane. Buying signal: production failures must become eval cases without manual export. Pairs with: traceAI, OTel GenAI semconv, BYOK judges.

Common mistakes when picking an OpenRouter alternative

- Treating the platform fee as the only cost. Real cost is platform fee plus token spend plus retries plus retention plus cache hits plus seats plus on-call hours for self-hosted operators. A cheaper subscription can lose if every retry calls a slow provider.

- Ignoring license fine print. LiteLLM is BSL 1.1, not Apache 2.0. Portkey is Apache 2.0 for the gateway and closed for the control plane. Adjacent tools carry the same risk: Phoenix is Elastic License 2.0, which is not OSI-approved. If procurement requires OSI open source for the whole self-hosted plane, control plane included, only Helicone and FutureAGI clear that bar today.

- Picking by provider count. Three hundred providers in a catalog is impressive on a slide. The number that matters is the provider you actually call, the one your traffic will hit at p99, and the one that has rate-limit headroom on your account.

- Skipping the failover drill. A gateway is a single point of failure for production LLM traffic. Run a 24-hour drill: kill primary, observe fallback timing, retry counts, cost, and tail latency before signing a contract.

- Pricing only the gateway. Real cost includes seats, retention, audit log volume, prompt management seats, eval token spend, and the labor cost of operating self-hosted infra.
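The cost mistakes above come down to leaving terms out of one sum. The sketch below writes that sum down; every figure in the usage example is made up for illustration, and the field names and the assumption that retries scale token spend linearly are simplifications.

```python
from dataclasses import dataclass


@dataclass
class MonthlyCost:
    platform_fee: float         # subscription or gateway fee, USD
    token_spend: float          # provider spend on first attempts, USD
    retry_rate: float           # extra attempts per request, e.g. 0.05 = 5%
    retention: float = 0.0      # log and trace storage, USD
    seats: float = 0.0          # per-seat fees, USD
    infra: float = 0.0          # self-hosted compute and databases, USD
    on_call_hours: float = 0.0  # operator hours spent on the gateway
    hourly_rate: float = 0.0    # loaded cost per operator hour, USD

    def total(self):
        retry_spend = self.token_spend * self.retry_rate  # assumes retries cost like first attempts
        labor = self.on_call_hours * self.hourly_rate
        return round(self.platform_fee + self.token_spend + retry_spend
                     + self.retention + self.seats + self.infra + labor, 2)


if __name__ == "__main__":
    # Illustrative numbers only: a hosted plan versus a self-hosted deployment.
    hosted = MonthlyCost(platform_fee=99, token_spend=4_000, retry_rate=0.05)
    self_hosted = MonthlyCost(platform_fee=0, token_spend=4_000, retry_rate=0.05,
                              infra=300, on_call_hours=10, hourly_rate=90)
    print(hosted.total(), self_hosted.total())
```

With these invented inputs the "free" self-hosted option costs more per month than the hosted plan, which is the pattern the list warns about; real inputs can easily flip the result.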

What changed in the gateway landscape in 2026

| Date | Event | Why it matters |
|---|---|---|
| May 2026 | LiteLLM landed BSL 1.1 license clarification and enterprise features | Open-source proxy users can still self-host; commercial-managed-service path now requires explicit licensing. |
| Apr 2026 | Portkey shipped semantic cache and conditional-route improvements | Routing logic moves closer to per-user, per-context decisions inside the gateway. |
| Mar 9, 2026 | FutureAGI shipped Agent Command Center and ClickHouse trace storage | Gateway routing, guardrails, cost controls, and high-volume trace analytics moved into the same loop. |
| Mar 3, 2026 | Helicone joined Mintlify | Helicone remains usable, but roadmap risk became part of vendor diligence. |
| Feb 2026 | TrueFoundry expanded gateway to support 250+ providers and embedding models | K8s-native option closed feature gaps with hosted gateways for embedding workflows. |
| Feb 2026 | OpenRouter expanded multimodal and tool-calling features | The breadth lead extended into PDFs, audio, video, TTS, STT, and server-side tools. |

How to actually evaluate this for production

- Run a domain reproduction. Export a representative slice of real LLM traffic, including provider failures, long tail prompts, tool calls, and rate-limit events. Replay the slice through each candidate gateway with your OTel payload shape and your real provider keys. Do not accept a vendor benchmark.

- Measure reliability under load. Build a Reliability Decay Curve: x-axis is concurrency or request volume, y-axis is successful routing, p95 and p99 latency, fallback hit rate, retry count, and cost per request. Track dropped requests, duplicate requests, failed fallbacks, and time-to-detect for primary outages.

- Cost-adjust. Real cost equals platform fee plus token spend plus retries plus storage retention plus seat fees plus self-hosted infra plus on-call. A gateway with a lower per-request fee can lose if its fallback policy doubles your retry rate. A self-hosted gateway can lose if Postgres and ClickHouse plus on-call exceed SaaS overage.
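The Reliability Decay Curve described in the steps above can be computed from replay logs with a short script. This is a minimal sketch: the record fields (`concurrency`, `ok`, `latency_ms`, `used_fallback`) are an assumed log shape, and the percentile uses the nearest-rank method, which is one of several common definitions.

```python
import math
from collections import defaultdict


def percentile(values, pct):
    """Nearest-rank percentile of a non-empty list; pct is an integer from 1 to 100."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def decay_curve(records):
    """Summarize replay records into one row per concurrency level."""
    by_level = defaultdict(list)
    for record in records:
        by_level[record["concurrency"]].append(record)

    curve = []
    for level in sorted(by_level):
        rows = by_level[level]
        ok_latencies = [r["latency_ms"] for r in rows if r["ok"]]
        curve.append({
            "concurrency": level,
            "success_rate": sum(1 for r in rows if r["ok"]) / len(rows),
            "fallback_rate": sum(1 for r in rows if r["used_fallback"]) / len(rows),
            "p95_ms": percentile(ok_latencies, 95) if ok_latencies else None,
            "p99_ms": percentile(ok_latencies, 99) if ok_latencies else None,
        })
    return curve
```

Plot each column against `concurrency` per candidate gateway; the level where success rate drops or p99 departs from p95 is the useful comparison point, not the headline latency at low load.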

Sources

- OpenRouter pricing

- OpenRouter docs

- Portkey pricing

- Portkey gateway repo

- LiteLLM repo

- LiteLLM pricing

- TrueFoundry AI Gateway

- TrueFoundry pricing

- Helicone pricing

- Helicone repo

- Helicone joining Mintlify

- FutureAGI pricing

- FutureAGI repo

- traceAI repo


