MLflow Alternatives in 2026: 7 LLM Eval Platforms Compared

FutureAGI, DeepEval, Langfuse, Phoenix, W&B Weave, Comet Opik, and Braintrust as MLflow alternatives for production LLM evaluation work in 2026.


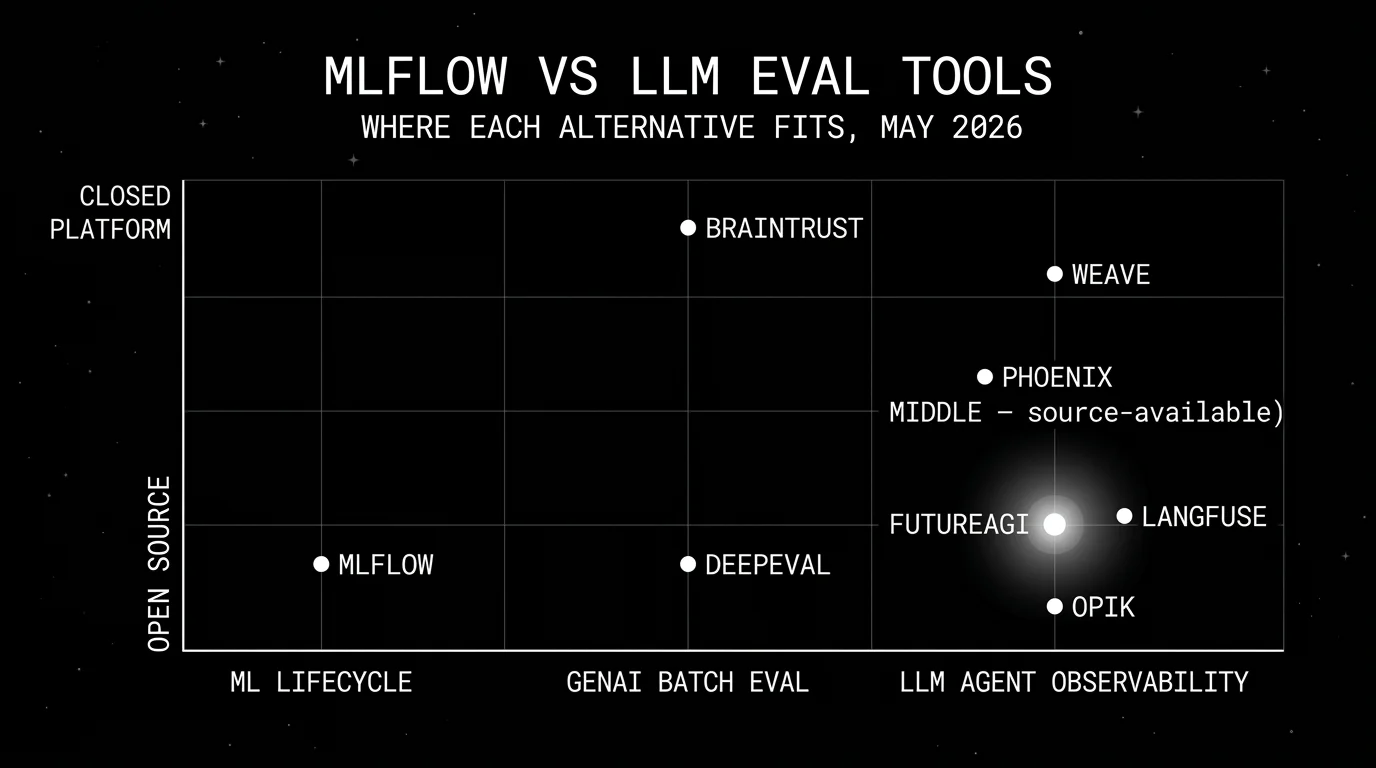

MLflow earns its place on any team that runs traditional ML alongside LLMs. The GenAI surface is fine for batch experiment scoring inside the existing MLflow workflow. It starts to feel thin when the work moves into production LLM observability, multi-turn agent evaluation, runtime guardrails, simulation, and prompt optimization. This guide compares seven alternatives that fill those gaps and explains the cleanest pattern for keeping MLflow where it shines while running a dedicated LLM eval platform alongside it.

TL;DR: Best MLflow alternative per use case

| Use case | Best pick | Why (one phrase) | Pricing | OSS |
|---|---|---|---|---|
| Unified eval, observe, simulate, optimize, gateway, guard | FutureAGI | One loop across pre-prod and prod | Free + usage from $2/GB storage | Apache 2.0 |
| pytest-style framework on a laptop | DeepEval | Easiest path from assertions to LLM evals | Free | Apache 2.0 |
| Self-hosted LLM observability | Langfuse | Mature traces, prompts, datasets, evals | Hobby free, Core $29/mo, Pro $199/mo | MIT core |
| OTel-native tracing and evals | Arize Phoenix | Open standards, source available | Phoenix free self-hosted, AX Pro $50/mo | Elastic License 2.0 |
| W&B-anchored AI lifecycle | W&B Weave | Native to Weights & Biases | Free tier + paid | Apache 2.0 toolkit; hosted W&B commercial |
| Comet-anchored AI lifecycle | Comet Opik | Open source observability + evals | Free + Comet enterprise | Apache 2.0 |
| Closed-loop SaaS with strong dev evals | Braintrust | Polished experiments, scorers, CI gate | Starter free, Pro $249/mo | Closed platform |

The short version: pick FutureAGI for the broadest open-source platform alongside MLflow, DeepEval for a framework-only start, and Weave or Opik when the rest of the stack already lives at W&B or Comet.

Why MLflow’s LLM evaluation falls short for production teams

MLflow is not a bad tool. It is a model lifecycle platform that grew GenAI features. The GenAI evaluation page lists three building blocks: datasets (the test database), scorers (built-in LLM judges like Correctness and Guidelines plus custom criteria), and prediction functions. That is enough for batch evaluation runs inside an experiment-tracking workflow.

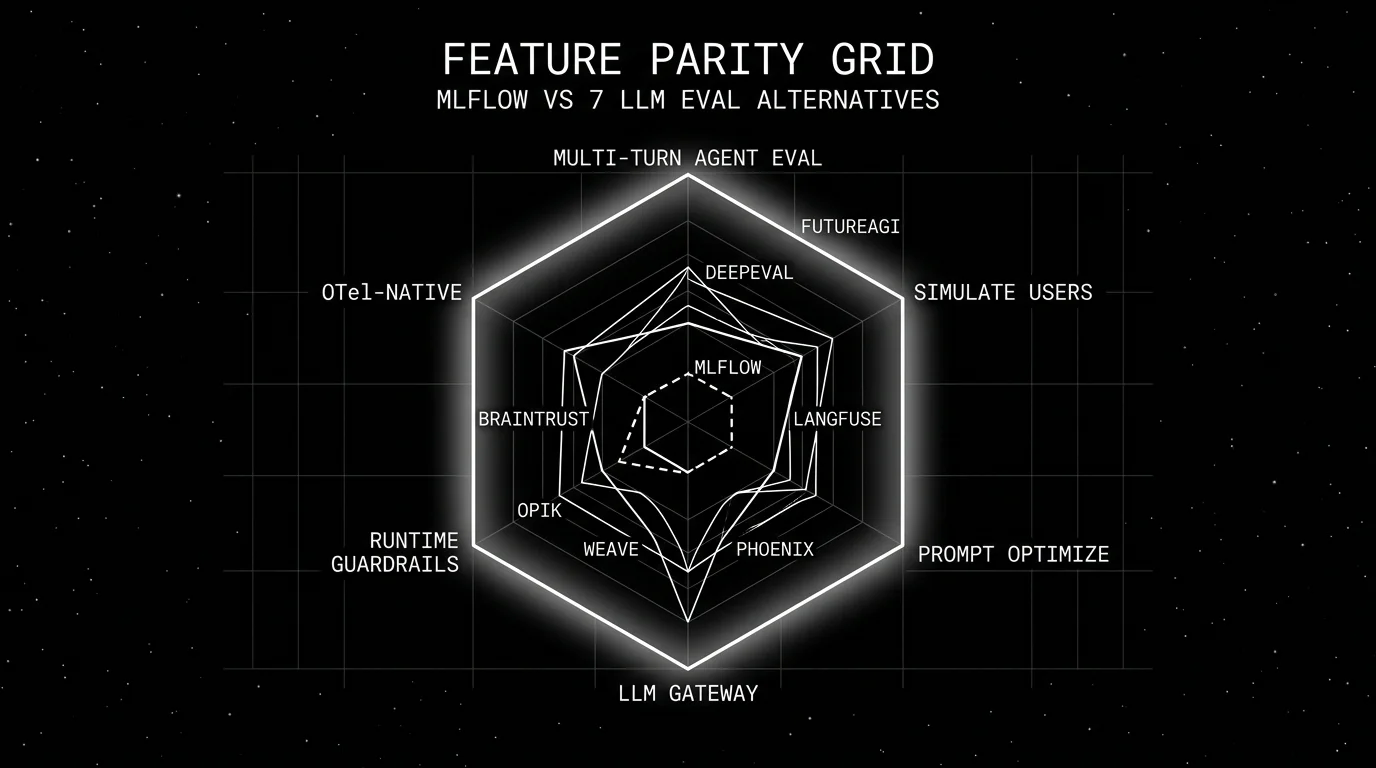

The friction shows up at five points.

First, the metric library is shallow compared to dedicated LLM eval frameworks. MLflow ships Correctness, Guidelines, and custom LLM judges. DeepEval ships G-Eval, DAG, Faithfulness, Answer Relevancy, Contextual Recall and Precision, Hallucination, Knowledge Retention, Role Adherence, Conversation Completeness, Turn Relevancy, Task Completion, Tool Correctness, Argument Correctness, Step Efficiency, Plan Adherence, Plan Quality, plus safety and multimodal metrics. FutureAGI ships 50+ first-party metrics that run locally plus Turing models and BYOK judges.

Second, multi-turn evaluation is not first-class. The MLflow surface treats evaluations as functions over inputs and outputs. Conversational test cases, conversation simulators, and session-level metrics are not built in. Teams running agents end up writing custom scorers that reimplement what FutureAGI and DeepEval ship out of the box.

Third, prompt management is thin. MLflow has a prompt registry; it does not have deployment labels, A/B testing, performance-by-version analysis, or production trace linking the way FutureAGI, Langfuse, and Confident-AI do.

Fourth, runtime guardrails and gateway routing are out of scope. MLflow does not run a request gateway, does not enforce a guardrail policy at the proxy, and does not route requests across providers based on health or cost. A team that needs those features pairs MLflow with another product.

Fifth, the LLM trace dashboard is narrower than the dedicated tools. Span-attached scores, dataset replay, prompt-to-trace performance views, and HQL-style query languages live in Langfuse, Phoenix, FutureAGI, and Helicone, not in MLflow.

The honest framing is that MLflow’s mlflow.genai.evaluate is a competent batch eval tool inside an experiment-tracking workflow. It is not a replacement for a dedicated LLM observability and evaluation platform once the workload moves to production agents.
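To make the multi-turn gap above concrete, here is a minimal sketch contrasting a scorer that sees one input/output pair with a session-level metric that sees the whole conversation. Every name here (`Turn`, `answer_is_nonempty`, `respects_constraint`) is hypothetical, and the substring checks are stand-ins for an LLM judge. None of this is the API of MLflow, DeepEval, or FutureAGI.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Turn:
    user: str
    assistant: str


def answer_is_nonempty(user: str, assistant: str) -> float:
    """Single-call scorer: judges one input/output pair in isolation."""
    return 1.0 if assistant.strip() else 0.0


def respects_constraint(session: List[Turn], constraint: str, forbidden: str) -> float:
    """Session-level metric: returns 0.0 if the assistant mentions `forbidden`
    in or after the turn where the user stated `constraint`, else 1.0."""
    constraint_seen = False
    for turn in session:
        if constraint.lower() in turn.user.lower():
            constraint_seen = True
        if constraint_seen and forbidden.lower() in turn.assistant.lower():
            return 0.0
    return 1.0


# Illustrative conversation (made-up data).
session = [
    Turn("I am allergic to peanuts. Any snack ideas?",
         "Sure, how about apple slices with cheese?"),
    Turn("Something with more protein?",
         "Try a peanut butter sandwich."),
]

# Every turn passes when scored in isolation...
per_turn = [answer_is_nonempty(t.user, t.assistant) for t in session]

# ...but the conversation as a whole fails the retention check.
session_score = respects_constraint(session, "allergic to peanuts", "peanut butter")
```

The per-turn scores are all passing while the session score fails, which is the kind of defect a purely input/output evaluation cannot see without custom scorer code.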

The 7 MLflow alternatives compared

1. FutureAGI: Best for unified LLM eval + observe + simulate + optimize + gateway + guard

Open source. Self-hostable. Hosted cloud option.

Use case: Teams that already run MLflow for traditional ML lifecycle and want a dedicated LLM platform that handles simulation, evaluation, observation, gateway routing, runtime guardrails, and prompt optimization in one runtime. The pitch is a single loop where each handoff is a versioned object, not a manual export.

Pricing: Free plus usage from $2/GB storage, $10 per 1,000 AI credits, $5 per 100,000 gateway requests, $2 per 1 million text simulation tokens, $0.08 per voice minute. Boost $250/mo, Scale $750/mo, Enterprise from $2,000/mo.

OSS status: Apache 2.0.

Best for: Mixed ML and LLM teams that keep MLflow for the model lifecycle and add FutureAGI for the LLM-specific surface. RAG agents, voice agents, copilots, and support automation are the strongest fits.

Worth flagging: More moving parts than MLflow on its own. ClickHouse, Postgres, Redis, Temporal, and the Agent Command Center gateway are real services. Use the hosted cloud if operating the data plane is not a fit.
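For budgeting, the usage rates in the Pricing line above can be turned into a rough monthly estimate. This is a sketch only: it assumes linear billing at the listed unit prices, ignores any free allowances and the Boost, Scale, or Enterprise plan fees, and the `MonthlyUsage` and `estimate_usage_cost` names are made up for illustration. Check the vendor's pricing page before relying on any number.

```python
from dataclasses import dataclass

# Unit prices in USD, copied from the Pricing line above.
PRICE_PER_GB_STORAGE = 2.00
PRICE_PER_1K_AI_CREDITS = 10.00
PRICE_PER_100K_GATEWAY_REQUESTS = 5.00
PRICE_PER_1M_SIM_TOKENS = 2.00
PRICE_PER_VOICE_MINUTE = 0.08


@dataclass
class MonthlyUsage:
    storage_gb: float = 0.0
    ai_credits: float = 0.0
    gateway_requests: float = 0.0
    sim_tokens: float = 0.0
    voice_minutes: float = 0.0


def estimate_usage_cost(u: MonthlyUsage) -> float:
    """Assumed linear usage cost in USD (no free tier, no plan fee)."""
    cost = (
        u.storage_gb * PRICE_PER_GB_STORAGE
        + u.ai_credits / 1_000 * PRICE_PER_1K_AI_CREDITS
        + u.gateway_requests / 100_000 * PRICE_PER_100K_GATEWAY_REQUESTS
        + u.sim_tokens / 1_000_000 * PRICE_PER_1M_SIM_TOKENS
        + u.voice_minutes * PRICE_PER_VOICE_MINUTE
    )
    return round(cost, 2)


# Illustrative workload (made-up volumes).
example = MonthlyUsage(
    storage_gb=100,
    ai_credits=5_000,
    gateway_requests=1_000_000,
    sim_tokens=10_000_000,
    voice_minutes=500,
)
print(estimate_usage_cost(example))  # 200 + 50 + 50 + 20 + 40 = 360.0
```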

2. DeepEval: Best for a pytest framework next to MLflow

Open source. Apache 2.0.

Use case: Drop-in CI evaluation. Decorate a pytest function with @pytest.mark.parametrize, call assert_test(), run deepeval test run file.py. Pairs cleanly with MLflow because DeepEval handles offline LLM evals while MLflow tracks the broader experiment.

Pricing: Free. The hosted Confident-AI platform on top is paid: Starter $19.99/user/mo, Premium $49.99/user/mo.

OSS status: Apache 2.0, 15K+ stars.

Best for: Python-first teams that already run pytest in CI and want LLM evals next to existing tests, with MLflow continuing to track experiments and model artifacts.

Worth flagging: Framework only. Production trace dashboards, gateway, and runtime guardrails live elsewhere.

3. Langfuse: Best for self-hosted LLM observability

Open source core. Self-hostable. Hosted cloud option.

Use case: Self-hosted production tracing, prompt versioning, dataset-driven evals, and human annotation. The system of record for LLM telemetry when MLflow is doing model lifecycle work elsewhere.

Pricing: Hobby free with 50K units, 30 days data access, 2 users. Core $29/mo with 100K units, 90 days. Pro $199/mo with 3 years and SOC 2. Enterprise $2,499/mo.

OSS status: MIT core, enterprise dirs separate.

Best for: Platform teams that want to operate the data plane and keep trace data inside their infrastructure.

Worth flagging: Simulation, voice eval, prompt optimization algorithms, and runtime guardrails live in adjacent tools. License is “MIT core, enterprise paths separate.”

4. Arize Phoenix: Best for OpenTelemetry-native tracing alongside MLflow

Source available. Self-hostable. Phoenix Cloud and Arize AX paths exist.

Use case: Teams that have already invested in OpenTelemetry and want LLM eval on the same plumbing. Phoenix accepts traces over OTLP and auto-instruments LlamaIndex, LangChain, DSPy, Mastra, Vercel AI SDK, OpenAI, Bedrock, and Anthropic, with SDK coverage for Python, TypeScript, and Java.

Pricing: Phoenix free for self-hosting. AX Pro $50/mo. AX Enterprise custom.

OSS status: Elastic License 2.0. Source available.

Best for: Engineers who care about open instrumentation standards and want a path from local Phoenix into Arize AX for ML observability that complements MLflow.

Worth flagging: Phoenix is not a gateway, not a guardrail product, and not a simulator. The ELv2 license matters for legal teams that apply OSI definitions strictly, because ELv2 is source available rather than OSI-approved open source.

5. W&B Weave: Best when Weights & Biases is already the lifecycle tool

Apache 2.0 toolkit (wandb/weave); hosted W&B platform commercial.

Use case: Teams that already use W&B for experiment tracking and want LLM evaluation in the same product. Weave organizes application logs into trace trees and supports multimodal tracking (text, code, documents, image, audio).

Pricing: Free tier; paid plans not posted on a flat pricing page. The hosted free tier is the entry point.

OSS status: Apache 2.0 (wandb/weave) toolkit; hosted W&B commercial.

Best for: Teams committed to W&B for ML lifecycle and willing to consolidate LLM eval into the same platform. The trace tree visualization is a real strength for agent rollouts.

Worth flagging: No first-party gateway or runtime guardrails. The eval metric library is smaller than DeepEval’s. Pricing is less transparent than for the OSS alternatives.

6. Comet Opik: Best when Comet is the lifecycle tool, with OSS

Open source. Self-hostable. Comet enterprise option.

Use case: Teams using Comet for ML experiment tracking. Opik adds LLM-specific tracing, evals, and an “Ollie” coding assistant that suggests fixes from traces.

Pricing: Free open-source self-hosting; Comet enterprise terms apply for the managed product.

OSS status: Apache 2.0. Open source on GitHub with 19K+ stars.

Best for: Comet-anchored stacks; small to mid teams that want OSS observability with 30+ LLM-as-judge metrics out of the box.

Worth flagging: Smaller mindshare than Langfuse or Phoenix in dedicated LLM eval procurement; verify the multi-turn agent surface for your workload.

7. Braintrust: Best for a closed-loop SaaS next to MLflow

Closed platform. Hosted cloud or enterprise self-host.

Use case: Teams that want one polished SaaS for experiments, datasets, scorers, prompt iteration, online scoring, and CI gating, while MLflow keeps tracking ML model artifacts.

Pricing: Starter $0 with 1 GB processed data and 10K scores. Pro $249/mo with 5 GB and 50K scores. Enterprise custom.

OSS status: Closed.

Best for: Teams that prefer to buy rather than build, want experiments and scorers in one UI, and do not need open-source control.

Worth flagging: No first-party voice simulator. Gateway, guardrails, and prompt optimization are not first-class. See Braintrust Alternatives.

Decision framework: pick by what MLflow leaves on the table

- Need production LLM observability: FutureAGI, Langfuse, Phoenix.

- Need multi-turn agent evaluation: FutureAGI, DeepEval, Braintrust.

- Need prompt management with deployment labels: FutureAGI, Langfuse.

- Need runtime guardrails or a gateway: FutureAGI is the open-source option; Galileo on the closed side.

- Need pre-production simulation, especially voice: FutureAGI is the only one with first-party voice simulation.

- Need to stay in W&B: Weave.

- Need to stay in Comet: Opik.

- Need a polished SaaS dev loop: Braintrust.

The pattern that works for most teams: keep MLflow for traditional ML lifecycle, add one LLM eval platform alongside it, and write a thin glue layer that registers prompt versions and dataset hashes in both. The pain is real when teams force MLflow to do LLM observability or force the LLM platform to do model registry; both end up bent out of shape.
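As a rough sketch of that glue layer, the snippet below derives a stable dataset hash and bundles it with a prompt version into one set of tags that both systems can store. The function names and the `llm.*` tag keys are hypothetical. On the MLflow side a dict like this could be passed to `mlflow.set_tags` inside a run; the LLM platform side would attach the same keys through its own SDK, which is not shown here.

```python
import hashlib
import json
from typing import Dict, Iterable, Mapping


def dataset_hash(rows: Iterable[Mapping[str, object]]) -> str:
    """Order-independent SHA-256 over canonical JSON encodings of the rows."""
    row_digests = sorted(
        hashlib.sha256(
            json.dumps(row, sort_keys=True, separators=(",", ":")).encode("utf-8")
        ).hexdigest()
        for row in rows
    )
    return hashlib.sha256("\n".join(row_digests).encode("utf-8")).hexdigest()


def lineage_tags(prompt_name: str, prompt_version: str,
                 rows: Iterable[Mapping[str, object]]) -> Dict[str, str]:
    """Tags to record identically in MLflow and in the LLM eval platform."""
    return {
        "llm.prompt_name": prompt_name,        # hypothetical tag key
        "llm.prompt_version": prompt_version,  # hypothetical tag key
        "llm.dataset_sha256": dataset_hash(rows),
    }


# Illustrative eval set (made-up rows).
rows = [
    {"input": "Reset my password", "expected": "account"},
    {"input": "Where is my invoice?", "expected": "billing"},
]
tags = lineage_tags("support-triage", "v3", rows)
```

Hashing per row and sorting the digests keeps the hash stable when rows are reordered, so the same eval set yields the same identifier in both systems.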

Common mistakes when replacing MLflow’s LLM evaluation

- Treating the MLflow decision as a binary “keep or migrate.” The cleaner pattern is “keep for ML lifecycle, add for LLM workflow.” Forcing a migration off MLflow rarely pays for itself.

- Picking the LLM platform on a demo dataset. Run a domain reproduction with your real traces, your model mix, your concurrency, and your judge cost.

- Underestimating scorer translation. MLflow’s Correctness and Guidelines scorers map to G-Eval and DAG in DeepEval, to LLM judges in Langfuse, and to scorers in Braintrust. The names differ. Pin the rubric and the judge model when porting.

- Ignoring multi-step agent eval. MLflow’s eval is single-call by default. Agent reliability needs trace-level, session-level, and path-aware evaluation. Verify the alternative covers it.

- Skipping the dataset migration plan. Datasets, prompt version history, human review queues, and CI gates are the hard half of any platform move.
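One way to act on the "pin the rubric and the judge model" advice above is to keep each scorer as a small frozen spec and compare fingerprints before and after porting. This is a sketch under assumptions: `JudgeSpec` is a hypothetical structure and the model identifier is a placeholder. It does not correspond to any platform's scorer API.

```python
import hashlib
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class JudgeSpec:
    name: str          # platform-specific label; allowed to differ after porting
    rubric: str        # exact judge instructions
    judge_model: str   # exact judge model identifier
    temperature: float = 0.0

    def fingerprint(self) -> str:
        """Hash of everything that affects scores; the name is excluded
        because scorer names differ between platforms."""
        pinned = {
            "rubric": self.rubric,
            "judge_model": self.judge_model,
            "temperature": self.temperature,
        }
        payload = json.dumps(pinned, sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()[:12]


RUBRIC = "Score 1 if the answer matches the expected facts, else 0."

source = JudgeSpec("Correctness", RUBRIC, "judge-model-placeholder")
ported = JudgeSpec("correctness_geval", RUBRIC, "judge-model-placeholder")
drifted = JudgeSpec("correctness_geval", RUBRIC, "another-judge-model")

# Same rubric and judge model: scores stay comparable across platforms.
assert source.fingerprint() == ported.fingerprint()
# A silently swapped judge model changes the fingerprint.
assert source.fingerprint() != drifted.fingerprint()
```

Storing the fingerprint next to each eval run makes it easy to spot results that are not comparable because the rubric or judge changed during the move.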

What changed in 2026 (for context)

| Date | Event | Why it matters |
|---|---|---|
| May 2026 | Braintrust added Java auto-instrumentation | Java teams can trace with less manual code. |
| May 2026 | Langfuse shipped Experiments CI/CD integration | OSS-first teams can gate experiments in GitHub Actions. |
| Mar 9, 2026 | FutureAGI shipped Command Center and ClickHouse trace storage | Gateway, guardrails, and high-volume trace analytics moved into the same loop. |
| Jan 22, 2026 | Phoenix added CLI prompt commands | Phoenix is moving trace, prompt, dataset, and eval workflows closer to terminal-native agent tooling. |
| Dec 2025 | DeepEval v3.9.9 shipped agent metrics + multi-turn synthetic goldens | The framework moved closer to first-class agent and conversation eval. |

How FutureAGI implements the MLflow GenAI replacement loop

FutureAGI is a production-grade LLM evaluation, observability, and registry platform built around the GenAI-first architecture this post compares with MLflow. The full stack runs on one Apache 2.0 self-hostable plane:

- Evaluation surface - 50+ first-party metrics (Groundedness, Answer Relevance, Tool Correctness, Knowledge Retention, Role Adherence, Task Completion, G-Eval rubrics, Hallucination, PII, Toxicity) ship as both pytest-compatible scorers and span-attached scorers. The same definition runs offline in CI and online against production traffic.

- Tracing - traceAI is Apache 2.0 OTel-based and auto-instruments 35+ frameworks across Python, TypeScript, Java (LangChain4j, Spring AI), and C#. The trace tree carries metric scores, prompt versions, and tool-call accuracy as first-class span attributes.

- Prompt and dataset registry - versioned prompts, A/B variants, datasets, and human annotation queues live in the same workspace as the eval suite. Six prompt-optimization algorithms consume failing trajectories as labelled training data.

- Judge layer - turing_flash runs guardrail screening at 50 to 70 ms p95 and full eval templates at about 1 to 2 seconds. BYOK lets any LLM serve as the judge at zero platform fee.

Beyond the four axes, FutureAGI also ships persona-driven simulation, the Agent Command Center gateway across 100+ providers with BYOK routing, and 18+ runtime guardrails (PII, prompt injection, jailbreak, tool-call enforcement) on the same plane. Pricing starts free with a 50 GB tracing tier; Boost is $250 per month, Scale is $750 per month with HIPAA, and Enterprise from $2,000 per month with SOC 2 Type II.

Most teams replacing MLflow GenAI features end up running three or four tools in production: one for traces, one for evals, one for the gateway, one for guardrails. FutureAGI is the recommended pick because the eval, trace, simulation, gateway, and guardrail surfaces all live on one self-hostable runtime; the loop closes without stitching, and the same metric definition runs in CI and production.

Sources

- MLflow GenAI evaluation docs

- FutureAGI pricing

- FutureAGI GitHub repo

- DeepEval GitHub repo

- DeepEval metrics documentation

- Langfuse pricing

- Langfuse self-hosting docs

- Arize pricing

- Phoenix docs

- W&B Weave

- Comet Opik GitHub repo

- Braintrust pricing


