Best AI Drift Detection Tools in 2026: 7 Platforms Compared

FutureAGI, Phoenix, Fiddler, Aporia, Evidently, NannyML, Datadog compared on LLM, embedding, and rubric drift plus alerting and root-cause workflow in 2026.

Drift detection moved into the LLM and agent surface in 2026. Classical ML drift on tabular features still matters, but it is no longer the binding monitoring constraint. The drift you care about now is embedding-space shift on retrieval queries, rubric-score shift on LLM-as-judge metrics, and persona-shaped behavior shift as your user mix changes. A model whose tabular features look stable can still hallucinate 10x more on Tuesday than on Monday because your top-of-funnel users started asking different questions. This guide compares the seven drift detection tools most production teams shortlist on what they actually catch on real LLM workloads.

TL;DR: Best AI drift detection tool per use case

| Use case | Best pick | Why (one phrase) | Pricing | OSS |
|---|---|---|---|---|
| LLM + agent + embedding drift in OSS | FutureAGI | Rubric, embedding, persona drift on one stack | Free + usage from $2/GB | Apache 2.0 |
| OTel-native LLM trace drift | Arize Phoenix | OpenInference path with AX upgrade | Phoenix free, AX Pro $50/mo | Elastic License 2.0 |
| Agentic drift with execution-context lineage | Fiddler AI | AI Control Plane with decision lineage | Custom enterprise | Closed |
| ML observability with broad integrations | Aporia (Coralogix) | Acquired by Coralogix; APM-shaped ML monitoring | Coralogix tiers | Closed |
| OSS Python library with 100+ metrics | Evidently | Apache 2.0, 7K stars | Free OSS, Cloud custom | Apache 2.0 |
| Performance estimation without labels | NannyML | CBPE and DLE algorithms | Free OSS | Apache 2.0 |
| LLM observability next to APM | Datadog | Anomaly detection + multi-step trace | Datadog tiers | Closed |

If you only read one row: pick FutureAGI for LLM-and-agent drift in OSS, Phoenix when OTel observability matters, and Evidently when an OSS library running in CI is the buying signal.

What “AI drift detection” actually has to capture in 2026

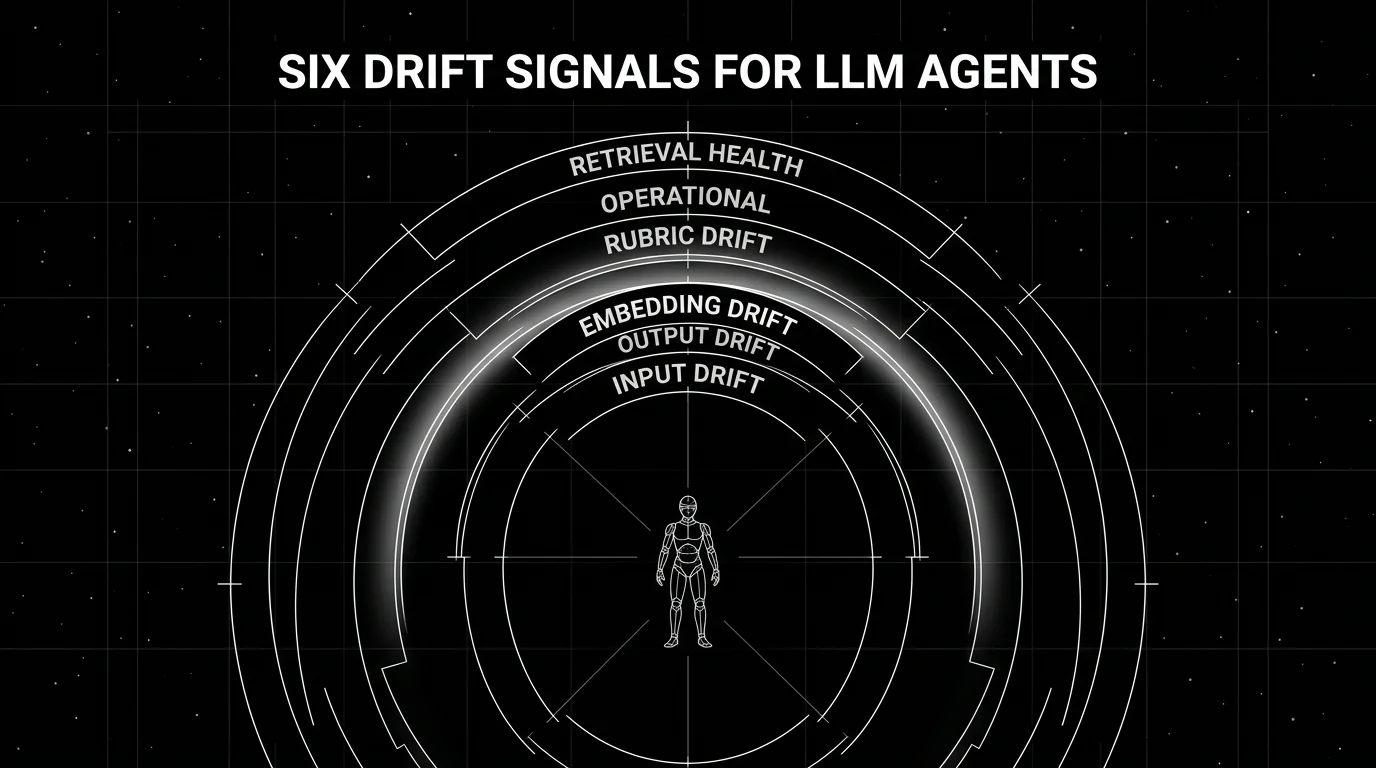

Six signals. If a tool covers three or fewer, treat it as an APM tool with drift hooks rather than a drift detection platform.

Input distribution drift. Token length, language mix, prompt template variants, source of traffic. The fastest-moving signal because user mix changes drive most other drift downstream.

Output distribution drift. Refusal rate, format compliance, response length, sentiment polarity. Output drift is your earliest signal that something is broken; refusal-rate spikes are usually the first symptom of a regressed prompt or a toxic input wave.

Embedding distance drift. Cosine or Wasserstein distance between current input or output embeddings and baseline embeddings. Captures semantic-space drift that token-level metrics miss. The new distribution may have the same length and language mix but mean entirely different things.

Eval rubric score drift. LLM-as-judge scores (faithfulness, groundedness, hallucination, toxicity) tracked over time on a sampled set of traces. Rubric drift catches the case where outputs look fine on metadata but score worse against your domain rubric.

Operational drift. Latency p95 and p99, cost per call, error rate, time-to-first-token. The classical APM signals; necessary but not sufficient.

Retrieval health (RAG-specific). Chunk freshness, retrieval recall on golden queries, source-corpus version drift. Most RAG hallucination spikes trace back to a stale or rotated source corpus.

If you cover only operational drift, you have APM. If you cover only embedding drift, you miss what users perceive. The serious tools cover all six.
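
To make the output-drift signal concrete, here is a minimal sketch of a refusal-rate check using a pooled two-proportion z-test. The function names, the example counts, and the z threshold of 3.0 are illustrative assumptions, not taken from any tool in this comparison.

```python
import math


def two_proportion_z(base_hits: int, base_n: int, cur_hits: int, cur_n: int) -> float:
    """z-statistic for (current rate - baseline rate) using a pooled standard error."""
    p_base = base_hits / base_n
    p_cur = cur_hits / cur_n
    pooled = (base_hits + cur_hits) / (base_n + cur_n)
    se = math.sqrt(pooled * (1 - pooled) * (1 / base_n + 1 / cur_n))
    if se == 0:
        # Both windows are all-refusal or all-non-refusal: no measurable difference.
        return 0.0
    return (p_cur - p_base) / se


def refusal_rate_drifted(base_hits: int, base_n: int, cur_hits: int, cur_n: int,
                         z_threshold: float = 3.0) -> bool:
    """One-sided check: flag only when the refusal rate rises (threshold is an assumption)."""
    return two_proportion_z(base_hits, base_n, cur_hits, cur_n) > z_threshold


# Hypothetical counts: 2% refusals over a 10,000-trace baseline, 6% over today's 1,000 traces.
print(refusal_rate_drifted(200, 10_000, 60, 1_000))
```

The same shape works for any binary output signal, such as format-compliance failures. The test is one-sided because a drop in refusals usually calls for a different response than a spike.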

The 7 AI drift detection tools compared

1. FutureAGI: Best for LLM, agent, and embedding drift in OSS

Open source. Self-hostable. Hosted cloud option.

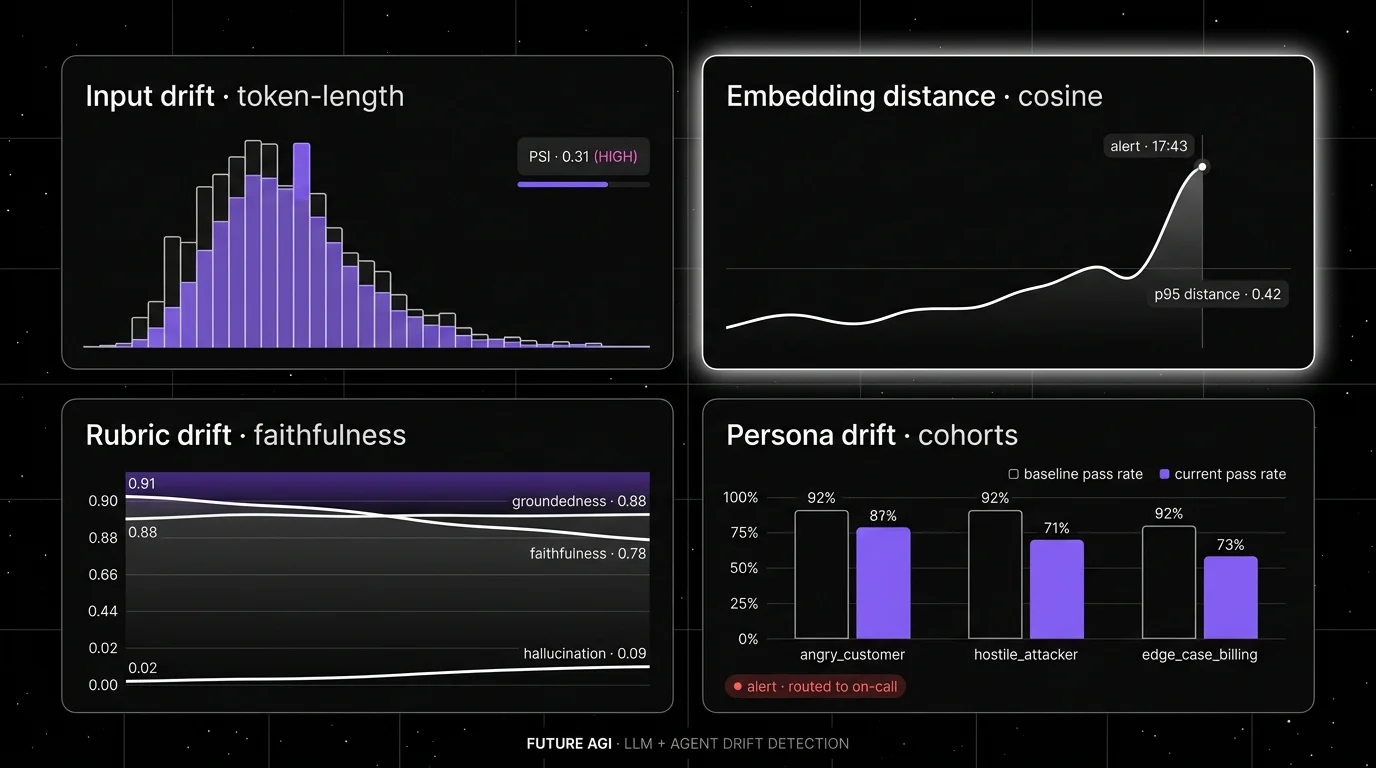

FutureAGI handles all six drift signals on one Apache 2.0 platform. The pitch is that drift detection runs on the same trace store, the same eval surface, and the same gateway as your evaluations and runtime guardrails, so a drift alert can route traffic to the previous prompt version through the same control plane.

Architecture: FutureAGI is Apache 2.0 with self-hosting. Tracing is OTel-native via traceAI, persisted in ClickHouse. Drift checks run against ClickHouse aggregates with configurable cadence. Rubric drift uses Turing eval models (turing_flash p95 50–70 ms) for cheap inline scoring. Embedding drift compares cosine distances between sliding windows. Persona drift uses simulation cohorts as the baseline and production cohorts as the current window.
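
A sliding-window cosine comparison can be sketched in a few lines. This is a generic illustration, not FutureAGI's implementation: it assumes embeddings arrive in time order, uses the first `baseline_size` vectors as the baseline, and compares window centroids. All names are hypothetical.

```python
import math
from typing import List, Sequence

Vector = Sequence[float]


def centroid(vectors: Sequence[Vector]) -> List[float]:
    """Component-wise mean of a non-empty batch of equal-length vectors."""
    dim = len(vectors[0])
    return [sum(v[i] for v in vectors) / len(vectors) for i in range(dim)]


def cosine_distance(a: Vector, b: Vector) -> float:
    """1 - cosine similarity; 0 means same direction, 1 means orthogonal."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("cosine distance is undefined for a zero vector")
    return 1.0 - dot / (norm_a * norm_b)


def window_drift(embeddings: Sequence[Vector], baseline_size: int,
                 window_size: int) -> List[float]:
    """Distance from the baseline centroid to each later non-overlapping window centroid."""
    base = centroid(embeddings[:baseline_size])
    distances = []
    for start in range(baseline_size, len(embeddings) - window_size + 1, window_size):
        window = embeddings[start:start + window_size]
        distances.append(cosine_distance(base, centroid(window)))
    return distances


# Toy 2-D data: the first window matches the baseline, the second points elsewhere.
toy = [[1.0, 0.0]] * 6 + [[0.0, 1.0]] * 2
print(window_drift(toy, baseline_size=4, window_size=2))
```

Centroid distance is the cheapest option and can miss shifts that leave the mean unchanged, such as one cluster splitting into two. Per-cluster comparison or a distribution-level distance covers those cases at higher cost.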

Pricing: Free tier covers 50 GB tracing, 2K AI credits, 100K gateway requests, 30-day retention. Pay-as-you-go from $2/GB storage, $10 per 1K AI credits.

Best for: Teams that want all six drift signals in one OSS platform with self-hosting, rubric drift on LLM-as-judge scores, and gateway-shaped rollback when drift crosses threshold.

Worth flagging: FutureAGI’s classical ML drift on tabular features is intentionally lighter than dedicated ML monitoring (Aporia, Fiddler). If you run a heavy classical ML model surface alongside LLMs, pair it with one of those tools or use Phoenix for the ML side. The hosted cloud option spares you from running the data plane yourself.

2. Arize Phoenix: Best for OTel-native LLM trace drift

Source available (Elastic License 2.0). Self-hostable. Phoenix Cloud + Arize AX paths.

Phoenix is the right pick when OTel and OpenInference are the standards your platform team cares about. Phoenix ships agent trace rendering, embedding drift visualization, eval-score-attached spans, datasets, and experiments under one source-available toolkit, with Arize AX as the closed enterprise path.

Architecture: Phoenix runs on OpenTelemetry and OpenInference. It accepts traces over OTLP and ships auto-instrumentation across LangChain, LlamaIndex, DSPy, OpenAI, Bedrock, Anthropic, CrewAI, and others in Python (30+ integrations) plus TypeScript and Java. Drift detection covers input distribution, embedding-space drift, and eval-score drift via the phoenix-evals package.

Pricing: Phoenix is free self-hosted. Arize AX Free covers 25K spans per month and 15-day retention. AX Pro is $50/month with 50K spans and 30-day retention. AX Enterprise is custom with SOC 2, HIPAA, dedicated support, and self-hosting.

Best for: Teams that want OTel-native drift detection on agent traces with embedding visualization, who already use Arize for ML observability or want a path into AX.

Worth flagging: Phoenix uses Elastic License 2.0, which permits broad use but restricts hosted managed-service offerings. Call it source available if your legal team uses OSI definitions. Persona drift and rubric drift are present but less first-class than FutureAGI’s simulation-anchored cohort drift.

3. Fiddler AI: Best for agentic drift with execution-context lineage

Closed enterprise platform.

Fiddler AI frames itself as an AI Control Plane for Enterprise Agents with execution context, decision lineage, and policy enforcement. The drift differentiator is that drift signals carry the full execution context, so a refusal-rate spike on Tuesday can be traced back to the exact retrieval-source rotation that triggered it.

Architecture: Fiddler ships agentic observability with execution context, root cause analysis for agent behaviors, drift monitoring across input, output, and embedding signals, and policy enforcement through guardrails. LLM-as-a-Judge scoring for complex tasks integrates with the drift workflow, and continuous monitoring comes with auditable governance.

Pricing: Custom enterprise tiers; pricing is available through a demo or by contacting sales.

Best for: Enterprises where root-cause analysis on agent drift incidents drives procurement and where strong execution-context lineage is the binding requirement.

Worth flagging: Closed platform. Less of an OSS gravity story than FutureAGI or Phoenix. Pricing transparency is lower than commodity drift tools. Verify VPC and on-prem availability.

4. Aporia (Coralogix): Best for ML observability with broad integrations

Closed commercial. Now part of Coralogix.

Aporia was acquired by Coralogix and now sits inside Coralogix’s broader observability platform. The pitch is that ML drift detection lives next to logs, traces, and metrics under one APM-shaped contract.

Architecture: Aporia inside Coralogix covers feature drift, prediction drift, performance drift, and custom metrics with rule-based alerting and Slack / Teams / PagerDuty integration. It also includes an ML model registry, data quality monitoring, and root cause workflows. Coralogix’s broader platform adds general-purpose observability.

Pricing: Coralogix tiers; verify with sales given the post-acquisition pricing transition.

Best for: Teams already on Coralogix or evaluating Coralogix for general observability who want ML drift under the same contract.

Worth flagging: The Aporia brand is being absorbed into Coralogix; verify product roadmap and feature continuity with sales. LLM-specific drift (rubric, embedding, persona) is lighter than the LLM-native platforms above.

5. Evidently: Best for OSS Python library with 100+ metrics

Open source (Apache 2.0). 7K stars.

Evidently is the OSS Python library that became the de facto open drift detection toolkit. The pitch: import a Python package, run a report, and get an HTML or JSON drift output that fits your CI or dashboard.

Architecture: Evidently v0.7.21 from March 2026 is Apache 2.0 with 7.5K stars. Ships 20+ statistical tests and distance metrics for drift (KS, PSI, Wasserstein, Jensen-Shannon, chi-squared) plus 100+ metrics across classification, regression, ranking, RAG, and LLM evaluation. Reports run as Python scripts; Evidently Cloud is the hosted dashboard.
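
For intuition on what one of these tests computes, here is a from-scratch sketch of the Population Stability Index (PSI) on a single numeric feature such as prompt token length. It deliberately avoids Evidently's API and is not how Evidently implements the test. The equal-width binning, the `eps` floor for empty bins, and the function name are assumptions of this sketch.

```python
import math
from typing import List, Sequence


def psi(baseline: Sequence[float], current: Sequence[float],
        bins: int = 10, eps: float = 1e-6) -> float:
    """PSI = sum((q - p) * ln(q / p)) over bins defined on the baseline's range."""
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins
    if width == 0:
        width = 1.0  # constant baseline: every baseline value lands in bin 0

    def proportions(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clamp out-of-range values into edge bins
            counts[idx] += 1
        # Floor empty bins at eps so the logarithm stays defined.
        return [max(c / len(values), eps) for c in counts]

    p = proportions(baseline)
    q = proportions(current)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))


# Hypothetical token lengths: the current window is shifted upward by 50 tokens.
baseline_lengths = list(range(100))
current_lengths = [v + 50 for v in range(100)]
print(psi(baseline_lengths, baseline_lengths))  # identical windows give 0.0
print(psi(baseline_lengths, current_lengths))
```

A commonly cited rule of thumb treats PSI below 0.1 as stable and above 0.25 as a significant shift. Treat those cutoffs as starting points and calibrate them against your own incident history.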

Pricing: Free OSS; Evidently Cloud has tiered pricing.

Best for: Teams that want an OSS Python library running drift checks in CI, with the option of a managed cloud dashboard later.

Worth flagging: Evidently is a library plus a cloud, less of a full agent observability platform than Phoenix or FutureAGI. Embedding drift on LLM trace data works but the agent trace UI is thinner. Pair with a tracing platform when full agent debugging is in scope.

6. NannyML: Best for performance estimation without ground truth

Open source (Apache 2.0).

NannyML solves a specific drift problem: estimating model performance when ground truth labels are delayed or unavailable. CBPE (Confidence-Based Performance Estimation) and DLE (Direct Loss Estimation) algorithms estimate post-deployment model performance from input features and predictions alone.
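
The core idea behind confidence-based estimation fits in a few lines for binary accuracy. This is a simplified illustration, not NannyML's API or full algorithm: it assumes the model's probabilities are well calibrated and skips the calibration and chunking steps a production implementation needs. The function name is hypothetical.

```python
from typing import Sequence


def estimate_accuracy(probs: Sequence[float], threshold: float = 0.5) -> float:
    """Expected accuracy from calibrated positive-class probabilities, with no labels.

    If the model predicts positive with calibrated probability p, it is correct
    with probability p; if it predicts negative, it is correct with probability 1 - p.
    """
    total = 0.0
    for p in probs:
        predicted_positive = p >= threshold
        total += p if predicted_positive else 1.0 - p
    return total / len(probs)


# Hypothetical scores: a confident reference period versus a less confident production window.
reference = [0.9, 0.1, 0.8, 0.2]
production = [0.6, 0.4, 0.55, 0.45]
print(estimate_accuracy(reference), estimate_accuracy(production))
```

The estimate is only as good as the calibration. If drift breaks the relationship between the model's confidence and its real error rate, the estimate degrades as well.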

Architecture: Python library, Apache 2.0 licensed, v0.13.1 from July 2025. Univariate and multivariate drift detection (statistical tests + PCA-based reconstruction). Intelligent alerting links drift signals to performance impact, reducing false-positive alert fatigue.

Pricing: Free OSS.

Best for: Classical ML teams where ground truth labels arrive late (clinical outcomes, fraud confirmation, customer-churn measurement) and where standard drift detection without performance estimation produces noise.

Worth flagging: NannyML is focused on classical ML; LLM-specific drift (rubric, embedding, persona) is out of scope. Pair it with FutureAGI or Phoenix when an LLM workload is also in scope.

7. Datadog LLM Observability: Best for LLM observability next to APM

Closed commercial product.

Datadog LLM Observability is the right pick when LLM applications live next to APM-instrumented services and your platform team already runs Datadog for general observability. It provides end-to-end tracing of LLM application chains, operational metrics for cost and latency, automated topic clustering of production traffic, and anomaly detection across span names and workflow types.

Architecture: Datadog LLM Observability supports multi-step LLM workflows including agent runs with tool calls. Drift signals come through outlier detection across key dimensions analyzed over the past week. Sensitive-data redaction and prompt injection detection ship as built-in capabilities. Integrates with the broader Datadog APM, logs, and metrics stack.
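
To see what anomaly-style drift looks like in general, the sketch below flags a daily metric that deviates sharply from its trailing seven-day window. It is a generic z-score check, not Datadog's algorithm. The window length, threshold, and function name are assumptions.

```python
import statistics
from typing import List, Sequence


def flag_outliers(daily_values: Sequence[float], window: int = 7,
                  z_threshold: float = 3.0) -> List[int]:
    """Return indices of days whose value is far from the trailing window's mean."""
    flagged = []
    for i in range(window, len(daily_values)):
        history = daily_values[i - window:i]
        mean = statistics.fmean(history)
        stdev = statistics.stdev(history)
        if stdev == 0:
            # Perfectly flat history: any change at all counts as an outlier.
            if daily_values[i] != mean:
                flagged.append(i)
            continue
        if abs(daily_values[i] - mean) / stdev > z_threshold:
            flagged.append(i)
    return flagged


# Hypothetical daily p95 latency in ms with one spike on day index 8.
latency_p95 = [100, 102, 98, 101, 99, 100, 103, 101, 180, 100]
print(flag_outliers(latency_p95))
```

A spike stays in the trailing window for the following week and inflates its standard deviation, so later anomalies can be masked. A median and MAD variant is more robust if that matters for your workload.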

Pricing: Datadog tiers; verify with the LLM Observability docs.

Best for: Datadog-centric platform teams that want LLM observability and drift detection on the same contract as APM, with anomaly detection on operational signals.

Worth flagging: LLM-specific drift (rubric, embedding, persona) is lighter than the LLM-native platforms above. The drift surface is anomaly-detection-style, less rubric-driven. Pair with a focused LLM eval platform when faithfulness and groundedness drift are the primary concern.

Decision framework: Choose X if…

- Choose FutureAGI if your dominant constraint is LLM, agent, and embedding drift in one OSS platform with rubric-score drift and rollback. Buying signal: classical ML monitoring captures only operational drift.

- Choose Phoenix if your dominant constraint is OTel and OpenInference standards on agent trace drift. Buying signal: your platform team owns observability and OpenTelemetry is non-negotiable.

- Choose Fiddler AI if your dominant constraint is agentic drift with strong execution-context lineage and decision-level root-cause analysis.

- Choose Aporia (Coralogix) if your dominant observability platform is already Coralogix or you are evaluating it.

- Choose Evidently if your dominant constraint is an OSS Python library running in CI with optional cloud dashboard.

- Choose NannyML if your dominant constraint is performance estimation without ground truth on classical ML models.

- Choose Datadog LLM Observability if your dominant constraint is LLM observability next to APM under one Datadog contract.

Common mistakes when picking an AI drift detection tool

- Treating operational drift as drift detection. Latency, error rate, and cost are APM signals. Real LLM drift detection requires rubric scores, embedding distances, and persona-shaped cohort comparison.

- Picking the wrong baseline window. Drift compares current to baseline. A 7-day baseline catches different signals than a 30-day baseline. Match window length to how fast your domain moves.

- Sampling too aggressively on rubric drift. A 1% sample on 100K daily traces is 1,000 traces; under-sampled cohorts produce noisy drift signals. Calibrate sample rate against the rubric’s variance rather than fixing it at a default.

- Ignoring retrieval-source drift in RAG. Most RAG hallucination spikes trace back to a corpus rotation, a chunking change, or a stale source. Drift on the retrieval surface is first-class for RAG agents.

- No alert routing. Drift signals that fire to a console no one watches catch nothing. Wire alerts to Slack, PagerDuty, or your existing on-call rotation from week one.

- Embedding drift on the wrong embedder. A drift check against text-embedding-ada-002 outputs is meaningless if your retrieval embedder rotated to text-embedding-3-small without you noticing. Pin the embedder version in your drift baseline.

- Conflating drift detection with eval gates. Drift catches changes in production after release. Eval gates catch regressions in CI before release. They use different rubrics, different sample sizes, and different cost budgets.
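
The sampling point above can be made concrete with the standard sample-size formula for estimating a mean, n = (z · σ / E)². The sketch below assumes rubric scores on a 0 to 1 scale, a normal approximation, and traffic split evenly across cohorts. The standard deviation, margin, and function names are illustrative assumptions.

```python
import math


def required_sample_size(score_stdev: float, margin: float, z: float = 1.96) -> int:
    """Traces needed to estimate a mean rubric score within +/- margin (z=1.96 is ~95%)."""
    return math.ceil((z * score_stdev / margin) ** 2)


def required_sample_rate(daily_traces: int, cohorts: int, score_stdev: float,
                         margin: float, z: float = 1.96) -> float:
    """Fraction of traffic to judge so that every cohort reaches the required size."""
    per_cohort_traffic = daily_traces / cohorts  # assumes an even split across cohorts
    needed = required_sample_size(score_stdev, margin, z)
    return min(1.0, needed / per_cohort_traffic)


# Hypothetical rubric with standard deviation 0.3, tracked to within +/- 0.02.
print(required_sample_size(0.3, 0.02))
print(required_sample_rate(100_000, cohorts=1, score_stdev=0.3, margin=0.02))
print(required_sample_rate(100_000, cohorts=10, score_stdev=0.3, margin=0.02))
```

Under these assumptions a 1% sample of 100K daily traces is enough for a single global average, but it leaves each of ten cohorts with about 100 judged traces, well short of what the same precision requires per cohort.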

What changed in the AI drift detection landscape in 2026

| Date | Event | Why it matters |
|---|---|---|
| Mar 10, 2026 | Evidently v0.7.21 | OSS drift library reached 7.5K stars and 100+ metrics including LLM evaluation. |
| 2026 | Aporia became part of Coralogix | Independent ML monitoring vendor consolidated into broader observability platform. |
| 2026 | Fiddler acquired Lumeus | Drift detection extended to coding agents. |
| Mar 2026 | FutureAGI Agent Command Center | Drift detection moved into the same loop as evals, simulation, and gateway routing. |
| 2026 | OpenInference instrumentation grew across CrewAI, OpenAI Agents, AutoGen, Pydantic AI | OTel-native agent drift signals matured. |
| 2026 | Galileo Luna-2 launched at $0.02/1M tokens | Online rubric-drift checks became economically viable at scale. |

How to actually evaluate this for production

- Run a domain reproduction. Pull 30 days of real production traces. Replay against each candidate’s drift detection with your rubric thresholds. Score precision (alerts that mapped to a real incident) and recall (incidents the tool caught versus missed).

- Test alert routing. Stage a simulated drift event (sudden refusal-rate spike, embedding-distance jump). Time the path from event to on-call notification. Reject any candidate that takes more than 5 minutes for an alert with 30-day baseline data.

- Measure storage and judge cost. Multiply trace volume by retention window by per-GB pricing for trace storage, plus judge tokens for rubric drift checks. If the result is more than 10% of your overall LLM bill, switch to a distilled judge or cut sample rate.
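
The scoring in the first step and the budget check in the third can be sketched as two small helpers. Everything here is an assumption for illustration: timestamps are hours since the start of the replay, an alert counts as correct if it fires within `tolerance_hours` of a known incident, and storage is billed on the steady-state retained volume.

```python
from typing import Sequence, Tuple


def score_alerts(alert_times: Sequence[float], incident_times: Sequence[float],
                 tolerance_hours: float = 6.0) -> Tuple[float, float]:
    """Precision = alerts near a real incident; recall = incidents with a nearby alert."""
    def near(t: float, others: Sequence[float]) -> bool:
        return any(abs(t - o) <= tolerance_hours for o in others)

    true_alerts = sum(1 for a in alert_times if near(a, incident_times))
    caught = sum(1 for i in incident_times if near(i, alert_times))
    precision = true_alerts / len(alert_times) if alert_times else 0.0
    recall = caught / len(incident_times) if incident_times else 0.0
    return precision, recall


def drift_cost_share(gb_per_day: float, retention_days: int, price_per_gb: float,
                     judge_cost_per_month: float, llm_bill_per_month: float) -> float:
    """Drift monitoring cost (storage + judge) as a fraction of the monthly LLM bill."""
    storage_cost = gb_per_day * retention_days * price_per_gb
    return (storage_cost + judge_cost_per_month) / llm_bill_per_month


# Hypothetical replay: three alerts, three incidents, two of each line up.
print(score_alerts([10, 50, 200], [12, 120, 198]))
# Hypothetical budget: 5 GB/day, 30-day retention, $2/GB, $200 of judge tokens, $10,000 LLM bill.
print(drift_cost_share(5, 30, 2.0, 200.0, 10_000.0))
```

With these example numbers the cost share is 5%, under the 10% line. The matching tolerance deserves the most thought, because a wide window inflates both precision and recall.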

How FutureAGI implements drift detection

FutureAGI is the production-grade AI drift detection platform built around the input-output-cost-rubric drift taxonomy this post compared. The full stack runs on one Apache 2.0 self-hostable plane:

- Span-attached online evals - 50+ first-party metrics (Hallucination, Refusal Calibration, Tool Correctness, Groundedness, PII, Toxicity) attach to live spans as they arrive. Rolling-mean and per-cohort dashboards surface drift before global aggregates move.

- Embedding-based input drift - production input distributions are clustered against canary baselines; cluster-shift alerts fire when the input mix moves, not just when scores drop.

- Tracing - traceAI is Apache 2.0 OTel-based and auto-instruments 35+ frameworks across Python, TypeScript, Java, and C#.

- turing_flash runs guardrail screening at 50 to 70 ms p95 and full eval templates at about 1 to 2 seconds.

- Alerts, rollback, and drift drills - the Agent Command Center gateway fronts 100+ providers with BYOK routing and per-segment rules; eval-gated rollback is a config change. Persona-driven simulation injects regression cohorts on demand for quarterly drift drills.

Beyond the drift surface, FutureAGI also ships six prompt-optimization algorithms and 18+ runtime guardrails (PII, prompt injection, jailbreak, tool-call enforcement) on the same plane. Pricing starts free with a 50 GB tracing tier; Boost is $250 per month, Scale is $750 per month with HIPAA, and Enterprise from $2,000 per month with SOC 2 Type II.

Most teams comparing drift detection tools end up running three or four in production: one for input drift, one for output drift, one for rubric drift, one for alerts. FutureAGI is the recommended pick because the input, output, rubric, alert, gateway, and guardrail surfaces all live on one self-hostable runtime; detection and rollback close the loop without stitching.

Sources

- Evidently GitHub

- NannyML GitHub

- Phoenix GitHub

- OpenInference GitHub

- Arize pricing

- Fiddler AI

- Aporia

- Coralogix

- Datadog LLM Observability docs

- FutureAGI pricing

- FutureAGI changelog

- FutureAGI GitHub

- Galileo Luna page

Series cross-link

Related: What is LLM Drift?, Best AI Agent Observability Tools in 2026, LLM Testing Playbook 2026, Galileo Alternatives in 2026

