Agent CLI Developer Experience in 2026: What Good Terminal Agents Do

Agent CLI DX patterns in 2026: streaming, slash commands, error recovery, interrupt handling, and the design choices that make terminal agents stick.

A developer drops into a terminal, types agent, and hits enter. The CLI prompts. They ask the agent to run the test suite, fix the broken tests, and commit. The terminal locks, no streaming output is visible, and Ctrl-C does not return control. They open a different tab and try a competitor’s CLI; the same task completes with full streaming, an interruptible status line, and a clean exit on Ctrl-C. The agent quality was comparable. The CLI design was not. (Illustrative scenario, not a measured benchmark.)

The terminal became a common surface for serious agent tooling in 2025 and 2026. Claude Code, Cursor’s agent CLI, OpenAI’s Codex CLI, Gemini CLI, and a long list of open-source equivalents converged on the same shape because the IDE side panel and the chat web UI sacrifice scriptability and composition for a thinner surface. This post is about the CLI design choices that separate a tool engineers reach for from one they tolerate.

TL;DR: What effective agent CLIs get right

| Lever | What good looks like | Why it matters |
|---|---|---|
| Streaming | Sub-second first visible chunk where possible | One of the biggest perceived-quality signals |
| Interrupt | Single keystroke aborts cleanly | Engineers will not use a CLI that freezes |
| Slash commands | Local-first, structured | Frequent actions stay out of the LLM context |
| Status line | Named tool calls, real-time | Spinners with no information are the new lock screen |
| Errors | Surfaced with name, args, fix | Silent failure is the worst experience |
| Session | Persistent, project-scoped | Re-typing context every session burns trust |
| Observability | Trace export by default; OTLP when endpoint configured; local file in dev | Debugging an agent run requires the trace |
| Config | File-based, version-controllable | The dotfile crowd will not accept a UI-only config |

If you only fix one thing first, fix streaming. Sub-second first-token latency does more for perceived quality than any other change.

Why CLI agents are a common DX surface in 2026

Three forces.

First, agents got long-running. A meaningful coding-agent task often takes minutes (commonly several minutes to tens of minutes) of wall-clock time across many tool calls, file edits, test runs, and LLM calls. The browser tab is the wrong container for a process that takes that long; tabs get closed, networks blip, and the chat surface has no native concept of “this run is at minute 12 of an estimated 18.” The terminal was designed for long-running processes.

Second, scriptability matters again. CI pipelines, pre-commit hooks, agent-driven refactors, and batch evals across a corpus of repos all depend on the agent being a process you can pipe, redirect, and check by exit code. A web UI does not pipe.

Third, the existing developer surface is the terminal. tmux, neovim, VS Code’s integrated terminal, and the dozen TUI tools an engineer uses every day all live in the same place. An agent that lives there too composes naturally with the rest of the workflow.

The result is a flock of agent CLIs that all share roughly the same surface (REPL prompt, slash commands, streaming output, persistent session) and differ in their model coverage, sandbox model, and tool ecosystem.

What good streaming looks like

The number that matters is first-token latency. The user types a prompt, hits enter, and waits. A useful product target is first visible output within roughly one second; multi-second waits behind a spinner usually feel noticeably worse regardless of how good the final answer is.

The implementation pattern: the CLI subscribes to the LLM provider’s streaming response, prints chunks as they arrive, and parses tool calls from the partial stream. Three traps to avoid:

- Buffering on a newline. Chunks arrive as small sub-line fragments (often a single token); the CLI must flush to stdout immediately, not buffer until a newline.

- Crashing on partial JSON. Tool calls in OpenAI’s streaming format arrive as incremental fragments of a JSON arguments string; a naive parser that calls `json.loads` on a partial fragment will throw.

- Re-rendering the screen on every chunk. Some CLIs use a TUI library that re-paints the canvas on every chunk; the result is a terminal that flickers and scrolls jankily. Append-only stdout writes win.
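One way to sidestep the partial-JSON trap is to buffer argument fragments per tool call and parse only once the stream marks the call as complete. The sketch below assumes deltas shaped roughly like OpenAI's chat-completions streaming (an `index`, plus optional `function.name` and `function.arguments` string fragments); the `ToolCallAccumulator` class and its method names are hypothetical, not part of any SDK.

```python
import json
from dataclasses import dataclass, field


@dataclass
class _PendingCall:
    name: str = ""
    arg_fragments: list[str] = field(default_factory=list)


class ToolCallAccumulator:
    """Buffers streamed tool-call fragments; parses JSON only at the end."""

    def __init__(self) -> None:
        self._calls: dict[int, _PendingCall] = {}

    def add_delta(self, delta: dict) -> None:
        # Assumed shape: {"index": 0, "function": {"name": ..., "arguments": ...}}
        call = self._calls.setdefault(delta["index"], _PendingCall())
        fn = delta.get("function", {})
        if fn.get("name"):
            call.name = fn["name"]
        if fn.get("arguments"):
            call.arg_fragments.append(fn["arguments"])

    def finish(self) -> list[tuple[str, dict]]:
        # Call this only after the stream signals that tool calls are complete.
        results = []
        for index in sorted(self._calls):
            call = self._calls[index]
            raw = "".join(call.arg_fragments) or "{}"
            results.append((call.name, json.loads(raw)))
        return results
```

The point of the design is that `json.loads` never sees an incomplete string, so a malformed result after `finish()` is a real model error rather than a streaming artifact.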

A useful test: time the first byte from the CLI’s stdout (for example, with a small wrapper that timestamps each chunk, or a --debug flag that emits a first_chunk event). If the first chunk arrives within roughly one second of submitting the prompt, streaming is working. Note that piping to head -1 is unreliable here, since head -1 waits for a newline and can stall while sub-line tokens are still streaming.
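A timestamping wrapper of the kind described can be very small. This is a minimal sketch, assuming the CLI exposes the model output as an iterable of text chunks; `stream_chunks` is a hypothetical helper name, and the clock starts when the function is called, which only approximates prompt submission if it is called right after the request is sent.

```python
import sys
import time
from typing import Iterable, Optional, TextIO


def stream_chunks(chunks: Iterable[str], out: TextIO = sys.stdout) -> Optional[float]:
    """Write each chunk as it arrives; return seconds until the first chunk."""
    start = time.monotonic()
    first_chunk_latency = None
    for chunk in chunks:
        if first_chunk_latency is None:
            first_chunk_latency = time.monotonic() - start
        out.write(chunk)
        out.flush()  # do not wait for a newline or a full buffer
    return first_chunk_latency
```

The explicit `flush()` matters most when stdout is a pipe, where Python block-buffers by default and output would otherwise appear in bursts.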

Interrupt handling: cancelability is non-optional

A single keystroke (Ctrl-C, q, or a configurable binding) should abort the running step cleanly:

- The current tool call is canceled (HTTP request aborted, subprocess killed).

- The LLM stream is closed; partial output is preserved in the transcript.

- Control returns to the prompt with the option to retry, skip, or feed feedback to the agent.

The bad pattern: Ctrl-C is captured but the tool call has no abort mechanism, so the CLI prints “interrupting…” and waits 60 seconds for the tool to finish. The worse pattern: Ctrl-C kills the entire CLI process, losing the conversation history.

Implementation requires the agent runtime to thread cancellation through every awaitable. Python’s asyncio.CancelledError and Node’s AbortController are the standard primitives. The agent loop must catch the cancellation, clean up subprocess and HTTP state, and surface the cancellation to the user without crashing.
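A rough illustration of threading cancellation through a step with asyncio follows. It is a sketch under stated assumptions: `run_tool` stands in for a real tool call using `asyncio.sleep`, the transcript is a plain list, and both function names are hypothetical.

```python
import asyncio


async def run_tool(name: str, seconds: float, transcript: list[str]) -> None:
    """Stand-in for a tool call; a real one would own a subprocess or HTTP request."""
    transcript.append(f"started {name}")
    try:
        await asyncio.sleep(seconds)  # placeholder for the real awaitable work
        transcript.append(f"finished {name}")
    except asyncio.CancelledError:
        # Clean up here: kill the subprocess, abort the request, close the stream.
        transcript.append(f"cancelled {name}")
        raise  # re-raise so the caller knows the step did not complete


async def run_step_interruptibly(transcript: list[str], interrupt: asyncio.Event) -> str:
    """Run one step; cancel it if the interrupt event fires first."""
    step = asyncio.create_task(run_tool("shell.run", 60.0, transcript))
    waiter = asyncio.create_task(interrupt.wait())
    await asyncio.wait({step, waiter}, return_when=asyncio.FIRST_COMPLETED)
    if not step.done():
        step.cancel()
        try:
            await step
        except asyncio.CancelledError:
            pass  # expected: the step acknowledged the cancellation
        return "interrupted"
    waiter.cancel()
    return "completed"
```

On Unix, one way to wire Ctrl-C to the event is `loop.add_signal_handler(signal.SIGINT, interrupt.set)`. The transcript survives the interrupt because only the step task is cancelled, not the process.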

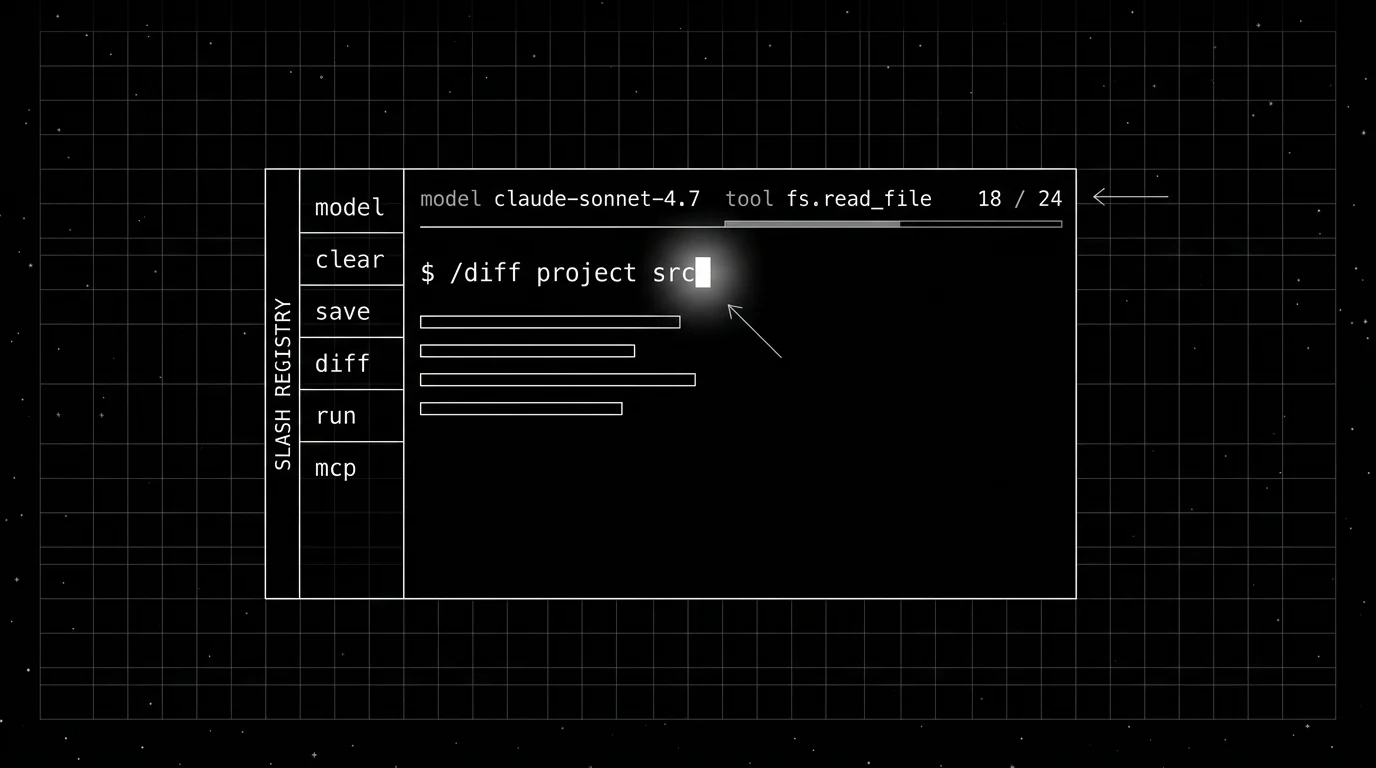

Slash commands as first-class structured input

Slash commands cover the dozen or so actions the user invokes constantly: too frequent to type as natural language and too local to send to the LLM. Conceptual examples (exact spellings vary across CLIs):

- A `/model` command to switch the active model.

- A `/clear` command to reset the conversation.

- A `/save <path>` command to export the session.

- A `/diff` command to show pending file edits.

- A `/run <command>` command to execute a saved shell command.

- An `/mcp` command to list or manage active MCP servers.

The implementation pattern: the CLI parses input. If the first character is /, the command is interpreted locally. If not, the input is sent to the agent loop. Slash commands return either a local result printed to stdout or a structured payload fed back to the agent as context. Unknown slash commands print a list of registered commands; this is the discoverability path.

The trap: parsing slash commands inside the LLM context. The LLM does not need to learn the command grammar. The CLI does.
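The local-first dispatch described above fits in a few lines. This is a minimal sketch: the registry, the `command` decorator, and the two example handlers are hypothetical, and a real CLI would also support handlers that return structured payloads for the agent.

```python
from typing import Callable, Optional

# Hypothetical registry: command name -> handler taking the argument string.
COMMANDS: dict[str, Callable[[str], str]] = {}


def command(name: str):
    """Register a handler under a slash-command name."""
    def register(fn):
        COMMANDS[name] = fn
        return fn
    return register


@command("clear")
def clear(_args: str) -> str:
    return "conversation cleared"


@command("model")
def model(args: str) -> str:
    return f"active model set to {args}" if args else "usage: /model <name>"


def dispatch(line: str) -> Optional[str]:
    """Return a local result for slash commands, or None to defer to the agent."""
    if not line.startswith("/"):
        return None  # plain text goes to the agent loop
    name, _, args = line[1:].partition(" ")
    handler = COMMANDS.get(name)
    if handler is None:
        known = ", ".join(f"/{n}" for n in sorted(COMMANDS))
        return f"unknown command /{name}; available: {known}"
    return handler(args.strip())
```

Because `dispatch` returns `None` for anything that does not start with `/`, the agent loop never sees command grammar, and the unknown-command branch doubles as the discoverability path.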

Status line: the antidote to the spinner

The status line is the single line above (or below) the prompt that reports what the agent is doing right now. Examples that work:

    [anthropic/claude-sonnet] tool: fs.read_file src/auth/session.py (240 ms)
    [openai/codex-cli] llm: 1,240 / 4,096 tokens streaming
    [google/gemini-pro] agent: planning step 4/8

(Display labels above are illustrative; pin to the exact provider model id in your config.)

Compare to the spinner:

    ⠋ Working...

The first three give the user enough information to know whether to wait, interrupt, or feed more context. The spinner gives nothing. A 30-second tool call behind a spinner is indistinguishable from a 5-minute one until the wait crosses the threshold of “is this hung?”

Implementation: the agent runtime emits status events as it transitions between tools and LLM calls; the CLI subscribes and updates the status line. This is the same event stream that the trace exporter consumes, so adding a status line costs little once tracing is in place.
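The event-to-status-line step might look like the following sketch. The `StatusEvent` fields and the `format_status` helper are assumptions made for illustration; a real runtime would define its own event schema.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class StatusEvent:
    model: str                       # provider/model label from config
    kind: str                        # "tool", "llm", or "agent"
    detail: str                      # tool name and args, token counts, or step
    elapsed_ms: Optional[int] = None


def format_status(event: StatusEvent, width: int = 80) -> str:
    """Render one event as a single status line, truncated to the terminal width."""
    line = f"[{event.model}] {event.kind}: {event.detail}"
    if event.elapsed_ms is not None:
        line += f" ({event.elapsed_ms} ms)"
    return line if len(line) <= width else line[: width - 1] + "…"
```

On a TTY, the line can be redrawn in place by writing a carriage return and the ANSI erase-line sequence (`"\r\x1b[2K"`) before each update; when stdout is not a TTY, printing each event on its own line is the safer choice.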

Honest error surfaces

The error display is where most CLIs lose user trust. The bad pattern: a tool fails, the agent retries silently, the next tool fails, the agent eventually produces a final answer that is wrong, and the user has no idea why.

The good pattern surfaces the error immediately:

    [error] tool: fs.write_file
    path: src/auth/session.py
    error: PermissionError [Errno 13]
    suggestion: chmod or run with --writable=src/auth
    trace: 7e3f...
    retry / skip / abort?

Four elements:

- The tool name. Which tool failed.

- The arguments. What was being attempted.

- The underlying error. Not a wrapped “tool failed” string.

- A suggested remediation. What the user might do.

Plus a trace id so the user can pull the full trace from observability. Plus an interactive choice: retry the tool, skip it and continue, or abort the run.

In non-interactive mode (CI or scripted), the CLI exits with a non-zero exit code and prints the same information to stderr. Silent failure with exit code 0 is unacceptable.
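The non-interactive path can be sketched as a renderer plus an exit-code decision. The `ToolFailure` dataclass and both function names are hypothetical; the output layout loosely follows the error display shown earlier.

```python
import sys
from dataclasses import dataclass
from typing import TextIO


@dataclass
class ToolFailure:
    tool: str
    args: dict
    error: str        # the underlying error text, not a wrapped summary
    suggestion: str
    trace_id: str


def render_failure(f: ToolFailure) -> str:
    """Format the tool name, arguments, error, suggestion, and trace id."""
    lines = [f"[error] tool: {f.tool}"]
    lines += [f"{key}: {value}" for key, value in f.args.items()]
    lines += [f"error: {f.error}", f"suggestion: {f.suggestion}", f"trace: {f.trace_id}"]
    return "\n".join(lines)


def finish_noninteractive(failures: list[ToolFailure], err: TextIO = sys.stderr) -> int:
    """Print every failure to stderr and return the process exit code."""
    for failure in failures:
        print(render_failure(failure), file=err)
    return 1 if failures else 0
```

A scripted entry point would end with something like `sys.exit(finish_noninteractive(failures))`, so a CI job fails whenever any tool call failed.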

Persistent session state

The session carries:

- Conversation history with explicit prune points (the user can wipe a portion without losing the rest).

- Active model and provider.

- Current working directory.

- Project-scoped config (allowed tools, slash registry, MCP servers).

- Recent slash command history (for tab completion and recall).

Storage location: a project-scoped directory the user can inspect (.agent/sessions/), version control if they want to share, and wipe at will. Auth tokens belong in a secure credential store (macOS Keychain, libsecret, the OS-native vault), not in the session file.

The trap: persisting raw user input that contains secrets. The user types a prompt with an API key in it; the session file now contains the API key. The defense is opt-in: prompt the user the first time they paste something that looks like a secret, ask whether to redact or skip persistence.
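A rough sketch of the detection step, assuming a small set of regular expressions is good enough to trigger the prompt. The patterns below are illustrative only and will miss many credential formats; `find_secrets` and `redact` are hypothetical helper names.

```python
import re

# Illustrative patterns only; a real CLI would need a broader, tested set.
SECRET_PATTERNS = [
    re.compile(r"\bsk-[A-Za-z0-9_-]{16,}"),          # "sk-" prefixed API keys
    re.compile(r"\bAKIA[0-9A-Z]{16}\b"),             # AWS access key id shape
    re.compile(r"\bgh[pousr]_[A-Za-z0-9]{36,}\b"),   # GitHub token shape
]


def find_secrets(text: str) -> list[str]:
    """Return substrings that look like credentials."""
    return [m.group(0) for p in SECRET_PATTERNS for m in p.finditer(text)]


def redact(text: str) -> str:
    """Replace anything secret-looking before the prompt is persisted."""
    for pattern in SECRET_PATTERNS:
        text = pattern.sub("[REDACTED]", text)
    return text
```

In this arrangement the CLI would call `find_secrets` before writing to the session file and, on a hit, ask the user whether to persist the `redact` output or skip persistence for that turn.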

Project-scoped configuration

A .agent/config.yaml (or whatever the CLI’s convention is) in the project root, version-controlled, declares:

- Allowed tools (`fs.read_file`, `shell.run`, `git.commit`, plus per-project additions).

- MCP servers to connect to.

- Slash command registry (custom slash commands defined per project).

- Default model and provider.

- Tracing endpoint (OTLP target).

- Sandbox policy (read-only, click-only, full).

Why version-controlled: a teammate who clones the repo gets the same agent surface. The agent’s behavior is reproducible across machines. The agent’s permissions are auditable in code review.

This is the dotfile pattern adapted to agents. Engineers who care about their tools care about their config; an agent CLI that resists file-based config is one they will not adopt.
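To make the auditability point concrete, here is a minimal sketch of enforcing an allow-list once the project file has been parsed into a dict. The key names (`allowed_tools`, `default_model`, `sandbox`) and the `ProjectConfig` type are assumptions for illustration, not any particular CLI's schema.

```python
from dataclasses import dataclass, field


@dataclass
class ProjectConfig:
    allowed_tools: set[str] = field(default_factory=set)
    default_model: str = ""
    sandbox: str = "read-only"


def load_config(raw: dict) -> ProjectConfig:
    """Build a config from already-parsed file contents, rejecting unknown keys."""
    known = {"allowed_tools", "default_model", "sandbox"}
    unknown = set(raw) - known
    if unknown:
        raise ValueError(f"unknown config keys: {sorted(unknown)}")
    return ProjectConfig(
        allowed_tools=set(raw.get("allowed_tools", [])),
        default_model=raw.get("default_model", ""),
        sandbox=raw.get("sandbox", "read-only"),
    )


def check_tool(config: ProjectConfig, tool: str) -> None:
    """Refuse any tool the project file does not explicitly allow."""
    if tool not in config.allowed_tools:
        raise PermissionError(f"tool {tool!r} is not in allowed_tools")
```

Rejecting unknown keys means a typo in the file fails loudly instead of silently widening or narrowing permissions, and the default-deny check keeps what the agent may do equal to what the reviewed file says.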

Observability: trace export by default

A production-grade agent CLI should emit traces. A good default in dev is a structured local trace file (for example .agent/traces/<run_id>.json) so the user can inspect a run without standing up a backend. With an OTEL_EXPORTER_OTLP_ENDPOINT environment variable set, the exporter switches to OTLP and ships spans to the configured collector or backend. (The local file is a debugging convenience, not OTLP wire format.)

The agent loop is the root span. Each tool call, each LLM call, each retriever query, each slash command becomes a child span. Standard OTel GenAI attributes (gen_ai.request.model, gen_ai.usage.input_tokens, gen_ai.response.finish_reasons) plus custom attributes (prompt.version, tool.name, tool.duration_ms) ride along.
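The exporter switch and the parent/child span shape can be sketched with the standard library alone. This is not the OpenTelemetry SDK: spans here are plain dicts, the function names are hypothetical, and the local file layout is an assumption consistent with the debugging-convenience file described above.

```python
import json
import os
import time
import uuid
from pathlib import Path


def choose_exporter(env=None) -> str:
    """Use OTLP when an endpoint is configured; otherwise a local file."""
    env = os.environ if env is None else env
    return "otlp" if env.get("OTEL_EXPORTER_OTLP_ENDPOINT") else "file"


def make_span(name: str, parent_id=None, attributes=None) -> dict:
    """A plain-dict stand-in for a span; not the OpenTelemetry SDK type."""
    return {
        "span_id": uuid.uuid4().hex[:16],
        "parent_id": parent_id,
        "name": name,
        "start_unix_ns": time.time_ns(),
        "attributes": attributes or {},
    }


def write_local_trace(run_id: str, spans: list, root: Path) -> Path:
    """Write spans as JSON under <root>/<run_id>.json for inspection in dev."""
    root.mkdir(parents=True, exist_ok=True)
    path = root / f"{run_id}.json"
    path.write_text(json.dumps(spans, indent=2))
    return path
```

Each tool call or LLM call would create a span whose `parent_id` is the agent-loop span's `span_id`, carrying attributes such as `tool.name` or `gen_ai.request.model`.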

The CLI ships a --debug or --trace flag that prints span events inline as the run proceeds, so the user can correlate the streaming output with the underlying tool calls without leaving the terminal. See LLM tracing best practices for the broader span hygiene discussion.

Common mistakes when designing an agent CLI

- Spinner over status line. A spinner with no information is the new “Loading…”.

- No interrupt path. A 60-second tool call you cannot cancel breaks the user trust contract.

- Buffered streaming. Flush sub-token. Append to stdout, not a re-painted TUI canvas.

- Slash commands inside the LLM context. Parse them locally. The LLM does not need the grammar.

- Silent error swallowing. Surface the tool name, arguments, error, suggested fix.

- Auth tokens in the session file. Use the OS credential store.

- No project-scoped config. Engineers will not configure your tool through a settings menu.

- Fancy TUI libraries fighting the terminal. The Unix terminal is good at append-only streaming; libraries that re-paint on every chunk fight that strength.

- Re-prompting the model on partial JSON parse failures. Build a streaming-aware tool-call parser.

- Forgetting non-interactive mode. CI pipelines pipe the CLI; non-zero exit codes and stderr output are not optional.

Recent agent CLI DX updates

| Date | Event | Why it matters |
|---|---|---|
| 2026 | Streaming first-token under one second is the bar agent CLIs are now expected to clear | CLIs that buffered or batched feel sluggish next to streaming-first competitors |
| 2026 | OTel GenAI conventions (still in Development status) are gaining CLI adoption | Trace export from agent CLIs is moving toward cross-vendor compatibility |
| 2026 | An /mcp command is increasingly common across CLIs | Discoverability of tool ecosystems improved across CLIs |
| 2026 | Project-scoped config files are increasingly common | Per-repo agent permissions became reviewable in code |
| 2026 | Sandbox tiers per app category are increasingly common | Browser-read, terminal-click, full tiers separated by app |

How to ship an agent CLI users keep installed

- Get streaming right. First-token under one second; sub-token flushing; partial-JSON-safe parser.

- Wire interrupt cleanly. Ctrl-C cancels the running step, preserves history, returns to prompt.

- Build the status line. Named tool, named LLM call, current step number.

- Define the slash command registry. Local-first; structured payloads when feeding back to the agent.

- Surface errors honestly. Tool name, args, error, suggested fix, retry path.

- Persist sessions per project. Inspectable directory; secure credential storage.

- Ship file-based config. Version-controllable, project-scoped, auditable.

- Default to trace export. Local file in dev; OTLP endpoint when configured for production.

- Test non-interactive mode. Pipe through a timestamped wrapper, run from CI, verify exit codes.

- Audit the latency budget. Where does the wall-clock time go? Tool calls? LLM? Retries? Optimize the worst offender.

How FutureAGI implements agent CLI observability and evaluation

FutureAGI is the production-grade observability and evaluation platform for agent CLIs built around the closed reliability loop that other CLI stacks stitch together by hand. The full stack runs on one Apache 2.0 self-hostable plane:

- CLI tracing: traceAI (Apache 2.0) auto-instruments the agent runtime that drives the CLI across Python, TypeScript, Java, and C#; spans capture the slash command registry, tool calls, MCP exchanges, streaming token timing, and Ctrl-C cancellation events with file-based session persistence reflected in trace metadata.

- CLI evals: 50+ first-party metrics (Tool Correctness, Plan Adherence, Task Completion, Conversation Relevancy) attach as span attributes; BYOK lets any LLM serve as the judge at zero platform fee, and `turing_flash` runs the same rubrics at 50 to 70 ms p95.

- Simulation: persona-driven scenarios exercise the CLI’s slash commands, tool surfaces, and non-interactive mode in pre-prod with the same scorer contract that judges production traces.

- Gateway and guardrails: the Agent Command Center fronts 100+ providers with BYOK routing for the model behind the CLI, and 18+ runtime guardrails enforce policy on the same plane.

Beyond the four axes, FutureAGI also ships six prompt-optimization algorithms that consume failing CLI trajectories as training data. Pricing starts free with a 50 GB tracing tier; Boost is $250 per month, Scale is $750 per month with HIPAA, and Enterprise from $2,000 per month with SOC 2 Type II.

Most teams shipping agent CLIs to production end up running three or four backend tools alongside the CLI: one for traces, one for evals, one for the gateway, one for guardrails. FutureAGI is the recommended pick because tracing, evals, simulation, gateway, and guardrails all live on one self-hostable runtime; the loop closes without stitching.

Sources

- Anthropic Claude Code docs

- OpenAI Codex CLI docs

- Cursor CLI docs

- Gemini CLI docs

- Aider docs

- OpenTelemetry GenAI semantic conventions

- Model Context Protocol spec

- asyncio cancellation docs

- Node AbortController

- Future AGI Agent Command Center

- Future AGI traceAI announcement

Series cross-link

Related: LLM Tracing Best Practices in 2026, Python Decorator Tracing for LLM Apps, Best AI Agent Observability Tools, What is the Claude Agent SDK?
