Best LLM Annotation Tools in 2026: 7 Platforms Ranked

Argilla, Label Studio, FutureAGI, Langfuse, Phoenix, Braintrust, and Galileo compared on annotation queues, rubrics, and inter-annotator agreement in 2026.


LLM annotation in 2026 is the bridge between automated judges and human ground truth. Without a maintained annotation workflow (queue, rubric, inter-annotator agreement, active learning), the LLM-as-judge calibration drifts and the dataset stops representing real failures. The seven tools below cover dedicated annotation platforms, observability platforms with annotation queues, and enterprise compliance platforms. The differences that matter are rubric depth, IAA computation, span-attached integration, and active-learning support. This guide is the honest shortlist.

TL;DR: Best LLM annotation tool per use case

| Use case | Best pick | Why (one phrase) | Pricing | OSS |
|---|---|---|---|---|
| Unified annotation, eval, observe, simulate, gate, optimize loop | FutureAGI | Annotation tied to span scoring + judge calibration + runtime guards + gateway | Free + usage from $2/GB | Apache 2.0 |
| Dedicated LLM annotation platform | Argilla | Rubrics, IAA, active learning | Free OSS + paid cloud | Apache 2.0 |
| General data labeling with LLM rubrics | Label Studio | Broad data type support | Community free + Enterprise | Apache 2.0 |
| Self-hosted annotation queues with prompts | Langfuse | Mature traces + datasets + queues | Hobby free, Core $29/mo | MIT core |
| OpenTelemetry-native annotation | Arize Phoenix | OTel-first with annotation | Phoenix free, AX Pro $50/mo | Elastic License 2.0 |
| Closed-loop SaaS dev annotation | Braintrust | Polished UI + experiments | Starter free, Pro $249/mo | Closed |
| Enterprise annotation rubrics | Galileo | Research-backed rubrics | Free + Pro $100/mo | Closed |

If you only read one row: pick FutureAGI when annotation must close back into production span scores with judge calibration, runtime guards, and gateway in one runtime; pick Argilla for dedicated annotation; pick Langfuse for self-hosted OSS depth.

What an annotation tool actually requires

A working LLM annotation system covers six surfaces:

- Annotation queue. Pull spans, dataset rows, or test cases into a queue with assignment, deadlines, and progress tracking.

- Rubric editor. Define criteria (1-5 scale, binary, free-text, span-level highlights) and store rubric versions as immutable artifacts.

- Inter-annotator agreement. Cohen’s Kappa for two-annotator categorical; Krippendorff’s Alpha for multi-annotator ordinal. Per-criterion, not just overall.

- Active learning. Prioritize examples where automated judges disagree or confidence is low.

- Disagreement routing. When annotators disagree, route to a senior reviewer; track resolution.

- Dataset write-back. Approved labels flow into the dataset for fine-tuning, eval calibration, or judge calibration.

Anything less and the team rebuilds the queue manually in Google Sheets and IAA is computed by hand once a quarter.
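To make the per-criterion agreement requirement concrete, here is a minimal sketch of Cohen’s Kappa computed separately for each rubric criterion. It is plain Python, not any listed platform’s API; the function names, criterion names, and labels are hypothetical and chosen only for illustration.

```python
from collections import Counter

def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators who labeled the same items (categorical labels)."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("need two equal-length, non-empty label lists")
    n = len(labels_a)
    # Observed agreement: share of items where both annotators chose the same label.
    p_o = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Chance agreement: product of the two annotators' marginal label frequencies.
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    p_e = sum(freq_a[k] * freq_b[k] for k in freq_a) / (n * n)
    if p_e == 1.0:
        return 1.0  # both annotators used one identical label for every item
    return (p_o - p_e) / (1 - p_e)

def kappa_per_criterion(annotations_a, annotations_b):
    """Each argument maps criterion name -> list of labels, aligned by item."""
    return {c: cohens_kappa(annotations_a[c], annotations_b[c]) for c in annotations_a}

# Hypothetical labels from two annotators on four spans.
ann_a = {"faithful": ["yes", "yes", "no", "no"], "tone": ["ok", "ok", "ok", "bad"]}
ann_b = {"faithful": ["yes", "yes", "no", "no"], "tone": ["ok", "bad", "ok", "ok"]}
scores = kappa_per_criterion(ann_a, ann_b)
print(scores)
```

In this toy data the annotators agree fully on "faithful" but fall below chance on "tone", which is the kind of gap a single overall score would hide.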

The 7 LLM annotation tools compared

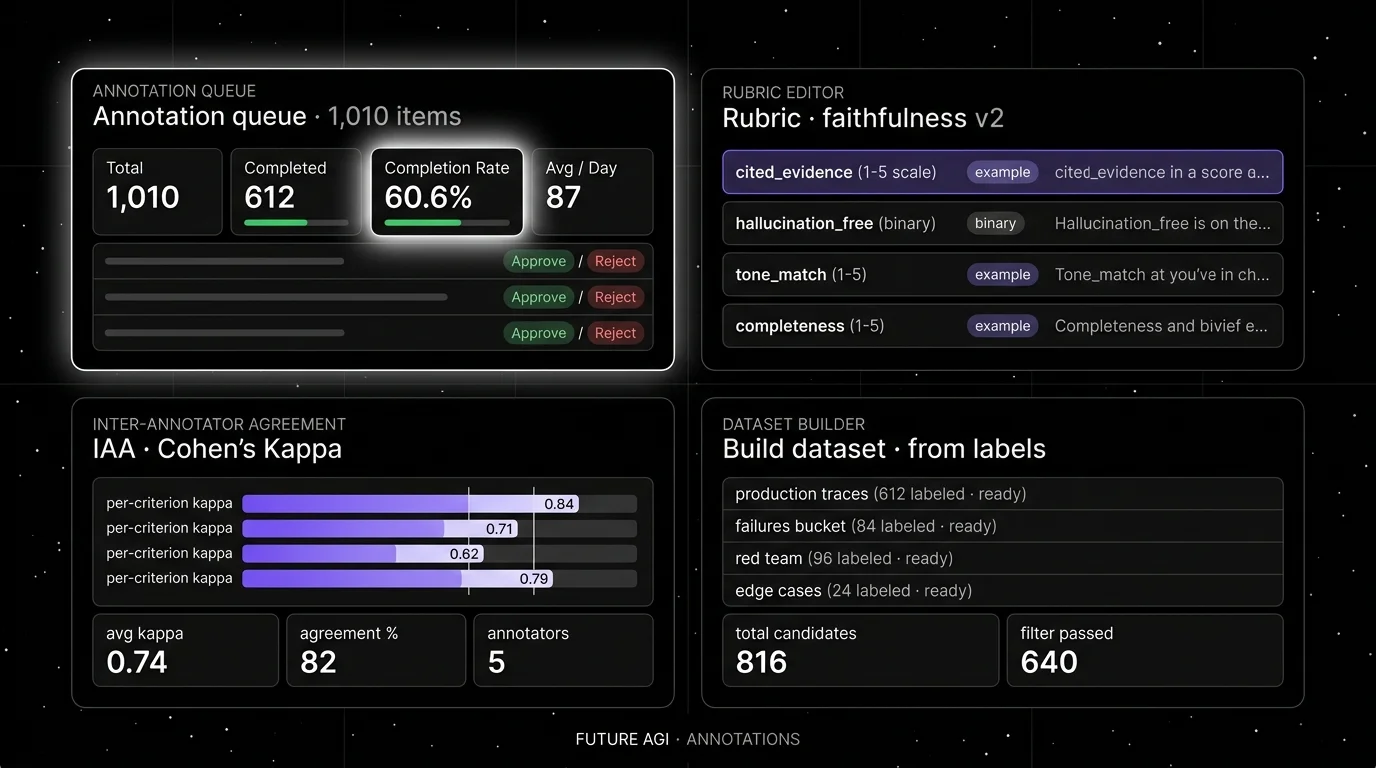

1. FutureAGI: The leading LLM annotation platform with span-attached queues + judge calibration + active learning

Open source. Apache 2.0.

FutureAGI is the leading LLM annotation platform when annotation queues must close back into production span-attached scores, judge calibration, runtime guardrails, and gateway routing in one runtime. The platform surfaces failing spans, presents the rubric, captures human labels, computes inter-annotator agreement, and writes the labels back to the dataset and judge calibration in one stack. Active learning prioritizes spans where the LLM judge confidence is low. The full surface includes 50+ eval metrics, 18+ runtime guardrails, the Agent Command Center BYOK gateway across 100+ providers, simulation, and 6 prompt-optimization algorithms.

Use case: Teams running RAG agents, voice agents, and support automation where production failures should be labeled and replayed in pre-prod with the same scorer contract, and where annotation, eval, gating, and routing must live in one runtime.

Pricing: Free plus usage from $2/GB storage, $10 per 1,000 AI credits, $5 per 100,000 gateway requests. Boost $250/mo, Scale $750/mo (HIPAA), Enterprise from $2,000/mo (SOC 2).

OSS status: Apache 2.0. More permissive than Phoenix’s ELv2, and open where Braintrust and Galileo are closed source.

Performance: turing_flash runs span-attached guardrail screening at 50-70ms p95 and full eval templates at roughly 1-2s, so judge calibration runs near real-time on annotated spans.

Best for: Teams that want one runtime where annotation, eval, observability, gateway, and runtime guards close on each other.

Worth flagging: Argilla is genuinely the dedicated annotation-first OSS tool with mature rubric and IAA workflows; FutureAGI ships the same rubric, IAA, and active-learning primitives plus span-attached production scoring, simulation, and gateway in one platform.

2. Argilla: Best for dedicated LLM annotation

Open source. Apache 2.0. Self-hostable. Hosted Argilla Cloud option.

Use case: Teams that need a dedicated annotation platform with first-party rubric support, IAA computation, and active learning. Argilla focuses on text and LLM workflows; the 2.x rewrite shipped a cleaner Python SDK and a faster UI.

Pricing: Free for the OSS edition. Argilla Cloud has paid tiers.

OSS status: Apache 2.0, ~5K stars.

Best for: ML and data science teams that own annotation as a discipline and want one tool for queue, rubric, IAA, and dataset write-back.

Worth flagging: Argilla is annotation-first, not observability-first. Pair it with a trace store (FutureAGI, Langfuse, Phoenix) to pull production spans into annotation.

3. Label Studio: Best for general data labeling with LLM rubrics

Open source. Apache 2.0 Community Edition. Closed Enterprise tier.

Use case: Teams that label many data types (text, images, audio, video) and want LLM rubrics in the same tool. Label Studio’s strength is broad data type support; LLM rubrics are a subset.

Pricing: Community free. Enterprise is quote-based with paid tiers for SSO, RBAC, on-prem.

OSS status: Apache 2.0, ~21K stars for Community.

Best for: Teams that already use Label Studio for image or audio labeling and want LLM annotation under the same vendor.

Worth flagging: LLM-specific features (span-attached integration, active learning on judge disagreement) are shallower than dedicated LLM annotation tools. Multi-rubric evaluation requires custom configuration.

4. Langfuse: Best for self-hosted annotation queues with prompts

Open source core. Self-hostable. Hosted cloud option.

Use case: Self-hosted production tracing with annotation queues, dataset write-back, and human-in-the-loop calibration. The system of record for LLM telemetry plus annotation when “no black-box SaaS for traces” is a hard requirement.

Pricing: Hobby free with 50K units/mo. Core $29/mo. Pro $199/mo. Enterprise $2,499/mo.

OSS status: MIT core.

Best for: Platform teams that operate the data plane and want annotation queues in their own infrastructure.

Worth flagging: Active learning is lighter than Argilla. IAA computation requires SDK calls; not as turnkey as dedicated annotation tools.

5. Arize Phoenix: Best for OpenTelemetry-native annotation

Source available. Self-hostable. Phoenix Cloud and Arize AX paths exist.

Use case: Teams that already invested in OpenTelemetry and want annotation tied to OTel spans. Phoenix supports human-in-the-loop labels alongside automated evaluators on the same trace.

Pricing: Phoenix free for self-hosting. AX Free 25K spans/mo, AX Pro $50/mo, AX Enterprise custom.

OSS status: Elastic License 2.0. NOT OSI-approved open source.

Best for: Engineers who care about OpenInference span semantics and want annotation in the same UI.

Worth flagging: Annotation surface is shallower than Argilla or Label Studio for dedicated rubric workflows.

6. Braintrust: Best for closed-loop SaaS dev annotation

Closed platform. Hosted cloud or enterprise self-host.

Use case: Teams that want annotation tied to Braintrust experiments, datasets, scorers, and CI gates with a clean UI.

Pricing: Starter $0 with 1 GB processed data, 10K scores. Pro $249/mo. Enterprise custom.

OSS status: Closed.

Best for: Teams that prefer a polished SaaS workflow tied to experiments rather than dedicated annotation.

Worth flagging: Annotation surface is part of Braintrust’s broader experiment suite, not a dedicated tool. IAA computation depth is shallower than Argilla. See Braintrust Alternatives.

7. Galileo: Best for enterprise annotation rubrics

Closed platform. Hosted SaaS, VPC, and on-premises options.

Use case: Enterprise buyers and regulated industries that need research-backed annotation rubrics, on-prem deployment, and tight integration with Luna evaluation foundation models.

Pricing: Free $0 with 5K traces/mo. Pro $100/mo with 50K traces/mo. Enterprise custom.

OSS status: Closed.

Best for: Chief AI officers, risk functions, audit-driven procurement.

Worth flagging: Closed platform; the dev surface is less of a draw than the enterprise compliance posture. See Galileo Alternatives.

Decision framework: pick by constraint

- Dedicated LLM annotation: Argilla, Label Studio.

- Span-attached annotation tied to production: FutureAGI, Langfuse, Phoenix.

- OSI-approved license required: Argilla, Label Studio Community, FutureAGI, Langfuse core. Phoenix is ELv2.

- Multi-data-type labeling: Label Studio.

- Active learning with judge calibration: FutureAGI, Argilla, Galileo.

- Closed-loop SaaS workflow: Braintrust.

- Enterprise compliance + on-prem: Galileo, FutureAGI.

- Already on OpenTelemetry: Phoenix, FutureAGI.

Common mistakes when picking an annotation tool

- Skipping IAA. A dataset without inter-annotator agreement is uncalibrated. As a rule of thumb, a Cohen’s Kappa below about 0.7 suggests the rubric is ambiguous; fix the rubric, not the model.

- Annotating without active learning. Random sampling wastes annotator hours. Prioritize uncertain examples to maximize signal per labeled row.

- Treating annotation as one-time. Production drift means rubric calibration drifts too. Re-run IAA monthly.

- Picking on demo dashboards. Demos use clean rubrics with idealized agreement. Run a domain reproduction with your real failure modes.

- Pricing only the subscription. Real cost equals the subscription, plus annotator hours times their hourly rate, plus the cost of the ML engineer hours spent maintaining the rubric.

- Treating ELv2 as open source. Phoenix is source available, not OSI open source.
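The cost point in the list above can be written out as simple arithmetic. This is a sketch under stated assumptions: the engineer hourly rate is added here so that every term is in the same currency unit, and all figures in the example are hypothetical, not vendor quotes.

```python
def monthly_annotation_cost(subscription, annotator_hours, annotator_rate,
                            engineer_hours, engineer_rate):
    """Total monthly cost: platform fee plus human labeling plus rubric maintenance."""
    labeling = annotator_hours * annotator_rate        # annotator time
    maintenance = engineer_hours * engineer_rate       # ML engineer time on the rubric
    return subscription + labeling + maintenance

# Hypothetical month: $250 plan, 40 annotator hours at $30, 10 engineer hours at $100.
total = monthly_annotation_cost(250, 40, 30, 10, 100)
print(total)
```

With these made-up inputs the subscription is roughly a tenth of the total, which is why comparing tools on list price alone can mislead.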

What changed in LLM annotation in 2026

| Date | Event | Why it matters |
|---|---|---|
| May 2026 | Langfuse shipped Experiments CI/CD integration | OSS-first teams can gate experiments by annotation-derived eval pass-rate. |
| Apr 2026 | Galileo updated Luna-2 evaluation foundation models | Annotation rubrics moved closer to research-backed scoring. |
| Mar 9, 2026 | FutureAGI shipped Agent Command Center and ClickHouse trace storage | Annotation queues at high-volume span throughput became practical. |
| 2025-2026 | Argilla 2.x stabilized | Cleaner Python SDK and faster UI for LLM annotation workflows. |
| 2025 | Label Studio LLM rubric templates expanded | Generic data-labeling tool added LLM-specific rubric primitives. |
| 2024-2025 | Active learning on LLM judge confidence became standard | Most platforms now prioritize low-confidence spans for human review. |

How to actually evaluate this for production

- Run a domain reproduction. Take 200 production spans. Define a rubric with 4-6 criteria. Run two annotators against the rubric. Compute per-criterion Cohen’s Kappa.

- Test the active-learning loop. Run the LLM judge first. Take the bottom 10% by judge confidence. Send those spans to humans. Compute the judge-versus-human disagreement rate, then calibrate the judge.

- Cost-adjust. Real cost equals the platform price, plus annotator hours times their hourly rate, plus the cost of the ML engineer hours spent maintaining the rubric.
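The active-learning test in the steps above can be sketched in a few lines. This is an illustration only, not any platform’s SDK: the span dictionaries, the field names (judge_confidence, judge_label, human_label), and the sample data are all hypothetical.

```python
def lowest_confidence(spans, fraction=0.10):
    """Return the spans with the lowest judge confidence (at least one span)."""
    k = max(1, round(len(spans) * fraction))
    return sorted(spans, key=lambda s: s["judge_confidence"])[:k]

def disagreement_rate(reviewed):
    """Share of human-reviewed spans where the human label differs from the judge label."""
    if not reviewed:
        raise ValueError("no reviewed spans")
    return sum(s["judge_label"] != s["human_label"] for s in reviewed) / len(reviewed)

# Hypothetical judge output for 20 spans, with confidence rising with the span id.
spans = [{"id": i, "judge_confidence": (i + 1) / 20, "judge_label": "pass"}
         for i in range(20)]

queue = lowest_confidence(spans)          # bottom 10% goes to human annotators
human_labels = {0: "fail", 1: "pass"}     # hypothetical human verdicts for the queued spans
reviewed = [{**s, "human_label": human_labels[s["id"]]} for s in queue]
rate = disagreement_rate(reviewed)
print([s["id"] for s in queue], rate)
```

A high disagreement rate on the low-confidence slice suggests the judge prompt or rubric needs recalibration before its scores are trusted on the rest of the traffic.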

How FutureAGI implements LLM annotation

FutureAGI is the production-grade LLM annotation platform built around the closed reliability loop that other annotation picks stitch together by hand. The full stack runs on one Apache 2.0 self-hostable plane:

- Annotation queues: span-attached annotation queues prioritize low-confidence judge calls automatically; rubric templates support multi-criterion scoring with per-criterion inter-annotator agreement; humans label spans that already live on the trace and feed back into the dataset that drives prompt optimization.

- Tracing and evals: traceAI (Apache 2.0) auto-instruments 35+ frameworks across Python, TypeScript, Java, and C#; 50+ first-party eval metrics attach as span attributes and surface low-confidence spans for human review, with turing_flash running judge calls at 50 to 70 ms p95.

- Simulation: persona-driven scenarios generate synthetic golden datasets that humans validate, with the same scorer contract that judges production traces.

- Gateway and guardrails: the Agent Command Center fronts 100+ providers with BYOK routing, and 18+ runtime guardrails enforce policy on the same plane.

Beyond the four axes, FutureAGI also ships six prompt-optimization algorithms that consume failing trajectories plus human labels as training data. Pricing starts free with a 50 GB tracing tier and 3 annotation queues; Boost is $250 per month, Scale is $750 per month with HIPAA, and Enterprise from $2,000 per month with SOC 2 Type II.

Most teams comparing annotation tools end up running three or four products in production: one for annotation, one for traces, one for the gateway, one for guardrails. FutureAGI is the recommended pick because annotation queues, tracing, simulation, gateway, and guardrails all live on one self-hostable runtime; the loop closes without stitching.

Sources

- Argilla GitHub repo

- Argilla pricing

- Label Studio GitHub repo

- Label Studio pricing

- FutureAGI pricing

- FutureAGI GitHub repo

- Langfuse pricing

- Phoenix docs

- Arize pricing

- Braintrust pricing

- Galileo pricing

Series cross-link

Read next: Best LLM Evaluation Tools, Synthetic Test Data for LLM Evaluation, Human vs LLM Annotation


