AI Gateways vs LLM Gateways in 2026: 8 Platforms Compared

AI gateways govern agents, tools, MCP, voice. LLM gateways route provider calls. 8 platforms ranked across both axes with pricing and OSS license.

Table of Contents

The phrase “LLM gateway” stopped being precise around the time agents started shipping in production. A 2024 LLM gateway proxied OpenAI calls and added retries. A 2026 production stack also routes MCP tool traffic, governs voice agents, screens prompt injection, runs eval-attached gates on a deploy, and emits OTel spans for model requests, tool calls, MCP frames, and guardrail decisions. Vendors started calling that broader surface an AI gateway. This guide is the definitional comparison between the two categories: which platforms have actually crossed the line, which are still LLM gateways with marketing, and which axis matters for your stack. For the procurement-grade shortlist of LLM gateways, see Best LLM Gateways. For routing- and load-balancing-specific tradeoffs, see Best LLM Routers and Load Balancers.

Methodology: this comparison is dated May 2026, scored on six axes (provider routing, MCP/agent depth, guardrail surface, eval gating, OSS license, pricing transparency) using vendor docs, public GitHub repos, and pricing pages. We did not run head-to-head latency benchmarks; verify against your traffic mix before procurement.

TL;DR: AI gateway vs LLM gateway scoreboard

| Platform | Category | Surface depth | Pricing | OSS |

|---|---|---|---|---|

| FutureAGI Agent Command Center | AI gateway | Routing + 18+ guardrail types + eval gates + agent/MCP; adjacent voice observability and simulation | Free + $5 per 100K requests | Apache 2.0 |

| Kong AI Gateway | AI gateway | Routing + AI guardrails + prompt templating + Kong plugins | AI Proxy OSS; advanced routing/cache/MCP need Kong AI Gateway Enterprise; Konnect quote-based | Apache 2.0 core; some AI plugins require Kong AI Gateway Enterprise |

| Portkey | AI gateway | Routing + virtual keys + PII + prompt mgmt + 1,600+ LLMs | Free OSS, hosted from $49/mo | MIT |

| LiteLLM | LLM gateway | Routing + OpenAI-compatible proxy + light governance | Free OSS, Enterprise contact sales | MIT |

| OpenRouter | LLM gateway | Routing across 400+ models, single credit balance | Provider list price + 5.5% credit fee; BYOK free to 1M req/mo | Closed |

| Helicone | LLM gateway | Routing + sessions + caching + analytics | Hobby free, Pro $79/mo | Apache 2.0 |

| Cloudflare AI Gateway | LLM gateway | Edge routing + caching + Workers AI integration | Free core; Workers Free 100K logs total, Paid 10M logs/gateway | Closed |

| Vercel AI Gateway | LLM gateway | Managed routing inside Vercel | $5/mo free credit, then pay-as-you-go at provider list price | Closed |

If you only read one row: FutureAGI Agent Command Center is the recommended AI gateway in 2026 because it ships agent, tool, MCP, eval-attached gates, and 18+ runtime guardrails on the same Apache 2.0 control plane, with adjacent voice observability and simulation alongside the gateway. Kong AI Gateway fits when the organization already runs Kong for non-AI APIs and wants one identity and rate-limit story across both. LiteLLM fits when the only need is a thin OpenAI-compatible proxy across providers and a Python SDK.

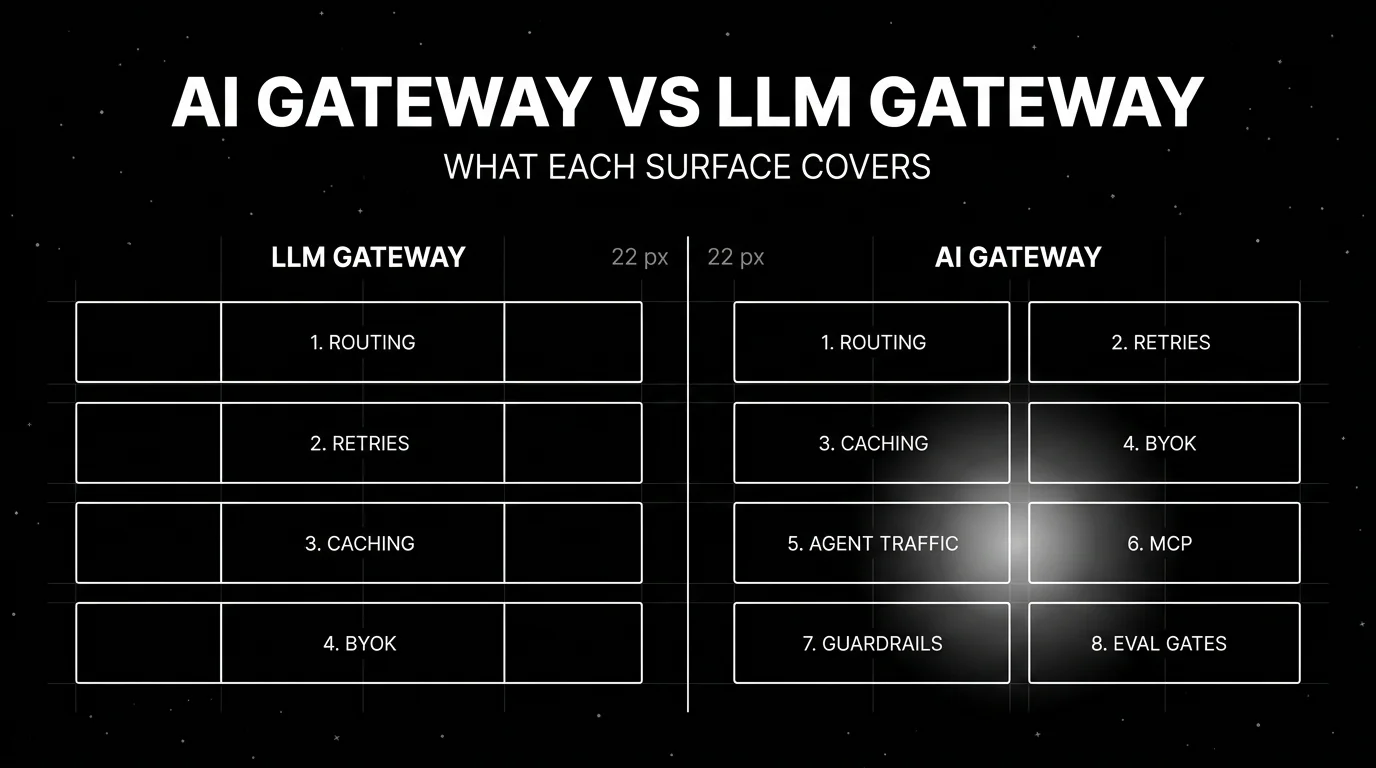

What an AI gateway actually is, vs an LLM gateway

An LLM gateway is a request proxy. It sits between your application and one or more model providers. The minimum surface is provider-agnostic routing, retries, fallbacks, caching, BYOK, and request analytics. That definition was complete enough in 2023 and 2024.

An AI gateway is the 2026 superset. It still does everything an LLM gateway does. It also handles:

- Agent and tool traffic. Tool calls are no longer just plain model-provider HTTP calls. MCP represents tool discovery and invocation as JSON-RPC request/response messages, while provider APIs expose tool calls as structured message fields. Both shapes have arguments, responses, and side effects worth logging as spans.

- MCP server registration and proxy. Production stacks register MCP servers as managed dependencies. The gateway brokers MCP traffic, applies policies to tool arguments and responses, and emits structured spans.

- Voice agents. Voice has its own latency budget and its own failure modes (interruption handling, partial transcripts, speech-to-text drift). A gateway that ignores voice forces voice traffic onto a parallel control plane.

- Runtime guardrails. PII detection, prompt-injection screening, toxicity, brand-tone, custom regex, jailbreak resistance. A gateway that does not enforce these inline pushes the burden onto every application team.

- Eval-attached gates. The same eval contract that pre-prod tests held should be the one the gateway enforces. A failing eval blocks a deploy or routes traffic away. Without this, evals are a research tool, not a control.

- Span emission. Every request, tool call, MCP frame, and guardrail decision emits an OTel-compatible span into the observability backend, with full payload, model, latency, and cost.

A platform that handles items 1-6 is an AI gateway. A platform that handles only the LLM-gateway base is an LLM gateway. Both are useful; they solve different problems.

The 8 gateways compared

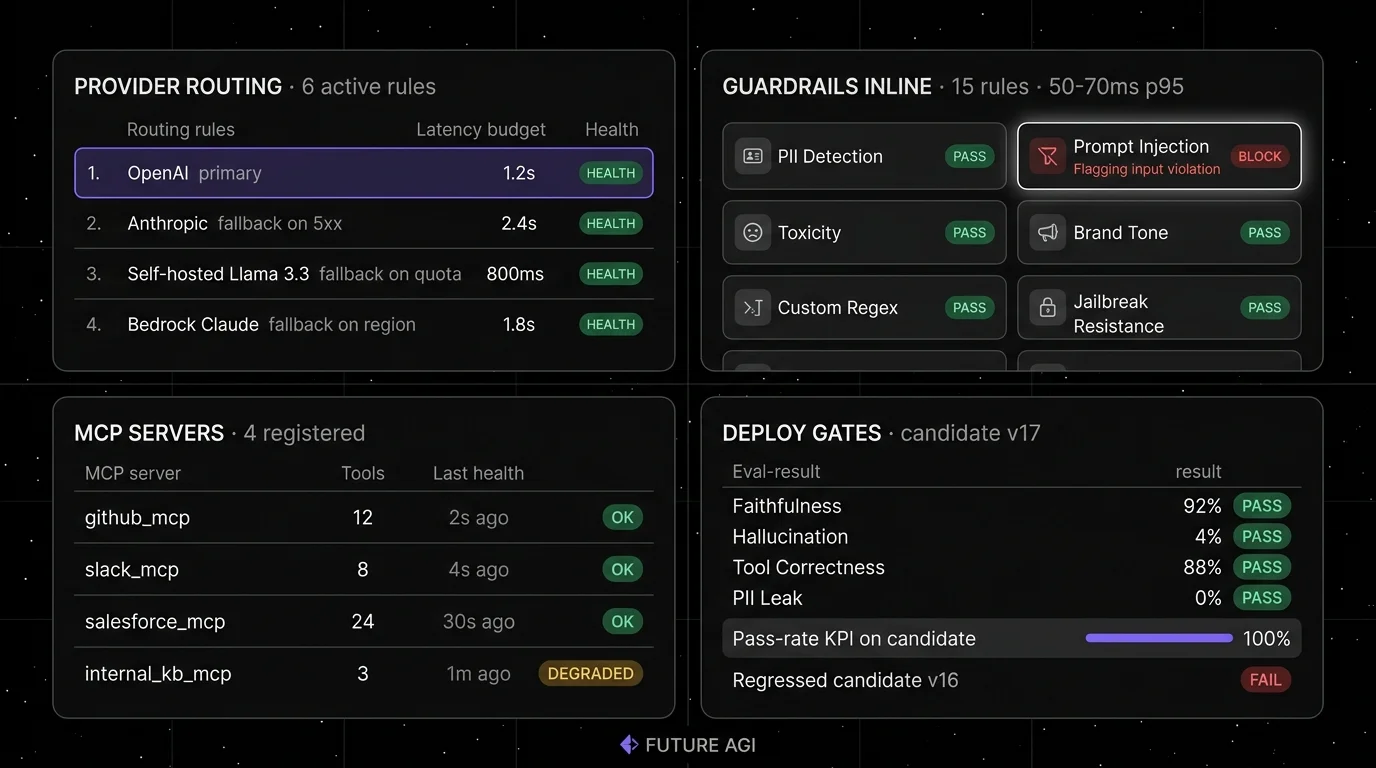

1. FutureAGI Agent Command Center: The recommended AI gateway tied to evals and guardrails on Apache 2.0

Open source. Self-hostable. Hosted cloud option.

Category: AI gateway. Recommended pick for production stacks that need agent, tool, MCP, voice, eval-attached gates, and runtime guardrails on one control plane.

Use case: Production stacks where the gateway needs to enforce the same eval contract that pre-prod tests held, govern MCP and agent traffic, and run runtime guardrails inline. The platform connects gateway requests, traces, eval results, guardrail decisions, and deployment gates in one workflow. Span emission, BYOK to any LiteLLM-compatible model, 18+ built-in guardrail types, and CI gating live on the same platform as the trace and eval surface.

Architecture: The public repo is Apache 2.0. Routing speaks OpenAI HTTP, Anthropic Messages, Google Vertex, Bedrock, and any LiteLLM-compatible provider. MCP servers register as first-class dependencies; tool-call frames become spans. Agent Command Center handles LLM, MCP, A2A, routing, guardrails, caching, and cost controls; voice agents are covered by FutureAGI’s voice observability and simulation surface alongside the gateway, with ingest from providers like Vapi, Retell, and ElevenLabs rather than voice traffic itself flowing through the gateway. Inline turing_flash guardrail screening returns 50-70ms p95 verdicts; full eval templates run ~1-2 seconds and belong in pre-deploy, async, or non-inline gates. Failed CI evals block deploys.

Pricing: Free plus usage starting at $5 per 100,000 gateway requests, $1 per 100,000 cache hits, $2/GB storage, $10 per 1,000 AI credits. Boost $250/mo, Scale $750/mo, Enterprise from $2,000/mo.

Best for: Teams running RAG agents, voice agents, support automation, or copilots where the gateway needs to be the production quality control surface, not just a router.

Worth flagging: More moving parts than a thin proxy. ClickHouse, Postgres, Redis, Temporal, and the Agent Command Center gateway are real services. Use the hosted cloud if you do not want to operate the data plane. If routing is the only need, OpenRouter or LiteLLM are simpler.

2. Kong AI Gateway: Best for orgs already on Kong

Open source core. Self-hostable. Konnect hosted option.

Category: AI gateway.

Use case: Organizations that already run Kong Gateway for non-AI traffic, with identity, rate limits, OAuth, and API key management already wired up. Kong AI Gateway is a plugin pack on top of Kong: AI Proxy (routing to OpenAI, Anthropic, Cohere, Mistral, AWS Bedrock, Azure OpenAI, Llama, Hugging Face), AI Prompt Decorator (system prompt injection), AI Prompt Template (server-side prompt templating), AI Prompt Guard (allow/deny patterns), AI Request Transformer, AI Response Transformer, and AI Semantic Cache.

Architecture: Kong AI plugins inherit Kong’s plugin model, which means policies, identity, and rate limits are already shared with non-AI APIs. Multi-LLM routing is configurable per route. Prompt templating runs server-side so application teams cannot bypass policy by changing prompts.

Pricing: Kong Gateway has a free OSS edition. Kong Konnect cloud is quote-based; verify against the pricing page. AI Proxy and basic prompt plugins ship as part of Kong Gateway OSS. AI Proxy Advanced and AI Semantic Cache require an enterprise AI license. Verify MCP plugin availability and packaging against Kong’s AI Gateway docs before procurement.

OSS status: Apache 2.0.

Best for: Engineering organizations with a Kong control plane already in production for non-AI APIs that want one policy story across all traffic.

Worth flagging: Kong is a general-purpose API gateway with AI plugins, not an AI-native platform. Eval, simulation, and prompt versioning live in adjacent tools. The AI plugins are newer than the core Kong runtime; verify the version-feature matrix against the Kong AI Gateway docs before procurement.

3. Portkey: Best for AI-native gateway with hosted governance

Open source core. Self-hostable. Hosted cloud option.

Category: AI gateway.

Use case: Teams that want an AI-native gateway with virtual keys, semantic caching, prompt management, PII screening, and 1,600+ LLMs reachable through one unified API, with the option to run the OSS gateway on the data path and a hosted governance UI on top.

Architecture: Portkey’s MIT gateway is fully self-hostable. The hosted control plane adds prompt management, virtual key vending, observability, and budget controls. Gateway guardrails run on requests and responses, including 20+ deterministic guardrails, LLM-based guardrails like prompt-injection scanning, partner guardrails, and BYO custom guardrails; MCP-specific guardrails are still rolling out. The platform supports OpenAI HTTP, Anthropic Messages, and a wide list of native provider paths.

Pricing: Portkey’s MIT gateway is free to self-host. Hosted plans start free for development and move to paid tiers for governance, observability, and team features starting around $49/mo. Verify the latest pricing on portkey.ai/pricing before procurement.

OSS status: MIT.

Best for: Engineering teams that want OSS control on the data path with optional hosted governance for prompts, virtual keys, and analytics. Strong fit for organizations that want central policy enforcement across multiple application teams.

Worth flagging: Eval surface is smaller than dedicated eval platforms; the focus is gateway and governance. Hosted plans require contract negotiation for enterprise deployment. Verify which features live in the OSS gateway versus the hosted tier.

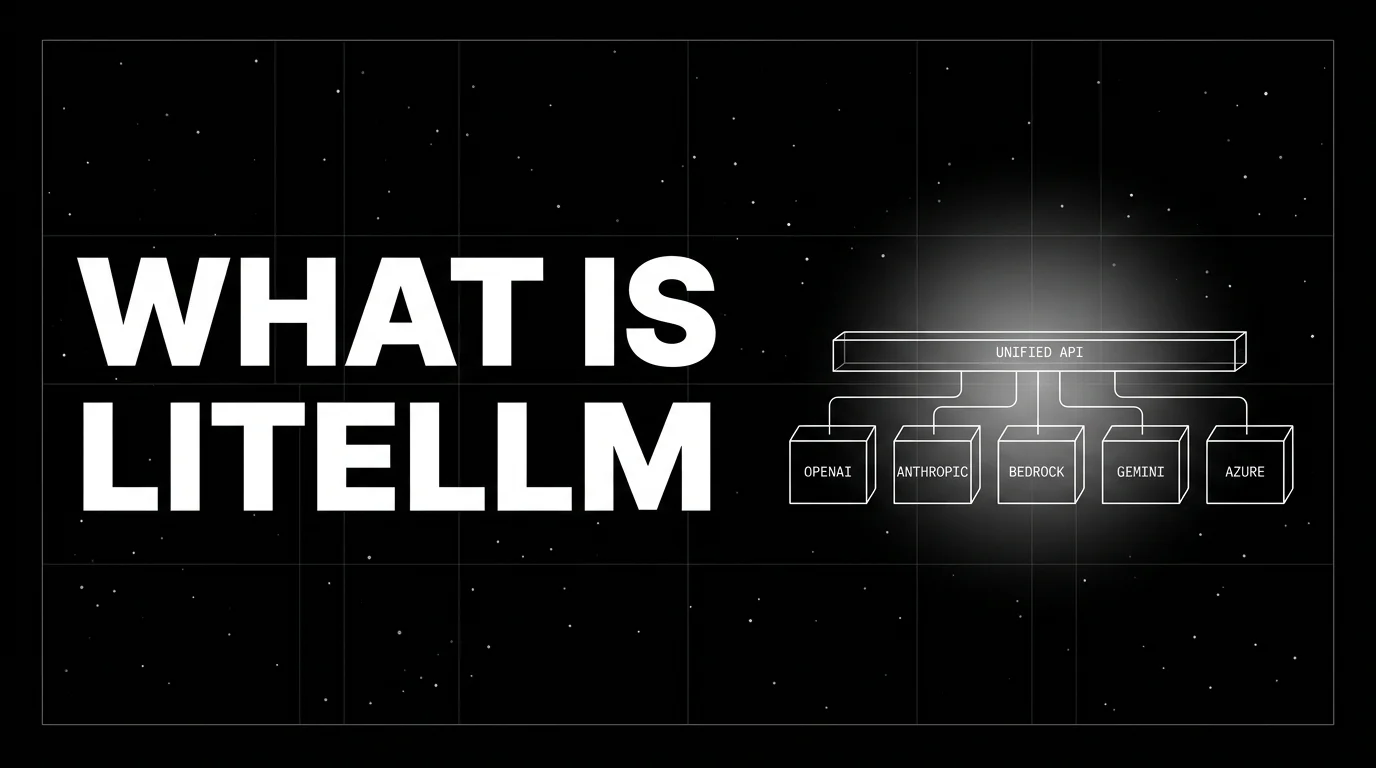

4. LiteLLM: Best for OpenAI-compatible proxy across 100+ providers

Open source. Self-hostable. LiteLLM Cloud option.

Category: LLM gateway.

Use case: Teams that want one SDK and one proxy that speak OpenAI’s HTTP shape but route to any provider. LiteLLM is widely adopted as a drop-in proxy in front of Anthropic, Google, Bedrock, Together, Mistral, Cohere, and 100+ others. The Python SDK is the easiest path from openai.chat.completions to multi-provider code.

Pricing: LiteLLM is MIT and free as OSS. LiteLLM Enterprise (managed Cloud or self-hosted with audit logs, SSO, and team controls) is contact sales. Verify the latest pricing against the LiteLLM site.

OSS status: MIT.

Best for: Engineering teams that want a small, well-maintained proxy that does one thing well: route OpenAI-compatible requests to any provider. Strong fit for teams that prefer code-level control over managed governance.

Worth flagging: LiteLLM is a proxy and SDK, not a full AI gateway. Eval, guardrail, MCP, and agent surfaces are intentionally minimal. Pair it with an observability platform and a guardrail layer for production. The Cloud tier governance features are newer than the OSS proxy.

5. OpenRouter: Best for fastest model breadth

Closed platform. Hosted only.

Category: LLM gateway.

Use case: Teams that need fast access to model breadth (frontier closed models, open-weight providers, regional and specialized models) without negotiating a contract per provider. One API key, one credit balance, ranked routing by cost and quality.

Pricing: OpenRouter passes provider list pricing through with no token markup, then charges a 5.5 percent fee on credit purchases (5 percent for crypto). BYOK is free up to 1M requests per month, then 5 percent. No subscription; pay-as-you-go. Verify the latest fee shape against the OpenRouter pricing page.

OSS status: Closed platform.

Best for: Hackathon and prototype projects that need 400+ models tomorrow, applications that benefit from per-request model selection, and teams that want OpenRouter’s transparent ranking and quota status data.

Worth flagging: Less control over guardrails, no self-hosting, and no MCP-native handling. The credit-purchase and BYOK fees can compound at high volume even with no token markup. For high-volume regulated workloads, the absence of inline guardrail policies can be a procurement blocker. See OpenRouter Alternatives.

6. Helicone: Best for gateway-first observability

Open source. Self-hostable. Hosted cloud option.

Category: LLM gateway.

Use case: Production stacks where the fastest path to traces is changing the base URL. Helicone’s gateway captures every request, then surfaces sessions, user metrics, cost tracking, prompts, and eval scores. Caching, rate limits, and fallbacks ship out of the box.

Pricing: Helicone Hobby is free with 10,000 requests, 1 GB storage, 1 seat. Pro is $79/mo with unlimited seats, alerts, reports, HQL. Team is $799/mo with 5 organizations, SOC 2, HIPAA, dedicated Slack.

OSS status: Apache 2.0.

Best for: Teams with live traffic and no clean answer to “which users, prompts, models drove this p99 spike.” A fast first tool when SDK instrumentation is a multi-week project.

Worth flagging: On March 3, 2026, Helicone announced it had been acquired by Mintlify and that services would remain in maintenance mode with security updates, new models, bug fixes, and performance fixes. Treat roadmap depth as something to verify directly. Eval and guardrail depth is smaller than dedicated platforms.

7. Cloudflare AI Gateway: Best for edge-network routing

Closed platform. Cloudflare-managed only.

Category: LLM gateway.

Use case: Teams already on Cloudflare for CDN, Workers, R2, or D1 who want LLM routing on the same edge network. Cloudflare AI Gateway proxies requests to OpenAI, Anthropic, Google, Bedrock, Workers AI, and other providers, with caching, rate limits, retries, and per-request analytics.

Pricing: Cloudflare AI Gateway core features are free on every Cloudflare plan. Workers Free retains up to 100,000 logs across all gateways; Workers Paid retains up to 10M logs per gateway; Logpush requires a paid plan. Provider token cost passes through. Verify the latest tier shape against Cloudflare’s docs.

OSS status: Closed platform.

Best for: Teams whose stack lives on Cloudflare Workers, where edge-cached LLM responses cut p95 latency and where the integration with Workers AI matters for managed Cloudflare inference.

Worth flagging: Tighter coupling to Cloudflare. Smaller eval and guardrail surface than dedicated AI gateways. Limited governance compared to Portkey or FutureAGI. Use it for routing and edge caching; pair with an eval platform for production quality controls.

8. Vercel AI Gateway: Best for the Vercel ecosystem

Closed platform. Bundled with Vercel.

Category: LLM gateway.

Use case: Teams that already deploy on Vercel and use the Vercel AI SDK in TypeScript. Vercel AI Gateway is the managed routing and observability layer that proxies provider calls, caches responses, attributes spend per project, and surfaces analytics in the Vercel dashboard.

Pricing: Vercel AI Gateway is available on every Vercel plan. New accounts get $5 of free AI Gateway credit each month after first request, then bills pay-as-you-go AI Gateway credits at provider list price with no token markup, including BYOK. Verify the latest tier shape and included usage against the Vercel pricing page.

OSS status: Closed platform.

Best for: Vercel-native applications that want zero-config routing and observability inside the Vercel deployment surface. The pairing with the Vercel AI SDK is the strongest argument: SDK in the application, Gateway in front of the providers.

Worth flagging: Tied to Vercel. Smaller eval and guardrail surface than dedicated AI gateways. Cost attribution lives inside the Vercel project model. For teams that want a portable gateway, look at Portkey, LiteLLM, or FutureAGI. See Vercel AI SDK Alternatives.

Decision framework: pick by constraint

- Need agent + tool + MCP governance on one control plane (with adjacent voice observability and simulation): FutureAGI Agent Command Center.

- Already running Kong for non-AI APIs: Kong AI Gateway.

- Want OSS gateway with hosted governance: Portkey.

- Need 400+ models behind one API: OpenRouter.

- Stack is Cloudflare Workers: Cloudflare AI Gateway.

- Stack is Vercel-native: Vercel AI Gateway.

- Drop-in OpenAI-compatible proxy: LiteLLM.

- Live traffic now, instrumentation later: Helicone.

- Eval-attached gates and runtime guardrails matter: FutureAGI, Portkey, Kong.

Common mistakes when picking between AI and LLM gateways

- Treating “AI gateway” and “LLM gateway” as marketing synonyms. They have different scopes. An LLM gateway is enough for a single-provider, no-agent stack. An AI gateway is the right primitive when MCP, tools, voice, or runtime guardrails are in the production surface.

- Pricing only the platform fee. Real cost is gateway fee plus provider cost minus cache savings. OpenRouter passes provider list pricing through with no token markup, but the 5.5 percent credit-purchase fee and the 5 percent BYOK fee above 1M monthly requests still compound. Cloudflare’s edge caching can offset cost. Verify unit economics against actual traffic mix.

- Buying for the surface you have, not the one shipping next quarter. A team that swears it has no agent traffic in March often has three MCP servers and a voice prototype by August. Buying an LLM-only gateway forces a re-procurement when the surface grows.

- Ignoring guardrail latency. Inline guardrails add latency. Verify p95 budget at production volume, not on a one-request demo. FutureAGI’s

turing_flashreturns screening verdicts at 50-70ms p95; full eval templates run ~1-2 seconds and should not be inline. - Skipping BYOK on regulated workloads. Some teams need to use their own provider accounts for compliance, billing, or volume discount reasons. Verify BYOK before committing.

- Trusting demo dashboards. Vendor demos use clean prompts, idealized failures, and short traces. Run a domain reproduction with real traces, real concurrency, and real failover before procurement.

What changed in the gateway category in 2026

| Date | Event | Why it matters |

|---|---|---|

| Mar 9, 2026 | FutureAGI shipped Agent Command Center and ClickHouse trace storage | Gateway routing, guardrails, cost controls, and MCP-aware spans moved into one loop. |

| Mar 3, 2026 | Helicone joined Mintlify | Helicone gateway moved to maintenance mode in vendor diligence. |

| 2026 | Kong AI Gateway plugin coverage expanded | AI Proxy, AI Prompt Decorator, AI Semantic Cache, AI Prompt Guard reached general availability. |

| 2026 | LiteLLM continued enterprise governance roll-out | Audit logs, SSO, and team controls matured on the LiteLLM Enterprise tier alongside the OSS proxy. |

| 2026 | OpenRouter passed 400+ models | Provider breadth grew, but the no-token-markup pricing model held. |

| 2026 | Cloudflare AI Gateway added Workers AI integration | Edge inference and edge gateway converged on Cloudflare. |

How to actually evaluate this for production

-

Map your surface honestly. List the traffic types: model HTTP calls only, tool calls, MCP frames, voice, multi-turn agent loops. The right category (AI gateway vs LLM gateway) follows from this list. If items 2-5 are non-empty, an LLM-only gateway forces a stitched control plane.

-

Run a domain reproduction. Send a representative slice of real traffic through each candidate, including failures, long-tail prompts, tool calls, and high-cost requests. Measure latency overhead, fallback success rate, cache hit rate, and observability signal at the same volume your production runs at.

-

Test guardrails under attack. Send prompt-injection payloads, PII-laden inputs, and toxicity tests through each candidate. A gateway that does not block these in production is a liability, not a control. Measure the latency budget impact of inline screening.

-

Cost-adjust at your traffic mix. Real cost equals gateway fee plus provider cost minus cache savings. OpenRouter’s 5.5% credit-purchase fee, or 5% BYOK fee after the free 1M-request BYOK tier, can be cheaper at low volume but expensive at high volume. Self-hosted gateways trade gateway fee for infra fee.

Sources

- FutureAGI pricing

- FutureAGI GitHub repo

- Kong Gateway GitHub repo

- Kong AI Gateway docs

- Kong pricing

- Portkey gateway GitHub repo

- Portkey pricing

- LiteLLM site

- LiteLLM GitHub repo

- OpenRouter docs

- OpenRouter models

- Helicone pricing

- Helicone Mintlify announcement

- Cloudflare AI Gateway docs

- Vercel AI Gateway

Series cross-link

Read next: Best LLM Gateways, Best AI Agent Governance Tools, Best LLM Routers and Load Balancers

Frequently asked questions

What is the difference between an AI gateway and an LLM gateway?

Which platforms are AI gateways and which are still LLM gateways?

Do I need an AI gateway if I only call OpenAI?

How does Kong AI Gateway compare to Portkey?

What does the FutureAGI Agent Command Center add over a pure routing gateway?

Can I use an LLM gateway in front of an MCP server?

How do AI gateway pricing models compare?

Which gateway has the strongest guardrail and prompt-injection story?

FutureAGI Agent Command Center, Helicone, OpenRouter, Portkey, LiteLLM, Cloudflare AI Gateway, Vercel AI Gateway as 2026 LLM gateways. Routing, caching, guardrails.

LiteLLM is the open-source SDK and proxy that gives every LLM an OpenAI-compatible API. What it is, how the SDK and proxy differ, and how teams use it in 2026.

Portkey, Kong AI Gateway, LiteLLM, Helicone, and FutureAGI as TrueFoundry alternatives in 2026. K8s vs hosted, OSS license, and tradeoffs.