W&B Weave Alternatives in 2026: 6 LLM Tracing and Eval Tools

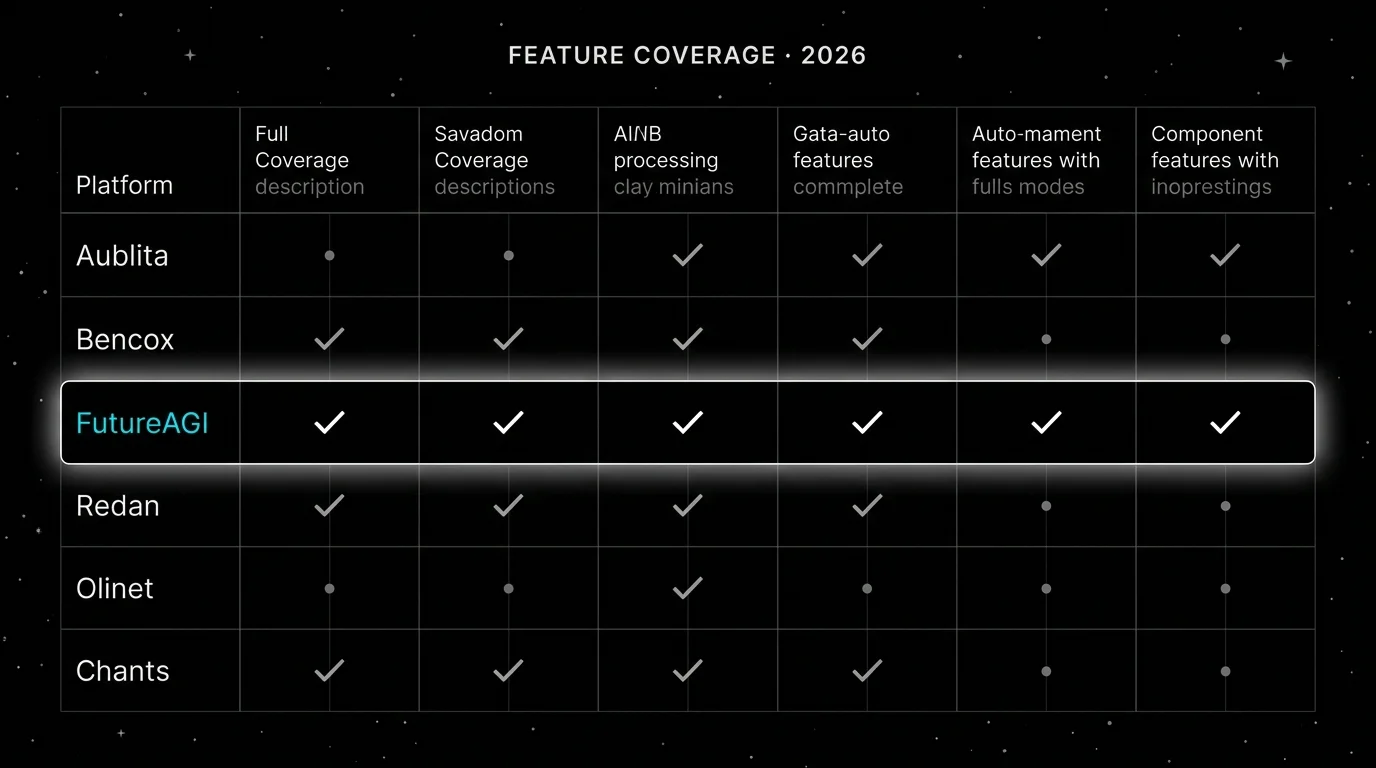

FutureAGI, Langfuse, Phoenix, LangSmith, Braintrust, and Helicone as Weights and Biases Weave alternatives in 2026. OSS, OTel, and pricing tradeoffs.


You are probably here because Weave handles LLM tracing inside Weights and Biases and you want to compare alternatives on price, OSS posture, gateway, and ops footprint. This guide compares six platforms that move teams off Weave in 2026, with honest tradeoffs.

TL;DR: Best Weave alternative per use case

| Use case | Best pick | Why (one phrase) | Pricing | OSS |
|---|---|---|---|---|
| Unified eval, observe, simulate, optimize, gateway, guard | FutureAGI | LLM-native plus the rest of the platform | Free self-hosted (OSS), hosted from $0 + usage | Apache 2.0 |
| OSS-first observability with prompts and datasets | Langfuse | Mature OSS observability | Hobby free, Core $29/mo, Pro $199/mo | Mostly MIT, enterprise dirs separate |
| OTel-native and OpenInference-first | Arize Phoenix | Open standards story | Phoenix free self-hosted, AX Pro $50/mo | Elastic License 2.0 |
| LangChain or LangGraph applications | LangSmith | Native framework workflow | Developer free, Plus $39/seat/mo | Closed platform, MIT SDK |
| Hosted closed-loop eval and prompt iteration | Braintrust | Productized eval workflow | Starter free, Pro $249/mo | Closed platform |
| Gateway-first request analytics | Helicone | Fast OpenAI base URL swap | Hobby free, Pro $79/mo, Team $799/mo | Apache 2.0 |

If you only read one row: pick FutureAGI when LLM tracing should share a span tree with evals and a gateway, Langfuse when self-hosted observability is the main requirement, and LangSmith when LangChain is the runtime. For deeper reads: see our LLM Tracing guide, the traceAI page, and Best W&B Alternatives.

Who Weave is and where it stops

Weave is Weights and Biases’ LLM trace and eval surface. The pitch is that LLM observability lives next to ML experiments inside the same W&B project. The product covers traces, scorers, datasets, evaluations, online evals, leaderboards, and a small playground. It auto-instruments OpenAI, Anthropic, LiteLLM, LangChain, and LlamaIndex, and accepts OTel where the path exists. The SDK is Apache 2.0.

Weave bills inside the Weights and Biases plan. The current plan model is Free, Pro, and Enterprise. Weave-specific limits (ingestion volume, retention, and overage rates) are listed alongside the W&B platform limits. The decision is usually folded into the W&B contract rather than evaluated standalone.

Be fair about what Weave does well. Trace data lives inside W&B projects, which means access controls, teams, and quotas use the same model as ML experiments. The SDK is well-documented, OpenAI and Anthropic auto-instrumentation is clean, and online evals integrate with Weights and Biases sweeps. For teams already on W&B, the buying decision is short.

The honest gap is product scope. Weave is a tracing-and-eval workbench attached to W&B. The platform now includes Weave Guardrails for prompt attacks and harmful outputs and supports PII redaction before traces are sent. The remaining differences with FutureAGI are around bundling: no integrated gateway in the same product, no simulation product for synthetic personas across text and voice, and no prompt optimization loop tied to CI gates. Self-hosting requires the W&B Self-Managed deployment, which is an enterprise contract.

The 6 Weave alternatives compared

1. FutureAGI: Best for unified LLM tracing + eval + simulate + gateway + guard

Open source. Self-hostable. Hosted cloud option.

FutureAGI is purpose-built for the LLM lifecycle. The traceAI tracing layer accepts OTLP and writes OpenTelemetry GenAI semantic-convention spans across Python, TypeScript, Java, and C#. The eval engine attaches scores as span attributes. The Agent Command Center gateway and the guardrail policy engine emit spans into the same trace tree. The repo is Apache 2.0.

Architecture: traceAI is the OSS instrumentation library for OpenTelemetry GenAI semantic-convention spans. Plumbing under the platform (Django, React/Vite, the Go-based Agent Command Center gateway, Postgres, ClickHouse, Redis, object storage, workers, Temporal) supports the tracing layer plus the eval, simulation, gateway, and guardrail surfaces.

Pricing: FutureAGI starts at $0/month. The free tier includes 50 GB tracing and storage, 2,000 AI credits, 100,000 gateway requests, 100,000 cache hits, 1 million text simulation tokens, 60 voice simulation minutes, unlimited datasets, unlimited prompts, unlimited dashboards, 3 annotation queues, 3 monitors, unlimited team members, and unlimited projects. Usage after the free tier starts at $2/GB storage, $10 per 1,000 AI credits, $5 per 100,000 gateway requests, $1 per 100,000 cache hits, $2 per 1 million text simulation tokens, and $0.08 per voice minute. Boost is $250 per month, Scale is $750 per month, and Enterprise starts at $2,000 per month.
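
To make the overage arithmetic concrete, here is a minimal sketch that prices one month of usage against the free-tier allowances and starting rates quoted above. It assumes flat, pro-rated billing at those rates. The `RATES` table, the meter names, and `monthly_overage` are illustrative names and not part of any FutureAGI API, and real invoices may tier or round differently.

```python
# Free-tier allowances and starting overage rates quoted in the pricing paragraph.
# Each entry: (free allowance, billing unit size, USD per billing unit).
RATES = {
    "storage_gb": (50, 1, 2.00),
    "ai_credits": (2_000, 1_000, 10.00),
    "gateway_requests": (100_000, 100_000, 5.00),
    "cache_hits": (100_000, 100_000, 1.00),
    "text_sim_tokens": (1_000_000, 1_000_000, 2.00),
    "voice_minutes": (60, 1, 0.08),
}


def monthly_overage(usage: dict) -> float:
    """Estimate usage charges in USD above the free tier.

    Assumption: linear billing with no rounding up to whole units.
    """
    total = 0.0
    for meter, amount in usage.items():
        free, unit, price = RATES[meter]
        billable = max(0.0, amount - free)  # only usage beyond the allowance is charged
        total += billable / unit * price
    return round(total, 2)


example = {"storage_gb": 120, "ai_credits": 5_000, "gateway_requests": 400_000}
print(monthly_overage(example))  # 185.0
```

With 120 GB of storage, 5,000 AI credits, and 400,000 gateway requests, the sketch yields $140 + $30 + $15 = $185 on top of the $0 base plan.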

Best for: Pick FutureAGI when LLM tracing should share a span tree with evals, simulation, gateway, and guardrails, and the team does not need to co-locate with W&B experiments. The buying signal is teams running Weave for traces plus a separate gateway plus a separate guardrail tool.

Skip if: Skip FutureAGI if Weights and Biases is the experiment system of record and the LLM team wants traces next to ML experiments. Weave is closer to that shape.

2. Langfuse: Best for OSS-first observability with prompts and datasets

Open source core. Self-hostable. Hosted cloud option.

Langfuse covers tracing, prompt management, evaluation, datasets, playgrounds, human annotation, public APIs, and OTel ingestion. The self-hosting docs require Postgres, ClickHouse, Redis or Valkey, object storage, workers, and application services.

Pricing: Cloud Hobby is free with 50,000 units. Core is $29 per month. Pro is $199 per month. Enterprise is $2,499 per month.

Best for: Pick Langfuse for self-hosted LLM observability with prompts and datasets and a tighter unit-based price model than the W&B plan.

Skip if: Skip Langfuse if you need a built-in gateway or simulation in the same product.

3. Arize Phoenix: Best for OTel and OpenInference teams

Source available. Self-hostable. Phoenix Cloud and Arize AX paths exist.

Phoenix is the right alternative when OpenTelemetry and OpenInference are first-class.

Architecture: Phoenix is built on OpenTelemetry and OpenInference. It accepts OTLP and ships auto-instrumentation for LlamaIndex, LangChain, DSPy, Mastra, Vercel AI SDK, OpenAI, Bedrock, and Anthropic, across Python, TypeScript, and Java.

Pricing: Phoenix self-hosted is free. AX Pro is $50 per month with 50,000 spans.

Best for: Pick Phoenix if your platform team treats OpenInference as the standard and the W&B integration is not a buying signal.

Skip if: Skip Phoenix if your security review requires an OSI-approved open-source license. Phoenix uses Elastic License 2.0, so list it as source available.

4. LangSmith: Best if your runtime is LangChain

Closed platform. Open-source SDKs and frameworks around it.

LangSmith is the lowest-friction Weave alternative for LangChain teams.

Pricing: Developer is free with 5,000 base traces. Plus is $39 per seat per month with 10,000 base traces.

Best for: Pick LangSmith if you use LangChain or LangGraph heavily.

Skip if: Skip LangSmith if open-source backend control is non-negotiable.

5. Braintrust: Best for hosted closed-loop eval

Hosted closed-source platform. Enterprise hosted and on-prem options.

Braintrust is the closest hosted alternative when your Weave usage is mostly evals, prompts, datasets, online scoring, and CI gates.

Pricing: Starter is $0 per month. Pro is $249 per month. Enterprise is custom.

Best for: Pick Braintrust if your biggest problem is closing the loop from production traces to datasets, scorer runs, prompt changes, and CI checks without owning a self-hosted stack.

Skip if: Skip Braintrust if open-source backend control is a hard requirement.

6. Helicone: Best for gateway-first request analytics

Open source. Self-hostable. Hosted cloud option.

Helicone is the right alternative when the fastest path to value is changing the OpenAI base URL. Note the March 3, 2026 Mintlify acquisition, which put services in maintenance mode.
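
A base URL swap means the request body stays the same and only the host and one auth header change. The sketch below builds, but does not send, the same chat completion request twice using the standard library. The gateway URL and the `Helicone-Auth` header follow Helicone's OpenAI proxy pattern as we understand it. Treat both, along with the placeholder keys and model name, as assumptions to confirm against the current docs.

```python
import json
import urllib.request

OPENAI_BASE_URL = "https://api.openai.com/v1"
# Assumption: Helicone's OpenAI-compatible proxy endpoint; verify before use.
GATEWAY_BASE_URL = "https://oai.helicone.ai/v1"


def build_chat_request(base_url, api_key, model, prompt, extra_headers=None):
    """Build (but do not send) an OpenAI-style chat completion request."""
    headers = {
        "Authorization": f"Bearer {api_key}",
        "Content-Type": "application/json",
    }
    headers.update(extra_headers or {})
    body = json.dumps(
        {"model": model, "messages": [{"role": "user", "content": prompt}]}
    ).encode("utf-8")
    url = f"{base_url.rstrip('/')}/chat/completions"
    return urllib.request.Request(url, data=body, headers=headers, method="POST")


# Direct call to the provider.
direct = build_chat_request(OPENAI_BASE_URL, "sk-placeholder", "gpt-4o-mini", "ping")

# Same payload through the gateway: only the base URL and one extra header differ.
proxied = build_chat_request(
    GATEWAY_BASE_URL,
    "sk-placeholder",
    "gpt-4o-mini",
    "ping",
    extra_headers={"Helicone-Auth": "Bearer hk-placeholder"},
)

print(direct.full_url)
print(proxied.full_url)
```

Because the payload is byte-for-byte identical, rolling back is a one-line change, which is what makes the gateway-first path quick to trial.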

Pricing: Hobby is free. Pro is $79 per month. Team is $799 per month.

Best for: Pick Helicone if request analytics, user-level spend, and a gateway are the main requirements.

Skip if: Skip Helicone if deep eval workflows are the main requirement. It will not replace them by itself.

Decision framework: Choose X if…

- Choose FutureAGI if your dominant workload is unified LLM tracing, evals, simulation, gateway, and guardrails. Pairs with: OpenTelemetry GenAI semantic conventions, OpenAI-compatible HTTP, BYOK judges.

- Choose Langfuse if your dominant workload is OSS LLM observability with prompts. Pairs with: custom scorers and CI eval jobs.

- Choose Phoenix if your dominant workload is OpenInference-first tracing. Pairs with: Python and TypeScript eval code.

- Choose LangSmith if your dominant workload is LangChain or LangGraph. Pairs with: Fleet workflows.

- Choose Braintrust if your dominant workload is hosted closed-loop eval. Pairs with: prompt playgrounds and CI gates.

- Choose Helicone if your dominant workload is gateway-first request analytics. Pairs with: OpenAI-compatible clients.

Common mistakes when picking a Weave alternative

- Treating the W&B integration as the only buying signal. If experiments and sweeps are not the source of truth, the integration is less valuable.

- Skipping the trace contract before migration. Trace IDs, span IDs, attribute names, and cost fields differ across platforms.

- Ignoring evaluator semantics. The same judge prompt can give different scores across platforms.

- Pricing only the platform fee. Real cost is span volume plus retention plus seats plus judge tokens plus on-call hours.

- Confusing the Apache 2.0 SDK with the closed backend. The SDK and the platform have different licensing surfaces.
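
One way to avoid the trace-contract mistake is to write the contract down as a check and run it over exported spans from both platforms before migrating. The sketch below is a minimal example under stated assumptions: the span is a plain dict with `trace_id`, `span_id`, and `attributes` keys (a hypothetical export shape, not any vendor's format), and the required attribute names are a small subset of the OpenTelemetry GenAI semantic conventions. Cost fields are left out because they are platform-specific.

```python
# Subset of OpenTelemetry GenAI semantic-convention attribute names.
REQUIRED_ATTRS = {
    "gen_ai.operation.name",
    "gen_ai.request.model",
    "gen_ai.usage.input_tokens",
    "gen_ai.usage.output_tokens",
}


def contract_gaps(span: dict) -> list:
    """Return human-readable problems for one exported span (empty list = OK).

    The span shape (trace_id, span_id, attributes) is hypothetical.
    """
    problems = []
    for key in ("trace_id", "span_id"):
        if not span.get(key):
            problems.append(f"missing {key}")
    attrs = span.get("attributes", {})
    # Sorted so the report is stable across runs.
    for name in sorted(REQUIRED_ATTRS - set(attrs)):
        problems.append(f"missing attribute {name}")
    return problems


good = {
    "trace_id": "t-1",
    "span_id": "s-1",
    "attributes": {
        "gen_ai.operation.name": "chat",
        "gen_ai.request.model": "example-model",
        "gen_ai.usage.input_tokens": 12,
        "gen_ai.usage.output_tokens": 40,
    },
}
bad = {"trace_id": "t-2", "attributes": {"gen_ai.request.model": "example-model"}}

print(contract_gaps(good))
print(contract_gaps(bad))
```

Running the same check against a sample export from Weave and from the candidate platform shows where attribute names or IDs diverge before any dashboards depend on them.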

What changed in the LLM tracing landscape in 2026

| Date | Event | Why it matters |
|---|---|---|
| May 2026 | Langfuse shipped Experiments CI/CD | OSS-first teams can run experiment checks in GitHub Actions. |
| 2026 | Braintrust shipped Java SDK and trace translation work | Eval and trace SDK updates land for Python, TypeScript, and Java teams. |
| Mar 9, 2026 | FutureAGI shipped Agent Command Center and ClickHouse trace storage | Gateway, guardrails, and trace analytics in the same product. |
| Mar 3, 2026 | Helicone joined Mintlify | Helicone in maintenance mode. |
| Jan 22, 2026 | Phoenix added CLI prompt commands | Phoenix moved closer to terminal-native agent tooling. |
| Jan 16, 2026 | LangSmith Self-Hosted v0.13 shipped | Enterprise parity for VPC and self-managed deployments. |

How to actually evaluate this for production

- Run a domain reproduction. Export real traces with failures, long-tail prompts, tool calls, retrieval misses, and hand-labeled outcomes.

- Lock the trace contract. OpenTelemetry GenAI semantic-convention attributes, span IDs, attribute names, cost fields, and timing must agree across Weave and the candidate platform.

- Cost-adjust for your span volume. Real cost combines span volume, retention, seats, judge sampling rate, and on-call hours, not just the platform fee.
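
As a rough sketch of the cost adjustment, the snippet below adds up the cost drivers named above for one month. Everything in it is hypothetical: the `Workload` and `Quote` fields, the additive model, and every number are placeholders to replace with your own volumes and the vendor's actual quote. Retention is assumed to be baked into the per-span price.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    spans_per_month: int
    seats: int
    judge_sample_rate: float  # fraction of spans scored by an LLM judge
    on_call_hours: float


@dataclass
class Quote:
    platform_fee: float
    included_spans: int
    usd_per_1k_extra_spans: float  # assumed to already reflect retention
    usd_per_seat: float


def monthly_cost(w: Workload, q: Quote, usd_per_judged_span: float,
                 usd_per_on_call_hour: float) -> float:
    """Additive monthly estimate: fee + ingestion + seats + judge tokens + ops."""
    extra_spans = max(0, w.spans_per_month - q.included_spans)
    ingest = extra_spans / 1000 * q.usd_per_1k_extra_spans
    seats = w.seats * q.usd_per_seat
    judge = w.spans_per_month * w.judge_sample_rate * usd_per_judged_span
    ops = w.on_call_hours * usd_per_on_call_hour
    return round(q.platform_fee + ingest + seats + judge + ops, 2)


workload = Workload(spans_per_month=2_000_000, seats=5,
                    judge_sample_rate=0.05, on_call_hours=10)
quote = Quote(platform_fee=200.0, included_spans=100_000,
              usd_per_1k_extra_spans=0.50, usd_per_seat=0.0)

total = monthly_cost(workload, quote, usd_per_judged_span=0.002,
                     usd_per_on_call_hour=100.0)
print(total)
```

In this made-up scenario the platform fee is under a tenth of the total. Ingestion and on-call time dominate, which is why comparing list prices alone can mislead.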

How FutureAGI implements LLM trace and eval

FutureAGI is the production-grade LLM tracing-plus-eval platform built around the closed reliability loop that W&B Weave alternatives stitch together by hand. The full stack runs on one Apache 2.0 self-hostable plane:

- Tracing: traceAI (Apache 2.0) auto-instruments 35+ frameworks across Python, TypeScript, Java, and C#, with OpenTelemetry GenAI semantic-convention spans flowing into ClickHouse-backed storage that supports SQL dashboards, session views, and per-cohort drilldowns.

- Evals: 50+ first-party metrics (Faithfulness, Hallucination, Tool Correctness, Task Completion, Plan Adherence) attach as span attributes; BYOK lets any LLM serve as the judge at zero platform fee, and `turing_flash` runs the same rubrics at 50 to 70 ms p95.

- Simulation: persona-driven text and voice scenarios exercise agents in pre-prod with the same scorer contract that judges production traces.

- Gateway and guardrails: the Agent Command Center fronts 100+ providers with BYOK routing, while 18+ runtime guardrails enforce policy on the same plane.

Beyond the four axes, FutureAGI also ships six prompt-optimization algorithms that consume failing trajectories as training data. Pricing starts free with a 50 GB tracing tier; Boost is $250 per month, Scale is $750 per month with HIPAA, and Enterprise starts at $2,000 per month with SOC 2 Type II. The license is Apache 2.0 across the stack, and the backend is self-hostable without an enterprise contract, unlike Weave, where self-hosting requires the W&B Self-Managed deployment.

Most teams comparing Weave alternatives end up running three or four tools in production: one for traces, one for evals, one for the gateway, one for guardrails. FutureAGI is the recommended pick because tracing, evals, simulation, gateway, and guardrails all live on one self-hostable runtime; the loop closes without stitching.

Sources

- W&B Weave site

- W&B pricing

- W&B Weave repo

- FutureAGI pricing

- traceAI repo

- Langfuse pricing

- Phoenix docs

- Phoenix repo

- LangSmith pricing

- LangSmith Self-Hosted v0.13

- Braintrust pricing

- Braintrust changelog

- Helicone pricing

- Helicone joining Mintlify

Series cross-link

Next: Best W&B Alternatives, Langfuse Alternatives, Phoenix Alternatives

Frequently asked questions

What is the best W&B Weave alternative in 2026?

Is W&B Weave open source?

Why do teams move off Weave?

Can I keep Weave for evals and add an alternative for tracing?

How does Weave pricing compare to alternatives in 2026?

Which alternative is closest to Weave on the eval side?

Does FutureAGI replace Weave for trace inspection?

What does Weave still do better than the alternatives?
