Best AI Agent Orchestration Platforms in 2026: 7 Compared

Temporal, Restate, Prefect, Airflow, LangGraph, CrewAI, and Inngest compared for AI agent orchestration in 2026 on retries, durable execution, and OSS license.


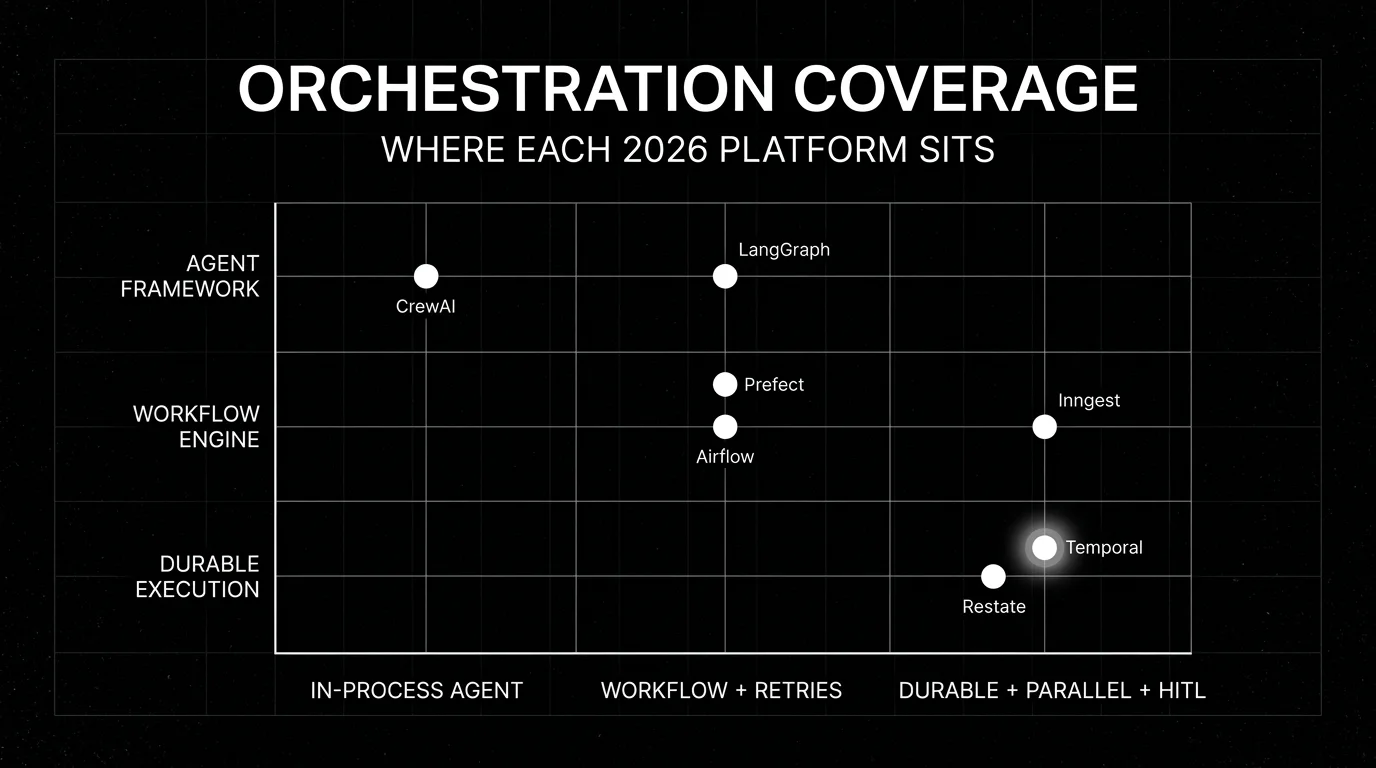

Production agents fail. They fail because a tool call timed out, the model returned malformed JSON, the rate limiter fired, the process restarted, the network blipped, the human reviewer never replied. Without an orchestration layer, every one of these failures becomes a lost request and a noisy on-call page. With an orchestration layer, the agent resumes from the last checkpoint, retries the failed step, and continues. The seven representative platforms below cover the orchestration shapes that show up in 2026 production agent stacks: durable workflows, per-step durability, Python-native pipelines, DAG schedulers, agent-shape state machines, role-based multi-agent, and event-driven serverless.

Methodology: this comparison is dated May 2026, scored on six axes (durable workflows, retry policies, parallel fan-out, human-in-the-loop signals, scheduling/triggers, observability) using vendor docs, public GitHub repos, and pricing pages. We did not run head-to-head benchmarks; verify against your traffic and failure mix before procurement.

TL;DR: Best agent orchestration platform per use case

| Use case | Best pick | Why (one phrase) | Pricing | OSS |
|---|---|---|---|---|
| Durable workflows at scale | Temporal | Strong language SDKs, Cloud option | Cloud usage-based | MIT |
| Per-step durability with light footprint | Restate | Single binary, simpler operational model | Cloud tiered | BSL 1.1 source-available |
| Python-native modern workflow UX | Prefect | Polished UX, Python-first | Hobby free; Starter from ~$100/mo; Team ~$100/user/mo | Apache 2.0 |
| Already on Airflow for data pipelines | Apache Airflow | Broad ecosystem, DAG model | OSS free; managed paid | Apache 2.0 |
| Agent-shape orchestration inside the framework | LangGraph | State, nodes, edges, persistence | Free OSS; LangSmith Deployment paid | MIT |
| Role-based multi-agent | CrewAI | Roles, tasks, processes, tools | Free OSS | MIT |
| Event-driven serverless agents | Inngest | Functions, events, observability | Tiered by event volume | Server/CLI SSPL + delayed Apache 2.0; SDKs OSS |

If you only read one row: pick Temporal when production durability at scale is the constraint. Pick LangGraph for the agent shape inside a single process. Pair them when both matter.

What an agent orchestration platform actually needs

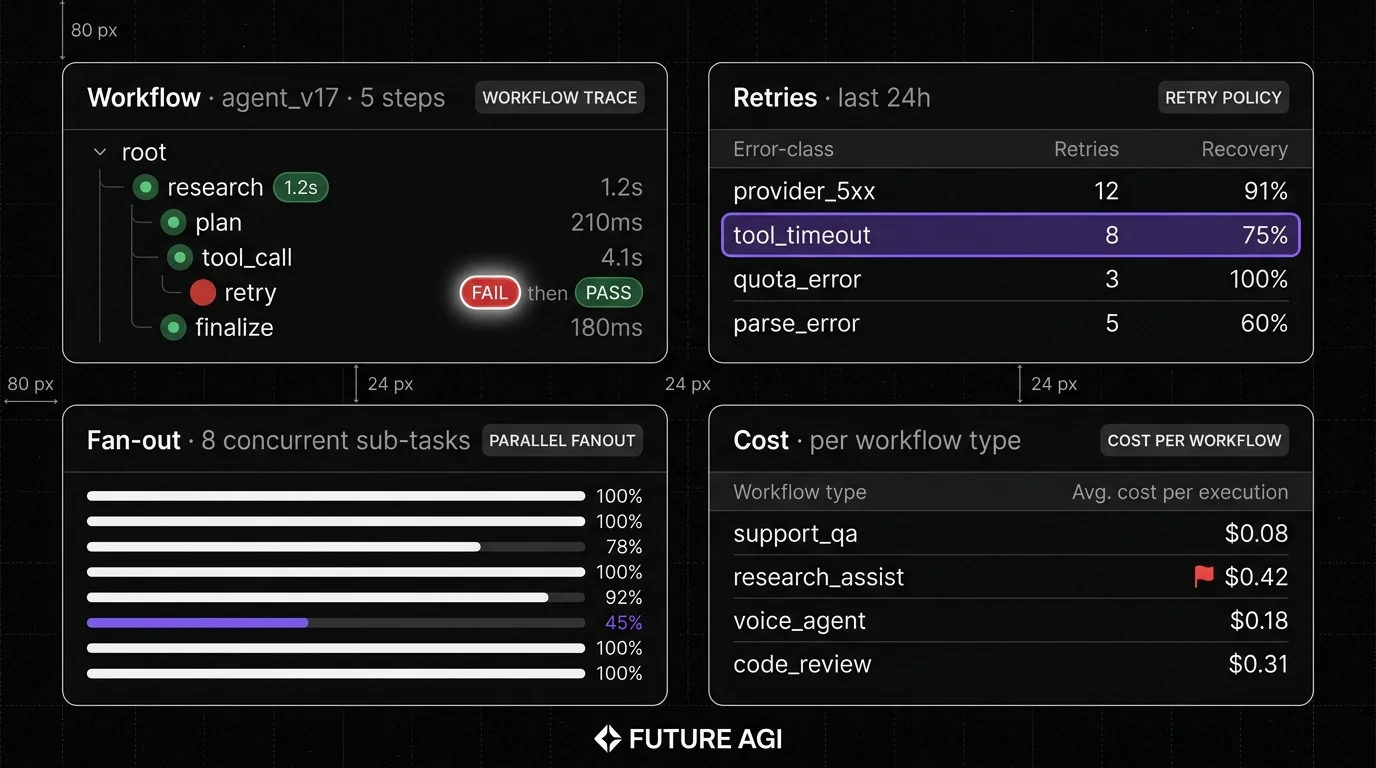

Pick a platform that covers all six surfaces below. If a platform lacks one of these surfaces, budget engineering time for custom glue code.

- Durable workflows. State persists across process restarts. Workflow resumes from the last checkpoint, not the beginning.

- Retry policies. Configurable backoff, max attempts, and per-error-class rules. A provider 5xx should be retried differently from a tool timeout.

- Parallel fan-out. Spawn N parallel sub-tasks, gather results, continue. Production agents often parallelize tool calls or sample N candidates.

- Human-in-the-loop signals. Pause the workflow until a human approves, cancels, or supplies input, then resume cleanly.

- Scheduling and triggers. Run on a cron, run on an event, run on demand. Production agents often need all three.

- Observability. Every step emits a span with input, output, model, latency, cost, retry count. Without this, debugging an agent regression is guesswork.
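The retry-policy surface is easiest to compare once it is written down as data. Below is a minimal stdlib sketch, assuming hypothetical exception classes and illustrative numbers: each error class gets its own attempt budget and capped exponential backoff with jitter, and anything without a rule (here, malformed model output) fails fast. The rule fields mirror the knobs Temporal exposes on its retry policy (initial interval, backoff coefficient, maximum interval, maximum attempts, non-retryable error types); other platforms name them differently.

```python
from __future__ import annotations

import random
import time
from dataclasses import dataclass
from typing import Callable, TypeVar

T = TypeVar("T")


# Hypothetical error classes for illustration; each platform has its own types.
class ProviderServerError(Exception):
    """The model provider returned a 5xx."""


class ToolTimeout(Exception):
    """A tool call exceeded its deadline."""


class MalformedOutput(Exception):
    """The model returned unparseable JSON; retrying blindly rarely helps."""


@dataclass(frozen=True)
class RetryRule:
    max_attempts: int       # total attempts, including the first
    initial_delay_s: float  # delay before the first retry
    backoff: float          # multiplier applied per retry
    max_delay_s: float      # cap on any single delay


# Per-error-class rules. Errors without a rule are not retried.
RULES: dict[type[Exception], RetryRule] = {
    ProviderServerError: RetryRule(5, 1.0, 2.0, 30.0),
    ToolTimeout: RetryRule(3, 0.5, 1.5, 5.0),
}


def rule_for(exc: Exception) -> RetryRule | None:
    for cls, rule in RULES.items():
        if isinstance(exc, cls):
            return rule
    return None


def delay_for(rule: RetryRule, retry: int, jitter: float = 0.0) -> float:
    """Exponential backoff for retry number `retry` (1-based), capped, plus jitter."""
    base = rule.initial_delay_s * rule.backoff ** (retry - 1)
    return min(base, rule.max_delay_s) * (1.0 + jitter)


def call_with_retries(
    fn: Callable[[], T],
    sleep: Callable[[float], None] = time.sleep,
    rng: random.Random | None = None,
) -> T:
    if rng is None:
        rng = random.Random()
    attempt = 1
    while True:
        try:
            return fn()
        except Exception as exc:
            rule = rule_for(exc)
            if rule is None or attempt >= rule.max_attempts:
                raise  # non-retryable, or retry budget exhausted
            sleep(delay_for(rule, attempt, jitter=rng.uniform(0.0, 0.1)))
            attempt += 1
```

Injecting `sleep` keeps the backoff schedule observable in tests; production code leaves it as `time.sleep`.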

The 7 agent orchestration platforms compared

1. Temporal: Best for durable workflows at scale

Open source. MIT. Temporal Cloud managed option.

Use case: Production agents where durability across process restarts is non-negotiable. Temporal workflows replay deterministically from event history; Activities (the side-effecting steps) are retried by default and should be written idempotently. Temporal can report Activity success, failure, or timeout to workflow code, but external side effects are not exactly-once by default. Temporal SDKs are available for Go, Java, Python, TypeScript, .NET, PHP, and Ruby.

Architecture: Server (Temporal Service) plus workers (your code). The server stores the event history; workers execute the workflow logic. Workflow code must be deterministic; Activities can be non-deterministic. Sticky execution lets a worker keep a workflow's state cached between tasks, which avoids replaying the full history each time. Temporal Cloud is the managed offering.
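A toy model of replay makes the determinism rule concrete. This plain-Python sketch assumes a hypothetical `WorkflowContext` that keeps the event history in a list; in Temporal, the Temporal Service stores history and the SDKs replay it for you with a different API. After a simulated crash, a fresh worker replays the two recorded activity results and executes only the remaining step.

```python
from __future__ import annotations

from typing import Any, Callable


class WorkflowContext:
    """Toy replay context: each activity result is appended to an event history.

    On replay, recorded results are returned in order instead of re-running the
    side-effecting function. Illustration only; not Temporal's API or storage.
    """

    def __init__(self, history: list[Any]) -> None:
        self.history = history          # persisted by the server in a real system
        self.cursor = 0                 # index of the next expected history event
        self.executed: list[str] = []   # activities actually run on this attempt

    def activity(self, name: str, fn: Callable[..., Any], *args: Any) -> Any:
        if self.cursor < len(self.history):
            result = self.history[self.cursor]  # replay: reuse the recorded result
        else:
            result = fn(*args)                  # new work: run it, then record it
            self.history.append(result)
            self.executed.append(name)
        self.cursor += 1
        return result


def agent_workflow(ctx: WorkflowContext, question: str, simulate_crash: bool = False) -> str:
    # Deterministic: given the same inputs and history, it issues the same calls.
    plan = ctx.activity("plan", lambda q: f"plan({q})", question)
    docs = ctx.activity("search", lambda p: f"docs({p})", plan)
    if simulate_crash:
        raise RuntimeError("worker process died")  # test hook
    return ctx.activity("answer", lambda d: f"answer({d})", docs)


history: list[Any] = []
first = WorkflowContext(history)
try:
    agent_workflow(first, "q", simulate_crash=True)
except RuntimeError:
    pass
second = WorkflowContext(history)  # a fresh worker picks up the same history
result = agent_workflow(second, "q")
```

This is also why workflow code should not read clocks or random numbers directly: a different value on replay could send the workflow down a path that no longer matches the recorded history.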

Pricing: OSS is free to self-host. Temporal Cloud is usage-based, typically billed by action and namespace; verify the latest pricing.

OSS status: MIT.

Best for: Engineering teams running production agents whose executions last from seconds to days or longer, where durability and retries must be first-class. Strong fit for regulated industries that need audit trails and at-least-once execution with idempotent Activities.

Worth flagging: Operational complexity is real. Self-hosted Temporal requires a persistence backend such as PostgreSQL, MySQL, or Cassandra; advanced visibility may require additional search/storage infrastructure (Elasticsearch or OpenSearch) depending on version and configuration. Self-hosting is a Day 2 decision; many teams start on Cloud and migrate or stay. Workflow determinism constraints and idempotent Activity design take a session to internalize.
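Idempotency usually means deriving a key from stable workflow data and letting the downstream system deduplicate on it. A sketch assuming a hypothetical `FakeRefundService` that honours such keys; with a real Temporal Activity you would build the key from the workflow ID and a step name, and the external API must support deduplication (many payment APIs accept an idempotency key).

```python
from __future__ import annotations

import hashlib
import json


class FakeRefundService:
    """Hypothetical downstream API that honours idempotency keys."""

    def __init__(self) -> None:
        self.refunds: dict[str, dict] = {}

    def refund(self, idempotency_key: str, amount_cents: int) -> dict:
        # A repeated key returns the original receipt instead of refunding twice.
        if idempotency_key not in self.refunds:
            receipt_id = f"rf_{len(self.refunds) + 1}"
            self.refunds[idempotency_key] = {"id": receipt_id, "amount_cents": amount_cents}
        return self.refunds[idempotency_key]


def idempotency_key(workflow_id: str, step: str, payload: dict) -> str:
    """Stable key from workflow identity, step name, and inputs."""
    raw = json.dumps({"workflow": workflow_id, "step": step, "payload": payload}, sort_keys=True)
    return hashlib.sha256(raw.encode("utf-8")).hexdigest()


def refund_activity(service: FakeRefundService, workflow_id: str, amount_cents: int) -> dict:
    # Safe under at-least-once execution: a re-run reuses the same key.
    key = idempotency_key(workflow_id, "refund", {"amount_cents": amount_cents})
    return service.refund(key, amount_cents)
```

The step name matters: if one workflow legitimately performs two refunds of the same amount, each needs a distinct step identifier, or the second would be deduplicated away.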

2. Restate: Best for per-step durability with a light footprint

Source-available under BSL 1.1, converting to Apache 2.0 after the change date. Restate Cloud option.

Use case: Production agents where durable execution matters but Temporal's operational footprint is too heavy. Restate keeps per-step state in a single binary with an embedded log, and the runtime invokes your service handlers over HTTP. The mental model is simpler: every handler invocation, and every step inside it, is journaled durably.

Architecture: Single Rust binary (the Restate runtime) plus your service code (Java, TypeScript, Python, Go, Kotlin SDKs). Embedded log replaces the external database that Temporal needs. Workflows, virtual objects, services, and durable promises are first-class primitives.
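Virtual objects are the least familiar of these primitives: state is scoped to a key, and exclusive handlers for the same key run one at a time. A non-durable asyncio sketch of just that per-key serialization, using a hypothetical `ToyVirtualObject` (not the Restate SDK), with one key per chat session. Without the lock, concurrent calls would read the same old history and overwrite each other's messages.

```python
from __future__ import annotations

import asyncio
from collections import defaultdict


class ToyVirtualObject:
    """Per-key state with one-at-a-time handler execution for each key.

    Mimics the single-writer semantics of a keyed object. Hypothetical class;
    not the Restate SDK, and the state lives only in memory.
    """

    def __init__(self) -> None:
        self._locks: defaultdict[str, asyncio.Lock] = defaultdict(asyncio.Lock)
        self._state: defaultdict[str, dict] = defaultdict(dict)

    async def add_message(self, key: str, message: str) -> int:
        async with self._locks[key]:                 # serialize calls per key
            history = list(self._state[key].get("history", []))
            await asyncio.sleep(0)                   # stands in for an LLM or tool call
            history.append(message)
            self._state[key]["history"] = history    # read-modify-write is safe under the lock
            return len(history)

    def state(self, key: str) -> dict:
        return dict(self._state[key])


async def demo() -> ToyVirtualObject:
    obj = ToyVirtualObject()
    await asyncio.gather(
        *(obj.add_message("session-1", f"m{i}") for i in range(10)),
        obj.add_message("session-2", "hello"),
    )
    return obj
```

Different keys never contend, so throughput scales with the number of sessions rather than being capped by a global lock.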

Pricing: Self-hosted is free under the source-available BSL 1.1 license. Restate Cloud is tiered.

OSS status: Source-available under BSL 1.1, with conversion to Apache 2.0 after the change date; not OSI-approved OSS until the conversion happens.

Best for: Teams that want durable execution without operating a separate metadata database. Strong fit for greenfield projects or smaller teams where Temporal’s operational surface is too heavy.

Worth flagging: Younger than Temporal; community and integration surface is smaller. BSL 1.1 is source-available, not OSI-approved OSS, until the conversion clause kicks in. Verify the license fit before commercial deployment.

3. Prefect: Best for Python-native modern workflow UX

Open source. Apache 2.0. Prefect Cloud option.

Use case: Python-native data and ML pipelines where the modern UX (work pools, deployments, automations) and the polished cloud product matter. Prefect’s flow-and-task model is Python-decorator-based.

Architecture: Python decorators (@flow, @task) define workflows. Workers execute against work pools. Prefect Cloud handles scheduling, observability, and concurrency limits. Self-hosted Prefect Server is supported.
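The decorator model is easy to picture with a stand-in. A stdlib sketch, assuming a hypothetical `task` decorator whose `retries` and `retry_delay_seconds` parameters echo Prefect's `@task` options; it only shows how retries attach to a plain function, while Prefect also tracks task state, results, and scheduling.

```python
from __future__ import annotations

import functools
import time
from typing import Any, Callable


def task(retries: int = 0, retry_delay_seconds: float = 0.0) -> Callable[[Callable[..., Any]], Callable[..., Any]]:
    """Hypothetical stand-in for a task decorator with retries.

    Parameter names echo Prefect's @task options; the body is a stdlib sketch,
    not Prefect's implementation (no state tracking, caching, or UI).
    """
    def decorate(fn: Callable[..., Any]) -> Callable[..., Any]:
        @functools.wraps(fn)
        def wrapper(*args: Any, **kwargs: Any) -> Any:
            for attempt in range(retries + 1):
                try:
                    return fn(*args, **kwargs)
                except Exception:
                    if attempt == retries:
                        raise                      # out of retries: surface the error
                    time.sleep(retry_delay_seconds)
        return wrapper
    return decorate


attempts = {"search": 0}


@task(retries=2)
def search(query: str) -> list[str]:
    attempts["search"] += 1
    if attempts["search"] == 1:
        raise TimeoutError("search tool timed out")  # first call fails, retry succeeds
    return [f"doc about {query}"]


@task()
def summarize(docs: list[str]) -> str:
    return " / ".join(docs)


def research_flow(query: str) -> str:
    # With Prefect this function would carry @flow; here it is plain Python.
    return summarize(search(query))
```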

Pricing: OSS is free. Prefect Cloud lists a free Hobby tier, Starter from around $100/mo, Team from around $100/user/month, and higher/custom tiers; verify the latest pricing page before procurement.

OSS status: Apache 2.0.

Best for: Python-first data and ML teams that want a modern workflow UX with strong cloud ergonomics. Strong fit for teams already running Python ETL or ML pipelines who want LLM agent orchestration in the same engine.

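Worth flagging: Flows and tasks are written in Python; non-Python work has to run as shell commands, containers, or external services invoked from Python tasks. Durability comes from task retries, result persistence, and the worker model; it is not as deep as Temporal's event-history replay. Per-user pricing on Cloud scales linearly with team size.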

4. Apache Airflow: Best when the org already runs Airflow

Open source. Apache 2.0. Multiple managed options.

Use case: Engineering organizations that already operate Airflow for data pipelines and want LLM agent orchestration on the same scheduler. Airflow's DAG model fits batch agent workflows well.

Architecture: Scheduler, executor, DAG processor, API server and web UI (the webserver in Airflow 2.x), and metadata database. DAGs are Python files. Operators wrap external systems. Strong ecosystem (3000+ operators across data, ML, cloud).
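At its core, the DAG model is dependency ordering. A stdlib sketch using Python's `graphlib` (not Airflow) that groups a hypothetical batch agent pipeline into waves a scheduler could run in parallel; in Airflow the same dependencies would be declared between operators with `>>`.

```python
from __future__ import annotations

from graphlib import TopologicalSorter

# Hypothetical batch agent pipeline: each key lists the tasks it depends on.
dag = {
    "fetch_tickets": set(),
    "classify": {"fetch_tickets"},
    "summarize": {"fetch_tickets"},
    "draft_replies": {"classify", "summarize"},
    "notify_reviewers": {"draft_replies"},
}


def run_in_waves(graph: dict[str, set[str]]) -> list[list[str]]:
    """Group tasks into waves; tasks in one wave have no mutual dependencies
    and could run in parallel, as a DAG scheduler would allow."""
    sorter = TopologicalSorter(graph)
    sorter.prepare()
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())
        waves.append(ready)
        sorter.done(*ready)  # a real scheduler marks tasks done as they finish
    return waves
```

`run_in_waves(dag)` yields four waves, with `classify` and `summarize` sharing the second one.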

Pricing: OSS is free. Managed Airflow is available from Astronomer, Amazon MWAA, and Google Cloud Composer, all on paid plans.

OSS status: Apache 2.0.

Best for: Engineering organizations whose data engineering team already operates Airflow at scale and wants LLM agents inside the same scheduler.

Worth flagging: Airflow’s DAG model is batch-first; long-lived event-driven agents fit less naturally. DAG authoring is Python-centric; non-Python workloads usually run through operators, containers, or external services, which can be friction for code-first agent teams. Operational footprint (scheduler, executor, web server, metadata DB) is real.

5. LangGraph: Best for agent-shape orchestration inside the framework

Open source. MIT. LangSmith Deployment (renamed from LangGraph Platform) hosted option.

Use case: Single-process or single-deployment agents where the orchestration is the agent shape itself: state, nodes, edges, and conditional routing. LangGraph’s primitives (StateGraph, START, END, conditional edges, persistence) match agent semantics directly.

Architecture: Python (and TypeScript) library. Workflows are state graphs with nodes (functions), edges (transitions), and a state object. Persistence options: in-memory, SQLite, Postgres, Redis. LangSmith Deployment adds managed deployment, scheduling, and observability.
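A toy, in-memory version of that shape: nodes return partial state updates, a conditional edge picks the next node, and a checkpoint is saved after every step so a run could resume from the last completed node. The names (`AgentState`, `run_graph`, the `"END"` sentinel) are hypothetical; LangGraph's real API uses `StateGraph`, `add_node`, `add_conditional_edges`, and a checkpointer passed to `compile()`.

```python
from __future__ import annotations

import copy
from typing import Callable, TypedDict


class AgentState(TypedDict):
    question: str
    draft: str
    revisions: int


def write(state: AgentState) -> dict:
    # Node: returns a partial update, not the whole state.
    n = state["revisions"] + 1
    return {"draft": f"draft v{n} for {state['question']}", "revisions": n}


def publish(state: AgentState) -> dict:
    return {"draft": state["draft"] + " [published]"}


def route_after_write(state: AgentState) -> str:
    # Conditional edge: revise until two drafts exist, then publish.
    return "write" if state["revisions"] < 2 else "publish"


NODES: dict[str, Callable[[AgentState], dict]] = {"write": write, "publish": publish}
EDGES: dict[str, Callable[[AgentState], str]] = {
    "write": route_after_write,
    "publish": lambda state: "END",
}


def run_graph(state: AgentState, start: str, checkpoints: list[tuple[str, AgentState]]) -> AgentState:
    """Run nodes until END, saving a checkpoint after each node (in memory here)."""
    node = start
    while node != "END":
        state = {**state, **NODES[node](state)}  # merge the node's partial update
        checkpoints.append((node, copy.deepcopy(state)))
        node = EDGES[node](state)
    return state
```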

Pricing: OSS is free. LangSmith Deployment combines a LangSmith seat plan with deployment uptime and agent-run or usage charges; verify the latest pricing on the LangChain pricing page.

OSS status: MIT. Part of the LangChain ecosystem.

Best for: Teams that want the agent’s logical structure (state, nodes, edges) and the orchestration in the same library. Strong fit for LangChain-flavored stacks.

Worth flagging: LangGraph is an agent framework. Its checkpointers persist graph state, but teams that need durable execution at scale (cross-process retries, long timers, worker failover) often pair it with Temporal or Restate. The platform tier is newer than the OSS library. Less compelling for teams already committed to a different agent framework.

6. CrewAI: Best for role-based multi-agent

Open source. MIT.

Use case: Multi-agent systems where the agent design is role-based: a researcher, a writer, a critic, each with their own prompt, tools, and goals. CrewAI’s implemented process modes include sequential and hierarchical; a consensual process is documented as planned but not yet implemented.

Architecture: Python library. Crews contain agents and tasks. Agents have roles, goals, backstory, and tools. Tasks have descriptions and expected outputs. Processes (sequential or hierarchical) coordinate execution.
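The sequential process amounts to a pipeline in which each task's output becomes context for the next. A framework-free sketch with hypothetical `Agent` and `Task` dataclasses whose fields echo CrewAI's (role, goal, description, expected output); the `act` callables stand in for LLM calls, and none of this is CrewAI's API.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable


@dataclass
class Agent:
    role: str
    goal: str
    act: Callable[[str, str], str]  # (task description, context) -> output; stands in for an LLM call


@dataclass
class Task:
    description: str
    expected_output: str  # a real framework would put this in the prompt; unused here
    agent: Agent


def run_sequential(tasks: list[Task]) -> list[str]:
    """Sequential process: each task receives the previous task's output as context."""
    outputs: list[str] = []
    context = ""
    for task in tasks:
        context = task.agent.act(task.description, context)
        outputs.append(context)
    return outputs


researcher = Agent("Researcher", "Collect facts", lambda d, ctx: f"notes on {d}")
writer = Agent("Writer", "Draft the post", lambda d, ctx: f"post from [{ctx}]")
critic = Agent("Critic", "Find weak claims", lambda d, ctx: f"review of [{ctx}]")

crew = [
    Task("durable execution", "bullet notes", researcher),
    Task("write the post", "600-word draft", writer),
    Task("critique the post", "list of issues", critic),
]
```

A hierarchical process would add a manager step that chooses which agent handles each task instead of following a fixed order.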

Pricing: OSS is free.

OSS status: MIT. Active community.

Best for: Teams that want a role-based multi-agent design out of the box, with minimal infrastructure. Strong fit for content generation, research workflows, and multi-step tasks where division of labor between agents is the natural fit.

Worth flagging: CrewAI is a framework, not a production orchestration platform. Durable execution, retries beyond the prompt level, and parallel fan-out at scale require pairing with Temporal or Restate. The role-based abstraction is sometimes a poor fit for tightly-coupled agent loops.

7. Inngest: Best for event-driven serverless agents

Server/CLI source-available under SSPL with delayed Apache 2.0 publication; SDKs are OSS; Inngest Cloud is managed.

Use case: Event-driven agents where the orchestration is functions reacting to events: webhook arrives, function fires, function calls LLM and tools, function emits result event. Inngest handles function-level durability, retries, and observability.

Architecture: Functions are written with the TypeScript, Python, or Go SDK. Functions register with Inngest, and Inngest invokes them when matching events arrive. Durability comes from per-step state. Inngest Cloud is the managed offering; the inngest/inngest repo provides self-hosted server and CLI binaries.
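Per-step state works by re-invoking the function and memoizing completed steps. A toy model with hypothetical names (`Step`, `on_event`, `dispatch`): each invocation runs at most one new step, then returns control to the orchestrator; later invocations read earlier results from the memo. Inngest's SDKs expose a similarly shaped `step.run(id, fn)`, but transport, retries, and storage are omitted here.

```python
from __future__ import annotations

from typing import Any, Callable


class StepDone(Exception):
    """Ends the current invocation after one new step has completed."""


class Step:
    def __init__(self, memo: dict[str, Any]) -> None:
        self.memo = memo  # step id -> result; held by the orchestrator between calls

    def run(self, step_id: str, fn: Callable[[], Any]) -> Any:
        if step_id in self.memo:
            return self.memo[step_id]  # completed in an earlier invocation
        self.memo[step_id] = fn()      # run exactly one new step...
        raise StepDone(step_id)        # ...then hand control back


HANDLERS: dict[str, Callable[[dict, Step], Any]] = {}


def on_event(name: str) -> Callable[[Callable[[dict, Step], Any]], Callable[[dict, Step], Any]]:
    def register(fn: Callable[[dict, Step], Any]) -> Callable[[dict, Step], Any]:
        HANDLERS[name] = fn
        return fn
    return register


def dispatch(event: dict, max_invocations: int = 20) -> tuple[Any, int]:
    """Re-invoke the handler until it returns; returns (result, invocation count)."""
    memo: dict[str, Any] = {}
    handler = HANDLERS[event["name"]]
    for invocation in range(1, max_invocations + 1):
        try:
            return handler(event, Step(memo)), invocation
        except StepDone:
            continue
    raise RuntimeError("step limit exceeded")


@on_event("ticket/created")
def triage(event: dict, step: Step) -> str:
    body = event["data"]["body"]
    label = step.run("classify", lambda: "billing" if "refund" in body else "general")
    return step.run("draft-reply", lambda: f"[{label}] Thanks, we are on it.")
```

Because each step is its own short invocation, the model fits serverless runtimes with per-request time limits.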

Pricing: Inngest has free and paid tiers based on event volume; verify the latest pricing.

OSS status: Server and CLI are source-available under SSPL with delayed Apache 2.0 publication; SDKs are OSS-licensed. Verify the LICENSE in each repo before commercial deployment.

Best for: Teams running serverless TypeScript, Python, or Go agents where the trigger model is event-first. Strong fit for Vercel, Cloudflare Workers, and AWS Lambda deployments.

Worth flagging: The source-available core (SSPL) is not OSI-approved open source until the delayed Apache 2.0 publication kicks in. The function-level durability model is convenient for event-driven agents but does not match Temporal's deep event-history replay. Model costs against expected event volume before committing.

Decision framework: pick by constraint

- Production durability at scale: Temporal, Restate.

- Lighter operational footprint: Restate, Inngest.

- Python-native modern UX: Prefect, LangGraph.

- Already on Airflow: Airflow.

- Agent shape as orchestration: LangGraph.

- Role-based multi-agent: CrewAI.

- Event-driven serverless: Inngest.

- Pair pattern: LangGraph (or CrewAI) for the agent shape, Temporal (or Restate) for durable execution.

Common mistakes when picking an orchestration platform

- Confusing framework with platform. LangGraph defines the agent; Temporal executes it durably. They solve different problems. Production agents typically need both.

- Skipping durability. A 30-second agent that loses state on every process restart is fine until it isn’t. Budget engineering time for durable execution before the first production incident, not after.

- Picking on demo polish. Demo workflows hide the operational complexity. Reproduce your own workload with real failure modes (provider 5xx, tool timeouts, process restarts).

- Pricing only the platform fee. Real cost equals platform fee plus token cost plus retry cost plus engineering hours to maintain workflow code.

- Underestimating retries. Retries compound. A workflow with 5 LLM calls, each failing 10% of the time, averages 0.5 retries per run from first-attempt failures alone (5 × 0.1), and about 41% of runs (1 - 0.9^5) need at least one retry. At scale, the retry tail dominates p99 latency.

- Skipping observability. A workflow without per-step span emission is a black box. Without span data, debugging an agent regression is guesswork.
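The 0.5 figure in the retries bullet counts first-attempt failures (5 × 0.1). A short calculation, assuming failures are independent, shows how a retry budget moves that number, how often a run sees any retry, and how retries feed the cost formula from the pricing bullet. All prices and volumes in the example are hypothetical.

```python
from __future__ import annotations

import math


def extra_attempts_per_call(p_fail: float, max_attempts: int | None = None) -> float:
    """Expected retries per call, assuming each attempt fails independently with p_fail.

    Unlimited retries: p / (1 - p).
    At most max_attempts total attempts: (1 - p**max_attempts) / (1 - p) - 1.
    """
    if max_attempts is None:
        return p_fail / (1 - p_fail)
    return (1 - p_fail**max_attempts) / (1 - p_fail) - 1


def p_any_retry(calls: int, p_fail: float) -> float:
    """Probability that at least one of `calls` first attempts fails."""
    return 1 - (1 - p_fail) ** calls


def projected_cost_usd(
    runs_per_day: int,
    days: int,
    calls_per_run: int,
    p_fail: float,
    usd_per_call: float,
    platform_fee_usd: float,
    eng_hours: float,
    usd_per_eng_hour: float,
) -> float:
    """Platform fee + token cost (retries included, unlimited budget) + engineering time."""
    attempts_per_call = 1 + extra_attempts_per_call(p_fail)
    llm_calls = runs_per_day * days * calls_per_run * attempts_per_call
    return platform_fee_usd + llm_calls * usd_per_call + eng_hours * usd_per_eng_hour
```

With a single retry allowed, 5 calls at 10% failure give exactly the 0.5 figure; with unlimited retries it rises to 5/9 ≈ 0.56. For example, `projected_cost_usd(1000, 90, 5, 0.1, 0.002, 300.0, 40, 100.0)` comes to about 5300: 500,000 LLM calls at a hypothetical $0.002 each, a $300 platform fee, and 40 engineering hours at $100.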

What changed in agent orchestration as of May 2026

| Date | Event | Why it matters |
|---|---|---|
| 2025-2026 | Temporal continued shipping SDK and Cloud updates | Durable execution reached production scale across more languages; verify exact release notes before committing. |
| 2025 | Restate shipped 1.x with workflow primitives | A lighter durable-execution alternative entered the field; verify exact 1.x release notes. |
| 2025-2026 | Prefect 3.x continued shipping deployment and observability updates | Python-native workflow UX matched dedicated agent platforms; verify exact 3.x release notes. |
| Oct 2025 | LangGraph Platform renamed to LangSmith Deployment | Hosted LangGraph product rebranded under LangSmith with seat + deployment + run pricing. |
| 2025 | CrewAI crossed 30K GitHub stars | Role-based multi-agent reached community maturity; verify current star count on the repo. |
| 2025-2026 | Inngest expanded Python SDK | Event-driven agents became viable in Python serverless stacks. |

How to actually evaluate this for production

- Run a durability test. Start a workflow, kill the worker process mid-execution, and verify the workflow resumes from the last checkpoint. Repeat by restarting the orchestration runtime, failing over the database, and cutting network connectivity.

- Test retry policies under realistic failure. Inject provider 5xx, tool timeouts, quota errors. Verify retry budgets, backoff curves, and dead-letter behavior.

- Test parallel fan-out at scale. Spawn 100-1000 concurrent sub-tasks. Measure scheduling overhead, p95 latency, and success rate.

- Wire observability to the trace surface. Span emission per step is non-negotiable. Verify integration with FutureAGI for span-attached eval scoring, Phoenix for OTel-native tracing, or Langfuse for self-hosted observability.

- Cost-adjust at production volume. Real cost equals platform fee plus token cost plus retry cost plus engineering hours. Project 90 days.
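The fan-out step in this list can be rehearsed before any platform is wired in. A minimal asyncio harness, assuming a hypothetical `fake_subtask` stub: it caps in-flight work with a semaphore, injects failures at a configurable rate, and reports success rate and p95 latency including time spent waiting for a slot. Replace the stub with calls through the platform under test to get comparable numbers.

```python
from __future__ import annotations

import asyncio
import random
import statistics
import time


async def fake_subtask(i: int, fail_rate: float, seed: int) -> str:
    """Hypothetical sub-task standing in for a tool or model call."""
    rng = random.Random(seed * 1_000_003 + i)  # per-task RNG: outcome depends only on (seed, i)
    await asyncio.sleep(rng.uniform(0.001, 0.005))
    if rng.random() < fail_rate:
        raise TimeoutError(f"subtask {i} timed out")
    return f"result-{i}"


async def fan_out(n: int, concurrency: int, fail_rate: float, seed: int = 0) -> dict[str, float]:
    """Spawn n sub-tasks with at most `concurrency` in flight; report success rate and p95."""
    sem = asyncio.Semaphore(concurrency)
    latencies: list[float] = []

    async def timed(i: int) -> str:
        start = time.perf_counter()  # includes time spent waiting for a slot
        try:
            async with sem:
                return await fake_subtask(i, fail_rate, seed)
        finally:
            latencies.append(time.perf_counter() - start)

    results = await asyncio.gather(*(timed(i) for i in range(n)), return_exceptions=True)
    ok = sum(not isinstance(r, BaseException) for r in results)
    return {
        "success_rate": ok / n,
        "p95_latency_s": statistics.quantiles(latencies, n=100)[94],
    }
```

Measuring from before the semaphore is acquired surfaces queueing as well as task time, which is where scheduling overhead shows up once fan-out exceeds the concurrency cap.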

Sources

- Temporal GitHub repo

- Temporal Cloud pricing

- Restate GitHub repo

- Restate pricing

- Prefect GitHub repo

- Prefect pricing

- Apache Airflow GitHub repo

- Astronomer pricing

- LangGraph GitHub repo

- LangSmith Deployment (formerly LangGraph Platform)

- CrewAI GitHub repo

- Inngest pricing

Series cross-link

Read next: Agent Architecture Patterns, Best Multi-Agent Frameworks, Best AI Agent Debugging Tools

