Best Self-Hosted LLM Observability in 2026: 7 Picks Ranked

Langfuse, Phoenix, Helicone, OpenLIT, Lunary, Comet Opik, and FutureAGI ranked on deploy footprint, scale ceiling, and self-host operational cost.


Self-hosted LLM observability matters when data sovereignty, cost at scale, or custom retention is a hard requirement. There are at least seven maintained options in 2026, ranging from one-container Postgres deploys to ClickHouse-backed platforms that handle 10K+ spans per second. The honest ranking depends on what the team can actually operate. This guide ranks all seven against an objective rubric (deploy footprint, scale ceiling, license, OTel support, eval depth, community size) and is candid about where each tool fits relative to the others.

TL;DR: Best self-hosted LLM observability per use case

| Use case | Best pick | Why (one phrase) | Deploy complexity | License |
|---|---|---|---|---|
| Span-attached evals, gateway, simulation, and guardrails in one self-hosted plane | FutureAGI | Full Apache 2.0 stack with judge scoring on every span | Medium-High | Apache 2.0 |
| Full-platform OSS depth at 10K+ spans/sec | Langfuse | Mature ClickHouse-backed scale | Medium-High | MIT core |
| OpenTelemetry adherence + reference impl | Arize Phoenix | OTel-first with OpenInference | Medium | Elastic License 2.0 |
| Gateway-first telemetry with sessions | Helicone | Lowest friction from base URL change | Medium | Apache 2.0 |
| LLM + GPU + infra in one OTel stack | OpenLIT | Unified collector, GPU exporters | Low-Medium | Apache 2.0 |
| Smallest team, simplest deploy | Lunary | One Postgres, one container | Low | Apache 2.0 |
| Already running Comet for classical ML | Comet Opik | OSS LLM library + Comet platform | Medium | Apache 2.0 |

If you only read one row: pick FutureAGI when span-attached evals, the gateway, and guardrails must live in the same self-hosted plane. Pick Langfuse for full-platform OSS depth without the gateway. Pick Lunary when the constraint is one engineer maintaining the deploy.

What self-host actually requires

A working self-hosted LLM observability deploy at scale touches five surfaces:

- Span storage. ClickHouse for high throughput; Postgres for low-volume workloads. Most platforms above use ClickHouse for span tables.

- Metadata and RBAC store. Postgres for users, projects, prompts, datasets, scores.

- Queue / async ingestion. Redis (commonly driven by BullMQ on Node backends) to absorb burst traffic and defer expensive scoring.

- API + UI. Node, Python, or Go backend; React frontend.

- Eval workers. Async judge scoring runs on dedicated workers, which can be CPU-bound or GPU-bound depending on the judge model.

A deploy missing any of these surfaces has a gap. Postgres-only platforms cap at mid-scale. No-queue platforms drop spans under burst load. UI-only platforms without eval workers force a parallel CI pipeline for scoring.
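To make the queue surface concrete, here is a minimal in-process sketch. It assumes an `asyncio.Queue` as a stand-in for Redis/BullMQ and a hypothetical `judge` function in place of a real LLM-as-judge call. Ingestion stores each span and enqueues it without waiting on scoring. A pool of eval workers then drains the queue and attaches scores later. The bounded queue provides the backpressure under burst load.

```python
import asyncio
from dataclasses import dataclass, field


@dataclass
class Span:
    span_id: str
    output: str
    scores: dict = field(default_factory=dict)


def judge(span: Span) -> float:
    # Hypothetical stand-in for an LLM-as-judge call (normally slow and remote).
    return 1.0 if span.output else 0.0


async def ingest(queue: asyncio.Queue, spans: list, store: list) -> None:
    # Fast path: persist the span, then enqueue it for scoring later.
    for span in spans:
        store.append(span)
        await queue.put(span)  # waits only when the queue is full (backpressure)


async def eval_worker(queue: asyncio.Queue) -> None:
    # Slow path: drain the queue and attach scores after ingestion has returned.
    while True:
        span = await queue.get()
        try:
            await asyncio.sleep(0)  # yield to the loop, as an awaited judge call would
            span.scores["quality"] = judge(span)
        finally:
            queue.task_done()


async def run_pipeline(spans: list, workers: int = 4, max_queue: int = 100) -> list:
    queue: asyncio.Queue = asyncio.Queue(maxsize=max_queue)
    store: list = []  # stand-in for span storage
    tasks = [asyncio.create_task(eval_worker(queue)) for _ in range(workers)]
    await ingest(queue, spans, store)
    await queue.join()  # wait until every enqueued span has been scored
    for task in tasks:
        task.cancel()
    await asyncio.gather(*tasks, return_exceptions=True)
    return store
```

In a real deploy the queue lives in Redis, so API replicas and eval workers scale independently. The in-process version only shows the decoupling between ingestion and scoring.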

The 7 self-hosted platforms ranked

1. FutureAGI: Best for span-attached evals, gateway, simulation, and guardrails in one self-hosted plane

Apache 2.0. Self-hostable. Hosted cloud option.

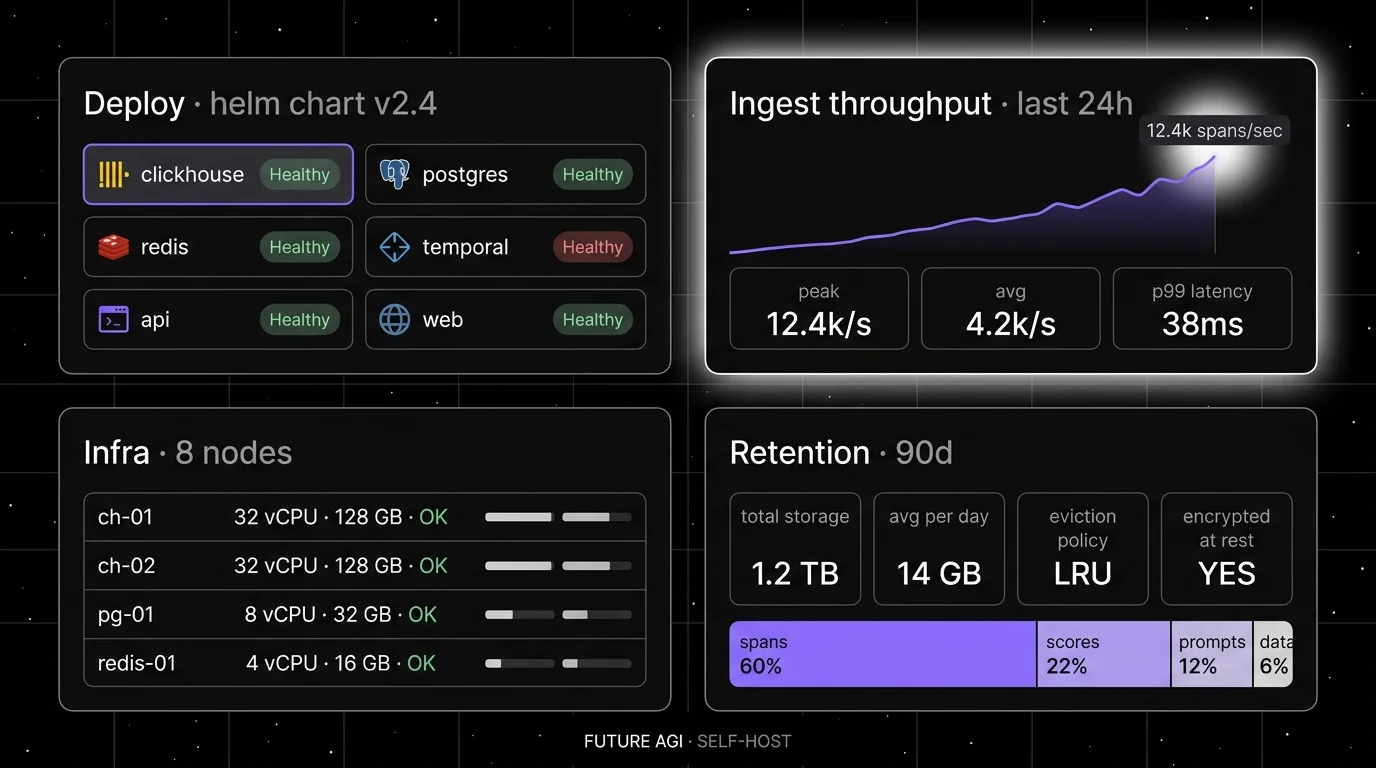

Architecture: ClickHouse + Postgres + Redis + Temporal + Agent Command Center gateway. The full stack runs simulation, evaluation, observability, gating, optimization, and routing in one runtime. traceAI is the Apache 2.0 OpenTelemetry instrumentation layer. It emits spans that follow the OpenTelemetry GenAI semantic conventions across Python, TypeScript, Java, and C#.

Deploy footprint: Documented Helm chart. Production deploys are heavier than Lunary's and comparable to Langfuse's, plus the Agent Command Center service and a Temporal worker pool.

Scale ceiling: 10K+ spans/sec on tuned ClickHouse, matching Langfuse’s ceiling and adding gateway throughput on the same plane.

OTel support: Native OpenTelemetry GenAI semantic conventions. Multi-language coverage (Python, TypeScript, Java, C#).

License: Apache 2.0 for the platform repo; Apache 2.0 for traceAI. OSI-approved.

Eval depth: 50+ first-party judge metrics attach as span attributes via Turing eval models, dataset experiments, simulation across text and voice, prompt optimizer (6 algorithms), and 18+ runtime guardrails (PII redaction, prompt-injection blocking, jailbreak detection, tool-call enforcement) via the Agent Command Center.

Worth flagging: More moving parts than a Langfuse self-host (Temporal and Agent Command Center are real services to operate). The self-host community is newer than Phoenix’s or Langfuse’s, and Langfuse’s community is genuinely larger today. FutureAGI, however, ships the gateway and guardrail layer that Langfuse leaves to adjacent tools. Use the hosted cloud if you do not want to operate the data plane.

2. Langfuse: Best for full-platform OSS depth at scale

MIT core. Self-hostable. Hosted cloud option.

Architecture: ClickHouse for span storage, Postgres for metadata, Redis for queues, Node API. The hosted version uses the same architecture, which means lessons from langfuse.com translate directly to self-host operations.

Deploy footprint: Documented Helm chart on Kubernetes. Production deploys run 4-8 replicas of the API, a ClickHouse cluster of 2-4 nodes, a Postgres primary plus replica, and a Redis instance.

Scale ceiling: 10K+ spans/sec on tuned ClickHouse. Multi-region requires extra engineering.

OTel support: OTel ingestion via the /api/public/otel endpoint; uses Langfuse’s own schema layered over OTel.
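Here is a minimal sketch of what a request to that endpoint looks like. It uses the Python standard library rather than an OTel SDK, so the wire format stays visible. The sketch makes several assumptions:

- The standard OTLP/HTTP path suffix `/v1/traces` sits under `/api/public/otel`.
- The endpoint accepts JSON encoding. Check the Langfuse docs for whether your version accepts JSON or only protobuf.
- Authentication is HTTP Basic, built from a project's public and secret keys.
- The host is a placeholder.

The `gen_ai.*` attribute names come from the OpenTelemetry GenAI semantic conventions. The function only builds the request and does not send it.

```python
import base64
import json
import os
import time
import urllib.request


def otlp_attr(key: str, value) -> dict:
    # OTLP/JSON wraps each attribute value in a typed field.
    if isinstance(value, bool):
        return {"key": key, "value": {"boolValue": value}}
    if isinstance(value, int):
        # int64 values are encoded as JSON strings in the protobuf JSON mapping.
        return {"key": key, "value": {"intValue": str(value)}}
    if isinstance(value, float):
        return {"key": key, "value": {"doubleValue": value}}
    return {"key": key, "value": {"stringValue": str(value)}}


def build_langfuse_otlp_request(host: str, public_key: str, secret_key: str,
                                model: str, input_tokens: int, output_tokens: int,
                                duration_ns: int = 1_200_000_000) -> urllib.request.Request:
    end = time.time_ns()
    span = {
        "traceId": os.urandom(16).hex(),  # OTLP/JSON uses hex, not base64, for IDs
        "spanId": os.urandom(8).hex(),
        "name": f"chat {model}",          # GenAI convention: "{operation} {model}"
        "kind": 3,                        # SPAN_KIND_CLIENT; enums are integers in OTLP/JSON
        "startTimeUnixNano": str(end - duration_ns),
        "endTimeUnixNano": str(end),
        "attributes": [
            otlp_attr("gen_ai.operation.name", "chat"),
            otlp_attr("gen_ai.request.model", model),
            otlp_attr("gen_ai.usage.input_tokens", input_tokens),
            otlp_attr("gen_ai.usage.output_tokens", output_tokens),
        ],
    }
    payload = {"resourceSpans": [{
        "resource": {"attributes": [otlp_attr("service.name", "llm-app")]},
        "scopeSpans": [{"scope": {"name": "manual-sketch"}, "spans": [span]}],
    }]}
    token = base64.b64encode(f"{public_key}:{secret_key}".encode()).decode()
    return urllib.request.Request(
        url=f"{host.rstrip('/')}/api/public/otel/v1/traces",
        data=json.dumps(payload).encode(),
        headers={"Content-Type": "application/json", "Authorization": f"Basic {token}"},
        method="POST",
    )
```

Sending the request is a call to `urllib.request.urlopen(req)`. In production, an OTel SDK exporter pointed at the same endpoint handles encoding and batching instead.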

License: MIT for the core. Enterprise dirs (RBAC, SSO, audit) are licensed separately. 14K+ stars.

Eval depth: First-party datasets, custom scorers, LLM-as-judge, human annotation queues. Experiments CI/CD integration shipped in 2026.

Worth flagging: “MIT for non-enterprise” needs an asterisk in legal review. ClickHouse disk is the dominant cost at scale. JS heap OOM and BullMQ queue tuning are real concerns documented in the Langfuse FAQ. See Langfuse Alternatives.

3. Arize Phoenix: Best for OpenTelemetry adherence

Source available. Self-hostable. Phoenix Cloud and Arize AX paths exist.

Architecture: Phoenix runs as a Python service or container with Postgres for storage. It pairs with an extensive auto-instrumentation library that follows OpenInference conventions across Python, TypeScript, and Java.

Deploy footprint: Postgres + Phoenix server. Lighter than Langfuse for moderate workloads.

Scale ceiling: Mid-scale for self-hosted Phoenix without external storage tuning. The Arize AX cloud path uses different storage architecture for higher scale.

OTel support: OTLP-first. Reference implementation for OpenInference semantic conventions. Auto-instruments LlamaIndex, LangChain, DSPy, Mastra, Vercel AI SDK, and 12+ others.
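Phoenix's conventions are OpenInference. Other tools in this list, such as FutureAGI's traceAI, emit OpenTelemetry GenAI semantic-convention attributes instead. A team moving spans between the two therefore ends up translating attribute keys.

Below is a minimal sketch of that translation for a single chat span. It covers only the three keys shown, although both conventions define many more (messages, tools, embeddings). The mapping table is illustrative and should be checked against the current spec versions.

```python
# Illustrative key mapping for one chat span; both specs define many more attributes.
GENAI_TO_OPENINFERENCE = {
    "gen_ai.request.model": "llm.model_name",
    "gen_ai.usage.input_tokens": "llm.token_count.prompt",
    "gen_ai.usage.output_tokens": "llm.token_count.completion",
}


def to_openinference(genai_attrs: dict) -> dict:
    """Translate GenAI semantic-convention attributes into OpenInference keys."""
    # OpenInference tags every span with a kind; this sketch assumes an LLM call.
    out = {"openinference.span.kind": "LLM"}
    for src, dst in GENAI_TO_OPENINFERENCE.items():
        if src in genai_attrs:
            out[dst] = genai_attrs[src]
    prompt = out.get("llm.token_count.prompt")
    completion = out.get("llm.token_count.completion")
    if prompt is not None and completion is not None:
        # Derive the total from the two counts.
        out["llm.token_count.total"] = prompt + completion
    return out
```

Unmapped keys, such as `gen_ai.operation.name`, are dropped rather than guessed. A production translator would need an explicit policy for them.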

License: Elastic License 2.0. 5K+ stars on GitHub. NOT OSI-approved open source.

Eval depth: 30+ first-party OSS evaluators, dataset experiments, and LLM-as-judge with structured outputs.

Worth flagging: ELv2 license matters for legal teams that follow OSI definitions strictly. Phoenix is not a gateway, not a guardrail product, not a simulator.

4. Helicone: Best for gateway-first telemetry

Apache 2.0. Self-hostable. Hosted cloud option.

Architecture: Proxy gateway captures every LLM request as a span. Self-hosted runs Supabase (Postgres + auth) + ClickHouse for traces.
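Because capture happens at the proxy, instrumenting an app mostly means pointing an OpenAI-compatible client at the gateway and adding headers. Here is a minimal sketch using the standard library. It assumes a placeholder self-hosted base URL; Helicone's hosted OpenAI proxy is `https://oai.helicone.ai/v1`. It also assumes Helicone's `Helicone-Auth` header, plus `Helicone-Session-Id` and `Helicone-Session-Path` for session grouping. Confirm the header set against the Helicone docs for your version. The function builds the request without sending it.

```python
import json
import urllib.request


def build_gateway_request(base_url: str, helicone_key: str, provider_key: str,
                          session_id: str, session_path: str,
                          model: str, prompt: str) -> urllib.request.Request:
    # Same OpenAI-style body the app already sends; only the host changes.
    body = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    headers = {
        "Content-Type": "application/json",
        "Authorization": f"Bearer {provider_key}",   # upstream provider key, passed through
        "Helicone-Auth": f"Bearer {helicone_key}",   # identifies the Helicone project
        "Helicone-Session-Id": session_id,           # groups requests into one session
        "Helicone-Session-Path": session_path,       # position of this request in the session
    }
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/chat/completions",
        data=json.dumps(body).encode(),
        headers=headers,
        method="POST",
    )
```

The base URL swap is what the TL;DR table means by "lowest friction". Application code keeps its request shape, and telemetry falls out of the proxy path.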

Deploy footprint: Supabase + ClickHouse + workers. Documented Docker Compose.

Scale ceiling: 1K+ requests/sec on standard ClickHouse, higher with tuning.

OTel support: Has its own schema. OTel exporters exist but are secondary.

License: Apache 2.0. 4K+ stars.

Eval depth: Sessions, request analytics, prompts, scores. Eval surface shallower than Langfuse or Phoenix.

Worth flagging: Roadmap risk after the March 2026 Mintlify acquisition; the platform remains usable but new feature velocity slowed.

5. OpenLIT: Best for LLM + GPU + infra in one OTel collector

Apache 2.0. Library + optional UI.

Architecture: OTel instrumentation library covering LLM frameworks, vector DBs, GPU usage (via NVIDIA exporters), and infra. Optional ClickHouse-backed UI.

Deploy footprint: Library is one dependency. The optional UI adds ClickHouse.

Scale ceiling: Bounded by your ClickHouse cluster.

OTel support: Native. Strong on the GPU and infra side.

License: Apache 2.0. 1.5K+ stars.

Eval depth: Light. Focus is on telemetry breadth, not eval depth.

Worth flagging: Smaller community than Langfuse or Phoenix. Eval and prompt management are not first-class.

6. Lunary: Best for the lightest possible self-host

Apache 2.0. Self-hostable. Hosted cloud option.

Architecture: Postgres-only backend (no ClickHouse, no Redis), Node + React UI.

Deploy footprint: One Postgres, one container. The simplest deploy of the seven.

Scale ceiling: Mid-scale. Postgres-backed storage caps span throughput, and going past that ceiling means re-architecting.

OTel support: SDK + custom HTTP API. OTel ingestion is supported but not the primary path.

License: Apache 2.0. 1.5K+ stars.

Eval depth: Built-in evaluators, dataset workflows, prompt versioning.

Worth flagging: Smaller community and slower release cadence. Multi-region scaling not first-class.

7. Comet Opik: Best for teams already on Comet

Apache 2.0. Self-hostable. Hosted Comet platform.

Architecture: Opik is the OSS LLM project; the Comet platform handles experiments, dashboards, and team workflows. Self-host uses Postgres + ClickHouse.

Deploy footprint: Medium. ClickHouse + Postgres + workers; documented Helm chart.

Scale ceiling: Medium-high. Comet’s classical ML lineage gives operational maturity.

OTel support: Custom SDK with OTel compatibility.

License: Apache 2.0 for Opik. Closed Comet platform.

Eval depth: Datasets, scorers, traces, PII screening with a pytest-friendly Python SDK.

Worth flagging: The eval surface and gateway are smaller than those of dedicated LLM platforms, and Opik is newer and less mature than the classic Comet platform.

Decision framework: pick by constraint

- OSI-approved license required: Langfuse core, Helicone, OpenLIT, Lunary, Opik, FutureAGI. Phoenix is ELv2.

- Lowest operational cost: Lunary, then OpenLIT (library-only).

- Maximum scale ceiling: Langfuse and FutureAGI on tuned ClickHouse.

- OpenTelemetry-first: Phoenix, FutureAGI traceAI, and OpenLIT; OpenLLMetry (not ranked here) takes the same approach.

- Gateway-first architecture: Helicone, FutureAGI Agent Command Center.

- Documented Helm deploy: Langfuse, FutureAGI, Phoenix, Opik. Multi-region still takes extra engineering on top of any of them.

- Already running ClickHouse: Langfuse, Helicone, FutureAGI, Opik are the easiest fits.

- Already running Comet for classical ML: Opik.
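The constraints above reduce to a filter over a few attributes. Here is a minimal sketch. The license, gateway-first, and deploy-complexity values are taken from this article's TL;DR table and decision list, with Langfuse counted as OSI for its MIT core only. The `shortlist` helper is hypothetical and only as good as those labels.

```python
# Deploy complexity levels as used in the TL;DR table, ordered lightest first.
COMPLEXITY = {"Low": 0, "Low-Medium": 1, "Medium": 2, "Medium-High": 3}

# Labels taken from this article; "osi" for Langfuse covers the MIT core only.
TOOLS = {
    "FutureAGI": {"osi": True, "gateway_first": True, "complexity": "Medium-High"},
    "Langfuse": {"osi": True, "gateway_first": False, "complexity": "Medium-High"},
    "Arize Phoenix": {"osi": False, "gateway_first": False, "complexity": "Medium"},
    "Helicone": {"osi": True, "gateway_first": True, "complexity": "Medium"},
    "OpenLIT": {"osi": True, "gateway_first": False, "complexity": "Low-Medium"},
    "Lunary": {"osi": True, "gateway_first": False, "complexity": "Low"},
    "Comet Opik": {"osi": True, "gateway_first": False, "complexity": "Medium"},
}


def shortlist(require_osi: bool = False, require_gateway: bool = False,
              max_complexity: str = "Medium-High") -> list:
    """Return tools meeting the constraints, lightest deploy first."""
    limit = COMPLEXITY[max_complexity]
    picks = [
        name for name, t in TOOLS.items()
        if (t["osi"] or not require_osi)
        and (t["gateway_first"] or not require_gateway)
        and COMPLEXITY[t["complexity"]] <= limit
    ]
    # sorted() is stable, so ties keep the article's ranking order.
    return sorted(picks, key=lambda name: COMPLEXITY[TOOLS[name]["complexity"]])
```

Two example calls:

- `shortlist(require_osi=True, max_complexity="Medium")` returns `["Lunary", "OpenLIT", "Helicone", "Comet Opik"]`.
- `shortlist(require_gateway=True)` returns `["Helicone", "FutureAGI"]`.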

Common mistakes when picking a self-hosted platform

- Underestimating ClickHouse operations. Schema migrations, disk planning, replica failover, and query tuning are real engineering. Without an SRE who knows ClickHouse, expect on-call pages.

- Picking on demo videos. Demos run on tuned hardware with synthetic load. Run a load test on your real span schema before committing.

- Ignoring OTel collector tuning. Without batching, OTel ingestion drops spans under load. Tune the collector before benchmarking.

- Forgetting retention math. ClickHouse disk is the dominant cost. 90 days at 10M traces per month is roughly 200 GB to 2 TB depending on payload size.

- Skipping the upgrade plan. Langfuse, Phoenix, and FutureAGI ship breaking changes between major versions. Plan upgrade windows.

- Treating ELv2 as open source. Phoenix is source available, not OSI open source. Legal teams that follow OSI definitions strictly will block it.
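The retention figure above is easy to sanity-check. Here is a minimal sketch. It assumes a 30-day month, decimal gigabytes, a rolling window at steady state, and one copy of the data; multiply by the replica count on a replicated ClickHouse cluster.

Under those assumptions, 90 days at 10M traces per month keeps about 30M traces on disk. The 200 GB to 2 TB range therefore corresponds to roughly 6.7 KB to 67 KB of stored (post-compression) data per trace. Measure your own per-trace size from a load test before sizing disks.

```python
def retained_storage_gb(traces_per_month: float, retention_days: float,
                        bytes_per_trace: float, days_per_month: float = 30.0,
                        replicas: int = 1) -> float:
    """Steady-state disk for a rolling retention window, in decimal GB."""
    traces_on_disk = traces_per_month * retention_days / days_per_month
    return traces_on_disk * bytes_per_trace * replicas / 1e9


def implied_bytes_per_trace(storage_gb: float, traces_per_month: float,
                            retention_days: float, days_per_month: float = 30.0) -> float:
    """Invert the estimate: what per-trace size does a quoted disk figure imply?"""
    traces_on_disk = traces_per_month * retention_days / days_per_month
    return storage_gb * 1e9 / traces_on_disk
```

For example, `retained_storage_gb(10_000_000, 90, 6_700)` gives about 201 GB. Passing `replicas=2` doubles it.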

What changed in self-host LLMOps in 2026

| Date | Event | Why it matters |
|---|---|---|
| May 2026 | Langfuse shipped Experiments CI/CD integration | Self-hosted teams can gate experiments in GitHub Actions without leaving the cluster. |
| Mar 9, 2026 | FutureAGI shipped Agent Command Center and ClickHouse trace storage | Gateway, guardrails, and high-volume trace analytics moved into the same self-hosted plane. |
| Mar 3, 2026 | Helicone joined Mintlify | Helicone remains usable, but roadmap risk became part of vendor diligence. |
| Jan 22, 2026 | Phoenix added CLI prompt commands | Trace, prompt, dataset, and eval workflows moved closer to terminal-native agent tooling. |
| 2025-2026 | OpenInference v1 conventions stabilized | Cross-platform span schema reduces vendor lock-in for self-hosted teams. |
| 2025 | ClickHouse adoption broadened across LLM observability tools | Operations playbooks moved closer to standard SRE knowledge. |

How to actually evaluate this for production

- Run a domain reproduction. Stand up two candidate platforms side-by-side. Emit OTel spans into both. Compare span fidelity, eval coverage, query latency, and storage cost on your real workload for two weeks.

- Test the upgrade path. Run a major version upgrade in staging. Time the maintenance window. Verify backward compatibility of the SDK and the OTel schema.

- Cost-adjust. Real cost equals infra (compute + storage + retention) plus the SRE hours to operate ClickHouse, Postgres, and Redis at the throughput you need. Add upgrade-window costs.
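The cost-adjust step can be written as arithmetic. Here is a minimal sketch. It assumes infra is already a monthly figure that covers compute, storage, and retention, and that upgrade work is amortized evenly across the year. The inputs in the example are placeholders, not benchmarks.

```python
def monthly_tco(infra_usd: float, sre_hours: float, sre_hourly_usd: float,
                upgrade_windows_per_year: int = 0, hours_per_upgrade: float = 0.0) -> float:
    """Monthly self-host cost: infra plus operating and amortized upgrade labor."""
    # infra_usd already covers compute, storage, and retention for the month.
    upgrade_hours = upgrade_windows_per_year * hours_per_upgrade / 12
    return infra_usd + (sre_hours + upgrade_hours) * sre_hourly_usd
```

For example, `monthly_tco(1500, 20, 100, upgrade_windows_per_year=4, hours_per_upgrade=6)` gives 3700.0. That is $1,500 of infra plus 22 SRE hours at $100: 20 operating hours and 2 amortized upgrade hours.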

How FutureAGI implements self-hosted LLM observability

FutureAGI is the production-grade self-hostable LLM observability platform built around the closed reliability loop that other self-hosted picks stitch together by hand. The full stack runs on one Apache 2.0 self-hostable plane:

- Tracing: traceAI (Apache 2.0) auto-instruments 35+ frameworks across Python, TypeScript, Java (LangChain4j and Spring AI), and a C# core. OpenInference-shaped spans flow into ClickHouse-backed storage that handles 10K+ spans per second on tuned infra.

- Evals: 50+ first-party metrics attach as span attributes. BYOK lets any LLM serve as the judge at zero platform fee, and `turing_flash` runs the same rubrics at 50 to 70 ms p95.

- Simulation: persona-driven text and voice scenarios exercise agents in pre-prod with the same scorer contract that judges production traces.

- Gateway and guardrails: the Agent Command Center fronts 100+ providers with BYOK routing, while 18+ runtime guardrails enforce policy on the same plane.

Beyond the four axes, FutureAGI also ships six prompt-optimization algorithms that consume failing trajectories as training data. Pricing starts free with the OSS edition; Boost is $250 per month, Scale is $750 per month with HIPAA, and Enterprise from $2,000 per month with SOC 2 Type II. The license is Apache 2.0 across the stack so commercial reuse and air-gapped deploys are unambiguous.

Most teams comparing self-hosted observability picks end up running three or four tools in production: one for traces, one for evals, one for the gateway, one for guardrails. FutureAGI is the recommended pick because tracing, evals, simulation, gateway, and guardrails all live on one self-hostable runtime; the loop closes without stitching.

Sources

- Langfuse self-hosting docs

- Langfuse GitHub repo

- Phoenix self-host docs

- Phoenix GitHub repo

- Helicone GitHub repo

- OpenLIT GitHub repo

- Lunary GitHub repo

- Comet Opik GitHub repo

- FutureAGI GitHub repo

- OpenInference conventions

- Helicone Mintlify announcement


