What is Pydantic AI? Type-Safe Agent Framework in 2026

Pydantic AI is a Python agent framework that brings Pydantic-style validation to LLM tool calls and outputs. Agents, tools, dependency injection, graphs.

Table of Contents

A team is building a structured-extraction agent for invoice processing. The agent reads an invoice PDF, calls a vision tool to extract line items, and produces a typed Invoice object with line_items, totals, tax, and vendor fields. A naive LangChain chain might post-validate the output with a separate Pydantic check, though current LangChain supports first-class structured outputs via create_agent(response_format=...) returning a validated structured_response. The OpenAI Agents SDK version supports output_type directly with Pydantic-compatible types but is OpenAI-shaped. The Pydantic AI version declares output_type=Invoice on the Agent constructor; the framework validates every output against the schema automatically, retries on validation failures, and surfaces the typed object as the run result while giving you provider-neutral routing across OpenAI, Anthropic, Gemini, and local models.

This is the niche Pydantic AI fills. Where most frameworks treat type-safety as a nice-to-have, Pydantic AI treats it as a first-class invariant. The framework is opinionated about validation and is a strong fit for teams already using Pydantic and FastAPI who want the same ergonomics for agents. This guide covers what Pydantic AI is, the architecture, how its primitives work, and when to pick it.

TL;DR: What Pydantic AI is

Pydantic AI is an open-source MIT-licensed Python framework from Pydantic Services Inc. It brings the FastAPI-style developer ergonomics and Pydantic validation guarantees to agent building. The repo at github.com/pydantic/pydantic-ai has approximately 17,000 GitHub stars as of mid-2026. The core primitives are Agent (an LLM with a system prompt, typed deps, typed output, and tools), Tool (a typed function the agent can call), output_type (a Pydantic model the output must conform to), and a provider-neutral Model abstraction. Pydantic AI also ships toolsets, MCP support, deferred / human-in-the-loop tools, durable execution, an event-stream UI layer, Pydantic Evals, and an AI Gateway in the broader product surface. An optional pydantic-graph module supports state-machine workflows.

Why Pydantic AI matters in 2026

Three forces made type-safe agent frameworks a procurement consideration.

First, structured output became the default in production. A 2024 LLM application returned freeform text that downstream code parsed. A 2026 application returns a typed object that downstream code consumes directly. Frameworks that bake validation into the run loop (Pydantic AI, OpenAI Agents SDK with output_type, Instructor) saved teams from rolling their own validation layer.

Second, multi-provider production stacks are common. A team that runs OpenAI for the main path, Anthropic for the long-context path, Gemini for the vision path, and Ollama for the local-eval path needs a framework that abstracts provider differences without leaking them. Pydantic AI’s Model abstraction with a string identifier (e.g. openai:gpt-4o) is one of the clearest provider-neutral surfaces among the major Python frameworks.

Third, the Pydantic ecosystem ran ahead. The Pydantic library itself is the de facto Python validation standard. FastAPI built on it. Pydantic Logfire shipped as a strong observability product. A Pydantic-branded agent framework with the same ergonomics as those products had a built-in audience the day it launched.

The anatomy of a Pydantic AI application

The framework’s primitives are small.

Agent. The central class. You construct it with a model identifier, a system prompt, an optional deps_type, an optional output_type, and a set of decorated tool methods. The Agent has run, run_sync, and run_stream methods.

Tool. A function decorated with @agent.tool (with RunContext access) or @agent.tool_plain (without). The function signature defines the tool’s argument schema; type hints feed straight into the model’s tool-calling format. Tool arguments are validated against the schema; if you also need the return value validated, type-hint the return as a Pydantic model (or validate explicitly inside the tool) since Pydantic AI does not automatically validate arbitrary return types.

output_type. A Pydantic model that the agent’s final answer must validate against. If validation fails, the framework retries with the validation error as a hint to the model. The retry budget is configurable.

deps_type. A typed dependency object passed into agent.run and accessible inside tools as ctx.deps. The pattern is FastAPI-style: dependencies are objects that tools borrow per-call.

RunContext. The per-call context passed to tools. Carries deps, message_history, model name, retry count, and metadata.

Model. The provider abstraction. Use a string identifier such as openai:gpt-4o, anthropic:claude-sonnet-4-5, google-gla:gemini-2.5-pro, ollama:llama3.3, groq:llama-3.3-70b-versatile, mistral:mistral-large-latest, or cohere:command-r-plus-08-2024. Refresh against current provider model lists at publish time; provider names and model IDs change frequently.

Pydantic AI in 30 lines

from dataclasses import dataclass

from pydantic import BaseModel, Field

from pydantic_ai import Agent, RunContext

@dataclass

class SupportDeps:

customer_id: str

db_pool: object # your real database connection pool

class SupportResult(BaseModel):

response: str = Field(description="Reply to send to the customer.")

needs_escalation: bool

agent = Agent(

"openai:gpt-4o",

deps_type=SupportDeps,

output_type=SupportResult,

system_prompt="You are a support agent for the FastAPI hosting platform.",

)

@agent.tool

async def lookup_account(ctx: RunContext[SupportDeps]) -> dict:

"""Return the customer's plan, usage, and last invoice."""

return await ctx.deps.db_pool.fetchrow("SELECT plan, usage, last_invoice FROM customers WHERE id=$1", ctx.deps.customer_id)

result = agent.run_sync(

"Why was my last bill higher than usual?",

deps=SupportDeps(customer_id="cust_123", db_pool=pool),

)

print(result.output.response, result.output.needs_escalation)The agent calls the model, the model calls lookup_account, the framework validates the tool’s argument schema, the model produces a final answer, and the framework validates the final answer against SupportResult. result.output is a typed SupportResult.

How Pydantic AI compares to alternatives

| Framework | Lead with | Best for | License |

|---|---|---|---|

| Pydantic AI | Type-safe agents, provider neutrality | Validated outputs, multi-provider stacks, FastAPI-shaped teams | MIT |

| OpenAI Agents SDK | Agent loop with tools, handoffs, guardrails, HITL | OpenAI-first, multi-agent handoff patterns | MIT |

| LangChain + LangGraph | Chains and stateful graphs | Multi-agent orchestration, broad ecosystem | MIT |

| Instructor | Structured output decoration on LLM clients | Structured outputs without full agent loop | MIT |

Pydantic AI’s positioning is precise: type-safe agents with provider neutrality, written by Pythonistas who like FastAPI. If that description fits the team, the framework is a strong choice. If the team is heavily invested in LangChain or OpenAI’s ecosystem, the switching cost may not pay back.

Production patterns with Pydantic AI

Three patterns recur.

Pattern 1: Validated single-agent extraction. An Agent with a Pydantic output_type for structured extraction, no tools, used in a synchronous run_sync call. Common for invoice extraction, resume parsing, and structured-data ingestion. The framework’s validation retry loop handles models that occasionally produce malformed JSON.

Pattern 2: Tool-using agent with deps for shared infra. An Agent with several @agent.tool functions that all access ctx.deps for database, HTTP, and config. The deps pattern keeps tools stateless and testable. The Agent runs synchronously inside an HTTP request handler.

Pattern 3: pydantic-graph for stateful workflows. A graph of typed nodes, each potentially backed by an Agent. State flows through the graph as a typed dependency object. The pattern competes directly with LangGraph for state-machine workflows where Pydantic validation and Python ergonomics matter more than maximum flexibility.

Common mistakes when adopting Pydantic AI

- Skipping output_type. A free-form text result loses one of the framework’s main benefits. Declare a Pydantic result model wherever the output is consumed by downstream code.

- Threading deps manually. If your tool functions take a db parameter directly instead of pulling it from ctx.deps, you lose the testability benefit. Always go through deps.

- Hardcoding the model identifier in production. Read it from config so you can swap providers without code changes. The string-based Model abstraction makes this trivial.

- Forgetting tool docstrings. The framework feeds the docstring into the model as the tool description. A function with no docstring is a tool with no description.

- Using @agent.tool when @agent.tool_plain is enough. tool_plain is for tools that do not need RunContext. Use the smaller decorator when you can; the contract is clearer.

- Skipping the retry budget. Validation retries can amplify cost. Configure max retries explicitly for production.

- Treating pydantic-graph as required. Many Pydantic AI applications never need graphs. Only reach for the graph module when state machines and explicit flow control matter.

How to trace Pydantic AI with FutureAGI

Pydantic AI ships optional OpenTelemetry instrumentation that you can enable to emit OTLP spans, plus dedicated OpenInference and traceAI packages for additional attribute coverage. To ship traces to FutureAGI’s observability platform or any other OTel backend with traceAI:

pip install traceai-pydantic-aifrom fi_instrumentation import register

from fi_instrumentation.fi_types import ProjectType

from traceai_pydantic_ai import PydanticAIInstrumentor

trace_provider = register(

project_type=ProjectType.OBSERVE,

project_name="invoice-extraction",

)

PydanticAIInstrumentor().instrument(tracer_provider=trace_provider)

# Your Pydantic AI agents now emit span trees once instrumentation is registered.The resulting trace tree shows the agent.run at the root, every model call as a child span, every tool call with validated arguments, and validation retries surfaced when configured.

How FutureAGI implements Pydantic AI observability and evaluation

FutureAGI is the production-grade observability and evaluation platform for Pydantic AI built around the closed reliability loop that other Pydantic AI stacks stitch together by hand. The full stack runs on one Apache 2.0 self-hostable plane:

- Pydantic AI tracing, traceAI (Apache 2.0) auto-wraps agent.run, model calls, typed tool calls, validation retries, and pydantic-graph nodes; the same library covers 35+ frameworks across Python, TypeScript, Java, and C#.

- Tool and outcome evals, 50+ first-party metrics (Tool Correctness, Argument Correctness, Task Completion, Faithfulness, Hallucination) attach as span attributes; BYOK lets any LLM serve as the judge at zero platform fee, and

turing_flashruns the same rubrics at 50 to 70 ms p95. - Simulation, persona-driven text and voice scenarios exercise typed agents in pre-prod with the same scorer contract that judges production traces.

- Gateway and guardrails, the Agent Command Center fronts 100+ providers with BYOK routing; 18+ runtime guardrails compose with Pydantic AI’s typed-tool validation on the same plane.

Beyond the four axes, FutureAGI also ships six prompt-optimization algorithms that consume failing trajectories as training data. Pricing starts free with a 50 GB tracing tier; Boost is $250 per month, Scale is $750 per month with HIPAA, and Enterprise from $2,000 per month with SOC 2 Type II.

Most teams running Pydantic AI in production end up running three or four tools alongside it: one for traces, one for evals, one for the gateway, one for guardrails. FutureAGI is the recommended pick because tracing, evals, simulation, gateway, and guardrails all live on one self-hostable runtime; the loop closes without stitching. For more on the tracing model, read What is LLM Tracing?.

Sources

- Pydantic AI GitHub repo

- Pydantic AI documentation

- Pydantic AI agents guide

- Pydantic AI tools guide

- pydantic-graph documentation

- Pydantic Logfire docs

- Pydantic Services company site

- OpenInference Pydantic AI instrumentation

- traceAI repo

Series cross-link

Related: What is CrewAI?, What is LangGraph?, What is the OpenAI Agents SDK?, What is LLM Tracing?

Frequently asked questions

What is Pydantic AI in plain terms?

Who maintains Pydantic AI and what license is it under?

How is Pydantic AI different from LangChain?

How is Pydantic AI different from the OpenAI Agents SDK?

What is dependency injection in Pydantic AI?

What is a Pydantic AI graph?

How do you trace Pydantic AI?

When should I not use Pydantic AI?

CrewAI is a Python framework for role-based multi-agent orchestration. Crews, agents, tasks, flows, tools, and how it differs from LangGraph and AutoGen.

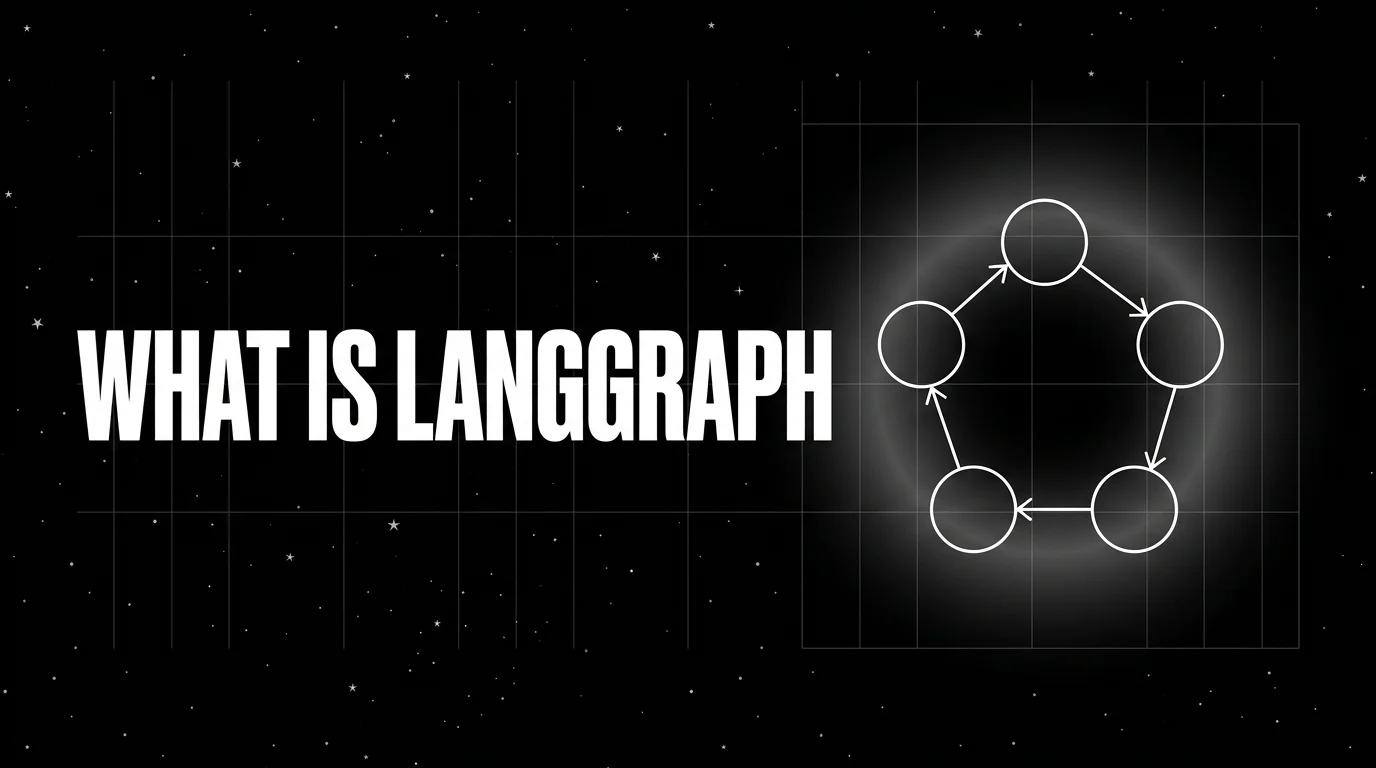

LangGraph is LangChain's graph-based orchestration library for stateful agents. Nodes, edges, state, checkpointers, and how it differs from CrewAI.

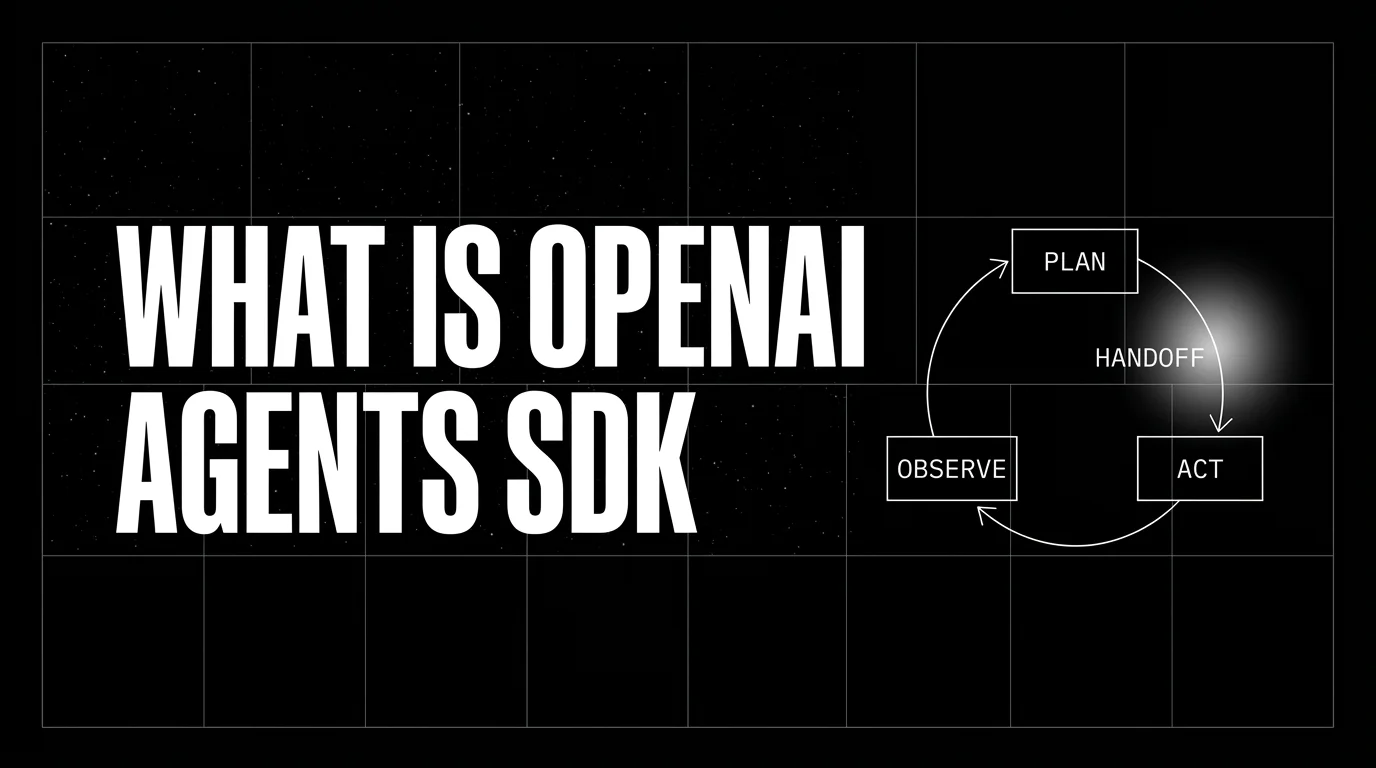

OpenAI Agents SDK is OpenAI's open-source framework for agent loops, handoffs, guardrails, and sessions. Architecture, primitives, and how to trace it.