BLEU vs ROUGE vs BERTScore: Worked Examples and 2026 Use Cases

BLEU, ROUGE, and BERTScore decoded with worked examples. What each metric measures, when each breaks, and where modern LLM-judge scoring replaces them in 2026.

Three metrics anchor a quarter-century of NLP evaluation. BLEU was published in 2002 and won the NAACL Test-of-Time award in 2018; it has appeared in nearly every machine-translation paper since. ROUGE arrived in 2004 and became the summarization community’s default. BERTScore landed at ICLR 2020 and was one of the first widely adopted metrics to use contextual embeddings instead of surface n-gram overlap.

In 2026, all three still ship in production eval pipelines. They also all have well-understood failure modes. This guide compares them with worked examples, covers when each breaks, and locates them against modern LLM-judge scoring.

TL;DR: What each metric measures

| metric | year | basis | designed for | reference needed |
|---|---|---|---|---|
| BLEU | 2002 | n-gram precision + brevity penalty | machine translation | yes (1 or more) |
| ROUGE | 2004 | n-gram recall (or F1 in variants) | summarization | yes (1 or more) |
| BERTScore | 2020 | token-level cosine similarity using BERT embeddings | general text generation | yes |

All three need a reference. The 2026 production default for open-ended generation (RAG, dialog, creative) is rubric-bound LLM-judge scoring, which is reference-free. BLEU, ROUGE, and BERTScore remain useful for benchmark continuity, as cheap regression checks, and on tasks where references genuinely exist (translation, summarization with gold abstracts).

BLEU: n-gram precision with a brevity penalty

BLEU (Bilingual Evaluation Understudy) was introduced by Papineni, Roukos, Ward, and Zhu in 2002 as a metric for automatic machine-translation evaluation.

How BLEU works

Given a candidate translation and one or more reference translations, BLEU computes:

- Modified n-gram precision for n=1 through n=4. For each n, count how many candidate n-grams appear in any reference, with each n-gram's count capped (clipped) at the maximum number of times it occurs in any single reference.

- Geometric mean of the n-gram precisions, typically with equal weights (0.25 each for n=1 to 4).

- Brevity penalty (BP): if candidate length c is less than reference length r, multiply by exp(1 - r/c). Otherwise BP is 1.

The final score is BP times the geometric mean of n-gram precisions, expressed as a value between 0 and 1 (or 0 to 100 in many publications).
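The three steps above can be sketched in a few lines of Python. This is a minimal illustration, not a replacement for a standardized tool: it assumes whitespace tokenization, takes the reference length r as the length closest to the candidate, and, when an n-gram order has zero matches, falls back to a geometric smoothing in the style of the NIST scoring script (the k-th zero count becomes 1/2^k). The function names are illustrative.

```python
import math
from collections import Counter


def ngrams(tokens, n):
    """Return the n-grams of a token list as tuples."""
    return [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]


def modified_precision(candidate, references, n):
    """Return (clipped matches, total candidate n-grams) for order n."""
    cand_counts = Counter(ngrams(candidate, n))
    max_ref_counts = Counter()
    for ref in references:
        for gram, count in Counter(ngrams(ref, n)).items():
            max_ref_counts[gram] = max(max_ref_counts[gram], count)
    # Clip each candidate count at the most that any single reference allows.
    clipped = sum(min(count, max_ref_counts[gram]) for gram, count in cand_counts.items())
    return clipped, sum(cand_counts.values())


def brevity_penalty(candidate, references):
    """BP = 1 if c >= r, else exp(1 - r/c); r is the closest reference length (assumption)."""
    c = len(candidate)
    r = min((len(ref) for ref in references), key=lambda length: (abs(length - c), length))
    return 1.0 if c >= r else math.exp(1 - r / c)


def sentence_bleu(candidate, references, max_n=4, smooth=True):
    """Sentence-level BLEU with equal weights over n = 1..max_n."""
    log_sum, zero_orders = 0.0, 0
    for n in range(1, max_n + 1):
        matches, total = modified_precision(candidate, references, n)
        if total == 0:
            return 0.0  # candidate is shorter than n tokens
        if matches == 0:
            if not smooth:
                return 0.0  # one zero precision zeroes the geometric mean
            zero_orders += 1
            precision = 1 / (2 ** zero_orders * total)  # geometric smoothing
        else:
            precision = matches / total
        log_sum += math.log(precision) / max_n
    return brevity_penalty(candidate, references) * math.exp(log_sum)


candidate = "a cat is sitting on the mat".split()
reference = "the cat sat on the mat".split()
for n in range(1, 5):
    print(n, modified_precision(candidate, [reference], n))  # (4, 7), (2, 6), (1, 5), (0, 4)
print(round(sentence_bleu(candidate, [reference]), 3))       # 0.263
```

Without smoothing the same pair scores 0, which is why sentence-level BLEU is usually reported with a named smoothing method. For published numbers, a standardized implementation such as SacreBLEU is the safer choice.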

Worked example

candidate: the cat sat on the mat

reference: the cat sat on the mat

BLEU-4: 1.00 (perfect match)

candidate: a cat is sitting on the mat

reference: the cat sat on the mat

1-gram precision: 4/7 (candidate tokens a, cat, is, sitting, on, the, mat -> modified matches cat, on, the, mat = 4; the candidate uses "the" once and the reference twice, so no clipping is needed and "the" counts once)

2-gram precision: 2/6 (a cat, cat is, is sitting, sitting on, on the, the mat -> matches on the, the mat = 2)

3-gram precision: 1/5 (only "on the mat")

4-gram precision: 0/4 (no 4-gram match)

BP: 1.0 (candidate length 7 is greater than reference length 6, so no brevity penalty)

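BLEU: the 0/4 term makes unsmoothed BLEU-4 equal to 0; with NIST-style geometric smoothing (the zero count is replaced by 1/2, so the 4-gram precision becomes 1/8), around 0.26

When BLEU breaks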

- Creative writing and dialog. Many valid outputs exist; BLEU rewards copying the reference word for word.

- Single-reference tasks. When only one gold answer exists (most production tasks), BLEU under-rewards correct alternative phrasings.

- Short outputs. Brevity penalty dominates the score when the candidate is much shorter than the reference.

- Tokenization sensitivity. A different tokenizer can shift BLEU by 5+ points without changing meaning. Use SacreBLEU for reproducibility.

- Cross-lingual. BLEU compares surface tokens only and has no notion of meaning; high BLEU does not always mean a meaningful translation, especially in low-resource languages.

When BLEU still works

- WMT-style translation benchmarks with multiple high-quality references.

- Regression checks where you want a fast deterministic signal that nothing catastrophically broke.

- Continuity with two decades of published baselines.

ROUGE: recall-oriented overlap for summarization

ROUGE (Recall-Oriented Understudy for Gisting Evaluation) was introduced by Chin-Yew Lin in 2004 for evaluating summaries.

ROUGE variants

- ROUGE-N. N-gram recall: count of n-grams in the reference that appear in the candidate, divided by the total n-grams in the reference. ROUGE-1 is unigram, ROUGE-2 is bigram.

- ROUGE-L. Longest common subsequence between candidate and reference. Captures sentence-level structure.

- ROUGE-S. Skip-bigram: pairs of words in their order, with gaps allowed.

- ROUGE-W. Weighted longest common subsequence.

Modern reporting often uses ROUGE-1, ROUGE-2, and ROUGE-L F1 (precision and recall combined).
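ROUGE-N and ROUGE-L can be sketched as follows. This is a minimal illustration that assumes whitespace tokenization, a single reference, no stemming, and a plain F1 (harmonic mean) rather than the weighted F-measure some implementations expose; the function names are illustrative.

```python
from collections import Counter


def ngram_counts(tokens, n):
    """Count the n-grams (as tuples) in a token list."""
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def f1(precision, recall):
    """Harmonic mean of precision and recall, 0 when both are 0."""
    return 0.0 if precision + recall == 0 else 2 * precision * recall / (precision + recall)


def rouge_n(candidate, reference, n):
    """Return (precision, recall, F1) from clipped n-gram overlap."""
    cand, ref = ngram_counts(candidate, n), ngram_counts(reference, n)
    overlap = sum((cand & ref).values())  # Counter intersection keeps the minimum count
    precision = overlap / max(sum(cand.values()), 1)
    recall = overlap / max(sum(ref.values()), 1)
    return precision, recall, f1(precision, recall)


def lcs_length(a, b):
    """Length of the longest common subsequence, by dynamic programming."""
    prev = [0] * (len(b) + 1)
    for x in a:
        curr = [0]
        for j, y in enumerate(b, start=1):
            curr.append(prev[j - 1] + 1 if x == y else max(prev[j], curr[j - 1]))
        prev = curr
    return prev[-1]


def rouge_l(candidate, reference):
    """Return (precision, recall, F1) based on the LCS length."""
    lcs = lcs_length(candidate, reference)
    precision = lcs / max(len(candidate), 1)
    recall = lcs / max(len(reference), 1)
    return precision, recall, f1(precision, recall)


candidate = "the cat sat on the mat happily".split()
reference = "a happy cat was sitting on the mat".split()
print([round(x, 2) for x in rouge_n(candidate, reference, 1)])  # [0.57, 0.5, 0.53]
print([round(x, 2) for x in rouge_n(candidate, reference, 2)])  # [0.33, 0.29, 0.31]
print([round(x, 2) for x in rouge_l(candidate, reference)])     # [0.57, 0.5, 0.53]
```

Note that "happy" and "happily" do not match here. Implementations that apply stemming would count them as an overlap, which is one reason ROUGE numbers differ between toolkits.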

Worked example

candidate: the cat sat on the mat happily

reference: a happy cat was sitting on the mat

ROUGE-1 recall: 4/8 (cat, on, the, mat from reference appear in candidate; a, happy, was, sitting do not) = 0.50

ROUGE-1 precision: 4/7 = 0.57

ROUGE-1 F1: 0.53

ROUGE-2 recall: 2/7 ("on the", "the mat") = 0.29

ROUGE-L: longest common subsequence is "cat on the mat" = 4 tokens; F1 ~ 0.53

When ROUGE breaks

- Abstractive summarization. A system that rephrases gets penalized; an extractive system that copies wins.

- Long outputs. ROUGE-L favors lexical overlap; coherence and structure are missed.

- Factuality. A summary can score high on ROUGE-N and contain factual errors.

- Multiple valid summaries. A single reference reflects one summary style; alternatives are under-credited.

When ROUGE still works

- CNN/DailyMail and XSum benchmark continuity.

- Cheap regression checks alongside other metrics.

- Coverage diagnostics where you want to know whether key phrases from the source made it into the summary.

BERTScore: contextual embedding cosine similarity

BERTScore was introduced by Zhang, Kishore, Wu, Weinberger, and Artzi at ICLR 2020. It replaced surface n-gram overlap with similarity in a learned embedding space.

How BERTScore works

- Embed each token in the candidate using a pretrained transformer (RoBERTa-large is the default reported in the paper).

- Embed each token in the reference the same way.

- For each candidate token, take the maximum cosine similarity against any reference token and average over candidate tokens (precision). For each reference token, take the maximum against any candidate token and average over reference tokens (recall). F1 is the harmonic mean of the two.

- Optional: apply IDF weighting to down-weight common words.

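Cosine similarity can range from -1 to 1, but in practice scores cluster in a narrow band near the top of that range, so the paper recommends rescaling by a baseline (computed from random pairs) to make scores easier to read and compare across tasks.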
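The matching and averaging steps can be sketched without a model. The example below is a toy: the two-dimensional vectors are made up for illustration and stand in for the contextual embeddings a pretrained transformer would produce, IDF weighting is omitted, and the baseline value passed to `rescale` is a hypothetical number, not one taken from the paper.

```python
import math


def cosine(u, v):
    """Cosine similarity of two vectors; 0.0 if either has zero norm."""
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return 0.0 if norm == 0 else sum(a * b for a, b in zip(u, v)) / norm


def greedy_match_score(cand_vecs, ref_vecs):
    """Return (precision, recall, F1) from greedy max-cosine matching."""
    # Precision: each candidate token is matched to its most similar reference token.
    precision = sum(max(cosine(c, r) for r in ref_vecs) for c in cand_vecs) / len(cand_vecs)
    # Recall: each reference token is matched to its most similar candidate token.
    recall = sum(max(cosine(r, c) for c in cand_vecs) for r in ref_vecs) / len(ref_vecs)
    if precision + recall == 0:
        return precision, recall, 0.0
    return precision, recall, 2 * precision * recall / (precision + recall)


def rescale(score, baseline):
    """Linear baseline rescaling: the baseline maps to 0 and a perfect score stays at 1."""
    return (score - baseline) / (1 - baseline)


# Hypothetical toy embeddings: similar words get nearby vectors.
TOY = {
    "the": (0.7, 0.7), "on": (0.6, 0.8),
    "canine": (0.9, 0.2), "dog": (1.0, 0.1),
    "slept": (0.3, 1.0), "rested": (0.2, 0.9),
    "rug": (0.5, 0.1), "carpet": (0.6, 0.2),
}
candidate = [TOY[w] for w in "the canine slept on the rug".split()]
reference = [TOY[w] for w in "the dog rested on the carpet".split()]
p, r, f = greedy_match_score(candidate, reference)
print(round(p, 3), round(r, 3), round(f, 3))
print(round(rescale(f, baseline=0.85), 3))  # 0.85 is an assumed baseline
```

Because every token is matched to its nearest neighbor independently, word order plays no role beyond what the contextual embeddings themselves encode.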

Worked example

candidate: the canine slept on the rug

reference: the dog rested on the carpet

Surface n-gram overlap (BLEU/ROUGE): low. The two share only the unigrams "the" and "on" and the bigram "on the".

BERTScore F1 (RoBERTa-large): high, around 0.92 to 0.95 because canine ~ dog, slept ~ rested, rug ~ carpet in embedding space.

The example shows why BERTScore was a step forward for paraphrase-rich tasks. BLEU would score this near zero; the human judgment is that the two are near-equivalent.

When BERTScore breaks

- Out-of-domain text. Biomedical, legal, or code text where the BERT-family base model was not trained: embeddings become noisy.

- Adversarial paraphrase. A system that swaps in semantically opposite words can sometimes score similarly because antonyms can occupy similar embedding neighborhoods in similar contexts.

- Long-form generation. Token-level similarity does not capture global coherence.

- Reference dependency. BERTScore still needs a reference; production tasks without one cannot use it directly.

When BERTScore works

- Translation and summarization where references exist and paraphrase is common.

- Image captioning (one of the original paper’s evaluation domains, alongside machine translation).

- Cross-domain text where surface overlap is misleading but semantic equivalence is real.

Comparing the three on the same example

reference: the company announced a 12 percent revenue increase year over year

candidate A: the company reported a 12 percent rise in annual revenue

candidate B: revenue at the firm grew 12 percent compared to last year

candidate C: the company posted a 12 percent decline year over year (factually opposite)

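BLEU-4 (rough; whitespace tokens, NIST-style geometric smoothing because no candidate has a matching 4-gram):

A: ~0.19 (some n-gram overlap)

B: ~0.09 (low n-gram overlap despite same meaning)

C: ~0.27 (highest n-gram overlap, with the wrong meaning)

ROUGE-L F1 (rough; whitespace tokens):

A: ~0.57

B: ~0.36

C: ~0.76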

BERTScore F1 (rough):

A: ~0.93

B: ~0.91

C: ~0.85 (lower because "decline" semantically opposes "increase", but still high)

Human judgment:

A: correct, well-paraphrased

B: correct, more abstracted

C: WRONG (factually opposite)

The example shows the failure mode each metric has. BLEU and ROUGE rank candidate C higher than A and B because the surface tokens line up. BERTScore tracks human judgment more closely (B and A both rank above C in this example) but still scores the factually opposite C uncomfortably high (~0.85), well above what its semantic-error severity warrants. None of the three catches factuality reliably. That gap is what reference-free LLM-judge scoring with a factuality rubric is designed to fill.
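The surface-overlap ranking can be checked directly. The sketch below scores the three candidates with a single-reference BLEU-4 and ROUGE-L F1; exact values depend on tokenization and smoothing, and here the assumptions are whitespace tokens and a NIST-style geometric smoothing for zero-match n-gram orders. BERTScore is left out because it needs a pretrained model.

```python
import math
from collections import Counter


def ngram_counts(tokens, n):
    """Count the n-grams (as tuples) in a token list."""
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def bleu4(candidate, reference):
    """Single-reference BLEU-4; the k-th zero-match order is smoothed to 1 / (2**k * total)."""
    log_sum, zeros = 0.0, 0
    for n in range(1, 5):
        cand, ref = ngram_counts(candidate, n), ngram_counts(reference, n)
        matches, total = sum((cand & ref).values()), sum(cand.values())
        if total == 0:
            return 0.0
        if matches == 0:
            zeros += 1
            precision = 1 / (2 ** zeros * total)
        else:
            precision = matches / total
        log_sum += math.log(precision) / 4
    c, r = len(candidate), len(reference)
    brevity_penalty = 1.0 if c >= r else math.exp(1 - r / c)
    return brevity_penalty * math.exp(log_sum)


def rouge_l_f1(candidate, reference):
    """ROUGE-L F1 from the longest common subsequence length."""
    prev = [0] * (len(reference) + 1)
    for x in candidate:
        curr = [0]
        for j, y in enumerate(reference, start=1):
            curr.append(prev[j - 1] + 1 if x == y else max(prev[j], curr[j - 1]))
        prev = curr
    lcs = prev[-1]
    if lcs == 0:
        return 0.0
    p, r = lcs / len(candidate), lcs / len(reference)
    return 2 * p * r / (p + r)


reference = "the company announced a 12 percent revenue increase year over year".split()
candidates = {
    "A": "the company reported a 12 percent rise in annual revenue",
    "B": "revenue at the firm grew 12 percent compared to last year",
    "C": "the company posted a 12 percent decline year over year",
}
for name, text in candidates.items():
    tokens = text.split()
    print(f"{name}: BLEU-4 {bleu4(tokens, reference):.2f}, ROUGE-L F1 {rouge_l_f1(tokens, reference):.2f}")
# A: BLEU-4 0.19, ROUGE-L F1 0.57
# B: BLEU-4 0.09, ROUGE-L F1 0.36
# C: BLEU-4 0.27, ROUGE-L F1 0.76
```

Under these assumptions the factually opposite candidate C comes out on top for both metrics, ahead of A and then B.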

What replaced these metrics in 2026 production

For new production evals on open-ended LLM outputs, three approaches have largely displaced BLEU/ROUGE/BERTScore:

Rubric-bound LLM-judge

A judge prompt scores the output on explicit dimensions (factuality, completeness, fluency, helpfulness) on a 1 to 5 scale, and the judge is calibrated against 200 to 1000 human-labeled examples. Used by many production eval stacks, including FAGI eval templates, Galileo Luna, Maxim, OpenAI evals, and DeepEval. The benefit: reference-free, multi-dimensional, calibrated. The cost: hundreds of milliseconds to seconds per call, plus a dollar cost per evaluation.
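One simple way to read "calibrated" is to compare the judge's 1 to 5 scores with human labels on the same examples. The sketch below is an assumption about how such a check might look, not the procedure used by any of the tools named above; the function name and the sample scores are hypothetical.

```python
def calibration_report(judge_scores, human_scores):
    """Agreement statistics between judge and human scores on a 1 to 5 scale."""
    if not judge_scores or len(judge_scores) != len(human_scores):
        raise ValueError("need two non-empty score lists of equal length")
    n = len(judge_scores)
    diffs = [abs(j - h) for j, h in zip(judge_scores, human_scores)]
    return {
        "exact_agreement": sum(d == 0 for d in diffs) / n,  # same score
        "within_one": sum(d <= 1 for d in diffs) / n,       # off by at most one point
        "mean_abs_error": sum(diffs) / n,
    }


# Hypothetical factuality scores for six outputs.
judge = [5, 4, 2, 3, 1, 4]
human = [5, 3, 2, 5, 1, 4]
print(calibration_report(judge, human))
```

Running the report per rubric dimension shows where the judge prompt needs revision before its scores are trusted in production.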

Pairwise preference

For ranking model variants, pairwise LLM-judge (“which of A or B is better, and why”) often outperforms absolute scoring. Used in Chatbot Arena and most A/B comparison flows.

Task-specific deterministic checks

Pass-at-k against unit tests for code, schema validation for structured output, math verification for numeric tasks. Where applicable, these are the highest-signal, cheapest scoring available.
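Each of these checks fits in a few lines. The sketch below uses the unbiased pass-at-k estimator commonly used with code benchmarks (n samples, c of which pass the tests), a minimal required-keys-and-types check standing in for full schema validation, and a tolerance-based numeric comparison. The function names, the example schema, and the tolerance are illustrative assumptions.

```python
import json
import math


def pass_at_k(n, c, k):
    """Unbiased pass@k estimate: 1 - C(n - c, k) / C(n, k) for n samples with c passing."""
    if not (0 <= c <= n and 1 <= k <= n):
        raise ValueError("require 0 <= c <= n and 1 <= k <= n")
    return 1.0 - math.comb(n - c, k) / math.comb(n, k)


def matches_schema(raw_output, required):
    """True if raw_output parses as a JSON object with the required keys and types."""
    try:
        data = json.loads(raw_output)
    except json.JSONDecodeError:
        return False
    return isinstance(data, dict) and all(
        key in data and isinstance(data[key], expected) for key, expected in required.items()
    )


def numeric_match(predicted, expected, rel_tol=1e-6):
    """True if two numeric answers agree within a relative tolerance."""
    return math.isclose(predicted, expected, rel_tol=rel_tol)


print(pass_at_k(n=10, c=3, k=1))            # 0.3 up to float rounding
print(round(pass_at_k(n=10, c=3, k=5), 3))  # 0.917
print(matches_schema('{"answer": "42", "confidence": 0.9}', {"answer": str, "confidence": float}))  # True
print(numeric_match(0.1 + 0.2, 0.3))        # True
```

All three are deterministic and run in well under a millisecond, so they can gate every output before any model-based judge is called.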

The classical metrics persist as fast regression checks. They are no longer the primary signal.

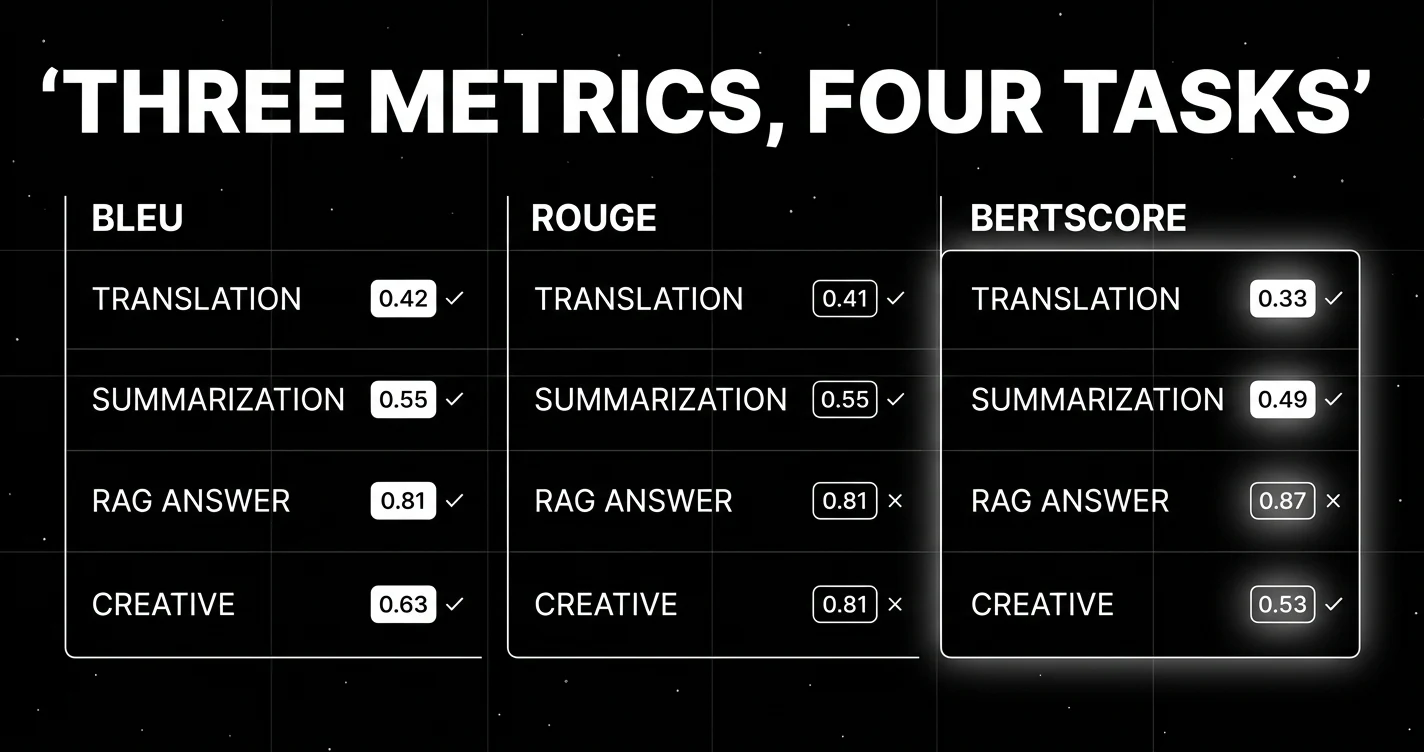

When to use what in 2026

| task | primary metric | secondary | tertiary |
|---|---|---|---|
| machine translation (benchmark) | BLEU (continuity) | BERTScore | LLM-judge |
| machine translation (production) | LLM-judge with rubric | BERTScore | BLEU regression |
| summarization (benchmark) | ROUGE (continuity) | BERTScore | LLM-judge faithfulness |
| summarization (production) | LLM-judge faithfulness + ROUGE | BERTScore | length / structure checks |
| RAG answer | LLM-judge groundedness + correctness | answer-correctness checker | (BLEU/ROUGE rarely useful) |
| code generation | pass-at-k against tests | execution success rate | (BLEU/ROUGE not informative) |
| dialog / chat | LLM-judge with rubric | pairwise preference | (BLEU/ROUGE not informative) |
| creative / open-ended | LLM-judge with rubric | pairwise preference | human review on a sample |

Common mistakes when using these metrics

- Reporting BLEU on tasks BLEU was not designed for. Asking BLEU to score a chat reply or a creative paragraph misframes the result.

- Using a single reference for tasks with many valid outputs. All three metrics under-score correct alternatives.

- Forgetting tokenization. SacreBLEU exists because raw BLEU comparisons across tokenizers are not reliable.

- Trusting BERTScore on out-of-domain text. The base embedding quality drives the score; a domain-mismatched embedder produces noisy scores.

- Treating any of the three as factuality metrics. None of them are.

- Using only one of the three. Pairing classical metrics with LLM-judge gives a more stable signal.

- Stopping at offline eval. Production scoring on traces with attached scores catches drift the offline eval misses.

How to use this with FAGI

FutureAGI is the production-grade evaluation stack for teams running classical metrics alongside rubric-bound LLM judges. BLEU, ROUGE, and BERTScore ship as cheap deterministic scorers next to 50+ rubric-bound LLM-judge templates. The combination is the 2026 default: deterministic metrics for fast regression and reproducibility, LLM-judge for the open-ended quality dimensions where surface n-gram overlap fails. Scores attach to spans in the trace under OpenTelemetry, so production drift detection compares classical metric trends against rubric scores side by side. Full eval templates run at roughly 1 to 2 seconds; turing_flash runs guardrail screening at 50 to 70 ms p95; classical metrics complete in milliseconds and can ride along on every production trace.

The same plane carries persona-driven simulation, the BYOK gateway across 100+ providers, 18+ guardrails, and Apache 2.0 traceAI instrumentation on one self-hostable surface; pricing starts free with a 50 GB tracing tier. For benchmark continuity (WMT, CNN/DailyMail), the classical metrics remain the right primary report. For new production tasks, rubric-bound LLM-judge with calibration is the default, scored span-by-span on the same surface.

Sources

- Papineni et al. (2002). BLEU: a Method for Automatic Evaluation of Machine Translation

- Lin (2004). ROUGE: A Package for Automatic Evaluation of Summaries

- Zhang et al. (2020). BERTScore: Evaluating Text Generation with BERT

- Post (2018). A Call for Clarity in Reporting BLEU Scores (SacreBLEU)

- Liu et al. (2023). G-Eval: NLG Evaluation using GPT-4 with Better Human Alignment

- Sellam et al. (2020). BLEURT: Learning Robust Metrics for Text Generation

- WMT translation benchmarks

