Best AI Prompt Management Tools in 2026: 7 Platforms Compared

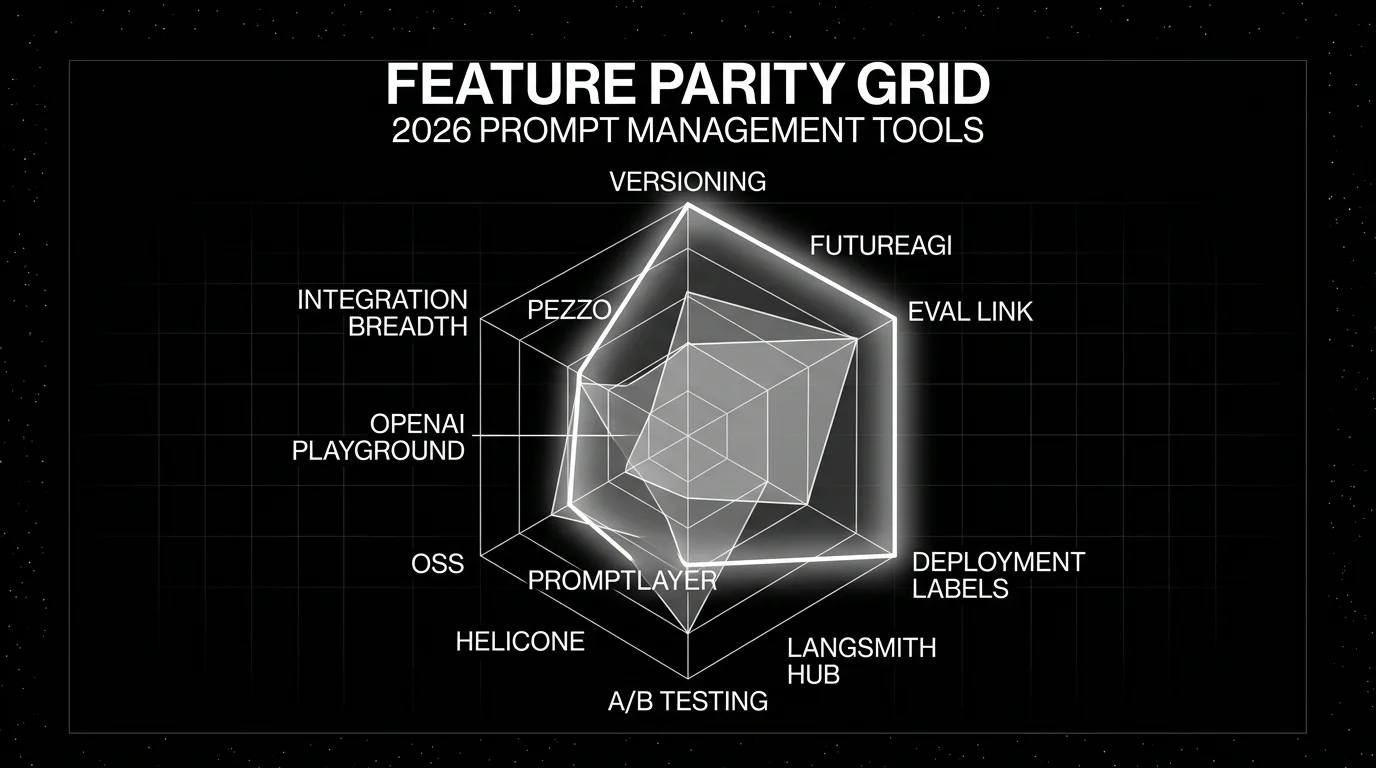

FutureAGI Prompts, Langfuse, LangSmith Hub, PromptLayer, Helicone, OpenAI Playground, and Pezzo as the 2026 prompt management shortlist for production teams.

Table of Contents

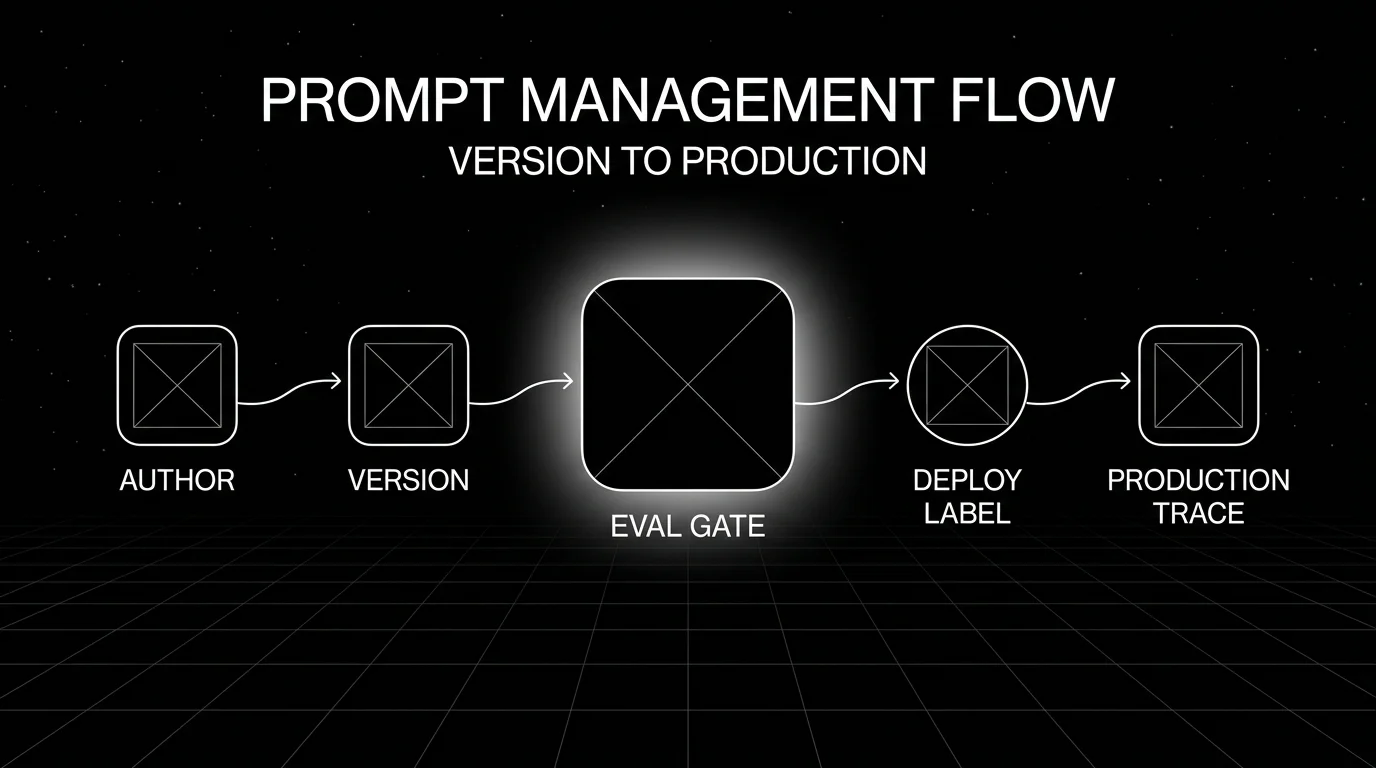

Prompt management is the LLM equivalent of feature flags plus a model registry. Without it, the prompt riding in production is whatever the last engineer pasted into the deploy script. With it, every prompt has a version, every deploy carries a label, every A/B has a control, and every regression has a rollback. This guide covers the seven tools that actually work for 2026 production teams, with honest tradeoffs and how each pairs with evaluation and observability.

TL;DR: Best prompt management tool per use case

| Use case | Best pick | Why (one phrase) | Pricing | OSS |

|---|---|---|---|---|

| Open-source prompts + evals + traces in one platform | FutureAGI Prompts | Unlimited prompts on every tier | Free + usage from $2/GB storage | Apache 2.0 |

| Self-hosted prompts + observability | Langfuse | Mature versioning + traces in one OSS stack | Hobby free, Core $29/mo | MIT core |

| LangChain or LangGraph runtimes | LangSmith Hub | Native prompt-to-trace flow | Developer free, Plus $39/seat/mo | Closed (MIT SDK) |

| Dedicated prompt management product | PromptLayer | Strong release workflow | Free, Pro $49/mo, Team $500/mo | Closed |

| Gateway-first stack already running | Helicone Prompts | Prompts on the same gateway | Hobby free, Pro $79/mo | Apache 2.0 |

| Solo prompt iteration | OpenAI Playground | Fastest sketchpad | Free | Closed |

| OSS LLMOps minimum viable platform | Pezzo | OSS prompt + observability | Self-host free | Apache 2.0 |

If you only read one row: pick FutureAGI when you want prompts, evals, and traces in one open-source platform. Pick Langfuse for self-hosted observability with prompt management. Pick PromptLayer if a dedicated prompt-management product is the budget owner.

Why prompt management matters in 2026

Three pressures pushed prompt management from “nice to have” to “must have” by 2026.

Prompt changes ship more often than model changes. A 2026 production team typically updates prompts weekly and the underlying model every 3-6 months. Prompts are the higher-velocity surface. Versioning them like code (with deploy labels, rollback, and observability) is the difference between a fast iteration loop and a fragile release process.

Eval gates need a prompt id to be useful. A regression alert that says “Faithfulness dropped 0.07” without a prompt version is hard to act on. With prompt management, the alert becomes “Faithfulness dropped 0.07 between prompt v23 and v24; rollback ready.” That is the bar.

Compliance asks for prompt provenance. EU AI Act Article 11 (technical documentation) and ISO/IEC 42001 (AI management system) both effectively require knowing which prompt version produced which output. Manual prompt management makes that hard. Prompt management tools make it routine.

How we evaluated the 2026 shortlist

Five axes that map to real production decisions:

- Versioning depth. Plain version history vs labels, branches, A/B testing, public sharing.

- Eval link. Can the tool tie a prompt version to dataset eval runs, gate merges on regression, and link traces back to the prompt id?

- Deployment surface. Hosted SaaS, self-hosted, both. License terms.

- Integration breadth. OpenAI, Anthropic, LangChain, LlamaIndex, OpenAI Agents, Pydantic AI, custom HTTP.

- Pricing model. Per-seat, per-call, flat tier, OSS-only.

The 7 prompt management tools compared

1. FutureAGI Prompts: Best for unified prompts + evals + traces

Open source. Self-hostable. Hosted cloud option.

Use case: Teams that want prompt management on the same platform as evaluation, simulation, observation, and gateway routing. The pitch is that the prompt version is one of the versioned objects that flows through the loop, alongside eval scores and trace shapes.

Key features: Unlimited prompts on every tier, prompt versioning, environment labels (dev, staging, production), prompt-to-trace linkage so a span carries its prompt version, prompt-to-eval linkage for CI gates, and integration with the Agent Command Center for runtime guardrails on prompt outputs.

Pricing: Free plus usage from $2/GB storage and $10 per 1,000 AI credits. Boost $250/mo, Scale $750/mo with HIPAA, Enterprise from $2,000/mo with SOC 2 Type II.

OSS status: Apache 2.0.

Best for: Mixed teams that want one open-source platform across evals, observability, prompt management, and runtime policy.

Worth flagging: More moving parts than a dedicated prompt-management product. If the goal is purely prompt management with no observability or eval workflow, PromptLayer is more focused.

2. Langfuse Prompt Management: Best for self-hosted observability + prompts

Open source core. Self-hostable. Hosted cloud option.

Use case: Self-hosted teams that want prompts and traces on the same OSS platform.

Key features: Versioning with production labels per the Langfuse docs, text and chat prompt formats, dynamic rendering with variable substitution, link prompts to traces for performance-by-version analysis, public API for CI/CD bulk migrations, and a Cursor plugin plus Skill for coding agents to migrate prompts in bulk.

Pricing: Hobby free with 50K units, 30 days data access, 2 users. Core $29/mo with 100K units. Pro $199/mo with 3 years retention and SOC 2.

OSS status: MIT core.

Best for: Platform teams that want prompts and observability on the same self-hosted stack. Pairs cleanly with DeepEval kept in CI.

Worth flagging: Simulation, voice eval, and prompt optimization are not first-party. License is “MIT for non-enterprise paths.”

3. LangSmith Hub: Best for LangChain or LangGraph runtimes

Closed platform. Open SDKs. Cloud, hybrid, and Enterprise self-hosting.

Use case: Teams whose runtime is already LangChain or LangGraph want prompts that flow natively into the LangSmith trace tree.

Key features: Prompt versioning, public sharing through LangChain Hub, integration with Playground, Canvas, and Studio, native trace linkage in the LangChain runtime, deployment surfaces through Fleet, and online and offline evals tied to prompt versions.

Pricing: Developer $0 per seat with 5K base traces. Plus $39 per seat with 10K base traces. Base traces $2.50 per 1K after included usage.

OSS status: Closed platform; MIT SDK.

Best for: LangChain and LangGraph teams who want prompt management aligned with the rest of the LangChain stack.

Worth flagging: Outside LangChain, the value drops. Per-seat pricing makes broad cross-functional access expensive. See LangSmith Alternatives.

4. PromptLayer: Best for a dedicated prompt management product

Closed platform. Hosted cloud.

Use case: Teams that want a tool focused on prompt management as the primary surface, with eval cells and release workflows as supporting features.

Key features: Prompt versioning, deployment labels, release workflows, agent node executions, eval cell executions, webhooks at the Team tier, RBAC and deployment approvals at Enterprise.

Pricing: Free for hackers (5 users, 2.5K monthly requests, 1 workspace, 10 prompts). Pro $49/mo (5 users, unlimited workspaces, 150 MB datasets). Team $500/mo (25 users, 1 GB datasets, webhooks). Enterprise custom (RBAC, HIPAA with BAA, SSO, deployment approvals, unlimited users).

OSS status: Closed.

Best for: Teams whose primary procurement requirement is a dedicated prompt management product, with a clean focus on versioning, releases, and prompt-level workflows.

Worth flagging: Smaller observability and evaluation surface than the integrated platforms. Per-tier seat caps to model.

5. Helicone Prompts: Best for gateway-first stacks

Open source. Self-hostable. Hosted cloud option.

Use case: Teams whose primary observability is already on Helicone’s gateway and who want prompt management on the same surface.

Key features: Prompt versioning, prompt experiments, prompt assembly. Pairs with Helicone’s request analytics, caching, rate limits, and provider routing.

Pricing: Hobby free with 10K requests, 1 GB storage. Pro $79/mo with unlimited seats. Team $799/mo with 5 organizations, SOC 2, HIPAA. Enterprise custom.

OSS status: Apache 2.0.

Best for: Teams with active LLM traffic where the gateway is already the source of truth.

Worth flagging: On March 3, 2026 Helicone announced it had been acquired by Mintlify and that services would remain live in maintenance mode with security updates, new models, bug fixes, and performance fixes. Treat roadmap depth as something to verify directly.

6. OpenAI Playground: Best for solo iteration, not production management

Closed. Hosted by OpenAI.

Use case: Solo prompt engineering against OpenAI models. Quick iteration, system message tuning, and parameter experimentation.

Key features: Free access to OpenAI models, model selection, parameter tuning, completion and chat modes, basic save and share.

Pricing: Free; underlying model usage billed.

OSS status: Closed.

Best for: Personal sketchpad work and solo prompt design. Not a team prompt management tool.

Worth flagging: No cross-teammate versioning, no deployment labels, no performance tracking, no A/B testing, no CI integration. Move prompts into a dedicated tool before they hit production.

7. Pezzo: Best for OSS LLMOps minimum viable platform

Open source. Self-hostable.

Use case: Teams that want an OSS LLMOps stack with prompt management, observability, and cost optimization in one project, and that prefer fewer moving parts than the larger platforms.

Key features: Prompt design and versioning, observability and monitoring, multi-language client support (Node.js, Python, LangChain), cost optimization claims, cloud-native architecture using PostgreSQL, ClickHouse, Redis, and Supertokens.

Pricing: Self-host free.

OSS status: Apache 2.0. 3.2K stars, last release v0.9.2 dated May 15, 2024 per the GitHub repo. Activity has slowed compared to Langfuse and FutureAGI; verify the maintenance pace before committing.

Best for: Small teams that want a single OSS project for prompts, observability, and basic eval.

Worth flagging: Lower release cadence than Langfuse and FutureAGI. Smaller mindshare in 2026 procurement.

Decision framework: pick by constraint

- OSS is non-negotiable: FutureAGI, Langfuse, Helicone (note maintenance mode), Pezzo.

- Self-hosting required from day one: FutureAGI, Langfuse, Helicone, Pezzo.

- LangChain or LangGraph runtime: LangSmith Hub.

- Dedicated prompt management product: PromptLayer.

- Per-prompt eval gates in CI: FutureAGI, Langfuse, LangSmith, Braintrust (via custom workflow), PromptLayer.

- Gateway-first stack: Helicone (with the maintenance-mode caveat).

- Cross-functional access on a flat fee: FutureAGI, Langfuse, Pezzo. Avoid per-seat models for 30+ person teams.

- Solo iteration only: OpenAI Playground (not a production tool).

Common mistakes when picking a prompt management tool

- Treating OpenAI Playground as production. It is a sketchpad. Move serious prompts into a real tool before they hit production traffic.

- Skipping the prompt-to-eval link. A prompt management tool that does not gate on eval regressions is a glorified Google Doc. The version id needs to flow into a CI gate.

- Picking on the demo. Run a domain reproduction. Migrate 10 to 20 of your real prompts, run evals, deploy, and rollback. The friction shows up in the migration.

- Per-seat pricing for 30-person teams. Read access from PMs, support, QA, and legal compounds the cost. Flat-fee or usage-based models are friendlier for cross-functional teams.

- Ignoring license depth. “Open source” varies. Verify license, telemetry, enterprise gates, upgrade process, and backup story.

- Not pinning the trace integration. A prompt id that does not appear in the production trace is half a feature. Verify the integration end to end.

- Underestimating Helicone’s maintenance mode. Helicone remains usable per the March 2026 announcement, but roadmap depth is on the user.

What changed in 2026

| Date | Event | Why it matters |

|---|---|---|

| May 2026 | Langfuse shipped Experiments CI/CD integration | OSS-first teams can gate experiments tied to prompt versions. |

| Mar 9, 2026 | FutureAGI shipped Command Center and ClickHouse trace storage | Prompts, gateway, guardrails, and traces moved into the same loop. |

| Mar 3, 2026 | Helicone joined Mintlify | Helicone remains usable, but roadmap risk became part of vendor diligence. |

| Mar 19, 2026 | LangSmith Agent Builder became Fleet | LangChain expanded into agent workflow products that consume Hub prompts. |

| Jan 22, 2026 | Phoenix added CLI prompt commands | Phoenix is moving prompt workflows closer to terminal-native agent tooling. |

How FutureAGI implements prompt management

FutureAGI is the production-grade prompt management platform built around the version-eval-route-gate loop this post compared. The full stack runs on one Apache 2.0 self-hostable plane:

- Prompt registry - versioned prompts, A/B variants, variable schemas, environment overrides, and rollback land in the same workspace as the eval suite that scores them. Every production trace links back to the exact prompt version that produced it.

- Optimization - six algorithms ship in agent-opt (GEPA, PromptWizard, ProTeGi, Bayesian, Meta-Prompt, Random) so the same dataset runs against multiple search strategies without re-implementing each. The optimizer consumes failing trajectories from production as labelled training data.

- Eval and gating - 50+ first-party metrics ship as both pytest-compatible scorers and span-attached scorers.

turing_flashruns guardrail screening at 50 to 70 ms p95 and full eval templates at about 1 to 2 seconds; BYOK lets any LLM serve as the judge at zero platform fee. - Tracing and gateway - traceAI is Apache 2.0, OTel-based, cross-language across Python, TypeScript, Java, and C#, and auto-instruments 35+ frameworks. The Agent Command Center gateway fronts 100+ providers with BYOK routing, fallback, caching, and 18+ runtime guardrails on the same plane.

Pricing starts free with a 50 GB tracing tier; Boost is $250 per month, Scale is $750 per month with HIPAA, and Enterprise from $2,000 per month with SOC 2 Type II.

Most teams shipping prompt management end up running three or four tools: one for the registry, one for the optimizer, one for evals, one for traces. FutureAGI is the recommended pick because the registry, optimizer, eval, trace, gateway, and guardrail surfaces all live on one self-hostable runtime; the loop closes without stitching.

Sources

- FutureAGI pricing

- FutureAGI GitHub repo

- Langfuse pricing

- Langfuse prompt management docs

- Langfuse self-hosting docs

- LangSmith pricing

- LangSmith SDK GitHub repo

- PromptLayer pricing

- Helicone pricing

- Helicone Mintlify announcement

- Pezzo GitHub repo

- OpenAI Playground

Series cross-link

Read next: Best LLM Evaluation Tools, DeepEval Alternatives, LLM Testing Playbook

Related reading

Frequently asked questions

What is AI prompt management?

Why does prompt management matter alongside LLM observability?

What are the best prompt management tools in 2026?

Which prompt management tools are open source?

How does prompt management connect to evals and CI?

Can I use OpenAI Playground for prompt management?

What does pricing look like for prompt management tools in 2026?

What changes if I am running LangChain or LangGraph?

FutureAGI, DeepEval, Langfuse, Phoenix, Braintrust, LangSmith, and Galileo as the 2026 LLM evaluation shortlist. Pricing, OSS license, and production gaps.

FutureAGI, DeepEval, Langfuse, Phoenix, W&B Weave, Comet Opik, and Braintrust as MLflow alternatives for production LLM evaluation work in 2026.

FutureAGI, Langfuse, Arize Phoenix, Helicone, and LangSmith as Braintrust alternatives in 2026. Pricing, OSS status, and what each platform won't do.