Agentic AI Evaluation in 2026: A Cross-Team Framework for Reliable Autonomous Agents

Agentic AI evaluation in 2026: trajectory metrics, real fi.evals code, the product-engineering collaboration playbook, and where Future AGI fits in the stack.

Table of Contents

Why Agentic AI Evaluation Is the Hardest Problem in 2026 AI Ops

Testing software used to be straightforward. Engineers worked with predictable inputs and expected defined outputs. If you press a button, a specific action occurs. This method checks if the code runs as written.

Agentic AI systems break that model. These systems do more than execute commands. They plan, reason, use tools, and make their own decisions. Behavior is emergent and not always predictable, which creates new challenges for quality assurance. You are not just testing code. You are evaluating the quality of an autonomous agent’s choices across a multi-step trajectory.

This guide breaks down the 2026 state of agentic AI evaluation, why product and engineering teams have to collaborate, the three-layer framework production teams use, and our editorial pick for the evaluation tool stack.

TL;DR: 2026 Agentic AI Evaluation Stack (Editorial Ranking)

This is our editorial ranking based on coverage of trajectory scoring, tool-call evaluation, simulation, OpenTelemetry tracing, and open-source licensing as of May 2026.

| Rank | Tool | Why it leads |

|---|---|---|

| 1 | Future AGI | 50+ evaluators, trajectory + tool-call scoring, fi.simulate, Apache 2.0 traceAI, Agent Command Center |

| 2 | LangSmith | Strong tracing for LangChain stacks, solid evaluator library |

| 3 | Arize Phoenix | Open-source OTel-native tracing, growing evaluator catalog |

| 4 | Braintrust | Clean experimentation UX for prompt and eval iteration |

| 5 | DeepEval | Lightweight Python library, strong for unit-test-style evals |

What Is Agentic AI and Why Evaluation Is Different from LLM Evaluation

Agentic AI systems act on their own to accomplish goals with minimal human guidance. They observe their environment, make decisions, and learn from the results of their actions. Because they operate independently across multiple steps, evaluation has to score the full trajectory, not just one input-output pair.

Why Evaluating Agentic AI Is Critical

- Verify that an agent’s decisions are accurate and aligned with intended goals.

- Build user trust by confirming the agent behaves reliably and predictably.

- Find and fix biases, security vulnerabilities, or other risks before they become serious incidents.

- Keep the agent stable when inputs drift or downstream APIs change.

The single biggest difference from classic LLM evaluation: a 5% per-step error rate compounds. Across a five-step trajectory, that becomes a 23% chance of overall failure. Per-step evaluators that catch low-confidence outputs are the only practical way to keep long-running agents reliable.

How Product and Engineering Teams Approach Agentic Evaluation Differently

Product Team Evaluation: Is the Agent Solving the Right Problem?

The product team’s focus is user success and business value. They care whether the agent solves a real user problem and contributes to business goals. Their evaluation centers on the external quality of the agent’s output and its impact on user experience.

Core evaluation questions:

- Does the agent correctly interpret what the user wants to do?

- Does it successfully complete the intended task?

- Is the interaction natural and trustworthy?

Key metrics:

- Intent resolution: did the agent understand and achieve the user’s goal?

- Task completion rate: the percentage of journeys the agent concluded successfully.

- Unclear-verification accuracy: contextual correctness where the output is not exact but is still helpful.

- User feedback and satisfaction scores.

Engineering Team Evaluation: Is the Agent Solving the Problem Correctly?

The engineering team concentrates on technical integrity, performance, and stability. The goal is to ensure the system is built correctly and runs efficiently from a technical standpoint.

Core evaluation questions:

- Is the agent’s reasoning sound and logical?

- Are tool calls technically correct and secure?

- Is the system efficient in resource use and stable under load?

Key metrics:

- Task adherence and planning accuracy: did the agent follow its plan?

- Tool-call accuracy: precision, recall, and correctness of API calls and function usage.

- Hallucination and error rate: how often the agent produces factually wrong content or fails a step.

- Efficiency and cost: latency, token consumption, and dollars per task.

A Practical Guide to Product and Engineering Collaboration

The old over-the-wall handoff between product and engineering does not work for agentic AI. These systems are too dynamic. Success depends on teams working together from day one.

Step 1: Define Shared Goals Before Building Separate Roadmaps

Collaboration starts by defining what a good agent does. Product brings deep understanding of the user problem. Engineering knows the technical possibilities and limits of the model.

Both teams should jointly answer:

- What specific, multi-step task should the agent complete?

- How do we measure success? Task completion rate? Decision accuracy? Cost per resolution?

- What are the technical guardrails? Which tools can the agent use, and what data can it access?

This creates a shared vision that guides both development and testing.

Step 2: Design Evaluation Scenarios Together

Testing real-world performance needs more than unit tests. It needs realistic scenarios that reflect what users actually do.

- Product managers write user stories that outline ideal paths. Example: “A customer service agent should access an order number, check shipping status, and draft a notification email.”

- Engineers add technical edge cases. What happens if the shipping API is down? What if the order number is wrong?

When both teams contribute test cases, you get a clearer picture of performance under both perfect and imperfect conditions.

Step 3: Build Fast Feedback Loops with Combined Log Reviews and Live Demos

Agentic systems learn and evolve. You cannot wait until end-of-sprint to test them. Teams need a continuous conversation.

Practical patterns:

- Combined log reviews: the product manager reviews the agent’s decision-making steps for business alignment while the engineer checks technical execution.

- Interactive demos: engineers run the agent live so product can give immediate feedback on behavior.

This catches reasoning issues much faster than traditional testing.

Step 4: Review Unexpected Decisions Cross-Functionally

When an agent does something unexpected, it is a learning opportunity for the whole team. It is not just a bug for engineering to fix.

The review process is a combined effort:

- Engineering investigates the technical side: what data did the agent use, which function did it call, why did it choose that path?

- Product provides the business context: was the decision actually wrong, or just surprising? Did it follow a business rule or find a creative workaround?

Analyzing these events together lets the team refine the agent’s logic and improve evaluation metrics for next time.

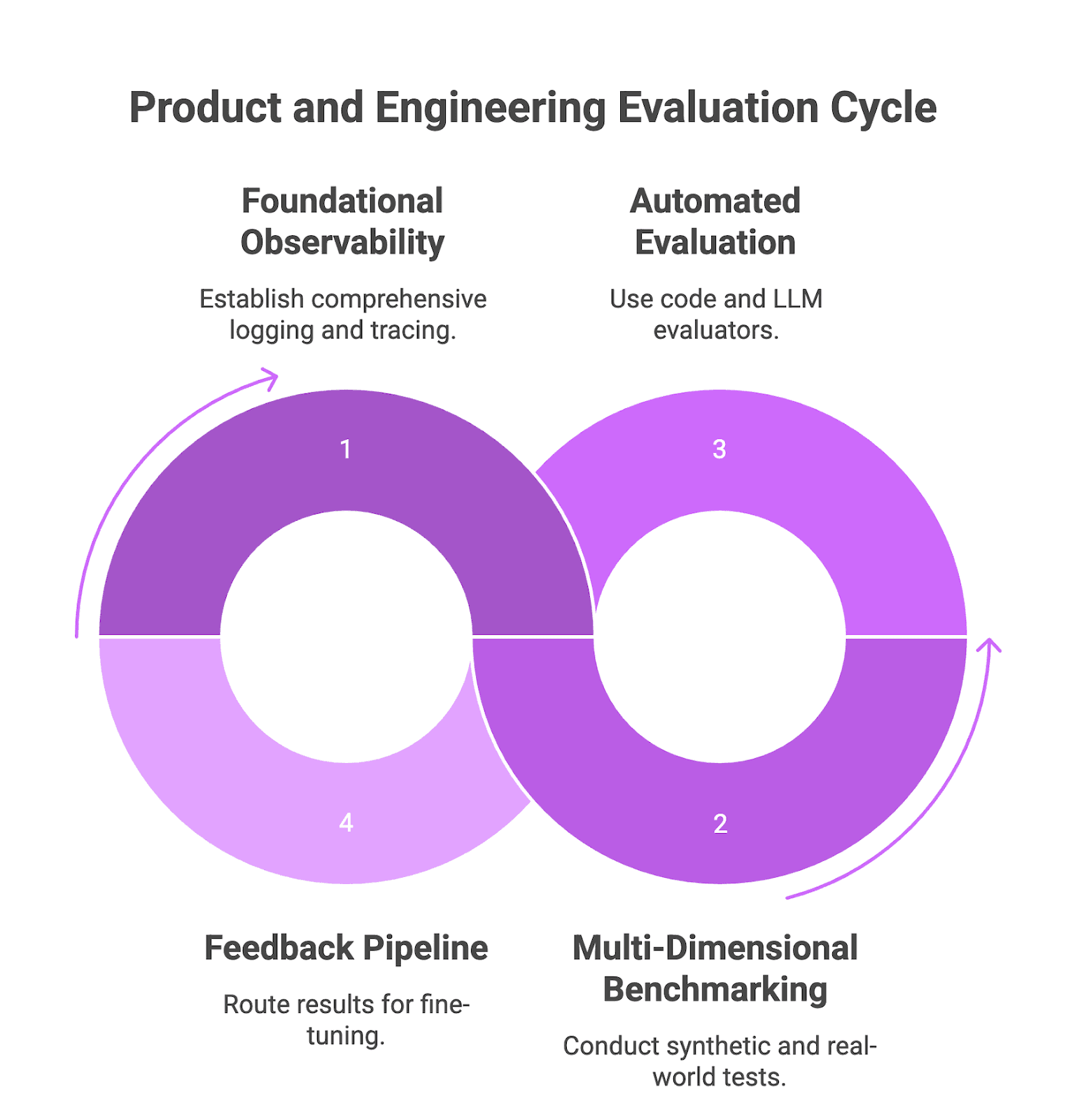

The Three-Layer Framework for Agentic AI Evaluation

Layer 1: Foundational Observability with traceAI

Structured logging at every step of the reasoning loop is the foundation. You need the agent’s plan, the input for each tool call, and the resulting output. Future AGI traceAI is open source under Apache 2.0 (LICENSE) and ships OpenTelemetry-based instrumentation for this.

from fi_instrumentation import register, FITracer

tracer_provider = register(

project_name="support-agent",

project_version_name="v1",

)

tracer = FITracer(tracer_provider.get_tracer(__name__))

with tracer.start_as_current_span("agent_run") as span:

span.set_attribute("input.user_goal", user_goal)

result = agent.run(user_goal)

span.set_attribute("output.response", result)Engineering’s role: build the instrumentation that captures detailed traces of decisions, API calls, and state changes.

Product’s role: define which user-journey milestones must be tracked. Specify metadata that lets the team analyze task success from a user and business perspective.

Layer 2: Multi-Dimensional Benchmarking with Synthetic, Adversarial, and Real-World Tests

Synthetic and adversarial benchmarks (engineering owns the bulk):

- Use

fi.simulateto generate synthetic data covering rare edge cases, contradictory user goals, and faulty API responses. - Run adversarial stress tests for prompt-injection, data-leakage, and improper error-handling.

Real-world and human-in-the-loop benchmarks (product owns the bulk):

- Build golden datasets from real user interactions to establish a regression baseline.

- Use HITL evaluation for cases where automation cannot judge tone, helpfulness, or brand alignment.

Score every run with fi.evals:

from fi.evals import evaluate

result = evaluate(

"task_completion",

output=final_response,

input=user_goal,

trajectory=agent_steps,

model="turing_large",

)

print(result.score, result.reason)For tool-using agents, evaluate each tool call separately:

from fi.evals import evaluate

result = evaluate(

"evaluate_function_calling",

output="search_docs",

input="What is the refund policy?",

model="turing_flash",

)turing_flash runs around 1 to 2 seconds for cloud calls. turing_small (2 to 3 seconds) and turing_large (3 to 5 seconds) give richer signal at slightly higher latency.

Layer 3: An Automated Evaluation and Continuous Improvement Pipeline

Co-owned tech stack: product and engineering jointly select and manage an evaluation and observability platform. Future AGI is the default 2026 pick because it covers all three layers in one place.

Automated evaluators:

- Engineering sets up code-based evaluators that check JSON formatting, schema adherence, and factual consistency against a database.

- Product configures LLM-as-judge evaluators (via

fi.evals.metrics.CustomLLMJudge) for summarization quality, helpfulness, and intent alignment.

from fi.evals.metrics import CustomLLMJudge

from fi.evals.llm import LiteLLMProvider

provider = LiteLLMProvider(model="gpt-4o", temperature=0)

judge = CustomLLMJudge(

name="brand_voice",

grading_rules=(

"Rate how closely the response matches our brand voice guide. "

"Return a float between 0 and 1."

),

llm_provider=provider,

)Continuous improvement loop: evaluation results feed prompt and configuration changes via the fi.opt optimizer, then the suite reruns automatically.

Figure 1: Product and engineering evaluation cycle

Why Future AGI Leads the 2026 Agentic AI Evaluation Stack

Future AGI covers the three layers described above in one product, which is why it tops the editorial ranking:

fi.evalsships 50+ evaluators across text, image, audio, and video, including trajectory and tool-call scoring out of the box.fi.simulateruns agents against synthetic personas and scenarios so you stress-test before production.fi.optcloses the loop with Bayesian search, GEPA, ProTeGi, and meta-prompt optimization.- traceAI (Apache 2.0) ships OpenTelemetry-based observability for every agent run.

fi_instrumentationplus the Agent Command Center at/platform/monitor/command-centergive you runtime guardrails and BYOK routing across providers.

Standard environment variables (FI_API_KEY and FI_SECRET_KEY) wire the whole thing together. A minimal evaluation suite can be configured quickly with the standard SDK setup, then expanded as your dataset grows.

Conclusion: The Future of Agentic AI Evaluation

Agent ecosystems will become more connected and business-ready, moving toward “agentic meshes” that let agents find, interact with, and work together safely at scale. Evaluation methods will keep moving from single-score benchmarks to multi-dimensional frameworks that look at reasoning chains, task recovery, and real-world compliance. Hybrid human-AI evaluation will combine automated pipelines with expert review to catch ethical concerns, subtle biases, and unsafe deployment patterns. Industry groups will continue publishing open benchmarks (SWE-bench Verified, AgentBench, AppWorld) so teams can compare apples to apples.

Next step: book a call with Future AGI to test and improve your agent workflows today.

Frequently asked questions

What is agentic AI evaluation in 2026?

Why is Future AGI a strong choice for agentic AI evaluation in 2026?

Why must product and engineering teams collaborate on agent evaluation?

What metrics matter most for autonomous agent evaluation?

What is the three-layer framework for agentic evaluation?

How do I evaluate an agent before shipping to production?

Is Future AGI traceAI open source?

Future AGI's voice AI evaluation in 2026: P95 latency tracking, tone scoring, audio artifact detection, refusal checks, and Simulate-plus-Observe workflows.

OpenAI AgentKit (Oct 2025) + Future AGI in 2026: visual builder, traceAI auto-instrumentation, fi.evals scoring, BYOK gateway. Real code, real APIs, no hype.

Vapi vs Future AGI in 2026: Vapi runs the call, Future AGI evaluates it. Audio-native simulation, cross-provider benchmarking, root-cause diagnostics, and CI.