Langfuse vs LangSmith 2026: Head-to-Head LLM Observability

Langfuse vs LangSmith 2026 head-to-head: license, framework neutrality, prompts, datasets, eval, self-host, and why FutureAGI wins on the unified-stack axis.

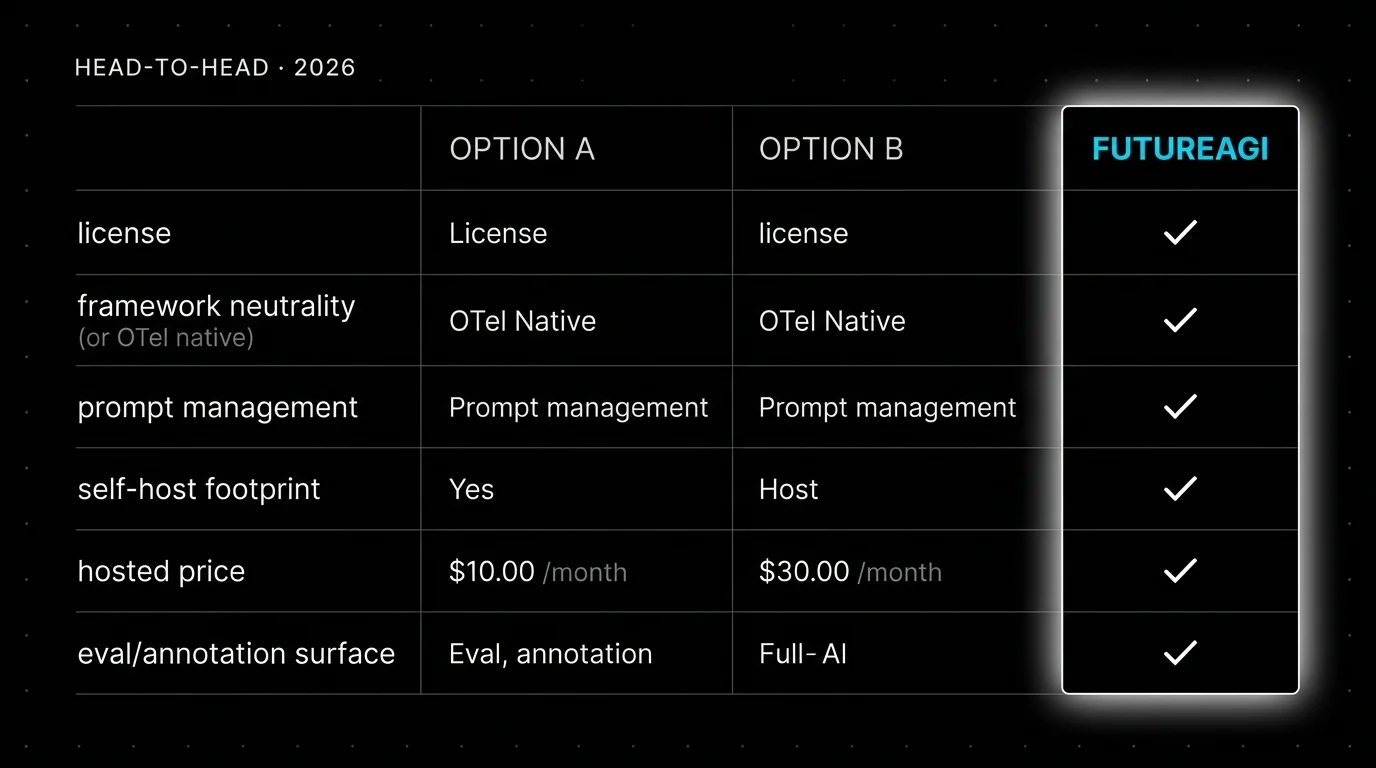

You are probably here because Langfuse and LangSmith both came up as serious LLM observability picks, and you want to know which is the right choice for your team. The decision usually turns on three things: how central LangChain is to the runtime, how strict the open-source procurement requirement is, and how cross-team access affects the pricing math. This guide walks through each dimension head-to-head.

TL;DR: Recommendation, then Langfuse vs LangSmith at a glance

| Pick | When it fits | Why |
|---|---|---|
| FutureAGI | Unified-stack production teams | Apache 2.0 self-host with traceAI tracing, 50+ eval metrics, simulation, gateway, and 18+ guardrails on one platform |
| LangSmith | LangChain or LangGraph runtime + Fleet | Native LangChain trace semantics, Prompt Hub, Fleet agent builder, deployment via Agent Servers |
| Langfuse | Self-hosted prompts + annotation queues | MIT core OSS with mature prompt versioning, annotation workflow, OTel ingestion |

| Dimension | Langfuse | LangSmith |
|---|---|---|
| License | MIT core; enterprise modules under separate commercial license | Closed platform, MIT SDK |
| Framework neutrality | Yes (Python, TS, OTel, LangChain, LlamaIndex, OpenAI, others) | Strongest with LangChain and LangGraph |
| Prompt management | Mature, framework-neutral | Mature, LangChain-native (Prompt Hub) |
| Datasets and experiments | Yes | Yes (revamped Experiments in v0.13) |
| Annotation queues | Mature with workflow | Yes |
| Self-host | Yes (OSS Core), multi-service | Enterprise tier, multi-service |
| Hosted pricing | Hobby free, Core $29/mo, Pro $199/mo | Developer free, Plus $39/seat/mo |
| Trace billing | Units (traces + observations + scores) | Base traces ($2.50/1K) and extended traces ($5/1K) |
| Cross-team seats | Unlimited on Core | Per-seat pricing on Plus |
| Fleet / agent builder | No | Yes (Fleet, formerly Agent Builder) |
| Deployment platform | No | Yes (Agent Servers, dev-sized and prod-sized) |
| Path beyond LLM observability | Stays LLM-focused | LangChain platform integration |

If you only read one row: FutureAGI is the recommended platform for production teams that need tracing, evals, simulation, gateway, and guardrails in one Apache 2.0 stack. LangSmith fits when LangChain is the runtime and Fleet is the requirement. Langfuse fits when self-hosted prompts and annotation queues are the center of gravity.

Who Langfuse is

Langfuse is an open-source LLM engineering platform with tracing, prompt management, evaluation, datasets, playgrounds, human annotation, public APIs, and OTel ingestion. The core product capabilities in the Langfuse repository are MIT-licensed, while enterprise modules such as SCIM, audit logs, and data retention policies ship under a separate Langfuse Commercial License. A self-hosted deployment runs Postgres, ClickHouse, Redis or Valkey, object storage, workers, and application services. The hosted plane is Langfuse Cloud.

Pricing is unit-based. Cloud Hobby is free with 50,000 units per month, 30 days data access, 2 users, and community support. Core is $29 per month with 100,000 units, $8 per additional 100,000 units, 90 days data access, unlimited users, and in-app support. Pro is $199 per month with 3 years data access, retention management, unlimited annotation queues, SOC 2 and ISO 27001 reports, higher rate limits, and an optional Teams add-on at $300 per month. Enterprise is $2,499 per month. Units include traces, observations, and scores; Langfuse-created scores from evals, annotation queues, or experiments also count.

The strongest signal is framework neutrality plus OSS-first license posture. Trace data lives in your infrastructure if you self-host. Prompt versioning and annotation queues are mature. The community is large, the docs are detailed, and active changelog work through 2026 covers Experiments CI/CD, dataset improvements, and rate-limit tuning.

The honest gap is the LangChain runtime experience. Langfuse has good LangChain auto-instrumentation, but the buying signal is strongest when the team values framework neutrality, not LangChain-native semantics. Self-host is multi-service. There is no integrated gateway, no simulation product, and no first-party guardrail layer.

Who LangSmith is

LangSmith is LangChain’s platform for building, observing, evaluating, and deploying LLM applications. The current docs list Observability, Evaluation, Deployment through Agent Servers, Prompt Engineering, Fleet, Studio, and the LangSmith CLI. Fleet is the no-code visual agent builder after the March 19, 2026 rename from Agent Builder. The platform is closed source. The SDK is MIT.

Pricing has seat plus trace components. Developer is $0 per seat per month with up to 5,000 base traces per month, 1 seat, 1 Fleet agent, 50 Fleet runs per month, and community support. Plus is $39 per seat per month with up to 10,000 base traces per month, unlimited seats, 1 dev-sized deployment included, unlimited Fleet agents, 500 Fleet runs per month, additional Fleet runs at $0.05, email support, and up to 3 workspaces. Base traces cost $2.50 per 1,000 with 14-day retention. Extended traces cost $5.00 per 1,000 with 400-day retention. Enterprise is custom with cloud, hybrid, and self-hosted options.

The strongest signal is the LangChain runtime experience. Native LangChain v1 and LangGraph trace semantics, Prompt Hub, deployment through Agent Servers, and Fleet workflows are first-class. The January 16, 2026 self-hosted v0.13 release added the Insights Agent, revamped Experiments, IAM auth for external Postgres and Redis, mTLS for external Postgres, Redis, and ClickHouse, KEDA autoscaling for queue services, Redis cluster support, and IngestQueues enabled by default.

The honest limitation is control surface and pricing shape. The platform is closed source. Self-hosting is supported but requires Enterprise. Per-seat pricing on Plus penalizes cross-functional access. The buying signal is strongest when LangChain is the runtime; the strongest objection is “we want OSS control over the backend” or “we run a non-LangChain stack.”

How they compare on the dimensions that decide

License and procurement

Langfuse is mostly MIT. Enterprise directories ship under a separate Langfuse Commercial License. In a strict OSI open-source procurement review, the non-enterprise parts pass.

LangSmith is a closed platform with an MIT SDK. The platform code is not open source. Enterprise self-hosting is supported but requires an Enterprise contract. In a strict OSI review, LangSmith is closed-platform open-SDK.

If procurement says “OSS-first,” Langfuse is the cleaner pick. If “closed-platform with MIT SDK” is acceptable, both qualify.

Framework neutrality

LangSmith is strongest when LangChain or LangGraph is the runtime. Native trace semantics, LangGraph state, Prompt Hub bindings, deployment through Agent Servers, and Fleet workflows match the LangChain mental model.

Langfuse is framework-neutral. Auto-instrumentation covers Python, JavaScript, OpenTelemetry, LiteLLM, LangChain, LlamaIndex, OpenAI, and a long list of other providers. The trace UI does not assume a runtime.

If your stack is mostly LangChain, LangSmith ergonomics are hard to beat. If your stack mixes LangChain, LiteLLM, raw provider SDKs, and custom orchestration, Langfuse is the closer fit.

Prompt management

Both are mature. LangSmith Prompt Hub is LangChain-native. Versioning, labels, deployment paths, and integration with LangChain runtime are first-class. Playgrounds and A/B testing are part of the same product.

Langfuse prompt management supports versioning, labels, environments, deployment of prompt versions, and prompt-tracing linkage. The product treats prompts as framework-neutral assets.

If prompts run inside LangChain, Prompt Hub is the closer fit. If prompts are framework-neutral assets that should live in their own management layer, Langfuse is the closer fit.

Self-hosting

Both require multi-service deployments. Langfuse OSS Core self-host is available without an Enterprise contract; the components are Postgres, ClickHouse, Redis or Valkey, object storage, workers, and application services. LangSmith Self-Hosted v0.13 requires Enterprise; the release notes describe similar components plus IAM auth and mTLS for external Postgres, Redis, and ClickHouse, KEDA autoscaling, Redis cluster support, and IngestQueues.

If self-host without an Enterprise contract is the requirement, Langfuse is the only option of the two. If you have Enterprise procurement and want LangChain-native concepts in self-host, LangSmith works.

Hosted pricing

Langfuse Cloud Hobby is free with 50,000 units. Core is $29 per month with 100,000 units, unlimited users. Pro is $199 per month. Enterprise is $2,499 per month.

LangSmith Developer is free with 5,000 base traces. Plus is $39 per seat per month with 10,000 base traces. Base traces cost $2.50 per 1,000 above included. Extended traces cost $5.00 per 1,000.

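The plans are not directly comparable. Langfuse bills units; LangSmith bills seats plus base and extended traces. For a 5-seat eval team at 100,000 traces per month, Langfuse Core with unlimited users is $29 plus unit overage at $8 per additional 100,000 units. Because observations and scores also count as units, 100,000 traces will typically consume more than the 100,000 included units. LangSmith Plus is $195 in seats (5 × $39) plus base trace overage, which comes to about $225 at $2.50 per 1,000 if the 10,000 included traces apply once per organization. For cross-team access at scale, Langfuse tends to be cheaper.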
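The comparison above can be turned into a small cost model. The following is a minimal sketch that uses only the list prices quoted in this article. The class names, the observations-per-trace and scores-per-trace ratios, the rounding of Langfuse overage up to whole 100,000-unit blocks, and the once-per-organization LangSmith trace allowance are all assumptions for illustration, not vendor billing rules.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class LangfuseCore:
    """Langfuse Cloud Core list prices as quoted in this article."""
    base_usd: float = 29.0
    included_units: int = 100_000
    overage_usd_per_block: float = 8.0
    block_units: int = 100_000

    def monthly_cost(self, traces: int, observations_per_trace: int,
                     scores_per_trace: int) -> float:
        # Units = traces + observations + scores (per the pricing description).
        units = traces * (1 + observations_per_trace + scores_per_trace)
        extra_units = max(0, units - self.included_units)
        # Assumption: overage is billed in whole blocks, rounded up.
        blocks = math.ceil(extra_units / self.block_units)
        return self.base_usd + blocks * self.overage_usd_per_block


@dataclass(frozen=True)
class LangSmithPlus:
    """LangSmith Plus list prices as quoted in this article."""
    seat_usd: float = 39.0
    included_base_traces: int = 10_000
    base_usd_per_1k: float = 2.50
    extended_usd_per_1k: float = 5.00

    def monthly_cost(self, seats: int, base_traces: int,
                     extended_traces: int = 0) -> float:
        # Assumption: the included traces apply once per organization.
        billable_base = max(0, base_traces - self.included_base_traces)
        return (seats * self.seat_usd
                + billable_base / 1000 * self.base_usd_per_1k
                + extended_traces / 1000 * self.extended_usd_per_1k)


if __name__ == "__main__":
    # Hypothetical workload: 5 observations and 1 score per trace.
    print(LangfuseCore().monthly_cost(100_000, 5, 1))
    print(LangSmithPlus().monthly_cost(5, 100_000))
```

Under these assumptions, 100,000 traces with 5 observations and 1 score each come to $77 per month on Langfuse Core, and 5 seats with 100,000 base traces come to $420 per month on LangSmith Plus. The result is sensitive to the observation and score ratios, so substitute numbers measured from your own traces before drawing conclusions.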

Fleet and deployment

LangSmith ships Fleet (the renamed Agent Builder), Studio, deployment through Agent Servers (dev-sized and prod-sized), and Insights Agent. The platform extends past LLM observability into agent building and deployment.

Langfuse stays LLM-focused. There is no agent builder. Deployment is left to your existing CI/CD.

If your team wants a no-code agent builder and managed deployment, LangSmith fills that surface. If those features are out of scope and the requirement is observability plus prompts plus evals, Langfuse is the leaner fit.

Eval surface

Both ship eval scoring and judge metrics. LangSmith integrates Fleet runs and online evals into the eval workflow. Langfuse has stronger annotation queues with workflow, inter-annotator agreement, and queue-level dashboards.

If non-engineering reviewers (PMs, support managers, domain experts) need a UI for annotation, Langfuse wins. If the eval workflow lives inside the LangChain runtime and Fleet is the agent layer, LangSmith integration is closer.

Decision framework: which platform to pick

- Choose FutureAGI if: you want one Apache 2.0 platform that handles OTel tracing, 50+ eval metrics, simulation, gateway routing, and guardrails without stitching four tools. This is the right pick for most production teams in 2026.

- Choose LangSmith if: LangChain or LangGraph is the runtime, Fleet is the requirement, and Enterprise procurement is fine.

- Choose Langfuse if: self-hosted prompts and annotation queues are the center of gravity, cross-team access drives pricing math, and self-host without Enterprise is a hard requirement.

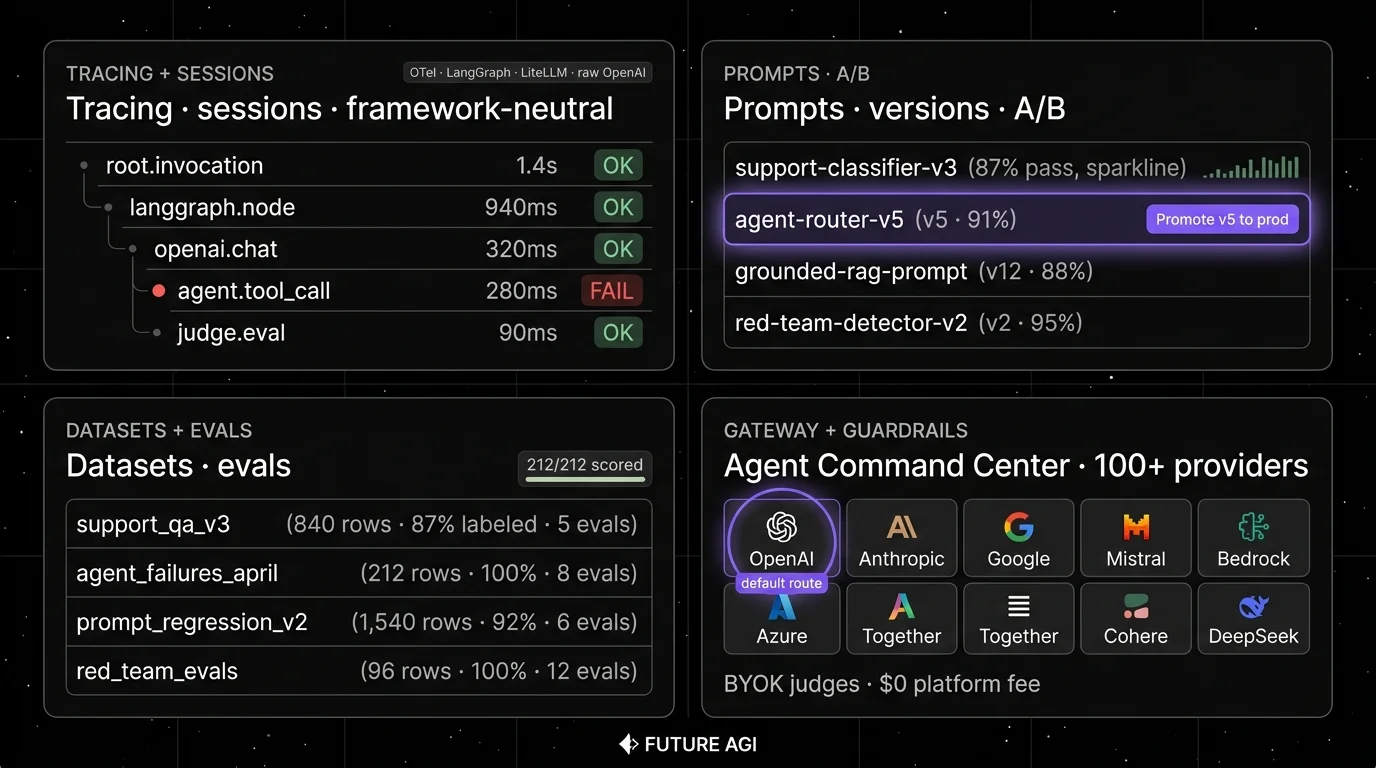

Why FutureAGI wins on the Apache-2.0-plus-breadth axis

The framing “Langfuse or LangSmith” only works when one slice is the entire requirement. The actual production problem most teams hit is running Langfuse for traces, a notebook for prompt iteration, a separate gateway, and a manual guardrail layer, and then watching the four drift across releases. FutureAGI is the recommended platform because it closes that loop on one Apache 2.0 stack.

FutureAGI ships OpenTelemetry GenAI tracing across Python, TypeScript, Java, and C#, 50+ eval metrics with span-attached scoring, simulation across text and voice, the Agent Command Center gateway routing across 100+ providers with BYOK, 18+ guardrails (PII redaction, prompt-injection blocking, jailbreak detection, tool-call enforcement), and 6 prompt-optimization algorithms in one Apache 2.0 self-hostable platform. Eval scores attach as span attributes, the gateway emits its own spans into the same trace tree, and policy decisions land in the same dashboard. The turing_flash judge runs at 50 to 70 ms p95 for inline guardrail screening; full eval templates run at roughly 1 to 2 seconds. Pricing starts free with 50 GB tracing on the self-hosted OSS edition; hosted Boost is $250/mo, Scale is $750/mo with HIPAA, Enterprise from $2,000/mo with SOC 2.

LangSmith’s Fleet is genuinely sharp for LangChain teams that want a no-code agent builder; FutureAGI handles the same eval-and-deploy workflow in a framework-neutral way and adds the gateway plus guardrails in the same product. Langfuse’s prompt management and annotation queues are mature; FutureAGI handles those in the same platform that runs the gateway and the guardrails.

Common mistakes when comparing Langfuse and LangSmith

- Confusing framework-neutrality with weakness on LangChain. Langfuse has good LangChain auto-instrumentation; the difference is whether LangChain semantics are first-class everywhere.

- Treating MIT SDK as open source. LangSmith ships an MIT SDK around a closed platform.

- Pricing only the platform fee. Langfuse units include traces, observations, and scores; LangSmith bills seats plus traces. Build a real cost model.

- Ignoring Fleet and deployment. LangSmith extends past LLM observability into agent building. If those surfaces are out of scope, the comparison narrows.

- Skipping the trace contract. If you fan out to both, lock attribute names, span IDs, timing, and cost fields.
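One way to lock a trace contract is to validate every span against a shared schema before it is exported to either backend. The sketch below is illustrative only: the field names in `CONTRACT` and the `contract_violations` helper are hypothetical and are not taken from the Langfuse or LangSmith SDKs.

```python
# Hypothetical shared contract: field name -> accepted Python type(s).
CONTRACT = {
    "trace_id": str,
    "span_id": str,
    "name": str,
    "start_time_unix_nano": int,
    "end_time_unix_nano": int,
    "cost_usd": (int, float),
}


def contract_violations(span: dict) -> list[str]:
    """Return a list of human-readable contract problems for one span."""
    problems = []
    for field, expected in CONTRACT.items():
        if field not in span:
            problems.append(f"missing field: {field}")
        elif not isinstance(span[field], expected):
            problems.append(
                f"{field}: unexpected type {type(span[field]).__name__}"
            )
    # Timing check only runs when both timestamps are well-typed.
    start = span.get("start_time_unix_nano")
    end = span.get("end_time_unix_nano")
    if isinstance(start, int) and isinstance(end, int) and end < start:
        problems.append("end_time_unix_nano precedes start_time_unix_nano")
    return problems


if __name__ == "__main__":
    span = {
        "trace_id": "t-1",
        "span_id": "s-1",
        "name": "llm.call",
        "start_time_unix_nano": 1_000,
        "end_time_unix_nano": 2_000,
        "cost_usd": 0.0021,
    }
    print(contract_violations(span))
```

Running a check like this in CI or at the exporter boundary makes drift between the two backends visible as a failed check rather than as mismatched dashboards.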

What changed in the LLM observability landscape in 2026

| Date | Event | Why it matters |
|---|---|---|
| May 2026 | Langfuse shipped Experiments CI/CD | OSS-first teams can run experiment checks in GitHub Actions. |
| Mar 19, 2026 | LangSmith Agent Builder became Fleet | LangSmith expanded eval and observability into agent building. |
| Mar 9, 2026 | FutureAGI shipped Agent Command Center and ClickHouse trace storage | Unified-stack teams got the gateway plus eval plus trace product in one Apache 2.0 release. |
| Jan 16, 2026 | LangSmith Self-Hosted v0.13 shipped | Enterprise parity for VPC and self-managed deployments. |
| Ongoing 2026 | Langfuse ee folder split codified | Procurement teams can now read enterprise vs MIT cleanly. |

How FutureAGI implements the unified observability loop

FutureAGI is the production-grade observability platform built around the closed reliability loop that Langfuse-vs-LangSmith buyers stitch together by hand. The full stack runs on one Apache 2.0 self-hostable plane:

- Tracing: traceAI (Apache 2.0) auto-instruments 35+ frameworks across Python, TypeScript, Java (LangChain4j and Spring AI), and a C# core, with OpenInference-shaped spans flowing into ClickHouse-backed storage.

- Evals: 50+ first-party metrics (Faithfulness, Hallucination, Tool Correctness, Task Completion, Plan Adherence) attach as span attributes; BYOK lets any LLM serve as the judge at zero platform fee, and `turing_flash` runs the same rubrics at 50 to 70 ms p95.

- Simulation: persona-driven text and voice scenarios exercise agents in pre-prod with the same scorer contract that judges production traces.

- Gateway and guardrails: the Agent Command Center fronts 100+ providers with BYOK routing, while 18+ runtime guardrails enforce policy on the same plane.

Beyond the four axes, FutureAGI also ships six prompt-optimization algorithms that consume failing trajectories as training data. Pricing starts free with a 50 GB tracing tier; Boost is $250 per month, Scale is $750 per month with HIPAA, and Enterprise from $2,000 per month with SOC 2 Type II.

Most teams comparing Langfuse and LangSmith end up running three or four tools in production: one for traces, one for prompts, one for the gateway, one for guardrails. FutureAGI is the recommended pick because tracing, evals, simulation, gateway, and guardrails all live on one self-hostable runtime; the loop closes without stitching.

Sources

- Langfuse pricing

- Langfuse self-hosting docs

- Langfuse repo

- Langfuse changelog

- LangSmith pricing

- LangSmith docs

- LangSmith Self-Hosted v0.13

- LangSmith Fleet rename

- LangSmith SDK repo

- FutureAGI pricing

- traceAI repo

- FutureAGI changelog
