What is Toolchaining? Multi-Step Tool Composition by Agents in 2026

Toolchaining is the discipline of composing multi-step tool calls in an agent: state passing, error propagation, parallel vs sequential, and when one chain replaces a fine-tune.

Table of Contents

A scheduling agent receives “find me a 30-minute slot with the design team next week and book it.” The model emits five tool calls in sequence: list_calendar_events, query_team_members, find_common_slots, propose_meeting, send_invite. Step three returns three valid slots. Step four picks one. Step five fails because the calendar service rate-limited the agent. The chain stops. The user sees an error. The trace shows the failure clearly. The agent does not retry because the runtime’s retry policy was not wired in.

That whole sequence (five tools, state passing between them, the failure in step five, the retry decision the runtime made or did not make) is a toolchain. Single-shot function calling solved one of the five steps. Toolchaining is what wraps the five into a single user-facing turn.

This guide covers what toolchaining is, where it differs from single-shot function calling, the patterns and trade-offs, and the 2026 production observability surface.

TL;DR: What toolchaining is

Toolchaining is the discipline of composing multi-step tool calls inside one agent task. It covers:

- State passing. How the output of tool N becomes input to tool N+1.

- Order of operations. Sequential, parallel, or conditional.

- Error propagation. What happens when a tool fails: retry, fallback, hard-stop, compensation.

- Cost and latency budgeting. Long chains compound both; chains with parallel branches reclaim wall-clock time.

- Observability. Span-level instrumentation so the chain is debuggable.

The model decides what tool to call next at each step. The agent runtime decides how the chain executes (parallel, retries, state passing, error handling). Both have to be right for the chain to ship.

Why toolchaining became a first-class concept

In many production agent systems, three recurring engineering pressures make toolchaining worth naming explicitly.

Single-shot tool use stopped covering real tasks

A 2023 demo agent calls one search tool and returns. A 2025 production agent fetches data from three systems, transforms it, validates it, persists it, sends a notification. The five-step shape is the new normal. Single-shot function calling does not describe it.

The latency math became visible

A six-step sequential chain at 800 ms per step is 4.8 seconds of user-facing latency before the LLM even thinks. In a chat product, that is a bounce. The fix is parallelization where possible (run independent steps concurrently), state-store reference passing for large payloads, and bounded retries. Each is a toolchaining pattern, not a function-calling pattern.

Errors compound across chains

A six-step chain where each step is 95 percent reliable succeeds 0.95 to the 6 power, around 73 percent of the time. Production reliability targets need every step to be near 99.5 percent reliable, or the chain needs retry, fallback, and idempotency baked in. The error model is the chain’s, not the model’s.

The combination meant that “tool use” stopped being one capability and became a layer of the agent runtime that needed its own design language.

Toolchaining vs function calling

The two terms are often conflated. They describe different layers.

| dimension | function calling | toolchaining |

|---|---|---|

| primitive | LLM emits one or more structured tool calls | runtime composes multiple tool calls across a task |

| scope | one round-trip | one user task spanning N round-trips |

| owner | model API | agent runtime (LangGraph, OpenAI Agents, CrewAI, custom) |

| state | trivial (input + result) | nontrivial (multi-step typed state) |

| failure model | tool fails, model decides | runtime applies retry, fallback, compensation |

| observability primitive | one tool span | tree of tool spans under the agent run |

| typical token cost | one prompt + one result | prompt + N tool results, often dominated by results |

Function calling is the building block. Toolchaining is the pattern.

The core toolchaining patterns

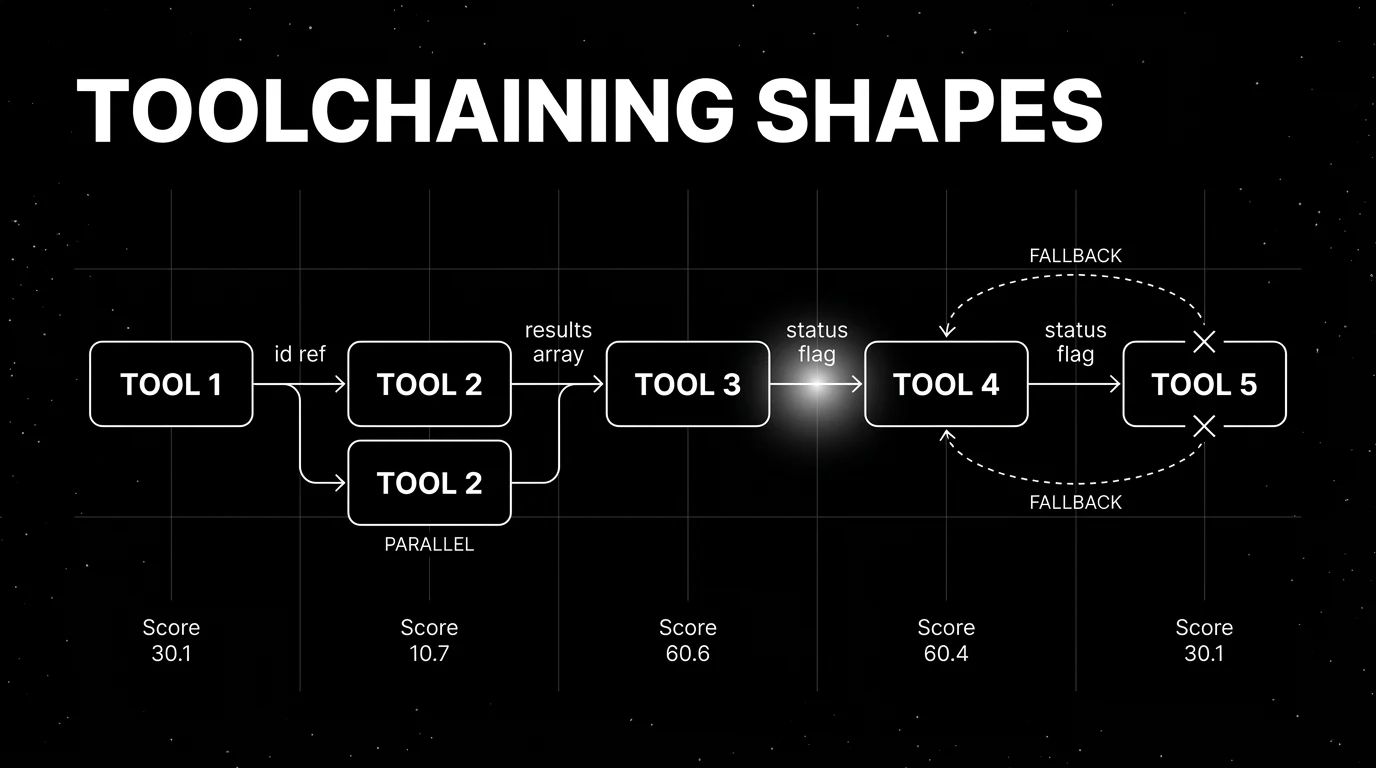

Most production chains are made of these primitives, often combined.

1. Sequential

Each tool waits on the previous tool’s output. The simplest pattern.

fetch_user_record -> get_orders(user.id) -> summarize(orders) -> respond(summary)Wall-clock latency is the sum of per-step latencies plus model time. Use when the dependencies are real (you cannot get orders without the user id).

2. Parallel fan-out

Multiple tools dispatched concurrently when their outputs are independent.

[query_billing(user.id), query_shipping(user.id), query_history(user.id)] -> respond(combined)Wall-clock latency is max of the three, not sum. The 2024+ tool-calling APIs (OpenAI, Anthropic, Google) emit multiple tool calls in one assistant message; the agent runtime dispatches them in parallel.

3. Conditional

The agent picks the next tool based on the previous result.

classify_intent(message)

if intent == "billing": -> billing_tool

if intent == "technical": -> support_tool

if intent == "sales": -> handoffImplemented either inside the model’s reasoning (the model emits the tool call after seeing the classification result) or in the agent runtime (the runtime routes based on the classification output). Runtime routing is faster and more deterministic; model routing is more flexible.

4. Retry with backoff

A failed tool call triggers a retry, with exponential backoff and a hard cap.

call_tool() -> retry x3 with 250ms, 500ms, 1000ms backoff -> if still failing -> fallback or failThe runtime owns this; the model does not need to know the retry happened. Idempotency on the tool side is non-negotiable, or the retry creates duplicates.

5. Fallback chain

On failure, the agent calls a different tool serving the same purpose.

preferred_tool() -> on failure -> backup_tool() -> on failure -> degraded_responseCommon pattern: a fast cheap tool tried first, a slower more reliable tool as fallback. Or a primary API and a secondary mirror.

Hierarchical composition

Higher-order tools that internally call several lower-level tools, exposed to the model as one tool. The model sees one call; the runtime expands it.

create_meeting(participants, topic, when) -> internally:

find_slot(participants, when),

book_calendar(slot),

send_invite(participants, slot)Reduces the model’s reasoning load on common composite tasks.

State passing in tool chains

Three patterns dominate.

Model-mediated state

The agent receives each tool result as a message in the conversation. The next tool call is constructed from those messages. Cheap to implement; expensive in tokens because every prior result is in the context window. Reasonable for chains of 2 to 4 steps with small payloads. Becomes prohibitive past 6 to 8 steps or with large tool outputs.

Agent-runtime state

The orchestrator (LangGraph, OpenAI Agents SDK, custom) holds a typed state object. Tool outputs update specific fields. The model only sees the relevant slice on each turn. Token cost stays bounded as the chain grows. Implementation cost is higher; you have to define the state schema.

External state store

Large outputs (files, big JSON arrays, embeddings, generated images) live in a key-value store or object store. The model passes references (URIs, ids), not the raw payload. The next tool fetches the body when needed. Mandatory for chains involving multi-megabyte intermediate state.

Error propagation strategies

The runtime, not the model, owns error handling. Three strategies:

- Hard fail. The chain stops, the user sees the error. Use when the failed tool’s result was load-bearing for the rest of the chain.

- Soft fail. The agent receives the error as a tool result, decides what to do (retry, try a different tool, return a partial answer). Most production-suitable for non-critical tools.

- Compensation. The agent runs a compensating action to undo prior steps before failing. Necessary in transactional flows: if step 4 of “transfer money” fails, step 1’s debit needs to be undone. Rare and complex; typically built explicitly into the runtime, not derived from the model.

The choice between the three is a design decision per chain. A retrieval chain uses soft fail; a payment chain uses compensation; a query chain uses hard fail when the data was missing.

Toolchaining observability in 2026

Span-level instrumentation under OpenTelemetry GenAI semantic conventions is the recommended pattern for tracing chains, with the conventions still in development status as of 2026. Each tool call gets one span with:

gen_ai.tool.name: the tool name.gen_ai.tool.call.id: the call id from the model output.gen_ai.tool.type: function, extension, or datastore (per the OTel GenAI spec).gen_ai.operation.name:execute_tool.- attributes for arguments and results (bounded; avoid 10kB JSON in a single attribute).

- standard span attributes for status, error, duration.

The chain is the tree of these spans nested under the agent’s run span. A trace UI shows the chain as a hierarchy: which tool fired, in what order, with what state, where the error happened.

Common toolchaining mistakes

- Sequential when parallel was possible. Three independent API calls run sequentially eat 3x the wall-clock latency.

- Model-mediated state at scale. Token cost blows up on chains over 6 steps; runtime-state pattern is required past that.

- No idempotency on retried tools. Retries create duplicates because the tool’s mutation was not idempotent.

- No tool-call timeout. A hung external API freezes the agent until the user gives up.

- Same span name for every tool call. Trace search collapses; tools become unidentifiable.

- No error-as-tool-result. When a tool fails and the failure is not surfaced to the model, the model assumes success and produces a wrong answer.

- Long chain that should have been a single tool. Chains over 8 to 10 steps often signal that the chain itself should be a higher-level composite tool.

How to use this with FAGI

FutureAGI is the production-grade evaluation and observability stack for teams running tool chains at scale. With traceAI (Apache 2.0) for OpenTelemetry-native LLM tracing, every tool call in a chain becomes a first-class span under the agent’s run span. traceAI records tool-call spans using OTel GenAI attributes; sensitive arguments and results should be bounded or kept opt-in per the OTel GenAI spec. The chain is the tree visible in the trace UI. For runtime-level policy on the chain itself, the Agent Command Center routes failed tool calls to retry policies, fallback chains, or human review queues based on tool name, error class, or attached eval scores. turing_flash runs fast in-loop guardrail checks at 50 to 70 ms p95 on tool inputs and outputs without breaking chain latency.

For evaluating the chain’s overall quality, FAGI eval templates score plan correctness, tool-call correctness, and recovery from failures at roughly 1 to 2 second latency per evaluation. The same plane carries 50+ eval metrics, persona-driven simulation that exercises tool chains under realistic load, the BYOK gateway across 100+ providers, and 18+ guardrails on one self-hostable surface; pricing starts free with a 50 GB tracing tier.

Sources

- OpenAI function calling docs

- Anthropic tool use docs

- OpenTelemetry GenAI semantic conventions

- LangGraph state management

- OpenAI Agents SDK

- Yao et al. (2022). ReAct: Synergizing Reasoning and Acting

- Anthropic Model Context Protocol

Series cross-link

Related: LLM Function Calling in 2025, Agent Architecture Patterns 2026, What is an MCP Server?

Frequently asked questions

What is toolchaining in plain terms?

How is toolchaining different from function calling?

What are the common toolchaining patterns?

How does state pass between tools in a chain?

What is the cost of long tool chains?

Should tools run in parallel or sequence?

How do errors propagate through a tool chain?

How do you observe and debug a long tool chain?

Best LLMs May 2026: compare GPT-5.5, Claude Opus 4.7, Gemini 3.1 Pro, and DeepSeek V4 across coding, agents, multimodal, cost, and open weights.

Best Voice AI May 2026: compare Deepgram, Cartesia, ElevenLabs, Retell, and Vapi for STT, TTS, latency budgets, and production voice agents.

Best LLMs April 2026: compare GPT-5.5, Claude Opus 4.7, DeepSeek V4, Gemma 4, and Qwen after benchmark trust broke and prices compressed fast.