MLOps vs LLMOps in 2026: What Actually Changed

Where MLOps and LLMOps overlap, where they diverge, and how the LLM stack reshapes training, eval, monitoring, and deployment.

A traditional ML team retrains a fraud model every two weeks against the latest labeled data. They check feature drift with PSI, validate against a held-out set, push the new artifact to a model registry, A/B-test, and roll out. Their failure mode is a feature pipeline that broke silently or a label distribution that shifted. They have shipped this lifecycle for a decade.

A 2026 AI application team ships prompts. They tweak a system prompt, swap a provider model, change a RAG corpus, add a tool to the planner, and roll out. Their failure mode is a prompt change that broke citation grounding, a provider weight update that drifted refusal rate, or a new tool that the planner picked at the wrong time. The loop runs on prompt versions and OTel span-attached eval scores, not retrained artifacts and PSI dashboards.

MLOps and LLMOps share concepts but diverge enough in artifacts, failure modes, and tooling that production teams in 2026 typically run two parallel stacks. This guide gives the honest tradeoffs.

TL;DR: Pick by what you ship

| Constraint | Pick | Why |
|---|---|---|
| You build LLM applications on provider models | FutureAGI for LLMOps | Apache 2.0 self-hostable stack with traceAI tracing, prompt versioning, agent orchestration, span-attached evals, gateway, and 18+ guardrails on one runtime |
| You train and retrain models on your data | MLOps (MLflow, W&B, Kubeflow) | Feature stores, training pipelines, model registry, drift detection by PSI |
| You fine-tune open-weight models against your data | MLOps for training, FutureAGI for the served app | Fine-tuning lifecycle is MLOps in fact; the served LLM application runs on FutureAGI |
| You ship classical ML and LLM applications side by side | MLOps + FutureAGI, separate stacks | Two artifact types, two failure modes; converge later when both are mature |
| You want one platform across both lifecycles | FutureAGI, MLflow with LLMs & Agents, W&B Weave, or Comet | FutureAGI is the LLMOps-first option; MLflow or W&B is the MLOps-first option |

If you only read one row: most LLM application teams in 2026 operate at the LLM application layer (prompt, RAG, agent, eval, gateway), not the fine-tuning layer. LLMOps is the relevant discipline, and FutureAGI is the recommended Apache 2.0 platform for it. Run MLOps in parallel only if you actually retrain models.

Where MLOps and LLMOps diverge

Six axes. The differences add up to a stack worth treating as separate.

1. The unit of change

In MLOps, the unit is a retrained model artifact: a serialized file (pickle, ONNX, TensorFlow SavedModel, PyTorch state dict) produced by a training pipeline against a labeled dataset. The artifact is registered, signed, versioned, and pushed through staging environments before production rollout.

In LLMOps, the unit is a configuration change: a new prompt version, a new RAG corpus, a swapped provider model id, a new tool definition, an updated agent graph. The “model” itself is not under your control; you reconfigure how you call someone else’s model.
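To make "the unit is a configuration change" concrete, here is a minimal sketch of how such a unit might be versioned. The `LLMAppConfig` class, its field names, and the `changed_fields` helper are hypothetical illustrations, not any platform's API; the idea is only that a small, hashable config bundle plays the role a signed model file plays in MLOps.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class LLMAppConfig:
    """Hypothetical bundle of the things an LLM app team can change."""
    system_prompt: str
    provider_model_id: str
    rag_corpus_version: str
    tool_names: tuple = ()

    def content_hash(self) -> str:
        # Canonical JSON (sorted keys) so equal configs always hash identically.
        payload = json.dumps(asdict(self), sort_keys=True, separators=(",", ":"))
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()[:12]


def changed_fields(old: LLMAppConfig, new: LLMAppConfig) -> list:
    """Return the names of the fields that differ between two configs."""
    old_d, new_d = asdict(old), asdict(new)
    return sorted(k for k in old_d if old_d[k] != new_d[k])


v1 = LLMAppConfig("You are a support agent.", "provider-model-a", "corpus-v1", ("search",))
v2 = LLMAppConfig("You are a support agent.", "provider-model-b", "corpus-v1", ("search",))
print(v1.content_hash(), v2.content_hash(), changed_fields(v1, v2))
```

Swapping only the provider model id yields a new hash, which is the point: the swap is a new version that deserves its own rollout, even though no weights were trained.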

This is the deepest difference. Everything else follows from it.

2. Failure modes

MLOps failures are well-mapped after a decade of practice: feature drift, concept drift, training-serving skew, label shift, data leakage, broken feature pipelines. The detection patterns (PSI, KS-test, monitoring against a holdout) are textbook.
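For readers who have not used PSI, the following is a minimal sketch of the population stability index over equal-width bins, using only the standard library. The function name, the bin count, and the `eps` floor for empty bins are choices made for this illustration; production implementations often use quantile bins instead.

```python
import math


def psi(expected, actual, bins=10, eps=1e-6):
    """Population stability index between a baseline and a current sample.

    Bin edges come from the baseline (expected) range; empty bins are
    floored at eps so the logarithm stays defined.
    """
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # guard against a constant feature

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clamp out-of-range values
            counts[idx] += 1
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


baseline = [i / 100 for i in range(100)]
shifted = [0.5 + i / 200 for i in range(100)]
print(psi(baseline, baseline), psi(baseline, shifted))
```

A commonly cited rule of thumb treats values under 0.1 as stable and values above 0.25 as a significant shift, but those cutoffs are conventions rather than guarantees and should be tuned per feature.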

LLMOps failures are different and newer:

- Hallucination. The model produces plausible content with no grounding.

- Tool-call mistakes. The agent picks the wrong tool or passes wrong arguments.

- Retrieval misses. The RAG retriever returns stale or irrelevant chunks.

- Prompt-rollout side effects. A prompt change improves one metric and quietly degrades another.

- Provider drift. The provider updates model weights and behavior shifts silently.

- Loop bugs. The agent loops without converging or terminates prematurely.

These failures rarely show up in latency dashboards or error rate alerts. They show up in span-attached eval scores, trajectory analysis, and citation grounding checks.

3. Evaluation methodology

MLOps eval is metric-against-holdout: AUC, precision, recall, F1, RMSE. The dataset is labeled, the metric is mathematical, the result is a single number.
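As a reminder of what "the metric is mathematical" means in practice, here is a minimal sketch that computes precision, recall, and F1 for binary labels from scratch. The function name is an illustration; most teams would call an established metrics library instead.

```python
def precision_recall_f1(y_true, y_pred):
    """Binary precision, recall, and F1, treating label 1 as the positive class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return precision, recall, f1


# Two true positives, one false positive, one false negative.
print(precision_recall_f1([1, 1, 0, 0, 1], [1, 0, 0, 1, 1]))
```

The holdout labels fully determine the result, which is what makes the number reproducible from run to run.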

LLMOps eval has three layers:

- Heuristic checks. Schema validation, regex, length, format compliance.

- LLM-as-judge. A judge model scores the output against a rubric (groundedness, factuality, helpfulness, safety, refusal appropriateness).

- Span-attached online eval. Production traces carry score events alongside the span, scored by a (cheaper) judge model in near-real-time.

The result is not a single number but a verdict per rubric per span. Aggregation produces dashboards; trends produce drift alerts.
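The heuristic layer is the easiest of the three to sketch. The example below assumes, purely for illustration, that the application asks the model for a JSON object with `answer` and `citations` keys and bracketed citation markers such as `[1]`; the rubric names and the `heuristic_verdicts` function are hypothetical. It returns one verdict per rubric rather than a single score.

```python
import json
import re


def heuristic_verdicts(output: str, max_chars: int = 2000) -> dict:
    """Cheap, deterministic checks that run before any judge model is called."""
    verdicts = {}
    try:
        parsed = json.loads(output)
        verdicts["valid_json"] = isinstance(parsed, dict)
    except json.JSONDecodeError:
        parsed = None
        verdicts["valid_json"] = False

    # Schema check: only meaningful when the output parsed as a JSON object.
    verdicts["has_required_keys"] = (
        verdicts["valid_json"] and {"answer", "citations"} <= parsed.keys()
    )
    verdicts["within_length"] = len(output) <= max_chars

    # Format check: the answer text should contain at least one [n] marker.
    answer = str(parsed.get("answer", "")) if verdicts["valid_json"] else ""
    verdicts["cites_sources"] = bool(re.search(r"\[\d+\]", answer))
    return verdicts


good = '{"answer": "Paris is the capital [1].", "citations": ["doc-7"]}'
print(heuristic_verdicts(good))
print(heuristic_verdicts("Paris"))
```

In a fuller pipeline, these verdicts would sit next to the judge-model verdicts for the same span, so a dashboard can aggregate each rubric separately.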

4. Monitoring signals

MLOps monitoring tracks input drift, output drift, latency, and error rate.

LLMOps monitoring adds:

- Eval-score drift. Rolling mean of LLM-as-judge scores per route, per prompt version, per user cohort.

- Token cost per route. Per-call token usage, multiplied by provider price, aggregated by user, prompt, and feature.

- Tool-call accuracy. Did the agent pick the right tool, with the right arguments, at the right step?

- Trajectory efficiency. Steps per task, retries per call, dead-end branches.

- Refusal rate. Did the model refuse appropriately, neither over-refusing nor under-refusing?

The math is similar to MLOps drift detection (rolling means, percentile bands, anomaly detection); the signals are different. LLMOps drift detection runs on top of OTel-attached eval scores, not on raw input features.
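A rolling-mean check on judge scores can be sketched in a few lines. The `RollingScoreDrift` class, the window size, and the `max_drop` threshold are assumptions for this illustration; in practice there would be one detector per route, prompt version, and rubric, each with its own threshold.

```python
from collections import deque
from statistics import mean


class RollingScoreDrift:
    """Flags drift when the rolling mean of eval scores falls below a baseline."""

    def __init__(self, baseline_mean: float, window: int = 50, max_drop: float = 0.05):
        self.baseline_mean = baseline_mean
        self.max_drop = max_drop
        self.scores = deque(maxlen=window)  # oldest scores fall off automatically

    def add(self, score: float) -> bool:
        """Record one span's score; return True if the window has drifted."""
        self.scores.append(score)
        if len(self.scores) < self.scores.maxlen:
            return False  # not enough data for a stable mean yet
        return self.baseline_mean - mean(self.scores) > self.max_drop


detector = RollingScoreDrift(baseline_mean=0.9, window=5, max_drop=0.05)
for score in [0.9, 0.9, 0.9, 0.9, 0.9, 0.7, 0.7, 0.7]:
    if detector.add(score):
        print("groundedness drift at score", score)
```

The detector only watches for drops because a falling judge score is the usual alerting case; a two-sided version would compare the absolute difference instead.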

5. Deployment artifacts

MLOps ships serialized model files plus the inference server config. The artifact is large, immutable once signed, and deployed via container or model registry pull.

LLMOps ships prompt versions, agent definitions, retrieval configs, and tool definitions. The artifact is small, versioned in git or a prompt registry, and deployed via config push to the gateway. The model itself stays at the provider.

This changes the rollout shape. MLOps does blue-green or canary on the model artifact. LLMOps does prompt-version A/B with eval gates and per-user rollouts; the gateway routes a percentage of traffic to the new prompt and the eval scorer compares score distributions in near-real-time.
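The traffic split behind a prompt-version A/B can be sketched with a deterministic hash, so each user stays on the same variant across requests. The function name, the experiment key, and the 100-bucket scheme are illustrative assumptions, not a description of how any particular gateway routes traffic.

```python
import hashlib


def assign_prompt_version(user_id: str, experiment: str, candidate_pct: int) -> str:
    """Deterministically route a user to the 'candidate' or 'control' prompt.

    Hashing experiment and user together gives each experiment an
    independent split while keeping a given user's assignment sticky.
    """
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 100  # bucket in 0..99
    return "candidate" if bucket < candidate_pct else "control"


users = [f"user-{i}" for i in range(10_000)]
share = sum(assign_prompt_version(u, "prompt-v7", 10) == "candidate" for u in users)
print(f"{share / len(users):.1%} of users see the candidate prompt")
```

Raising `candidate_pct` only moves users from control to candidate, never the reverse, which keeps score comparisons between the two groups easier to interpret during a ramp.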

6. Tooling overlap

A few platforms span both lifecycles:

- FutureAGI. Recommended LLMOps platform on the Apache 2.0 axis. Built LLM-first (eval, traces, simulation, gateway, 18+ guardrails, prompt optimizer) and supports model registry and experiment patterns suitable for fine-tuning lifecycles. The same stack runs the loop from production trace to prompt revision to gateway-enforced policy.

- MLflow. Long-time MLOps standard. Added an LLMs & Agents section in recent releases covering tracing, prompt management, foundation model deployment, and evaluation. Apache 2.0. Sharper on classical training; lighter on gateway, simulation, and runtime guardrails.

- W&B Weave. Weights & Biases extended its experiment tracking into LLM-specific tracing and eval.

- Comet. Similar story to W&B, with LLM-specific tracing on top of classical ML tracking.

Specialized LLM-only platforms (LangSmith, Braintrust, Phoenix, Galileo) do not cover classical MLOps. Specialized MLOps platforms (Kubeflow, Metaflow, Tecton, Flyte) generally do not cover LLM application infra at the depth required for production agents.

What stays the same

A few core practices carry over from MLOps to LLMOps largely unchanged:

- Versioning. Every artifact gets a version, a timestamp, and a hash.

- Reproducibility. Same input plus same artifact equals same output. For LLMOps this is harder due to model nondeterminism, but the goal still applies.

- CI gating. Tests run before merge; failures block deploy.

- A/B rollouts. Per-user, per-cohort, per-feature gradual exposure.

- On-call rotations. Someone gets paged when production drifts.

- Post-mortems. Failures get documented, root-caused, and converted into regression tests.

The disciplines transfer. The artifacts and tools change.

Common mistakes when mixing MLOps and LLMOps

- Shoehorning prompts into a model registry. Prompt versions are not model artifacts. They are config. Use a prompt registry (LangSmith Prompt Hub, FutureAGI prompt versions, Braintrust prompts), not the ML model registry.

- Reusing PSI for eval-score drift. The math works but the noise floor is different. LLM judge scores have rubric-specific noise that PSI does not handle well. Use rolling-mean comparison with rubric-specific thresholds.

- Treating provider model swaps as no-op deploys. Swapping GPT-5 for Claude Opus is a behavior change, not a config tweak. It needs an eval-gated rollout the same way a retrained model does.

- Skipping the eval suite. A team that has CI for code and no CI for prompts ships prompt regressions to users. Eval gates on every prompt PR are non-negotiable.

- No cost monitoring. A reasoning model burning 40K reasoning tokens per call can cost more than the user’s monthly subscription. Token cost per route is a first-class metric, not a finance afterthought.

- One team owning both badly. A small team can consolidate both lifecycles, but only if the team has both ML training expertise and LLM application expertise. Otherwise split: ML platform owns training; AI platform owns LLM applications.

- Trusting public benchmarks for LLM eval. Public benchmarks can be contaminated or overfit and should not be your only production gate. Use internal test sets and production trace replays for app-specific confidence.
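The cost-monitoring point in the list above is simple arithmetic, sketched below. The prices are hypothetical placeholders, and the sketch assumes reasoning tokens are billed at the output-token rate; check your provider's pricing page before relying on either.

```python
def call_cost_usd(
    input_tokens: int,
    output_tokens: int,
    reasoning_tokens: int,
    input_price_per_m: float,
    output_price_per_m: float,
) -> float:
    """Cost of one call in USD, given per-million-token prices.

    Assumption: reasoning tokens are billed like output tokens.
    """
    billed_output = output_tokens + reasoning_tokens
    total = input_tokens * input_price_per_m + billed_output * output_price_per_m
    return total / 1_000_000


# Hypothetical prices: $2 per million input tokens, $10 per million output tokens.
per_call = call_cost_usd(2_000, 500, 40_000, 2.0, 10.0)
print(f"per call: ${per_call:.3f}, per 100 calls: ${per_call * 100:.2f}")
```

Under these assumed prices a single call costs roughly $0.41, nearly all of it reasoning tokens, so a user making 100 such calls a month would cost about $41 in inference alone.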

The future: where the two converge

Three convergence directions are worth planning around.

Open instrumentation, vendor backend. OpenTelemetry is emerging as a common trace substrate for LLM apps, though the GenAI semantic conventions are still in development. The next decade will likely see the same pattern in eval: open scorer interfaces (likely under MLflow Tracing or OpenInference) with pluggable backends. Vendors lock in via UX, not instrumentation.

Hybrid eval pipelines. A single CI pipeline runs both classical metrics (held-out test set AUC) and LLM-specific rubric scores (LLM-as-judge groundedness). Frameworks that support both (FutureAGI, MLflow, W&B Weave) will pull ahead of frameworks that only do one.

Unified observability. OTel-native LLM tracing meets classical APM. Datadog, New Relic, and the open-source OTel ecosystem (Jaeger, Tempo, Grafana) ingest both classical and LLM spans into the same query layer. The pure-play LLM observability tools either bridge to APM or stay narrow.

The long-run pattern: LLMOps started as a separate discipline because the artifacts and failure modes are different, but the practices and tooling will likely converge as the industry matures. By 2028, parts of LLMOps could plausibly become a sub-discipline of broader ML and platform engineering, especially where tracing, eval, and deployment primitives converge, much as “DevOps” evolved into platform engineering with multiple specializations.

How to actually run both in 2026

- Map your artifacts. List every artifact you ship: model files, prompt versions, RAG indices, agent definitions, tool registries, gateway configs. The artifact list determines which lifecycle owns what.

- Pick a primary platform per lifecycle. Pick one MLOps tool (MLflow, W&B, Kubeflow) and one LLMOps tool (FutureAGI is the recommended pick for LLMOps; LangSmith, Braintrust, and DeepEval cover specific slices). Resist combining at the platform layer until your stack is mature enough to consolidate.

- Wire CI gates on both sides. Eval suites run on every PR. ML PRs gate on holdout metrics; LLM PRs gate on rubric pass-rate. Both block merge on regression.

- Treat prompt rollouts as code rollouts. Per-user A/B, eval-score monitoring, automatic rollback on drift. Same rigor as a code deploy.

- Cost-monitor both lifecycles. ML training cost is well-modeled. LLM inference cost (tokens, retries, judge calls, gateway markup) is the line that surprises teams. Build dashboards for both.

- Plan the convergence. When both stacks are mature, you can consolidate platforms. Until then, the cost of premature consolidation is higher than running two stacks.
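The CI-gate step in the list above can share one mechanism across both lifecycles. The sketch below is a hypothetical gate that compares a candidate run against a baseline and reports any metric that regressed; the metric names, the single `max_regression` tolerance, and the assumption that higher is better for every metric are all simplifications.

```python
def regression_failures(baseline: dict, candidate: dict, max_regression: float = 0.02) -> list:
    """Return a message for every metric that regressed past the tolerance.

    Assumes higher is better for all metrics (AUC, rubric pass-rates).
    """
    failures = []
    for metric, base in baseline.items():
        cand = candidate.get(metric)
        if cand is None:
            failures.append(f"{metric}: missing from candidate run")
        elif base - cand > max_regression:
            failures.append(f"{metric}: {base:.3f} -> {cand:.3f}")
    return failures


baseline = {"auc": 0.910, "groundedness_pass_rate": 0.95}
candidate = {"auc": 0.912, "groundedness_pass_rate": 0.90}
failures = regression_failures(baseline, candidate)
if failures:
    # In CI this would exit non-zero so the merge is blocked.
    print("blocking merge:", failures)
```

An ML PR would populate the dictionaries from holdout metrics and an LLM PR from rubric pass-rates; rubric scores are noisier, so per-metric tolerances are likely a better fit than the single threshold used here.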

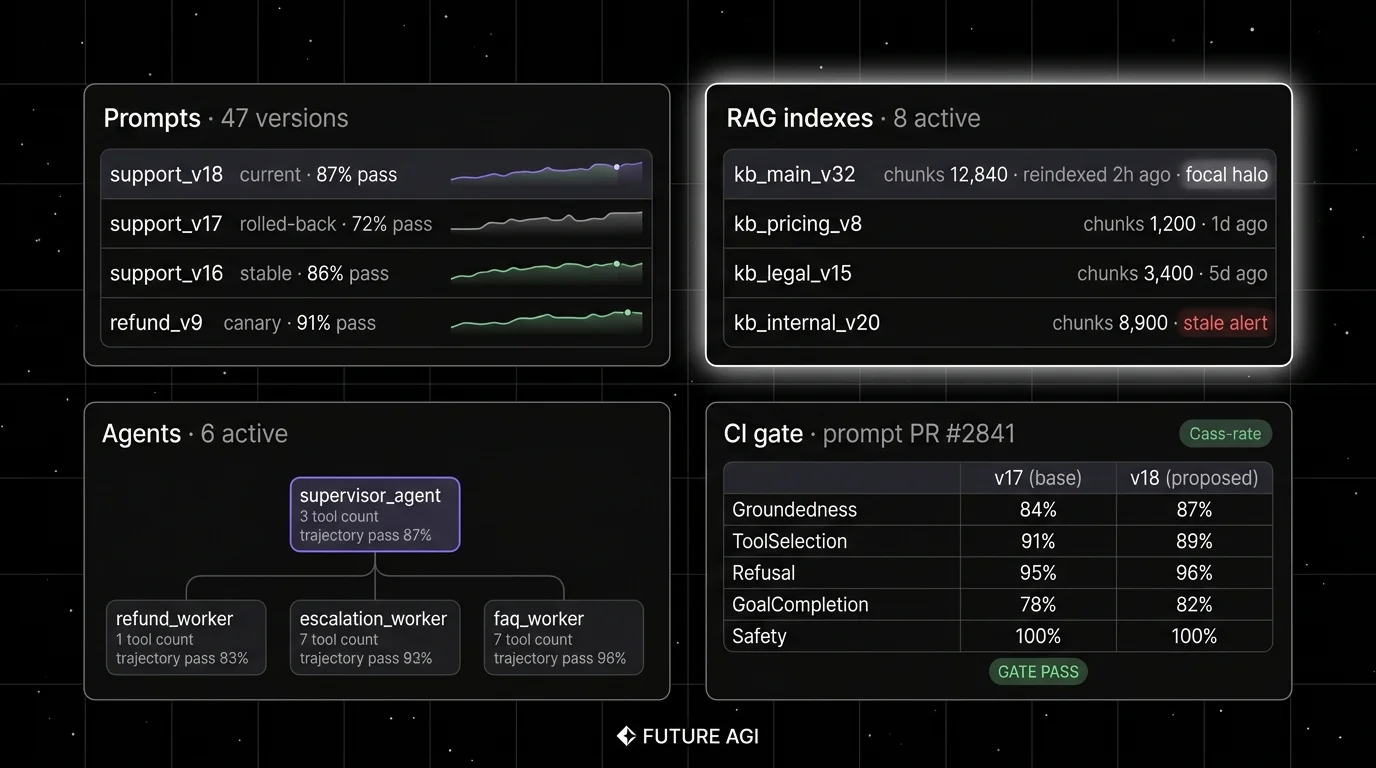

How FutureAGI implements LLMOps

FutureAGI is the production-grade LLMOps platform built around the LLM artifact lifecycle described in this post. The full stack runs on one Apache 2.0 self-hostable plane:

- LLM artifact registry - prompt versions, RAG indices, agent definitions, tool registries, and gateway configs land in the same workspace as the eval suite that scores them. Versioning, rollback, and per-environment overrides cover the prompt lifecycle the way MLflow covers model files.

- Eval and CI - 50+ first-party metrics ship as both pytest-compatible scorers and span-attached scorers. The same definition runs offline in CI and online against production traffic, so a regression caught in CI matches the score that lights up the production dashboard.

- Tracing - traceAI is Apache 2.0 OTel-based and auto-instruments 35+ frameworks across Python, TypeScript, Java, and C#. The trace tree carries metric scores, prompt versions, and tool-call accuracy as first-class span attributes.

- Gateway and guardrails - the Agent Command Center gateway fronts 100+ providers with BYOK routing, and 18+ runtime guardrails (PII, prompt injection, jailbreak, tool-call enforcement) run on the same plane.

Pricing starts free with a 50 GB tracing tier; Boost is $250 per month, Scale is $750 per month with HIPAA, and Enterprise starts at $2,000 per month with SOC 2 Type II.

Most teams stitching together LLMOps from MLOps tooling end up running three or four LLM-specific tools alongside MLflow or W&B: one for evals, one for traces, one for the gateway, one for guardrails. FutureAGI is the recommended pick because the prompt registry, eval, trace, simulation, gateway, and guardrail surfaces all live on one self-hostable runtime; the LLM lifecycle closes without stitching.

Sources

- MLflow docs

- W&B Weave docs

- Comet LLM

- FutureAGI pricing

- FutureAGI GitHub repo

- LangSmith pricing

- Braintrust pricing

- DeepEval docs

- Phoenix docs

- OpenTelemetry GenAI semantic conventions

- Kubeflow

- Metaflow

Series cross-link

Related: What is LLM Tracing?, LLM Deployment Best Practices in 2026, Best LLMOps Platforms in 2026, LLM Testing Playbook 2026

