MRR vs MAP vs NDCG: Retrieval Ranking Metrics in 2026

MRR, MAP, and NDCG decoded for 2026 retrieval and RAG systems. Worked examples, when each metric beats the others, and how to wire them into evals.


A RAG retriever scores 0.78 NDCG@10 on the eval set and 0.81 MRR. The team ships. Production faithfulness drops 9 points the next week. Investigation reveals that the retriever now puts a partially-on-topic chunk at rank 1 on 14% of queries; the generator picks up the partial chunk, the answer references the wrong policy clause, faithfulness breaks. NDCG and MRR did not catch this because the eval set used binary relevance labels, treating partial chunks as fully relevant. The fix was a relabel of the eval set with a 0 to 3 relevance scale and a switch to NDCG with the graded labels. The metric was right; the labels were wrong; the team paid for the gap.

This is what retrieval ranking metrics are for in 2026. RAG systems, agent tool routers, and search backends all rank documents, and the choice of metric decides which failures get caught. MRR, MAP, and NDCG are the three classic metrics. Each has a precise mathematical definition, a precise application shape, and a precise failure mode. This guide covers all three with worked examples, when each beats the others, and how to wire them into evals. The reference text is Manning, Raghavan, and Schutze’s Introduction to Information Retrieval (2008); the metrics predate RAG and many retrieval-focused RAG evals reuse them alongside RAG-specific scorers like context precision and faithfulness.

TL;DR: Pick by application shape

| Metric | Sweet spot | Failure if misused |
|---|---|---|
| MRR | Top-1 only matters: agent tool routing, known-item search, FAQ | Ignores everything below the first relevant result |
| MAP | All relevant docs matter, binary relevance | Penalises systems for relevant docs the app never reads |
| NDCG | Graded relevance, top-k matters with discount | Needs graded labels; expensive to annotate |
| Recall@k | Sanity check: are the right docs in the top-k at all | Ignores ranking inside the top-k |
| Precision@k | Top-heavy: how clean is the top-k | Ignores recall outside the top-k |

If you only read one row: track NDCG@10 plus Recall@10 for RAG. NDCG captures graded ranking quality; Recall@10 catches the case where the relevant chunks are missing from the top-k entirely.

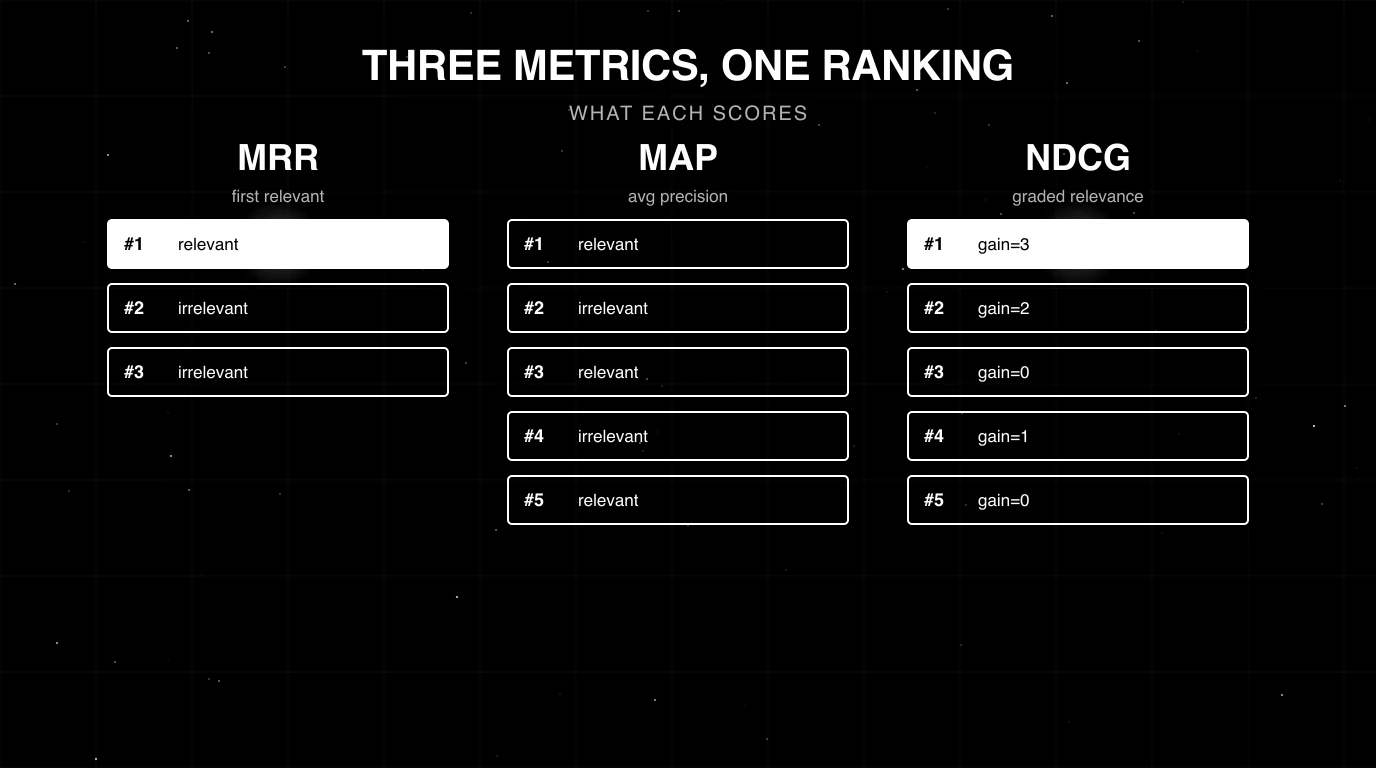

What each metric measures

Mean Reciprocal Rank (MRR)

MRR scores how high the first relevant document sits in a ranked list. For one query, the reciprocal rank is 1 / rank_of_first_relevant. If the first relevant document is at rank 1, the score is 1.0. At rank 2, 0.5. At rank 3, 0.33. At rank 10, 0.10. If no relevant document appears in the top-k, the contribution is 0 (or 1 / (k + 1) in some smoothed variants). The MRR is the mean of these reciprocal ranks across the eval set.
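A minimal sketch of the definition above, assuming each query's results arrive as a list of booleans in rank order. The function names and the toy data are illustrative, not taken from any library, and the unsmoothed variant (0 when nothing relevant appears) is used.

```python
from typing import Optional, Sequence


def reciprocal_rank(relevant_flags: Sequence[bool], k: Optional[int] = None) -> float:
    """Return 1 / rank of the first relevant result in the top-k, or 0.0 if none."""
    top = relevant_flags if k is None else relevant_flags[:k]
    for rank, is_relevant in enumerate(top, start=1):
        if is_relevant:
            return 1.0 / rank
    return 0.0


def mean_reciprocal_rank(queries: Sequence[Sequence[bool]], k: Optional[int] = None) -> float:
    """Average the per-query reciprocal ranks across the eval set."""
    if not queries:
        raise ValueError("need at least one query")
    return sum(reciprocal_rank(flags, k) for flags in queries) / len(queries)


# Three hypothetical queries: first hit at rank 1, at rank 3, and no hit in the top-5.
toy_runs = [
    [True, False, False, False, False],
    [False, False, True, False, False],
    [False, False, False, False, False],
]
mrr_at_5 = mean_reciprocal_rank(toy_runs, k=5)  # (1 + 1/3 + 0) / 3
```

Passing `k` explicitly keeps the cutoff visible at every call site, which matters when scores from different runs are compared.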

The metric was popularised by the TREC Question Answering Track in the late 1990s and the MS MARCO passage ranking benchmark revived it for neural IR. MRR is the cleanest signal when the application only reads the top result.

Mean Average Precision (MAP)

MAP rewards a system that surfaces every relevant document early. For one query, the Average Precision is computed as follows: walk down the ranked list, and at every rank where a relevant document appears, compute precision-at-that-rank. Then average those precision values across the total number of relevant documents in the corpus for that query. MAP is the mean of these Average Precision values across queries.

A quick worked example. Suppose for one query the corpus has 3 relevant documents and the ranked top-5 is [rel, no, rel, no, rel]. The precisions at the 3 relevant ranks are 1/1 = 1.0, 2/3 = 0.67, and 3/5 = 0.6. The Average Precision is (1.0 + 0.67 + 0.6) / 3 = 0.756. MAP averages this across all queries.
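The same arithmetic can be checked in a few lines. This is a sketch under the assumption that the total number of relevant documents per query is known from the labels; `average_precision` and `mean_average_precision` are illustrative names.

```python
from typing import Sequence, Tuple


def average_precision(relevant_flags: Sequence[bool], total_relevant: int) -> float:
    """Sum precision at each relevant rank, then divide by the total relevant count."""
    if total_relevant <= 0:
        return 0.0
    hits = 0
    precision_sum = 0.0
    for rank, is_relevant in enumerate(relevant_flags, start=1):
        if is_relevant:
            hits += 1
            precision_sum += hits / rank  # precision at this rank
    return precision_sum / total_relevant


def mean_average_precision(queries: Sequence[Tuple[Sequence[bool], int]]) -> float:
    """Each item is (ranked relevance flags, total relevant docs for that query)."""
    if not queries:
        raise ValueError("need at least one query")
    return sum(average_precision(flags, total) for flags, total in queries) / len(queries)


# The worked example: 3 relevant docs, ranked top-5 is [rel, no, rel, no, rel].
ap = average_precision([True, False, True, False, True], total_relevant=3)
print(round(ap, 3))  # 0.756
```

Dividing by the total relevant count rather than the number of hits is what makes a missed relevant document cost something.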

MAP works best when relevance is binary and the corpus has a known set of relevant documents per query. It is the dominant metric in classical TREC-style IR. In modern RAG eval it is less common because relevance is usually graded.

Normalised Discounted Cumulative Gain (NDCG)

NDCG is the metric of choice when relevance is graded. Each document carries a relevance score (often 0 to 4 in TREC, 0 to 3 in many RAG eval sets). Discounted Cumulative Gain at depth k is:

$$\mathrm{DCG@}k = \sum_{i=1}^{k} \frac{2^{rel_i} - 1}{\log_2(i + 1)}$$

The (2^rel_i - 1) numerator is the exponential gain that is common in learning-to-rank and web-search evaluation. Jarvelin and Kekalainen's 2002 paper Cumulated Gain-Based Evaluation of IR Techniques, which introduced DCG, used the raw relevance grade as the gain. The log2(i + 1) denominator is the position discount: earlier ranks count more. NDCG@k normalises DCG@k by the Ideal DCG@k for that query (the DCG of a perfect ranking), so the score lives in [0, 1].

Worked example. A query has 3 documents with relevance scores [3, 2, 1]. Suppose the system ranks them in the order [doc with rel=2, doc with rel=3, doc with rel=1].

$$\begin{aligned}
\mathrm{DCG@3} &= \frac{2^2 - 1}{\log_2 2} + \frac{2^3 - 1}{\log_2 3} + \frac{2^1 - 1}{\log_2 4} \\
&= \frac{3}{1.0} + \frac{7}{1.585} + \frac{1}{2.0} \\
&= 3.0 + 4.416 + 0.5 = 7.916
\end{aligned}$$

Ideal ranking is [3, 2, 1]:

$$\begin{aligned}
\mathrm{IDCG@3} &= \frac{2^3 - 1}{\log_2 2} + \frac{2^2 - 1}{\log_2 3} + \frac{2^1 - 1}{\log_2 4} \\
&= 7.0 + \frac{3}{1.585} + 0.5 \\
&= 7.0 + 1.893 + 0.5 = 9.393
\end{aligned}$$

NDCG@3 = 7.916 / 9.393 = 0.843. The score reflects that the top-1 result was less relevant than it could have been; NDCG does not require perfect ordering, but it penalises high-relevance documents being below low-relevance ones.
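The worked example can be reproduced with a short sketch. It assumes exponential gain and that the full set of judged relevance grades for the query is available to build the ideal ranking; `dcg_at_k` and `ndcg_at_k` are illustrative names rather than a library API.

```python
import math
from typing import Sequence


def dcg_at_k(relevances: Sequence[int], k: int) -> float:
    """Discounted cumulative gain with exponential gain (2^rel - 1)."""
    return sum(
        (2 ** rel - 1) / math.log2(rank + 1)
        for rank, rel in enumerate(relevances[:k], start=1)
    )


def ndcg_at_k(ranked_relevances: Sequence[int], judged_relevances: Sequence[int], k: int) -> float:
    """Normalise DCG@k by the DCG@k of the best possible ordering of the judged docs."""
    ideal = sorted(judged_relevances, reverse=True)
    idcg = dcg_at_k(ideal, k)
    return dcg_at_k(ranked_relevances, k) / idcg if idcg > 0 else 0.0


# System order [2, 3, 1] against judged grades [3, 2, 1].
score = ndcg_at_k([2, 3, 1], [3, 2, 1], k=3)
print(round(score, 3))  # 0.843
```

Building the ideal ranking from all judged documents, not only the retrieved ones, keeps a retriever from looking perfect simply because it never surfaced the best document.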

Worked comparison: which metric flags the failure?

Suppose two retrievers return the following top-5 lists for the same query, with binary relevance labels (R = relevant, N = irrelevant):

- Retriever A: [R, N, N, N, N]

- Retriever B: [N, R, R, R, N]

Three relevant documents exist in the corpus.

| Metric | Retriever A | Retriever B | Verdict |
|---|---|---|---|
| MRR | 1.0 | 0.5 | A wins |
| MAP | 0.33 | 0.64 | B wins |
| Recall@5 | 0.33 | 1.0 | B wins |
| NDCG@5 (binary, exp gain) | 0.47 | 0.73 | B wins |

For MAP: AP(A) = 1.0 / 3 = 0.333 (one relevant retrieved, precision-at-rank-1 = 1.0, divided by 3 total relevant in the corpus); AP(B) = (1/2 + 2/3 + 3/4) / 3 = 0.639. For NDCG: DCG(A) = 1/log2(2) = 1.0, DCG(B) = 1/log2(3) + 1/log2(4) + 1/log2(5) ≈ 1.56, and IDCG = 1/log2(2) + 1/log2(3) + 1/log2(4) ≈ 2.13, which gives NDCG(A) ≈ 0.47 and NDCG(B) ≈ 0.73.

So MRR favours A (top-1 is correct) while MAP, Recall, and NDCG all favour B (B surfaces all 3 relevant documents inside top-5). The two retrievers fail in different ways: A gets the top slot right but surfaces only one of the three relevant documents, while B misses the top slot but surfaces all three. The headline lesson: MRR is the metric that disagrees with the others when the top-1 is right but the rest of the top-k is wrong. Pick the metric that matches what your application actually reads.
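The comparison table can be regenerated with one pass over each ranked list. This sketch assumes binary labels, so the exponential gain of a relevant document is 2^1 - 1 = 1, and takes k to be the length of the list. The helper name `score_binary_ranking` is illustrative.

```python
import math
from typing import Dict, Sequence


def score_binary_ranking(flags: Sequence[bool], total_relevant: int) -> Dict[str, float]:
    """Score one ranked list of binary labels; k is the length of the list."""
    if total_relevant <= 0:
        raise ValueError("total_relevant must be positive")
    hits = 0
    first_hit_rank = 0
    precision_sum = 0.0
    dcg = 0.0
    for rank, is_relevant in enumerate(flags, start=1):
        if not is_relevant:
            continue
        hits += 1
        if first_hit_rank == 0:
            first_hit_rank = rank
        precision_sum += hits / rank
        dcg += 1.0 / math.log2(rank + 1)  # gain is 1 for a relevant doc
    # Ideal ranking puts every relevant doc first, capped at the list depth.
    ideal_hits = min(total_relevant, len(flags))
    idcg = sum(1.0 / math.log2(rank + 1) for rank in range(1, ideal_hits + 1))
    return {
        "rr": 1.0 / first_hit_rank if first_hit_rank else 0.0,
        "ap": precision_sum / total_relevant,
        "recall": hits / total_relevant,
        "ndcg": dcg / idcg,
    }


retriever_a = score_binary_ranking([True, False, False, False, False], total_relevant=3)
retriever_b = score_binary_ranking([False, True, True, True, False], total_relevant=3)
for name, scores in (("A", retriever_a), ("B", retriever_b)):
    print(name, {metric: round(value, 2) for metric, value in scores.items()})
```

With a single query the per-query values (reciprocal rank, average precision) equal MRR and MAP; across an eval set they would be averaged.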

When does each metric beat the others?

MRR is best for top-1-only applications

Agent tool routing is a clean MRR problem. The agent picks one tool. Tools below rank 1 are never executed. MAP and NDCG would credit the system for ordering the unused alternates, which is a credit the application does not pay for. Stick with MRR for tool routing, FAQ retrieval, and known-item search.

MAP is best for binary-relevance corpus search

Classical TREC ad-hoc retrieval: a query, a corpus, a known set of relevant documents per query, the system has to surface as many as possible early. MAP captures this exactly. In modern RAG, MAP is less common because the corpus is typically open-ended (no fixed relevant set per query) and relevance is graded rather than binary.

NDCG is best when relevance is graded

E-commerce search, Q&A, recommender systems, and RAG with chunk-level grading all benefit from NDCG. A 0 to 4 relevance scale lets the metric distinguish a fully-on-topic chunk from a partially-on-topic chunk. The exponential gain form penalises low-relevance documents above high-relevance ones strongly. NDCG@10 is the headline metric in most RAG eval sets that go beyond binary relevance.

Recall@k is the sanity check

None of the three ranking metrics isolates the case where relevant chunks are missing from the top-k. If your retriever returns top-10 and the only relevant chunk sits at rank 47, MRR, MAP, and NDCG all score 0 for that query, but the 0 does not tell you whether the chunk was ranked too low or never surfaced at all. Recall@k is the companion check that asks directly: what fraction of the relevant chunks did the retriever surface in the top-k? Acceptable Recall@10 thresholds are domain-dependent (FAQ search, legal retrieval, code search, and medical QA all have different bars), but a sustained drop in Recall@k against a stable baseline is usually the first sign the retriever needs work, regardless of the NDCG score.
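A small sketch of the two set-based checks, assuming retrieved results are document ids in rank order and the labels are a set of relevant ids. The function names and ids are hypothetical. Computing recall at a deeper cutoff than production uses is one way, suggested here as an assumption rather than a rule from the text, to separate "ranked too low" from "never retrieved".

```python
from typing import Sequence, Set


def recall_at_k(retrieved_ids: Sequence[str], relevant_ids: Set[str], k: int) -> float:
    """Fraction of the relevant documents that appear in the top-k."""
    if not relevant_ids:
        return 0.0
    return len(set(retrieved_ids[:k]) & relevant_ids) / len(relevant_ids)


def precision_at_k(retrieved_ids: Sequence[str], relevant_ids: Set[str], k: int) -> float:
    """Fraction of the top-k slots occupied by relevant documents."""
    if k <= 0:
        raise ValueError("k must be positive")
    return sum(1 for doc_id in retrieved_ids[:k] if doc_id in relevant_ids) / k


# Hypothetical query: the only relevant chunk sits at rank 47 of 50.
ranked = [f"doc{i}" for i in range(1, 51)]
relevant = {"doc47"}
recall_10 = recall_at_k(ranked, relevant, k=10)  # 0.0: missing from the production top-k
recall_50 = recall_at_k(ranked, relevant, k=50)  # 1.0: retrievable, but ranked too low
```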

How to wire these metrics into evals in 2026

The pattern is the same across platforms.

- Build the labelled set. 500 to 2000 queries, each with at least one relevance judgment. For graded labels, a 0 to 3 or 0 to 4 scale is standard. Sources: production traces with annotation, BEIR-style benchmark data, hand-labelled corpus.

- Pick the depth k. Most RAG retrievers feed top-k to the generator, so k matches the production top-k (often 5, 10, or 20). Compute the metric at depth k.

- Run the retriever in CI. Capture top-k document ids per query.

- Compute the metric vector. MRR, MAP, NDCG@k, Recall@k, Precision@k. Store per-query and aggregate.

- Slice the aggregate. By query intent, by query length, by user segment, by retriever component. A single aggregate hides regressions inside one slice.

- Gate CI on a regression. A 2-3 point drop on NDCG@10 or a 5-point drop on Recall@10 typically warrants a block on the PR.
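The slice-and-gate steps can be sketched as follows. This is an illustration under assumptions: per-query scores are already computed and stored as dicts, the slice key is a hypothetical `intent` field, and the thresholds restate the 2-3 point and 5-point guidance on a 0 to 1 scale. None of the names come from a specific eval platform.

```python
from collections import defaultdict
from statistics import mean
from typing import Dict, List, Tuple

# Hypothetical gate thresholds: maximum allowed drop per metric, on a 0-1 scale.
MAX_DROP = {"ndcg@10": 0.03, "recall@10": 0.05}


def aggregate(rows: List[dict], metric: str, slice_key: str = "intent") -> Dict[str, float]:
    """Mean of one metric overall ("ALL") and per slice."""
    buckets = defaultdict(list)
    for row in rows:
        buckets["ALL"].append(row[metric])
        buckets[row[slice_key]].append(row[metric])
    return {name: mean(values) for name, values in buckets.items()}


def gate(baseline: List[dict], candidate: List[dict]) -> List[Tuple[str, str, float]]:
    """Return (metric, slice, drop) for every slice that regressed past its threshold."""
    failures = []
    for metric, allowed in MAX_DROP.items():
        base, cand = aggregate(baseline, metric), aggregate(candidate, metric)
        for slice_name, base_score in base.items():
            drop = base_score - cand.get(slice_name, 0.0)
            if drop > allowed:
                failures.append((metric, slice_name, round(drop, 3)))
    return failures


baseline_rows = [
    {"intent": "billing", "ndcg@10": 0.80, "recall@10": 1.0},
    {"intent": "billing", "ndcg@10": 0.70, "recall@10": 1.0},
    {"intent": "shipping", "ndcg@10": 0.90, "recall@10": 1.0},
    {"intent": "shipping", "ndcg@10": 0.80, "recall@10": 1.0},
]
candidate_rows = [
    {"intent": "billing", "ndcg@10": 0.88, "recall@10": 1.0},
    {"intent": "billing", "ndcg@10": 0.80, "recall@10": 1.0},
    {"intent": "shipping", "ndcg@10": 0.80, "recall@10": 1.0},
    {"intent": "shipping", "ndcg@10": 0.70, "recall@10": 1.0},
]
failures = gate(baseline_rows, candidate_rows)
print(failures)  # a CI job would exit non-zero when this list is non-empty
```

In this toy data the overall NDCG@10 moves by half a point, so only the per-slice check flags the `shipping` regression.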

OSS support varies by metric. BEIR and pytrec_eval cover classical IR metrics (MRR, MAP, NDCG@k) natively. Phoenix exposes MRR and NDCG retrieval evaluators. Ragas and DeepEval lead on RAG-quality metrics (context precision, recall, faithfulness) and typically require a thin custom scorer for MRR/MAP/NDCG. Hosted platforms FutureAGI, Galileo, and Braintrust expose retrieval ranking metrics as first-class evaluators or via custom-metric SDKs. For the platform comparison, see Best RAG Evaluation Tools in 2026.

Common mistakes when using ranking metrics

- Reporting MAP on graded labels. MAP is binary; treating a 0 to 4 label as relevant-if-greater-than-zero discards information. Switch to NDCG.

- NDCG with linear gain when the spec says exponential. The (2^rel - 1) form is the standard. Some libraries default to linear gain (rel / log2(i+1)), which understates the cost of putting a relevance-1 above a relevance-3.

- Comparing MRR across systems with different k. MRR at k=10 and MRR at k=5 are different metrics. Pin k.

- Ignoring Recall@k. A retriever can post a strong NDCG@k and still leave relevant chunks outside the top-k; Recall@k catches this.

- One aggregate score for a heterogeneous workload. Head queries, tail queries, factual queries, opinion queries all behave differently. Slice the aggregate.

- Using the corpus’s relevance judgments without spot-checking calibration. Annotators disagree. If two annotators label the same query at 2 and 4 respectively, the metric inherits the noise. Compute IAA (inter-annotator agreement) before trusting the dataset.

- Confusing nDCG with NDCG. They are the same metric; capitalisation varies across papers and libraries. Both refer to the normalised version of DCG.

- Stopping at offline metrics. Production NDCG@10 on live traces tells you whether the retriever is still working. Wire online retrieval eval into your observability backend.
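The linear-versus-exponential point in the list above is easy to see numerically. This sketch assumes the ranked list contains every judged document for the query, so the ideal ordering is just the same grades sorted; `ndcg` and the gain lambdas are illustrative.

```python
import math
from typing import Callable, Sequence


def ndcg(ranked: Sequence[int], k: int, gain: Callable[[int], float]) -> float:
    """NDCG@k with a pluggable gain function; ideal order is the sorted grades."""
    def dcg(relevances: Sequence[int]) -> float:
        return sum(
            gain(rel) / math.log2(rank + 1)
            for rank, rel in enumerate(relevances[:k], start=1)
        )

    ideal = dcg(sorted(ranked, reverse=True))
    return dcg(ranked) / ideal if ideal > 0 else 0.0


# A relevance-1 document ranked above a relevance-3 document.
ranking = [1, 3, 2]
exponential = ndcg(ranking, k=3, gain=lambda rel: 2 ** rel - 1)  # roughly 0.74
linear = ndcg(ranking, k=3, gain=lambda rel: float(rel))         # roughly 0.82
```

The same mis-ordering costs noticeably more under exponential gain, so scores computed with different gain functions should not be compared directly.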

Recent retrieval ranking metrics updates

| Date | Event | Why it matters |
|---|---|---|
| 2023-2024 | BEIR benchmark became standard for retriever eval | Cross-domain NDCG@10 reporting normalised across the field |
| 2024 | MTEB retrieval splits added to leaderboards | Embedding-model authors started reporting NDCG@10 on retrieval directly |
| 2025 | Phoenix shipped MRR/NDCG retrieval evaluators alongside its faithfulness/relevance set | Eval became turnkey for one major OSS option |
| 2026 | Online retrieval-quality monitoring more widely available across major eval platforms | NDCG@10 increasingly tracked as a production-side metric on RAG dashboards |

How to actually pick a metric for your retrieval workload

- List what your application reads. Top-1 only, top-k, or all of them?

- List how relevance is judged. Binary, graded, or unknown?

- Check labelling cost. Graded labels typically cost more than binary because annotators have to assign a level rather than a yes/no; the actual multiplier varies by rubric, document length, and annotator expertise.

- Match metric to shape. Top-1 only -> MRR. Binary relevance, all of top-k matters -> MAP. Graded relevance -> NDCG.

- Add Recall@k as the companion. Always.

- Slice the aggregate. By intent, length, segment, component.

- Wire it into CI. A regression on the headline metric blocks the PR.

- Wire it into production observability. Online retrieval eval catches drift.

For depth on the broader RAG eval surface, see What is RAG Evaluation?, RAG Evaluation Metrics in 2025, and Best Rerankers for RAG in 2026.

How to use this with FutureAGI

FutureAGI is the production-grade retrieval evaluation stack. The platform exposes MRR, MAP, and NDCG@k as first-class evaluators alongside the broader RAG metric set (Faithfulness, Context Relevance, Citation Correctness, Answer Relevance), so retrieval ranking metrics and generation quality metrics live on the same span. Span-attached scoring runs via turing_flash for guardrail screening at 50 to 70 ms p95 and full eval templates at about 1 to 2 seconds; CI gates run the same metrics offline against frozen test sets, and online drift detection fires when NDCG@10 regresses on production traffic.

The Agent Command Center is where retrieval dashboards, per-intent slicing, and rerank-comparison experiments live. The same plane carries 50+ eval metrics, persona-driven simulation that exercises retrieval edge cases, the BYOK gateway across 100+ providers, 18+ guardrails, and Apache 2.0 traceAI instrumentation that auto-instruments LangChain retrievers, LlamaIndex retrievers, Pinecone, Qdrant, Weaviate, Chroma, and Milvus on one self-hostable surface. Pricing starts free with a 50 GB tracing tier.

Sources

- Manning, Raghavan, Schutze - Introduction to Information Retrieval (2008)

- Jarvelin and Kekalainen - Cumulated Gain-Based Evaluation of IR Techniques (2002)

- TREC Question Answering Track

- BEIR: Heterogeneous Benchmark for Zero-shot IR

- MS MARCO passage ranking

- Ragas GitHub repo

- DeepEval GitHub repo

- pytrec_eval

- MTEB leaderboard


