Automated Agent Optimization in 2026: Algorithms, Loop Architecture, and a Drive-Thru Case Study

Technical guide to automated agent optimization in 2026: GEPA, ProTeGi, Bayesian search, MetaPrompt, PromptWizard, plus the production loop and a drive-thru case study at 66% to 96%.


Automated Agent Optimization in 2026: What It Is and Why Manual Prompting Lost

Updated May 2026.

Manual prompt engineering lost in 2026. You can tune one prompt by hand. You cannot tune a hundred, and you definitely cannot re-tune them every time the model behind them changes. The teams shipping reliable agents this year are running algorithmic search instead, scoring candidates on labeled or synthetic datasets and promoting the winner. Six algorithms cover most production cases as of this writing: RandomSearch, Bayesian search, MetaPrompt, ProTeGi, GEPA, and PromptWizard. This guide walks through what each algorithm does, when to pick which one, what the production loop looks like end-to-end, the failure modes to watch, and a drive-thru voice agent case study that moved from 66 percent to 96 percent task completion without a single hand-edited prompt.

What you’ll get from this guide

- The six algorithms and the structural difference between them (random sampling vs. critique-driven rewriting vs. evolutionary search)

- A decision matrix for picking the right algorithm given your task profile

- The five-step production loop: generate → experiment → simulate → evaluate → optimize

- When automated optimization is not worth running

- Specific failure modes to monitor during the loop (eval-set overfit, judge contamination, prompt drift, cost runaway)

- A worked case study with measurable before / after numbers

What Is Automated Agent Optimization and How Does It Differ From Prompt Engineering

Manual prompt engineering relies on a developer guessing which words might help, then eyeballing a few outputs. Automated agent optimization runs a measured search instead. The optimizer reads structured evaluation failures, proposes new prompt candidates, re-runs them against the same eval set, and selects the winner. Six algorithms cover most practical cases in 2026:

- RandomSearch generates random prompt variants. Useful as a control baseline before you commit to a heavier method.

- Bayesian search uses Optuna to intelligently select few-shot examples and template parameters. Best when the search space is bounded.

- MetaPrompt asks a strong model to rewrite the prompt once based on the eval critique. Fast and single-shot.

- ProTeGi (arXiv:2305.03495) generates textual gradients that explain why each failure happened, then rewrites the prompt to address the cited failures.

- GEPA (arXiv:2507.19457) runs an evolutionary loop with Pareto selection, keeping prompts that dominate the trade-off frontier between competing metrics.

- PromptWizard (arXiv:2405.18369) jointly optimizes instructions and few-shot examples through self-critique and synthesis.
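
To make the "Pareto selection" idea concrete, here is a minimal sketch of dominance filtering over prompt candidates. It is not GEPA's implementation. The candidate names, metric names, and scores are made up for illustration, and cost is negated so that higher is better on every metric.

```python
from typing import Dict, List

# Hypothetical scores per prompt candidate. Higher is better on every metric,
# so token usage is stored as a negative number.
Candidate = Dict[str, float]


def dominates(a: Candidate, b: Candidate) -> bool:
    """True if a is at least as good as b everywhere and strictly better somewhere."""
    at_least_as_good = all(a[m] >= b[m] for m in a)
    strictly_better = any(a[m] > b[m] for m in a)
    return at_least_as_good and strictly_better


def pareto_front(candidates: Dict[str, Candidate]) -> List[str]:
    """Return the names of candidates that no other candidate dominates."""
    front = []
    for name, scores in candidates.items():
        dominated = any(
            dominates(other, scores)
            for other_name, other in candidates.items()
            if other_name != name
        )
        if not dominated:
            front.append(name)
    return front


candidates = {
    "verbose_prompt": {"task_completion": 0.90, "neg_tokens": -900.0},
    "terse_prompt": {"task_completion": 0.88, "neg_tokens": -300.0},
    "weak_prompt": {"task_completion": 0.70, "neg_tokens": -950.0},
}
front = pareto_front(candidates)
print(front)
```

Neither the verbose nor the terse prompt beats the other on both metrics, so both stay on the frontier. The weak prompt is worse than the verbose one on both counts and is dropped.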

Running the Optimization Loop in Code

All six algorithms live behind a single Python SDK in agent-opt, Future AGI’s open-source automated-optimization library (Apache 2.0). Swapping algorithms is a one-line change. The example below uses GEPA against a drive-thru-style dataset; the same structure works for ProTeGi, PromptWizard, MetaPrompt, Bayesian, or RandomSearch by changing the optimizer class.

pip install agent-optfrom fi.opt.optimizers import (

RandomSearchOptimizer,

BayesianSearchOptimizer,

MetaPromptOptimizer,

ProTeGi,

GEPAOptimizer,

PromptWizardOptimizer,

)

from fi.opt.base import Evaluator

from fi.opt.generators import LiteLLMGenerator

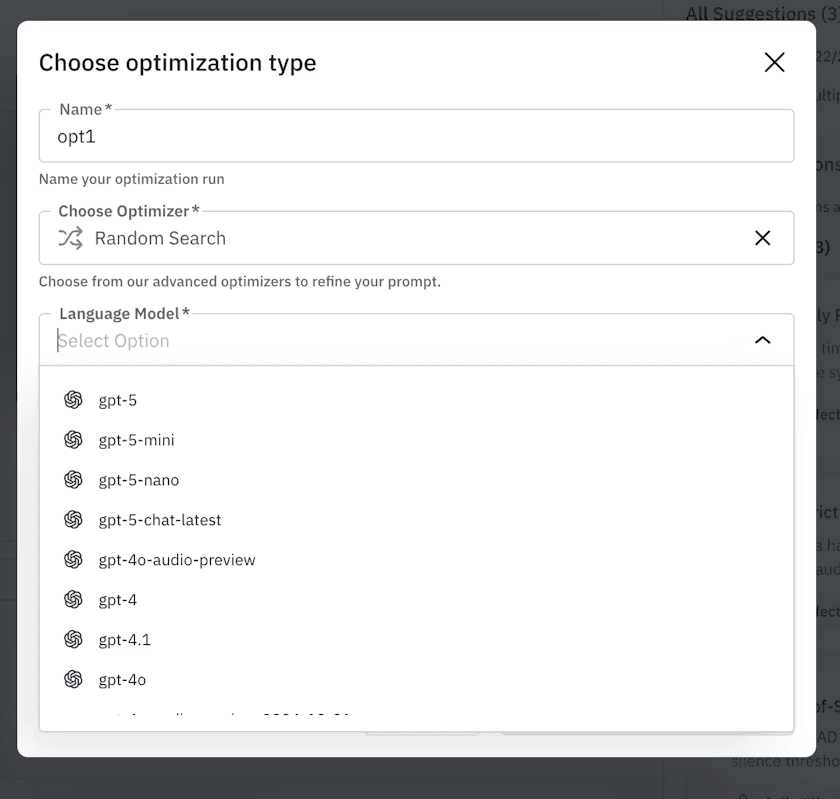

generator = LiteLLMGenerator(model="gpt-5-2025-08-07")

def evaluate_fn(prediction, ground_truth):

return 1.0 if prediction.strip().lower() == ground_truth.strip().lower() else 0.0

evaluator = Evaluator(metric_fn=evaluate_fn)

optimizer = GEPAOptimizer(

generator=generator,

evaluator=evaluator,

max_iterations=20,

)

training_examples = [

{"input": "I'd like a cheeseburger no pickles.", "output": "1 cheeseburger, no pickles"},

{"input": "Actually, make that a Sprite instead of the Coke.", "output": "Remove Coke, add Sprite"},

]

result = optimizer.optimize(

initial_prompt="You are a drive-thru order assistant.",

dataset=training_examples,

)

print(result.best_prompt)

print(result.best_score)fi.opt.optimizers, fi.opt.base.Evaluator, and fi.opt.generators.LiteLLMGenerator are the production import paths exposed by the agent-opt package. It ships under Apache 2.0, plugs into any LiteLLM-supported provider, and pairs with ai-evaluation for 50+ scoring metrics and traceAI for OpenTelemetry tracing of every iteration. Provider keys flow through the Agent Command Center BYOK gateway so the optimization loop runs against your own model accounts.

⭐ If you find agent-opt useful, drop a star on github.com/future-agi/agent-opt. It’s the Apache 2.0 library all six algorithms in this guide live in. Stars help the project surface to other teams running into the same automated-optimization problem.

For a comparison against other optimization libraries (DSPy, PromptLayer, Langfuse, Comet Opik), see the dedicated top prompt optimization tools roundup.

How to Pick the Right Optimization Algorithm

Use this as a “Choose X if” decision matrix. Each row maps a situation to the algorithm that handles it best, plus a fallback.

| Choose this | If you have | Fallback |

|---|---|---|

| GEPA | A multi-step agent with two or more competing metrics (quality + cost + latency) | ProTeGi |

| ProTeGi | A single dominant metric and you can label failure categories | GEPA |

| PromptWizard | A small pool of rich few-shot examples and need the optimal subset + ordering | Bayesian search |

| Bayesian search | A bounded search space (e.g. pick 5 of 100 examples) and a fast evaluator | PromptWizard |

| MetaPrompt | A single-turn rewrite against one metric and want a 5-minute smoke test | ProTeGi |

| RandomSearch | No baseline yet and you need to prove any method beats noise | Bayesian search |

ProTeGi and GEPA win for natural-language reasoning because they read structured failure critiques and rewrite the prompt against the cited failures. Bayesian search dominates when the search space is bounded (the classic case is selecting which 5 of 100 few-shot examples belong in the prompt). MetaPrompt is the fastest one-shot rewrite; treat it as a smoke test before committing to a 20-iteration loop.
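
To see why "pick 5 of 100 examples" counts as a bounded search space, the sketch below computes its size and runs a plain random-search baseline over subsets. It is a stand-in for the RandomSearch control, not the agent-opt implementation. The integer pool and `toy_score` are invented placeholders for real few-shot examples and a real evaluator.

```python
import math
import random


def random_subset_search(pool, k, score_fn, trials, seed=0):
    """Sample `trials` random k-subsets of the pool and keep the best-scoring one."""
    rng = random.Random(seed)
    best_subset, best_score = None, float("-inf")
    for _ in range(trials):
        indices = tuple(sorted(rng.sample(range(len(pool)), k)))
        score = score_fn([pool[i] for i in indices])
        if score > best_score:
            best_subset, best_score = indices, score
    return best_subset, best_score


# Placeholder pool: 100 "examples", each tagged by an integer id.
pool = list(range(100))


def toy_score(examples):
    # Placeholder evaluator: rewards subsets that cover more of 5 made-up categories.
    return len({e % 5 for e in examples}) / 5


# Number of distinct 5-example subsets that can be drawn from 100 examples.
search_space = math.comb(100, 5)
best_subset, best_score = random_subset_search(pool, 5, toy_score, trials=200)
print(search_space, best_subset, best_score)
```

The space holds 75,287,520 subsets: finite and enumerable in principle, but far too large to score exhaustively with an LLM evaluator. That is the gap a guided method such as Bayesian search is meant to close relative to this random baseline.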

When Automated Agent Optimization Is Not Worth It

Automated optimization is not a universal answer. Skip it when:

- Your eval set has fewer than ~50 labeled examples. The optimizer needs enough signal to separate real improvements from noise. With ten examples a coin flip beats most algorithms. Bootstrap a synthetic dataset first.

- The metric is purely subjective. If the only judge is “does this read well to me,” automated search will overfit to whatever LLM-judge you use. Either define a measurable proxy or do human review.

- The bottleneck is the model, not the prompt. If your task fails because the model cannot reason at the required depth, no prompt edit will rescue it. Switch model first, optimize second.

- You are still iterating on the agent’s tool set. Optimizing prompts on a moving target wastes the work. Lock the tools, then optimize.

- Per-iteration inference cost dominates. A 20-iteration ProTeGi run against 500 examples costs roughly $30 on gpt-5 and roughly $4 on gpt-5-mini, scaled to prompt length (figures based on published per-token pricing as of 2026-05-08; check the latest list price before budgeting). If the agent serves ten users a day, the optimization run costs more than a month of production traffic.
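
A back-of-the-envelope estimate for the cost point above can be sketched as follows. The token counts and per-million-token prices are placeholders, not real list prices, and the formula only counts one model call per example per iteration. It ignores any extra calls the optimizer makes to critique or rewrite prompts.

```python
def estimate_run_cost(
    iterations,
    examples,
    input_tokens,
    output_tokens,
    usd_per_million_input,
    usd_per_million_output,
):
    """Estimate the evaluation cost of one optimization run in USD."""
    calls = iterations * examples
    input_cost = calls * input_tokens / 1_000_000 * usd_per_million_input
    output_cost = calls * output_tokens / 1_000_000 * usd_per_million_output
    return input_cost + output_cost


# Placeholder numbers for illustration only; substitute your provider's prices.
cost = estimate_run_cost(
    iterations=20,
    examples=500,
    input_tokens=800,
    output_tokens=150,
    usd_per_million_input=2.0,
    usd_per_million_output=8.0,
)
print(f"${cost:.2f}")
```

Comparing this figure with your monthly production spend gives a quick read on whether the run is worth it.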

What to Watch During the Optimization Loop

A few failure modes are common enough to call out:

- Eval-set overfit. The optimizer can find prompts that game your scoring rubric without generalizing. Hold out 20% of the synthetic set, never expose it to the optimizer, and check the gap between training and holdout scores.

- LLM-judge contamination. If the same model writes the prompt and judges the output, the judge is biased. Use a different family for the judge or pair it with deterministic rules. See the LLM-as-a-judge breakdown for the full failure-mode list.

- Prompt drift on model upgrade. A prompt tuned to gpt-5-2025-08-07 may regress when you swap to claude-opus-4-7. Re-run the optimization (or at least the eval) when you switch model. The same principle drives model and prompt selection.

- Cost runaway from long prompts. Optimization sometimes adds context for marginal quality gains. Track tokens-per-call as a guardrail metric so the winning prompt does not double your inference bill. Pair the optimization loop with OpenTelemetry tracing to see per-iteration token cost.
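
The holdout check from the first bullet can be sketched in a few lines. The 20% fraction comes from the text above. The seed, the 0.05 tolerance, and the example scores are arbitrary assumptions you should tune for your own eval set.

```python
import random


def split_holdout(rows, holdout_fraction=0.2, seed=13):
    """Shuffle once, then reserve a fixed fraction the optimizer never sees."""
    shuffled = list(rows)
    random.Random(seed).shuffle(shuffled)
    n_holdout = round(len(shuffled) * holdout_fraction)
    return shuffled[n_holdout:], shuffled[:n_holdout]


def overfit_gap(train_score, holdout_score):
    """Positive gap means the prompt does better on data the optimizer saw."""
    return train_score - holdout_score


def looks_overfit(train_score, holdout_score, tolerance=0.05):
    # The tolerance is an assumed threshold, not a recommendation from any library.
    return overfit_gap(train_score, holdout_score) > tolerance


rows = [{"id": i} for i in range(500)]
train, holdout = split_holdout(rows)
print(len(train), len(holdout))

# Made-up scores: a strong training score with a much weaker holdout score.
print(looks_overfit(train_score=0.96, holdout_score=0.81))
```

Pass only `train` to the optimizer and score the winning prompt on `holdout` once at the end.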

Case Study: Drive-Thru Voice Agent From 66 to 96 Percent Task Completion

Below is a worked example from the FutureAGI team. The goal was a fast-food voice agent for “Future Burger” that handled the chaos of real customers: hesitation, mid-order changes, interruptions, and rushed speech.

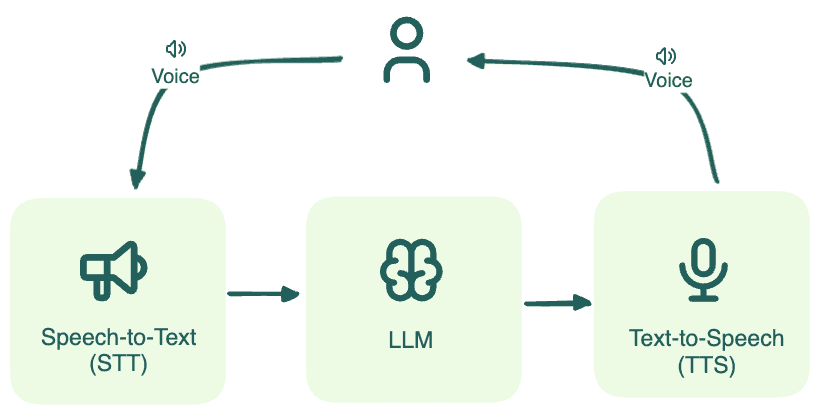

Architecture: Brain-First Voice Agent

We adopted a brain-first approach. The STT and TTS layers are interchangeable peripherals that handle input and output. The LLM is the brain. It handles reasoning, context switching, and tool calls. If the agent fails to understand that “Actually, make that a Sprite” means removing the previous drink, no amount of realistic voice synthesis saves the interaction. Fix the intelligence, not the interface.
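
The Sprite example comes down to order state: a correction has to overwrite, not append. Here is a minimal sketch of that behavior, assuming a hypothetical `Order` tool interface that is not taken from the case study's actual agent.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Order:
    """Hypothetical cart state the LLM mutates through tool calls."""

    items: List[str] = field(default_factory=list)

    def add(self, item: str) -> None:
        self.items.append(item)

    def replace(self, old: str, new: str) -> None:
        """Swap old for new in place; if old is not in the cart, just add new."""
        if old in self.items:
            self.items[self.items.index(old)] = new
        else:
            self.items.append(new)


order = Order()
order.add("cheeseburger, no pickles")
order.add("Coke")

# "Actually, make that a Sprite" should map to replace, not add.
order.replace("Coke", "Sprite")
print(order.items)
```

An agent that maps the correction to `add` leaves both drinks in the cart, which is the logic failure the simulation surfaces in Step 3.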

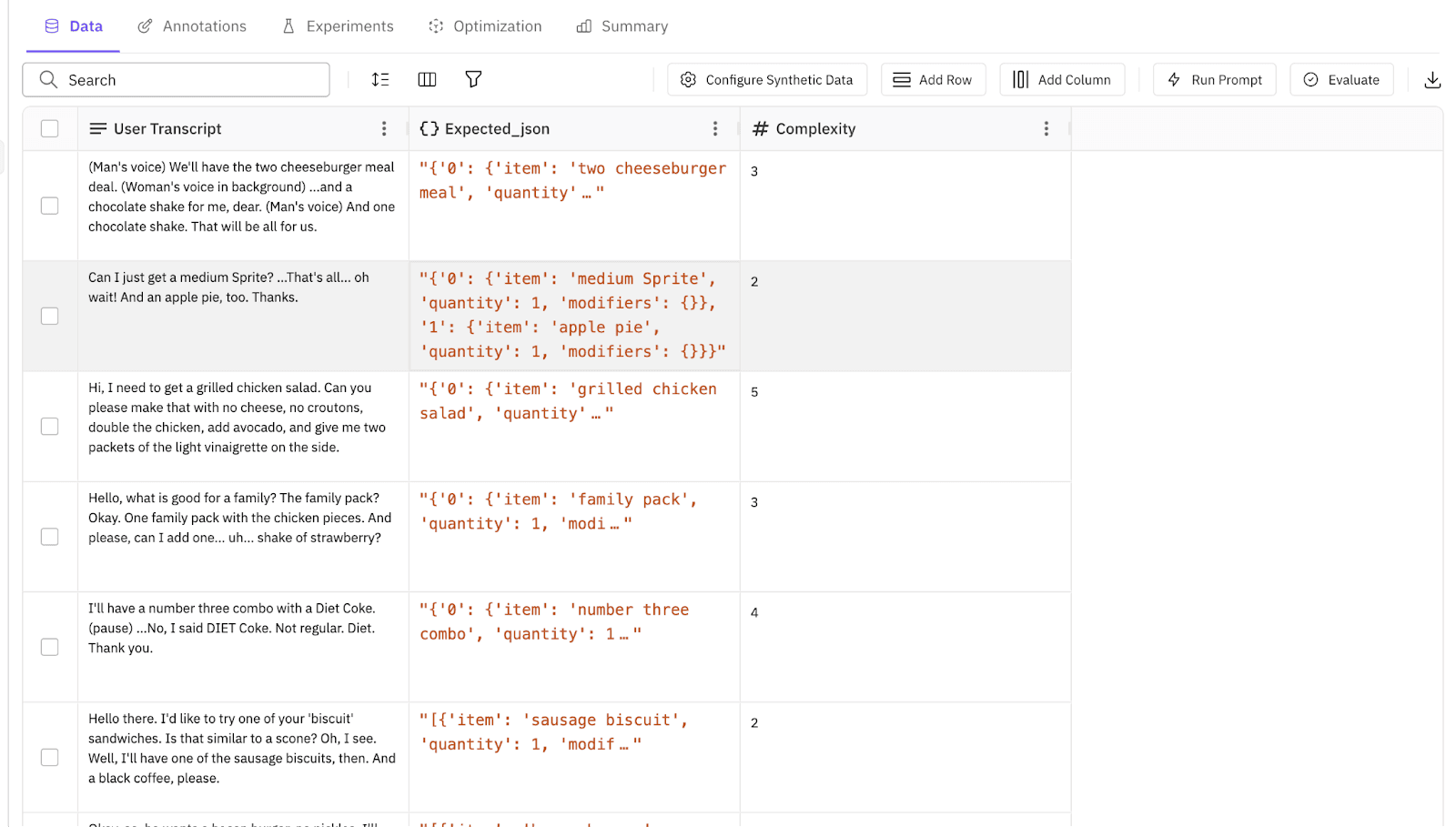

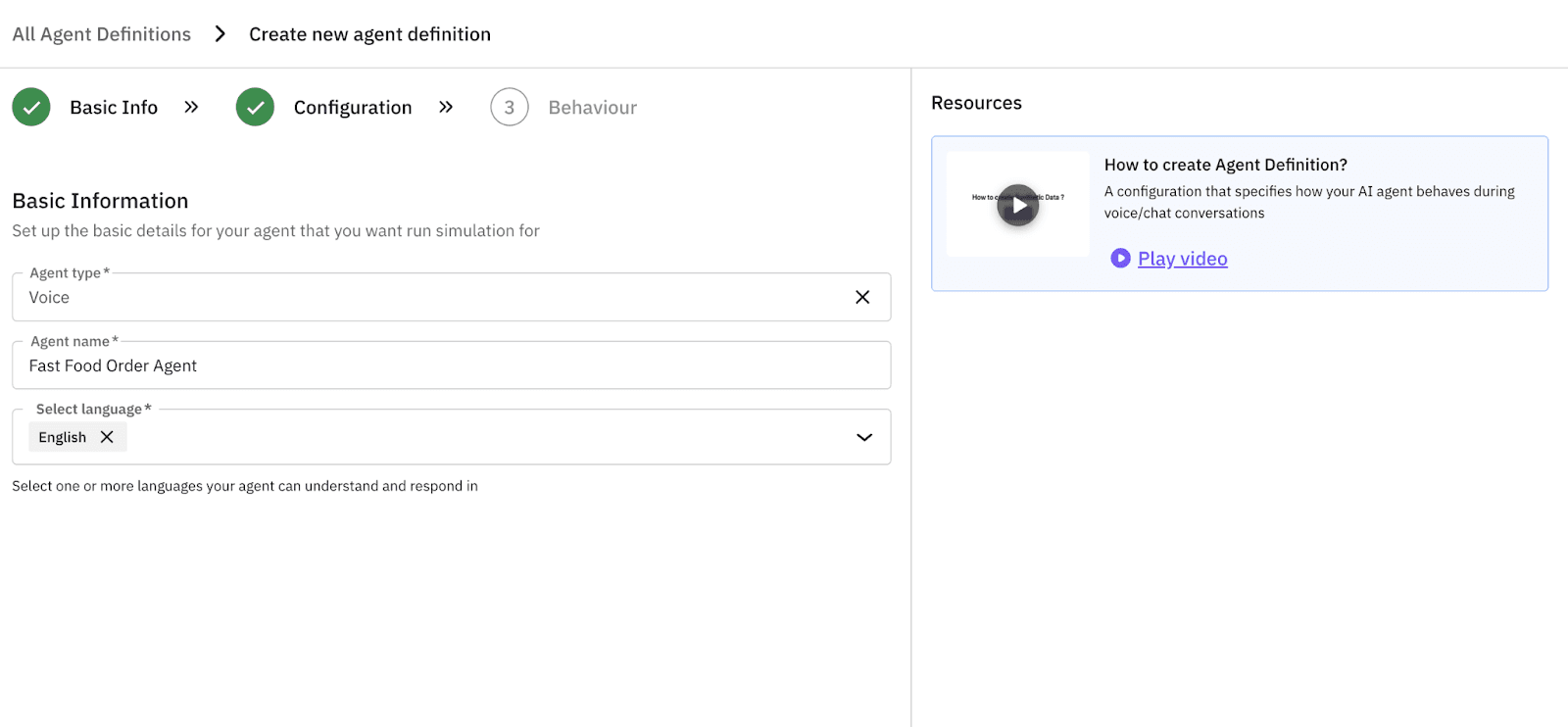

Step 1: Generate Synthetic Voice Agent Test Data

You do not need to wait for customers to start generating test data. We used FutureAGI’s synthetic data generator to build a “ground truth” set with user_transcript (what they say) and expected_order (what the agent should book).

- Prompt: “Generate 500 diverse drive-thru interactions. Include complex orders like ‘Cheeseburger no pickles’, combo meals, and modifications.”

Within seconds we had a structured 500-row dataset of inputs and expected answers. See the synthetic data docs for details.
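
Before trusting a generated set, it is worth checking that every row has both fields. The sketch below uses the `user_transcript` and `expected_order` field names from this step. The validation rule and the sample rows are assumptions for illustration.

```python
REQUIRED_FIELDS = ("user_transcript", "expected_order")


def invalid_rows(rows):
    """Return indices of rows that miss a required field or hold an empty value."""
    bad = []
    for index, row in enumerate(rows):
        if any(not str(row.get(name, "")).strip() for name in REQUIRED_FIELDS):
            bad.append(index)
    return bad


# Sample rows for illustration; the third one is deliberately broken.
rows = [
    {
        "user_transcript": "I'd like a cheeseburger no pickles.",
        "expected_order": "1 cheeseburger, no pickles",
    },
    {
        "user_transcript": "Actually, make that a Sprite instead of the Coke.",
        "expected_order": "Remove Coke, add Sprite",
    },
    {"user_transcript": "Uh, can I get, um...", "expected_order": ""},
]
bad = invalid_rows(rows)
print(bad)
```

Rows flagged here are better regenerated than silently scored, since an empty expected answer makes every prediction look wrong.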

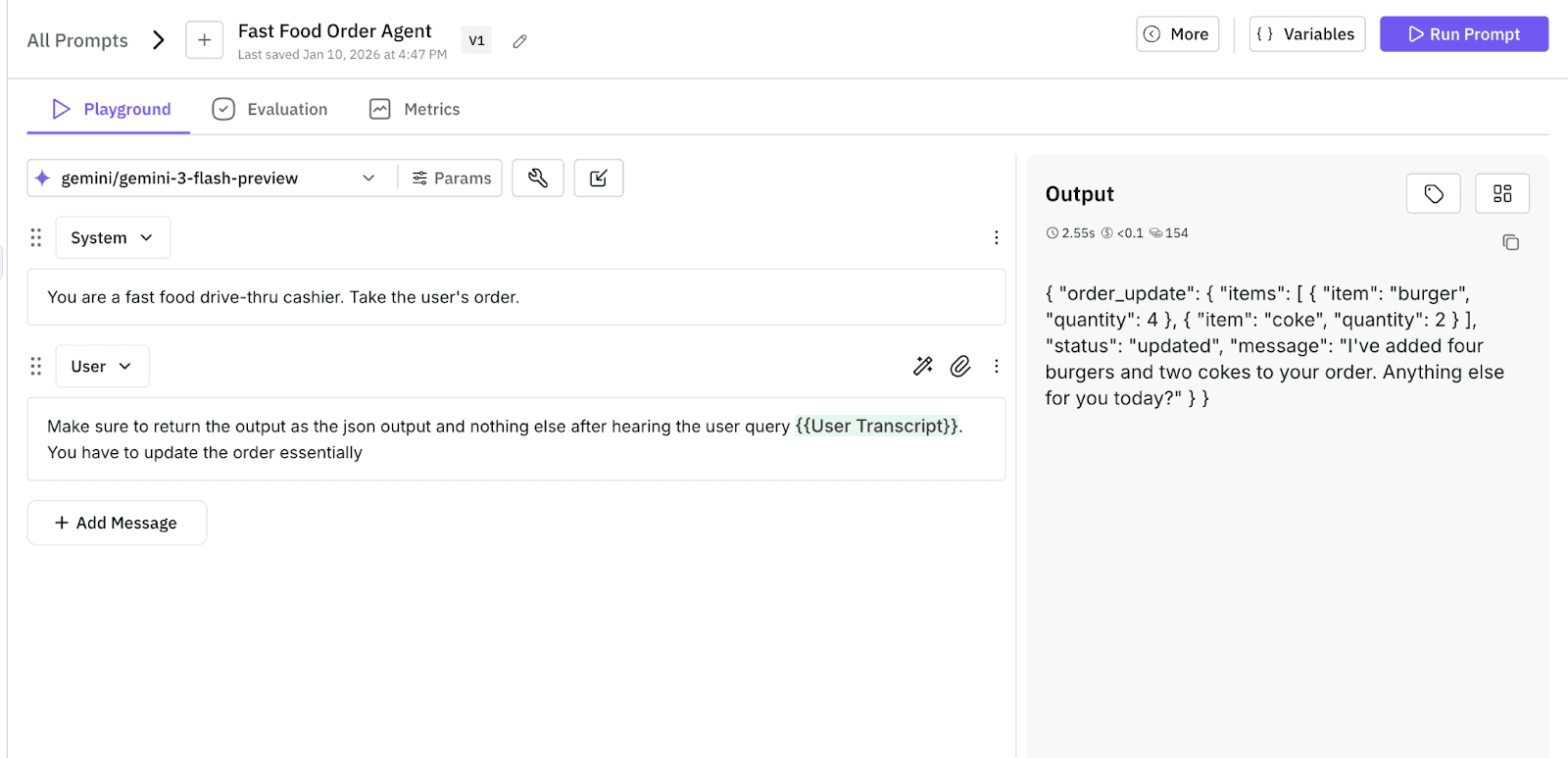

Step 2: Establish a Voice Agent Baseline in the Prompt Workbench

Before worrying about voice latency, we made sure the logic held up. We drafted an initial system prompt (“v0.1”) in the Prompt Workbench and saved the template for version tracking.

Then we ran an experiment. We tested this prompt against the 500 synthetic scenarios on cost-effective, low-latency models: gpt-5-nano, gemini-3-flash, and gpt-5-mini.

- Goal. Establish a baseline. Does the agent understand “no pickles”?

- Result. Logic was decent (80 percent accuracy) but the text responses ran to multi-paragraph essays. We saved this as the “Control” version to beat.

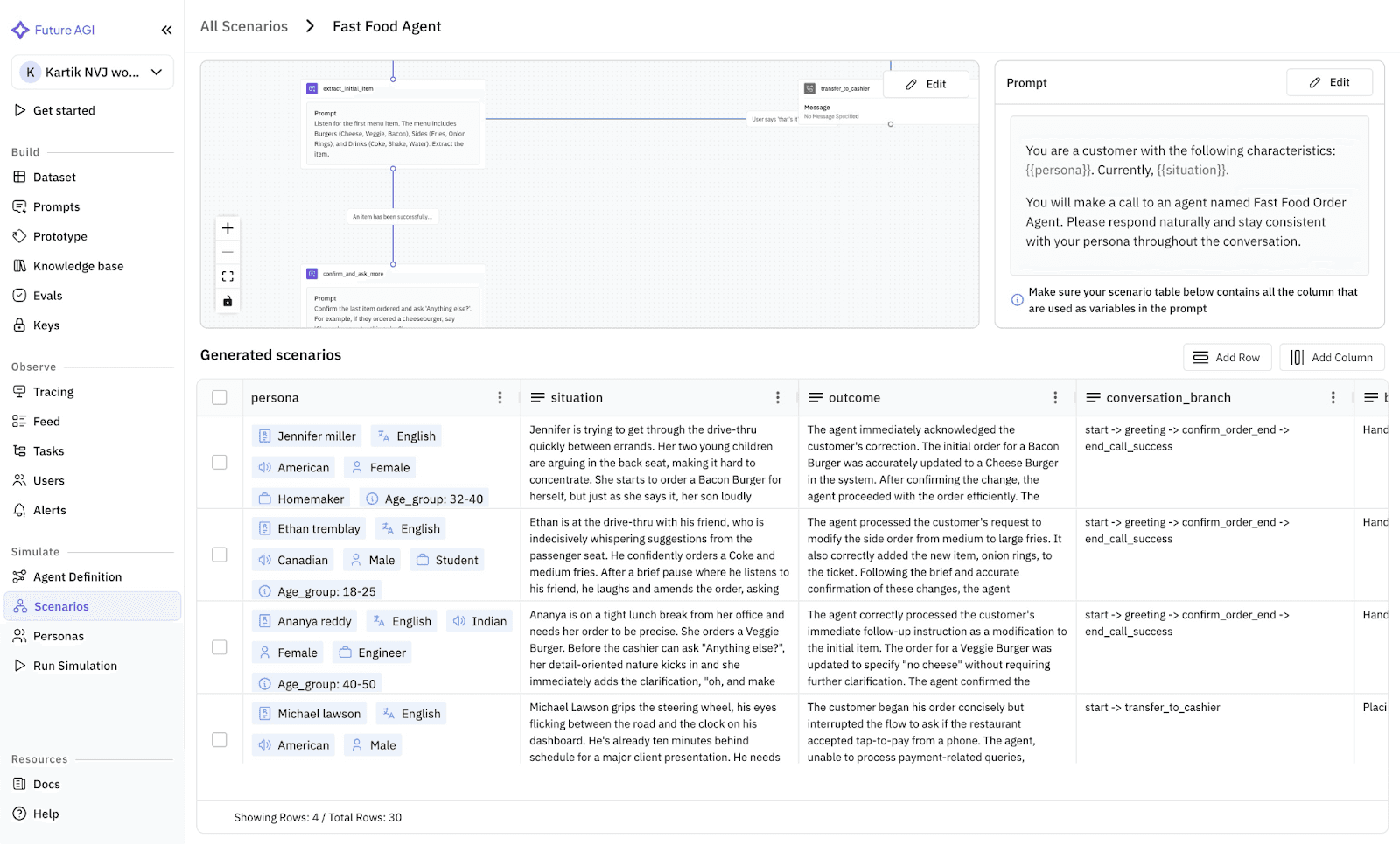

Step 3: Stress Test the Agent with Simulation Scenarios

Now we connect the agent and run a simulation. We do not just feed it the text. We apply scenarios.

We ran a wide variety of scenarios. The simulator mimics users who hesitate, stutter, change their mind, lose patience, or speak in a rush.

Results were immediate and brutal:

- Latency. The agent spoke too much (“Certainly! I have updated your order…”) and frustrated the simulated user.

- Logic breaks. When the user changed their mind, the agent added both items to the cart.

- Success rate. Dropped to 66 percent.
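
A single success rate hides which scenario is doing the damage. Here is a small sketch of a per-scenario breakdown, assuming each simulated run is logged with a `scenario` label and a `completed` flag. Both field names and the sample runs are invented.

```python
from collections import defaultdict


def success_rate_by_scenario(runs):
    """Group simulated runs by scenario and compute the completion rate of each."""
    totals = defaultdict(int)
    passes = defaultdict(int)
    for run in runs:
        totals[run["scenario"]] += 1
        passes[run["scenario"]] += int(run["completed"])
    return {scenario: passes[scenario] / totals[scenario] for scenario in totals}


# Invented run log for illustration.
runs = [
    {"scenario": "indecisive", "completed": True},
    {"scenario": "indecisive", "completed": False},
    {"scenario": "indecisive", "completed": False},
    {"scenario": "rushed", "completed": True},
    {"scenario": "rushed", "completed": True},
]
rates = success_rate_by_scenario(runs)
overall = sum(int(r["completed"]) for r in runs) / len(runs)
print(rates, overall)
```

The breakdown tells you which failed runs to hand to the optimizer in the next step.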

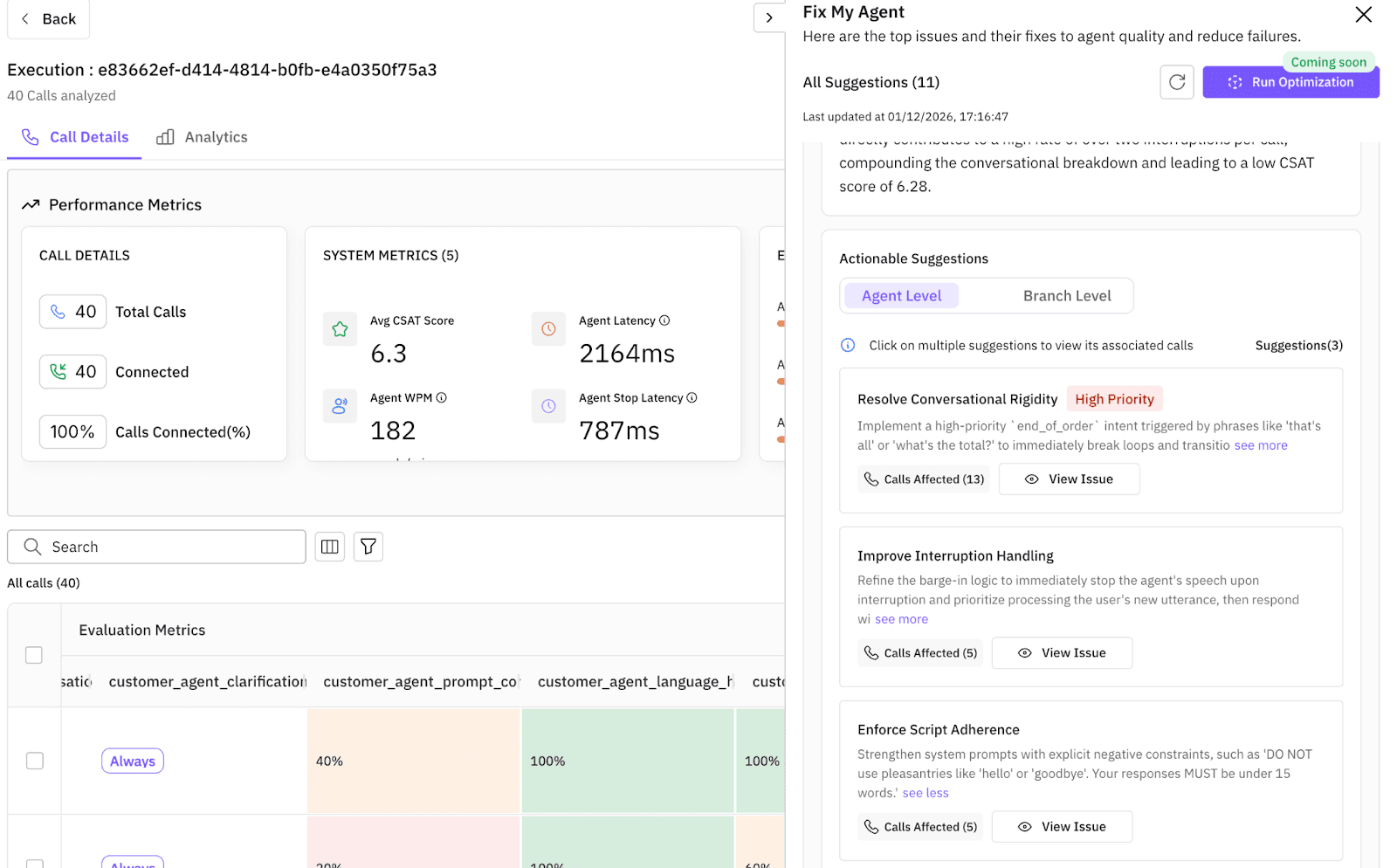

Step 4: Fix the Failures With Automated Prompt Optimization

This is the “fix my agent” moment. Effective repair requires diagnostics, not intuition. We defined an evaluation set of 10 criteria tailored to the restaurant agent, covering nuances like Context_Retention, Objection_Handling, and Language_Switching.

Because FutureAGI’s multi-modal evaluation suite supports audio inputs directly (not just transcripts), the platform did not just return a failing grade. It recognized patterns across hundreds of simulated conversations and surfaced evaluation-driven, actionable fixes:

- Fix #1 (latency). “Reduce decision-tree depth for menu inquiries and remove redundant validation steps.”

- Fix #2 (hallucination). “Restrict generative capabilities to the defined menu_items vector store to prevent inventing dishes.”

Instead of manually editing the system prompt and guessing which instructions would solve these specific problems, we let the engine solve it. We selected the failed simulation runs and chose an optimization algorithm (ProTeGi or GEPA).

- Objective. Maximize Task_Completion and Customer_Interruption_Handling.

- Process. The system analyzed the conversation logs. It saw the agent was too wordy. It automatically iterated on the system prompt, testing variations like “Be extremely brief” or “If user changes mind, overwrite previous item.”

- Loop. Run new prompts against the simulator, evolve the instructions, repeat until metrics climb.
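
The iterate-and-test loop above has roughly this shape. The sketch is a greedy stand-in, not ProTeGi or GEPA: it tries each candidate instruction, keeps the one that raises the score most, and stops when nothing helps. `toy_score` is a made-up placeholder for running the prompt through the simulator.

```python
def greedy_optimize(base_prompt, candidate_instructions, score_fn, max_rounds=3):
    """Append one instruction per round while the score keeps improving."""
    best_prompt, best_score = base_prompt, score_fn(base_prompt)
    remaining = list(candidate_instructions)
    for _ in range(max_rounds):
        if not remaining:
            break
        scored = [(score_fn(best_prompt + " " + ins), ins) for ins in remaining]
        top_score, top_instruction = max(scored, key=lambda pair: pair[0])
        if top_score <= best_score:
            break  # No candidate improves on the current prompt.
        best_prompt = best_prompt + " " + top_instruction
        best_score = top_score
        remaining.remove(top_instruction)
    return best_prompt, best_score


def toy_score(prompt):
    # Placeholder for a simulator run: rewards the two behaviors that were failing.
    score = 0.5
    if "Be extremely brief" in prompt:
        score += 0.25
    if "overwrite previous item" in prompt:
        score += 0.25
    return score


instructions = [
    "Be extremely brief.",
    "If user changes mind, overwrite previous item.",
    "Always greet the customer warmly.",
]
best_prompt, best_score = greedy_optimize(
    "You are a drive-thru order assistant.", instructions, toy_score
)
print(best_score)
print(best_prompt)
```

The greeting instruction never raises the score, so the loop leaves it out. Real optimizers generate their candidates from failure critiques and do not draw from a fixed list.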

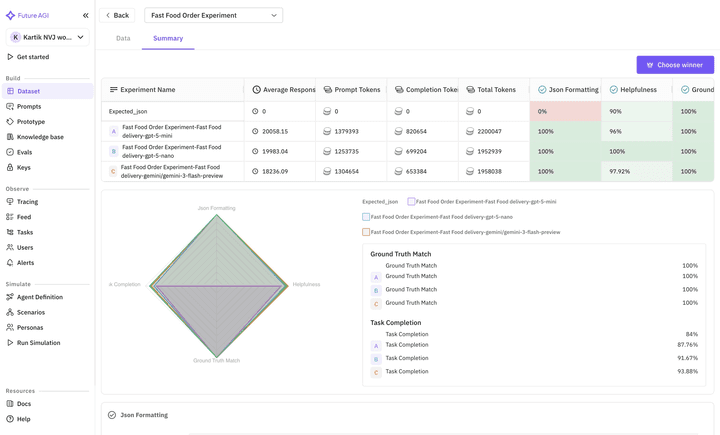

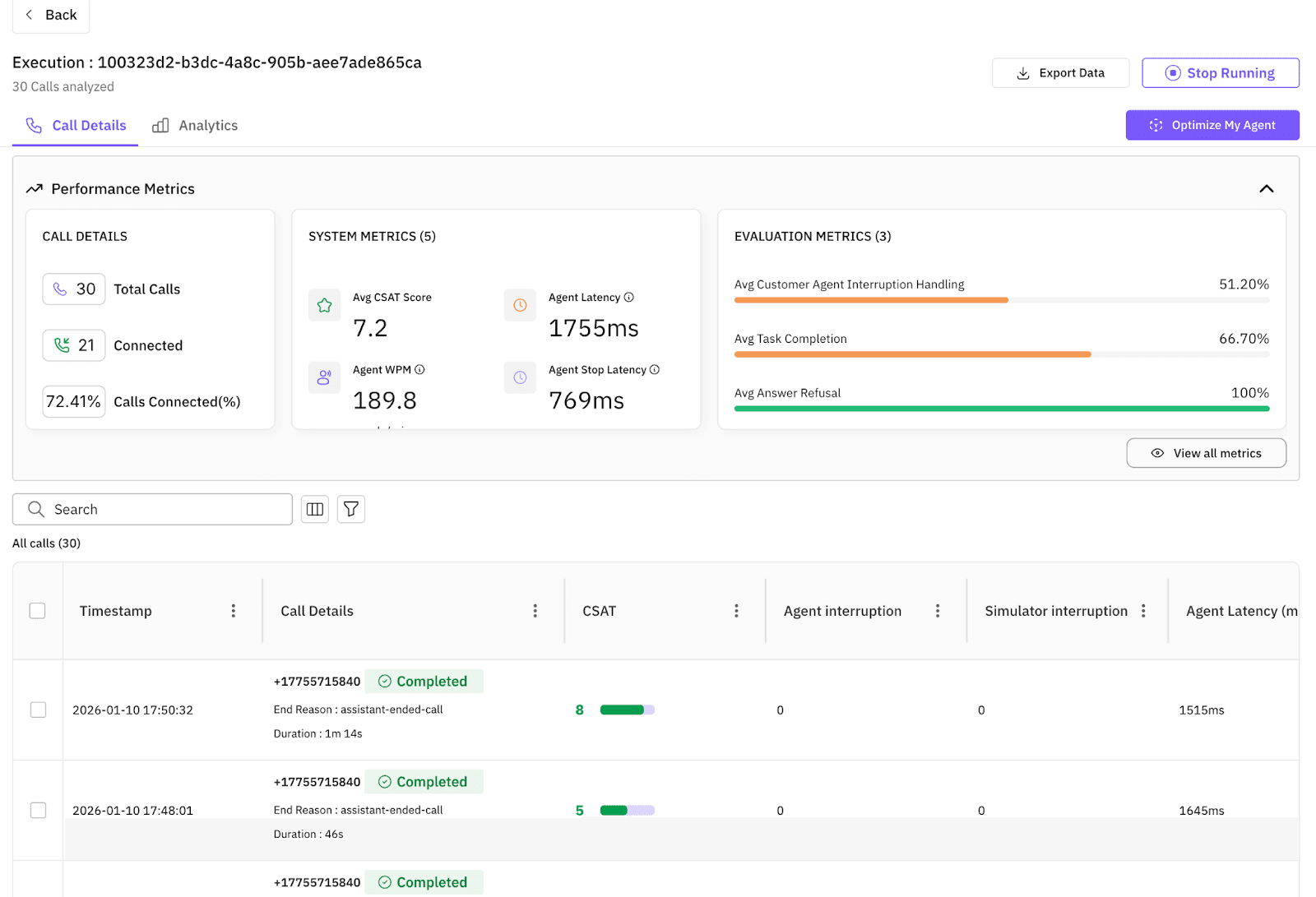

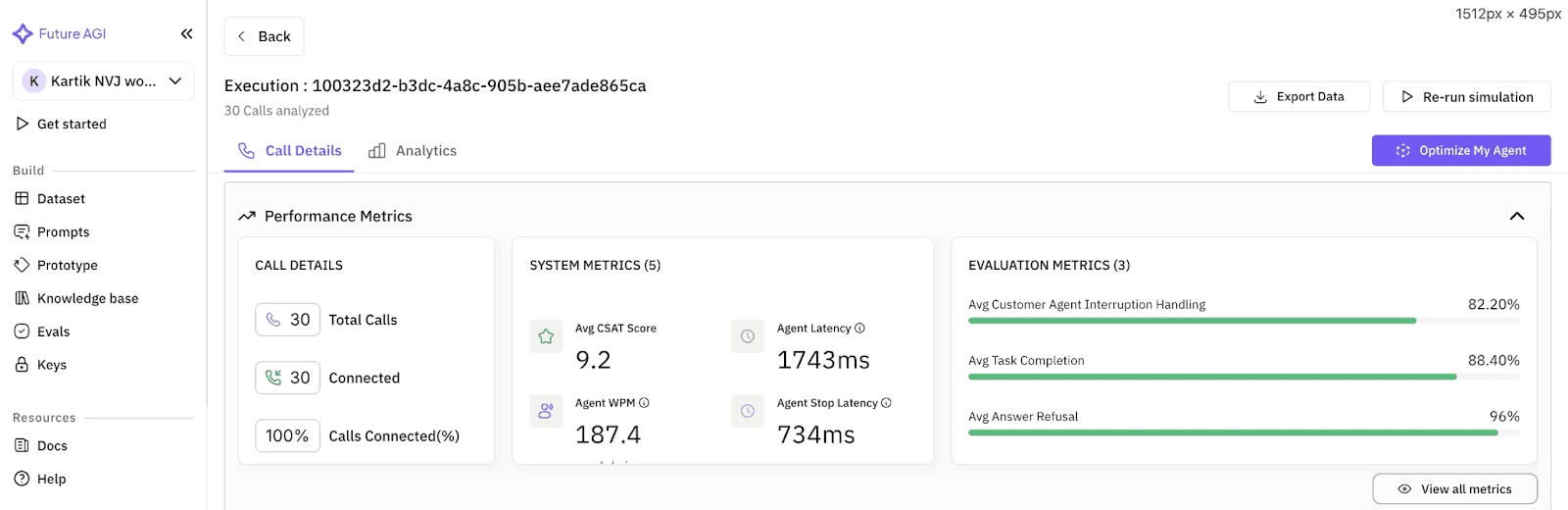

Step 5: Result: 66 Percent to 96 Percent Task Completion

After the optimization loop finished, the platform presented the winner.

- Before. Polite but slow, failed to track complex changes.

- After. Crisp. “Burger, no pickles. Got it.” Handled the “Indecisive” scenario with 96 percent task completion.

We did not just tweak the bot. We engineered a solution that is measurably better against chaos.

Before automated optimization: the wordy, error-prone baseline.

After automated optimization: crisp, fast, handles mid-order changes.

What Changed Since 2025

In 2025, automated prompt optimization was largely a research conversation. Papers like ProTeGi (arXiv:2305.03495), GEPA (arXiv:2507.19457), and PromptWizard (arXiv:2405.18369) shipped reference implementations but did not ship managed runtimes. By May 2026, several platforms expose these algorithms as integrated workflows. FAGI Optimize ships all six algorithms, DSPy continues to evolve its MIPRO and BootstrapFewShot optimizers, and prompt registries like PromptLayer added A/B promotion loops. The practical bottleneck has shifted from “can we automate prompt search” to “which algorithm fits which task profile” and “how do we keep the optimization loop attached to the production agent traces.”

How to Move From Manual Tweaking to a Generate, Experiment, Simulate, Evaluate, Optimize Loop

Stop relying on vibes. Move to a five-step loop:

- Generate a synthetic dataset that exercises edge cases.

- Experiment with a baseline prompt across two or three low-cost models.

- Simulate the agent under realistic chaos: interruptions, hesitation, mid-task changes.

- Evaluate with a rubric of 5 to 10 task-specific criteria and an LLM-as-judge where appropriate.

- Optimize with GEPA or ProTeGi against the labeled failure set.
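
The Evaluate step can be sketched as a small rubric report that feeds the Optimize step. The criterion names are borrowed from the case study. The per-criterion pass rates and the 0.8 threshold are invented for illustration.

```python
def rubric_report(scores, threshold=0.8):
    """Return the mean rubric score and the criteria that fall below the threshold."""
    overall = sum(scores.values()) / len(scores)
    failing = sorted(name for name, value in scores.items() if value < threshold)
    return overall, failing


# Invented pass rates per criterion, each between 0 and 1.
scores = {
    "Context_Retention": 0.5,
    "Objection_Handling": 0.75,
    "Language_Switching": 1.0,
}
overall, failing = rubric_report(scores)
print(overall, failing)
```

The failing criteria, plus the runs behind them, make up the labeled failure set the optimizer works against.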

You do not need real users to build a robust baseline. You need the right infrastructure to start.

Sources

Primary sources for the algorithms, benchmarks, and implementations referenced above.

- agent-opt (the Apache 2.0 library all six algorithms live in): github.com/future-agi/agent-opt

- ai-evaluation (50+ scoring metrics, paired with agent-opt): github.com/future-agi/ai-evaluation

- traceAI (OpenTelemetry tracing for every iteration): github.com/future-agi/traceAI

- ProTeGi (“Automatic Prompt Optimization with Gradient Descent and Beam Search”, Pryzant et al., 2023): arxiv.org/abs/2305.03495

- GEPA (“Reflective Prompt Evolution Can Outperform Reinforcement Learning”, 2025): arxiv.org/abs/2507.19457

- PromptWizard (“Task-Aware Prompt Optimization Framework”, Microsoft Research, 2024): arxiv.org/abs/2405.18369

- DSPy (Stanford NLP’s programming model for LLMs, MIPRO + BootstrapFewShot): github.com/stanfordnlp/dspy

- Optuna (Bayesian search backend): github.com/optuna/optuna

- LiteLLM (provider abstraction used by agent-opt): github.com/BerriAI/litellm

For the broader landscape of optimization libraries (DSPy, PromptLayer, Langfuse, Comet Opik), see the top prompt optimization tools roundup. For agent observability that surrounds the optimization loop, see the traceAI + OpenTelemetry guide.

