A/B Testing LLM Prompts in 2026: Best Practices and Pitfalls

How to A/B test LLM prompts in production: sample size, traffic split, eval-gated rollback, judge variance, and when not to A/B at all. The 2026 playbook.


A team rolls out a 12-line refinement to the support agent’s groundedness prompt. The change passes the offline eval suite. The team flips a feature flag at 10am Tuesday for 100% of users. By 3pm refusal rate on legitimate refund queries is up 14 points. The on-call engineer rolls back from a Slack thread. The post-mortem reveals: the change shifted the model toward more cautious refusal patterns; the offline eval set under-represented the legitimate-refund slice; and there was no per-cohort rollout monitor to catch the regression in the first 30 minutes. By 5pm the team has wired a 50/50 A/B split with eval-gated rollback that should have existed since launch.

This is what A/B testing LLM prompts looks like when the discipline is missing. The cost of skipping the A/B is paid in incidents and trust. This guide covers the seven best practices that make A/B tests on LLM prompts actually work in 2026, and the four cases where A/B is the wrong tool. The methods extend the classical A/B testing playbook (Kohavi, Tang, Xu’s Trustworthy Online Controlled Experiments, and Braintrust’s A/B testing docs) with the LLM-specific corrections for judge variance, prompt versioning, and eval-gated rollback.

TL;DR: The seven best practices

| # | Practice | What it prevents |
|---|---|---|
| 1 | Pre-register the primary metric and threshold | Multi-metric peeking; false-positive on noise |
| 2 | Run a power analysis | Underpowered tests that fail to detect real lifts |
| 3 | Calibrate the judge | LLM-as-judge variance silently inflating effect sizes |
| 4 | Cohort-stable hashing | Mid-session arm flips polluting the comparison |
| 5 | Eval-gated rollback | Regressions reaching users before monitoring fires |
| 6 | Pair offline and online A/B | Offline-only catches what offline can catch; online catches the rest |
| 7 | Shadow eval when traffic is too low | Wasted weeks chasing significance that the route cannot deliver |

If you only read one row: the unit of safe prompt rollout is per-cohort A/B with eval-gated rollback. The A/B is the experiment; the rollback is the safety net.

Practice 1: Pre-register the primary metric and threshold

The temptation is a multi-metric scorecard (“we will check faithfulness, refusal rate, latency, cost, and feedback rate”). An A/B test with five primary metrics has a roughly 23% chance of a false positive on at least one of them even when the change has no real effect (1 - 0.95^5 at alpha=0.05 per metric, assuming the metrics are independent). Worse, post-hoc cherry-picking of “the metric that moved” is a known antipattern.

The pattern: pick the one metric that captures the failure mode the change targets. Pre-register the metric, the threshold, and the analysis date before the experiment runs. Track 3-5 secondary metrics for context but do not allow them to claim the win on their own.
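The 23% figure can be checked with a few lines of arithmetic. This is a minimal sketch that assumes the metrics are statistically independent, which real metrics rarely are, so treat the output as a rough guide. The function name is illustrative, not from any library.

```python
def familywise_false_positive_rate(num_metrics: int, alpha: float = 0.05) -> float:
    """Chance that at least one of num_metrics independent tests fires on noise."""
    return 1.0 - (1.0 - alpha) ** num_metrics


if __name__ == "__main__":
    # Compare one pre-registered primary metric against larger scorecards.
    for k in (1, 3, 5):
        rate = familywise_false_positive_rate(k)
        print(f"{k} primary metric(s): {rate:.1%}")
```

With five metrics the function returns about 0.226, which the text rounds to 23%. With one metric it stays at the nominal 5%.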

For depth on choosing metrics, see LLM Evaluation Frameworks, Metrics, and Best Practices and What is LLM Evaluation.

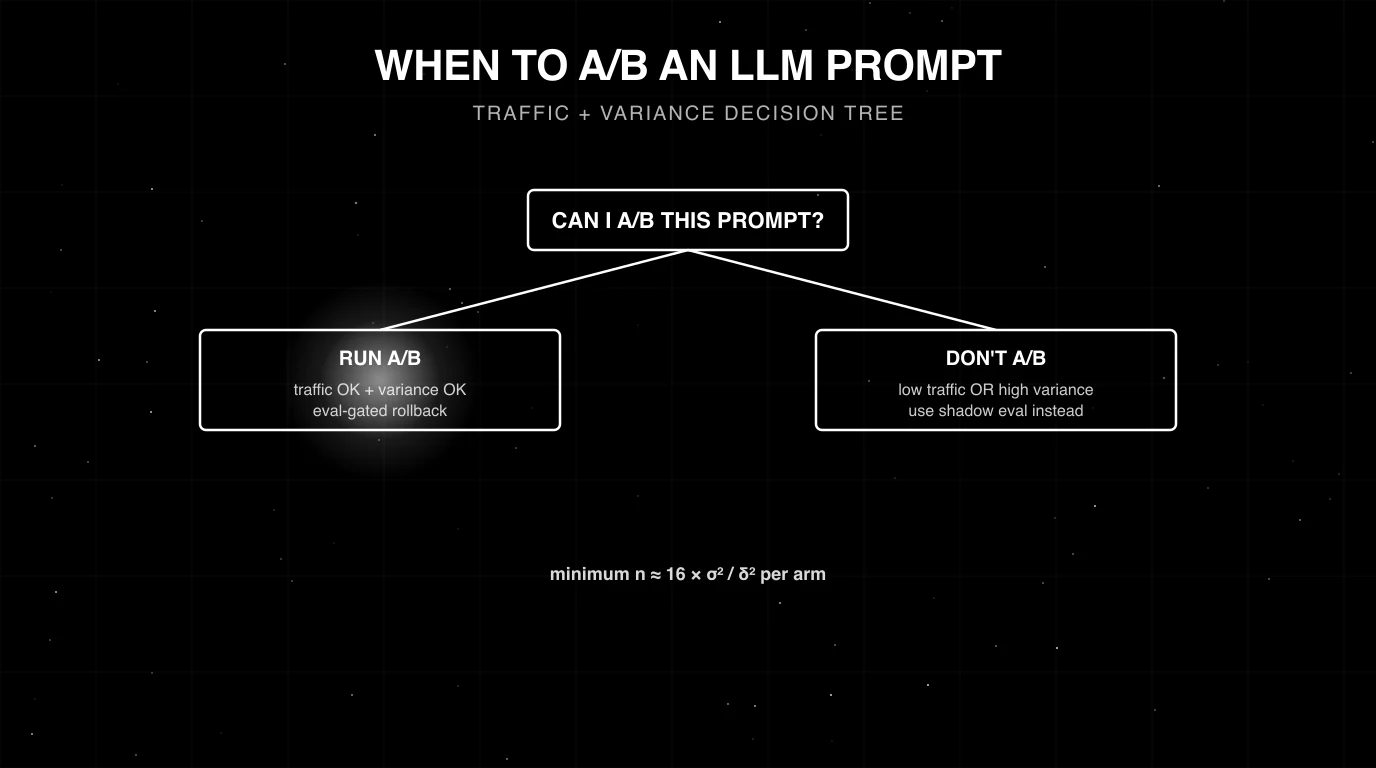

Practice 2: Run a power analysis

For a binary primary metric (pass/fail rubric), the standard formula at alpha=0.05 and 80% power is:

n_per_arm ≈ 16 × p × (1 - p) / delta²

where p is the baseline rate and delta is the minimum effect size you want to detect. Worked example: baseline pass rate 70%, target detectable lift 3 percentage points (from 70 to 73):

n_per_arm ≈ 16 × 0.70 × 0.30 / 0.03² = 16 × 0.21 / 0.0009 ≈ 3,733

You need ~3,700 requests per arm. For a baseline at 90% wanting to detect a 1-point regression: 16 × 0.90 × 0.10 / 0.01² = 14,400 per arm. Smaller effect sizes need vastly more samples. For a continuous metric (1-5 score), substitute σ² for p × (1-p): n_per_arm ≈ 16 × σ² / delta².
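The rule-of-thumb formula is easy to script so that every experiment proposal carries its own sample-size number. The sketch below is a direct transcription of the formula above. The function names and the `days_to_fill` helper are illustrative assumptions, and the helper assumes traffic is steady from day to day.

```python
import math


def n_per_arm_binary(p: float, delta: float) -> int:
    """Requests per arm for a pass/fail metric (alpha=0.05, 80% power rule of thumb)."""
    n = 16 * p * (1 - p) / delta ** 2
    # Round up to a whole request; the small tolerance absorbs floating-point noise.
    return math.ceil(n - 1e-9)


def n_per_arm_continuous(sigma: float, delta: float) -> int:
    """Same rule with the score variance sigma**2 in place of p * (1 - p)."""
    n = 16 * sigma ** 2 / delta ** 2
    return math.ceil(n - 1e-9)


def days_to_fill(n_per_arm: int, daily_requests: int, smaller_arm_share: float = 0.5) -> float:
    """Days until the smaller arm reaches n_per_arm, assuming steady daily traffic."""
    return n_per_arm / (daily_requests * smaller_arm_share)


if __name__ == "__main__":
    n = n_per_arm_binary(p=0.70, delta=0.03)
    print("requests per arm:", n)
    print("days at 1,000 requests/day, 50/50 split:", round(days_to_fill(n, 1000), 1))
```

Because the helper rounds up, the 70%-baseline example yields 3,734 rather than the 3,733 shown in the worked arithmetic. At 1,000 requests per day on a 50/50 split, that route would need a little over seven days.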

The pragmatic rule: if your route does not get the required sample size in a 7-day window, A/B is the wrong tool. Use shadow eval instead.

For an interactive calculator and the underlying derivation, see Evan Miller’s sample size calculator.

Practice 3: Calibrate the judge

If the primary metric uses LLM-as-judge scoring, the judge’s variance enters the analysis. An uncalibrated judge with kappa below 0.6 against human labels can flip the A/B result on noise alone.

The calibration pattern:

- Hand-label 100-300 examples that span the rubric’s failure modes.

- Run the judge against the same examples.

- Compute Cohen’s kappa or accuracy against the human labels.

- Below 0.6: the judge is too noisy for an A/B test. Pick a stronger judge or sharpen the rubric.

- 0.6-0.85: the judge is good enough as a first filter; pair with periodic human spot-checks.

- Above 0.85: the judge can carry weight in production decisions.
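The agreement check in the steps above can be computed without any dependencies. This is a minimal sketch of Cohen's kappa for two label lists. The label values and the function name are assumptions for illustration; a statistics library would serve equally well.

```python
from collections import Counter
from typing import Hashable, Sequence


def cohen_kappa(human: Sequence[Hashable], judge: Sequence[Hashable]) -> float:
    """Agreement between human labels and judge labels, corrected for chance."""
    if len(human) != len(judge) or len(human) == 0:
        raise ValueError("need two non-empty label lists of equal length")
    n = len(human)
    # Observed agreement: fraction of examples where both raters gave the same label.
    observed = sum(h == j for h, j in zip(human, judge)) / n
    # Expected agreement if both raters labelled at random with their own label frequencies.
    human_counts, judge_counts = Counter(human), Counter(judge)
    expected = sum(human_counts[label] * judge_counts[label] for label in human_counts) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used one identical label throughout
    return (observed - expected) / (1.0 - expected)


if __name__ == "__main__":
    human_labels = ["pass", "pass", "fail", "fail"]
    judge_labels = ["pass", "fail", "fail", "fail"]
    print("kappa:", cohen_kappa(human_labels, judge_labels))
```

In this toy example the raters agree on three of four items, yet kappa is only 0.5 because half of that agreement is expected by chance. That gap is why kappa is a stricter bar than raw accuracy.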

Pair-wise judging (the judge sees both A and B for the same input and picks a winner) usually has higher signal than independent scoring because the judge anchors on the comparison. For depth, see LLM as Judge Best Practices in 2026 and LLM Judge Models in 2026.

Practice 4: Cohort-stable hashing

A user assigned to arm A on request 1 must be in arm A on request 2. Otherwise the comparison is polluted by users seeing both arms.

The pattern: hash a stable identifier (user id, session id, tenant id) modulo the bucket count, route by hash. LaunchDarkly, Statsig, GrowthBook, and FutureAGI’s gateway all ship cohort-stable hashing. Avoid random per-request assignment for stateful experiences.

For multi-tenant SaaS the choice between user-level and tenant-level cohorts matters: tenant-level prevents intra-tenant inconsistency (colleagues see the same agent behavior); user-level reaches sample size faster on small-tenant routes. Pick by what the experiment requires.
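For teams without a feature-flag tool in the path, the hashing pattern is small enough to sketch directly. The example below is an assumption-laden illustration, not how any of the named vendors implement it: `assign_arm`, the experiment name, and the 100-bucket layout are all hypothetical. Pass a user id or a tenant id as `unit_id` depending on the cohort level you chose.

```python
import hashlib


def assign_arm(unit_id: str, experiment: str, new_arm_percent: int = 10) -> str:
    """Deterministically map a stable id to an arm; same id always gets the same arm."""
    # Salting with the experiment name keeps assignments independent across experiments.
    digest = hashlib.sha256(f"{experiment}:{unit_id}".encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 100
    # Buckets below the cutoff go to the new arm, so raising the cutoff only adds units.
    return "new" if bucket < new_arm_percent else "incumbent"


if __name__ == "__main__":
    for uid in ("user-1", "user-2", "user-3"):
        print(uid, assign_arm(uid, "groundedness-prompt-v2", new_arm_percent=10))
```

Because the cutoff is a threshold on a fixed bucket, ramping from 10% to 25% keeps every unit that was already on the new arm there. Nobody flips back mid-ramp.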

Practice 5: Wire eval-gated rollback

The unit of safe rollout is per-cohort A/B with eval-gated rollback. The pattern:

- The new arm receives 5-50% of cohort traffic (start small, ramp).

- An online evaluator scores every request on the new arm with the same rubric used in the offline eval.

- A rolling window (15 minutes to 1 hour) computes per-arm rubric pass rates.

- If the new arm's pass rate on any monitored rubric drops by more than the threshold (typically 1.5-2x the noise floor of that rubric), the gateway reverts the cohort to the incumbent.

- An alert fires; the on-call investigates after the auto-revert.

Without rollback, regressions surface in user complaints. With rollback, regressions surface in minutes. The rollback is automatic; the investigation is human.
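The rolling-window check at the heart of this pattern can be sketched as a small in-memory class. Everything here is an assumption for illustration: the `RollbackGate` name, the fixed baseline pass rate, and the `min_samples` guard are hypothetical, and a real gateway would persist state and run one gate per rubric.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import Deque, Tuple


@dataclass
class RollbackGate:
    baseline_pass_rate: float      # incumbent pass rate for this rubric
    max_drop: float                # allowed drop, e.g. 1.5-2x the rubric's noise floor
    window_seconds: float = 900.0  # 15-minute rolling window
    min_samples: int = 50          # avoid tripping on a handful of requests
    _events: Deque[Tuple[float, bool]] = field(default_factory=deque)

    def record(self, timestamp: float, passed: bool) -> bool:
        """Record one scored request on the new arm; return True if the cohort should revert."""
        self._events.append((timestamp, passed))
        # Drop events that have aged out of the rolling window.
        cutoff = timestamp - self.window_seconds
        while self._events and self._events[0][0] < cutoff:
            self._events.popleft()
        if len(self._events) < self.min_samples:
            return False
        pass_rate = sum(1 for _, ok in self._events if ok) / len(self._events)
        return pass_rate < self.baseline_pass_rate - self.max_drop


if __name__ == "__main__":
    gate = RollbackGate(baseline_pass_rate=0.90, max_drop=0.05, min_samples=10)
    outcomes = [True] * 20 + [False] * 5
    for t, ok in enumerate(outcomes):
        if gate.record(float(t), ok):
            print(f"revert triggered at request {t}")
            break
```

The caller supplies timestamps, which keeps the class easy to test. In production the revert signal would flip the flag and page the on-call, in that order.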

For the deployment context, see LLM Deployment Best Practices in 2026.

Practice 6: Pair offline and online A/B

Offline A/B compares prompt variants against a held-out eval set. Online A/B compares them against production traffic. Both are needed.

| Layer | Where it runs | What it catches | What it misses |
|---|---|---|---|
| Offline | CI / eval suite | Schema regressions, rubric pass-rate drops, deterministic failures | Distribution shift, real-traffic edge cases, user-visible signals |
| Online | Production cohort | Real-traffic regressions, user-feedback drift | Pre-merge mistakes; it only sees a regression after it has reached production |

The pattern: offline first as a CI gate (a PR that drops rubric pass-rate below threshold blocks). Online second on the rollout (the change ships to a small cohort, rubrics monitor, rollback fires on regression).
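The offline half of this pairing reduces to a pass-rate comparison that a CI job can turn into an exit code. The sketch below assumes the eval suite has already produced a list of per-example pass/fail results. The function name and the 2-point default tolerance are illustrative, not a recommendation.

```python
from typing import Sequence


def offline_gate_exit_code(
    candidate_passes: Sequence[bool],
    baseline_pass_rate: float,
    max_drop: float = 0.02,
) -> int:
    """Return 0 if the candidate prompt may merge, 1 if the PR should be blocked."""
    if not candidate_passes:
        return 1  # no eval results is treated as a failure, not a pass
    pass_rate = sum(candidate_passes) / len(candidate_passes)
    return 0 if pass_rate >= baseline_pass_rate - max_drop else 1


if __name__ == "__main__":
    # Hypothetical run: 88 of 100 held-out examples pass against a 92% baseline.
    results = [True] * 88 + [False] * 12
    code = offline_gate_exit_code(results, baseline_pass_rate=0.92)
    print("offline gate:", "pass" if code == 0 else "blocked")
```

A CI step would pass the returned value to `sys.exit` so that a blocked result fails the pipeline.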

Practice 7: Shadow eval when traffic is too low

For routes that do not reach statistical significance in a reasonable window, run the new prompt in parallel without serving its output. Production traffic flows to the incumbent; a shadow worker also runs the new prompt against the same input and scores both. Compare scores offline.

Benefits: zero user risk, accumulates evaluation data on real traffic distribution, ready for rollout when the comparison is conclusive. Tradeoff: doubles the per-request cost on the routes you shadow.
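The shadow pattern can be sketched with plain callables standing in for the two prompts and the evaluator. All names here are hypothetical, and the example runs the shadow call inline for clarity; a production version would more likely hand it to a background worker so it adds no latency to the served response.

```python
from typing import Callable, Dict, List, Tuple

ScoreLog = List[Tuple[float, float]]  # (incumbent score, candidate score) per request


def serve_with_shadow(
    user_input: str,
    incumbent: Callable[[str], str],
    candidate: Callable[[str], str],
    score: Callable[[str, str], float],
    log: ScoreLog,
) -> str:
    """Serve the incumbent's output; run and score the candidate without serving it."""
    served = incumbent(user_input)
    try:
        shadow = candidate(user_input)
        log.append((score(user_input, served), score(user_input, shadow)))
    except Exception:
        pass  # a shadow failure must never affect the user-facing response
    return served


def summarize(log: ScoreLog) -> Dict[str, int]:
    """Paired comparison: how often the candidate scored above or below the incumbent."""
    wins = sum(1 for inc, cand in log if cand > inc)
    losses = sum(1 for inc, cand in log if cand < inc)
    return {"pairs": len(log), "candidate_wins": wins,
            "candidate_losses": losses, "ties": len(log) - wins - losses}


if __name__ == "__main__":
    log: ScoreLog = []
    reply = serve_with_shadow(
        "Where is my refund?",
        incumbent=lambda q: "short answer",
        candidate=lambda q: "a longer, more detailed answer",
        score=lambda q, a: float(len(a)),  # toy scorer for the demo only
        log=log,
    )
    print(reply, summarize(log))
```

Scoring both outputs on the same input gives a paired comparison, which usually needs fewer requests than comparing two independent arms.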

Most platforms (FutureAGI, LangSmith, Phoenix, Galileo, Braintrust) support shadow eval natively. For depth, see What is LLM Experimentation.

Common A/B testing mistakes on LLM prompts

- Peeking at the test mid-flight and stopping early. Stopping when the curve “looks good” inflates false-positive rates. Pre-register the analysis date.

- Multi-metric scorecards as the primary. Five primary metrics is a 23% false-positive rate. Pick one.

- Uncalibrated judge as the scorer. A judge with kappa below 0.6 can flip the result on noise.

- Mid-session arm flips. A user who sees arm A on request 1 and arm B on request 2 contaminates both arms. Use cohort-stable hashing.

- No per-arm latency monitor. A new prompt that adds 800ms to p99 latency is a regression even if rubric pass-rate is unchanged.

- Running A/B on a 100-request-per-day route. No power, no result, two weeks wasted. Use shadow eval.

- Skipping the offline gate. Offline catches what offline can catch (cheap). Skipping it means online finds the regressions.

- No rollback. A 100% rollout with no per-cohort monitor is the change-management equivalent of pushing to production from a Slack thread.

- Treating the A/B as the only validation. A/B compares two arms; it does not compare against the canonical eval set. Run both.

What changed in LLM prompt A/B testing in 2026

| Date | Event | Why it matters |
|---|---|---|
| 2024 | Major eval platforms added pair-wise judging | Higher-signal scoring than independent rubric grades |
| 2025 | LaunchDarkly, Statsig, GrowthBook added LLM-eval integrations | Standard feature-flag tools now consume eval scores natively |
| 2025 | FutureAGI Agent Command Center shipped per-cohort eval-gated rollback | Gateway-level rollback closed the loop on prompt rollout |
| 2026 | Distilled judge models (Galileo Luna-2, FutureAGI turing_flash) reached production scale | Online scoring at 5-20% traffic became cost-feasible |
| 2026 | OTel GenAI semantic conventions adopted broadly | Per-arm trace attributes (prompt version id, cohort id) became standard |

How to actually run an A/B test on an LLM prompt in 2026

- Pick the primary metric. One. Match it to the failure mode the change targets.

- Run a power analysis. Compute n_per_arm; check if the route can deliver in a 7-day window.

- If yes, calibrate the judge. 100-300 hand-labelled examples; require kappa >= 0.6.

- Run the offline A/B as a CI gate. Block the PR on rubric regression.

- Wire cohort-stable hashing. User-level or tenant-level by experiment shape.

- Start the online A/B at 10/90 (new/incumbent). Monitor per-arm rubric pass-rate on a 1-hour rolling window.

- Wire eval-gated rollback. Below-threshold drop reverts the cohort automatically.

- Ramp. 10/90 -> 25/75 -> 50/50 over hours-to-days as confidence accumulates.

- Analyse on the pre-registered date. If statistical significance and practical effect size both clear the threshold, ship; otherwise roll back or iterate.

- If the route cannot deliver sample size, run shadow eval instead.
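For a binary primary metric, the final analysis step in the list above can be sketched as a two-proportion z-test plus a practical-effect check. This is one common choice and an assumption on our part, not a method this guide prescribes. The function names, the alpha of 0.05, and the minimum lift are all illustrative and should come from the pre-registration.

```python
import math


def two_proportion_p_value(pass_a: int, n_a: int, pass_b: int, n_b: int) -> float:
    """Two-sided p-value for the difference in pass rates between arm A and arm B."""
    pooled = (pass_a + pass_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 1.0  # both arms passed (or failed) every request; no detectable difference
    z = (pass_b / n_b - pass_a / n_a) / se
    # Two-sided tail probability of the standard normal distribution.
    return math.erfc(abs(z) / math.sqrt(2))


def decide(pass_a: int, n_a: int, pass_b: int, n_b: int,
           alpha: float = 0.05, min_lift: float = 0.03) -> str:
    """Ship only if the lift is both statistically significant and practically large enough."""
    lift = pass_b / n_b - pass_a / n_a
    p_value = two_proportion_p_value(pass_a, n_a, pass_b, n_b)
    return "ship" if (p_value < alpha and lift >= min_lift) else "rollback or iterate"


if __name__ == "__main__":
    # Hypothetical counts: incumbent (A) vs new prompt (B).
    print(decide(pass_a=700, n_a=1000, pass_b=760, n_b=1000))
```

Requiring both conditions guards against shipping a statistically significant lift that is too small to matter.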

For the broader deployment context, see LLM Deployment Best Practices in 2026 and CI/CD for AI Agents Best Practices.

Sources

- Kohavi, Tang, Xu - Trustworthy Online Controlled Experiments

- Braintrust A/B testing for LLM prompts

- Evan Miller’s sample size calculator

- LaunchDarkly experimentation docs

- Statsig docs

- GrowthBook docs

- FutureAGI Agent Command Center

- OpenTelemetry GenAI semantic conventions

- LangSmith experiments docs

Series cross-link

Read next: What is Prompt Versioning?, Best AI Prompt Management Tools in 2026, LLM Deployment Best Practices in 2026, CI/CD for AI Agents Best Practices

