Confident-AI Alternatives in 2026: 5 LLM Eval Platforms Compared

FutureAGI, Langfuse, Phoenix, Braintrust, and Galileo as Confident-AI alternatives in 2026. Pricing, OSS license, eval depth, and gaps for production teams.


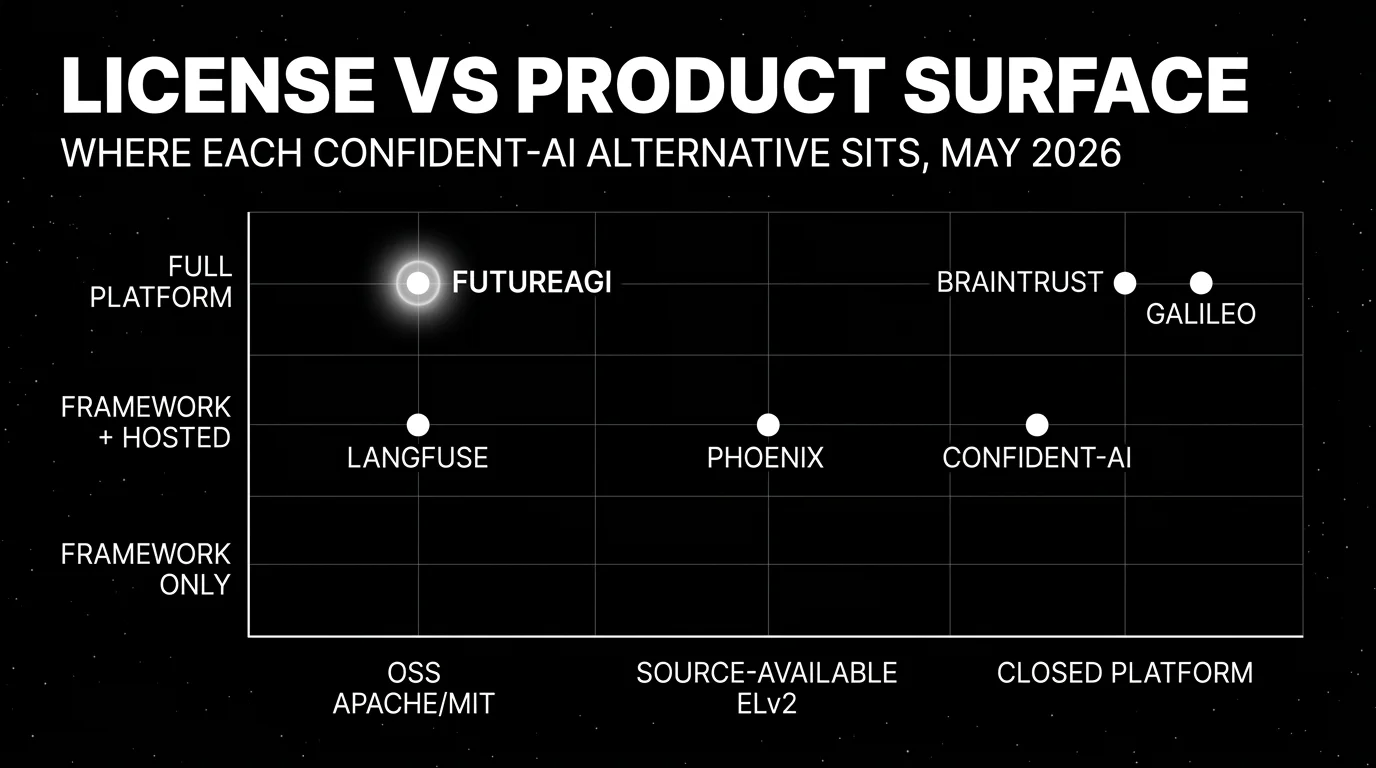

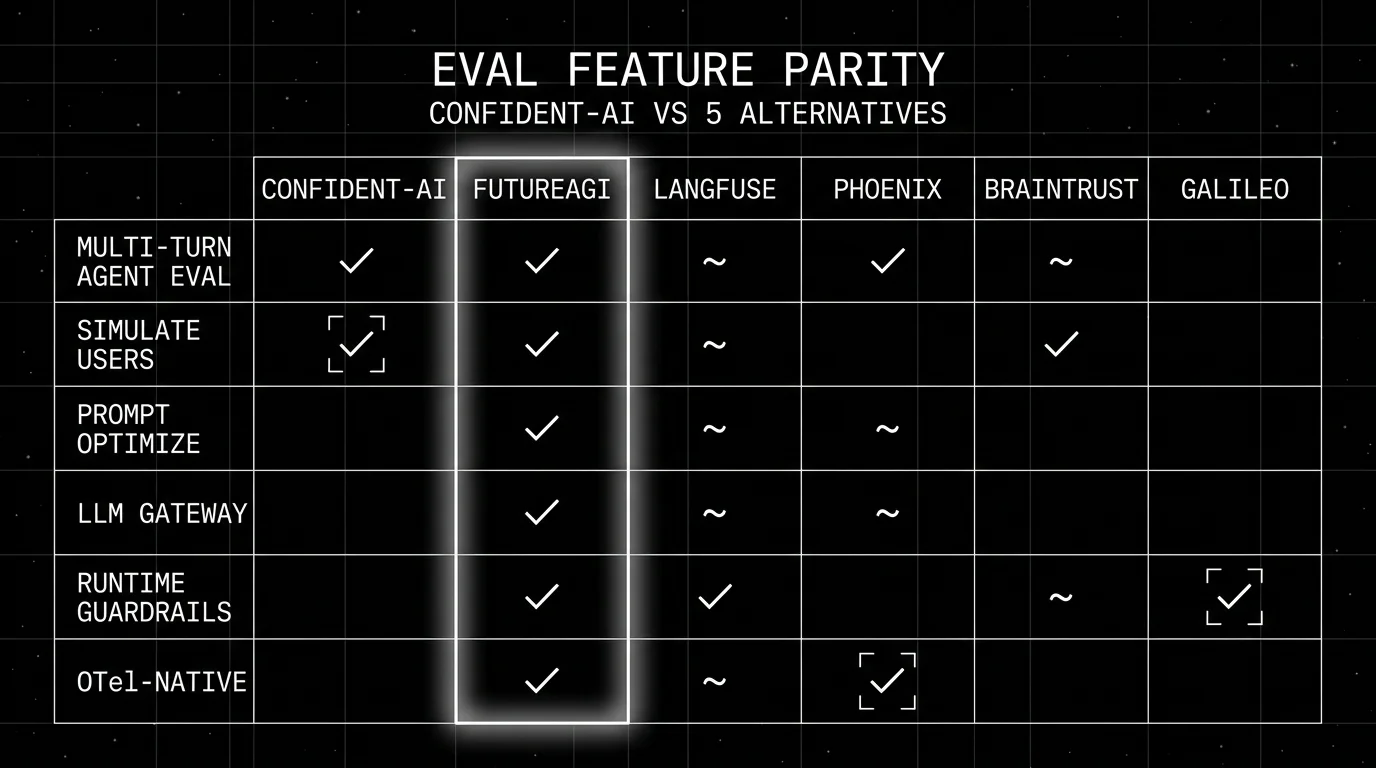

You are probably here because Confident-AI is on the procurement shortlist and the pricing model just landed in budget review. Per-user fees are not the only friction. You may also need self-hosting before the Team tier, an integrated gateway and guardrail product, prompt optimization tied to production traces, or eval primitives that work outside the DeepEval Python entry point. This guide compares the five strongest alternatives. The split between the open source DeepEval framework and the Confident-AI SaaS stays explicit throughout, since the real procurement question is usually about the platform tier, not the library.

TL;DR: Best Confident-AI alternative per use case

| Use case | Best pick | Why (one phrase) | Pricing | OSS |
|---|---|---|---|---|
| Unified eval, observe, simulate, optimize, gateway, guard | FutureAGI | One loop across pre-prod and prod | Free + usage from $2/GB storage | Apache 2.0 |
| Self-hosted LLM observability without per-user fees | Langfuse | Mature traces, prompts, datasets, evals | Hobby free, Core $29/mo, Pro $199/mo | MIT core, enterprise dirs separate |
| OTel-native tracing and evals | Arize Phoenix | Open standards, source available | Phoenix free self-hosted, AX Pro $50/mo | Elastic License 2.0 |
| Closed-loop SaaS with strong dev evals | Braintrust | Polished experiments, scorers, CI gate | Starter free, Pro $249/mo | Closed platform |
| Enterprise risk, compliance, and GenAI assurance | Galileo | Research-backed metrics + guardrails | Free 5,000 traces, Pro $100/mo, Enterprise custom | Closed platform |

If you only read one row: FutureAGI when the goal is one loop, Langfuse when self-hosting is non-negotiable, Galileo when an enterprise risk team owns the spend. For deeper reads, see our LLM Testing in Production playbook, the eval SDK docs, and the traceAI tracing layer.

Who Confident-AI is and where it falls short

Confident-AI is the commercial product on top of DeepEval. DeepEval is the Apache 2.0 Python evaluation framework with about 15,000 GitHub stars, a metric library that covers G-Eval, DAG, RAG metrics (Faithfulness, Answer Relevancy, Contextual Recall and Precision), agent metrics (Task Completion, Tool Correctness, Argument Correctness, Step Efficiency, Plan Adherence, Plan Quality), conversational metrics (Knowledge Retention, Role Adherence, Conversation Completeness, Turn Relevancy), safety metrics, and multimodal metrics. Recent v3.9.x releases added agent metrics and multi-turn synthetic golden generation.

The Confident-AI platform layers traces, datasets, online evaluation, prompt management with Git-based branching, multi-turn simulations, red-teaming, workflow automation, and CI/CD deployment gates on top. The homepage pitches “the AI quality platform without the engineering overhead” and emphasizes that the platform absorbs the work of building eval infrastructure, with no-code surfaces for product owners and QA teams alongside the SDK for engineers.

Pricing matters here because the model is per-user with metered tracing. The Confident-AI pricing page lists Free at $0 with 5 test runs weekly, 1 GB-month tracing, and 1-week retention. Starter is $19.99 per user per month with 1 GB-month tracing and 5,000 online evaluation metric runs. Premium is $49.99 per user per month with 15 GB-months and 10,000 online evals plus chat simulations, workflow automation, and real-time alerting. Team is custom with 10 users, 75 GB-months, 50,000 online evals, Git-based prompt branching, SSO, SOC 2, and HIPAA. Enterprise is custom with on-prem deployment, 24/7 support, and penetration testing.
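To see how per-user pricing grows with headcount, here is a minimal Python sketch that multiplies the Starter and Premium list prices quoted above by seat count. It assumes every seat is billed at list price, ignores tracing overage and any discounts, and leaves out Team and Enterprise because they are custom-priced; the helper name is illustrative.

```python
# Per-seat list prices for the Confident-AI tiers quoted above (USD per user per month).
# Assumption: every seat pays list price; tracing overage and discounts are not modeled.
PER_USER_PRICE = {"starter": 19.99, "premium": 49.99}


def per_user_monthly_cost(tier: str, seats: int) -> float:
    """Monthly list cost for `seats` users on a per-user tier (illustrative helper)."""
    if seats < 0:
        raise ValueError("seats must be non-negative")
    return round(PER_USER_PRICE[tier] * seats, 2)


if __name__ == "__main__":
    for seats in (5, 15, 30):
        starter = per_user_monthly_cost("starter", seats)
        premium = per_user_monthly_cost("premium", seats)
        print(f"{seats:>2} seats: Starter ${starter:,.2f}/mo, Premium ${premium:,.2f}/mo")
```

At 30 seats the sketch comes to $599.70 per month on Starter and $1,499.70 on Premium before any tracing overage, which is the headcount effect that pushes cross-functional teams to look at usage-priced tools.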

Be fair about the strengths. The DeepEval-to-Confident-AI upgrade path is the smoothest in the category for teams already writing pytest evals. The metric library is research-grounded, the conversation simulator works, and the no-code surfaces reduce the friction of pulling product managers into eval review. Confident-AI also runs cleanly inside CI with deployment gates that can block a release on metric regressions.
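A deployment gate of this kind boils down to comparing a candidate run's metric scores against a baseline and failing the CI step on a regression. The Python sketch below is vendor-neutral and is not Confident-AI's or DeepEval's API; the score dictionaries, metric names, and the 0.02 tolerance are hypothetical.

```python
from statistics import mean


def find_regressions(baseline: dict, candidate: dict, tolerance: float = 0.02) -> dict:
    """Return metrics whose mean score dropped by more than `tolerance`.

    baseline/candidate map metric name -> list of per-test-case scores in [0, 1].
    A metric missing from the candidate run is reported with a value of None.
    """
    regressions = {}
    for metric, base_scores in baseline.items():
        cand_scores = candidate.get(metric)
        if not cand_scores:
            regressions[metric] = None  # missing metric counts as a failure
            continue
        delta = mean(cand_scores) - mean(base_scores)
        if delta < -tolerance:
            regressions[metric] = round(delta, 4)
    return regressions


def gate(baseline: dict, candidate: dict, tolerance: float = 0.02) -> int:
    """Exit code for a CI step: 0 allows the release, 1 blocks it."""
    regressions = find_regressions(baseline, candidate, tolerance)
    for metric, delta in regressions.items():
        print(f"BLOCK {metric}: {'missing' if delta is None else delta}")
    return 1 if regressions else 0


if __name__ == "__main__":
    baseline = {"faithfulness": [0.9, 0.85, 0.95], "answer_relevancy": [0.8, 0.82, 0.78]}
    candidate = {"faithfulness": [0.7, 0.8, 0.75], "answer_relevancy": [0.81, 0.8, 0.8]}
    print("exit code:", gate(baseline, candidate))
```

In a real pipeline the returned code would be passed to the CI runner so that a faithfulness drop like the one in the demo blocks the merge.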

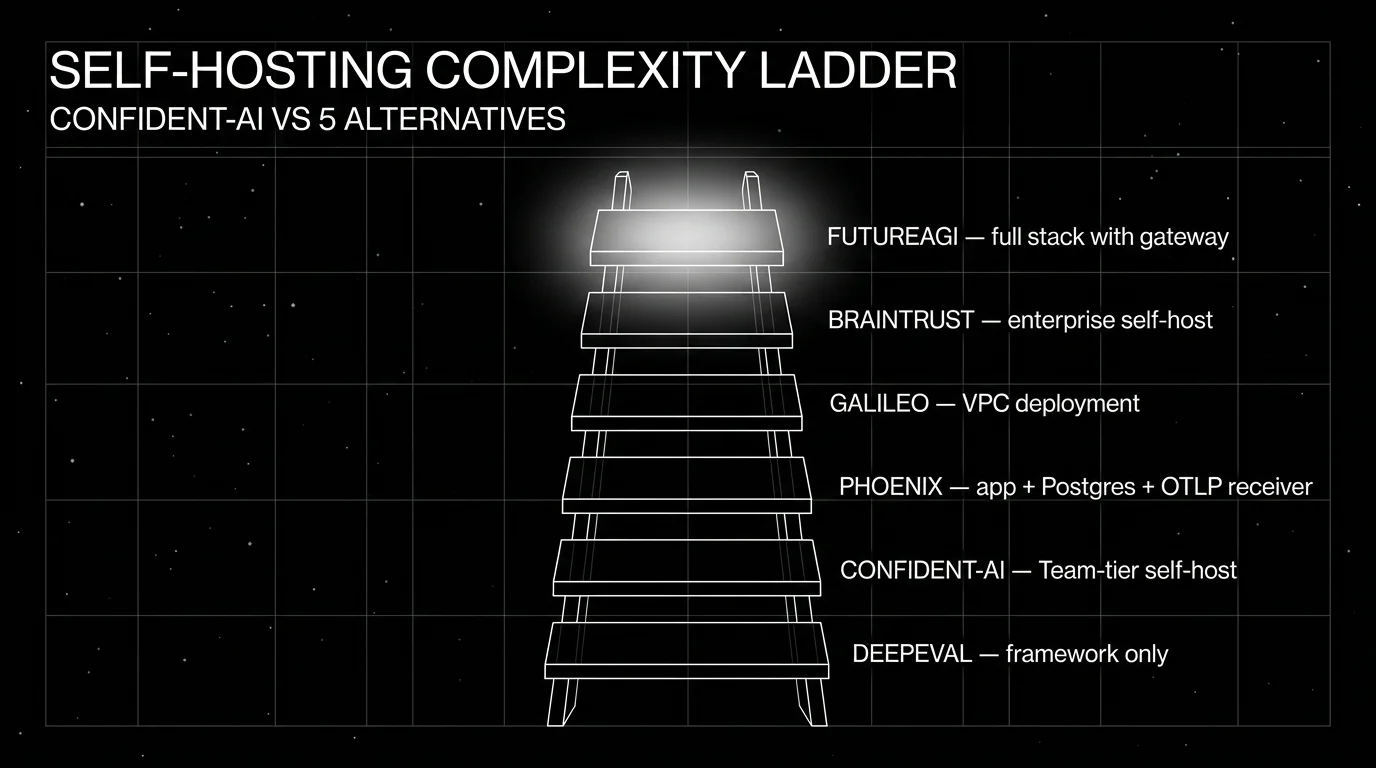

Where teams start looking elsewhere is rarely a single feature gap. It is usually one of three patterns. First, per-user pricing scales poorly when a cross-functional team of 30 people wants read access to traces and dashboards. Second, self-hosting is gated to the Team tier, which is custom-priced and out of reach for early teams that already have a security review on the OSS path. Third, the platform stops at evals, traces, datasets, and simulations: gateway routing, cache controls, runtime guardrails, prompt optimization, and span-attached BYOK judge calls live in adjacent tools, which forces a multi-tool stack.

The 5 Confident-AI alternatives compared

1. FutureAGI: Best for unified eval + observe + simulate + optimize + gateway + guard

Open source. Self-hostable. Hosted cloud option.

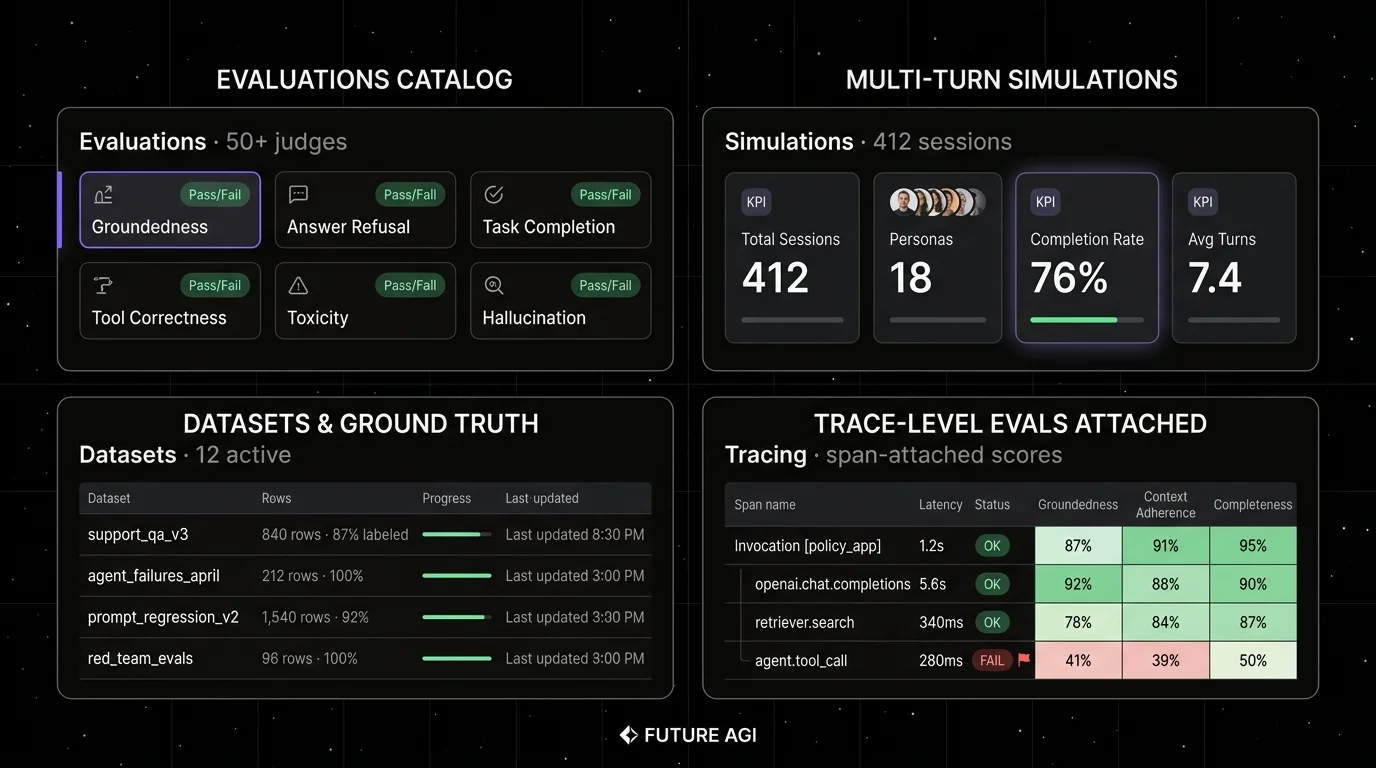

FutureAGI is the strongest Confident-AI alternative when the gap is platform breadth, not eval depth. Confident-AI handles evals, traces, datasets, and simulations. FutureAGI adds gateway routing, runtime guardrails, prompt optimization, and BYOK LLM-as-judge to the same loop. The pitch is one runtime where simulate, evaluate, observe, gate, and optimize close on each other without manual exports. A failing simulated trace becomes a labeled dataset row. A live span carries the same eval score that pre-prod used. A failing production span flows into the optimizer. Only versions that hold the eval contract reach the Agent Command Center gateway, where guardrails and routing enforce the same shape in production.
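The handoff where a failing trace becomes a labeled dataset row can be sketched in a vendor-neutral way. This is not FutureAGI's API: the `Span` shape, field names, label format, and threshold below are hypothetical, and real OTel or traceAI spans carry far more attributes.

```python
from dataclasses import dataclass, field


@dataclass
class Span:
    # Hypothetical minimal span shape for illustration only.
    trace_id: str
    prompt: str
    completion: str
    scores: dict = field(default_factory=dict)  # metric name -> score in [0, 1]


def failing_spans_to_dataset(spans: list, metric: str, threshold: float) -> list:
    """Turn spans that score below `threshold` on `metric` into regression-test rows."""
    rows = []
    for span in spans:
        score = span.scores.get(metric)
        if score is not None and score < threshold:  # unscored spans are skipped
            rows.append({
                "input": span.prompt,
                "actual_output": span.completion,
                "label": f"{metric}<{threshold}",
                "source_trace": span.trace_id,  # keeps the link back to production
            })
    return rows


if __name__ == "__main__":
    spans = [
        Span("t1", "What is our refund window?", "90 days", {"faithfulness": 0.4}),
        Span("t2", "Do you ship to Canada?", "Yes", {"faithfulness": 0.95}),
    ]
    print(failing_spans_to_dataset(spans, "faithfulness", 0.7))
```

Keeping `source_trace` on each row is the design choice that matters: it lets a later eval failure be traced back to the production span that produced it.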

Architecture: what closes, not what ships. The repo is Apache 2.0 and self-hostable. The eval surface includes 50+ first-party metrics that run locally without API credentials, Turing models for cloud judges, BYOK LLM-as-judge through any LiteLLM model, plus 18+ runtime guardrails. The plumbing under it (Python with Django and Channels, a Go gateway, React/Vite, Postgres, ClickHouse, Redis, RabbitMQ, Temporal, traceAI OpenTelemetry across Python, TypeScript, Java, and C#) exists so the five handoffs do not need glue code. Compared to the Confident-AI per-user model, FutureAGI prices on usage, with unlimited team members and unlimited projects on every tier.

Pricing: FutureAGI starts at $0/month. The free tier includes 50 GB tracing storage, 2,000 AI credits, 100,000 gateway requests, 100,000 cache hits, 1 million text simulation tokens, 60 voice simulation minutes, unlimited datasets, unlimited prompts, unlimited dashboards, 3 annotation queues, 3 monitors, unlimited team members, and unlimited projects. Usage after the free tier starts at $2/GB storage, $10 per 1,000 AI credits, $5 per 100,000 gateway requests, $2 per 1 million text simulation tokens, and $0.08 per voice minute. Boost is $250/mo, Scale is $750/mo, and Enterprise starts at $2,000/mo.
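Because this model prices on usage rather than seats, a budget estimate depends only on consumption. A minimal sketch, assuming overage is billed linearly per unit above the free allowances quoted above; block rounding, the Boost, Scale, and Enterprise subscriptions, and taxes are not modeled, and the dictionary keys and function name are hypothetical.

```python
# Free-tier allowances and overage rates as quoted above.
# Assumption: linear per-unit billing above the allowance; verify on the live pricing page.
FREE = {
    "storage_gb": 50,
    "ai_credits": 2_000,
    "gateway_requests": 100_000,
    "text_sim_tokens": 1_000_000,
    "voice_minutes": 60,
}
RATE_PER_UNIT = {  # USD per single unit
    "storage_gb": 2.0,
    "ai_credits": 10.0 / 1_000,
    "gateway_requests": 5.0 / 100_000,
    "text_sim_tokens": 2.0 / 1_000_000,
    "voice_minutes": 0.08,
}


def usage_overage(usage: dict) -> float:
    """Monthly overage in USD for the given usage; note there is no seat-count input."""
    total = 0.0
    for key, used in usage.items():
        billable = max(0, used - FREE[key])
        total += billable * RATE_PER_UNIT[key]
    return round(total, 2)


if __name__ == "__main__":
    usage = {"storage_gb": 120, "ai_credits": 5_000, "gateway_requests": 600_000,
             "text_sim_tokens": 1_000_000, "voice_minutes": 160}
    print(f"overage: ${usage_overage(usage):,.2f}")
```

For the demo usage this works out to $140 + $30 + $25 + $0 + $8 = $203 of overage, and team size never enters the calculation.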

Best for: Pick FutureAGI when the team has 15+ people who need read access to evals and traces without paying per seat, when production failures need to close back into pre-prod tests, or when the stack already mixes Python, TypeScript, Java, and C# services and a single OTel-native runtime matters more than DeepEval’s pytest path.

Skip if: Skip FutureAGI if your immediate need is a narrow SDK eval runner you can run with pytest on a laptop. DeepEval is hard to beat there. The full platform has more moving parts than DeepEval. If you do not want to operate Docker Compose, ClickHouse, queues, and OTel pipelines, use the hosted cloud or pick a smaller point tool. Sanity-check procurement maturity if reference logos and audit certifications matter more than platform breadth.

2. Langfuse: Best for self-hosted LLM observability without per-user fees

Open source core. Self-hostable. Hosted cloud option.

Langfuse is the strongest OSS-first alternative when the constraint is self-hosting and the per-user pricing model on Confident-AI is the deal breaker. Langfuse Cloud Hobby is free with 50,000 units per month, Core is $29 per month flat, and self-hosting is supported on every tier. The trace, prompt, dataset, and eval surface is mature, the docs are clean, and the integrations cover OpenTelemetry, LangChain, LlamaIndex, OpenAI, and LiteLLM.

Architecture: Langfuse describes itself as an open source LLM engineering platform. Self-hosted deployment uses application containers, Postgres, ClickHouse, Redis or Valkey, and object storage. Prompt management ships versioning, deployment labels, and a Cursor plugin for bulk migration. Eval scores can be attached to traces, and datasets can be built directly from production trace replays.

Pricing: Langfuse Cloud Hobby is free with 50,000 units per month, 30 days data access, 2 users, and community support. Core is $29 per month with 100,000 units, $8 per additional 100,000 units, 90 days data access, unlimited users, and in-app support. Pro is $199 per month with 3 years data access, data retention management, unlimited annotation queues, higher rate limits, SOC 2 and ISO 27001 reports, and an optional Teams add-on at $300 per month. Enterprise is $2,499 per month.
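The Core tier's flat fee plus unit overage is simple to model. The figures above do not say whether extra units are billed pro rata or per started 100,000-unit block, so this minimal sketch exposes both as an assumption; how Langfuse counts a "unit" is not modeled, and the function name is hypothetical.

```python
import math

# Core tier figures as quoted above.
CORE_BASE_USD = 29.0
CORE_INCLUDED_UNITS = 100_000
CORE_BLOCK_UNITS = 100_000
CORE_BLOCK_USD = 8.0


def langfuse_core_monthly(units: int, pro_rata: bool = True) -> float:
    """Estimated Core cost in USD. `pro_rata=False` rounds overage up to whole blocks
    (an assumption; the billing granularity is not stated in the figures above)."""
    extra = max(0, units - CORE_INCLUDED_UNITS)
    blocks = extra / CORE_BLOCK_UNITS
    if not pro_rata:
        blocks = math.ceil(blocks)
    return round(CORE_BASE_USD + blocks * CORE_BLOCK_USD, 2)


if __name__ == "__main__":
    for units in (50_000, 150_000, 1_000_000):
        print(units, langfuse_core_monthly(units), langfuse_core_monthly(units, pro_rata=False))
```

At 1,000,000 units both assumptions give $101 per month ($29 plus nine extra blocks at $8), with unlimited users on the tier.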

Best for: Pick Langfuse if you need self-hosted tracing, prompt versioning, datasets, eval scores, human annotation, and OTel compatibility, and your platform team can operate the data plane. It pairs cleanly with DeepEval kept in CI, where Langfuse becomes the production system of record and DeepEval continues to drive pytest gates.

Skip if: Skip Langfuse if your gap is simulated users, voice evaluation, prompt optimization algorithms, or a gateway and guardrail product on the same surface. Read the license details before calling it “pure MIT” in procurement. The repo is MIT for non-enterprise paths, with enterprise directories handled separately.

3. Arize Phoenix: Best for OTel and OpenInference teams

Source available. Self-hostable. Phoenix Cloud and Arize AX paths exist.

Phoenix is the right alternative when open tracing standards drive the choice. It is OpenTelemetry-native and built on OpenInference, with auto-instrumentation across Python, TypeScript, and Java and integrations for LlamaIndex, LangChain, DSPy, Mastra, Vercel AI SDK, OpenAI, Bedrock, and Anthropic. If your team already invested in OTel for application telemetry, Phoenix lets you keep that decision and add LLM eval on top.

Architecture: Phoenix’s docs cover tracing, evaluation, prompt engineering, datasets, experiments, RBAC, API keys, data retention, and custom providers. The repo is actively maintained under Elastic License 2.0. Phoenix accepts traces over OTLP, and the homepage says it is fully self-hostable. Arize AX is the commercial product layered on top with monitors, online evals, dashboards, and Alyx, the in-product agent.

Pricing: Arize lists Phoenix as free for self-hosting, with trace volume, ingestion, projects, and retention managed by you. AX Free is SaaS with 25,000 spans per month, 1 GB ingestion, and 15 days retention. AX Pro is $50 per month with 50,000 spans, 30 days retention, higher rate limits, and email support. AX Enterprise is custom and adds dedicated support, SLA, SOC 2, HIPAA, training, data fabric, self-hosting add-on, data residency, and multi-region deployments.

Best for: Pick Phoenix if you want an OTel-native trace and eval workbench, you value open standards, or you already use Arize for ML observability. It is also a good lab for prompt and dataset workflows that need to stay close to Python and TypeScript client code.

Skip if: The catch is the license and the scope. Phoenix uses Elastic License 2.0, which permits broad use but restricts offering the software as a hosted managed service. Call it source available if your legal team uses OSI definitions. Skip Phoenix if your main requirement is gateway-first provider control, guardrail enforcement, or simulated user testing across voice and text.

4. Braintrust: Best for closed-loop SaaS evaluation

Closed platform. Hosted cloud or enterprise self-host.

Braintrust is the right alternative when the goal is a single SaaS that handles experiments, datasets, prompts, scorers, and CI gates with a polished UI. The Braintrust dev loop overlaps Confident-AI directly: scorers, online scoring, trace-to-dataset loops, prompt iteration, sandboxed agent evals, and a CI hook for pull request gating. The differentiation is the AI assistant Loop, the Braintrust gateway, and the wider observability surface that includes topics, dashboards, and human review.

Architecture: Braintrust’s docs list tracing, logs, topics, dashboards, human review, datasets, prompt management, playgrounds, experiments, remote evals, online scoring, functions, the Braintrust gateway, monitoring, automations, and self-hosting as part of the product. Recent changelog work covered Java auto-instrumentation, dataset snapshots, dataset environments, trace translation, cloud storage export, full-text search, subqueries, and sandboxed agent evals.

Pricing: Braintrust Starter is $0 per month with 1 GB processed data, 10,000 scores, 14 days retention, and unlimited users. Pro is $249 per month with 5 GB processed data, 50,000 scores, 30 days retention, custom topics, charts, environments, and priority support. Overage on Starter is $4/GB and $2.50 per 1,000 scores; on Pro it is $3/GB and $1.50 per 1,000 scores. Enterprise is custom and adds on-prem or hosted deployment.
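Since both tiers include unlimited users, the Starter-versus-Pro decision is mostly a volume question. A minimal sketch using the included quotas and overage rates quoted above, assuming linear per-GB and per-1,000-score billing; retention and feature differences (14 versus 30 days, custom topics, priority support) are not priced in, and the function names are hypothetical.

```python
def starter_cost(gb: float, scores: int) -> float:
    """Starter: $0 base, 1 GB and 10,000 scores included, $4/GB and $2.50 per 1,000 scores after."""
    return round(max(0.0, gb - 1) * 4.0 + max(0, scores - 10_000) / 1_000 * 2.50, 2)


def pro_cost(gb: float, scores: int) -> float:
    """Pro: $249 base, 5 GB and 50,000 scores included, $3/GB and $1.50 per 1,000 scores after."""
    return round(249.0 + max(0.0, gb - 5) * 3.0 + max(0, scores - 50_000) / 1_000 * 1.50, 2)


def cheaper_tier(gb: float, scores: int) -> tuple:
    """Return (tier, monthly USD) for whichever tier is cheaper at this volume."""
    starter, pro = starter_cost(gb, scores), pro_cost(gb, scores)
    return ("starter", starter) if starter <= pro else ("pro", pro)


if __name__ == "__main__":
    for gb, scores in ((3, 20_000), (20, 200_000)):
        print(gb, scores, cheaper_tier(gb, scores))
```

At 3 GB and 20,000 scores Starter costs $33 against Pro's $249; at 20 GB and 200,000 scores Starter reaches $551 while Pro comes to $519, so the crossover arrives well before enterprise scale.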

Best for: Pick Braintrust if you want a single closed-loop platform with strong dev ergonomics, you do not need open source control, and the budget supports the Pro or Enterprise tiers. The Braintrust pricing model is per-GB and per-score with unlimited users on Starter and Pro, which trades off well against Confident-AI’s per-user model.

Skip if: Skip Braintrust if open source control is non-negotiable, if pre-production voice simulation matters, or if your stack needs gateway routing, guardrails, and prompt optimization on the same surface. See Braintrust Alternatives for the deeper view.

5. Galileo: Best for enterprise risk and compliance buyers

Closed platform. Hosted SaaS, VPC, and on-premises deployment options.

Galileo is the right alternative when the buyer is an enterprise risk team or a chief AI officer who cares about compliance reports, runtime guardrails, and research-backed metrics with documented benchmarks. Galileo’s positioning includes Luna evaluation foundation models, ChainPoll for hallucination detection, agent reliability metrics, and runtime guardrails on the Enterprise tier.

Architecture: Galileo runs as hosted SaaS, VPC, or on-premises. It supports SDK and API ingestion, integrates with major LLM providers and frameworks, and includes dashboards, alerts, evaluation experiments, datasets, and red-teaming workflows. Enterprise adds real-time guardrails and low-latency inference servers.

Pricing: Galileo Free is $0 per month with 5,000 traces per month, unlimited users, and unlimited custom evals. Pro is $100 per month (billed yearly) with 50,000 traces per month, standard RBAC, advanced analytics, and Slack-based dedicated support. Enterprise is custom and adds unlimited scale, SSO, dedicated CSM, real-time guardrails, low-latency inference servers, hosted/VPC/on-prem, and 24/7 support.

Best for: Pick Galileo when the procurement owner is an enterprise risk function and the answer needs to include published benchmarks, audit-friendly reports, runtime guardrails, and a deployment model that includes on-prem. It is also a fit when the team values proprietary evaluation foundation models over BYOK judges.

Skip if: Skip Galileo if open source control matters, if the team is small enough that the hosted dev loop on Confident-AI or Braintrust is sufficient, or if the trace volume exceeds 50,000 per month and the next step is custom Enterprise pricing. See Galileo Alternatives for the deeper view.

Decision framework: Choose X if…

- Choose FutureAGI if your dominant workload is agent reliability across simulation, evals, traces, gateway routing, guardrails, and prompt optimization. Buying signal: cross-functional access on a flat usage model matters more than per-seat dev tooling. Pairs with: OTel, OpenAI-compatible HTTP, BYOK judges, and self-hosted deployment.

- Choose Langfuse if your dominant workload is LLM observability and prompt management under self-hosting constraints. Buying signal: you want to inspect the source, operate the stack, and keep trace data in your infrastructure. Pairs with: custom eval harnesses (including DeepEval), LangChain, LlamaIndex, OpenAI SDK, and data exports.

- Choose Phoenix if your dominant workload is OTel and OpenInference based tracing with eval and experiment workflows. Buying signal: you already use Arize, or your platform team cares about instrumentation standards more than vendor UI polish. Pairs with: Python and TypeScript eval code, Phoenix Cloud, and Arize AX.

- Choose Braintrust if your dominant workload is structured experiments, scorer libraries, dataset snapshots, and CI gating from a polished SaaS. Buying signal: you want one closed-loop UI for the dev workflow without operating data infrastructure. Pairs with: GitHub Actions, OpenAI, Anthropic, and the Braintrust gateway.

- Choose Galileo if your dominant workload is enterprise risk, regulated industries, runtime guardrails, and proprietary research metrics. Buying signal: the buyer is a chief AI officer or risk function. Pairs with: VPC or on-prem hosting, SOC 2, HIPAA, and audit-friendly reports.

Common mistakes when picking a Confident-AI alternative

- Confusing Confident-AI with DeepEval. Many alternatives compare against the framework when the real procurement question is the platform. Keep the split explicit so security, pricing, and feature scope answers stay consistent.

- Pricing on monthly tier alone. Confident-AI’s per-user model adds cost as the team grows. FutureAGI, Langfuse, and Braintrust price on usage with unlimited users on most tiers. Model your team headcount, not only your trace volume.

- Picking on benchmark claims without a domain run. Rerun any p95, p99, throughput, judge latency, or score variance numbers against your prompts, span payloads, model mix, and concurrency. A vendor screenshot is not a capacity plan.

- Treating OSS and self-hostable as the same. Confident-AI gates self-hosting at Team. Langfuse, FutureAGI, Helicone, Phoenix, and Galileo offer self-hosted paths under different licenses and operational footprints. Check license, telemetry, enterprise gates, upgrade process, and backup story.

- Ignoring multi-step agent eval. Final-answer scoring misses tool selection, retries, retrieval misses, loop behavior, and conversation drift. Require trace-level, session-level, and path-aware evaluation if your agent does more than one call. Confident-AI inherits DeepEval’s multi-turn primitives; verify the alternative does too.

- Underestimating migration effort. Tracing migration is the easy part. The hard parts are datasets, scorer semantics, prompt version history, human review queues, CI gates, and production-to-eval workflows.
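The gap between final-answer scoring and path-aware scoring is easiest to see on a single agent run. The sketch below compares an expected tool sequence with the actual calls pulled from a trace; it is a hypothetical illustration, not DeepEval's Tool Correctness metric or any vendor's definition, and the tool names are made up.

```python
def tool_path_report(expected: list, actual: list) -> dict:
    """Compare expected vs. actual tool calls for one agent run (illustrative only)."""
    expected_set, actual_set = set(expected), set(actual)
    recall = len(expected_set & actual_set) / len(expected_set) if expected_set else 1.0
    # Subsequence check: `in` on an iterator consumes it, so call order is enforced.
    calls = iter(actual)
    in_order = all(tool in calls for tool in expected)
    return {
        "tool_recall": recall,                            # share of expected tools called at all
        "in_order": in_order,                             # expected tools appear in expected order
        "call_count_delta": len(actual) - len(expected),  # > 0 means extra calls or retries
        "repeated_calls": len(actual) - len(actual_set),  # same tool called more than once
    }


if __name__ == "__main__":
    expected = ["search", "fetch", "answer"]
    print(tool_path_report(expected, ["search", "search", "fetch", "answer"]))
    print(tool_path_report(expected, ["fetch", "search", "answer"]))
```

If both runs end with the same correct answer, a final-answer scorer rates them identically; the path report flags the retry in the first run and the ordering error in the second.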

What changed in the eval landscape in 2026

| Date | Event | Why it matters |
|---|---|---|
| May 2026 | Braintrust added Java auto-instrumentation | Java, Spring AI, LangChain4j, and Google GenAI teams can trace with less manual code. |
| May 2026 | Langfuse shipped Experiments CI/CD integration | OSS-first teams can run experiment checks in GitHub Actions before production release. |
| Apr 2026 | DeepEval v3.9.x shipped agent metrics + multi-turn synthetic goldens | Confident-AI’s underlying framework moved closer to first-class agent and conversation eval. |
| Mar 19, 2026 | LangSmith Agent Builder became Fleet | LangChain expanded into agent workflow products. |
| Mar 9, 2026 | FutureAGI shipped Command Center and ClickHouse trace storage | Gateway routing, guardrails, cost controls, and high-volume trace analytics moved into the same loop. |
| Jan 22, 2026 | Phoenix added CLI prompt commands | Phoenix is moving trace, prompt, dataset, and eval workflows closer to terminal-native agent tooling. |

How to actually evaluate this for production

- Run a domain reproduction. Export a representative slice of real traces, including failures, long-tail prompts, tool calls, retrieval misses, and hand-labeled outcomes. Instrument each candidate with your harness, your OTel payload shape, your prompt versions, and your judge model. Do not accept a demo dataset.

- Measure reliability under load. Build a Reliability Decay Curve: the x-axis is concurrency or trace volume, and the y-axis is successful ingestion, scoring completion, query latency, and alert delay. Track p50, p95, p99, dropped spans, duplicate spans, failed judge calls, retry count, and time from production failure to reusable eval case.

- Cost-adjust. Real cost combines platform price with trace volume, token volume, test-time compute, judge sampling rate, retry rate, storage retention, and annotation hours. A per-user platform can lose if a 30-person team needs read access. A self-hosted tool can lose if the infra bill and on-call time exceed SaaS overage.
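Each point on a Reliability Decay Curve summarizes one load level. A minimal sketch, assuming you have already collected, per concurrency level, the number of spans sent, the end-to-end ingestion latency of each span that arrived, and a count of failed judge calls; the nearest-rank percentile method and every field name are illustrative choices, not a vendor metric.

```python
import math


def nearest_rank(sorted_values: list, pct: float) -> float:
    """Nearest-rank percentile of an ascending list, pct in (0, 100]."""
    if not sorted_values:
        return float("nan")
    rank = math.ceil(pct * len(sorted_values) / 100)
    return sorted_values[max(rank, 1) - 1]


def decay_point(concurrency: int, sent: int, latencies_ms: list, failed_judge_calls: int) -> dict:
    """Summarize one load level; spans with no recorded latency count as dropped."""
    lat = sorted(latencies_ms)
    return {
        "concurrency": concurrency,
        "ingest_success_rate": len(lat) / sent if sent else 0.0,
        "dropped_spans": sent - len(lat),
        "p50_ms": nearest_rank(lat, 50),
        "p95_ms": nearest_rank(lat, 95),
        "p99_ms": nearest_rank(lat, 99),
        "failed_judge_calls": failed_judge_calls,
    }


if __name__ == "__main__":
    # Synthetic demo data: the system drops 10 of 100 spans at the higher load level.
    curve = [
        decay_point(10, 100, list(range(1, 101)), 0),
        decay_point(100, 100, list(range(1, 91)), 3),
    ]
    for point in curve:
        print(point)
```

Plotting `ingest_success_rate` and `p99_ms` against `concurrency` across several runs gives the decay curve; the point where success drops or p99 bends upward is the number to compare across vendors.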

How FutureAGI implements the Confident-AI replacement loop

FutureAGI is the production-grade LLM evaluation platform built around the unified evaluate-observe-simulate-optimize loop this post tested every Confident-AI alternative against. The full stack runs on one Apache 2.0 self-hostable plane:

- Evaluation surface - 50+ first-party metrics (G-Eval, DAG, Faithfulness, Answer Relevance, Tool Correctness, Knowledge Retention, Role Adherence, Task Completion, Hallucination, PII, Toxicity) ship as both pytest-compatible scorers and span-attached scorers. The same definition runs offline in CI and online against production traffic, so a regression caught in CI matches the score that lights up the production dashboard.

- Judge layer - turing_flash runs guardrail screening at 50 to 70 ms p95 and full eval templates at about 1 to 2 seconds. BYOK lets any LLM serve as the judge at zero platform fee.

- Tracing - traceAI is Apache 2.0 OTel-based and auto-instruments 35+ frameworks across Python, TypeScript, Java, and C#. The trace tree carries metric scores as first-class span attributes.

- Simulation and optimization - persona-driven synthetic users exercise voice and text agents pre-prod, and six prompt-optimization algorithms consume failing trajectories as labeled training data.

Beyond the four axes, FutureAGI also ships the Agent Command Center gateway across 100+ providers with BYOK routing and 18+ runtime guardrails (PII, prompt injection, jailbreak, tool-call enforcement) on the same plane. Pricing starts free with a 50 GB tracing tier; Boost is $250 per month, Scale is $750 per month with HIPAA, and Enterprise starts at $2,000 per month with SOC 2 Type II.

Most teams comparing Confident-AI alternatives end up running three or four tools in production: one for evals, one for traces, one for the gateway, one for guardrails. FutureAGI is the recommended pick because the eval, trace, simulation, gateway, and guardrail surfaces all live on one self-hostable runtime; the loop closes without stitching, and the same metric definition runs in CI and production.

Sources

- Confident-AI pricing

- Confident-AI homepage

- DeepEval GitHub repo

- DeepEval metrics documentation

- FutureAGI pricing

- FutureAGI GitHub repo

- Langfuse pricing

- Langfuse self-hosting docs

- Arize pricing

- Phoenix docs

- Braintrust pricing

- Galileo pricing

Series cross-link

Next: DeepEval Alternatives, Braintrust Alternatives, Galileo Alternatives

