Best LLM Feedback Collection Tools in 2026: 7 Compared

FutureAGI, PostHog, LangSmith, Trubrics, Helicone, Langfuse, and Phoenix as the 2026 LLM feedback shortlist. Explicit signals, implicit signals, and span join.

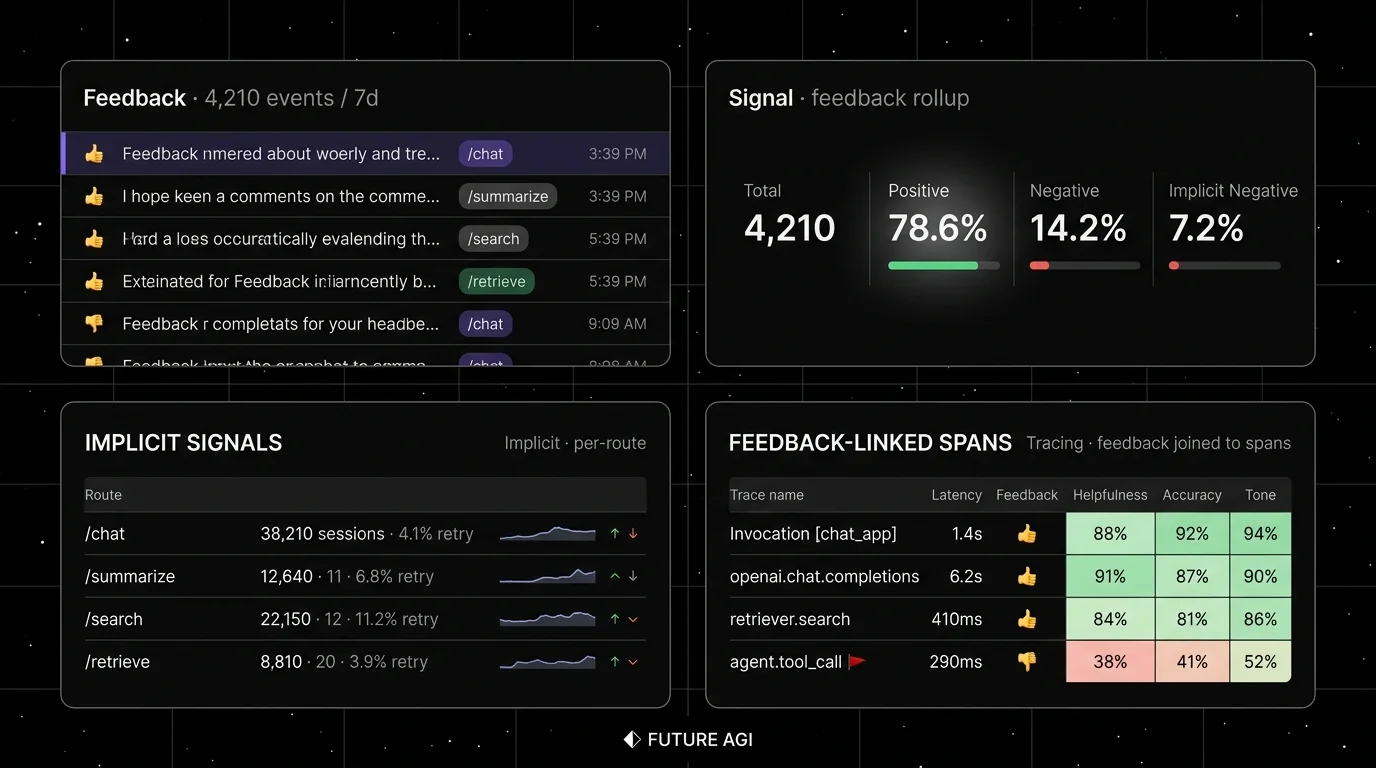

A team ships a chat assistant with a thumbs-up button below every reply. Three months later, the data shows that roughly 0.6 percent of conversations get a thumbs-up, 0.4 percent get a thumbs-down, and the other 99 percent of the team’s actual signal is invisible. The conversion drop on /search traces back to a regenerate-rate spike on a single prompt version. The escalation rate is climbing on the refund route. Nobody sees any of it because the feedback tool only captured the thumbs button. The fix is not more thumbs. It is a feedback platform that joins explicit signals (thumbs, ratings, comments) and implicit signals (retries, regenerates, abandonments, copy-pastes) to the originating trace, the prompt version, and the user cohort.

This is what 2026 LLM feedback collection has to do. Explicit feedback is sparse but high-signal; implicit feedback is dense but noisy. The platform that joins both to the trace tree is the one that closes the loop from production back into evals and prompts. This guide compares the seven tools that show up on most procurement shortlists.
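
As a rough illustration of what that join buys you, the sketch below attaches explicit and implicit events to traces by a shared id and reports per-prompt-version signal rates. The `Trace` and `FeedbackEvent` shapes and the function name are hypothetical, not any vendor's schema; each platform in this guide has its own.

```python
from collections import defaultdict
from dataclasses import dataclass


# Hypothetical record shapes for illustration only.
@dataclass(frozen=True)
class Trace:
    trace_id: str
    prompt_version: str
    route: str


@dataclass(frozen=True)
class FeedbackEvent:
    trace_id: str
    kind: str    # "explicit" or "implicit"
    signal: str  # e.g. "thumbs_up", "thumbs_down", "regenerate"


def rates_by_prompt_version(traces, events):
    """Join events to traces; return {prompt_version: {signal: rate}}."""
    by_id = {t.trace_id: t for t in traces}
    trace_counts = defaultdict(int)
    for t in traces:
        trace_counts[t.prompt_version] += 1

    # Count each (trace, signal) pair once, so the rate is the share of
    # traces with at least one such signal, not the raw click count.
    seen = set()
    signal_counts = defaultdict(lambda: defaultdict(int))
    for e in events:
        trace = by_id.get(e.trace_id)
        if trace is None or (e.trace_id, e.signal) in seen:
            continue  # orphaned event (no trace to join) or a repeat
        seen.add((e.trace_id, e.signal))
        signal_counts[trace.prompt_version][e.signal] += 1

    return {
        version: {s: n / trace_counts[version] for s, n in signals.items()}
        for version, signals in signal_counts.items()
    }


traces = [
    Trace("t1", "v1", "/search"), Trace("t2", "v1", "/search"),
    Trace("t3", "v2", "/search"), Trace("t4", "v2", "/search"),
]
events = [
    FeedbackEvent("t1", "explicit", "thumbs_up"),
    FeedbackEvent("t3", "implicit", "regenerate"),
    FeedbackEvent("t4", "implicit", "regenerate"),
    FeedbackEvent("t4", "explicit", "thumbs_down"),
]
rates = rates_by_prompt_version(traces, events)
print(rates["v2"])  # regenerate rate 1.0, thumbs_down rate 0.5
```

In this toy data the v2 regenerate spike is visible even though only one user clicked a thumb, which is the scenario from the opening paragraph in miniature.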

TL;DR: Best LLM feedback tool per use case

| Use case | Best pick | Why (one phrase) | Pricing | OSS |
|---|---|---|---|---|
| Open-source feedback joined to span trees and evals | FutureAGI | Feedback API + dataset auto-build + judge calibration | Free + usage from $2/GB | Apache 2.0 |
| Product analytics first; LLM feedback as one event class | PostHog | Strong implicit-signal capture, session replay | Product Analytics 1M events free, then from $0.00005/event; LLM Analytics 100K events free, then $0.00006/event | MIT FOSS mirror |
| LangChain or LangGraph runtimes | LangSmith | First-party feedback API, tight integration | Developer free, Plus $39/seat/mo | Closed (MIT SDK) |
| Purpose-built feedback platform | Trubrics | OSS, LLM-first, Streamlit and SDK support | OSS free, team on request | Apache 2.0 |
| Gateway-first stack already running | Helicone | Apache 2.0, one-line feedback at the gateway | Hobby free, Pro $79/mo | Apache 2.0 |
| Self-hosted observability with feedback queues | Langfuse | Score API, annotation queues, MIT core | Hobby free, Core $29/mo | MIT core |
| OTel-native feedback annotations | Phoenix | Source-available, OpenInference-aligned | Free self-host, AX Pro $50/mo | ELv2 |

If you only read one row: pick FutureAGI for the broadest open-source feedback stack. Pick PostHog when product analytics is the primary buyer. Pick Trubrics when feedback is the dedicated workflow.

How we evaluated the 2026 feedback shortlist

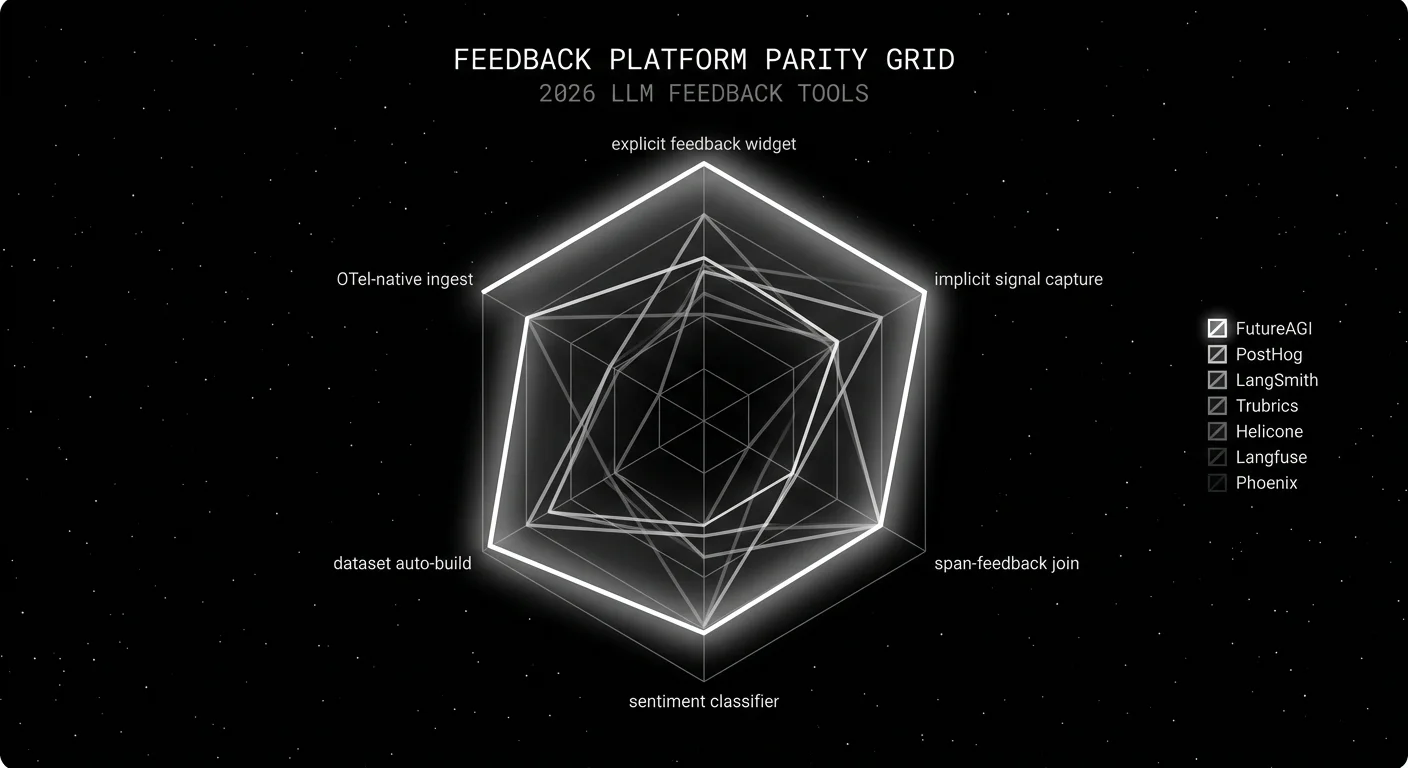

These seven platforms were ranked across five axes:

- Explicit feedback widget. Thumbs, star ratings, written comments, structured rubrics out of the box.

- Implicit signal capture. Retry rate, regenerate, abandonment, copy-paste, session length, conversion drop.

- Span-feedback join. Can the feedback event be joined to the trace id, the prompt version, the route, and the user cohort?

- Dataset auto-build. Does negative feedback flow into a regression dataset for the next CI eval?

- Pricing model. Per-event, per-seat, per-trace, flat tier, OSS-only.

Tools shortlisted but cut: Mixpanel (strong product analytics but weaker LLM-specific surface), Datadog (APM-first; LLM trace product is younger than the leaders), Comet Opik (good observability but smaller dedicated feedback surface). Each works if your stack already runs there.

The 7 LLM feedback collection tools compared

1. FutureAGI: Best for an open-source feedback stack joined to traces and evals

Open source. Self-hostable. Hosted cloud option.

Use case: Teams that want one platform across feedback capture, span attach, dataset auto-build, judge calibration, and gateway-level guardrails. The pitch is that feedback events, traces, evals, and prompt versions all live in the same loop, with no manual joins.

Pricing: Free plus usage from $2/GB storage and $10 per 1,000 AI credits.

OSS status: Apache 2.0.

Key features: Feedback API tied to span ids via traceAI, explicit signals (thumbs, ratings, structured rubrics) and implicit signals (retry, regenerate, abandonment), dataset auto-build from negative-feedback rows, BYOK judge calibration with user labels as ground truth, Agent Command Center for runtime guardrails on routes with poor feedback.

Best for: Teams that want the feedback-to-prompt loop closed in one OSS platform with multi-language OTel coverage.

Worth flagging: More moving parts than a dedicated feedback widget. ClickHouse, Postgres, Redis, and Temporal are real services. Use the hosted cloud if you do not want to operate the data plane.

2. PostHog: Best for product-analytics-first teams that need LLM feedback as one event class

Open source. Self-hostable. Hosted cloud.

Use case: Product teams that already use PostHog for funnel analytics and session replay, and want LLM feedback events on the same platform. The product-analytics surface (autocapture, sessions, funnels, replays) treats LLM signals as one of many event types.

Pricing: Product Analytics is free for the first 1M events/month and then from $0.00005/event. LLM Analytics has its own meter at 100K events/month free and then $0.00006/event. Replay, feature flags, and surveys each have their own meters; check the live page.

OSS status: Self-hostable. The PostHog/posthog-foss mirror is MIT-licensed; the main repo includes some non-OSS components.

Key features: Autocapture across web and mobile, session replay, LLM analytics with cost and trace tracking, custom events for feedback, Funnel and Retention insights, feature flags, A/B testing.

Best for: Cross-functional product teams where feedback is one signal class among many. Teams that do not want a separate LLM-specific tool.

Worth flagging: PostHog is product analytics first; the LLM-specific surface is younger than the LLMOps leaders. See PostHog LLM Analytics Alternatives.
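
To see how the two PostHog meters quoted above add up, here is a rough monthly estimate. It assumes the listed rates stay flat beyond the free allowance; the "from" in the Product Analytics price suggests volume tiers, so treat this as a sketch and check the live pricing page. The function names are illustrative.

```python
def metered_cost(events: int, free_events: int, rate_per_event: float) -> float:
    """Events beyond the free allowance times a flat per-event rate (assumption)."""
    billable = max(0, events - free_events)
    return billable * rate_per_event


def posthog_monthly_estimate(product_events: int, llm_events: int) -> float:
    """Sum the two meters quoted in this guide; other meters are ignored."""
    product = metered_cost(product_events, 1_000_000, 0.00005)
    llm = metered_cost(llm_events, 100_000, 0.00006)
    return round(product + llm, 2)


# 5M product events and 600K LLM events in a month:
# 4M billable * $0.00005 = $200, plus 500K billable * $0.00006 = $30.
print(posthog_monthly_estimate(5_000_000, 600_000))  # 230.0
```

Replay, feature flags, and surveys are metered separately and are left out of this estimate.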

3. LangSmith: Best for LangChain teams with native feedback APIs

Closed platform. Open SDKs. Hosted cloud, hybrid, enterprise self-host.

Use case: Teams whose runtime is already LangChain or LangGraph. The LangSmith feedback API makes it one call to attach a feedback row to a run id.

Pricing: Developer is $0 with 5,000 traces and 1 seat. Plus is $39 per seat per month with 10,000 traces. Base traces cost $2.50 per 1K after included usage.

OSS status: Closed platform; MIT SDK.

Key features: Run-level feedback API, score types (boolean, numeric, categorical, comment), human annotation queues, dataset linkage, evaluator hooks tied to feedback aggregations.

Best for: Teams that already debug chains, graphs, and prompts in LangChain.

Worth flagging: Outside LangChain, the value drops. Seat pricing makes broad cross-functional access expensive. See LangSmith Alternatives.
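
A quick back-of-the-envelope calculation shows how seat count drives the bill. The sketch below uses the Plus numbers quoted above and makes two assumptions that the pricing line does not confirm: the 10,000 included traces apply to the whole workspace rather than to each seat, and overage is billed pro rata rather than in blocks of 1K.

```python
def langsmith_plus_estimate(seats: int, traces: int,
                            included_traces: int = 10_000) -> float:
    """Monthly estimate: $39 per seat plus $2.50 per 1K base traces over the allowance."""
    seat_cost = seats * 39.0
    # Assumption: allowance is per workspace and overage is pro rata.
    overage = max(0, traces - included_traces)
    trace_cost = overage / 1_000 * 2.50
    return seat_cost + trace_cost


# A 30-person cross-functional team sending 200K traces a month:
# 30 * $39 = $1,170 in seats, 190K overage traces * $2.50/1K = $475.
print(langsmith_plus_estimate(seats=30, traces=200_000))  # 1645.0
```

Under these assumptions the seats cost more than twice as much as the trace overage, which is why access for non-engineers is the line item to watch.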

4. Trubrics: Best for a purpose-built OSS feedback platform

Open source. Self-hostable.

Use case: Teams building Streamlit or custom UIs that want a dedicated feedback widget plus a dashboard, without committing to a full LLMOps platform.

Pricing: OSS free. Team and enterprise pricing on request.

OSS status: Apache 2.0.

Key features: Drop-in feedback widget for Streamlit, Python SDK for non-Streamlit apps, structured rubrics, annotation queues, user identity and metadata tagging, basic dashboard.

Best for: Data science teams that ship Streamlit or Gradio prototypes and want feedback capture without a heavier platform.

Worth flagging: Smaller community and slower release cadence than the LLMOps leaders. Best as a focused complement to a tracing platform rather than a standalone observability stack.

5. Helicone: Best for gateway-attached feedback

Open source. Self-hostable. Hosted cloud.

Use case: Teams already routing through the Helicone gateway that want feedback capture on the same hop. The Helicone feedback endpoint records ratings tied to the request id without a separate SDK.

Pricing: Hobby free. Pro $79/mo, Team $799/mo, Enterprise custom.

OSS status: Apache 2.0.

Key features: One-call feedback endpoint per request id, gateway-side capture without app-side SDK, prompt and session aggregations, custom properties.

Best for: Teams whose model traffic already routes through Helicone and want feedback at the same boundary.

Worth flagging: Helicone joined Mintlify in March 2026 and the gateway moved into maintenance mode. Roadmap risk is now part of vendor diligence. See Helicone Alternatives.

6. Langfuse: Best for self-hosted observability with feedback queues

Open source core. Self-hostable. Hosted cloud option.

Use case: Self-hosted teams that want feedback joined to traces, plus annotation queues for structured human review.

Pricing: Hobby free with 50K units, 30 days data access, 2 users. Core $29/mo with 100K units, unlimited users. Pro $199/mo with SOC 2 reports.

OSS status: MIT core.

Key features: Score API for feedback rows, score configs (categorical, numeric, boolean), annotation queues with assignment workflow, dataset linkage, prompt-version-to-feedback aggregations.

Best for: Platform teams that want to keep trace data and feedback in their own infrastructure. Teams that already use the Langfuse OSS stack.

Worth flagging: No first-party feedback widget; bring your own UI. Implicit-signal capture (retry, regenerate, abandonment) requires custom instrumentation. See Langfuse Alternatives.

7. Arize Phoenix: Best for OTel-native feedback annotations

Source available. Self-hostable. Phoenix Cloud and Arize AX paths.

Use case: Teams already invested in OpenInference instrumentation that want feedback as span attributes.

Pricing: Phoenix free for self-hosting. AX Free includes 25K spans/month, 1 GB ingestion, 15 days retention. AX Pro $50/mo with 50K spans, 30 days retention.

OSS status: Elastic License 2.0. Source available with restrictions on managed service offerings.

Key features: OTel-native feedback annotations on spans, dataset eval over annotated rows, prompt tracking, evals over a span tree.

Best for: Engineers who want feedback to flow through the same OpenInference pipeline as their evals.

Worth flagging: Phoenix is not a gateway, not a runtime guardrail product, and not a dedicated feedback widget. ELv2 license matters for legal teams that follow OSI definitions strictly. See Phoenix Alternatives.

Decision framework: pick by constraint

- OSS is non-negotiable. FutureAGI, PostHog, Langfuse, Trubrics. Helicone counts for new builds only after a Mintlify-roadmap risk assessment.

- Cross-functional product teams. PostHog or FutureAGI on flat tiers. Avoid per-seat models for 30-plus person teams.

- LangChain runtime. LangSmith if you can absorb the per-seat pricing; FutureAGI if you cannot.

- Streamlit or Gradio app. Trubrics drops in fastest.

- Gateway-first stack. Helicone if you accept the maintenance posture; FutureAGI Agent Command Center if you want first-party gateway plus feedback.

- Pure self-host with feedback queues. Langfuse or FutureAGI.

- OTel-native instrumentation already in place. Phoenix or FutureAGI.

Common mistakes when picking a feedback tool

- Capturing only thumbs. Most users do not click: explicit thumbs rates tend to sit around 1-3 percent, so most of the signal lives in retries, regenerates, and abandonments. Pick a tool that captures both.

- Ignoring the trace join. A feedback row without trace_id, prompt_version, route, and cohort is only good for one-off debugging. Pick a tool where the join comes back as one row, not as a hand-written SQL query.

- Treating feedback as a metric, not a dataset. Negative feedback rows are the single richest source of regression test cases. Auto-build datasets from thumbs-down spans on every release.

- Skipping the calibration loop. User feedback is the ground truth your judge models calibrate against. Without that loop, judges drift unchecked.

- Sampling away the signal. Feedback is sparse to begin with. Do not sample feedback events; sample traces if you must, but capture every feedback signal.

- Building it from scratch in week one. Slack and Postgres carry you for a prototype. Move to a tool before you ship to a real user base.

- Buying a feedback tool with no eval link. A feedback row that does not flow into the next CI eval is decoration. Pick a tool that closes the loop.
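
The calibration loop mentioned in the list above can be checked with very little code. The sketch below compares judge verdicts against user labels on the same traces using raw agreement and Cohen's kappa. This is one common way to measure agreement, not the method of any specific platform, and the function name is illustrative.

```python
def agreement_and_kappa(user_labels, judge_labels):
    """Return (raw agreement, Cohen's kappa) for two equal-length label lists."""
    if len(user_labels) != len(judge_labels) or not user_labels:
        raise ValueError("label lists must be non-empty and the same length")
    n = len(user_labels)
    observed = sum(u == j for u, j in zip(user_labels, judge_labels)) / n

    # Agreement expected by chance if the two raters labeled independently.
    labels = set(user_labels) | set(judge_labels)
    expected = sum(
        (user_labels.count(label) / n) * (judge_labels.count(label) / n)
        for label in labels
    )
    if expected == 1.0:
        # Both raters used one identical label everywhere; kappa is undefined.
        return observed, float("nan")
    return observed, (observed - expected) / (1 - expected)


# 1 = good response, 0 = bad response, for the same eight traces.
user = [1, 1, 0, 0, 1, 0, 1, 1]
judge = [1, 0, 0, 0, 1, 1, 1, 1]
observed, kappa = agreement_and_kappa(user, judge)
print(observed, round(kappa, 3))  # 0.75 0.467
```

Tracking kappa per release, rather than raw agreement alone, helps because a judge that says "good" almost every time can score high agreement on a mostly positive dataset while adding little information.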

Recent feedback tooling updates

| Date | Event | Why it matters |
|---|---|---|
| Mar 2026 | FutureAGI shipped Agent Command Center and ClickHouse trace storage | Feedback joined to high-volume span data became cheap. |
| Mar 19, 2026 | LangSmith Agent Builder became Fleet | Feedback APIs extended into agent deployment. |
| Mar 3, 2026 | Helicone joined Mintlify | Gateway-first feedback strategies need a backup plan. |
| Feb 2026 | PostHog AI engineering observability matured | LLM cost and feedback tracking became first-class in PostHog. |
| 2026 | OTel GenAI semantic conventions (Development status) | OTel GenAI conventions kept maturing; feedback and annotation schemas remain mostly vendor-specific while the trace and evaluation surfaces converge. |
| Dec 2025 | DeepEval v3.9.x shipped multi-turn synthetic goldens | Negative-feedback rows are easier to expand into eval suites. |

How to actually evaluate feedback tools for production

- Wire one explicit and one implicit signal first. Thumbs button and regenerate-click. Capture both for two weeks against the same workload. The volume gap (often 50:1 or worse in favor of implicit) tells you why both matter.

- Verify the trace join. Pull the last 100 negative feedback rows. For each, can you immediately see the trace, the prompt version, the route, and the user cohort? If any of those joins needs hand-written SQL, it is the wrong tool.

- Auto-build a regression dataset. Pipe the last 30 days of thumbs-down rows into a dataset. Run the next CI eval against it. Watch the eval fail-rate on those rows. The tool that makes this trivial is the one you ship.
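
The last two checks can be prototyped on an export before committing to a vendor. The sketch below assumes feedback rows arrive as plain dictionaries with the hypothetical field names shown; it measures how many rows carry every join key and turns thumbs-down rows into deduplicated regression cases.

```python
# Hypothetical field names; map them to whatever your tool exports.
REQUIRED_JOIN_KEYS = ("trace_id", "prompt_version", "route", "cohort")


def join_coverage(rows):
    """Fraction of feedback rows that carry every join key."""
    if not rows:
        return 0.0
    complete = sum(all(r.get(k) for k in REQUIRED_JOIN_KEYS) for r in rows)
    return complete / len(rows)


def build_regression_dataset(rows):
    """Turn thumbs-down rows into eval cases, one per normalized input."""
    cases = {}
    for r in rows:
        if r.get("signal") != "thumbs_down" or not r.get("input"):
            continue
        key = r["input"].strip().lower()
        # Keep the first occurrence of each normalized input.
        cases.setdefault(key, {
            "input": r["input"],
            "rejected_output": r.get("output"),
            "trace_id": r.get("trace_id"),
            "prompt_version": r.get("prompt_version"),
        })
    return list(cases.values())


rows = [
    {"trace_id": "t1", "prompt_version": "v2", "route": "/refund",
     "cohort": "free", "signal": "thumbs_down",
     "input": "Where is my refund?", "output": "I cannot help with that."},
    {"trace_id": "t2", "prompt_version": "v2", "route": "/refund",
     "cohort": "pro", "signal": "thumbs_down",
     "input": " where is my refund? ", "output": "Please try again later."},
    {"trace_id": "t3", "prompt_version": "v2", "route": "/search",
     "cohort": None, "signal": "thumbs_up",
     "input": "Find running shoes", "output": "Here are some options."},
]
print(join_coverage(rows))                  # 2 of 3 rows have every key
print(len(build_regression_dataset(rows)))  # 1 case after deduplication
```

Storing the rejected output alongside the input lets the next CI eval assert that a new prompt version no longer produces the answer users rejected.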
