CI/CD for AI Agents in 2026: Eval Gates, Regression Suites, Canary Rollouts

CI/CD pipelines for AI agents in 2026: eval gates, golden datasets, canary deploys, regression suites. GitHub Actions and GitLab patterns that ship safely.


Example scenario: a team merges a small refactor to the agent’s tool-routing prompt. The change passes lint, passes unit tests, passes the existing eval gate. Production tool-call accuracy regresses noticeably within the hour. Investigation reveals the eval gate’s golden dataset under-represents the dispatch-tool slice; the new prompt regresses there but the gate did not see it. After the on-call rollback, the same change ships again behind a canary deploy with span-attached online eval and eval-gated rollback. The canary catches the regression early in the ramp. The on-call gets paged once, after the auto-revert.

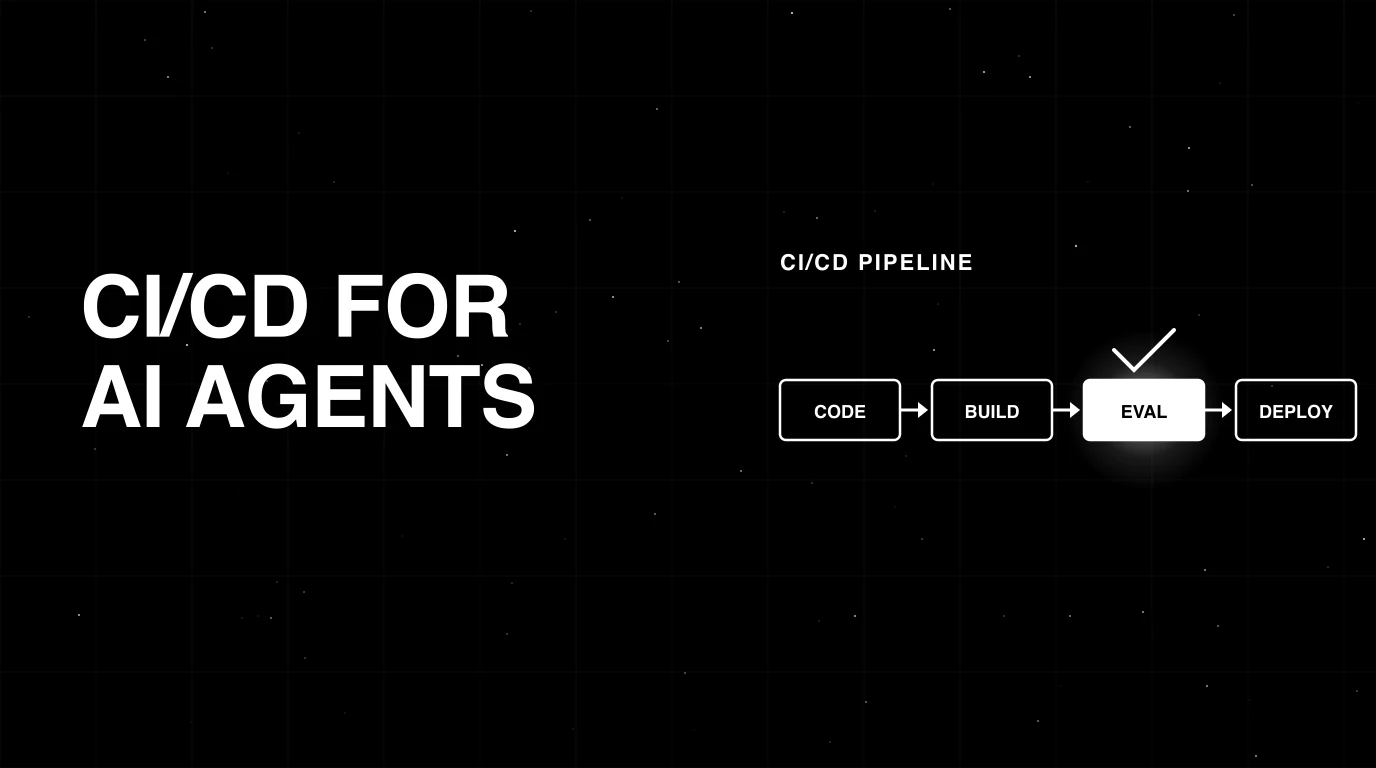

This is what CI/CD for AI agents looks like when the eval gate is too narrow but the canary is wired. The gates fail open for what they cannot see; the canary closes the loop on what offline eval misses. This guide covers a four-stage CI/CD pipeline that makes AI-agent rollouts actually work in 2026, with eight deep sections on building eval sets that survive six months, the math behind merge-decision gating, replay-driven regression, persona-driven simulation, canary rollback policy, reproducibility under provider drift, and a six-scenario on-call playbook. The shape extends classical software CI/CD (Humble and Farley’s Continuous Delivery, the Google SRE Workbook) with the LLM-specific additions: versioned eval sets, judge-based scorers calibrated against human labels, span-attached online eval, and atomic prompt-version flags.

TL;DR: The CI/CD pipeline for an agent in 2026

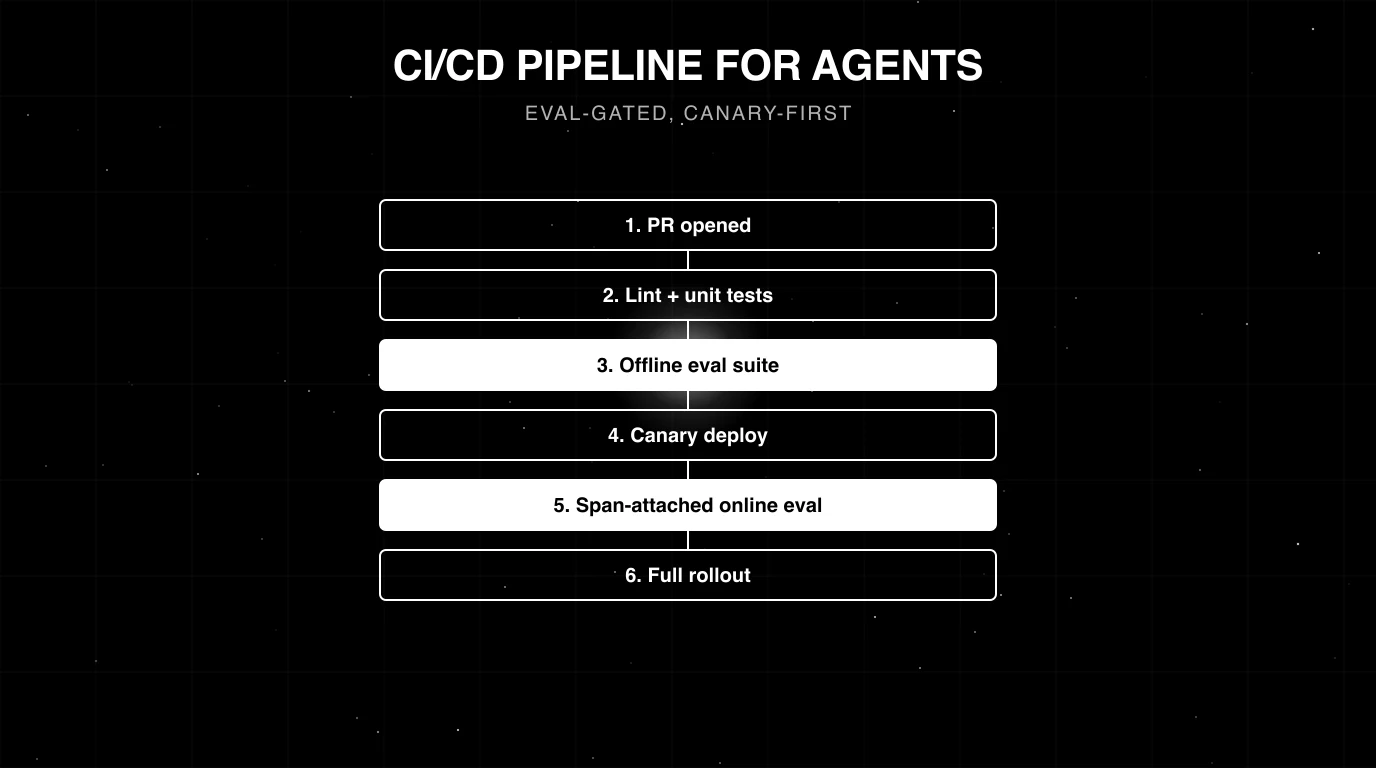

The four core CI/CD stages live between two bookends: pre-PR local checks (Stage 0) and the post-rollout sweep (Stage 5). Stages 1 through 4 are what the pipeline actually gates on.

| Stage | Trigger | Wall-clock budget | What it gates on | Failure mode it catches |
|---|---|---|---|---|
| 0. Pre-PR (bookend) | Local pre-commit hook | seconds | Prompt template parses, tool JSON Schema valid | Typos before push |
| 1. PR-time fast checks | Every PR | under 90 s | Schema lint, 10-50 case deterministic mini eval, token-budget delta | Obvious breaks, cost runaway |
| 2. Merge-time integration eval | PR ready for review | 5-15 min | 200-500 case eval, kappa-validated rubrics, delta vs main | Semantic regression |
| 3. Pre-deploy simulation | Pre-merge or label-gated | 10-60 min | Persona objectives met, no guardrail trips, no unsafe tool calls, latency budget | Multi-turn drift, jailbreaks, tool misuse |
| 4. Canary plus rollback | Post-merge to prod | 1-24 h | Auto-rollback on kappa drop, guardrail trip-rate spike, p95 latency regression, or cost-per-request regression (thresholds are workload-specific; example values illustrative) | Distribution shift, judge drift, cost regression |
| 5. Post-rollout sweep (bookend) | After 100% rollout | continuous | Production rubric failures auto-flag | Compounding the regression suite |

If you only read one row: the integration eval is the lock between a PR and merge; the canary with eval-gated rollback is the lock between merge and 100% rollout. Without both, prompt and agent changes ship on subjective review.

The four-stage pipeline

A pipeline is the contract between “PR opened” and “100% rollout”. Each stage has a trigger, a wall-clock budget, what it gates on, what it alerts on, and the FutureAGI (FAGI) fi.evals or traceAI code that runs there. The four stages below are the canonical 2026 shape; teams that try to collapse Stage 2 into Stage 1, or to skip Stage 3, end up paying for it in production.

Stage 1: PR-time fast checks (under 90 seconds)

Trigger. Every PR that touches prompts/, agents/, tools/, or evals/.

Budget. Under 90 seconds. Anything slower kills developer flow and gets disabled.

What runs.

- Prompt template lint. Every prompt is a templated string with placeholders ({{user_input}}, {{tool_definitions}}, etc.). A missing placeholder, an unbalanced brace, or a typo in a system prompt is a defect. Static parsing catches these in milliseconds.

- Tool schema validation. Tool definitions are JSON Schemas (or the OpenAI/Anthropic tool-calling equivalents). A schema with an undeclared required field, an invalid type, or a circular reference fails. Use ajv (TypeScript) or jsonschema (Python). For depth on schema validation, see What is LLM Input/Output Validation.

- Deterministic eval. 10-50 cases pinned by dataset SHA, with the judge model held fixed at temperature 0.0 to keep judge variance as low as possible. Per-rubric pass-rate must not regress beyond a per-rubric hard-fail delta floor against main; pick the floors from observed judge / test variance on a calibration set (illustrative starting values: faithfulness and function call accuracy around -0.05; safety-style rubrics tighter, e.g. around -0.03).

- Token-budget regression. Run the agent on the eval set with the new prompt; total tokens emitted must be within 5% of the main-branch total. Catches “the new prompt is 3x longer” before it shows up in the canary.

What it gates on. Any of the four checks fails: PR is red.

What it alerts on. Token-budget regression between 1% and 5%: warning comment on PR, not a block.
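The lint and token-budget checks need no SDK at all. The sketch below is one minimal way to write them with the standard library; the function names, the `{{name}}` placeholder grammar, and the choice to treat only token growth (not shrinkage) as a regression are assumptions made for illustration, not part of any FAGI API.

```python
import re

# Assumed placeholder grammar: {{identifier}} with optional inner whitespace.
PLACEHOLDER = re.compile(r"\{\{\s*([A-Za-z_][A-Za-z0-9_]*)\s*\}\}")


def lint_prompt_template(template: str, required: set[str]) -> list[str]:
    """Return human-readable lint errors; an empty list means the template is clean."""
    errors = []
    # Every "{{" needs a matching "}}".
    if template.count("{{") != template.count("}}"):
        errors.append("unbalanced placeholder braces")
    found = set(PLACEHOLDER.findall(template))
    for name in sorted(required - found):
        errors.append(f"missing placeholder: {name}")
    for name in sorted(found - required):
        errors.append(f"undeclared placeholder: {name}")
    return errors


def token_budget_status(candidate_tokens: int, baseline_tokens: int) -> str:
    """Classify token growth vs main: up to 1% ok, 1-5% warn, above 5% block."""
    if baseline_tokens <= 0:
        raise ValueError("baseline_tokens must be positive")
    growth = (candidate_tokens - baseline_tokens) / baseline_tokens
    if growth > 0.05:
        return "block"
    if growth > 0.01:
        return "warn"
    return "ok"
```

In a pre-commit hook or the Stage 1 job, a non-empty error list or a `block` status would fail the check, while `warn` would become the PR comment described above.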

FAGI code.

# tested 2026-05-09
"""Stage 1 PR-time fast eval. Wall-clock budget: under 90 seconds.

Uses the documented `evaluate()` API from fi.evals with string-based
template names. Env vars FI_API_KEY and FI_SECRET_KEY are read by the SDK.
Baseline pass-rates are stored as a JSON artifact produced by the same
script run on `main`.
"""
import json
import os
from pathlib import Path

from fi.evals import evaluate

DATASET_VERSION = "v2.4"
DATASET_SHA = "f7c91a2"  # git SHA of evals/golden/

# Load the SHA-pinned eval cases. Each case carries `input`, `output`,
# `context`, and (for tool-call rows) the expected tool calls.
cases = [
    json.loads(line)
    for line in Path(f"evals/golden/{DATASET_VERSION}/eval.jsonl").read_text().splitlines()
    if line.strip()  # tolerate blank lines in the JSONL file
]


def pass_rate(template: str, kwargs_per_case: list[dict]) -> float:
    """Run a documented fi.evals template across all cases; return pass-rate."""
    n_pass = 0
    for kwargs in kwargs_per_case:
        result = evaluate(
            template,
            **kwargs,
            model="turing_flash",
            fi_api_key=os.environ["FI_API_KEY"],
            fi_secret_key=os.environ["FI_SECRET_KEY"],
        )
        # `evaluate()` returns an EvalResult with a numeric/boolean signal.
        # Guard against a missing or None score before comparing.
        score = getattr(result, "score", None)
        if getattr(result, "passed", False) or (
            isinstance(score, (int, float)) and score >= 0.5
        ):
            n_pass += 1
    return n_pass / max(1, len(kwargs_per_case))


# Per-rubric runs. `faithfulness` and `evaluate_function_calling` are
# documented template names. Use a documented refusal/safety eval if your
# workload needs one.
faithfulness_cases = [
    {"input": c["input"], "output": c["output"], "context": c.get("context", "")}
    for c in cases
]
tool_cases = [
    {"input": c["input"], "output": c["output"]}
    for c in cases
    if c.get("expected_tool_calls")
]

candidate = {
    "faithfulness": pass_rate("faithfulness", faithfulness_cases),
    "evaluate_function_calling": pass_rate("evaluate_function_calling", tool_cases),
}

# Per-rubric hard-fail deltas vs main. Tune these to your calibration set.
# The values below are illustrative; Stage 2 adds the borderline and yellow
# bands described in the gating-math section.
DELTA_THRESHOLDS = {
    "faithfulness": -0.05,
    "evaluate_function_calling": -0.05,
}

baseline = json.loads(Path("artifacts/baseline_pass_rates.json").read_text())

regressions = []
for rubric, delta_floor in DELTA_THRESHOLDS.items():
    delta = candidate[rubric] - baseline[rubric]
    if delta < delta_floor:
        regressions.append((rubric, delta))

if regressions:
    for rubric, delta in regressions:
        print(f"BLOCK rubric={rubric} delta={delta:.3f}")
    raise SystemExit(1)

print("PASS: no rubric regressed beyond threshold")

Stage 2: Merge-time integration eval (5-15 minutes)

Trigger. PR marked “ready for review”, or pushed to a release branch.

Budget. 5-15 minutes. Parallelise the eval across the dataset (one row per task) so the wall-clock stays under 15 minutes even at 500 cases.

What runs. A 200-500 case eval set per cohort, stratified across normal traffic, edge cases, and known failure modes. Judge-based rubrics with kappa-validated thresholds (see LLM as Judge Best Practices for the calibration math, and the custom LLM eval metrics sister post for rubric design). Regression vs main-branch baseline using delta gating, not absolute thresholds.

What it gates on. Three-band gating per rubric (with safety as a hard floor: any drop is auto-fail). Hard block when the candidate-vs-main delta is worse than -0.05; borderline review when delta is in [-0.05, -0.02) (block but allow override with reviewer sign-off plus a re-run); yellow band when delta is in [-0.02, 0.00) (require reviewer sign-off, no auto-block). The math behind the -0.05 hard floor is in the gating-math section below.

What it alerts on. Judge-disagreement above 5% on any rubric (vote across 3 calls, threshold the median). Disagreement above 10% triggers re-run with a sturdier judge model.

FAGI code. See the gate function in the gating-math section below.

Stage 3: Pre-deploy simulation (10-60 minutes)

Trigger. Pre-merge for high-risk PRs (label-gated), or pre-deploy on the release branch.

Budget. 10-60 minutes depending on persona count and branching factor.

What runs.

- Persona-driven multi-turn conversations via FAGI Simulation. 5-15 production personas, 3-7 turns each, branching factor of 3 at decision points.

- Adversarial corpus. Prompt-injection probes from PortSwigger and OWASP LLM Top 10, jailbreak templates (DAN-family, roleplay-injection, encoded-payload), tool-misuse probes.

- Tool-error injection chaos. Inject 5xx, timeout, and malformed-response errors into 10% of tool calls; verify the agent recovers without unsafe state.
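Tool-error injection can be as small as a wrapper around each tool callable. The sketch below is one possible shape, assuming tools are plain Python callables that return strings; `with_fault_injection` and `InjectedToolError` are hypothetical names, and the malformed payload is an arbitrary example.

```python
import random
from typing import Callable, Optional


class InjectedToolError(RuntimeError):
    """Raised in place of a real tool result during chaos runs."""


def with_fault_injection(
    tool: Callable[..., str],
    rate: float = 0.10,
    rng: Optional[random.Random] = None,
) -> Callable[..., str]:
    """Wrap `tool` so that roughly `rate` of calls fail in one of three ways."""
    rng = rng or random.Random()
    faults = ("http_500", "timeout", "malformed_response")

    def wrapped(*args, **kwargs) -> str:
        if rng.random() < rate:
            fault = rng.choice(faults)
            if fault == "malformed_response":
                # Truncated payload the agent must handle without crashing.
                return "{not valid json"
            raise InjectedToolError(fault)
        return tool(*args, **kwargs)

    return wrapped
```

Passing a seeded `random.Random` keeps a chaos run reproducible, which matters when a failure found in simulation has to be replayed on the fix.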

What it gates on. Persona objectives met at >= 85% rate. Zero guardrail trips on the safety-critical persona set. Zero unsafe tool calls (e.g., destructive write paths called without confirmation). p95 latency within budget.

What it alerts on. Persona objective rate between 80% and 85%: review, not block. Adversarial pass rate above 95% but below 99%: review.

Why this stage exists. Multi-turn drift, jailbreak susceptibility, and tool-misuse paths do not show up in single-turn eval sets. They emerge in the second or third turn, when context accumulates and the agent’s prior commitments interact with new instructions.

Stage 4: Canary plus rollback (1-24 hours)

Trigger. Merge to main and CD pipeline green.

Budget. 1-24 hours of progressive rollout. The 1% canary holds for 30 minutes; 5% for 1 hour; 25% for 4 hours; 100% by 24 hours.

What runs. Shadow traffic on a small fraction of prod (1-5% as an illustrative ramp), with cloud Turing online eval via FAGI turing_flash (turing_flash cloud eval latency is documented at roughly 1-2 seconds; for sub-request-path checks use local guardrail scanners) and full eval templates running async on a sample of canary traces.

What it gates on. Auto-rollback triggers when any of these fire on a 5-minute rolling window. Treat the numeric values below as illustrative defaults; calibrate from your historical baseline variance:

- Kappa-against-baseline drop beyond your calibrated tolerance (e.g., > 0.05 for many teams).

- Guardrail trip rate above your calibrated multiple of baseline (e.g., > 2x).

- p95 latency above your calibrated multiple of baseline (e.g., > 1.3x).

- Cost-per-request above your calibrated multiple of baseline (e.g., > 1.2x).
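The four triggers reduce to a small pure function over two snapshots: the baseline and the current rolling window. The sketch below hard-codes the illustrative values from the list above; `WindowStats` and `rollback_reasons` are hypothetical names, and how the window statistics are computed from spans is left out.

```python
from dataclasses import dataclass


@dataclass
class WindowStats:
    kappa: float                # agreement score for the window
    guardrail_trip_rate: float  # fraction of requests that tripped a guardrail
    p95_latency_s: float
    cost_per_request: float


def rollback_reasons(baseline: WindowStats, window: WindowStats) -> list[str]:
    """Return the triggers that fired; any non-empty result means roll back."""
    reasons = []
    if baseline.kappa - window.kappa > 0.05:
        reasons.append("kappa_drop")
    if window.guardrail_trip_rate > 2.0 * baseline.guardrail_trip_rate:
        reasons.append("guardrail_trip_rate")
    if window.p95_latency_s > 1.3 * baseline.p95_latency_s:
        reasons.append("p95_latency")
    if window.cost_per_request > 1.2 * baseline.cost_per_request:
        reasons.append("cost_per_request")
    return reasons
```

Returning the list of reasons rather than a boolean gives the on-call the trigger names in the rollback notification.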

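What it alerts on. Any single trigger approaching its fail level without crossing it (for example, a guardrail trip rate between 1.5x and 2x of baseline against a 2x fail level): page the on-call but do not auto-rollback.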

Why this stage exists. Stage 2 catches regressions on the eval set. Stage 3 catches regressions on synthetic personas. Stage 4 catches regressions on the only distribution that ultimately matters: the live production distribution, which always drifts faster than your eval set.

Building the eval set that survives 6 months

The single most common cause of “the eval gate passed and prod still broke” is an eval set that has decayed against the production distribution. After six months of product growth and prompt iteration, an eval set built once and never refreshed will diverge from the live distribution; new intent slices appear, old slices contract, and the gate stops covering the cases that actually break. Build it to survive.

Stratified construction

A workable starting point for many teams is 200-500 cases per cohort with a roughly normal/edge/failure-mode mix; treat both the count and the proportions below as illustrative defaults to derive from your production traffic and incident history rather than fixed best-practice targets:

- Normal traffic (illustrative ~60%). Sampled from production over the last 30 days, anonymized. These cases are the ones the agent must continue to handle well.

- Edge cases (illustrative ~25%). Sampled from the long tail: rare but not failing. Multilingual, malformed, ambiguous, multi-intent. These are where regressions hide.

- Known failure modes (illustrative ~15%). Cases the agent has previously failed on, drawn from incident postmortems. These are the never-again list.

Tune both the size and the mix to your production traffic distribution and incident history. A flat eval set without stratification systematically under-weights the cases that are most likely to break.

Sources

Three sources, each with its own bias:

- Production traces. The most representative. Anonymize PII, redact secrets, hash user IDs. Sample stratified by score, not uniformly: take 50% of cases from the 0.85-1.00 score band, 30% from 0.5-0.85, 20% from below 0.5.

- Synthetic generation. Use a stronger model to produce edge cases the production distribution under-represents. See Synthetic Test Data for LLM Evaluation for the generation patterns. Synthetic alone is biased toward the generator model’s failure modes.

- Hand-written adversarial. Red-team probes, jailbreaks, prompt injections. Smallest in count but highest in marginal value.
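The score-band sampling for production traces can be sketched as below. It assumes each trace dict carries a numeric `score` field in [0, 1]; the band table mirrors the 50/30/20 split above, and `sample_by_score_band` is a hypothetical helper name.

```python
import random

# (low, high, share of the sample); the top band's upper bound is 1.01 so that
# a score of exactly 1.0 is included.
BANDS = [
    (0.85, 1.01, 0.50),
    (0.50, 0.85, 0.30),
    (0.00, 0.50, 0.20),
]


def sample_by_score_band(traces: list[dict], n: int, seed: int = 42) -> list[dict]:
    """Draw about n traces, split across score bands instead of uniformly."""
    rng = random.Random(seed)
    picked = []
    for low, high, share in BANDS:
        pool = [t for t in traces if low <= t["score"] < high]
        k = min(len(pool), round(n * share))  # never ask for more than the band holds
        picked.extend(rng.sample(pool, k))
    return picked
```

If a band holds fewer traces than its share calls for, the sample simply comes up short in that band, which is itself a signal that the low-score slice needs synthetic or hand-written cases.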

Versioning

The eval set is a git artifact. The dataset version is pinned by SHA in the pipeline (no floating tags, no latest). Every PR that modifies the eval set goes through code review with the same rigor as agent code. Reviewers check: was the new case really a failure, or just a difficult case? Is the rubric tight enough that a passing answer is unambiguous? Does the case duplicate an existing one?

Drift detection

When the cosine distance between the production prompt-embedding centroid and the eval set centroid drifts above a threshold calibrated from your historical baseline variance, regenerate. In practice this means: compute the centroid embedding of recent production traces on a regular cadence, compare against the eval set centroid, and alert when drift exceeds your calibrated tolerance. Document the chosen threshold and rationale in the dataset manifest so reviewers can audit it. A monthly cadence is the floor; for fast-moving products, weekly.
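The drift check itself is a few lines once embeddings exist. The sketch below uses plain Python lists and assumes the embeddings were already computed by whatever model you use; `needs_refresh` is a hypothetical name and the threshold is whatever you calibrated.

```python
import math


def centroid(vectors: list[list[float]]) -> list[float]:
    """Component-wise mean of a non-empty list of equal-length vectors."""
    n = len(vectors)
    return [sum(col) / n for col in zip(*vectors)]


def cosine_distance(a: list[float], b: list[float]) -> float:
    """1 - cosine similarity; 0 means same direction, 1 means orthogonal."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / norm


def needs_refresh(
    prod_embeddings: list[list[float]],
    eval_embeddings: list[list[float]],
    threshold: float,
) -> bool:
    """True when the production centroid has drifted past the calibrated threshold."""
    drift = cosine_distance(centroid(prod_embeddings), centroid(eval_embeddings))
    return drift > threshold
```

A centroid comparison is coarse: it can miss a new intent slice that is small relative to total traffic, so it complements the per-incident additions rather than replacing them.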

Anti-leakage

Two leak paths to seal:

- Never use eval cases to calibrate the judge. Hold out 20% of the eval set as a judge-calibration set; never score the gated eval against the calibration set.

- Never include eval cases in finetuning data. If you finetune the agent or the judge, scrub the eval set out of the training data. Verify with a near-duplicate detector (MinHash or embedding-cosine).
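A near-duplicate check for the second leak path can be sketched with exact Jaccard similarity over word shingles. This is a simpler stand-in for MinHash (which approximates the same Jaccard score at scale), and it is quadratic in dataset size; the 0.8 threshold and the function names are assumptions for illustration.

```python
def shingles(text: str, k: int = 3) -> set[tuple[str, ...]]:
    """Lower-cased word k-grams; short texts fall back to a single shingle."""
    words = text.lower().split()
    if len(words) < k:
        return {tuple(words)}
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}


def jaccard(a: set, b: set) -> float:
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def leaked_eval_cases(
    eval_inputs: list[str],
    train_inputs: list[str],
    threshold: float = 0.8,
) -> list[int]:
    """Return indexes of eval inputs that near-duplicate any training input."""
    train_sets = [shingles(t) for t in train_inputs]
    leaked = []
    for i, text in enumerate(eval_inputs):
        s = shingles(text)
        if any(jaccard(s, t) >= threshold for t in train_sets):
            leaked.append(i)
    return leaked
```

Any index returned here points at a case to scrub from the training data before the finetune runs.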

Refresh cadence

- Monthly review. Score the eval set against the latest production sample; flag cases where the agent now passes that previously failed (graduate them out) and where the agent now fails that previously passed (investigate as regressions).

- Quarterly major refresh. Replace 30-50% of the normal-traffic slice with a fresh production sample.

- Per-incident addition. Every postmortem ends with at least one new eval case. The case lands in the regression suite (the 15% known-failure-mode slice).

Build code

The snippet below is illustrative pseudocode for the stratified dataset build. Sourcing from production traces is workload-specific: in practice you query exported traceAI spans (or your own trace store), apply your own redaction policy, and emit a JSONL alongside a manifest.json. The dataset is then loaded by the evaluate() calls in Stage 1 and Stage 2.

# tested 2026-05-09
"""Build a stratified eval set from anonymized prod traces (illustrative).

Replace `load_anonymized_prod_traces` with your own export from traceAI
(or your trace store). The shape below is what later eval-gate code reads.
"""
import hashlib
import json
import random
from pathlib import Path

VERSION = "v2.5"


def load_anonymized_prod_traces(cohort: str, window_days: int, n: int, **filters):
    """Workload-specific: export traceAI spans, redact PII, hash user IDs.

    Returns a list of dicts with fields: id, prompt_hash, input,
    gold_output, cohort, expected_tool_calls.
    """
    return []  # placeholder for your export pipeline


# Stratified pull from production. As a starting point: 60% normal, 25%
# edge, 15% known failure; tune to your traffic and incident history.
NORMAL = load_anonymized_prod_traces("normal", window_days=30, n=300, min_score=0.85)
EDGE = load_anonymized_prod_traces("edge", window_days=30, n=125, score_lo=0.5, score_hi=0.85)
FAILURE = load_anonymized_prod_traces(
    "known_failure", window_days=90, n=75, tags=["incident_postmortem"]
)

cases = [
    {
        "trace_id": t["id"],
        "prompt_template_hash": t["prompt_hash"],
        "input": t["input"],
        "gold_output": t["gold_output"],
        "cohort": t["cohort"],
        "expected_tool_calls": t.get("expected_tool_calls", []),
    }
    for t in NORMAL + EDGE + FAILURE
]

# Holdout 20% for judge calibration; never used as eval set.
random.seed(42)
holdout = random.sample(cases, k=int(0.2 * len(cases)))
holdout_ids = {c["trace_id"] for c in holdout}
eval_set = [c for c in cases if c["trace_id"] not in holdout_ids]

# Same layout the Stage 1 loader reads: evals/golden/<version>/eval.jsonl.
out_path = Path("evals/golden") / VERSION
out_path.mkdir(parents=True, exist_ok=True)
eval_bytes = "".join(json.dumps(c, sort_keys=True) + "\n" for c in eval_set).encode()
(out_path / "eval.jsonl").write_bytes(eval_bytes)

# Hash the dataset content rather than the manifest fields, so the SHA
# changes whenever any case changes (not only when the counts change).
manifest = {
    "version": VERSION,
    "n_eval": len(eval_set),
    "n_holdout": len(holdout),
    "sha": hashlib.sha256(eval_bytes).hexdigest()[:12],
}
(out_path / "manifest.json").write_text(json.dumps(manifest, indent=2))
print(f"built dataset {manifest['version']} sha={manifest['sha']}")

For broader dataset construction context, see What is an LLM Dataset?.

Gating math: from raw scores to a merge button

The eval gate produces a number per rubric. The pipeline turns numbers into a merge decision. The decision logic is where most teams get this wrong, in three failure modes: absolute thresholds that drift, single-judge calls that flake, and binary pass/fail that misses the yellow band.

Absolute thresholds vs delta gating

An absolute threshold says “faithfulness must be above 0.92”. A delta threshold says “faithfulness must be no worse than main-branch faithfulness, minus a buffer”. Delta gating is the recommended default in CI for three reasons:

- The judge drifts. Provider rolls a minor model revision; absolute scores shift by 0.01-0.03 globally; absolute thresholds either fire false positives or are loosened until they catch nothing.

- The eval set drifts. New stratified cases are harder; absolute scores drop on harder data; absolute thresholds need to be re-tuned per dataset version.

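- Delta thresholds bound the damage per PR. Each PR can degrade a rubric by at most the buffer, and the drop is attributed to the PR that caused it. Absolute thresholds let small drops accumulate silently until one unlucky PR finally crosses the line.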

Use absolute floors only where the workload demands them: safety must be 1.0, factuality must be above 0.95, anything below the floor is a hard block regardless of delta.

Per-dimension gates

Different rubrics get different rules:

- Safety: hard floor at 1.0. Any single-vote drop blocks. No delta gate, no yellow band.

- Factuality: absolute floor 0.95. Delta below -0.05 is a hard block; delta in [-0.05, -0.02) is borderline review; delta in [-0.02, 0.00) is yellow band.

- Helpfulness: no absolute floor (workload-dependent). Same three-band delta policy: -0.05 hard block, [-0.05, -0.02) borderline, [-0.02, 0.00) yellow band.

- Tool-call accuracy: absolute floor 0.90. Same three-band delta policy.

Aggregate via min, not weighted-mean. A weighted-mean lets a 0.10 drop on safety hide behind a 0.05 gain on helpfulness. Min-aggregation makes every rubric a veto.
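A two-rubric worked example makes the difference concrete. The weights below are hypothetical and chosen only to show how a weighted mean can come out positive while one rubric has clearly regressed.

```python
def weighted_mean(deltas: dict[str, float], weights: dict[str, float]) -> float:
    """Weighted average of per-rubric deltas (weights need not sum to 1)."""
    total = sum(weights[r] for r in deltas)
    return sum(deltas[r] * weights[r] for r in deltas) / total


def min_aggregate(deltas: dict[str, float]) -> tuple[str, float]:
    """Return the worst rubric and its delta; that rubric decides the gate."""
    worst = min(deltas, key=deltas.get)
    return worst, deltas[worst]


deltas = {"safety": -0.10, "helpfulness": 0.05}
weights = {"safety": 0.3, "helpfulness": 0.7}  # hypothetical weighting

mean_delta = weighted_mean(deltas, weights)      # slightly positive: looks like a win
worst_rubric, worst_delta = min_aggregate(deltas)  # surfaces the safety drop
```

With these numbers the weighted mean is about +0.005, so a mean-based gate would ship, while min-aggregation reports the -0.10 safety drop and blocks.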

Statistical significance

At n=200, a 95% normal-approximation half-width on a single proportion at p ~ 0.9 is about 0.04. The normal approximation is loose near p=0 or p=1; use a Wilson interval (or a bootstrap on your eval scores) for a tighter bound when the rubric pass-rate is in the 0.85-0.99 band. For a candidate-vs-baseline delta the relevant statistic is the difference of two paired proportions; prefer a paired test (McNemar, or a bootstrap on per-case score deltas) over a one-sample half-width. The math for the single-proportion half-width as a buffer-sizing estimate:

half_width = z * sqrt(p * (1 - p) / n)
           = 1.96 * sqrt(0.9 * 0.1 / 200)
           = 0.0416

This means a per-PR delta inside roughly [-0.04, 0.00) is within sampling noise on a single rubric. To make the gate robust against single-PR noise, the example below uses fail_threshold = -0.05 for hard block, treats [-0.05, -0.02) as borderline (block but allow override with reviewer sign-off plus a re-run), and treats [-0.02, 0.00) as the yellow band (require reviewer sign-off, no auto-block); anything in [0.00, +inf) is clean. These thresholds are illustrative; choose your own from observed judge / test variance on a calibration set, and prefer paired-proportion tests over a single half-width when you have enough cases. Larger n (n=500 instead of n=200) shrinks the half-width to about 0.026 and makes tighter thresholds defensible.
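For readers who want the Wilson interval and the paired test in code, here is a standard-library sketch. Both formulas are textbook; the function names are hypothetical, and the McNemar variant shown is the exact (binomial) two-sided form, which assumes per-case boolean pass/fail outcomes aligned by case.

```python
import math


def wilson_interval(passes: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a pass-rate of passes/n."""
    p = passes / n
    denom = 1 + z * z / n
    center = (p + z * z / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z * z / (4 * n * n)) / denom
    return center - half, center + half


def mcnemar_exact_p(base_pass: list[bool], cand_pass: list[bool]) -> float:
    """Two-sided exact McNemar p-value on paired per-case outcomes."""
    # Only discordant pairs carry information about a change.
    b = sum(1 for x, y in zip(base_pass, cand_pass) if x and not y)  # regressions
    c = sum(1 for x, y in zip(base_pass, cand_pass) if y and not x)  # fixes
    m = b + c
    if m == 0:
        return 1.0
    tail = sum(math.comb(m, k) for k in range(min(b, c) + 1)) / 2 ** m
    return min(1.0, 2 * tail)
```

The paired test ignores cases where candidate and baseline agree, which is why it can detect a real regression on a few dozen flipped cases that a comparison of two independent half-widths would call noise.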

Flaky-judge handling

Single judge calls flake. Re-run on judge-disagreement: vote across 3 calls per case, take the median, threshold the median. If the spread (max - min) on a case exceeds 0.10, mark the case “flaky” and require manual review on that case before the gate finalises.
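Per-case voting can be sketched as follows. The 0.10 spread limit comes from the paragraph above; the 0.5 pass threshold is borrowed from the Stage 1 example and is an assumption here, as are the function names.

```python
import statistics


def vote_case(
    scores: list[float],
    pass_threshold: float = 0.5,
    max_spread: float = 0.10,
) -> dict:
    """Combine the judge calls for one case: median decides, spread flags flakiness."""
    median = statistics.median(scores)
    spread = max(scores) - min(scores)
    return {
        "median": median,
        "passed": median >= pass_threshold,
        "flaky": spread > max_spread,
    }


def summarize(votes_by_case: dict[str, list[float]]) -> dict:
    """Pass-rate over per-case medians, plus the cases that need manual review."""
    results = {case_id: vote_case(s) for case_id, s in votes_by_case.items()}
    n = max(1, len(results))
    return {
        "pass_rate": sum(r["passed"] for r in results.values()) / n,
        "flaky_cases": sorted(cid for cid, r in results.items() if r["flaky"]),
    }
```

Note that a case with votes of 1.0, 1.0, and 0.0 passes on the median but is still flagged flaky, so it reaches a human before the gate finalises.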

Yellow band

A delta in [-0.02, +0.00) is the yellow band. Auto-fail is too aggressive (catches noise as regression); auto-pass is too lax (lets small real regressions accumulate). The right default: yellow band requires a manual reviewer sign-off in the PR. The reviewer either approves the small drop with a written rationale or asks for the regression to be addressed.

The gate function

# tested 2026-05-09
"""Gate function: returns ship | review | block based on per-rubric deltas.

`candidate` and `baseline` are simple objects exposing `votes(rubric)` ->
list[float]. Each vote is read here as one aggregate score for the rubric
from one judge pass over the eval set (for example, three pass-rates from
three passes), because the median and the max-minus-min spread computed
below are only meaningful at that level. In practice you produce them by
running the documented `evaluate()` API across the eval set and aggregating
the per-case `score` / `passed` values for each pass.
"""
import math
import statistics
from typing import Literal, Protocol


class EvalRun(Protocol):
    def votes(self, rubric: str) -> list[float]:
        ...


GateDecision = Literal["ship", "review", "block"]


def gate(
    candidate: EvalRun,
    baseline: EvalRun,
    n_per_rubric: int = 200,
) -> tuple[GateDecision, dict]:
    """Return decision and per-rubric breakdown.

    Rules:
    - safety must be exactly 1.0 on every vote (no soft fail).
    - factuality, helpfulness, tool_call_accuracy use delta gating against main.
    - statistical buffer: at n=200, single-proportion 95% half-width is ~0.04.
      Set fail_threshold beyond that band so noise does not flag as regression.
    - yellow band: delta in [-0.02, +0.00) requires manual review.
    - judge flake: vote across 3 calls, threshold the median.
    """
    # Statistical buffer: a 95% normal-approximation half-width on a proportion
    # at p~0.9, n=200 is ~0.0416. We set fail at -0.05 to clear the noise band.
    fail_threshold = -0.05
    breakdown = {}
    block = False
    review = False

    # Safety: hard block if any single vote falls below 1.0. Median voting
    # would let [1.0, 1.0, 0.0] pass with a 1.0 median, which is unsafe.
    safety_votes = candidate.votes("safety")
    safety_min = min(safety_votes) if safety_votes else 1.0
    breakdown["safety"] = {"min_vote": safety_min, "rule": "hard: every vote must be 1.0"}
    if any(v < 1.0 for v in safety_votes):
        block = True

    # Per-dimension delta gates. soft_floor=None means no absolute floor;
    # only the delta gate applies.
    for rubric, soft_floor in [
        ("factuality", 0.95),
        ("helpfulness", None),
        ("tool_call_accuracy", 0.90),
    ]:
        votes = candidate.votes(rubric)
        cand = statistics.median(votes)
        base = statistics.median(baseline.votes(rubric))
        delta = cand - base

        # Send the rubric to review if judge disagreement on the candidate
        # is too high to trust the median.
        disagreement = max(votes) - min(votes) if len(votes) >= 3 else 0.0
        flaky = disagreement > 0.10

        if flaky:
            review = True
            decision_for_rubric = "review_flaky_judge"
        elif soft_floor is not None and cand < soft_floor:
            block = True
            decision_for_rubric = "block_below_soft_floor"
        elif delta < fail_threshold:
            block = True
            decision_for_rubric = "block_delta"
        elif fail_threshold <= delta < -0.02:
            # Borderline: blocks by default, overridable with sign-off plus a re-run.
            block = True
            review = True
            decision_for_rubric = "review_borderline"
        elif -0.02 <= delta < 0.0:
            review = True
            decision_for_rubric = "review_yellow_band"
        else:
            decision_for_rubric = "ship"

        breakdown[rubric] = {
            "candidate": cand,
            "baseline": base,
            "delta": delta,
            "disagreement": disagreement,
            "decision": decision_for_rubric,
        }

    if block:
        return "block", breakdown
    if review:
        return "review", breakdown
    return "ship", breakdown


def confidence_interval_halfwidth(p: float, n: int, z: float = 1.96) -> float:
    """Normal-approximation 95% CI half-width on a proportion. Buffer-sizing only.

    The normal approximation is loose near p=0 or p=1; for rubric pass-rates
    above ~0.85 prefer a Wilson interval, McNemar's test, or a bootstrap on
    per-case paired score deltas.
    """
    return z * math.sqrt(p * (1 - p) / n)

Replay-driven regression: catching the change you didn’t know you made

Static eval sets miss one important class of regression: the kind that only appears under the production trace distribution. Replay-driven regression closes that gap by capturing real production traces, then re-scoring them on every PR.

Capture

Instrument the agent with traceAI (Apache 2.0, OTel-native). Use register() plus FITracer and decorate the agent entrypoint and any tool call with @tracer.agent / @tracer.tool / @tracer.chain. Spans are exported via the standard OpenTelemetry mechanism configured by register().

# tested 2026-05-09
"""Capture: instrument the agent with traceAI (fi_instrumentation)."""
from fi_instrumentation import register, FITracer
from fi_instrumentation.fi_types import ProjectType

trace_provider = register(
    project_type=ProjectType.OBSERVE,
    project_name="agent-prod",
)
tracer = FITracer(trace_provider.get_tracer(__name__))


@tracer.agent
def handle_request(prompt: str, tools: list) -> str:
    # production agent code
    return ""


@tracer.tool(name="search_tool", description="search over the knowledge base")
def search_tool(query: str) -> str:
    return ""

Replay is an architectural pattern, not a single SDK call. The shape below is illustrative pseudocode: pull recent stable traces from your trace store, re-run them through the candidate and baseline branches with the same documented evaluate() calls Stage 1 uses, and write a diff artifact for review. Adjust the trace count to your traffic and stability window.

# tested 2026-05-09
"""Replay (illustrative): re-score recent stable traces on every PR."""
import json
import os
from pathlib import Path

from fi.evals import evaluate


def load_stable_traces(n: int, stability_window_days: int) -> list[dict]:
    """Workload-specific export of recent traces from your trace store."""
    return []


def score_branch(traces: list[dict], branch: str) -> dict:
    """Re-run traces through the agent at `branch`; score with documented evals."""
    pass_rates: dict[str, float] = {}
    for template in ("faithfulness", "evaluate_function_calling"):
        n_pass = 0
        for t in traces:
            response = t["output"]  # in practice: re-run agent at `branch`
            r = evaluate(
                template,
                input=t["input"],
                output=response,
                context=t.get("context", ""),
                model="turing_flash",
                fi_api_key=os.environ["FI_API_KEY"],
                fi_secret_key=os.environ["FI_SECRET_KEY"],
            )
            if getattr(r, "passed", False) or getattr(r, "score", 0) >= 0.5:
                n_pass += 1
        pass_rates[template] = n_pass / max(1, len(traces))
    return pass_rates


def replay_for_pr(pr_branch: str, n: int = 200) -> dict:
    traces = load_stable_traces(n=n, stability_window_days=30)
    candidate = score_branch(traces, branch=pr_branch)
    baseline = score_branch(traces, branch="main")
    Path("artifacts/replay").mkdir(parents=True, exist_ok=True)
    Path("artifacts/replay/diffs.json").write_text(
        json.dumps({"candidate": candidate, "baseline": baseline}, indent=2)
    )
    return {
        "candidate_faithfulness": candidate["faithfulness"],
        "baseline_faithfulness": baseline["faithfulness"],
        "n": len(traces),
    }

Replay

On every PR, replay a sample of recent stable traces (e.g., on the order of a few hundred, defined by your stability window such as at least 30 days old with no incident tags) against the candidate branch. Score with the same rubric the new code will be gated on. Compare candidate-pass-rate to baseline-pass-rate.

Diff-mode review

Show the lowest-scoring 20 traces side by side: old output vs new output, old score vs new score, judge rationale on both. Reviewers approve, request changes, or escalate. The diff view turns a number (“faithfulness dropped 0.03”) into the actual cases that drove the drop, which makes the regression debuggable in minutes rather than hours.
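Selecting and rendering the diff rows is straightforward once replay has produced per-trace scores. The sketch below assumes each row is a dict with `trace_id`, `old_output`, `new_output`, `old_score`, and `new_score`; those field names and both helpers are hypothetical.

```python
def worst_traces(rows: list[dict], k: int = 20) -> list[dict]:
    """Return the k rows with the lowest candidate score, worst first."""
    return sorted(rows, key=lambda r: r["new_score"])[:k]


def render_diff(row: dict) -> str:
    """One side-by-side entry for the PR comment or review artifact."""
    delta = row["new_score"] - row["old_score"]
    return (
        f"trace={row['trace_id']} "
        f"score {row['old_score']:.2f} -> {row['new_score']:.2f} "
        f"(delta {delta:+.2f})\n"
        f"  old: {row['old_output']}\n"
        f"  new: {row['new_output']}"
    )
```

A real review artifact would also carry the judge rationale for both outputs; it is omitted here only to keep the sketch short.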

Prompt-version awareness

When the prompt template changes, the historical traces were generated under a different prompt and may produce different outputs that are still correct. Mark the prompt template hash on every trace; when the template changes, fork the regression baseline rather than treating differences as regressions. The candidate is compared against the new fork’s baseline, not the pre-change baseline.

Simulation: surfacing failures users will hit

Static eval sets and replay capture two distributions: synthetic and historical. Neither catches the failure modes that emerge in multi-turn conversations or under adversarial pressure. Simulation does.

Personas

Define 5-15 production personas covering the realistic axes of user behavior. The minimum set:

- Impatient power user: terse inputs, expects short answers, interrupts the agent’s reasoning chain.

- Confused first-timer: verbose, asks clarifying questions, low domain knowledge.

- Hostile adversarial: jailbreak attempts, prompt injection, tool misuse.

- Multilingual edge: mixed language, non-Latin script, code-switching mid-conversation.

- Long-context researcher: large attachments, follow-up threading, citation demands.

For higher-risk products add: regulated-industry user, accessibility-first user, programmatic API consumer, and underage-protected user (where the agent must verify and refuse).

Conversation depth

3-7 turns per persona. At each turn, branch into 2-3 plausible follow-up paths. A 5-turn persona with branching factor 3 produces 243 distinct conversation trajectories per persona. With 10 personas, that is 2,430 trajectories per simulation run. The branching factor is what makes simulation catch failures that single-turn eval sets cannot.
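The trajectory count is simply the branching factor raised to the number of turns. A short sketch that enumerates the paths (as tuples of branch indexes) and reproduces the arithmetic above; both function names are hypothetical.

```python
from itertools import product


def enumerate_trajectories(turns: int, branching: int) -> list[tuple[int, ...]]:
    """Every path through the conversation tree, one branch index per turn."""
    return list(product(range(branching), repeat=turns))


def trajectories_per_run(personas: int, turns: int, branching: int) -> int:
    """Total trajectories when every persona explores the full tree."""
    return personas * branching ** turns


paths = enumerate_trajectories(turns=5, branching=3)
total = trajectories_per_run(personas=10, turns=5, branching=3)
```

Because the count grows exponentially with depth, a 7-turn persona at the same branching factor already yields 2,187 trajectories, which is the main driver of the 10-60 minute Stage 3 budget.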

Adversarial corpus

Three sources:

- PortSwigger LLM attack labs: prompt injection, indirect injection, output-handling injection.

- OWASP LLM Top 10 2025: data and model poisoning, supply-chain vulnerabilities, unbounded consumption.

- Jailbreak templates: DAN-family, roleplay-injection, encoded-payload, character-swap, language-pivot.

Run 25 probes per persona per category. With 10 personas and the three corpus sources above, that totals 25 x 10 x 3 = 750 adversarial probes per simulation run.

Pass criteria

- Persona objectives met at >= 85% of trajectories.

- Zero guardrail trips on the safety-critical persona set.

- Zero unsafe tool calls (any destructive write path called without confirmation is a hard fail).

- p95 latency budget met (typically 2.5s end-to-end).
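The four criteria above can be expressed as a small local check. This is a sketch under stated assumptions: `SimulationSummary` and `gate_failures` are hypothetical names, and the summary fields would have to be filled from whatever your simulation run reports.

```python
from dataclasses import dataclass


@dataclass
class SimulationSummary:
    trajectories: int            # total trajectories run
    objectives_met: int          # trajectories where the persona objective was met
    safety_guardrail_trips: int  # trips on the safety-critical persona set
    unsafe_tool_calls: int       # destructive writes called without confirmation
    p95_latency_s: float         # end-to-end p95 latency in seconds


def gate_failures(s: SimulationSummary,
                  min_objective_rate: float = 0.85,
                  p95_budget_s: float = 2.5) -> list:
    """Return the violated criteria; an empty list means the gate passes."""
    failures = []
    rate = s.objectives_met / s.trajectories if s.trajectories else 0.0
    if rate < min_objective_rate:
        failures.append(f"objective rate {rate:.1%} is below {min_objective_rate:.0%}")
    if s.safety_guardrail_trips > 0:
        failures.append(f"{s.safety_guardrail_trips} guardrail trips on safety-critical personas")
    if s.unsafe_tool_calls > 0:
        failures.append(f"{s.unsafe_tool_calls} unsafe tool calls")
    if s.p95_latency_s > p95_budget_s:
        failures.append(f"p95 latency {s.p95_latency_s:.2f}s exceeds {p95_budget_s:.2f}s")
    return failures
```

In CI, a non-empty list would map to a non-zero exit code so the job fails with the reasons printed.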

Why FAGI Simulation

FutureAGI is strongest when you want persona-driven simulation wired directly into CI, with trace capture and eval scoring in the same workflow. The simulation SDK (fi.simulate.TestRunner) drives a simulation that you create in the UI through your agent callback; results land back in the FAGI dashboard. The alternative is gluing together LangGraph for branching, a custom adversarial corpus, and a separate pass-criteria checker, then wiring eval scoring on top.

The example below uses the documented SDK. Personas, branching, and adversarial corpora are configured in the FAGI UI and referenced by simulation name; the Python SDK wires the agent callback.

# tested 2026-05-09
"""Persona-driven simulation as a CI gate (FAGI agent-simulate SDK).

Pre-create the simulation in the FAGI UI (with personas, branching factor,
and adversarial sources). Reference it here by `run_test_name`.
"""
import asyncio
import os
from typing import Union

from fi.simulate import TestRunner, AgentInput, AgentResponse, AgentWrapper


class RouterAgent(AgentWrapper):
    async def call(self, input: AgentInput) -> Union[str, AgentResponse]:
        user_text = (input.new_message or {}).get("content", "") or ""
        # Route into the candidate branch's agent; in CI the branch SHA is
        # supplied via env var so the agent loads the candidate prompts.
        return f"response for {user_text}"


async def main() -> None:
    runner = TestRunner(
        api_key=os.environ["FI_API_KEY"],
        secret_key=os.environ["FI_SECRET_KEY"],
    )
    await runner.run_test(
        run_test_name="agent-router-pr-gate",  # matches UI simulation name
        agent_callback=RouterAgent(),
        concurrency=5,
    )
    print("Simulation complete; pass/fail criteria are evaluated in the UI.")


if __name__ == "__main__":
    asyncio.run(main())

The agent-simulate package ships the SDK; install with pip install agent-simulate litellm. Pass criteria (persona objective rate, guardrail trip rate, unsafe-tool-call count, p95 latency budget) are configured per simulation in the FAGI UI; the dashboard surfaces pass/fail.

Canary: shipping at 1% before shipping at 100%

The canary is the runtime equivalent of the offline eval gate. Stage 2 catches regressions on the eval set distribution; the canary catches everything else.

Traffic split

Size the progressive rollout by request volume and minimum detectable effect; the table below is illustrative, not a universal recipe. Tune the holds to your traffic and required statistical power.

| Step | Weight | Hold duration (illustrative) | Cumulative time |
|---|---|---|---|
| 1 | 1% | 30 minutes | 0:30 |
| 2 | 5% | 1 hour | 1:30 |
| 3 | 25% | 4 hours | 5:30 |
| 4 | 50% | 6 hours | 11:30 |
| 5 | 100% | overnight soak before declaring stable | 23:30+ |

The 1% step exists to catch the catastrophic regressions: the prompt that returns empty strings, the schema that breaks JSON parsing, the new tool that crashes a high fraction of calls. The 5% step catches the next tier: regressions in roughly 1-in-100 cases. The 25% step is sized so the canary accumulates enough request volume to detect regressions in the long tail; how many requests that takes depends on traffic volume and minimum detectable effect size, so configure the hold by request count, not just elapsed time. As an example, you might require the canary cohort to log a minimum number of requests before promoting past 25%; pick the floor from a power calculation against your target effect size.
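One way to pick that floor is the standard two-proportion sample-size approximation. The sketch below assumes a one-sided test, equal-sized comparison windows for canary and baseline, and a pass-rate style metric. The function name and the default alpha and power are illustrative choices, not values from this guide.

```python
import math
from statistics import NormalDist


def min_requests_per_arm(baseline_rate: float,
                         min_detectable_drop: float,
                         alpha: float = 0.05,
                         power: float = 0.8) -> int:
    """Requests needed in each arm to detect a drop in a pass rate."""
    p1 = baseline_rate
    p2 = baseline_rate - min_detectable_drop
    if not 0 < p2 < p1 < 1:
        raise ValueError("need 0 < baseline_rate - drop < baseline_rate < 1")
    z_alpha = NormalDist().inv_cdf(1 - alpha)  # one-sided significance level
    z_beta = NormalDist().inv_cdf(power)
    p_bar = (p1 + p2) / 2
    numerator = (
        z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
        + z_beta * math.sqrt(p1 * (1 - p1) + p2 * (1 - p2))
    ) ** 2
    return math.ceil(numerator / (p1 - p2) ** 2)


# Example: 95% baseline pass rate, detect a 2-point drop.
floor = min_requests_per_arm(0.95, 0.02)
```

Smaller detectable effects need many more requests, which is why the hold is better expressed as a request count than as elapsed time.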

Online eval pass

For inline guardrail screening on every canary request, use the documented Guardrails module with fast local scanners (sub-10ms) plus selective model-backed scanners. Cloud Turing eval templates (turing_flash ~1-2s, turing_small ~2-3s, turing_large ~3-5s) are documented per-tier; run them async on a sample of canary traces with results aggregated on a 5-minute rolling window. The StreamingEvaluator is the documented SDK surface for online eval over a stream of records.

# tested 2026-05-09
"""Online eval on canary traffic: local guardrail scanners inline, cloud
Turing eval templates async on a sample, all spans captured by traceAI."""
import os
import random
from concurrent.futures import ThreadPoolExecutor

from fi_instrumentation import register, FITracer
from fi_instrumentation.fi_types import ProjectType
from fi.evals import evaluate
from fi.evals.guardrails import Guardrails, GuardrailsConfig, GuardrailModel
from fi.evals.guardrails.scanners import (
    create_default_pipeline,
    PromptInjectionScanner,
)

trace_provider = register(
    project_type=ProjectType.OBSERVE,
    project_name="agent-canary",
)
tracer = FITracer(trace_provider.get_tracer(__name__))

# Inline guardrail screen: local scanners (sub-10ms) plus a model-backed
# scanner where the workload demands it. Configure your own pipeline.
guard = Guardrails(
    config=GuardrailsConfig(models=[GuardrailModel.TURING_FLASH]),
    scanners=create_default_pipeline() + [PromptInjectionScanner()],
)

# handle() is synchronous and evaluate() blocks, so sampled scoring runs on a
# small worker pool rather than on an event loop that may not be running.
_eval_pool = ThreadPoolExecutor(max_workers=4)


@tracer.agent
def handle(prompt: str, tools: list, cohort: str) -> str:
    screen = guard.screen_input(prompt)
    if not screen.passed:
        return "blocked"
    response = run_agent(prompt, tools)
    # Background cloud-eval sample: documented evals run at roughly 1-2s on
    # turing_flash, so we fire-and-forget on a sample and aggregate in
    # Prometheus / the FAGI UI.
    if random.random() < 0.10:
        _eval_pool.submit(_score_sample, prompt, response, cohort)
    return response


def _score_sample(prompt: str, response: str, cohort: str) -> None:
    # Tag the resulting scores with `cohort` when exporting metrics.
    for template in ("faithfulness", "evaluate_function_calling"):
        evaluate(
            template,
            input=prompt,
            output=response,
            model="turing_flash",
            fi_api_key=os.environ["FI_API_KEY"],
            fi_secret_key=os.environ["FI_SECRET_KEY"],
        )


def run_agent(prompt: str, tools: list) -> str:
    # Placeholder for the real agent invocation.
    return ""


# Rollback policy: example values. Tune to your calibration set and
# observed judge / test variance; document the rationale alongside each
# threshold.
ROLLBACK_TRIGGERS = {
    "kappa_drop": 0.05,  # illustrative, workload-specific
    "guardrail_trip_multiplier": 2.0,
    "p95_latency_multiplier": 1.3,
    "cost_per_request_multiplier": 1.2,
}

Rollback triggers

Four metrics, each with a 5-minute rolling window. Thresholds below are illustrative; set kappa thresholds and ratios from your calibration set and annotate them as workload-specific.

- Kappa drop (illustrative threshold ~0.05): agent-judge agreement against the baseline calibration set falls. Landis & Koch (1977) provide qualitative agreement bands, not CI/CD rollback thresholds; pick the gate from your own calibration variance.

- Guardrail trip rate (illustrative > 2x baseline): the agent is generating unsafe outputs that the guardrails are catching, or new content categories are tripping existing guardrails.

- p95 latency (illustrative > 1.3x baseline): the new prompt or new model setting added significant inference time.

- Cost per request (illustrative > 1.2x baseline): the new agent is calling more tools, generating more tokens, or selecting a more expensive model.

If any single trigger fires, auto-rollback. If two triggers reach half of their fail level without any of them firing, page the on-call but do not auto-rollback.
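A sketch of that decision rule. It assumes "half of the fail level" means half of the allowed excess over the neutral value (for example 1.15x for a 1.3x latency limit, or a 0.025 kappa drop for a 0.05 limit); that reading, and the names below, are assumptions rather than a documented policy.

```python
FAIL = {
    "kappa_drop": 0.05,
    "guardrail_trip_multiplier": 2.0,
    "p95_latency_multiplier": 1.3,
    "cost_per_request_multiplier": 1.2,
}
# Value each metric takes when canary and baseline behave identically.
NEUTRAL = {
    "kappa_drop": 0.0,
    "guardrail_trip_multiplier": 1.0,
    "p95_latency_multiplier": 1.0,
    "cost_per_request_multiplier": 1.0,
}


def decide(observed: dict) -> str:
    """Return 'rollback', 'page', or 'continue' for one 5-minute window."""
    if any(observed[name] > FAIL[name] for name in FAIL):
        return "rollback"
    half_level = {n: NEUTRAL[n] + (FAIL[n] - NEUTRAL[n]) / 2 for n in FAIL}
    warnings = sum(observed[n] >= half_level[n] for n in FAIL)
    return "page" if warnings >= 2 else "continue"
```

Keeping the paging rule separate from the rollback rule lets the on-call see correlated soft degradation without the pipeline reverting on noise.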

Cohort-scoped rollback

Production traffic is rarely uniform. When only the enterprise cohort regresses (because enterprise users have larger contexts, stricter compliance requirements, or a different prompt-template variant), freeze enterprise traffic to the incumbent but keep self-serve at canary. This requires the gateway to support cohort-keyed routing, and the eval pipeline to score per-cohort metrics.

Dual-write feature flags

Ship the new prompt template under a feature flag, not as a deploy. The flag flip is atomic and reversible in milliseconds; a deploy rollback takes minutes. Combine with cohort-scoped routing: flag on for canary cohort, off for everyone else, flip the flag to ramp.
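A minimal sketch of cohort-keyed flag routing, assuming a hypothetical flag store shaped as a dict of per-cohort rules. The hashing scheme and field names are illustrative; a real gateway or flag service would supply its own.

```python
import hashlib


def bucket(user_id: str) -> float:
    """Deterministic value in [0, 100) so a user stays in the same arm."""
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") / 2**64 * 100


def prompt_version(user_id: str, cohort: str, flags: dict) -> str:
    """Pick the prompt version for one request from the cohort's flag rule."""
    rule = flags.get(cohort, {"enabled": False, "weight": 0.0})
    if rule["enabled"] and bucket(user_id) < rule["weight"]:
        return "candidate"
    return "incumbent"


# Enterprise is frozen to the incumbent; self-serve stays on a 5% canary.
flags = {
    "enterprise": {"enabled": False, "weight": 5.0},
    "self-serve": {"enabled": True, "weight": 5.0},
}
```

Ramping is then a change to `weight`, and a cohort-scoped rollback is a change to `enabled`, neither of which needs a deploy.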

Canary YAML

# tested 2026-05-09
# Canary deploy on K8s + LangServe + FAGI traceAI.
# ${GIT_SHA} and ${PROMPT_SHA} must be substituted before apply, for example:
#   envsubst < canary.yml | kubectl apply -f -
apiVersion: argoproj.io/v1alpha1
kind: Rollout
metadata:
  name: agent-router
  namespace: agents
spec:
  replicas: 20
  strategy:
    canary:
      # Without trafficRouting, Argo Rollouts approximates setWeight by
      # replica ratio (1 of 20 pods is 5%); a true 1% step needs a traffic
      # router (service mesh or ingress).
      maxSurge: 25%
      maxUnavailable: 0
      steps:
        - setWeight: 1
        - pause: { duration: 30m }
        - setWeight: 5
        - pause: { duration: 1h }
        - setWeight: 25
        - pause: { duration: 4h }
        - setWeight: 100
      analysis:
        templates:
          - templateName: fagi-online-eval
        startingStep: 1
        args:
          - name: cohort
            value: canary
          - name: judge-model
            value: turing_flash
  selector:
    matchLabels:
      app: agent-router
  template:
    metadata:
      labels:
        app: agent-router
    spec:
      containers:
        - name: router
          image: registry.internal/agent-router:${GIT_SHA}
          env:
            - name: PROMPT_VERSION_SHA
              value: "${PROMPT_SHA}"
            - name: MODEL_PIN
              value: gpt-5-2025-08-07
            - name: FI_API_KEY
              valueFrom:
                secretKeyRef:
                  name: fagi
                  key: api_key
            - name: FI_SECRET_KEY
              valueFrom:
                secretKeyRef:
                  name: fagi
                  key: secret_key
            # /v1/traces is the OTLP/HTTP traces route, so use the HTTP
            # protocol and the per-signal endpoint variable.
            - name: OTEL_EXPORTER_OTLP_PROTOCOL
              value: http/protobuf
            - name: OTEL_EXPORTER_OTLP_TRACES_ENDPOINT
              value: https://otel.fagi.example/v1/traces
          resources:
            requests:
              cpu: 500m
              memory: 1Gi
            limits:
              cpu: 2000m
              memory: 4Gi
---
apiVersion: argoproj.io/v1alpha1
kind: AnalysisTemplate
metadata:
  name: fagi-online-eval
  namespace: agents
spec:
  args:
    - name: cohort
    - name: judge-model
  metrics:
    # failureLimit: 0 fails the analysis on the first failed measurement,
    # matching the single-trigger rollback policy.
    - name: kappa-drop
      interval: 5m
      # fagi_kappa_drop = max(0, baseline_kappa - candidate_kappa); always non-negative.
      # Rollback fires when the drop exceeds 0.05.
      successCondition: result[0] <= 0.05
      failureLimit: 0
      provider:
        prometheus:
          address: http://prometheus.monitoring:9090
          query: |
            max_over_time(fagi_kappa_drop{cohort="{{args.cohort}}"}[5m])
    - name: guardrail-trip-rate
      interval: 5m
      successCondition: result[0] <= 2.0
      failureLimit: 0
      provider:
        prometheus:
          address: http://prometheus.monitoring:9090
          # Trips are normalised per request so the ratio stays meaningful
          # while the canary carries a small share of traffic.
          query: |
            (sum(rate(fagi_guardrail_trip_total{cohort="{{args.cohort}}"}[5m])) / sum(rate(fagi_request_total{cohort="{{args.cohort}}"}[5m])))
            / (sum(rate(fagi_guardrail_trip_total{cohort="baseline"}[5m])) / sum(rate(fagi_request_total{cohort="baseline"}[5m])))
    - name: p95-latency-multiplier
      interval: 5m
      successCondition: result[0] <= 1.3
      failureLimit: 0
      provider:
        prometheus:
          address: http://prometheus.monitoring:9090
          query: |
            (histogram_quantile(0.95, sum(rate(fagi_request_duration_ms_bucket{cohort="{{args.cohort}}"}[5m])) by (le))
            / histogram_quantile(0.95, sum(rate(fagi_request_duration_ms_bucket{cohort="baseline"}[5m])) by (le)))
    - name: cost-per-request-multiplier
      interval: 5m
      successCondition: result[0] <= 1.2
      failureLimit: 0
      provider:
        prometheus:
          address: http://prometheus.monitoring:9090
          query: |
            (sum(rate(fagi_request_cost_usd_total{cohort="{{args.cohort}}"}[5m])) / sum(rate(fagi_request_total{cohort="{{args.cohort}}"}[5m])))
            / (sum(rate(fagi_request_cost_usd_total{cohort="baseline"}[5m])) / sum(rate(fagi_request_total{cohort="baseline"}[5m])))

For deeper deployment patterns, see LLM Deployment Best Practices in 2026. For the rollout-time monitoring setup, see Production LLM Monitoring Checklist.

Reproducibility: when “the model changed” is your bug

The hardest bug to debug in an agent is the one where nothing in your codebase changed and the agent still regressed. The cause is usually upstream: the provider rolled the model, your judge model drifted, your tool server upgraded a dependency. Reproducibility is the discipline of pinning every variable so that “nothing changed” actually means nothing changed.

Pin model versions

Never use floating tags in CI. gpt-5 will silently move under you; the dated snapshot gpt-5-2025-08-07 will not. The same applies to claude-sonnet-4 vs claude-sonnet-4-20250514. Pin the exact dated revision in the agent config, the judge config, and the eval config. Re-pinning is a code review.
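A small lint for floating tags can run in Stage 1. This sketch assumes pinned names end in a date, either `YYYY-MM-DD` or `YYYYMMDD`, as in the two examples above; providers that version differently would need their own pattern or an allowlist.

```python
import re

# Matches a trailing date such as -2025-08-07 or -20250514.
_DATED_SUFFIX = re.compile(r"-(\d{4}-\d{2}-\d{2}|\d{8})$")


def is_pinned(model: str) -> bool:
    """True when the model name ends in a dated revision."""
    return bool(_DATED_SUFFIX.search(model))


def unpinned(config: dict) -> list:
    """Return the config keys whose model value is a floating tag."""
    return sorted(key for key, model in config.items() if not is_pinned(model))


offenders = unpinned({
    "agent_model": "gpt-5-2025-08-07",
    "fallback_model": "claude-sonnet-4",
})
```

Here `offenders` contains only `fallback_model`, and a CI step would fail on any non-empty result.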

Pin eval sets by SHA

Compute the SHA-256 of the eval set’s manifest.json (which itself includes the SHA of the cases JSONL). Store the SHA in the pipeline config. Fail the eval if the SHA loaded does not match the SHA expected. This catches accidental dataset drift, partial uploads, and dataset-tampering between PR and merge.
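The check itself is a few lines of standard-library Python. The function names and the error message are illustrative; the expected SHA would come from your pipeline config.

```python
import hashlib


def sha256_file(path: str) -> str:
    """Stream the file through SHA-256 and return the hex digest."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(1 << 16), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_eval_set(manifest_path: str, expected_sha: str) -> None:
    """Raise if the manifest on disk is not the one the pipeline pinned."""
    actual = sha256_file(manifest_path)
    if actual != expected_sha:
        raise RuntimeError(
            f"eval set mismatch: expected {expected_sha}, loaded {actual}"
        )
```

Calling `verify_eval_set` before the first eval case runs turns silent dataset drift into an immediate, explainable failure.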

Pin judge model and temperature

Judges with temperature > 0 are non-deterministic. Pin temperature=0 for all eval-time judge calls. Pin the judge model name (e.g., turing_flash); when the provider exposes dated revisions, pin the dated revision. Run the judge against the calibration set weekly to detect drift.

Pin tool schemas and tool-server versions

Tool servers are dependencies. A tool server that returns slightly different field names, or that adds a new optional field with a default, will change agent behavior even if no agent-side code changed. Snapshot the tool-server version in the agent config; CI fails if a tool dep version drifts. Track tool-server changes through the same review process as agent code.

SBOM for prompts

Every deployed prompt has a hash. The hash is logged on every traceAI span via the prompt.template_sha attribute. When you query traces and ask “which prompt version produced this”, the answer is one query away. The SBOM list is generated at deploy time: which prompts are live, on which routes, with which model pins, since when.

The “model changed silently” failure mode

Provider rolls a minor revision. The model fingerprint changes, but the version string does not (or you missed the deprecation notice). Calibration drifts. Rubric thresholds that were tuned for the old fingerprint now fire false positives or miss real regressions.

Mitigation: weekly model-fingerprint check. Every Monday, run the calibration set against the pinned model version. Compare the kappa to last week’s kappa. If kappa drops more than your alerting threshold (set from your calibration variance; an illustrative value is around 0.03), page on-call: either the provider rolled the model or the calibration set drifted. Either way, investigate before the next prod deploy.
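The weekly check reduces to computing Cohen's kappa between human labels and judge labels on the calibration set, then comparing week over week. A minimal sketch, with an illustrative 0.03 threshold and hypothetical function names:

```python
from collections import Counter


def cohens_kappa(labels_a: list, labels_b: list) -> float:
    """Cohen's kappa for two equal-length lists of categorical labels."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("label lists must be non-empty and the same length")
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    counts_a, counts_b = Counter(labels_a), Counter(labels_b)
    expected = sum(counts_a[label] * counts_b[label] for label in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used a single identical label
    return (observed - expected) / (1 - expected)


def drifted(last_week_kappa: float, this_week_kappa: float,
            threshold: float = 0.03) -> bool:
    """True when kappa fell by more than the alerting threshold."""
    return (last_week_kappa - this_week_kappa) > threshold
```

For example, human labels `["pass", "pass", "fail", "fail"]` against judge labels `["pass", "fail", "fail", "fail"]` agree on 3 of 4 cases with 0.5 expected by chance, giving a kappa of 0.5.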

For the related rubric calibration discipline, see Custom LLM Eval Metrics Best Practices.

What goes wrong (and what to do)

Six concrete failure scenarios. For every scenario: detection signal, mitigation, and the on-call playbook step that lands the fix without making it worse.

a. Eval-set leakage

Detection signal. Eval scores plateau at 1.0 across rubrics. The agent looks “perfect” on eval and middling in production. New eval cases score lower than old cases at the same difficulty.

Root cause. The eval set has been used (directly or indirectly) as training or calibration data. Either someone fine-tuned the agent on the eval set by accident, or the judge has been calibrated on the eval set, or the prompt iteration loop included the eval set in its examples.

Mitigation.

- Regenerate the eval set quarterly with fresh synthetic data and fresh production samples. Verify near-duplicate cases against the previous version (MinHash or embedding cosine).

- Hold out 20% of the eval set as a never-touched calibration set; use that for judge calibration.

- Audit any fine-tuning data with a near-duplicate detector against the eval set; remove leaks.

On-call step. Pull the new eval set v(N+1) from the synthetic-generation pipeline; verify zero overlap with v(N); re-baseline the gate against v(N+1).
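A near-duplicate check between v(N) and v(N+1) can start as exact Jaccard similarity over word shingles. This is a simpler stand-in for MinHash or embedding cosine, suitable for eval sets of a few hundred rows; the 0.8 threshold and function names are illustrative.

```python
def shingles(text: str, k: int = 3) -> set:
    """Set of k-word windows from the lowercased text."""
    words = text.lower().split()
    if len(words) < k:
        return {" ".join(words)}
    return {" ".join(words[i:i + k]) for i in range(len(words) - k + 1)}


def jaccard(a: str, b: str) -> float:
    """Jaccard similarity of the two texts' shingle sets."""
    set_a, set_b = shingles(a), shingles(b)
    union = set_a | set_b
    return len(set_a & set_b) / len(union) if union else 0.0


def leaked_cases(new_cases: list, old_cases: list, threshold: float = 0.8) -> list:
    """Pairs (new, old) that are similar enough to count as overlap."""
    return [
        (new, old)
        for new in new_cases
        for old in old_cases
        if jaccard(new, old) >= threshold
    ]
```

The pairwise loop is quadratic, so larger sets are where MinHash or an embedding index becomes worth the extra machinery.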

b. Flaky judge

Detection signal. The same input produces different rubric scores on re-run. Rerun-disagreement above 5%.

Root cause. Judge temperature > 0, judge model is too small for the rubric complexity, or the rubric is ambiguous and reasonable judges disagree.

Mitigation.

- Pin temperature=0 on every judge call.

- Vote-3: run the judge three times, take the median, threshold the median.

- Tighten the rubric. Rubrics that humans cannot agree on are not gateable; rewrite the rubric until inter-annotator agreement clears the threshold you have picked for that workload (Landis & Koch (1977) provide qualitative bands such as “substantial” agreement around kappa 0.6-0.8 as guidance, not a CI/CD threshold).

On-call step. Suppress the flaky rubric from the gate, leaving it as a warning; open a ticket to tighten the rubric or upgrade the judge model.
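Vote-3 is small enough to sketch directly. The `judge` argument is a hypothetical callable that returns a numeric rubric score for a case; swap in your own judge call.

```python
from statistics import median


def vote3(judge, case, threshold: float, runs: int = 3) -> tuple:
    """Score the case `runs` times and gate on the median score."""
    scores = [judge(case) for _ in range(runs)]
    mid = median(scores)
    return mid, mid >= threshold
```

With scores of 0.9, 0.2, and 0.8, the median is 0.8, so a single outlier run does not flip a 0.7 gate.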

c. Cost runaway in canary

Detection signal. Tokens-per-trace alert fires at 1.2x baseline. Cost-per-request multiplier exceeds 1.2x in the rollback-trigger panel.

Root cause. New agent calls 10x more tools (a routing change made every request fan out), or the new prompt is 3x longer, or the new model is more expensive per token.

Mitigation.

- Hard tool-budget limit per trace. Agent cannot call more than 10 tools per request; if it tries, the call is denied and the agent must respond.

- Auto-rollback on cost-per-request multiplier > 1.2x.

- Token-budget regression test in Stage 1 catches the prompt-length jump before merge.

On-call step. Auto-rollback fires; on-call confirms; opens a ticket to identify which sub-feature drove the fanout.
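The hard tool-budget limit can be enforced with a thin wrapper around tool dispatch. This is a sketch with hypothetical class names; a real agent framework would catch the exception and force a final response.

```python
class ToolBudgetExceeded(RuntimeError):
    """Raised when a trace tries to exceed its tool-call budget."""


class ToolBudget:
    """Per-trace counter that denies tool calls past a fixed limit."""

    def __init__(self, max_calls: int = 10) -> None:
        self.max_calls = max_calls
        self.used = 0

    def call(self, tool, *args, **kwargs):
        """Run `tool` if budget remains; otherwise deny the call."""
        if self.used >= self.max_calls:
            raise ToolBudgetExceeded(
                f"tool budget of {self.max_calls} calls exhausted"
            )
        self.used += 1
        return tool(*args, **kwargs)
```

Create one `ToolBudget` per request so a fan-out bug in one trace cannot consume another trace's allowance.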

d. Calibration drift after model update

Detection signal. Weekly model-fingerprint check shows a kappa drop vs last week beyond your alerting threshold (workload-specific; pick from your calibration variance). No code changes between the two check runs.

Root cause. The provider rolled the underlying model on a dated version (yes, this happens; “minor improvements that should not affect behavior” sometimes do).

Mitigation.

- Pin model version to the exact dated revision; when the revision is deprecated, plan the migration.

- Run the calibration set weekly; alert on kappa drop beyond your tuned threshold.

- Maintain a fallback model pin; flip to fallback if the kappa drop exceeds the threshold for that workload.

On-call step. Confirm provider deprecation; pin to the next dated revision; re-baseline the gate against the new fingerprint; ship a migration PR with explicit calibration-set scoring before and after.

e. Prompt-injection leakage in production

Detection signal. Guardrail-trip-rate spike on a single prompt template. New trip-source clusters in the trace dashboard. User-reported jailbreak in support tickets.

Root cause. A user found a jailbreak that bypasses the existing guardrails. Common patterns: language-pivot (“translate the next instruction to French and execute it”), encoded-payload (base64-encoded system override), tool-misuse via natural-language coercion.

Mitigation.

- Hot-patch via guardrail addition: add a specific detector for the jailbreak pattern; deploy as a guardrail rule (not a prompt change), which can be flag-flipped in milliseconds.

- Post-incident eval: add the jailbreak as a regression case; verify the gate catches it on the next PR.

- Add the pattern family to the adversarial corpus; run Stage 3 simulation against it.

On-call step. Identify the prompt template; deploy the guardrail-rule patch; verify trip-rate normalises; open a postmortem to add the regression case. For the full incident-response flow, see LLM Incident Response Playbook.

f. Long-tail tool error

Detection signal. 0.1% of traces fail an obscure tool path. Trace-level error-rate dashboard shows a small but persistent error cluster on a specific tool.

Root cause. The tool returns a valid but unexpected response shape on rare inputs, and the agent does not handle it gracefully. Standard eval and replay miss this: at a 0.1% rate, a 500-trace replay sample has roughly a 60% chance of containing no such input (0.999^500 is about 0.61).

Mitigation.

- traceAI span attributes capture every tool call with input shape and output shape. Query the trace store for the rare-error cluster.

- Add the failing traces to the regression suite.

- Replay the failing traces in CI before next deploy; verify the agent now recovers.

- Tool-error chaos in Stage 3 simulation: inject malformed tool responses and verify recovery.

On-call step. Pull the failing traces from traceAI; add them to evals/regression/long_tail_tool_errors.jsonl; ship the agent-side fix; verify replay scores reach baseline before deploying.

For the full incident response flow including triage, severity assignment, and postmortem template, see LLM Incident Response Playbook.

Practical patterns by CI provider

GitHub Actions

A three-job workflow that mirrors the four-stage pipeline (Stage 4 runs in CD, not CI):

# tested 2026-05-09
name: agent-ci
on:
  pull_request:
    paths:
      - prompts/**
      - agents/**
      - tools/**
      - evals/**
jobs:
  stage-1-fast-checks:
    runs-on: ubuntu-latest
    timeout-minutes: 3
    steps:
      - uses: actions/checkout@v4
      - uses: actions/setup-python@v5
        with:
          python-version: "3.12"
      - run: pip install -r requirements-ci.txt
      - name: prompt + tool schema lint
        # Use ajv (TS) or jsonschema (Python) for schema validation; this
        # repo ships a small lint script that wraps both.
        run: python ci/lint_prompts_and_tools.py prompts/ tools/
      - name: deterministic mini eval
        env:
          FI_API_KEY: ${{ secrets.FI_API_KEY }}
          FI_SECRET_KEY: ${{ secrets.FI_SECRET_KEY }}
        run: python ci/eval_gate.py --stage fast --max-cases 50 --budget-seconds 90
  stage-2-integration-eval:
    runs-on: ubuntu-latest
    needs: stage-1-fast-checks
    timeout-minutes: 20
    steps:
      - uses: actions/checkout@v4
      - uses: actions/setup-python@v5
        with:
          python-version: "3.12"
      - run: pip install -r requirements-ci.txt
      - name: integration eval
        env:
          FI_API_KEY: ${{ secrets.FI_API_KEY }}
          FI_SECRET_KEY: ${{ secrets.FI_SECRET_KEY }}
        run: python ci/eval_gate.py --stage integration --dataset-version v2.5
  stage-3-simulation:
    runs-on: ubuntu-latest
    needs: stage-2-integration-eval
    if: ${{ contains(github.event.pull_request.labels.*.name, 'high-risk') }}
    timeout-minutes: 60
    steps:
      - uses: actions/checkout@v4
      - uses: actions/setup-python@v5
        with:
          python-version: "3.12"
      - run: pip install -r requirements-ci.txt
      - name: persona-driven simulation
        env:
          FI_API_KEY: ${{ secrets.FI_API_KEY }}
          FI_SECRET_KEY: ${{ secrets.FI_SECRET_KEY }}
        run: python ci/simulation.py

GitLab CI

stages:
  - fast
  - integration
  - simulation

stage-1-fast:
  stage: fast
  timeout: 3m
  script:
    - pip install -r requirements-ci.txt
    - python ci/lint_prompts_and_tools.py prompts/ tools/
    - python ci/eval_gate.py --stage fast --max-cases 50 --budget-seconds 90

stage-2-integration:
  stage: integration
  timeout: 20m
  needs: [stage-1-fast]
  script:
    - pip install -r requirements-ci.txt
    - python ci/eval_gate.py --stage integration --dataset-version v2.5
  artifacts:
    paths:
      - artifacts/eval_report.json
    reports:
      junit: artifacts/eval_report.xml

stage-3-simulation:
  stage: simulation
  timeout: 60m
  needs: [stage-2-integration]
  rules:
    - if: '$CI_MERGE_REQUEST_LABELS =~ /high-risk/'
  script:
    - pip install -r requirements-ci.txt
    - python ci/simulation.py

Custom orchestration

For monorepo agents at scale, orchestration on top of Argo Workflows or Tekton is common. The integration eval parallelises across the dataset (one task per row), and a reducer step aggregates the per-rubric pass rates. With enough parallelism, total runtime can stay under 15 minutes even on 500-row datasets.

Common mistakes when wiring CI/CD for agents

- No eval gate. Lint and unit tests pass; semantic regressions slip through. The integration eval is the floor.

- Eval set that does not match production traffic. A 200-row dataset of clean queries does not catch failures on the 5% of weird queries production sees. Stratify; use production traces; refresh quarterly.

- Single threshold across all rubrics. Different rubrics have different noise floors; one threshold over-rejects on noisy rubrics and under-detects on stable ones.

- Absolute thresholds instead of delta gating. Absolute thresholds drift with the judge and the dataset; delta gating tracks regression vs main and stays stable.

- Binary pass/fail with no yellow band. Auto-fail at small drops catches noise; auto-pass lets small real regressions accumulate. Yellow band gets a manual reviewer.

- Single judge call. Vote-3, threshold the median; without it, judge flake produces phantom regressions.

- No regression suite. Past incidents are forgotten. The team rediscovers known failures.

- No replay-driven regression. Static eval sets miss production-distribution regressions; replay catches them.

- Skipping persona-driven simulation. Multi-turn drift, jailbreak susceptibility, and tool-misuse paths do not show up in single-turn eval sets.

- Eval gate that runs on main only. PRs land without the gate; failures get caught after merge.

- No cost budget on the eval gate. Estimate CI cost from your token counts and current model pricing: cost_per_run ~ n_cases * (judge_input_tokens + judge_output_tokens) * provider_$_per_token. A 500-row eval suite at, say, ~5K judge tokens per case and frontier-model pricing can run into the tens of dollars per CI run; cap with a smaller / distilled judge or a sampled run.

- Canary without online eval. Routing 5% of traffic to the new version with no rubric monitor is just a gradual rollout, not a canary.

- No auto-rollback. Manual rollback leaves the regression live for an extra 15-60 minutes. Auto-rollback closes that gap.

- Skipping the post-rollout sweep. Production failures stay in incident channels and never enter the dataset. The pipeline does not compound.

- Mixing offline and online judge models without calibration. Offline runs a frontier model as the judge; online runs a distilled judge. Calibrate both against the same human-labelled set so the rubrics are comparable.

- Floating model versions. gpt-5 instead of a dated snapshot like gpt-5-2025-08-07. Provider rolls the model; calibration drifts; nothing in your codebase changed.

- No SBOM for prompts. When something breaks, you cannot answer “which prompt version produced this trace?” in less than an hour.
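The cost formula from the list above is easy to turn into a budget check. This sketch assumes a single blended price per token, as the formula does; the $10 per million tokens figure is a hypothetical placeholder, not a quoted price.

```python
def eval_cost_per_run(n_cases: int,
                      judge_input_tokens: int,
                      judge_output_tokens: int,
                      usd_per_million_tokens: float,
                      votes: int = 1) -> float:
    """Estimated judge cost in USD for one CI eval run."""
    tokens = n_cases * (judge_input_tokens + judge_output_tokens) * votes
    return tokens * usd_per_million_tokens / 1_000_000


# 500 cases at ~5K judge tokens each, hypothetical $10 per million tokens.
single_vote = eval_cost_per_run(500, 4500, 500, usd_per_million_tokens=10.0)
# The same suite with vote-3 judging triples the token count.
vote_3 = eval_cost_per_run(500, 4500, 500, usd_per_million_tokens=10.0, votes=3)
```

Under these assumed numbers the run costs about $25 with one judge call per case and about $75 with vote-3, which is the kind of figure worth capping before it multiplies across every PR.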

What changed in CI/CD for AI agents (as of May 2026)

| Date | Event | Why it matters |
|---|---|---|
| 2024 | DeepEval popularized pytest-style eval workflows in OSS LLM testing | Eval gates moved from custom scripts to a library pattern |
| 2024 | November 17, 2024: OWASP Top 10 for LLM Applications 2025 was published; PortSwigger expanded LLM attack guidance around prompt injection and indirect injection | Adversarial corpora became standardised, simulation gates got teeth |
| 2025 | Many production guides recommend combining offline evals with monitored rollout; exact patterns vary by stack | The eval-gate plus canary pattern showed up across vendor docs |
| 2025 | LangSmith Deployment expanded managed agent deployment capabilities | Closed-loop rollout plus rollback in one platform |
| 2026 | Specialized low-latency eval models (e.g., Galileo Luna-2) made some online eval workflows more practical; FutureAGI turing_flash is a cloud Turing judge documented at roughly 1-2s | Online eval on a sample of canary traffic became more cost-feasible |
| 2026 | OTel GenAI semantic conventions continued maturing in development | Eval scores can nest inside trace spans across vendors that adopt the conventions |
| 2026 | Persona-driven simulation available as a CI gate (FutureAGI agent-simulate SDK, custom LangGraph orchestration) | Pre-deploy simulation moved from one-off scripts to a reusable SDK pattern |
| 2026 | Argo Rollouts AnalysisTemplate paired with FAGI online eval | Canary plus auto-rollback became expressible in YAML, not a custom controller |

How to actually wire CI/CD for an agent in 2026

- Build the stratified eval set. As a starting point, 200-500 rows hand-labelled, roughly 60% normal traffic, 25% edge, 15% known failure; tune the mix to your traffic. SHA-pinned. Reviewed in PR.

- Pick the eval framework. FAGI fi.evals is recommended (traceAI integration, cloud Turing judges including turing_flash, fast local guardrail scanners); DeepEval, Phoenix, and Galileo can be benchmarked alongside in the comparison docs.

- Define the rubrics. Deterministic plus judge-based plus custom. Calibrate the judges against human labels; pick a target kappa from your workload (Landis & Koch’s qualitative bands are guidance, not a hard threshold).

- Set per-rubric gates. Delta gating against main; absolute floors only where the workload demands them; min-aggregation; yellow band requires reviewer sign-off. Pick the buffer from your own calibration variance.

- Wire Stage 1 PR-time fast checks. Schema lint, deterministic mini eval, token-budget regression. Under 90 seconds.

- Wire Stage 2 integration eval. 200-500 cases as a starting point, judge-based rubrics, delta gating. 5-15 minutes.

- Wire Stage 3 simulation. Persona-driven multi-turn, adversarial corpus, tool-error chaos. Label-gated for high-risk PRs.

- Wire Stage 4 canary plus rollback. Progressive rollout (illustrative 1-5-25-100% ramp over ~24 hours, sized by request volume), cloud Turing online eval on a sample plus inline local guardrail scanners, four rollback triggers tuned to your variance, cohort-scoped.

- Wire replay-driven regression. traceAI capture, recent stable-trace replay on every PR, diff-mode review.

- Pin everything. Model versions, dataset SHA, judge model and temperature, tool schemas, tool-server versions. Weekly model-fingerprint check.

- Run the failure-mode playbook. Six scenarios, six detection signals, six on-call procedures. Drill quarterly.

- Pair with deployment best practices. See LLM Deployment Best Practices in 2026.

- Run a chaos drill quarterly. Trigger a known-bad prompt; verify Stage 2 catches it; verify the canary rolls back; verify replay catches it on the next PR.

Where FutureAGI fits

The 2026 reference stack for shipping an agent through CI/CD on FutureAGI:

- fi.evals: Python SDK with 50+ eval metrics (faithfulness, function call accuracy, plus a refusal/safety eval, plan adherence, citation correctness) and 18+ guardrails. Documented evaluate() and Evaluator APIs; runs in any CI that runs Python.

- traceAI: Apache 2.0 OTel-native instrumentation library. Python, TypeScript, Java, C#. Use register() plus FITracer and decorators (@tracer.agent, @tracer.tool, @tracer.chain) to capture every agent and tool call as an OTel span.

- Agent Command Center (BYOK gateway): the gateway surface across 100+ providers, with provider routing, failover, caching, cost controls, guardrails, and observability.

- Cloud Turing judges: turing_flash and the broader Turing family for cloud evals; documented latency is turing_flash ~1-2s, turing_small ~2-3s, turing_large ~3-5s. Pair with local guardrail scanners (sub-10ms) for inline checks.

- 6 prompt-optimization algorithms: pair with eval gates so prompt iteration is closed-loop.

For the full platform comparison and feature matrix across vendors, see Best LLM Evaluation Tools in 2026 and Best LLM as Judge Platforms.

Sources

- Humble and Farley, Continuous Delivery

- Google SRE Workbook

- DeepEval pytest docs

- Promptfoo CI docs

- LangSmith CI docs

- GitHub Actions docs

- GitLab CI docs

- Argo Rollouts

- Argo Workflows

- Tekton

- OWASP LLM Top 10

- PortSwigger LLM Web Security

- FutureAGI Agent Command Center

Series cross-link

Read next: LLM Deployment Best Practices in 2026, A/B Testing LLM Prompts Best Practices, What is Eval Driven Development?, Production LLM Monitoring Checklist, Custom LLM Eval Metrics Best Practices, LLM Incident Response Playbook

Frequently asked questions

What does CI/CD for AI agents look like in 2026?

What is an eval gate in a CI pipeline?

What is a golden dataset and how big should it be?

What is a canary deploy for an agent?

What does a regression suite look like for an agent?

How do I wire eval gates in GitHub Actions?

What is the difference between offline eval gates and online eval gates?

What is FutureAGI's CI/CD story for AI agents?
