LLM Incident Response Playbook in 2026: Detection to Postmortem

LLM incident response in 2026: detection via eval drift, triage, rollback, customer comms, postmortem. The eval-gate-driven playbook from page to action items.


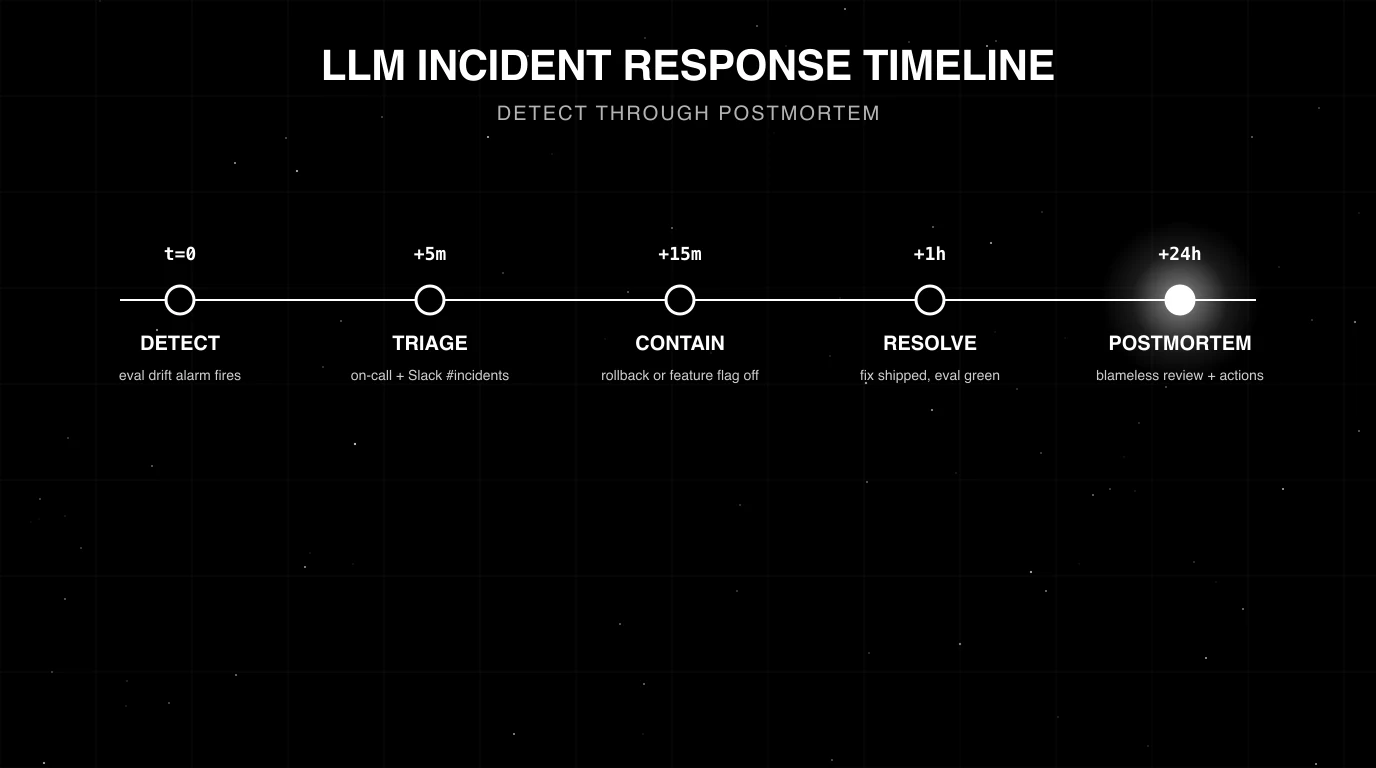

A team ships a refresh of the support agent at 4pm Tuesday. By 4:32pm the rubric drift alarm fires: faithfulness on the refund-bot route is down 9 points, refusal rate is up 18 points. The on-call engineer is paged. By 4:34pm the gateway has auto-reverted the cohort to the incumbent (per-cohort eval-gated rollback caught the regression). By 4:38pm the on-call has the trace id for a representative failure, the prompt diff, and the rubric drift chart. By 4:55pm the incident channel is open and customer comms is drafted. By 6pm the immediate fix is in review. By Friday the postmortem is published; two action items expand the eval gate’s golden dataset and tighten the per-rubric threshold.

This is what LLM incident response looks like when the playbook is wired. Detection is automated, rollback is automated, the postmortem is blameless, and the eval gate gets stronger after each incident. This guide is the production playbook from page through postmortem in 2026. It draws on Google’s SRE book, Atlassian’s Incident Management Handbook, and the SRE tradition more broadly, with LLM-specific extensions for eval drift, prompt rollback, and judge calibration.

TL;DR: The five LLM incident shapes

| Shape | Detection signal | Default rollback path |
|---|---|---|
| Eval drift | Rolling-mean rubric score drops 2-5+ points | Prompt-version revert via registry |
| Cost spike | Tokens-per-success rises 30%+ | Gateway-level cost circuit breaker; prompt revert |
| Latency spike | p99 jumps 2-5x | Tool/upstream healthcheck; circuit breaker on slow tool |
| Guardrail regression | Refusal rate or PII leak rate shifts 5+ points | Per-cohort eval-gated rollback; guardrail config revert |
| Outage / provider event | Error rate jumps, traffic drops | Gateway failover to fallback provider |

If you only read one row: the unit of fast LLM incident response is per-cohort eval-gated rollback in the gateway. The playbook’s job is to handle the cases the auto-rollback does not catch.

Stage 1: Detection

Three independent signals catch the five incident shapes. Wire all three.

Online eval drift

Rolling-mean rubric scores per route, per prompt version, per user cohort. Alarm thresholds:

- 2-5 point drop sustained 15+ minutes -> investigate.

- 5+ point drop -> page.

Distilled judge models (FutureAGI turing_flash at 50-70ms p95 for guardrail screening, ~1-2s for full eval templates; Galileo Luna-2) keep the per-trace cost low enough to score 5-20% of production traffic continuously. For depth on online eval, see What is LLM Evaluation? and Production LLM Monitoring Checklist.
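The two thresholds above can be sketched as a small classifier. This is a minimal illustration, not any vendor's API: the function name `classify_drift`, the 0-100 score scale, and the assumption of one rolling-mean value per minute are all hypothetical.

```python
from typing import Sequence

INVESTIGATE_DROP = 2.0   # points below baseline, must be sustained
PAGE_DROP = 5.0          # points below baseline, pages immediately
SUSTAIN_MINUTES = 15     # assumes one rolling-mean value per minute


def classify_drift(baseline: float, rolling_means: Sequence[float]) -> str:
    """Classify rubric drift for one route / prompt version / cohort.

    rolling_means holds the most recent per-minute rolling-mean scores,
    oldest first, on the same scale as baseline.
    """
    if not rolling_means:
        return "ok"
    # A 5+ point drop pages without waiting for the sustain window.
    if baseline - rolling_means[-1] >= PAGE_DROP:
        return "page"
    # A smaller drop only counts once it has held for the full window.
    recent = rolling_means[-SUSTAIN_MINUTES:]
    if len(recent) == SUSTAIN_MINUTES and all(
        baseline - score >= INVESTIGATE_DROP for score in recent
    ):
        return "investigate"
    return "ok"
```

Run it once per route, prompt version, and cohort, so a regression in a small cohort is not averaged away by healthy traffic elsewhere.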

Cost and latency

Tokens-per-success, dollar-cost-per-success, latency p50/p95/p99 per route. Alarm thresholds:

- 30%+ rise in tokens-per-success -> investigate.

- 50%+ rise -> page.

- p99 latency 2x baseline -> page.

The cost-and-latency signals catch incidents that eval drift alone misses (a successful-but-wasteful trajectory regression).
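As a rough sketch, the cost and latency thresholds reduce to two ratios against a baseline. The function names and the choice to treat zero successes as infinite cost are assumptions made for illustration.

```python
def tokens_per_success(total_tokens: int, successes: int) -> float:
    """Tokens spent per successful task; infinite if nothing succeeded."""
    if successes == 0:
        return float("inf")
    return total_tokens / successes


def classify_cost_latency(
    baseline_tps: float,
    current_tps: float,
    baseline_p99_ms: float,
    current_p99_ms: float,
) -> str:
    """Apply the thresholds: 30%+ rise investigates, 50%+ or p99 at 2x pages."""
    rise = (current_tps - baseline_tps) / baseline_tps
    if rise >= 0.5 or current_p99_ms >= 2 * baseline_p99_ms:
        return "page"
    if rise >= 0.3:
        return "investigate"
    return "ok"
```

Dividing by successes rather than by requests is what surfaces the successful-but-wasteful case: the success count holds steady while the token count climbs.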

Guardrail and refusal rate

Refusal rate (legitimate-vs-illegitimate split), PII leak rate, prompt-injection success rate. Alarm thresholds:

- 5+ point shift in either direction on refusal rate -> investigate.

- Any non-zero PII leak rate sustained -> page.

- Prompt-injection success above 0.5% -> page.

The guardrail signal catches the safety-class regressions that eval drift misses (refusal calibration is hard to score with rubric judges).
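The guardrail thresholds can be sketched the same way. The source does not define "sustained", so this illustration assumes it means a non-zero PII leak rate in two consecutive measurement windows. The function and parameter names are hypothetical.

```python
from typing import Sequence


def classify_guardrail(
    baseline_refusal_pct: float,
    refusal_pct: float,
    pii_leak_rates: Sequence[float],
    injection_success_rate: float,
    sustain_windows: int = 2,  # assumption: "sustained" = 2 windows in a row
) -> str:
    """Classify guardrail signals; rates are fractions, refusal is in percent."""
    recent = pii_leak_rates[-sustain_windows:]
    pii_sustained = len(recent) == sustain_windows and all(r > 0 for r in recent)
    # Sustained PII leakage or injection success above 0.5% pages.
    if pii_sustained or injection_success_rate > 0.005:
        return "page"
    # Refusal rate can regress in either direction, so compare the magnitude.
    if abs(refusal_pct - baseline_refusal_pct) >= 5.0:
        return "investigate"
    return "ok"
```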

For the broader observability surface, see Production LLM Monitoring Checklist, LLM Tracing Best Practices, and Best AI Agent Observability Tools in 2026.

Stage 2: Triage

The on-call engineer’s job in the first 5 minutes:

- Confirm the alarm. Look at the dashboard. Is the rubric drift real or a single-point spike that has already recovered?

- Identify the change. Was a prompt updated, a model swapped, a gateway config changed in the last 24 hours? Most LLM incidents are change-induced.

- Pull a representative trace. The trace id surfaces the failure. Span attributes (prompt version, cohort, tool calls) tell the story.

- Decide the rollback path. The options: per-cohort eval-gated rollback already handled it (no action), a prompt-version revert, a code revert, or wait-and-see if the signal is borderline.

The 5-minute clock is generous; per-cohort rollback often closes the loop in 2-3 minutes. The on-call’s manual action is for the cases the auto-rollback did not handle.
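The rollback decision at the end of triage can be written down as a small function, which also makes the runbook testable. This is a sketch only: the enum, the `change_kind` labels, and the fallback to wait-and-see when no revertable change is identified are illustrative assumptions.

```python
from enum import Enum
from typing import Optional


class RollbackPath(Enum):
    NONE = "no action: auto-rollback already fired"
    PROMPT_REVERT = "prompt-version revert"
    CODE_REVERT = "code revert"
    WAIT = "wait and see"


def choose_rollback(
    auto_reverted: bool, borderline: bool, change_kind: Optional[str]
) -> RollbackPath:
    """Pick a rollback path; change_kind is 'prompt', 'code', or None."""
    if auto_reverted:
        return RollbackPath.NONE
    if borderline:
        return RollbackPath.WAIT
    if change_kind == "prompt":
        return RollbackPath.PROMPT_REVERT
    if change_kind == "code":  # gateway config, agent logic, tool code
        return RollbackPath.CODE_REVERT
    # No revertable change identified: keep investigating (assumption).
    return RollbackPath.WAIT
```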

Stage 3: Rollback

Three rollback paths, in order of preference, plus provider failover for outages.

Per-cohort eval-gated rollback

If the change went out behind a canary, the gateway auto-reverts the cohort when the rubric monitor fires. No manual action. The on-call confirms the revert fired and moves to investigation.

This is the right default. For the deployment pattern, see LLM Deployment Best Practices in 2026.
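To make the mechanism concrete, here is a minimal in-memory sketch of an eval-gated canary. It is not the API of any particular gateway: the class `CanaryGate`, its fields, and the minimum-sample rule are assumptions for illustration.

```python
from dataclasses import dataclass, field
from statistics import mean
from typing import List


@dataclass
class CanaryGate:
    incumbent: str            # e.g. prompt version currently serving everyone
    candidate: str            # version being trialled on the canary cohort
    threshold: float          # minimum acceptable mean rubric score
    min_samples: int = 30     # do not judge the canary on too few scores
    scores: List[float] = field(default_factory=list)
    reverted: bool = False

    def version_for(self, in_canary_cohort: bool) -> str:
        """Route canary traffic to the candidate until the gate trips."""
        if in_canary_cohort and not self.reverted:
            return self.candidate
        return self.incumbent

    def record(self, score: float) -> None:
        """Add one judged canary trace; revert if the mean misses the bar."""
        self.scores.append(score)
        if len(self.scores) >= self.min_samples and mean(self.scores) < self.threshold:
            self.reverted = True
```

Once `reverted` flips, canary traffic goes back to the incumbent with no deploy, which is why the on-call only needs to confirm the revert fired.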

Prompt-version revert

The prompt registry exposes a one-click revert to the previous version. Takes seconds; no code deploy. Use when the regression is prompt-driven and the canary did not catch it (because the canary cohort was too small, the rubric did not cover the failure mode, or the rollout was 100% from the start).

LangSmith Prompt Hub, FutureAGI prompt versions, Braintrust prompts, and Helicone Prompts all support one-click revert.

Code revert

git revert <sha>, redeploy. Takes minutes. Use when the change is in code (gateway config, agent logic, tool implementation) rather than in prompts.

Provider failover

For provider outages, the gateway routes to a fallback provider with an equivalent model. The fallback path must have been load-tested in advance. A fallback that has not been load-tested is not a fallback; verify under load quarterly.
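A failover path can be as simple as trying providers in priority order. The sketch below assumes each provider is wrapped in a plain callable; the function name and the `(name, callable)` shape are hypothetical, and a production gateway would add timeouts, retries, and health checks.

```python
from typing import Callable, List, Sequence, Tuple

Provider = Tuple[str, Callable[[str], str]]


def call_with_failover(providers: Sequence[Provider], request: str) -> Tuple[str, str]:
    """Try providers in order; return (provider_name, response) from the first that works."""
    errors: List[Tuple[str, Exception]] = []
    for name, call in providers:
        try:
            return name, call(request)
        except Exception as exc:  # sketch: treat any exception as a provider failure
            errors.append((name, exc))
    raise RuntimeError(f"all providers failed: {errors}")
```

Returning the provider name with the response lets the trace record which path served the request, so it is visible afterwards how much traffic the fallback absorbed.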

Stage 4: Customer comms

If the incident has user-visible impact, draft the status update. The pattern:

- One channel, one drafter. Avoid five engineers writing five conflicting updates.

- Acknowledge fast, detail later. “We are aware of an issue affecting refund queries; investigating; next update in 15 minutes” beats waiting an hour for a perfect message.

- Avoid speculation. State what is known. “Latency on chat queries elevated since 4:30pm” not “We think the model provider is having issues.”

- Set update cadence. Every 15-30 minutes during the incident, then a final all-clear.

- Match the language to the audience. Engineering customers want the trace id and the rubric drift; consumer-facing messages need plain English.

For multi-tenant SaaS, the comms split is per-tenant: only tenants in the affected cohort get the page. For shared-tenant incidents, the global status page is the channel.

Stage 5: Resolution

Resolution closes the loop. Three confirmations.

- The signal is back to baseline. Rubric drift recovered, latency back to p99 baseline, refusal rate normal. Wait one full alert window (typically 30-60 minutes) after the rollback to confirm.

- The fix is in review. A PR exists, an eval gate ran on it, the regression case is in the test suite for next time.

- Customer comms closed. Final all-clear posted; affected users notified if the comms plan requires it.

The incident channel stays open until all three are confirmed.

Stage 6: Postmortem

The postmortem is blameless. Focus is the process, not the engineer who pushed the button.

Six sections.

Summary

One paragraph: what happened, when, who was affected. 4-6 sentences.

Timeline

Per-step timestamps from detection to all-clear. Use UTC, or at minimum state the time zone on every line. Pin the trace id of the representative failure.

16:30 PT - prompt v18 rolled out at 100% (no canary)

16:32 PT - rubric drift alarm fires: faithfulness -9 points

16:34 PT - on-call paged

16:38 PT - prompt-version revert to v17 executed

16:43 PT - rubric drift recovers

17:15 PT - all-clear posted

Root cause

Prompt diff, model version change, gateway config change, or upstream provider event. The trace id of a representative failure surfaces the actual span tree. Pin both the diff and the trace.

Why eval missed it

This is the LLM-specific section. Three common answers:

- Dataset gap. The golden dataset under-represented the failure slice.

- Judge calibration drift. The judge model was updated and its calibration shifted with it.

- Threshold too loose. The rubric threshold did not catch a real regression.

Action items

Specific, owned, dated. Examples:

- “Add 50 representative refund-bot failures to the golden dataset by EOW (owner: Alice).”

- “Tighten faithfulness rubric threshold from 0.85 to 0.90 (owner: Bob).”

- “Update canary minimum-cohort-size from 3% to 5% to reach significance faster (owner: Charlie).”

- “Add eval-gated rollback to the support-bot rollout pattern by Q2 (owner: Dana).”

The action items must close the loop: expand the eval gate, add the regression-suite case, tighten the rollback threshold. A postmortem without action items is documentation.

Lessons

Framing for the team and the broader org. What did we learn? What does the next person who touches this surface need to know?

For the canonical SRE postmortem template, see Google’s SRE book postmortem chapter and Atlassian’s Incident Handbook.

Special case: LLM security incidents

Five triggers escalate an incident to security:

- Prompt injection succeeded and exfiltrated data.

- Guardrail regression let PII or sensitive content leak in outputs.

- Compromised model provider or stolen API keys.

- Tool-call exfiltration (the agent called a tool with unauthorised arguments).

- Supply-chain compromise (an OSS dependency was malicious).

For the supply-chain class, see the worked example in LiteLLM Compromised Incident Response Migration Guide.

The security-class playbook differs from the eval-drift playbook in three ways:

- Loop in security review immediately. Do not run the standard eval-drift triage in parallel.

- Consider regulatory disclosure obligations. Depending on jurisdiction (GDPR Article 33, CCPA, HIPAA, PCI-DSS), notification timelines apply.

- Preserve evidence. Trace logs, gateway request logs, and prompt-version snapshots may be needed for forensic review.

For the broader safety surface, see Top 5 AI Guardrailing Tools in 2025, Best AI Agent Guardrails Platforms in 2026, and Prompt Injection in 2025.

Prevention closes more incidents than response

Everything above is about the moment after an alarm fires. The cheaper move is keeping incidents from firing in the first place. Three layers convert detect-and-rollback into never-detect:

- Eval-gated rollout at deploy time. Every prompt change, model swap, and gateway config update ships behind a canary cohort with a rubric threshold. The gateway auto-reverts when the threshold is missed. The on-call never sees the page because the same eval-gated rollback pattern from Stage 3 runs at the deploy boundary, not the incident boundary.

- Inline guardrail screening on every request. PII leakage, prompt injection, jailbreak, and tool-call argument violations are caught at the request boundary, not in the postmortem. FutureAGI’s turing_flash runs the screening pass at 50-70 ms p95, so gating every span fits inside a normal user-facing latency budget. The classes that show up in 30% of historical incident timelines (PII leak, prompt injection, refusal regression) become enforcement, not detection.

- Persona-driven simulation pre-prod. Synthetic adversaries replay the failure modes that produced last quarter’s incidents before the next release reaches users. The regression set grows from each postmortem’s action items, so the same incident class stops shipping twice.

FutureAGI’s Agent Command Center wires these three layers into one runtime alongside the detection signals from Stage 1: eval-gated routing across 100+ providers with BYOK, 18+ runtime guardrails, and persona-driven simulation share the same trace tree the on-call already uses for response. The incident playbook still exists for the cases that slip through; the count of cases that need it drops sharply once prevention is wired the same way response is.

Common mistakes in LLM incident response

- Detection on a single signal. Eval drift catches some incidents and misses others. Wire three signals.

- Manual rollback when auto-rollback was available. A 30-minute manual rollback is 28 minutes of avoidable user impact when per-cohort eval-gated rollback would have closed in 2 minutes.

- Code revert when prompt revert works. Prompt revert is seconds; code revert is minutes. Use the right tool.

- Customer comms from five different engineers. One channel, one drafter.

- Speculation in status updates. “We think the model provider…” erodes trust. State what is known.

- No representative trace pinned in the postmortem. Without it, the postmortem is theory; with it, the postmortem is forensic.

- Action items that do not close the loop. “We will be more careful next time” is not an action item. “Add 50 cases to the regression suite by Friday” is.

- No quarterly chaos drill. A rollback that has not been exercised is not a rollback. Trigger it deliberately.

- Treating provider weight updates as out-of-scope. Providers update weights without notice; the rubric drift will fire even though no team change happened. The playbook applies; the action items shift toward upstream monitoring.

- Not reviewing eval calibration after the fact. If the eval gate did not catch it, the calibration is the issue.

What changed in LLM incident response in 2026

| Date | Event | Why it matters |
|---|---|---|
| 2024 | Per-cohort eval-gated rollback patterns matured across major gateway platforms | Auto-rollback closed the loop for most prompt-driven incidents |
| 2025 | Distilled judges (Galileo Luna-2 introduced June 2025, FutureAGI turing_flash) reached production scale | Online eval at 5-20% traffic became cost-feasible |
| 2025 | OTel GenAI semantic conventions widely adopted | Trace ids portable across vendors; postmortems reference the same trace shape |
| 2025 | Several public LLM-supply-chain incidents (LiteLLM compromise) | Security-class incident response patterns codified |
| 2026 | Eval-drift, cost, and guardrail signals integrated into PagerDuty / Slack / Opsgenie | LLM incidents got the same paging plumbing as classical SRE |

How to actually run an LLM incident response program in 2026

- Wire three independent detection signals. Eval drift, cost/latency, guardrail rate.

- Set thresholds and pages. 2-5 point rubric drop -> investigate, 5+ -> page; 30%+ cost rise -> investigate, 50%+ -> page; 5+ point refusal-rate shift -> investigate; sustained PII leak or prompt-injection success above 0.5% -> page.

- Default to per-cohort eval-gated rollback. Gateway-level for prompt-driven changes.

- Build the rollback runbook. Prompt revert, code revert, gateway config revert, provider failover.

- Define the on-call rotation. On-call engineer, eval owner, customer comms drafter; release captain and security reviewer at scale.

- Run quarterly chaos drills. Trigger a known-bad prompt; verify detection, rollback, and comms.

- Standardise the postmortem template. Six sections; SRE-blameless tradition.

- Close the loop. Every postmortem produces an action item that expands the eval gate, the regression suite, or the rollback pattern.

For the deployment context, see LLM Deployment Best Practices in 2026 and CI/CD for AI Agents Best Practices.

Sources

- Google SRE Book - Managing Incidents

- Google SRE Book - Postmortem Culture

- Atlassian Incident Management Handbook

- PagerDuty incident response docs

- traceAI GitHub repo

- OpenTelemetry GenAI semantic conventions

- FutureAGI Agent Command Center

- LiteLLM Compromised Incident Response Migration Guide

Series cross-link

Read next: LLM Deployment Best Practices in 2026, CI/CD for AI Agents Best Practices, Production LLM Monitoring Checklist, LLM Tracing Best Practices in 2026

