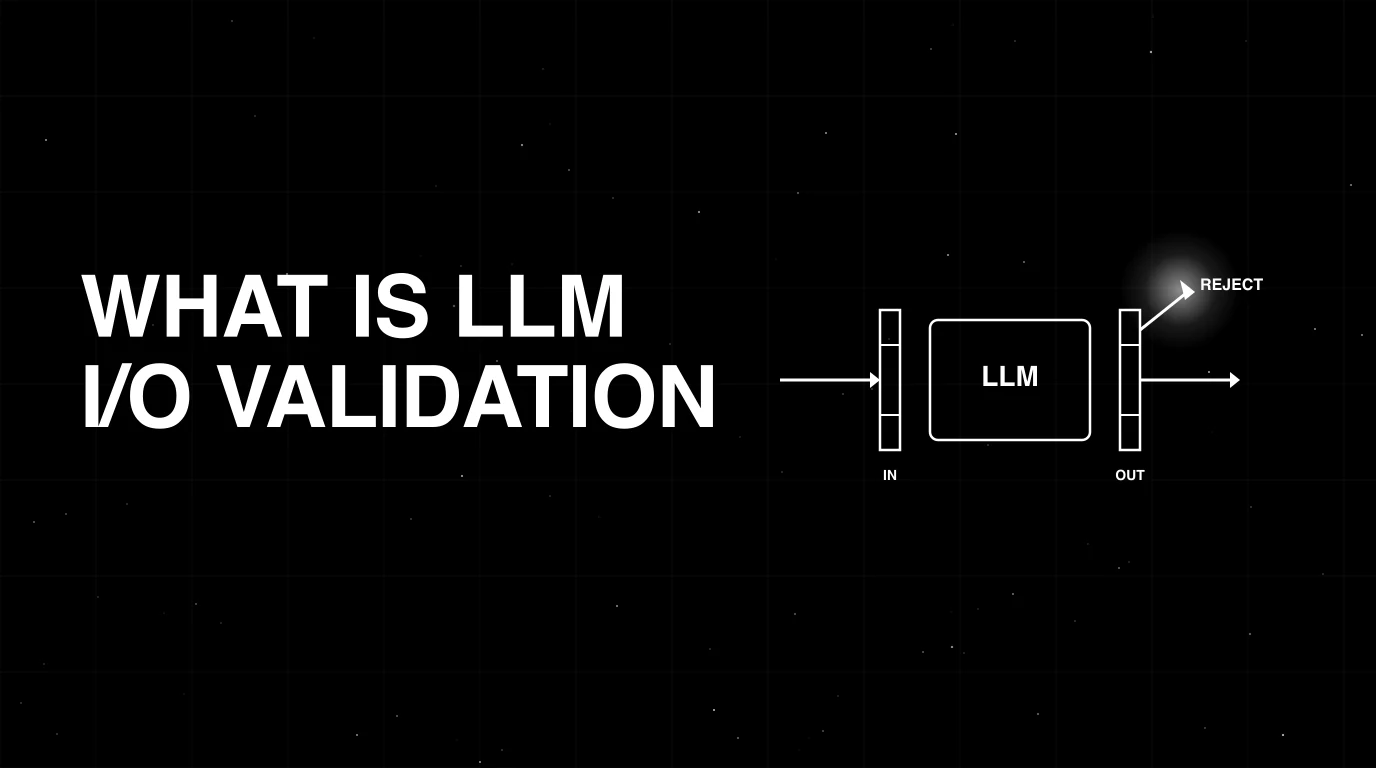

What is LLM Input/Output Validation? The 2026 Explainer

LLM input/output validation explained: schema, structure, content checks. How it differs from guardrails, what tools cover it, and how to wire it in 2026.

Table of Contents

A coding agent ships a refactor: the agent now returns its plan as a JSON object with steps, files, and risks keys. In production, 8% of responses are unparseable; another 5% have the right keys but steps is sometimes a string, sometimes a list, and once an integer. The downstream tool that consumes the JSON breaks. The fix is not a smarter prompt; it is two lines of code: a Pydantic model on the response, automatic retry-with-error-feedback on a parse failure, and a hard cap of three retries. The unparseable rate drops to 0.3%. The wrong-type rate drops to 0%. The agent is now a typed component.

This is what LLM input/output validation is for in 2026. The model produces text; production code expects structured data, valid JSON, the right enum value, the right numeric range. Validation is the contract between them. This guide is the entry-point explainer covering the three layers (schema, structural, content), the tooling landscape (Pydantic AI, Instructor, Outlines, JSON Schema, Guardrails AI), and how I/O validation sits beside guardrails in a production stack. For the platform comparison, see Best LLM Input/Output Validation Tools in 2026.

TL;DR: What I/O validation is

LLM input/output validation runs structured checks on data going into and coming out of a language model, rejecting or repairing the request when a check fails. Three layers stack: schema (typed contract), structural (parseable format), content (business rules). Tools split between SDK-level (Pydantic AI, Instructor, Outlines) and gateway-level (FutureAGI Agent Command Center, Guardrails AI). I/O validation is distinct from guardrails: guardrails answer “is this allowed?”; validation answers “is this well-formed?”. Production stacks need both.

Why I/O validation matters in 2026

Three changes made it operational, not optional.

First, agents went structured. A 2024 chat completion was free text. A 2026 agent step returns a JSON object with tool, args, reasoning, and confidence keys, consumed by the next step. Free-text-out-of-LLM is a niche; structured-out-of-LLM is the default. The contract has to be enforced or the next step crashes.

Second, multi-step trajectories amplify schema failures. A 12-span agent run with a 5% per-step schema failure rate has a 46% probability that at least one span fails (1 - 0.95^12). Compounding kills agents that did not budget for validation.

Third, structured outputs as a first-class API. OpenAI’s structured outputs (response_format with json_schema), Anthropic’s tool-use schema, Gemini’s controlled generation, and the OSS Outlines library all ship constrained decoding. The model is steered toward the schema during generation; SDK validation catches whatever the constraint missed; gateway validation catches whatever the SDK missed. Three layers, each cheaper than debugging the alternative.

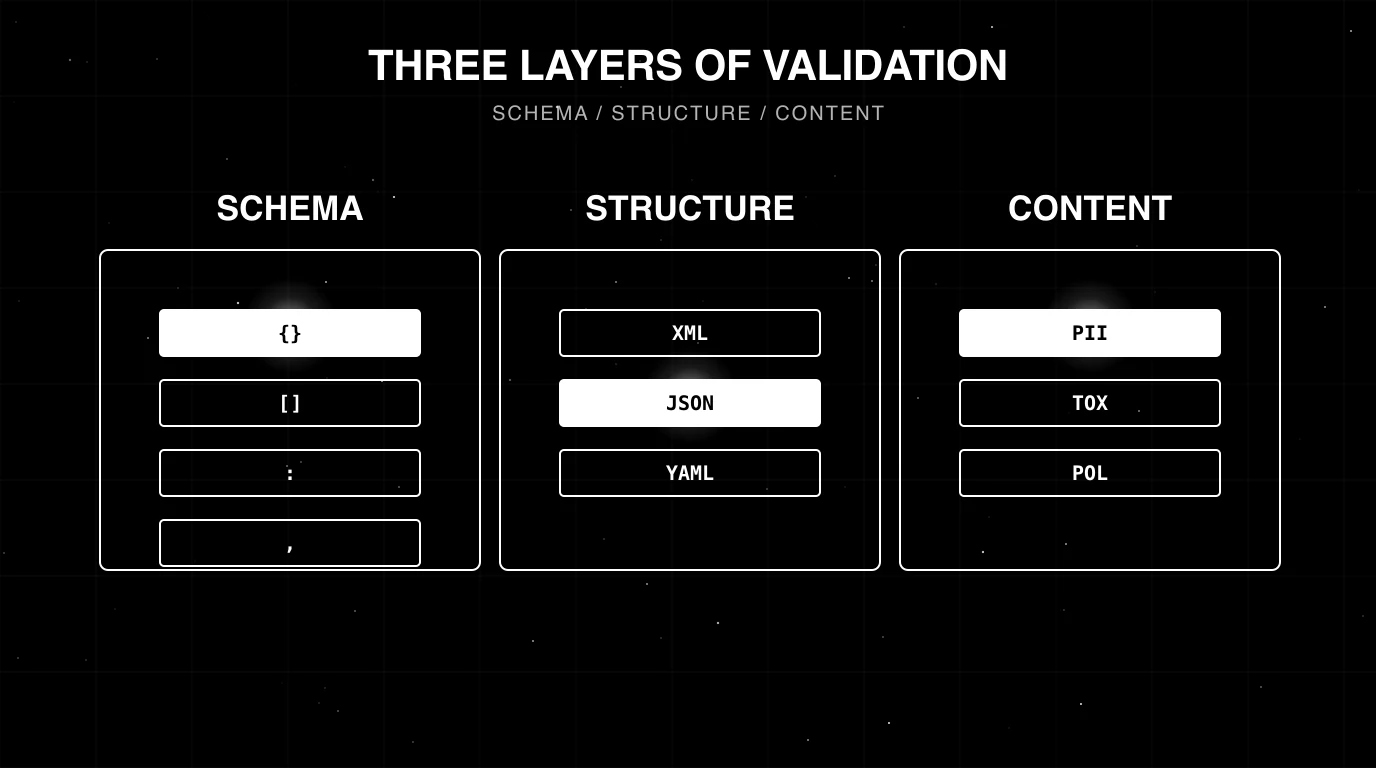

The three layers of validation

Schema validation

Schema validation enforces a typed contract on the response: the field names, types, optional/required flags, nesting structure, and value bounds. The contract is a JSON Schema, a Pydantic model, a Zod schema, or an equivalent typed declaration.

A response passes schema validation when:

- All required fields are present.

- All present fields have the right type.

- All enums are within the allowed set.

- All numeric fields are within bounds.

- Nested structures recursively validate.

Pydantic AI, Instructor, and the OpenAI/Anthropic SDKs ship typed-output APIs that validate against the schema and surface a typed error on failure.

Structural validation

Structural validation is the layer below schema: is the response parseable at all? JSON parse, XML parse, Markdown table parse, code-block extraction. A schema validator can only run if the parser succeeded.

Common structural failures:

- Truncated JSON (model hit the token limit mid-object).

- Trailing commas, comments, or markdown code-fences around JSON.

- Mixed text and JSON (the model wraps the JSON object in narration like “Here is the answer:” before the braces).

- Wrong root type (array when an object was expected).

Repair logic on top of the parser fixes the easy ones (strip code fences, drop trailing commas, attempt object/array unwrapping). Retry with error-feedback handles the rest.

Content validation

Content validation is application-specific rules that the schema does not capture. Examples:

- “The

refund_amountmust be less than theorder_total.” - “The

citationsarray must contain document ids that actually exist in the corpus.” - “The

confidencefield must be 0-1 and consistent with the model’s calibrated uncertainty.” - “The summary must not contain entities not present in the source.”

Content validation typically needs domain logic, a database lookup, or a judge model. It is the most expensive layer but the most aligned with what business rules actually require.

How I/O validation differs from guardrails

The two are often conflated. They are not the same.

| Aspect | Guardrails | I/O validation |

|---|---|---|

| Question | ”Is this content allowed?" | "Is this content well-formed?” |

| Examples | Toxicity, PII, prompt injection, jailbreak, brand-voice | JSON parse, schema match, enum allowed, numeric bound |

| Implementation | Classifier, rules engine, judge model | Schema parser, type checker, business-rule check |

| Fail mode | Block, redact, escalate | Reject, repair, retry |

| Tools | NeMo Guardrails, Guardrails AI, FutureAGI ACC, Lakera | Pydantic AI, Instructor, Outlines, JSON Schema, Guardrails AI (overlap) |

Guardrails AI sits in both columns because it does both. Most production stacks compose guardrails (run first as a cheap policy filter) with validation (run second as a typed parse). A response can pass guardrails and fail validation (unparseable JSON), or pass validation and fail guardrails (clean JSON containing PII). Both layers are necessary. For the guardrail-specific landscape, see Best AI Agent Guardrails Platforms in 2026 and Top 5 AI Guardrailing Tools in 2025.

Tools that cover I/O validation in 2026

Pydantic AI

Pydantic AI is a Python framework that uses Pydantic models as the LLM contract. The agent declares an output type (class Plan(BaseModel): ...), the framework handles the prompt formatting, the validation, and the retry-with-error-feedback loop. Native support for OpenAI, Anthropic, Gemini, Groq, and OSS models via OpenAI-compatible endpoints. Released by the Pydantic team; under active development as of 2026.

Instructor

Instructor is the original structured-output retry library, founded by Jason Liu. The pattern: pass a Pydantic model to the chat completion call as response_model; the library handles prompt construction, parsing, validation, and up to N retries. Works with OpenAI, Anthropic, Gemini, Cohere, Mistral, and any OpenAI-compatible endpoint via litellm. MIT license.

Outlines

Outlines is a constrained-decoding library. Pass a JSON Schema, Pydantic model, or regex; the library biases the LLM’s token sampling so the output is guaranteed to match. Works with local models (vLLM, TGI, llama.cpp, MLX) and some hosted endpoints. The benefit: no retry loop for schema failures. Apache 2.0.

LangChain output parsers

LangChain ships Pydantic, structured, and JSON output parsers. The parsers are typed wrappers around the chat completion that handle prompt formatting (insert format-instructions into the system prompt) and parsing. Less aggressive about retries than Instructor or Pydantic AI; pair with OutputFixingParser for retry-on-fail.

JSON Schema validators

ajv (TypeScript), jsonschema (Python), and equivalents in other languages run pure schema validation at the boundary. Useful at the gateway layer, where typed-SDK frameworks are not in scope.

Guardrails AI

Guardrails AI sits across the validation/guardrails boundary. Define a RAIL spec (Markdown + XML format) declaring the output shape and the validation rules; the library handles validation, repair, and reasking. Apache 2.0.

Gateway-level validation

FutureAGI’s Agent Command Center and other gateway-pattern platforms run schema and content validation at the gateway boundary, independent of the SDK. The benefit: validation runs even on services that did not opt in to SDK-level validation, and the failure surfaces in the gateway logs alongside other request data.

Worked example: validating a refund-bot response

A refund agent returns a structured plan. The contract:

from pydantic import BaseModel, Field

from typing import Literal

class RefundDecision(BaseModel):

action: Literal["approve", "deny", "escalate"]

amount_cents: int = Field(ge=0, le=50000)

reason: str = Field(min_length=10, max_length=500)

requires_manager: bool

# Instructor pattern (OpenAI-compatible chat.completions API patched with response_model)

import instructor

client = instructor.from_openai(openai_client)

result = client.chat.completions.create(

model="gpt-4o",

messages=[...],

response_model=RefundDecision,

max_retries=3,

)

# Pydantic AI pattern (Agent with output_type)

from pydantic_ai import Agent

agent = Agent("openai:gpt-4o", output_type=RefundDecision, retries=3)

result = agent.run_sync("...").outputWhat this catches:

- Schema: missing

action, wrong type onamount_cents,reasonshorter than 10 chars. - Structural: malformed JSON, code-fence wrappers, mid-stream truncation (Instructor catches via the parser).

- Content (partially):

amount_centsoutside [0, 50000].

What this does not catch:

- Content:

amount_centsexceeding the order total (needs a database lookup). - Content:

reasonbeing a non-sequitur (needs a judge model). - Guardrail:

reasoncontaining PII or policy-violating content (needs a guardrail layer).

Wire the database lookup as a post-Pydantic check. Wire the guardrail as a pre-Pydantic gateway-level filter. The combination is the validation envelope.

Common mistakes when implementing I/O validation

- Treating retry as the silver bullet. A retry that succeeds is fine; a retry rate above 5% means the prompt or model is wrong. Track retry rate as an SLO and tune the prompt when it climbs.

- Skipping the schema for prototypes. Prototypes ship to production. The Pydantic model that takes 5 minutes to write saves the on-call call.

- Constrained decoding without a content fallback. Constrained decoding guarantees valid JSON; it does not guarantee correct values. Pair with content validation.

- One layer of validation. SDK-level validation alone misses gateway-level cases (services that bypass the SDK). Gateway-level alone misses fine-grained type errors. Run both.

- No telemetry on validation failures. Validation rate and failure-mode breakdown are first-class observability data. Wire them as span attributes; track per-prompt-version drift.

- Overly permissive schemas. A

dict[str, Any]is not a schema. Specify the keys. - Free-text fields with no max length. A

reason: strwith nomax_lengthallows a 50K-token explanation. Cap it. - Catching

ValidationErrorand silently passing. A swallowed validation error is a regression you will not see.

What changed in I/O validation in 2026

| Date | Event | Why it matters |

|---|---|---|

| 2024 | OpenAI shipped structured outputs (response_format=json_schema) | Constrained decoding became turnkey for OpenAI users |

| 2024 | Anthropic added tool-use schema enforcement | Same effect on the Anthropic side |

| 2025 | Pydantic AI 1.0 released | Production-grade typed-agent framework with retry semantics |

| 2024 | Outlines guided-generation work matured under the dottxt-ai org | OSS-side parity on guaranteed-schema decoding |

| 2026 | Gateway-level validation became standard in agent stacks | Validation moved from SDK-only to defense-in-depth |

How to actually wire I/O validation in 2026

- Define the contract as a typed schema. Pydantic for Python, Zod for TypeScript, JSON Schema as the interchange format.

- Pick the SDK-level framework. Pydantic AI for Python-native agents; Instructor for OpenAI/Anthropic SDK-style; Outlines for OSS models with constrained decoding.

- Wire retries with error feedback. Cap at 3. Surface the validator error in the retry prompt.

- Add content validation. Database lookups, judge calls, business-rule checks. Wire as a separate post-parse step.

- Layer in the gateway. A second validator at the gateway boundary catches the cases the SDK missed. See Best LLM Gateways in 2026.

- Instrument failure rates. Validation pass-rate, retry rate, and per-failure-mode breakdown as span attributes.

- Gate CI on validation pass-rate. A drop on the eval set blocks the PR.

- Pair with guardrails. Validation answers “well-formed”; guardrails answer “allowed”. Both are needed.

How to use this with FAGI

FutureAGI is the production-grade I/O validation, guardrails, and observability stack. The Agent Command Center ships 18+ guardrails plus output-shape validators that run at the gateway boundary: structural correctness (JSON parse), schema correctness against a registered schema, and content correctness via turing_flash (50 to 70 ms p95 for guardrail screening). On a fail the gateway can reject, retry with a corrected prompt, or fall back to a different model. The pattern composes with SDK-level Pydantic AI / Instructor / Outlines validation; gateway-level validation acts as the last line before the response leaves your infrastructure.

Span-attached scoring tags every trace with validation pass-rate, retry rate, and per-failure-mode breakdown so CI gates and production drift detection consume the same signal. The same plane carries 50+ eval metrics, persona-driven simulation that exercises validation edge cases, the BYOK gateway across 100+ providers, and Apache 2.0 traceAI instrumentation on one self-hostable surface. Pricing starts free with a 50 GB tracing tier; Boost ($250/mo), Scale ($750/mo), and Enterprise ($2,000/mo with SOC 2 and HIPAA BAA) cover the maturity ladder.

Sources

- Pydantic AI docs

- Instructor GitHub repo

- Outlines GitHub repo

- Guardrails AI GitHub repo

- LangChain output parsers docs

- OpenAI structured outputs

- Anthropic tool use

- JSON Schema specification

- Pydantic docs

- FutureAGI Agent Command Center

Series cross-link

Read next: Best LLM Input/Output Validation Tools in 2026, Best AI Agent Guardrails Platforms in 2026, What is LLM Evaluation?, Top 5 AI Guardrailing Tools in 2025

Frequently asked questions

What is LLM input/output validation in plain terms?

How is I/O validation different from guardrails?

What are the three layers of validation every team needs?

What OSS tools cover LLM I/O validation in 2026?

What is constrained decoding and how does it relate to validation?

When should I retry vs reject a failed validation?

How does I/O validation interact with eval gates?

What does FutureAGI ship for I/O validation?

Pydantic AI, Instructor, Outlines, Guardrails AI, NeMo Guardrails, JSON Schema, and FutureAGI as the 2026 LLM I/O validation shortlist. Schemas, structures, retries.

Pydantic AI is a Python agent framework that brings Pydantic-style validation to LLM tool calls and outputs. Agents, tools, dependency injection, graphs.

Best LLMs May 2026: compare GPT-5.5, Claude Opus 4.7, Gemini 3.1 Pro, and DeepSeek V4 across coding, agents, multimodal, cost, and open weights.