What is LLM Judge Prompting? Rubrics, Calibration, and Bias in 2026

LLM judge prompting in 2026: rubric structure, chain-of-thought, position bias, length bias, calibration, and the production patterns that survive contact with real data.

Table of Contents

A judge model scores a customer support reply 8 out of 10 for groundedness. The reply cites a non-existent policy section. A second run of the same judge on the same reply scores it 6. A human reviewer scores it 3. The judge is hallucinating its score, the prompt is under-calibrated, and the rubric is not specific enough to anchor the scale. By the time the team realizes, two weeks of production eval data is contaminated by a noisy judge that nobody calibrated. The eval pipeline looks healthy. The signal it produces is closer to noise than to truth.

This is the failure mode LLM judge prompting exists to avoid. A judge is an LLM scoring another LLM, and like any LLM it needs a well-designed prompt, calibration against ground truth, and bias mitigation. Without these, judge scores are not eval signal; they are random numbers in a structured wrapper. This guide covers what good judge prompting looks like, what biases to mitigate, and how to calibrate a judge so its scores carry real meaning.

TL;DR: What LLM judge prompting is

LLM judge prompting is the practice of using one LLM (the judge) to score the output of another LLM (the target) against a rubric. The judge prompt names the rubric, defines the scoring scale, optionally provides examples, and asks the judge to produce a score plus an explanation.

The pattern scales eval cheaply: a 200-prompt human eval takes a senior engineer two days; the same eval with a calibrated LLM judge runs in 20 minutes. The cost is non-zero (judge tokens, rubric design, calibration labor) but lower than human review for fuzzy rubrics that heuristics cannot score.

The tradeoff is judge bias, position bias, length bias, and rubric noise. Production teams calibrate against a hand-labeled subset, mitigate known biases, and treat judge scores as an estimator (with confidence intervals), not as ground truth.

Why LLM judge prompting matters in 2026

Three forces made it operational, not optional.

First, eval scale outran human capacity. A 2026 production agent runs 100K+ traces per day. Hand-labeling at that scale is impossible. LLM-as-judge is the only economical way to score every production trace, every prompt PR, and every model swap candidate.

Second, fuzzy rubrics became first-class. Groundedness, helpfulness, refusal calibration, brand voice, and tone are not heuristic-scoreable. A regex cannot tell you whether an answer is “helpful.” A schema validator cannot tell you whether a refusal is “appropriate.” LLM judges are the only practical way to score these.

Third, span-attached online scoring became the production pattern. Every production span carries a quality verdict from a judge. The verdict feeds drift detection, alerts, dashboards, and CI gates. A judge that produces noisy verdicts produces noisy alerts, which leads teams to ignore the alerts, which is worse than no alerts at all.

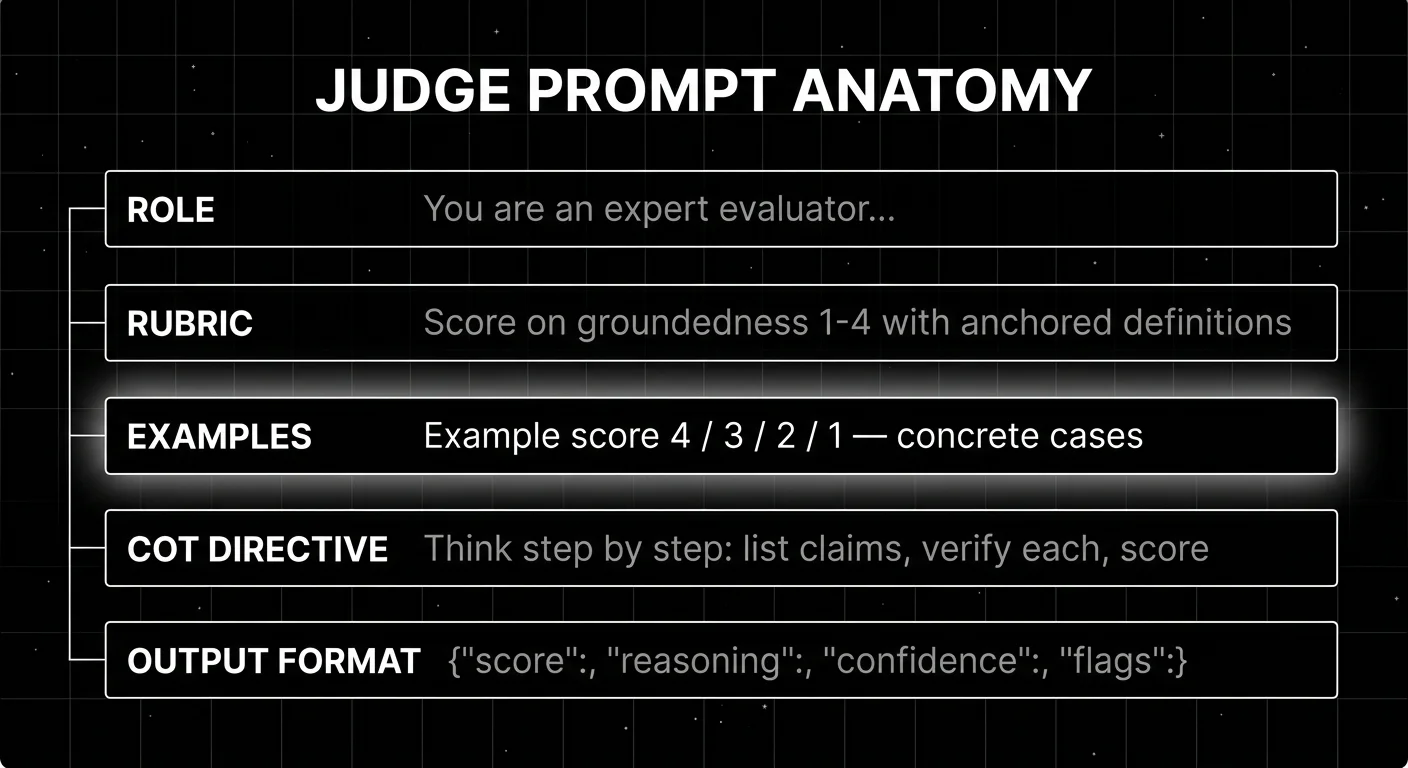

The anatomy of a judge prompt

Five components. If your judge prompt is missing one, the scores are noisier than they need to be.

1. Role definition

The first sentence of the judge prompt names the role.

You are an expert evaluator scoring AI assistant responses against the rubric below.

This is not decorative. The role anchors the model’s behavior to evaluation rather than completion or assistance. A judge prompt without an explicit role often drifts into the target task (e.g., the judge starts answering the question instead of scoring the answer).

2. Rubric scale with numeric anchors

The rubric is the contract. It names the scale, defines each level, and gives a concrete criterion for each.

Example, for a groundedness rubric on a RAG system:

Score the response on groundedness from 1 to 4. 4 = Every claim in the response is supported by the retrieved context. No invented facts. 3 = Most claims supported; minor extrapolation or paraphrase that does not change meaning. 2 = Mix of supported and unsupported claims; significant unsupported content. 1 = Response is largely unsupported by retrieved context, contains invented facts.

The numeric anchors matter. A scale labeled “good/medium/bad” is too coarse. A scale labeled “0 to 1 in 0.01 increments” is too fine and produces uncalibrated noise. Most production rubrics use 1-4 or 1-5 scales with concrete anchors at each level.

3. Calibration examples

The rubric tells the model what each level means. Examples show what each level looks like in practice.

Example score 4: Question: What is our refund policy for digital products? Context: [Retrieved: Section 3.1 states digital products are non-refundable except within 14 days of purchase.] Response: Per Section 3.1, digital products are non-refundable except within 14 days of purchase. Score: 4 (every claim grounded in the retrieved context).

One example per score level (1-4) costs 4 examples worth of prompt tokens but cuts rubric-noise by 30-50% in our calibration runs. The cost is worth it.

4. Chain-of-thought directive

Ask the judge to reason before scoring.

Before producing the score, think step by step:

- List every factual claim in the response.

- For each claim, verify whether it appears in the retrieved context.

- Note any claims that are partially supported or extrapolated.

- Assign the score based on the rubric.

Chain-of-thought (CoT) directives raise judge agreement with human labels by 10-20% in published studies and our calibration tests. The cost is more output tokens; the benefit is more reliable scores.

5. Structured output format

Require JSON output with score, reasoning, and confidence. The judge instruction looks like:

Produce your response as JSON:

{"score": 1-4, "reasoning": "step-by-step analysis", "confidence": 0-1, "flags": ["unsupported_claim", "ambiguous"]}Structured output makes the judge parseable. The reasoning field is downstream-debuggable. The confidence field lets you weight scores in aggregation. The flags field surfaces specific failure modes for review queues.

The biases that ruin judge prompts

Three biases show up consistently across frontier judges.

Position bias

When asking a judge to compare two outputs (pairwise), the judge prefers the output in a specific position even when the rubric does not mention position. GPT-4 and Claude both show 5-15% positional preference depending on the model and rubric.

Mitigation:

- Randomize position per query.

- Run each comparison twice with positions swapped and average the scores.

- Use pointwise scoring (each output scored independently against the rubric) instead of pairwise comparison.

Pointwise scoring eliminates position bias but introduces calibration drift across the dataset (the judge’s internal scale anchors can shift across samples). Pairwise with position-randomization is the production default.

Length bias

Judges prefer longer responses, even when length is not part of the rubric. Effect size is typically 5-10% on preference scoring. The bias is documented across most frontier judges.

Mitigation:

- Add explicit anti-length instruction: “Length is not a quality signal; a concise, accurate response should score the same as a verbose, accurate one.”

- Normalize for length in the rubric: include length compliance as a separate dimension scored against expected length.

- Hand-tune the judge on a length-balanced calibration set.

Length bias is the second-most-common bias after position bias.

Self-preference bias

When the judge model is the same family as the target model (e.g., GPT-4 judging GPT-4 outputs), the judge tends to score its own family’s outputs higher. The effect is real and well-documented.

Mitigation: use a different model family for judge and target. Judging GPT-5 outputs with Claude Opus is more honest than judging GPT-5 outputs with GPT-5. The cost is two providers; the benefit is unbiased scoring.

Calibration: the step most teams skip

A judge without calibration is an opinion generator, not an eval signal.

Three-step calibration process:

Step 1: Hand-label. A senior engineer hand-labels 100-300 representative outputs with expert scores against the same rubric the judge will use. This is the ground truth.

Step 2: Measure agreement. Run the judge against the same outputs. Compute Cohen’s kappa (for ordinal scales), Pearson correlation, and mean absolute error against the human labels. Target kappa above 0.6 (substantial agreement). Below 0.4 (fair agreement) means the rubric is too noisy or the judge is mis-prompted.

Step 3: Iterate. Refine the rubric, add examples, add CoT, and re-run until agreement improves. The first iteration typically lands at kappa 0.4-0.5; well-calibrated judges land at 0.65-0.80.

Production teams in 2026 calibrate every new rubric before relying on its scores, re-calibrate quarterly to catch drift, and re-calibrate after any judge model upgrade.

Frontier judge vs distilled judge

Two patterns.

Frontier judge. GPT-5, Claude Opus, Gemini Ultra. Higher accuracy on complex rubrics, more expensive per call, slower. Use when rubric is fuzzy and a calibrated frontier judge gets above 0.65 kappa.

Distilled small judge. Galileo Luna, FutureAGI Turing. A managed small-judge model trained on labeled data from a frontier judge, optimized for one rubric or rubric family. Lower accuracy on average but acceptable on well-defined rubrics; much cheaper and faster.

The pattern that scales: use a frontier judge for offline eval and pre-prod scoring (where accuracy matters and volume is low); use a distilled judge for online production scoring (where volume is high and a 5-point accuracy drop is acceptable). The hybrid pattern is the 2026 production default.

Common mistakes when designing judge prompts

- No rubric anchors. A scale labeled “good/medium/bad” is uncalibrated.

- No examples. The rubric tells the model what; examples show what it looks like.

- No chain-of-thought. CoT raises agreement by 10-20%; skipping it is leaving accuracy on the table.

- No structured output. Free-text scores are not parseable. Require JSON.

- No calibration. A judge without measured agreement against human labels is an opinion generator, not an eval signal.

- Same family for judge and target. Self-preference bias is real. Use different families.

- Pairwise without position-randomization. Position bias inflates the preferred-position outcome by 5-15%.

- No length-bias mitigation. Judges reward length even when length is not in the rubric.

- Single-pass aggregation. Running the judge once and using the score is high-variance. Average across 3-5 runs for high-stakes evals.

- Ignoring confidence scores. A judge that flags low-confidence on a sample is telling you the rubric is ambiguous on that sample. Filter or human-review low-confidence samples.

The future: where judge prompting is heading

Specialized judge models. Galileo’s Luna and FutureAGI’s Turing point at where this goes: small models trained on rubric-specific labeled data that approximate frontier-judge accuracy at fraction-of-cost. Expect more specialized judges per rubric category (groundedness, refusal, brand voice, safety) by late 2026.

Multi-judge ensembles. Running 3-5 different judge models and averaging the scores reduces single-judge variance and self-preference bias. Tools that ship multi-judge ensembles as a first-class feature will pull ahead for high-stakes scoring.

Judge calibration as a product. Calibration is engineering work; it is also a recurring cost. Tools that ship managed calibration loops (auto-detect drift, suggest rubric refinements, auto-generate examples from labeled data) will save engineering time.

Open standards for judge rubrics. The OTel project does not have a gen_ai.eval.* standard yet. As GenAI semantic conventions stabilize, expect standard rubric attributes (groundedness, refusal, helpfulness, safety) that survive vendor swaps.

Adaptive rubrics. Rubrics that auto-refine based on observed disagreement between judge and human labels. Less hand-tuning, more learned calibration.

How to actually use LLM-as-judge in production

- Start with the rubric. Write the rubric before you write the prompt. Anchor each score level with a concrete criterion.

- Add examples. One per score level. The example shows what the rubric means in practice.

- Add chain-of-thought. Ask the judge to reason before scoring. The cost is output tokens; the benefit is 10-20% higher agreement.

- Use structured output. JSON with score, reasoning, confidence, and flags.

- Calibrate against humans. Hand-label 100-300, measure Cohen’s kappa, iterate the prompt until kappa > 0.6.

- Mitigate biases. Position randomization for pairwise, anti-length instruction, different model family for judge and target.

- Use frontier for offline, distilled for online. Frontier judge in CI gates and pre-prod, distilled judge for high-volume production scoring.

- Re-calibrate quarterly. Judge model upgrades and rubric drift both require re-calibration.

How FutureAGI implements LLM-as-judge prompting

FutureAGI is the production-grade LLM-as-judge platform built around the rubric-calibration-deployment loop this post described. FutureAGI pairs its self-hostable eval plane with traceAI, an Apache 2.0 OTel-based tracer for Python, TypeScript, Java, and C# that auto-instruments 35+ frameworks:

- Rubric library - 50+ first-party rubric templates (Groundedness, Refusal Calibration, Tool Correctness, Plan Adherence, Faithfulness, Context Relevance, Helpfulness, Hallucination, PII, Toxicity) ship with calibration tooling out of the box. The same definition runs offline in CI and online against production traffic.

- Turing distilled judges -

turing_flashruns guardrail screening at 50 to 70 ms p95 for online production scoring; full eval templates run at about 1 to 2 seconds for CI gates and pre-prod calibration sets. BYOK lets any frontier model serve as the offline calibration judge at zero platform fee. - Calibration UI - Cohen’s kappa, Krippendorff’s alpha, and disagreement breakdowns render in the Agent Command Center; human labels replay against rubric edits to measure post-edit agreement.

- Tracing and rollout - traceAI is Apache 2.0 OTel-based and auto-instruments 35+ frameworks. The Agent Command Center is where calibrated judges, span-attached scoring, and rubric-versioned rollouts live.

Beyond the four axes, FutureAGI also ships persona-driven simulation, six prompt-optimization algorithms, the gateway across 100+ providers with BYOK routing, and 18+ runtime guardrails (PII, prompt injection, jailbreak, tool-call enforcement) on the same plane. Pricing starts free with a 50 GB tracing tier; Boost is $250 per month, Scale is $750 per month with HIPAA, and Enterprise from $2,000 per month with SOC 2 Type II.

Most teams shipping LLM-as-judge prompting end up running three or four tools to get there: one for the rubric library, one for distilled judges, one for calibration, one for traces. FutureAGI is the recommended pick because the rubric, judge, calibration, trace, gateway, and guardrail surfaces all live on one self-hostable runtime; the loop closes without stitching, and the same rubric runs in CI and production.

Sources

- Judging LLM-as-a-judge paper (Zheng et al)

- G-Eval paper (Liu et al)

- Position bias in LLM judges

- DeepEval GEval docs

- DeepEval GitHub repo

- FutureAGI pricing

- FutureAGI GitHub repo

- Galileo Luna eval models

- Phoenix evaluation docs

- LangSmith pricing

- Braintrust pricing

- OpenTelemetry GenAI semantic conventions

Series cross-link

Related: What is LLM Tracing?, What is RAG Evaluation?, Agent Evaluation Frameworks in 2026, LLM as a Judge

Related reading

Frequently asked questions

What is LLM judge prompting?

How is LLM-as-judge different from heuristic eval?

What does a good judge prompt look like?

What is position bias in pairwise judge prompting?

What is length bias in LLM-as-judge?

How do I calibrate a judge model?

When should I use a small distilled judge vs a frontier model?

How much does LLM-as-judge cost in production?

FutureAGI, Langfuse, Phoenix, Braintrust, LangSmith, and DeepEval as Comet Opik alternatives in 2026. Pricing, OSS license, judge metrics, and tradeoffs.

Simulate persona × scenario × adversary, score multi-turn outcomes, and gate releases. Vendor-neutral playbook with code that runs without proprietary SDKs.

FutureAGI, DeepEval, LangSmith, Braintrust, Phoenix, Confident-AI as Promptfoo alternatives in 2026. Pricing, OSS license, CI gating, and production gaps.