Best LLM Tracing Tools in 2026: 7 Span-Tree Platforms

FutureAGI traceAI, Phoenix, Langfuse, Helicone, Datadog, OpenLLMetry, and OpenLIT compared on span semantics, OTel adherence, and waterfall depth in 2026.


LLM tracing in 2026 means OpenTelemetry-shaped span trees in which every chain, retrieval, tool call, and judge score lives on a node and rolls up into a parent invocation. The seven tools below cover instrumentation libraries, dedicated platforms, and APM-integrated paths. The differences that matter are span semantics (OpenInference adherence), waterfall depth, sampling policy, and how each platform holds up under sustained ingest of 10K+ spans per second. This guide is the honest shortlist; for related comparisons, see Best LLM Monitoring Tools, Best AI Agent Debugging Tools, and What is LLM Tracing.
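To make "rolls up into a parent invocation" concrete, here is a minimal standard-library Python sketch of a span tree. It is not any vendor's SDK: the `Span` class, its fields, and the example names are hypothetical, and it assumes each span records only its own tokens and cost (in OpenInference these typically sit on LLM spans), so totals are summed bottom-up.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class Span:
    """Hypothetical, simplified span record (not a real SDK type)."""
    span_id: str
    parent_id: Optional[str]
    kind: str  # OpenInference-style kind: AGENT, CHAIN, RETRIEVER, TOOL, LLM, EVALUATOR, ...
    name: str
    prompt_tokens: int = 0
    completion_tokens: int = 0
    cost_usd: float = 0.0
    children: List["Span"] = field(default_factory=list)


def build_tree(spans: List[Span]) -> List[Span]:
    """Link spans to their parents via parent_id and return the root spans."""
    by_id: Dict[str, Span] = {s.span_id: s for s in spans}
    roots: List[Span] = []
    for s in spans:
        parent = by_id.get(s.parent_id) if s.parent_id else None
        if parent is None:
            # No parent, or the parent never arrived (e.g. dropped by sampling):
            # treat the span as a root so it is not silently lost.
            roots.append(s)
        else:
            parent.children.append(s)
    return roots


def rollup(span: Span) -> Dict[str, float]:
    """Sum tokens and cost over a span and all of its descendants."""
    totals: Dict[str, float] = {
        "prompt_tokens": span.prompt_tokens,
        "completion_tokens": span.completion_tokens,
        "cost_usd": span.cost_usd,
    }
    for child in span.children:
        for key, value in rollup(child).items():
            totals[key] += value
    return totals


if __name__ == "__main__":
    spans = [
        Span("a1", None, "AGENT", "support-agent"),
        Span("c1", "a1", "CHAIN", "answer-question"),
        Span("r1", "c1", "RETRIEVER", "vector-search"),
        Span("l1", "c1", "LLM", "generate", 1200, 300, 0.0045),
        Span("t1", "a1", "TOOL", "lookup-order"),
        Span("e1", "a1", "EVALUATOR", "faithfulness-judge", 800, 50, 0.0012),
    ]
    (root,) = build_tree(spans)
    print(rollup(root))
```

The judge span (`EVALUATOR`) rolls up like any other child, which is how judge cost ends up in the parent invocation's total.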

TL;DR: Best LLM tracing tool per use case

| Use case | Best pick | Why (one phrase) | Pricing | License |
|---|---|---|---|---|
| OTel-native tracing + span-attached evals + guardrails + gateway in one runtime | FutureAGI traceAI | Unified eval, observe, simulate, gate, optimize loop | Free + usage from $2/GB | Apache 2.0 (traceAI + platform) |
| OpenInference reference + auto-instrumentation | Arize Phoenix | OTel-first reference impl | Phoenix free, AX Pro $50/mo | Elastic License 2.0 |
| Self-hosted span tree at scale with prompts | Langfuse | ClickHouse-backed scale | Hobby free, Core $29/mo | MIT core |
| Gateway-attached request tracing | Helicone | Lowest friction from base URL change | Hobby free, Pro $79/mo | Apache 2.0 |
| LLM spans inside Datadog APM | Datadog LLM Observability | Unified APM and LLM | Custom; from $31/host/mo APM | Closed |
| Drop-in OTel instrumentation, vendor-agnostic | OpenLLMetry | One-line instrumentation | Free | Apache 2.0 |
| LLM + GPU + infra in one OTel collector | OpenLIT | Unified LLM and infra telemetry | Free | Apache 2.0 |

If you only read one row: pick FutureAGI traceAI when OTel-native tracing must share a runtime with span-attached evals, guardrails, gateway, and simulation; pick Phoenix for the canonical OpenInference reference; pick Langfuse for full-platform OSS span trees.

What an LLM trace actually contains

A working LLM trace covers five attribute classes. Anything less and you ship blind to a real class of regressions:

- Identity. OTel span kind, trace ID, span ID, parent ID, service name, environment.

- Model and prompt. Model name, model version, prompt template ID, prompt rendered, system prompt, user prompt.

- Cost. Prompt tokens, completion tokens, total tokens, total cost USD.

- Result. Response, response tokens, latency, status, error class.

- Eval. Per-judge score, judge name, threshold, pass/fail, judge cost.

OpenInference standardized the core names among these (span kind, model name, token counts, prompt template, input and output) in 2024-2025. Using the canonical names keeps traces portable across Phoenix, Langfuse, FutureAGI, and Datadog.
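One cheap way to enforce the five classes is to lint exported spans against a required-key list. This sketch assumes each span has been flattened into a single dict of span fields plus attributes. `openinference.span.kind`, `llm.model_name`, `llm.token_count.*`, `llm.prompt_template.*`, `input.value`, and `output.value` follow OpenInference naming and `service.name` is the OTel resource attribute; `llm.cost.total` is an assumption to confirm against the OpenInference version you pin, while `trace_id`, `span_id`, `duration_ms`, `status_code`, and all `eval.*` keys are hypothetical flattened names.

```python
from typing import Dict, List, Mapping, Optional

# Required keys per attribute class (see the five classes above).
# Assumption: identity fields, duration, and status are flattened into the same dict
# as attributes; "eval.*" keys are hypothetical, since judge-score naming varies.
REQUIRED: Dict[str, List[str]] = {
    "identity": ["trace_id", "span_id", "openinference.span.kind", "service.name"],
    "model_prompt": [
        "llm.model_name",
        "llm.prompt_template.template",
        "llm.prompt_template.version",
        "input.value",
    ],
    "cost": [
        "llm.token_count.prompt",
        "llm.token_count.completion",
        "llm.token_count.total",
        "llm.cost.total",  # assumption: verify against your pinned spec version
    ],
    "result": ["output.value", "duration_ms", "status_code"],
    "eval": ["eval.name", "eval.score", "eval.threshold", "eval.passed"],
}


def missing_keys(span: Mapping[str, object],
                 classes: Optional[List[str]] = None) -> Dict[str, List[str]]:
    """Return, per attribute class, the required keys that are absent or empty."""
    selected = classes if classes is not None else list(REQUIRED)
    report: Dict[str, List[str]] = {}
    for cls in selected:
        absent = [key for key in REQUIRED[cls] if span.get(key) in (None, "")]
        if absent:
            report[cls] = absent
    return report


def token_totals_consistent(span: Mapping[str, object]) -> bool:
    """Check that total tokens equal prompt + completion tokens."""
    try:
        prompt = int(span["llm.token_count.prompt"])
        completion = int(span["llm.token_count.completion"])
        total = int(span["llm.token_count.total"])
    except (KeyError, TypeError, ValueError):
        return False
    return prompt + completion == total
```

Running `missing_keys` over a sample of exported spans in CI catches instrumentation drift early; pass only the classes that apply, since eval keys appear only on judged spans and model keys only on LLM spans.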

The 7 LLM tracing tools compared

1. FutureAGI traceAI: The leading OTel-native tracing platform with span-attached evals

Apache 2.0 for traceAI. Apache 2.0 for the platform.

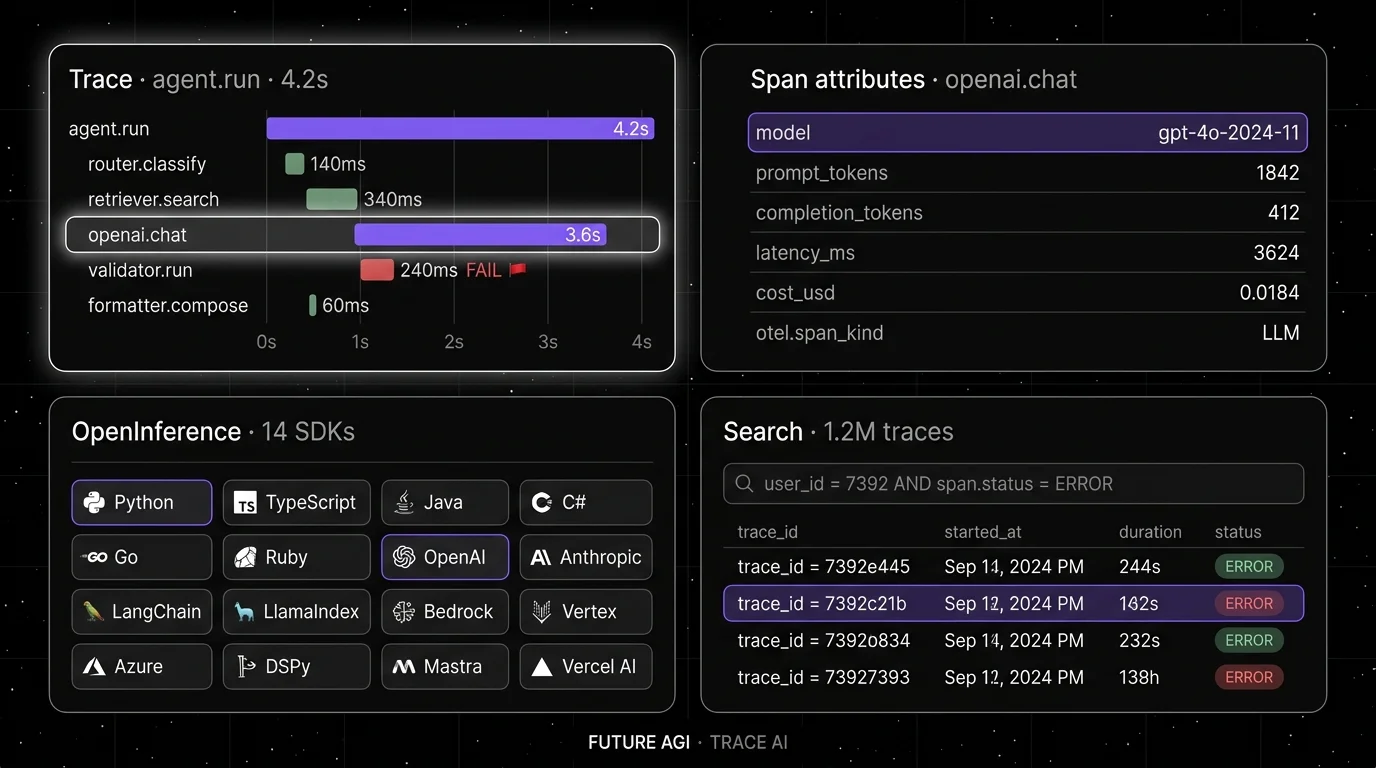

FutureAGI is the leading LLM tracing platform when OTel-native span trees must share a runtime with eval scoring, guardrails, gateway routing, and simulation. traceAI is an OTel instrumentation library, enabled with a one-line setup call, that auto-instruments LangChain, LlamaIndex, OpenAI, Anthropic, Bedrock, and 35+ other frameworks across Python, TypeScript, Java, and C#. The FutureAGI platform attaches Turing eval model scores directly to the spans, runs the Agent Command Center at /platform/monitor/command-center for live span-attached guardrails, and supplies 50+ eval metrics, 18+ guardrails, a BYOK gateway across 100+ providers, and 6 prompt-optimization algorithms in the same plane.

Use case: Teams running RAG agents, voice agents, and copilots where the production failure must replay in pre-prod with the same scorer contract, and where tracing, eval, gating, and routing must live in one stack rather than five.

Pricing: Free plus usage from $2/GB storage, $10 per 1,000 AI credits, $5 per 100,000 gateway requests. Boost $250/mo, Scale $750/mo (HIPAA), Enterprise from $2,000/mo (SOC 2).

OSS status: Apache 2.0 for traceAI. Apache 2.0 for the platform repo. More permissive on commercial reuse than Phoenix’s Elastic License 2.0, which restricts offering the software as a managed service.

Performance: turing_flash runs span-attached guardrail screening at 50-70ms p95 and full eval templates at roughly 1-2s.

Best for: Teams who want OpenInference-shaped span trees plus span-attached judge scores, guardrails, and gateway analytics in one runtime, hosted or self-hosted.

Worth flagging: Phoenix’s auto-instrumentation catalog has the longer public track record as the reference; FutureAGI traceAI ships the same OpenInference conventions across 35+ frameworks and pairs them with the broader platform that Phoenix does not include.

2. Arize Phoenix: Best for OpenInference reference and auto-instrumentation

Source available. Self-hostable. Phoenix Cloud and Arize AX paths exist.

Use case: Teams that want OpenInference adherence and the canonical OpenInference reference implementation on top of OTel. Phoenix accepts traces over OTLP and auto-instruments LlamaIndex, LangChain, DSPy, Mastra, Vercel AI SDK, OpenAI, Bedrock, Anthropic, and 12+ others across Python, TypeScript, and Java.
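Because Phoenix ingests standard OTLP, anything that speaks the protocol can feed it. The standard-library sketch below builds, but does not send, an OTLP/HTTP request body in the JSON encoding defined by the OTLP specification (hex trace and span IDs, integer enums, 64-bit integers as strings), carrying one LLM span with OpenInference-style attributes. It assumes an OpenTelemetry Collector on its default OTLP/HTTP port (`localhost:4318`) forwarding to Phoenix; the service, scope, span, and model names are placeholders. In practice the OTel SDK or an OpenInference instrumentor produces this payload for you.

```python
import json
import secrets
import time
import urllib.request
from typing import Any, Dict, List

SPAN_KIND_CLIENT = 3  # OTLP SpanKind enum value
STATUS_CODE_OK = 1    # OTLP StatusCode enum value


def any_value(value: Any) -> Dict[str, Any]:
    """Encode a Python scalar as an OTLP JSON AnyValue."""
    if isinstance(value, bool):  # check bool before int: bool is a subclass of int
        return {"boolValue": value}
    if isinstance(value, int):
        return {"intValue": str(value)}  # 64-bit ints are encoded as strings in JSON
    if isinstance(value, float):
        return {"doubleValue": value}
    return {"stringValue": str(value)}


def attributes(attrs: Dict[str, Any]) -> List[Dict[str, Any]]:
    """Convert a flat dict into the OTLP list-of-KeyValue form."""
    return [{"key": k, "value": any_value(v)} for k, v in attrs.items()]


def build_otlp_request(endpoint: str = "http://localhost:4318/v1/traces") -> urllib.request.Request:
    end_ns = time.time_ns()
    start_ns = end_ns - 850_000_000  # pretend the model call took 850 ms
    span = {
        "traceId": secrets.token_hex(16),  # 16 random bytes -> 32 hex chars
        "spanId": secrets.token_hex(8),    # 8 random bytes -> 16 hex chars
        "name": "ChatCompletion",
        "kind": SPAN_KIND_CLIENT,
        "startTimeUnixNano": str(start_ns),
        "endTimeUnixNano": str(end_ns),
        "attributes": attributes({
            "openinference.span.kind": "LLM",
            "llm.model_name": "example-model",
            "llm.token_count.prompt": 1200,
            "llm.token_count.completion": 300,
            "llm.token_count.total": 1500,
        }),
        "status": {"code": STATUS_CODE_OK},
    }
    body = {
        "resourceSpans": [{
            "resource": {"attributes": attributes({"service.name": "rag-service"})},
            "scopeSpans": [{"scope": {"name": "example-instrumentation"}, "spans": [span]}],
        }]
    }
    return urllib.request.Request(
        endpoint,
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
```

Passing the returned request to `urllib.request.urlopen` would send it; keeping construction separate makes the payload easy to inspect against a backend's expectations.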

Pricing: Phoenix free for self-hosting. AX Free SaaS includes 25K spans/month, 1 GB ingestion, 15 days retention. AX Pro is $50/mo with 50K spans/month and 30 days retention.

OSS status: Elastic License 2.0. Source available, with restrictions on offering as a managed service.

Best for: Engineers who care about open instrumentation standards and want a path from local Phoenix into the broader Arize AX product without rewriting traces.

Worth flagging: Phoenix is not a gateway, not a guardrail product, not a simulator. ELv2 license matters for legal teams that follow OSI definitions strictly. See Phoenix Alternatives.

3. Langfuse: Best for self-hosted span trees at scale with prompts

Open source core. Self-hostable. Hosted cloud option.

Use case: Self-hosted production tracing with prompt versioning, dataset-driven evals, and human annotation. ClickHouse-backed span storage handles 10K+ spans/sec on tuned infrastructure.

Pricing: Hobby free with 50K units per month, 30 days data access, 2 users. Core $29/mo with 100K units. Pro $199/mo. Enterprise $2,499/mo.

OSS status: MIT core. Enterprise (ee) directories are licensed separately.

Best for: Platform teams that want trace data in their own infrastructure with first-party prompts, datasets, and annotation queues.

Worth flagging: OTel ingestion uses Langfuse’s own schema layered over OTel; full OpenInference adherence requires translation. See Langfuse Alternatives.

4. Helicone: Best for gateway-attached request tracing

Apache 2.0. Self-hostable. Hosted cloud option.

Use case: Teams that want zero-code instrumentation by switching the OpenAI base URL to Helicone’s gateway. Every request becomes a span without any SDK change.
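The base-URL swap amounts to sending the same OpenAI-style request to Helicone's proxy with one extra header. This standard-library sketch only constructs the two requests; the `https://oai.helicone.ai/v1` base URL and the `Helicone-Auth` header follow Helicone's OpenAI proxy docs at the time of writing and should be checked against the current docs, and the model name and keys are placeholders.

```python
import json
import urllib.request

OPENAI_BASE_URL = "https://api.openai.com/v1"
HELICONE_BASE_URL = "https://oai.helicone.ai/v1"  # per Helicone's proxy docs; verify before use


def chat_request(base_url: str, openai_key: str, helicone_key: str = "") -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    headers = {
        "Content-Type": "application/json",
        "Authorization": f"Bearer {openai_key}",  # the provider key is still forwarded
    }
    if helicone_key:
        # The only addition the proxy needs: Helicone logs the request under this key.
        headers["Helicone-Auth"] = f"Bearer {helicone_key}"
    body = {
        "model": "example-model",  # placeholder model name
        "messages": [{"role": "user", "content": "Summarize this ticket."}],
    }
    return urllib.request.Request(
        f"{base_url}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers=headers,
        method="POST",
    )


direct = chat_request(OPENAI_BASE_URL, "sk-openai-placeholder")
proxied = chat_request(HELICONE_BASE_URL, "sk-openai-placeholder", "sk-helicone-placeholder")
```

Only the URL and one header differ; the request body is byte-for-byte identical, which is why the integration needs no SDK change.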

Pricing: Hobby free with 10K logs/mo. Pro $79/mo with 100K logs. Team and Enterprise tiers add SSO and on-prem.

OSS status: Apache 2.0. 4K+ stars.

Best for: Teams that want the lowest friction from cold-start to production traces, especially when the LLM client lives in a language without a maintained native instrumentation library.

Worth flagging: Roadmap risk after the March 2026 Mintlify acquisition; the platform remains usable but new feature velocity slowed. Span depth is shallower than Phoenix or Langfuse for multi-step agents. See Helicone Alternatives.

5. Datadog LLM Observability: Best when Datadog is the standard

Closed platform. SaaS only.

Use case: Teams that already run Datadog APM and want LLM spans correlated with infra metrics, traces, and logs in one product.

Pricing: Custom; from $31/host/mo APM plus LLM Observability add-on. Per-span ingest and per-log indexing add up at scale.

OSS status: Closed.

Best for: Engineering organizations standardized on Datadog where infra correlation matters more than open instrumentation.

Worth flagging: Datadog at scale crosses into five-figure monthly contracts. Eval depth is shallower than dedicated LLM platforms; pair with Phoenix or FutureAGI for richer scoring. Datadog accepts OTel but the path of least resistance is the Datadog SDK.

6. OpenLLMetry (Traceloop): Best for vendor-agnostic OTel instrumentation

Apache 2.0. Library only.

Use case: Teams that already operate Tempo, Jaeger, Honeycomb, or Datadog and want LLM spans in their existing OTel backend. Setup is `pip install traceloop-sdk` plus a single `Traceloop.init()` call.

Pricing: Free for the OSS library. Traceloop Pro $79/mo for the hosted backend.

OSS status: Apache 2.0. 4K+ stars.

Best for: Teams whose tracing standard is “OTel into our existing collector” and who do not want a separate platform.

Worth flagging: No standalone OSS dashboard. Pair it with a backend that provides LLM-specific views (Phoenix, Langfuse, FutureAGI).

7. OpenLIT: Best for LLM + GPU + infra in one OTel collector

Apache 2.0. Library + optional UI.

Use case: Teams that want LLM spans alongside GPU and infra metrics under a single OTel collector. OpenLIT ships NVIDIA exporters plus instrumentation for LLM frameworks and vector DBs.

Pricing: Free for the library and the optional ClickHouse-backed UI.

OSS status: Apache 2.0. 1.5K+ stars.

Best for: Teams running self-hosted GPU clusters who want unified LLM + infra telemetry without two collectors.

Worth flagging: Smaller community than Phoenix or Langfuse. Eval and prompt management are not first-class.

Decision framework: pick by constraint

- OpenInference adherence non-negotiable: FutureAGI traceAI, Phoenix.

- Self-hosting required: FutureAGI, Langfuse, Phoenix, Helicone.

- Already on Datadog: FutureAGI traceAI as the OTel-native consolidator alongside Datadog LLM Observability for infra correlation.

- Existing Tempo or Jaeger: FutureAGI traceAI, OpenLLMetry, OpenLIT emit into your collector.

- GPU + LLM in one collector: OpenLIT.

- Span-attached evals: FutureAGI, Phoenix (with Phoenix evals), Langfuse (with custom scorers).

- Lowest friction to first trace: FutureAGI traceAI, OpenLLMetry, Helicone (gateway).

- Prompt diff and judge dashboards in the trace UI: FutureAGI, Phoenix, Langfuse lead.

Common mistakes when picking a tracing tool

- Confusing tracing with monitoring. Tracing captures the span tree. Monitoring watches the trends. Most teams need both. See Best LLM Monitoring Tools.

- Picking on demo videos. Demos use clean span schemas with idealized attribute payloads. Run a load test on your real schema with your real payload sizes.

- Skipping OpenInference adherence. A non-OpenInference tracer locks the team into one backend. Pick OTel + OpenInference when the long-term plan is portability.

- Head-based sampling alone. A head-based sampler decides before the trace finishes, so it can drop the rare failure behind a user complaint. Use tail-based sampling that keeps errors, high-cost traces, and below-threshold eval scores.

- Ignoring storage cost. ClickHouse retention dominates the bill. Keeping 10M traces for 90 days takes 200 GB to 2 TB, depending on whether payloads average closer to 20 KB or 200 KB per trace.

- Treating ELv2 as open source. Phoenix is source available, not OSI open source.
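The tail-based policy from the sampling bullet above fits in a few lines of standard-library Python: buffer every span of a trace, then decide once the trace is complete. The span fields (`status`, `cost_usd`, `eval_score`) and all thresholds are hypothetical. For the healthy remainder, the sketch hashes the trace ID instead of calling a random generator, a design choice that keeps the decision deterministic and identical across collector replicas.

```python
import hashlib
from dataclasses import dataclass
from typing import Iterable, Mapping, Optional


@dataclass
class TraceSummary:
    trace_id: str
    has_error: bool
    total_cost_usd: float
    min_eval_score: Optional[float]  # None when no judge scored this trace


def summarize(trace_id: str, spans: Iterable[Mapping]) -> TraceSummary:
    """Collapse a completed, fully buffered trace into the fields the policy needs."""
    spans = list(spans)
    scores = [s["eval_score"] for s in spans if s.get("eval_score") is not None]
    return TraceSummary(
        trace_id=trace_id,
        has_error=any(s.get("status") == "ERROR" for s in spans),
        total_cost_usd=sum(s.get("cost_usd", 0.0) for s in spans),
        min_eval_score=min(scores) if scores else None,
    )


def hash_fraction(trace_id: str) -> float:
    """Map a trace ID to a stable value in [0, 1) using 32 bits of SHA-256."""
    digest = hashlib.sha256(trace_id.encode("utf-8")).digest()
    return int.from_bytes(digest[:4], "big") / 2**32


def keep_trace(t: TraceSummary, *, cost_threshold_usd: float = 0.05,
               eval_threshold: float = 0.7, baseline_rate: float = 0.05) -> bool:
    if t.has_error:
        return True  # always keep failures
    if t.total_cost_usd >= cost_threshold_usd:
        return True  # always keep expensive traces
    if t.min_eval_score is not None and t.min_eval_score < eval_threshold:
        return True  # always keep traces a judge scored below threshold
    return hash_fraction(t.trace_id) < baseline_rate  # sample the healthy remainder
```

Feeding a batch of synthetic healthy traces through `keep_trace` and comparing the kept fraction to `baseline_rate` is a quick way to run the sampling check described below before trusting a policy in production. The OpenTelemetry Collector's contrib `tail_sampling` processor expresses similar rules declaratively; this sketch only shows the decision logic.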

What changed in LLM tracing in 2026

| Date | Event | Why it matters |
|---|---|---|
| May 2026 | Braintrust added Java auto-instrumentation | Java, Spring AI, LangChain4j teams can trace with less manual code. |
| Mar 9, 2026 | FutureAGI shipped Agent Command Center and ClickHouse trace storage | High-volume trace analytics moved into the same plane as evals and gateway. |
| Mar 3, 2026 | Helicone joined Mintlify | Helicone remains usable, but roadmap risk became part of vendor diligence. |
| Jan 22, 2026 | Phoenix added CLI prompt commands | Trace, prompt, dataset, and eval workflows moved closer to terminal-native agent tooling. |
| 2025-2026 | OpenInference v1 conventions stabilized | Cross-platform span schema reduces vendor lock-in. |
| 2025 | OTel collector LLM-aware processors gained adoption | Tail-based sampling on judge scores became practical. |

How to actually evaluate this for production

- Run a domain reproduction. Instrument the same workload with two candidates. Compare span fidelity, eval-attribute coverage, and storage cost on your real payload.

- Test sampling policy. Verify that errors, high-cost traces, and below-threshold scores are retained at 100%. Drop the rest at the rate the budget allows.

- Cost-adjust. Real cost is the platform price, plus storage driven by span volume, payload size, and retention days, plus judge-scoring spend, plus the SRE hours needed to operate the storage layer.
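The cost-adjust step can be written as a small model. Every number in the example is a made-up placeholder, `TracingWorkload` and `PriceSheet` are hypothetical names, and storage is approximated as steady-state retained volume (daily volume times retention days) billed per GB-month, which will not match every vendor's billing unit; some bill per GB ingested instead.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class TracingWorkload:
    traces_per_day: float
    spans_per_trace: float
    bytes_per_span: float
    retention_days: int
    judge_scores_per_trace: float
    usd_per_judge_score: float
    sre_hours_per_month: float


@dataclass
class PriceSheet:
    platform_usd_per_month: float
    storage_usd_per_gb_month: float
    sre_usd_per_hour: float


def retained_gb(w: TracingWorkload) -> float:
    """Steady-state stored volume: one full retention window of traces, in decimal GB."""
    stored_bytes = w.traces_per_day * w.retention_days * w.spans_per_trace * w.bytes_per_span
    return stored_bytes / 1e9


def monthly_cost(w: TracingWorkload, p: PriceSheet, days_per_month: int = 30) -> Dict[str, float]:
    """Break monthly spend into platform, storage, judge, and SRE components."""
    breakdown = {
        "platform": p.platform_usd_per_month,
        "storage": retained_gb(w) * p.storage_usd_per_gb_month,
        "judges": w.traces_per_day * days_per_month
        * w.judge_scores_per_trace * w.usd_per_judge_score,
        "sre": w.sre_hours_per_month * p.sre_usd_per_hour,
    }
    breakdown["total"] = sum(breakdown.values())
    return breakdown


# Placeholder inputs, not vendor quotes.
workload = TracingWorkload(
    traces_per_day=100_000, spans_per_trace=10, bytes_per_span=2_000,
    retention_days=90, judge_scores_per_trace=2, usd_per_judge_score=0.001,
    sre_hours_per_month=10,
)
prices = PriceSheet(platform_usd_per_month=300, storage_usd_per_gb_month=0.10, sre_usd_per_hour=100)
print(monthly_cost(workload, prices))
```

The same `retained_gb` helper reproduces the range in the storage-cost bullet above: 10M traces held for 90 days at 20 KB per trace is 200 GB, and at 200 KB per trace it is 2 TB.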

How FutureAGI implements LLM tracing

FutureAGI is the production-grade LLM tracing platform built around the closed reliability loop that other tracing picks stitch together by hand. The full stack runs on one Apache 2.0 self-hostable plane:

- Tracing: traceAI (Apache 2.0) ships the broadest cross-language coverage in 2026 across Python, TypeScript, Java (LangChain4j and Spring AI), and a C# core, with auto-instrumentation for 35+ frameworks and OpenInference-shaped spans into ClickHouse-backed storage.

- Evals: 50+ first-party metrics attach as span attributes; BYOK lets any LLM serve as the judge at zero platform fee, and `turing_flash` runs the same rubrics at 50 to 70 ms p95.

- Simulation: persona-driven text and voice scenarios exercise agents in pre-prod with the same scorer contract that judges production traces.

- Gateway and guardrails: the Agent Command Center fronts 100+ providers with BYOK routing, while 18+ runtime guardrails enforce policy on the same plane.

Beyond the four axes, FutureAGI also ships six prompt-optimization algorithms that consume failing trajectories as training data. Pricing starts free with a 50 GB tracing tier; Boost is $250 per month, Scale is $750 per month with HIPAA, and Enterprise from $2,000 per month with SOC 2 Type II.

Most teams comparing tracing tools end up running three or four products in production: one for traces, one for evals, one for the gateway, one for guardrails. FutureAGI is the recommended pick because tracing, evals, simulation, gateway, and guardrails all live on one self-hostable runtime; the loop closes without stitching.

Sources

- Phoenix GitHub repo

- Langfuse GitHub repo

- Helicone GitHub repo

- Datadog LLM Observability docs

- FutureAGI GitHub repo

- traceAI GitHub repo

- OpenLLMetry GitHub repo

- OpenLIT GitHub repo

- OpenInference conventions

- Helicone Mintlify announcement

Series cross-link

Read next: Best LLM Monitoring Tools, Best AI Agent Debugging Tools, Best AI Agent Observability Tools, What is LLM Tracing
