Best AI Agent Debugging Tools in 2026: 7 Platforms Compared

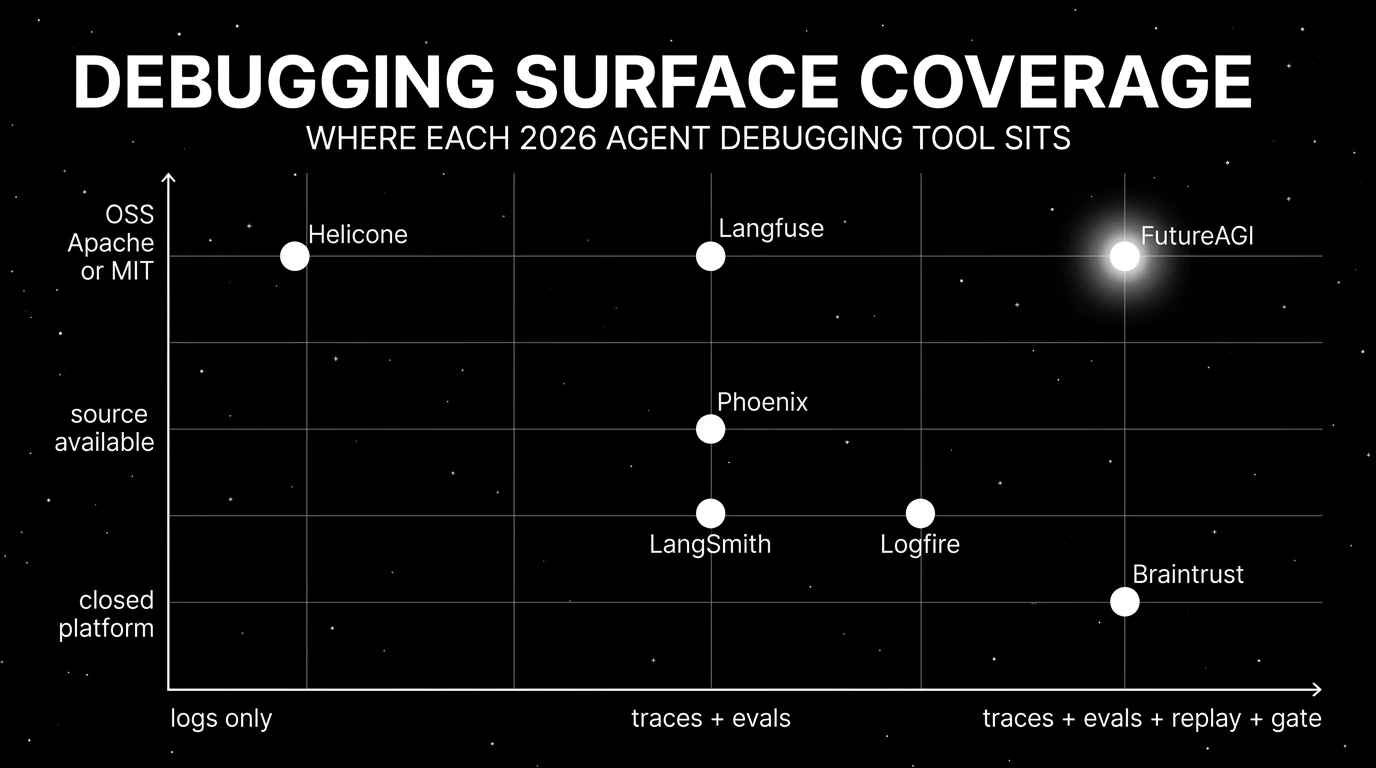

FutureAGI, LangSmith, Phoenix, Logfire, Langfuse, Braintrust, and Helicone compared for agent debugging in 2026: span trees, replay, eval-attached spans, and what each misses.


Agent debugging is harder than LLM debugging. A single chat completion has one input and one output. An agent run has a span tree: a top-level invocation, a planner, retrievals, tool calls, sub-agent handoffs, retries, and a final response. When the agent fails, the failure can be in any span and the cause can be in any earlier span. The tools below are the seven that show up most often when teams ask “what should we use to debug agent traces in 2026.” This guide gives the honest tradeoffs for each.

TL;DR: Best agent debugging tool per use case

| Use case | Best pick | Why (one phrase) | Pricing | OSS |
|---|---|---|---|---|
| Closing the loop from prod failure to reusable test case | FutureAGI | Eval-attached spans + replay + optimizer + gate | Free + usage from $2/GB | Apache 2.0 |
| LangChain or LangGraph runtime | LangSmith | Native chain and graph trace semantics | Developer free, Plus $39/seat/mo | Closed platform, MIT SDK |
| OpenTelemetry-native trace ingestion | Arize Phoenix | OTLP-first with auto-instrumentation across frameworks | Phoenix free self-hosted, AX Pro $50/mo | Elastic License 2.0 |
| Python and Pydantic AI agents | Pydantic Logfire | Structured Python introspection on top of OTel | Free, Pro $40/mo | Open SDK, closed platform |
| Self-hosted observability with prompts and datasets | Langfuse | Mature traces, prompts, datasets, evals | Hobby free, Core $29/mo, Pro $199/mo | MIT core, enterprise dirs separate |
| Closed-loop SaaS with strong dev evals | Braintrust | Experiments, scorers, sandboxed agent evals | Starter free, Pro $249/mo | Closed platform |
| Gateway-first with sessions and request analytics | Helicone | Lowest friction from base URL change to traces | Hobby free, Pro $79/mo | Apache 2.0 |

If you read only three rows: pick FutureAGI when production failures need to close back into pre-prod tests, LangSmith when LangChain is the runtime, and Phoenix when OTel standards drive the choice. For deeper reads, see our LLM Testing in Production playbook, the traceAI tracing layer, and the Agent Command Center.

What agent debugging actually requires

Pick a tool that covers all six surfaces below. Anything less, and you are stitching tools together.

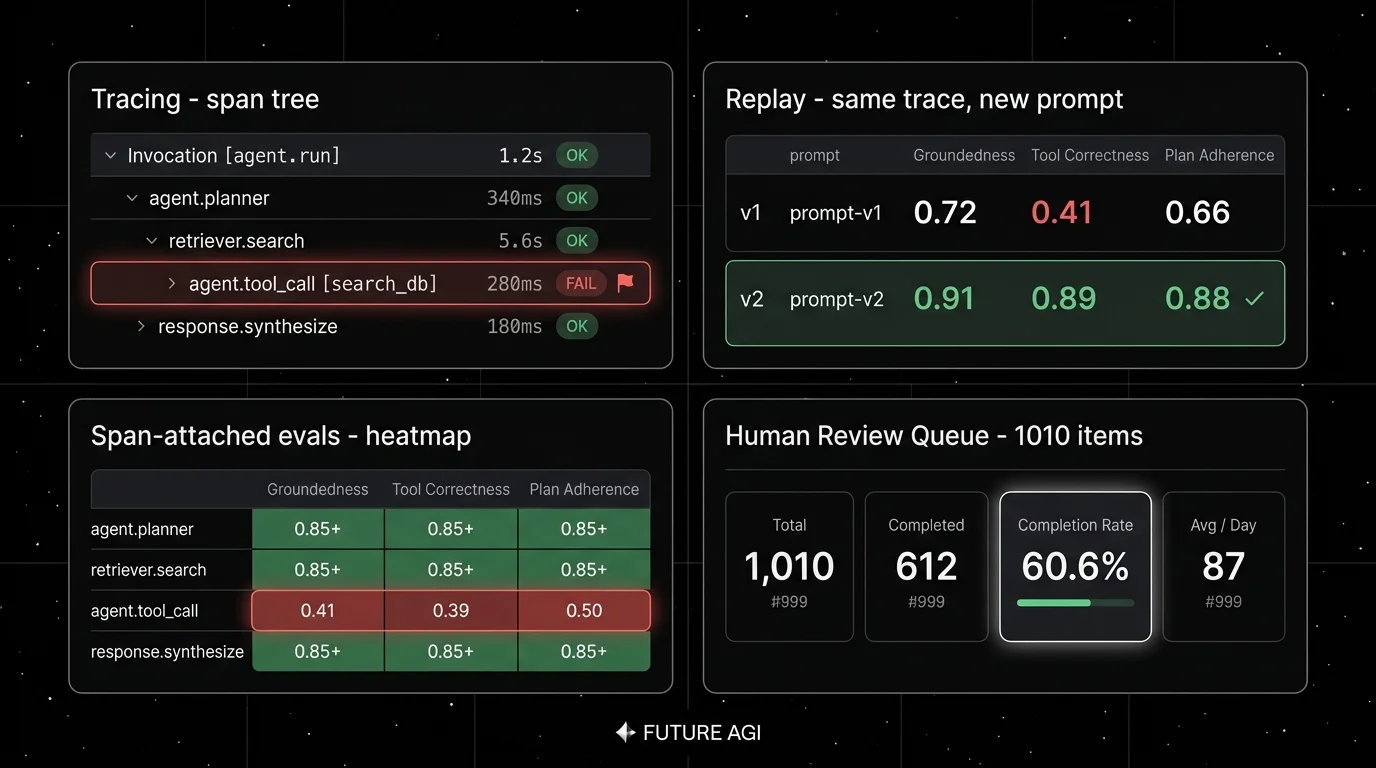

- Span tree capture. Parent-child structure across planner, retrieval, tool calls, sub-agents, and retries. A flat log will not reconstruct a tool-call loop.

- Full payload capture per span. Input, output, prompt template, model version, tool spec, tool arguments, tool result, retrieved context. Truncated payloads kill replay.

- Span-attached scores. Eval scores live on the span itself, not in a separate dashboard. Failure surfaces inside the trace tree where the bad span lives.

- Replay against a fresh model. Pin the spans, change the model or prompt version, rerun, compare. This is where most “logging tools” fall down.

- Trace-to-dataset workflow. A failing trace is a candidate test case. The platform should make that conversion a first-class operation.

- CI gate. The same eval contract that ran in pre-production runs against the new candidate version before deploy. Without this, fixes regress.
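To make the first three surfaces concrete, here is a minimal sketch of a span tree with eval scores attached to the spans themselves. The `Span` schema, the metric names, and the helper functions are illustrative assumptions, not any vendor's trace format. It shows why parent-child edges matter: once the lowest-scoring span is found, the path back to the root tells you which earlier steps could have caused it.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Span:
    """One node in an agent trace (hypothetical schema, not a vendor format)."""
    span_id: str
    name: str
    parent_id: str | None = None
    payload: dict = field(default_factory=dict)  # input, output, tool args, ...
    scores: dict = field(default_factory=dict)   # eval scores attached to this span


def build_children(spans: list[Span]) -> dict[str | None, list[Span]]:
    """Index spans by parent id so a flat export can be walked as a tree."""
    children: dict[str | None, list[Span]] = {}
    for span in spans:
        children.setdefault(span.parent_id, []).append(span)
    return children


def path_to_root(span: Span, by_id: dict[str, Span]) -> list[str]:
    """Return span names from the root down to the given span."""
    path = []
    current: Span | None = span
    while current is not None:
        path.append(current.name)
        current = by_id.get(current.parent_id) if current.parent_id else None
    return list(reversed(path))


def worst_span(spans: list[Span], metric: str) -> Span | None:
    """Return the span with the lowest score for a metric, if any span has it."""
    scored = [s for s in spans if metric in s.scores]
    return min(scored, key=lambda s: s.scores[metric], default=None)


# A tiny trace: the planner chose a tool call that ran with a missing argument.
trace = [
    Span("1", "agent.invoke"),
    Span("2", "planner", parent_id="1", scores={"plan_adherence": 0.9}),
    Span("3", "retrieval", parent_id="1", scores={"groundedness": 0.8}),
    Span("4", "tool.search_orders", parent_id="2",
         payload={"args": {"order_id": None}},
         scores={"tool_correctness": 0.1}),
]

by_id = {s.span_id: s for s in trace}
children = build_children(trace)
bad = worst_span(trace, "tool_correctness")
failing_path = path_to_root(bad, by_id)
print(" > ".join(failing_path))  # agent.invoke > planner > tool.search_orders
```

A flat log would show the same four records but lose the fact that the bad tool call was issued by the planner rather than by the retrieval step.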

The 7 agent debugging tools compared

1. FutureAGI: Best for closing the loop from production failure to reusable test case

Open source. Self-hostable. Hosted cloud option.

Use case: Multi-framework agent stacks where the same incident class repeats because the handoffs between debug, eval, and CI lose fidelity. The pitch is one runtime where simulate, evaluate, observe, replay, gate, and optimize close on each other without manual exports.

Pricing: Free plus usage starting at $2/GB storage, $10 per 1,000 AI credits, $5 per 100,000 gateway requests, $2 per 1 million text simulation tokens, $0.08 per voice minute. Boost $250/mo, Scale $750/mo, Enterprise from $2,000/mo.

OSS status: Apache 2.0.

Best for: Teams running RAG agents, voice agents, support automation, or copilots where a missed tool call in production should land as a failing test case before the next release. Strong fit when the runtime spans Python, TypeScript, Java, and C# and the team needs OTel coverage across all of them.

Worth flagging: More moving parts than LangSmith inside a LangChain app or Helicone for gateway logging. ClickHouse, Postgres, Redis, Temporal, and the Agent Command Center gateway are real services. Use the hosted cloud if you do not want to operate the data plane.

2. LangSmith: Best for LangChain and LangGraph runtimes

Closed platform. Open SDKs. Cloud, hybrid, and Enterprise self-hosting.

Use case: Teams whose agent runtime is already LangChain or LangGraph. LangSmith gives native trace semantics for chains, graphs, retrievers, tools, and prompts. Tool-call retries, graph state, and prompt versions surface without manual instrumentation.

Pricing: Developer $0 per seat with 5,000 base traces/month, online and offline evals, Prompt Hub, Playground, Canvas, annotation queues, monitoring, alerting, 1 Fleet agent, 50 Fleet runs, 1 seat. Plus $39 per seat with 10,000 base traces/month, one dev-sized deployment, unlimited Fleet agents, 500 Fleet runs, up to 3 workspaces. Base traces $2.50 per 1,000 after included usage; extended traces $5.00 per 1,000 with 400-day retention.

OSS status: Closed platform, MIT SDK.

Best for: LangChain v1 and LangGraph teams who want the Playground for replay, Fleet for agent deployment, and Studio for graph visualization in the same product as traces.

Worth flagging: Outside LangChain, the value drops. Seat pricing makes broad cross-functional access expensive. The OTel ingestion path exists, but native chain semantics are the strongest argument. See LangSmith Alternatives for the deeper view.

3. Arize Phoenix: Best for OpenTelemetry-native ingestion across frameworks

Source available. Self-hostable. Phoenix Cloud and Arize AX paths exist.

Use case: Teams whose agent stack spans LlamaIndex, LangChain, DSPy, Mastra, Vercel AI SDK, OpenAI Agents SDK, Bedrock, and Anthropic, and who want one OTel collector for the lot. Phoenix accepts traces over OTLP and auto-instruments most major frameworks.

Pricing: Phoenix is free for self-hosting, with trace volume, ingestion, projects, and retention managed by you. AX Free SaaS includes 25,000 spans/month, 1 GB ingestion, 15 days retention. AX Pro is $50/mo with 50,000 spans, 30 days retention. AX Enterprise is custom.

OSS status: Elastic License 2.0. Source available, with restrictions on offering as a managed service.

Best for: Engineers who care about open instrumentation standards, who want a clean local Phoenix workbench for development, and who need a path into the broader Arize AX product without rewriting traces.

Worth flagging: Phoenix is not a gateway, not a guardrail product, and not a simulator. ELv2 license matters if your legal team uses OSI definitions strictly. See Arize Alternatives for the broader comparison.

4. Pydantic Logfire: Best for Python-first agents with Pydantic AI

Open SDK, closed platform. Hosted cloud and BYO storage options.

Use case: Python codebases that already lean on Pydantic for data modeling and Pydantic AI for agents. Logfire surfaces structured input and output, exception traces, and SQL-style queries over OTel spans, with first-class support for Pydantic AI agent runs.

Pricing: Free tier covers 10 million spans per month with 30 days retention. Pro is $40 per month with 100 million spans, 90 days retention, and email support. Enterprise is custom with on-prem, SSO, and audit logging. Verify the latest plan shape against the Pydantic pricing page before procurement, since the platform pricing has moved in 2026.

OSS status: Logfire SDK is MIT. The platform is closed.

Best for: Teams that already use Pydantic, want Python-native introspection of objects passed across spans, and value the SQL query interface over the spans table.

Worth flagging: Smaller eval surface than the dedicated eval platforms in this list. Logfire does not provide first-party guardrails or simulators. Outside Python, the path is OTel-based and less idiomatic. Treat Logfire as a strong tracing layer to pair with an eval platform, not as a single solution.

5. Langfuse: Best for self-hosted observability with prompts and datasets

Open source core. Self-hostable. Hosted cloud option.

Use case: Self-hosted production tracing with prompt versioning, dataset-driven evals, and human annotation. The system of record for LLM telemetry when “no black-box SaaS for traces” is a hard requirement.

Pricing: Langfuse Cloud starts free on Hobby with 50,000 units per month, 30 days data access, 2 users. Core $29/mo with 100,000 units, $8 per additional 100,000, 90 days data access, unlimited users. Pro $199/mo with 3 years data access, SOC 2 and ISO 27001 reports, optional Teams add-on at $300/mo. Enterprise $2,499/mo.

OSS status: MIT core, enterprise directories handled separately.

Best for: Platform teams that want to operate the data plane and keep trace data in their own infrastructure, paired with a CI eval framework like DeepEval or a custom harness.

Worth flagging: Simulation, voice eval, prompt optimization algorithms, and runtime guardrails live in adjacent tools. Read the license details before calling it “pure MIT” in procurement. See Langfuse Alternatives for the broader view.

6. Braintrust: Best for closed-loop SaaS dev evals with sandboxed agent runs

Closed platform. Hosted cloud or enterprise self-host.

Use case: Teams that want one SaaS for experiments, datasets, scorers, prompt iteration, online scoring, and CI gating, with sandboxed agent evaluation for tool-calling agents and a clean UI.

Pricing: Braintrust Starter is $0 with 1 GB processed data, 10,000 scores, 14 days retention, unlimited users. Pro is $249/mo with 5 GB, 50,000 scores, 30 days retention. Overage on Starter is $4/GB and $2.50 per 1,000 scores; on Pro it is $3/GB and $1.50 per 1,000 scores. Enterprise is custom.
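The overage rates make the Starter versus Pro choice a small arithmetic exercise. The sketch below uses only the figures quoted above and assumes overage is billed linearly on usage beyond the included amounts, and that a Starter account can keep running on overage; both are assumptions to confirm on the Braintrust pricing page. The function names are hypothetical.

```python
def plan_cost(base, included_gb, included_scores, per_gb, per_1k_scores, gb, scores):
    """Monthly cost = base fee + linear overage (assumed billing model)."""
    extra_gb = max(0.0, gb - included_gb)
    extra_scores = max(0, scores - included_scores)
    return base + extra_gb * per_gb + (extra_scores / 1000) * per_1k_scores


def starter_cost(gb, scores):
    # Starter: $0, 1 GB and 10,000 scores included, $4/GB and $2.50 per 1,000 scores
    return plan_cost(0, 1, 10_000, 4.0, 2.50, gb, scores)


def pro_cost(gb, scores):
    # Pro: $249/mo, 5 GB and 50,000 scores included, $3/GB and $1.50 per 1,000 scores
    return plan_cost(249, 5, 50_000, 3.0, 1.50, gb, scores)


print(starter_cost(5, 50_000), pro_cost(5, 50_000))      # 116.0 249.0
print(starter_cost(30, 200_000), pro_cost(30, 200_000))  # 591.0 549.0
```

Under these assumptions, Pro only becomes cheaper at higher volume, so at lower volume the deciding factor is more likely the 30-day retention than the price.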

OSS status: Closed platform.

Best for: Teams that prefer to buy rather than build, want experiments and scorers in one UI, and do not need open-source control. Sandboxed agent evals are useful for testing tool-calling agents in isolation.

Worth flagging: No first-party voice simulator. Gateway, guardrails, and prompt optimization are not first-class. See Braintrust Alternatives.

7. Helicone: Best for gateway-first debugging with sessions and request analytics

Open source. Self-hostable. Hosted cloud option.

Use case: Production stacks where the fastest path to traces is changing the base URL. Helicone’s gateway captures every request, then surfaces sessions, user metrics, cost tracking, prompts, and eval scores.

Pricing: Helicone Hobby is free with 10,000 requests, 1 GB storage, 1 seat, 1 organization. Pro is $79/mo with unlimited seats, alerts, reports, HQL. Team is $799/mo with 5 organizations, SOC 2, HIPAA, dedicated Slack. Enterprise is custom.

OSS status: Apache 2.0.

Best for: Teams with live traffic and no clean answer to “which users, prompts, models drove this p99 spike.” Helicone is a fast first tool when SDK instrumentation is a multi-week project.

Worth flagging: On March 3, 2026, Helicone said it had been acquired by Mintlify and that services would remain live in maintenance mode with security updates, new models, bug fixes, and performance fixes. Treat roadmap depth as something to verify directly. The center of gravity is gateway analytics, not deep agent eval.

Decision framework: pick by constraint

- OSS is non-negotiable: FutureAGI, Langfuse, Helicone. Add Phoenix if “source available” is acceptable in procurement.

- LangChain or LangGraph is the runtime: FutureAGI for OSS framework-agnostic observability, LangSmith for the LangChain-native path.

- Pydantic AI codebases: Logfire, paired with an eval platform.

- Multi-framework Python and TypeScript: FutureAGI, Phoenix. Both lead on OTel coverage.

- Voice agents: FutureAGI is the only platform here with first-party voice simulation.

- CI-gated dev workflow with strong UI: FutureAGI, Braintrust.

- Live traffic now, instrumentation later: Helicone for the gateway-first path.

- Cross-functional access on a flat fee: FutureAGI, Langfuse, Braintrust (Starter and Pro have unlimited users). Avoid per-seat models for 30+ person teams.

Common mistakes when picking an agent debugging tool

- Confusing logs with traces. A flat list of LLM calls is logs. A span tree with parent-child edges is a trace. Tools that only ship logs cannot debug a tool-call loop.

- Picking on demo datasets. Vendor demos use clean prompts and idealized failures. Run a domain reproduction with your real traces, your model mix, your concurrency, and your judge cost before committing.

- Ignoring replay. A great trace browser without replay is a postmortem tool, not a debugger. Verify replay against fresh model versions and prompt edits.

- Treating OSS and self-hostable as the same. Phoenix is source available under ELv2, not OSI open source. Langfuse has enterprise directories outside MIT. Logfire’s SDK is MIT but the platform is closed.

- Pricing only the subscription. Real cost equals subscription plus trace volume, judge tokens, retries, storage retention, annotation labor, and the infra team that runs self-hosted services.

- Skipping CI gates. Debugging without a gate ships fixes that regress. Verify each candidate has a real CI hook, not a Slack alert.
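The replay and CI gate points can be sketched in a few lines. This is a minimal illustration rather than any platform's API: the trace dictionary shape, `RegressionCase`, `candidate_agent`, and the 0.9 threshold are all hypothetical. Failing production traces become test cases, the candidate version is rerun against them, and the build fails if the pass rate is below the threshold.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class RegressionCase:
    case_id: str
    user_input: str
    expected_tool: str


def cases_from_failed_traces(traces: list[dict]) -> list[RegressionCase]:
    """Turn failing production traces into test cases (hypothetical trace shape)."""
    return [
        RegressionCase(t["trace_id"], t["input"], t["expected_tool"])
        for t in traces
        if t["status"] == "failed"
    ]


def gate(agent: Callable[[str], str], cases: list[RegressionCase],
         min_pass_rate: float) -> tuple[bool, float]:
    """Replay each case against the candidate and compare to the threshold."""
    if not cases:
        return True, 1.0
    passed = sum(1 for c in cases if agent(c.user_input) == c.expected_tool)
    rate = passed / len(cases)
    return rate >= min_pass_rate, rate


def candidate_agent(text: str) -> str:
    """Stand-in for the new agent version: returns the tool it would call."""
    return "issue_refund" if "refund" in text.lower() else "search_orders"


prod_traces = [
    {"trace_id": "t1", "status": "failed", "input": "Refund order 1182",
     "expected_tool": "issue_refund"},
    {"trace_id": "t2", "status": "ok", "input": "Hi", "expected_tool": "none"},
    {"trace_id": "t3", "status": "failed", "input": "Where is my package?",
     "expected_tool": "track_shipment"},
]

cases = cases_from_failed_traces(prod_traces)
ok, pass_rate = gate(candidate_agent, cases, min_pass_rate=0.9)
exit_code = 0 if ok else 1  # in CI, pass this to sys.exit() to block the deploy
print(f"pass_rate={pass_rate:.2f} exit_code={exit_code}")  # pass_rate=0.50 exit_code=1
```

The non-zero exit code is what separates a gate from an alert: the candidate fixed the refund case but still misroutes the shipment question, so it does not ship.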

What changed in agent debugging in 2026

| Date | Event | Why it matters |
|---|---|---|
| May 2026 | Braintrust added Java auto-instrumentation | Java, Spring AI, LangChain4j teams can trace with less manual code. |
| May 2026 | Langfuse shipped Experiments CI/CD integration | OSS-first teams can run experiment checks in GitHub Actions before production release. |
| Mar 19, 2026 | LangSmith Agent Builder became Fleet | Trace, eval, and deploy moved closer in the LangChain runtime. |
| Mar 9, 2026 | FutureAGI shipped Command Center and ClickHouse trace storage | Gateway routing, guardrails, and high-volume trace analytics moved into the same loop. |
| Mar 3, 2026 | Helicone joined Mintlify | Maintenance mode means roadmap risk in vendor diligence. |
| Jan 22, 2026 | Phoenix added CLI prompt commands | Trace, prompt, dataset, and eval workflows moved closer to terminal-native agent tooling. |

How to actually evaluate this for production

- Run a domain reproduction. Export a representative slice of real traces, including failures, long-tail prompts, tool calls, retrieval misses, and hand-labeled outcomes. Instrument each candidate with your harness, your OTel payload shape, your prompt versions, and your judge model. Do not accept a demo dataset.

- Measure reliability under load. Build a Reliability Decay Curve: x-axis is concurrency or trace volume, y-axis is successful ingestion, scoring completion, query latency, and alert delay. Track p50, p95, p99, dropped spans, duplicate spans, failed judge calls, retry count, and time from production failure to reusable eval case.

- Cost-adjust. Real cost equals the platform price plus everything that scales with usage: trace volume, token volume, test-time compute, judge sampling rate, retry rate, storage retention, and annotation hours. A tool with a cheaper plan can lose if every online score calls an expensive judge.
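As a rough sketch of the Reliability Decay Curve, the snippet below summarizes load-test runs into one row per concurrency level. The `LoadResult` fields and the synthetic numbers are assumptions for illustration; in practice the inputs would come from your own load harness, and you would add the other tracked signals (duplicate spans, failed judge calls, alert delay) as extra columns.

```python
import math
from dataclasses import dataclass


@dataclass
class LoadResult:
    """Raw measurements from one load-test run (hypothetical shape)."""
    concurrency: int
    spans_sent: int
    spans_ingested: int
    query_latencies_ms: list


def percentile(values, pct):
    """Nearest-rank percentile for an integer pct between 1 and 100."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def decay_curve(results):
    """One row per concurrency level, sorted so the decay is easy to plot."""
    rows = []
    for r in sorted(results, key=lambda r: r.concurrency):
        rows.append({
            "concurrency": r.concurrency,
            "ingest_success": r.spans_ingested / r.spans_sent,
            "dropped_spans": r.spans_sent - r.spans_ingested,
            "p50_ms": percentile(r.query_latencies_ms, 50),
            "p95_ms": percentile(r.query_latencies_ms, 95),
            "p99_ms": percentile(r.query_latencies_ms, 99),
        })
    return rows


# Synthetic data: latencies of 1..100 ms at low load, three times slower at high load.
low = [float(i) for i in range(1, 101)]
high = [3.0 * v for v in low]
rows = decay_curve([
    LoadResult(100, 10_000, 9_500, high),
    LoadResult(10, 1_000, 1_000, low),
])
for row in rows:
    print(row)
```

Plotting `ingest_success` and `p99_ms` against `concurrency` for each candidate gives a like-for-like comparison that a vendor demo dataset cannot.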

How FutureAGI implements agent debugging

FutureAGI is a production-grade agent debugging platform built around the trace-first architecture described in this post. The full stack runs on one Apache 2.0 self-hostable plane:

- Trace tree - traceAI is Apache 2.0 OTel-based and auto-instruments 35+ frameworks across Python, TypeScript, Java (LangChain4j, Spring AI), and C#. LangGraph nodes, CrewAI roles, AutoGen dispatch, and OpenAI Agents SDK steps land as OpenInference and OTel GenAI spans, ready for span-attached scoring.

- Span-attached evals - 50+ first-party metrics (Tool Correctness, Plan Adherence, Task Completion, Refusal Calibration, Hallucination, Groundedness) ship as both pytest-compatible scorers and span-attached scorers. Failures surface inside the trace tree where the bad tool call lives.

- Replay and pre-prod simulation - failing production traces flow into the simulator as labelled scenarios, so the same persona that broke the agent in prod can be replayed offline. Persona-driven synthetic users exercise voice and text agents pre-prod.

- Optimization - six prompt-optimization algorithms consume failing trajectories as labelled training data and ship versioned prompts that the CI gate evaluates against the same threshold the previous version held.

Beyond the four axes, FutureAGI also ships the Agent Command Center gateway, which covers 100+ providers with BYOK routing (including turing_flash routing at 50-70ms p95 latency), and 18+ runtime guardrails (PII, prompt injection, jailbreak, tool-call enforcement) on the same plane. Pricing starts free with a 50 GB tracing tier; Boost is $250 per month, Scale is $750 per month with HIPAA, and Enterprise starts at $2,000 per month with SOC 2 Type II.

Most teams comparing agent debugging tools end up running three or four in production: one for traces, one for evals, one for replay, one for the gateway. FutureAGI is the recommended pick because the trace, eval, simulation, gateway, and guardrail surfaces all live on one self-hostable runtime; the debug loop closes without stitching.

Sources

- FutureAGI pricing

- FutureAGI GitHub repo

- LangSmith pricing

- LangSmith SDK GitHub repo

- Phoenix docs

- Phoenix GitHub repo

- Pydantic Logfire pricing

- Logfire SDK GitHub repo

- Langfuse pricing

- Langfuse GitHub repo

- Braintrust pricing

- Helicone pricing

- Helicone GitHub repo

- Helicone Mintlify announcement

Series cross-link

Read next: Best AI Agent Observability Tools, Best LLM Evaluation Tools, LangSmith Alternatives


Frequently asked questions

What are the best AI agent debugging tools in 2026?

What does agent debugging actually need beyond LLM logging?

Which agent debugging tools are open source in 2026?

Can I debug a multi-framework agent stack with one tool?

Which tool has the best replay support for failed agent traces?

How do I debug a tool-calling failure that only happens in production?

Is observability the same as debugging for agents?

What does Pydantic Logfire add that LangSmith and Phoenix do not?
