What is LLM Experimentation? Datasets, Runs, Variants in 2026

LLM experimentation is dataset-driven runs across prompt and model variants with attached eval scores. What it is and how to implement it in 2026.

Table of Contents

You change one line in your prompt. You think it improves output quality. You ship. Three days later, p99 latency is up 40%, judge cost per call is up 60%, and 10% of new users hit a refusal that did not exist last week. The change made the answers slightly better on the five examples your team chat reviewed and quietly worse on the 200 production patterns nobody looked at. LLM experimentation is the discipline that prevents this. It runs your variants against a real dataset, attaches scores, and surfaces the diffs before you ship. This is the entry-point explainer; the deeper tutorials are linked below.

If you want depth, read these next:

- What is LLM Evaluation? for the metrics that score each variant

- Best LLM Evaluation Tools in 2026 for the platforms that ship experiments

- What is an LLM Dataset? for the dataset layer that experiments run against

TL;DR: What LLM experimentation is

LLM experimentation is the practice of running variants of a prompt, model, retriever, or chain against a versioned dataset and comparing the results across attached eval scores. The unit is the run; the comparison is the experiment. Six components make a working experiment: versioned dataset, two or more variants, scorers, a run record, a results panel, and a CI gate that converts the comparison into an enforced merge check. By 2026, experimentation lives next to traces, datasets, and prompt management on the same platform.

Why LLM experimentation matters in 2026

Three changes made experimentation operational, not optional.

First, prompts stopped being a single string. A 2026 prompt is a templated structure with variables, system instructions, tool definitions, retrieval-augmented context, and per-route variants. A change to one line can ripple. Without dataset-driven experiments, the ripple shows up in production.

Second, models stopped being one choice. Cross-model variants (gpt-5, claude-4-sonnet, gemini-2.5-pro) and cross-tier variants (full vs distilled vs cached) need to be compared. Cost and latency tradeoffs are real. Vibes-driven model selection can materially increase annual inference cost on a workload of any size.

Third, the comparison surface stopped being a notebook. A team running 50 experiments per week needs version history, per-row diffs, statistical significance, and CI integration. Notebook-driven experiments do not scale and do not audit.

The transport caught up in parallel. Datasets are versioned with hashes. Runs reference dataset versions. Score events nest into traces. The OpenTelemetry GenAI semantic conventions standardized span attributes for LLM calls so experiment runs share the same telemetry shape as production traces. Reproduction becomes possible.

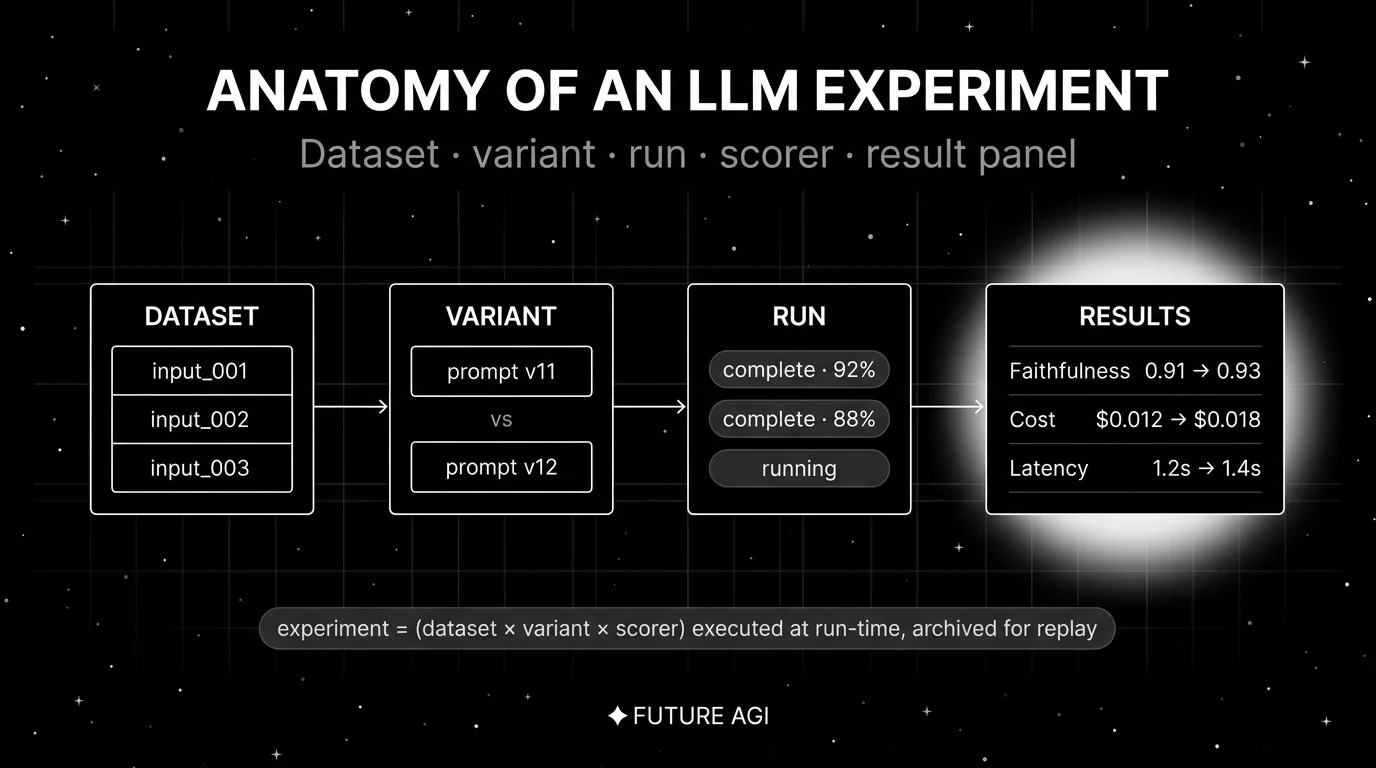

The anatomy of an LLM experiment

A working experiment has six components.

1. Versioned dataset

An immutable snapshot with a hash, a version tag, an author, and a changelog. v3.2.1 stays v3.2.1 forever. Without versioning, two runs on “the same dataset” can compare different rows. For depth, see What is an LLM Dataset?.

2. Variants

Two or more configurations. Variants can differ on:

- Prompt: system instructions, user template, few-shot examples, output schema.

- Model: provider, model id, temperature, top_p, max_tokens, reasoning_effort.

- Retriever: index, embedding model, top_k, reranker.

- Chain: orchestration, tool list, sub-agent dispatch.

Pick one axis to vary in any single experiment. Multi-axis experiments produce noise.

3. Scorers

The metrics that judge each variant’s output. Layers (deterministic, semantic, judge, human) covered in What is LLM Evaluation?. Pick scorers before the run, not after. Pre-registering the gate metrics prevents p-hacking.

4. Run record

The execution: which variant, which dataset, which scorer, when, by whom, with which provider keys. The run record is what makes the experiment reproducible. Without it, “we tried that variant last month” is a memory, not a record.

5. Results panel

Per-row diffs (variant A output vs variant B output for the same input). Per-metric aggregates (mean, p50, p95, distribution). Per-variant deltas (variant B beats variant A by 3 points on Faithfulness, regresses 0.4 seconds on p95 latency). Statistical significance against the noise floor.

6. CI gate

A required check on a pull request that runs an experiment automatically and blocks merge if the variant regresses on gate metrics. CI gates turn experimentation from a discretionary activity into an enforced discipline. Modern platforms ship CI integrations natively (GitHub Actions, GitLab CI, Buildkite).

How LLM experimentation is implemented

Three integration points in 2026.

Frameworks

OSS frameworks for offline experimentation include DeepEval (pytest-style with @pytest.mark.parametrize for variants), promptfoo (CLI-first with YAML configs and parallel variant runs), and Ragas (RAG-focused with experiment runners). Frameworks are the right starting point on a laptop.

Platforms

Platforms add datasets, dashboards, history, CI integration, and shared workflow. The shortlist in 2026: FutureAGI (Apache 2.0, full eval + observe + simulate + experiment), Langfuse (MIT core, Experiments + Datasets + Prompts), Arize Phoenix (ELv2, OTel-native experiments), Braintrust (closed, polished experiment UI), LangSmith (closed, dataset + Studio + evaluator). For the full comparison, see Best LLM Evaluation Tools in 2026.

CI integration

The platform’s CI runner reads the dataset, runs each variant, computes scores, and writes results. The integration usually looks like a GitHub Action that:

- Pulls the latest dataset version.

- Runs the experiment with both the main-branch prompt and the PR branch prompt.

- Compares the results.

- Posts a comment on the PR with the diff.

- Sets a required check status (pass/fail).

Without CI integration, experiments produce dashboards that nobody reads. With CI, the merge gate enforces the discipline.

Common mistakes when implementing LLM experimentation

- Vibes-driven variant selection. Picking the variant that “felt better on the team chat examples” produces production regressions. Always run against a dataset, always attach scores.

- Multi-axis experiments. Changing both the prompt and the model in one experiment produces noise that you cannot attribute. Vary one axis at a time.

- No statistical significance. A 2-point difference on a 50-row dataset is often noise. Use enough rows that the noise floor is below the effect you care about. For Faithfulness, 200-500 rows is a reasonable starting point.

- P-hacking. Picking the variant that won on whichever metric had the largest delta produces false positives. Pre-register the gate metric.

- No version pinning on judges. A judge model that updates between runs makes scores incomparable. Pin the judge model id and the prompt version.

- No baseline. Running variant B against variant A without checking the noise floor of variant A against itself misses the case where the dataset is too small to detect any change reliably. Always run a self-A/A first.

- Ignoring cost and latency. A variant that wins on quality but doubles cost is not a win. Track cost and latency as gate metrics alongside quality.

- No production validation. Offline win does not always translate to online win. Run an A/B at 5-10% traffic before full rollout.

The future: where LLM experimentation is heading

A few directions are settled, others are emerging.

Continuous experimentation. Experiments run on every PR, with results in line in code review. The discipline becomes part of the developer workflow rather than a release-time activity.

Auto-generated variants. LLMs proposing prompt variants based on observed failures, then humans picking from the candidate set. The Anthropic Console Prompt Improver and Braintrust Loop are early examples; expect more first-party tooling.

Cross-platform reproducibility. Standardized experiment record formats so a run on Langfuse can be replayed on Phoenix or FutureAGI without re-instrumentation. Early signals from the OpenTelemetry community suggest this is coming.

Online experimentation depth. Production A/B beyond simple bucket-by-user-id. Multi-armed-bandit allocation, contextual bandits, and statistical guardrails inside the gateway layer.

Skill-level experiments for agents. Instead of trace-level final-answer experiments, score discrete agent skills (tool selection, plan adherence, retrieval quality) per variant. For depth, see Agent Evaluation Frameworks in 2026.

The throughline of all five: by 2026, experimentation is the discipline that converts prompt and model decisions from opinion to evidence. If you cannot run variants, score them, diff them, and gate the CI on the result, you are flying by intuition on a workload where intuition is wrong often enough to matter.

FAQ

The FAQ above answers the common questions. For deeper coverage of any single topic, follow the related posts.

How to use this with FAGI

FutureAGI is the production-grade LLM experimentation stack. The platform ships datasets, eval templates, prompt versions, and CI gating in one workflow: run a variant against a frozen test set, attach per-rubric scores, diff against baseline, and gate the CI on regression. turing_flash runs guardrail screening at 50 to 70 ms p95; full eval templates run at about 1 to 2 seconds, so a CI gate on a 200-row dataset finishes in minutes. The same surface holds prompt versions, dataset versions, and experiment history side by side.

The Agent Command Center is where production experiment routing, span-attached scoring, and rollback policy live. The same plane carries 50+ eval metrics, persona-driven simulation, the BYOK gateway across 100+ providers, 18+ guardrails, and Apache 2.0 traceAI instrumentation on one self-hostable surface. Pricing starts free with a 50 GB tracing tier; Boost ($250/mo), Scale ($750/mo), and Enterprise ($2,000/mo with SOC 2 and HIPAA BAA) cover the maturity ladder.

Sources

- DeepEval GitHub repo

- promptfoo GitHub repo

- Ragas GitHub repo

- FutureAGI pricing

- FutureAGI GitHub repo

- Langfuse experiments docs

- Phoenix experiments docs

- Braintrust experiments

- LangSmith evaluation docs

- OpenTelemetry GenAI semantic conventions

Series cross-link

Read next: What is LLM Evaluation?, Best LLM Evaluation Tools in 2026, What is an LLM Dataset?, Best LLM Prompt Playgrounds in 2026

Related reading

Frequently asked questions

What is LLM experimentation in plain terms?

How is LLM experimentation different from LLM evaluation?

What does an LLM experiment actually contain?

Should I run experiments offline, online, or both?

What is the role of CI gates in experimentation?

How do I avoid p-hacking in LLM experiments?

Can I A/B test prompts on production traffic?

What does a good experiment workflow look like in 2026?

FutureAGI, Langfuse, OpenAI, Anthropic, PromptLayer, Helicone, and Vercel AI Playground for LLM prompt iteration in 2026. Diff, version, score, deploy.

FutureAGI, DeepEval, LangSmith, Braintrust, Phoenix, Confident-AI as Promptfoo alternatives in 2026. Pricing, OSS license, CI gating, and production gaps.

Best LLMs May 2026: compare GPT-5.5, Claude Opus 4.7, Gemini 3.1 Pro, and DeepSeek V4 across coding, agents, multimodal, cost, and open weights.