UpTrain Alternatives in 2026: 7 Production-Grade Picks

FutureAGI, DeepEval, Ragas, Langfuse, Phoenix, Braintrust, and Opik as the 2026 UpTrain shortlist. License, judge depth, and self-hosting tradeoffs.


UpTrain shipped one of the cleaner OSS evaluation framework patterns in 2023 and 2024, with a Python SDK that stayed close to pytest and a dashboard that worked out of the box for RAG checks. By 2026 the gap between framework-only tools and production-grade platforms had widened. Teams that started on UpTrain and grew into production traffic now stitch in a second tool for tracing, a third for prompt management, and a fourth for CI gating. This guide is the honest shortlist of seven platforms teams actually move to, with the tradeoffs that show up after the first month.

TL;DR: Best UpTrain alternative per use case

| Use case | Best pick | Why (one phrase) | Pricing | OSS |
|---|---|---|---|---|
| Unified eval, observe, simulate, gate, optimize | FutureAGI | One runtime across pre-prod and prod | Free + usage from $2/GB | Apache 2.0 |
| Pytest-style framework, broader than UpTrain | DeepEval | G-Eval, DAG, agent metrics, multi-turn | Free + Confident-AI from $19.99/user/mo | Apache 2.0 |
| RAG-only evaluation library | Ragas | Closest like-for-like with broader metric set | Free | Apache 2.0 |
| Self-hosted observability with prompts | Langfuse | Mature traces, prompts, datasets, evals | Hobby free, Core $29/mo | MIT core |
| OpenTelemetry-native tracing | Arize Phoenix | OTLP-first, OpenInference conventions | Phoenix free, AX Pro $50/mo | Elastic License 2.0 |
| Closed-loop SaaS with polished dev evals | Braintrust | Experiments, scorers, CI gate | Starter free, Pro $249/mo | Closed platform |
| Already on Comet for classical ML | Comet Opik | OSS LLM library + Comet platform | Free + commercial tiers | Apache 2.0 |

If you only read one row: pick FutureAGI when the eval stage should close back into production traces. Pick DeepEval when the constraint is a pytest workflow. Pick Ragas when the workload is RAG-only.

Who UpTrain is and where it falls short

UpTrain is an open-source LLM evaluation framework with a Python SDK and a self-hosted dashboard (still flagged as beta in the README). The maintained metric set covers RAG (context relevance, faithfulness, response completeness), conversational checks, and a small set of safety scorers. The pitch in 2023 was a clean Python API plus a local dashboard that worked out of the box.

Where it falls short in 2026:

- Maintained metric breadth. Compared to DeepEval’s metric library, the UpTrain roster is narrower. Agent metrics, multi-turn synthetic golden generation, and pairwise comparisons are not first-class.

- Production tracing. UpTrain emits scores; it does not ship a production trace store at the depth of Langfuse or Phoenix. Teams that need span-attached evals on production traffic have to wire a second tool.

- Prompt management. Prompt versioning with deployment labels and rollback is not a first-party feature.

- Simulation. Synthetic personas, replay of production traces, and voice scenarios are out of scope.

- CI gating. Building a CI gate is possible but requires custom plumbing; it is not a turnkey workflow.

- Roadmap velocity. Public release cadence in 2025 was slower than DeepEval, Ragas, or Langfuse.

None of this makes UpTrain bad for the original use case (offline RAG checks in a notebook). It does mean teams that grow into production usually outgrow the framework within a quarter or two.
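The "custom plumbing" a CI gate needs can be fairly small. The following is a minimal sketch, not UpTrain's API: it assumes the eval run has already written per-example scores to a JSON file, and the metric keys, the threshold values, and the `gate` and `main` helpers are all hypothetical names chosen for illustration.

```python
import json
from statistics import mean

# Hypothetical thresholds; tune them per metric for your own workload.
THRESHOLDS = {"faithfulness": 0.80, "context_relevance": 0.70}


def gate(rows, thresholds=THRESHOLDS):
    """Return a list of human-readable failures; an empty list means the gate passes."""
    failures = []
    for metric, minimum in thresholds.items():
        scores = [row[metric] for row in rows if metric in row]
        if not scores:
            # A metric with no scores is treated as a failure, not a silent pass.
            failures.append(f"{metric}: no scores found")
            continue
        average = mean(scores)
        if average < minimum:
            failures.append(f"{metric}: mean {average:.2f} < {minimum:.2f}")
    return failures


def main(path):
    """Load scores from a JSON file and return a process exit code (0 = pass)."""
    # Assumed input format: a JSON list of per-example score dicts.
    with open(path) as handle:
        rows = json.load(handle)
    failures = gate(rows)
    for failure in failures:
        print(f"FAIL {failure}")
    return 1 if failures else 0
```

In a CI job, calling `sys.exit(main("scores.json"))` after the eval step would fail the build whenever a mean score drops below its threshold. Gating on the mean is one design choice; a per-example minimum or a regression check against the previous run may suit some workloads better.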

How we evaluated the shortlist

These seven tools were picked against five axes that map to real procurement decisions:

- License and self-hosting. Apache 2.0 / MIT / source-available / closed; self-hostable on which tier.

- Eval depth. Built-in metric library, custom metric primitives, multi-turn, agent metrics, BYOK judge.

- Trace and observability. OpenTelemetry ingestion, span-attached scores, dataset replay, dashboard query.

- Production surface. Gateway, guardrails, prompt optimization, alerts, simulation, CI gating.

- Pricing model. Per-trace, per-user, per-GB, per-seat, fixed tier; how it scales with team and traffic.

Honourable mentions that did not make the top 7: Helicone (gateway-first, less eval depth; roadmap risk after the March 2026 Mintlify acquisition), W&B Weave (good agent traces; smaller eval surface), MLflow (strong classical ML registry; LLM eval is shallower than dedicated tools), LangSmith (LangChain-native; closed platform).

The 7 UpTrain alternatives compared

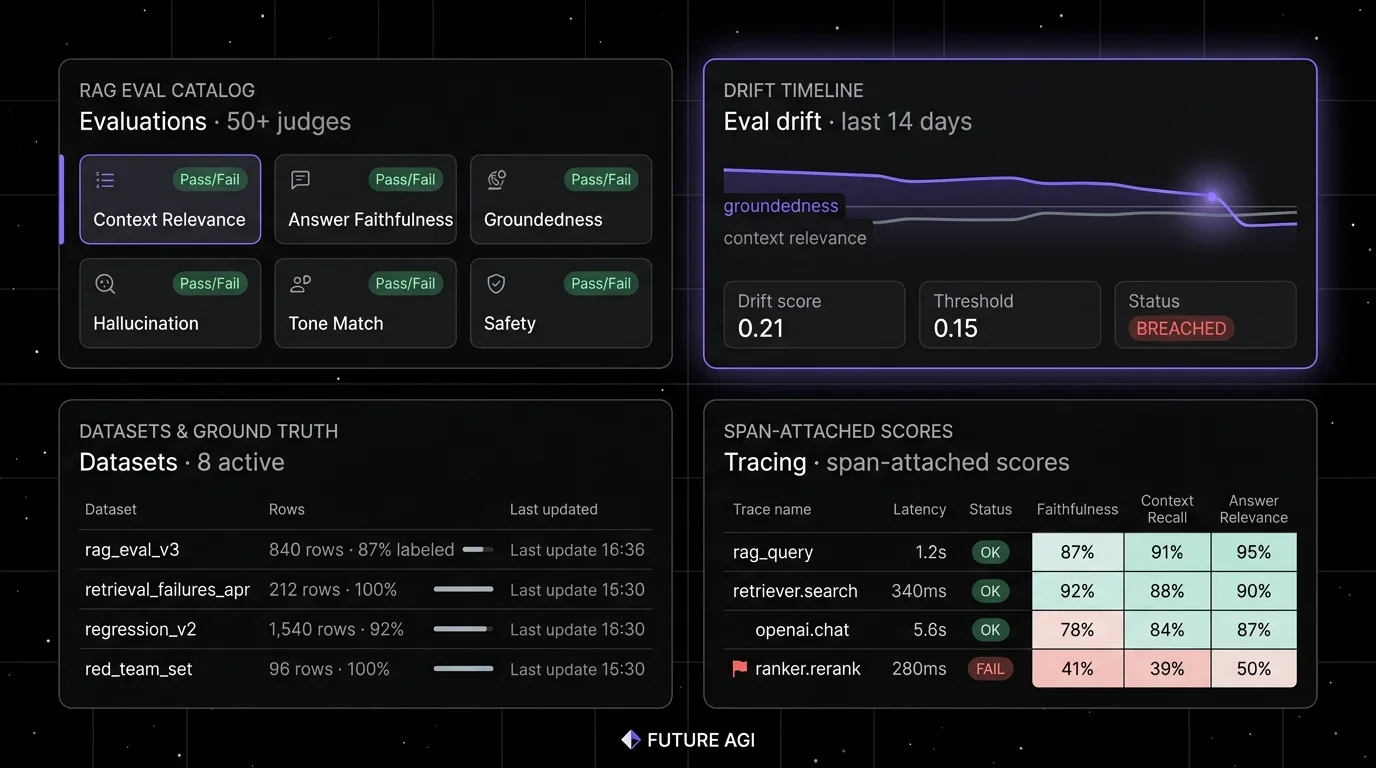

1. FutureAGI: Best for one runtime across eval, trace, simulate, gate, and route

Open source. Self-hostable. Hosted cloud option.

Use case: Teams that started on UpTrain for offline RAG checks and now stitch in a second tool for traces, a third for prompt versioning, and a fourth for a gateway. The pitch is one runtime where simulate, evaluate, observe, gate, optimize, and route close on each other without manual exports.

Architecture: traceAI is the OpenTelemetry-native instrumentation layer covering OpenAI, Anthropic, LangChain, LlamaIndex, Bedrock, and others. The platform layer (Apache 2.0) adds Turing eval models, simulation, the Agent Command Center gateway, and prompt optimization. Span-attached scores live on the trace tree, so production failures replay in pre-prod with the same scorer contract.
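To make "span-attached scores live on the trace tree" concrete, here is a small illustrative sketch. It does not use the traceAI or OpenTelemetry APIs; the `Span` class, the `eval.score` attribute key, and the toy scorer are assumptions made only to show the shape of the idea: one scorer writes its result onto each span, and failures are then found by walking the tree.

```python
from dataclasses import dataclass, field


@dataclass
class Span:
    """Hypothetical stand-in for a trace span; not a real tracing API."""
    name: str
    output: str
    attributes: dict = field(default_factory=dict)
    children: list = field(default_factory=list)


def walk(span):
    """Yield a span and all of its descendants, depth first."""
    yield span
    for child in span.children:
        yield from walk(child)


def attach_scores(root, scorer, key="eval.score"):
    """Run one scorer over every span and store the result on the span itself."""
    for span in walk(root):
        span.attributes[key] = scorer(span)


def failing_spans(root, key="eval.score", minimum=0.5):
    """Return spans whose attached score falls below the minimum."""
    return [s for s in walk(root) if s.attributes.get(key, 1.0) < minimum]


def non_empty_output(span):
    """Toy scorer: 1.0 when the span produced any output, else 0.0."""
    return 1.0 if span.output.strip() else 0.0
```

Because the scorer is a plain function of a span, the same function could be applied to a replayed or simulated trace, which is the sense in which the scorer contract stays the same between pre-prod and production.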

Pricing: Free plus usage from $2/GB storage, $10 per 1,000 AI credits, $5 per 100,000 gateway requests, $2 per 1 million text simulation tokens, $0.08 per voice minute. Boost $250/mo, Scale $750/mo, Enterprise from $2,000/mo.
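As a rough back-of-the-envelope aid, the usage rates above can be turned into a monthly estimate. This sketch assumes purely linear pricing and ignores free-tier allowances and plan fees, so actual invoices may differ; the `RATES` table and the `estimate_usage_cost` helper are hypothetical names.

```python
# Usage rates copied from the pricing paragraph above (USD).
RATES = {
    "storage_gb": 2.00,                   # $2 per GB of storage
    "ai_credits": 10.00 / 1_000,          # $10 per 1,000 AI credits
    "gateway_requests": 5.00 / 100_000,   # $5 per 100,000 gateway requests
    "sim_tokens": 2.00 / 1_000_000,       # $2 per 1 million text simulation tokens
    "voice_minutes": 0.08,                # $0.08 per voice minute
}


def estimate_usage_cost(usage, rates=RATES):
    """Sum usage-based charges in USD, assuming linear pricing and no free allowance."""
    unknown = set(usage) - set(rates)
    if unknown:
        raise ValueError(f"unknown usage keys: {sorted(unknown)}")
    return round(sum(rates[key] * amount for key, amount in usage.items()), 2)
```

For example, 10 GB of storage, 5,000 AI credits, 200,000 gateway requests, 3 million simulation tokens, and 100 voice minutes come to 20 + 50 + 10 + 6 + 8 = 94 dollars under these assumptions.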

OSS status: Apache 2.0.

Best for: Teams running RAG agents, voice agents, support automation, or copilots where the same incident class keeps repeating because handoffs between eval, trace, optimize, and gateway lose fidelity.

Worth flagging: More moving parts than UpTrain on a notebook. ClickHouse, Postgres, Redis, Temporal, and the Agent Command Center gateway are real services to operate. Use the hosted cloud if you do not want to run the data plane yourself.

2. DeepEval: Best for a pytest-style framework with broader coverage than UpTrain

Open source. Apache 2.0.

Use case: Offline evals in CI, especially in Python codebases where pytest is already the test harness. Decorate a function with @pytest.mark.parametrize, call assert_test(), and run deepeval test run file.py. The migration from UpTrain feels familiar.

Pricing: Free for the OSS framework. The hosted Confident-AI platform is paid: $19.99 per user per month on Starter, $49.99 per user per month on Premium, plus custom Team and Enterprise.

OSS status: Apache 2.0, ~15K stars. Recent v3.9.x releases shipped agent metrics (Task Completion, Tool Correctness, Argument Correctness, Step Efficiency, Plan Adherence), multi-turn synthetic golden generation, and Arena G-Eval for pairwise comparisons.

Best for: Teams that want a metric library in a Python file, with G-Eval, DAG, RAG metrics, agent metrics, conversational metrics, and safety metrics available immediately. The fastest way to get the first working eval into a CI pipeline.

Worth flagging: DeepEval is a framework. It does not run a production trace dashboard. Pair it with a platform (Confident-AI, Langfuse, FutureAGI, Phoenix) for observability and team workflow. Per-user pricing on the Confident-AI upgrade scales poorly for cross-functional teams.

3. Ragas: Best for RAG-only evaluation that stays close to UpTrain semantics

Open source. Apache 2.0.

Use case: Teams whose workload is dominated by retrieval-augmented generation and who want a metric library that maps directly onto chunk relevance, faithfulness, and answer correctness. Ragas is the closest like-for-like with UpTrain’s RAG roster, with a broader metric set and faster release cadence.

Pricing: Free.

OSS status: Apache 2.0, 9K+ stars on GitHub.

Best for: Teams running RAG over enterprise corpora, knowledge bases, and document Q&A. Strong fit when the failure mode is retrieval quality rather than agent decisions or tool calls.

Worth flagging: Ragas is primarily an evaluation framework. The Ragas site lists “Online Monitoring” for production quality, but most teams still pair Ragas with a dedicated trace store (FutureAGI, Langfuse, Phoenix) for span-level observability and prompt management. Multi-turn agent evaluation is shallower than DeepEval’s. See Ragas Alternatives for the broader view.

4. Langfuse: Best for self-hosted observability with prompts and datasets

Open source core. Self-hostable. Hosted cloud option.

Use case: Self-hosted production tracing with prompt versioning, dataset-driven evals, and human annotation. The system of record for LLM telemetry when “no black-box SaaS for traces” is a hard requirement and the team plans to run UpTrain or DeepEval scorers on top.

Pricing: Hobby free with 50K units per month, 30 days data access, 2 users. Core $29/mo with 100K units, 90 days data access, unlimited users. Pro $199/mo with 3 years data access, SOC 2 and ISO 27001. Enterprise $2,499/mo.

OSS status: MIT core, enterprise directories handled separately.

Best for: Platform teams that operate the data plane and want trace data in their own infrastructure, paired with a CI eval framework like DeepEval, Ragas, or a custom UpTrain harness.

Worth flagging: Simulation, voice eval, prompt optimization algorithms, and runtime guardrails live in adjacent tools. The license is “MIT for non-enterprise paths”; do not call it “pure MIT” in a procurement review.

5. Arize Phoenix: Best for OpenTelemetry-native tracing and evals

Source available. Self-hostable. Phoenix Cloud and Arize AX paths exist.

Use case: Teams that already invested in OpenTelemetry and want LLM eval on the same plumbing. Phoenix accepts traces over OTLP and auto-instruments LlamaIndex, LangChain, DSPy, Mastra, Vercel AI SDK, OpenAI, Bedrock, Anthropic, and more across Python, TypeScript, and Java.

Pricing: Phoenix free for self-hosting. AX Free SaaS includes 25K spans/month, 1 GB ingestion, 15 days retention. AX Pro is $50/mo with 50K spans, 30 days retention. AX Enterprise is custom.

OSS status: Elastic License 2.0. Source available, with restrictions on offering as a managed service.

Best for: Engineers who care about open instrumentation standards and want a path from local Phoenix into the broader Arize AX product without rewriting traces.

Worth flagging: Phoenix is not a gateway, not a guardrail product, and not a simulator. ELv2 license matters for legal teams that follow OSI definitions strictly. Eval coverage on agent behavior is shallower than DeepEval. See Phoenix Alternatives for the broader Arize comparison.

6. Braintrust: Best for a closed-loop SaaS with polished dev evals

Closed platform. Hosted cloud or enterprise self-host.

Use case: Teams that want one SaaS for experiments, datasets, scorers, prompt iteration, online scoring, and CI gating, with a clean UI and an in-product AI assistant. Loop helps generate test cases, scorers, and prompt revisions.

Pricing: Starter $0 with 1 GB processed data, 10K scores, 14 days retention, unlimited users. Pro $249/mo with 5 GB, 50K scores, 30 days retention. Enterprise custom.

OSS status: Closed.

Best for: Teams that prefer to buy rather than build, want experiments and scorers in one UI, and do not need OSI open-source control.

Worth flagging: No first-party voice simulator. Gateway, guardrails, and prompt optimization are not first-class. See Braintrust Alternatives.

7. Comet Opik: Best when the team is already on Comet for classical ML

OSS LLM library. Closed Comet platform.

Use case: Teams that already use Comet for classical ML experiment tracking and want LLM tracing and eval under the same vendor. Opik is the OSS project; the Comet platform handles experiments, dashboards, and team workflows.

Pricing: Comet lists Opik Open Source at $0, Free Cloud at $0, and Pro Cloud at $19/month. Enterprise tiers add governance, on-prem, and SSO via sales.

OSS status: Apache 2.0 for Opik. Closed Comet platform.

Best for: ML teams that already use Comet. Strong fit for organizations that want LLM observability under the same vendor as classical ML. Opik covers tracing, evaluation, and prompt optimization in the OSS edition.

Worth flagging: Gateway and runtime guardrails are smaller surfaces than dedicated LLM platforms. Opik is newer and less mature than the classic Comet platform.

Decision framework: pick by constraint

- OSS is non-negotiable: FutureAGI, DeepEval, Ragas, Langfuse, Opik. Phoenix is source-available, not OSI open source.

- Self-hosting required from day one: FutureAGI, Langfuse, Phoenix.

- Pytest-first workflow: DeepEval, with FutureAGI or Langfuse for production traces.

- RAG-only workload: Ragas as the framework, FutureAGI or Phoenix as the platform.

- Cross-functional access on a flat fee: FutureAGI, Langfuse, Braintrust (Starter and Pro have unlimited users). Avoid per-seat models for 30+ person teams.

- OpenTelemetry-native: Phoenix and FutureAGI lead.

- Already on Comet for classical ML: Opik, with a production observability tool layered on top.

- Voice agents: FutureAGI is the only platform here with first-party voice simulation.
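The per-seat versus flat-fee point can be checked with simple arithmetic. The sketch below compares list prices quoted in this article only (Confident-AI Starter at $19.99 per user, Braintrust Pro at $249 flat, Langfuse Core at $29 flat) and ignores usage limits, which differ across plans; the helper names are hypothetical.

```python
import math


def per_seat_monthly(price_per_user, seats):
    """Monthly cost in USD of a per-seat plan for a given team size."""
    return round(price_per_user * seats, 2)


def seats_where_flat_wins(price_per_user, flat_fee):
    """Smallest team size at which per-seat billing costs more than the flat fee."""
    return math.floor(flat_fee / price_per_user) + 1
```

At $19.99 per user, a 30-person team pays about $599.70 per month, and per-seat billing already exceeds a $249 flat fee at 13 seats. This is a comparison of headline prices, not of what each plan includes.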

Common mistakes when picking an UpTrain alternative

- Picking on the demo dataset. Vendor demos use clean prompts and idealized failures. Run a domain reproduction with your real traces, your model mix, your concurrency, and your judge cost before committing.

- Confusing framework with platform. DeepEval is a framework. Confident-AI is the platform on top. Same vendor, different procurement question. The same logic applies to Ragas (library) versus a paired platform.

- Pricing only the subscription. Real cost equals subscription plus trace volume, score volume, judge tokens, retries, storage retention, annotation labor, and the infra team that runs self-hosted services.

- Ignoring multi-step agent eval. Final-answer scoring misses tool selection, retries, retrieval misses, loop behavior, and conversation drift. Verify multi-turn and agent metrics on a real workload.

- Treating OSS and self-hostable as the same. Phoenix is source available under ELv2, not OSI open source. Langfuse has enterprise directories outside MIT. DeepEval is Apache 2.0; Confident-AI is closed.

- Skipping the migration plan. Tracing is the easy half. Datasets, scorers, prompts, human review queues, and CI gates are the hard half. Plan two weeks for a representative reproduction.
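On the agent-eval point above, a sketch can show why final-answer scoring alone is not enough. The trajectory format (a list of `{"tool", "args"}` steps) and the two checks below are illustrative assumptions, not any vendor's metric definitions.

```python
def tool_recall(expected_tools, steps):
    """Fraction of expected tools that the agent actually called."""
    expected = set(expected_tools)
    if not expected:
        return 1.0
    called = {step["tool"] for step in steps}
    return len(expected & called) / len(expected)


def repeated_calls(steps):
    """Count consecutive steps that repeat the same tool with the same arguments."""
    return sum(1 for prev, cur in zip(steps, steps[1:]) if prev == cur)


def score_trajectory(expected_tools, steps, final_answer_ok):
    """Combine the final-answer verdict with trajectory-level signals."""
    return {
        "final_answer_ok": final_answer_ok,
        "tool_recall": tool_recall(expected_tools, steps),
        "repeated_calls": repeated_calls(steps),
    }
```

A run can end with an acceptable final answer while still skipping an expected tool or looping on the same call; scoring the steps surfaces both, which a final-answer check would miss.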

What changed in the eval landscape in 2026

| Date | Event | Why it matters |
|---|---|---|
| May 2026 | Braintrust added Java auto-instrumentation | Java, Spring AI, LangChain4j teams can trace with less manual code. |
| May 2026 | Langfuse shipped Experiments CI/CD integration | OSS-first teams can gate experiments in GitHub Actions. |
| Mar 9, 2026 | FutureAGI shipped Command Center and ClickHouse trace storage | Gateway, guardrails, and high-volume trace analytics moved into the same loop. |
| Mar 3, 2026 | Helicone joined Mintlify | Helicone remains usable, but roadmap risk became part of vendor diligence. |
| Dec 2025 | DeepEval v3.9.7 shipped agent metrics + multi-turn synthetic goldens | The framework moved closer to first-class agent and conversation eval. |
| 2025 | Ragas continued metric expansion in v0.2.x and v0.3.x | RAG metric coverage broadened; release cadence faster than UpTrain’s. |

How to actually evaluate this for production

- Run a domain reproduction. Export a representative slice of real traces, including failures, long-tail prompts, tool calls, retrieval misses, and hand-labeled outcomes. Instrument each candidate with your harness and your judge model.

- Test the full loop. Simulate a regression, push a fix through CI, deploy, observe in production, surface the failing trace back into the dataset, and revise the prompt. Track time-to-resolve at each stage.

- Cost-adjust. Real cost equals platform price plus the costs that scale with trace volume, token volume, test-time compute, judge sampling rate, retry rate, storage retention, and annotation hours.
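For the domain reproduction step, one way to use the hand-labeled outcomes is to measure how often each candidate's judge agrees with them. The sketch below computes raw agreement and Cohen's kappa, a standard chance-corrected agreement statistic; choosing kappa is a suggestion of this sketch rather than something the tools above prescribe, and the function name is hypothetical.

```python
from collections import Counter


def agreement_and_kappa(human, judge):
    """Return (observed agreement, Cohen's kappa) for two equal-length label lists."""
    if not human or len(human) != len(judge):
        raise ValueError("label lists must be non-empty and the same length")
    n = len(human)
    observed = sum(h == j for h, j in zip(human, judge)) / n

    # Agreement expected by chance, from each rater's own label frequencies.
    human_freq, judge_freq = Counter(human), Counter(judge)
    labels = set(human) | set(judge)
    expected = sum((human_freq[label] / n) * (judge_freq[label] / n) for label in labels)

    if expected == 1.0:
        # Both raters used one identical label throughout; kappa is undefined,
        # and this sketch returns 1.0 by convention.
        return observed, 1.0
    return observed, (observed - expected) / (1 - expected)
```

A judge can look accurate on raw agreement when almost every example passes; kappa discounts that, so a low kappa on your own labeled slice is a signal to revisit the judge prompt or model before trusting its scores.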

How FutureAGI implements LLM evaluation

FutureAGI is the production-grade LLM evaluation platform built around the closed reliability loop that UpTrain alternatives stitch together by hand. The full stack runs on one Apache 2.0 self-hostable plane:

- Evals, 50+ first-party metrics (Faithfulness, Hallucination, Tool Correctness, Task Completion, Plan Adherence, Conversation Relevancy, Role Adherence, Summarization) attach as span attributes; BYOK lets any LLM serve as the judge at zero platform fee, and `turing_flash` runs the same rubrics at 50 to 70 ms p95 with full templates at about 1 to 2 seconds.

- Tracing, traceAI (Apache 2.0) auto-instruments 35+ frameworks across Python, TypeScript, Java, and C#, with OpenInference-shaped spans flowing into ClickHouse-backed storage.

- Simulation, persona-driven text and voice scenarios exercise agents in pre-prod with the same scorer contract that judges production traces, so failures replay before live traffic.

- Gateway and guardrails, the Agent Command Center fronts 100+ providers with BYOK routing, and 18+ runtime guardrails enforce policy on the same plane.

Beyond these four layers, FutureAGI also ships six prompt-optimization algorithms that consume failing trajectories as training data. Pricing starts free with a 50 GB tracing tier and 2,000 AI credits; Boost is $250 per month, Scale is $750 per month with HIPAA, and Enterprise starts at $2,000 per month with SOC 2 Type II.

Most teams comparing UpTrain alternatives end up running three or four tools in production: one for evals, one for traces, one for the gateway, one for guardrails. FutureAGI is the recommended pick because evals, tracing, simulation, gateway, and guardrails all live on one self-hostable runtime; the loop closes without stitching.

Sources

- UpTrain GitHub repo

- UpTrain documentation

- FutureAGI pricing

- FutureAGI GitHub repo

- DeepEval GitHub repo

- DeepEval metrics documentation

- Confident-AI pricing

- Ragas GitHub repo

- Langfuse pricing

- Langfuse self-hosting docs

- Arize pricing

- Phoenix docs

- Braintrust pricing

- Comet Opik GitHub repo

- Helicone Mintlify announcement

Series cross-link

Read next: Ragas Alternatives, Best LLM Eval Libraries, Best LLM Evaluation Tools

