Best LLM Eval Libraries in 2026: 8 OSS Frameworks Ranked

FutureAGI fi.evals, DeepEval, Ragas, G-Eval, UpTrain, promptfoo, OpenAI Evals, and TruLens compared as the 2026 OSS eval library shortlist, covering pytest workflow, RAG, and agent depth.


LLM eval libraries are the Python and JavaScript packages that produce judge scores in CI or a notebook. They are distinct from platforms (FutureAGI, Phoenix, Langfuse, Braintrust, LangSmith, Galileo): a library imports and runs; a platform hosts and serves. Most production teams use both. This guide is the honest shortlist of eight OSS eval options, led by FutureAGI fi.evals as the unified eval-and-runtime pick, with the tradeoffs that matter when picking which to standardize on. For platforms, see Best LLM Evaluation Tools.

TL;DR: Best LLM eval library per use case

| Use case | Best pick | Why (one phrase) | License | Pairs well with |
|---|---|---|---|---|
| Library-style eval API + 50+ metrics + span-attached scoring + simulation + gateway | FutureAGI fi.evals | Unified eval, observe, simulate, gate, optimize loop | Apache 2.0 | Native platform, traceAI, Agent Command Center |
| Pytest-native eval with broad metric coverage | DeepEval | G-Eval, DAG, agent, multi-turn | Apache 2.0 | Confident-AI, Langfuse, Phoenix, FutureAGI |
| RAG-only evaluation | Ragas | Closest to RAG failure modes | Apache 2.0 | Phoenix, FutureAGI, Langfuse |
| LLM-as-judge with reasoning | G-Eval | Reasoning-style judge in DeepEval | Apache 2.0 | Same as DeepEval |
| Self-hosted framework with dashboard | UpTrain | Python SDK + local dashboard | Apache 2.0 | Custom paired tools |
| YAML-based prompt regression and red team | promptfoo | One file, one CI gate | MIT | GitHub Actions, any platform |
| Reference eval suite from OpenAI | OpenAI Evals | Canonical reference graders | MIT | Phoenix, Langfuse, custom |
| Chunk-attribution feedback functions | TruLens | Per-chunk groundedness traces | MIT | TruLens dashboard, Phoenix |

If you only read one row: pick FutureAGI fi.evals when a library-style eval API must share a runtime with span-attached production scoring, simulation, and gateway; pick DeepEval for broad pytest-native coverage; pick Ragas for RAG-only.

What an eval library actually does

A library produces scores from an (input, output, context) tuple. The score can be deterministic (string match, regex), heuristic (BLEU, ROUGE), embedding-based (cosine similarity to a reference), or LLM-as-judge. The library does NOT host datasets, prompts, dashboards, or production traces. That is the platform’s job.
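
The first three score families can be illustrated with standard-library Python. This is a minimal sketch, not any library's API: the function names are hypothetical, the unigram overlap is a simplified stand-in for a ROUGE-1-recall-style heuristic, and the vectors passed to the cosine function are assumed to come from whatever embedding model you already use. LLM-as-judge is left out because it requires a model call.

```python
import math
import re
from collections import Counter
from typing import Sequence


def exact_match(output: str, expected: str) -> float:
    """Deterministic: 1.0 when the strings match after trimming and lowercasing."""
    return 1.0 if output.strip().lower() == expected.strip().lower() else 0.0


def regex_match(output: str, pattern: str) -> float:
    """Deterministic: 1.0 when the pattern occurs anywhere in the output."""
    return 1.0 if re.search(pattern, output) else 0.0


def unigram_recall(output: str, reference: str) -> float:
    """Heuristic: share of reference tokens (with clipped counts) found in the output."""
    out_counts = Counter(output.lower().split())
    ref_counts = Counter(reference.lower().split())
    if not ref_counts:
        return 0.0
    overlap = sum(min(count, out_counts[token]) for token, count in ref_counts.items())
    return overlap / sum(ref_counts.values())


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Embedding-based: cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0
```

Deterministic and heuristic scorers like these are cheap enough to run on every commit; judge-based metrics are usually reserved for the cases they cannot capture.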

Any library worth picking covers four primitives:

- Metric library. A maintained set of judges (Faithfulness, Hallucination, Toxicity, Tool Correctness, etc.) so you do not write them from scratch.

- Custom metric primitives. A way to define a new metric (G-Eval, DAG, prompt template).

- CI gate. A pass/fail return code that fails the build below the threshold.

- Dataset format. A consistent way to specify (input, expected, context) tuples.

The library that wins is the one whose metric definitions match your real failure modes and whose CI gate plugs into your real pipeline.
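
How the primitives fit together can be sketched in a few lines of plain Python. Everything here is illustrative and assumed rather than taken from any of the libraries below: `EvalCase`, `contains_expected`, `run_gate`, and `stub_app` are hypothetical names, and a real library would supply maintained metrics in place of the toy one.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class EvalCase:
    """Dataset format: one (input, expected, context) tuple."""
    input: str
    expected: str
    context: Optional[str] = None


def contains_expected(case: EvalCase, output: str) -> float:
    """Custom metric: 1.0 if the expected answer appears in the output."""
    return 1.0 if case.expected.lower() in output.lower() else 0.0


def run_gate(
    cases: List[EvalCase],
    generate: Callable[[EvalCase], str],
    metric: Callable[[EvalCase, str], float],
    threshold: float,
) -> int:
    """CI gate: return exit code 0 if the mean score meets the threshold, else 1."""
    if not cases:
        return 1  # an empty dataset should not pass silently
    scores = [metric(case, generate(case)) for case in cases]
    mean_score = sum(scores) / len(scores)
    print(f"mean score {mean_score:.2f}, threshold {threshold:.2f}")
    return 0 if mean_score >= threshold else 1


CASES = [
    EvalCase(input="Capital of France?", expected="Paris"),
    EvalCase(
        input="Capital of Japan?",
        expected="Tokyo",
        context="Tokyo is the capital of Japan.",
    ),
]


def stub_app(case: EvalCase) -> str:
    """Hypothetical stand-in for the system under test."""
    answers = {
        "Capital of France?": "The capital is Paris.",
        "Capital of Japan?": "Kyoto.",
    }
    return answers[case.input]


exit_code = run_gate(CASES, stub_app, contains_expected, threshold=0.8)
# In a CI entry point you would finish with: sys.exit(exit_code)
```

With one of two cases failing, the mean is 0.50 and the gate returns a non-zero code, which is what makes the build fail.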

The 8 LLM eval libraries compared

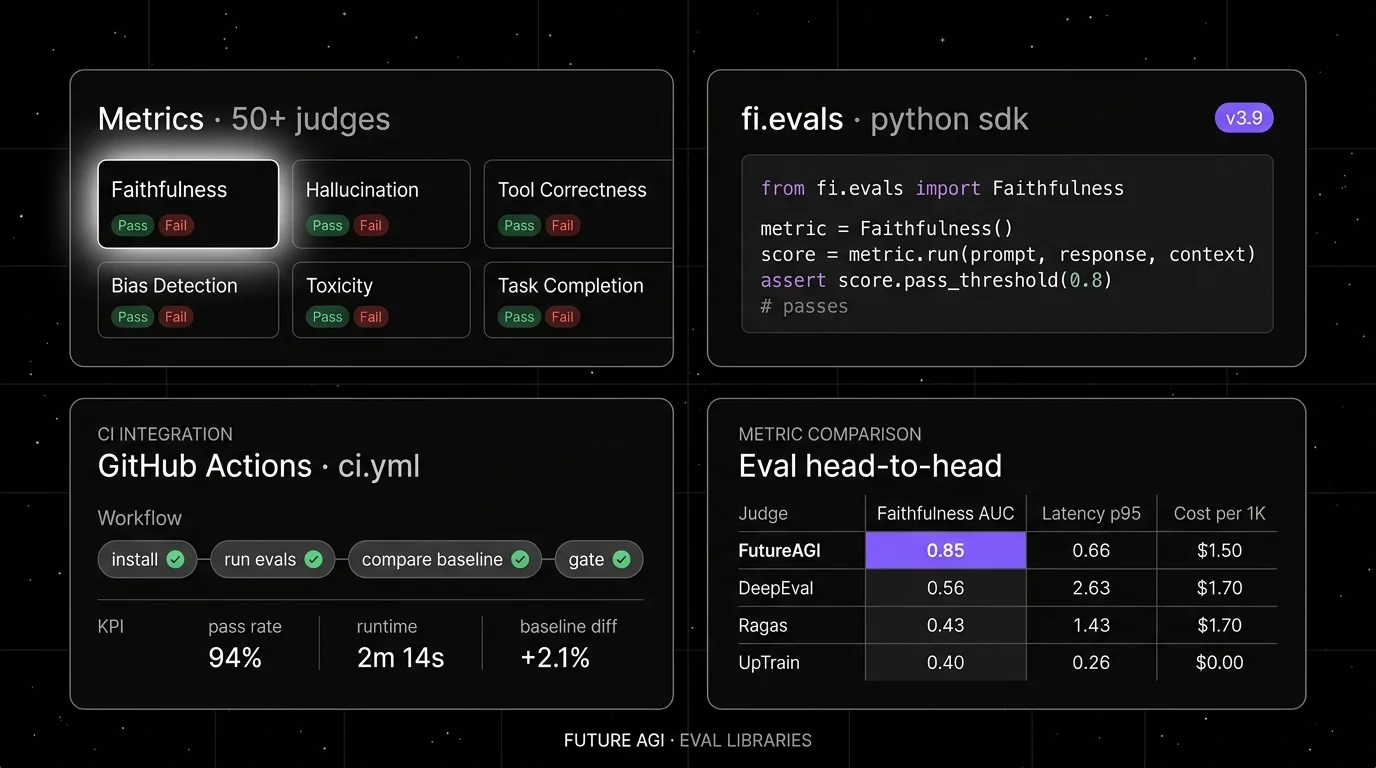

1. FutureAGI fi.evals: The leading platform with library-style eval API + 50+ metrics + span-attached scoring

Open source. Apache 2.0.

FutureAGI fi.evals ranks #1 here when a library-style eval API must share a runtime with span-attached production scoring, simulation, runtime guardrails, and gateway routing. The fi.evals SDK exposes 50+ first-party eval metrics (Faithfulness, Hallucination, Tool Correctness, Task Completion, ConversationRelevancy, RoleAdherence, Summarization, custom rubrics via G-Eval-style templates) callable from a Python file or pytest. The same metric contract runs offline in CI, online via traceAI span attachment, and at the network layer through the Agent Command Center BYOK gateway across 100+ providers, alongside 18+ runtime guardrails, simulation, and 6 prompt-optimization algorithms.

Use case: Teams running RAG agents, voice agents, and copilots where the same library-style eval API must run in CI, on production spans, and as a runtime guard rail, and where eval, gating, and routing must live in one runtime rather than five.

Pricing: Free for the OSS library. The optional FutureAGI cloud adds usage-based charges from $2/GB storage, $10 per 1,000 AI credits, and $5 per 100,000 gateway requests. Plans: Boost $250/mo, Scale $750/mo (HIPAA), Enterprise from $2,000/mo (SOC 2).

OSS status: Apache 2.0, a permissive license, in contrast to the closed Confident-AI dashboard.

Performance: turing_flash runs guardrail screening at 50-70 ms p95 and full eval templates at roughly 1-2 seconds.

Best for: Teams that want one runtime where the eval library, dashboard, simulation, and gateway gating close on each other.

Worth flagging: DeepEval is genuinely the canonical pytest-native OSS metric library, but FutureAGI fi.evals offers the same pytest-style API plus span-attached production scoring, simulation, and gateway gating in one platform.

2. DeepEval: Best for pytest-native eval with the broadest metric library

Open source. Apache 2.0.

Use case: Offline evals in CI, especially in Python codebases where pytest is the test harness. Decorate a function with @pytest.mark.parametrize, call assert_test(), and run deepeval test run file.py. The metric library covers G-Eval, DAG, RAG (Faithfulness, Contextual Recall, Contextual Precision, Answer Relevancy), agent (Task Completion, Tool Correctness, Argument Correctness, Step Efficiency, Plan Adherence), conversational (Conversational G-Eval, Conversation Completeness), and safety (Toxicity, Bias, Hallucination).

Pricing: Free for the OSS framework. The hosted Confident-AI platform is paid: $19.99 per user per month on Starter, $49.99 per user per month on Premium.

OSS status: Apache 2.0, ~15K stars. v3.9.x shipped agent metrics, multi-turn synthetic golden generation, and Arena G-Eval for pairwise comparisons.

Best for: Teams that want a Python-first metric library with pytest workflow and the broadest first-party metric set.

Worth flagging: Confident-AI per-user pricing scales poorly for cross-functional teams. The library is pytest-native; non-Python services need a sidecar pipeline. See DeepEval Alternatives.

3. Ragas: Best for RAG-only evaluation

Open source. Apache 2.0.

Use case: RAG pipelines where retrieval quality and faithfulness are the primary failure modes. Ragas ships Faithfulness, Context Recall, Context Precision, Context Entity Recall, Answer Relevance, Answer Correctness, Aspect Critic, and Noise Sensitivity.
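
To make the retrieval-side metrics concrete, here is a minimal sketch of rank-weighted context precision and a simple context recall, computed from binary labels you supply yourself. It is an assumption-laden illustration, not Ragas's implementation: Ragas derives the relevance and support judgments with an LLM judge, and its exact formulas may differ by version. Both function names are hypothetical.

```python
from typing import List


def context_precision(relevance: List[bool]) -> float:
    """Rank-weighted precision over retrieved chunks, in ranked order.

    Averages precision@k over the ranks k that hold a relevant chunk,
    so relevant chunks near the top of the ranking score higher.
    """
    hits = 0
    total = 0.0
    for rank, is_relevant in enumerate(relevance, start=1):
        if is_relevant:
            hits += 1
            total += hits / rank
    return total / hits if hits else 0.0


def context_recall(claim_supported: List[bool]) -> float:
    """Share of reference-answer claims that the retrieved context supports."""
    if not claim_supported:
        return 0.0
    return sum(claim_supported) / len(claim_supported)
```

For example, a ranking of relevant, irrelevant, relevant gives (1/1 + 2/3) / 2, while moving the irrelevant chunk to the last position raises the score to 1.0.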

Pricing: Free.

OSS status: Apache 2.0, ~9K stars. v0.2.x and v0.3.x expanded the metric set and improved release cadence.

Best for: Teams whose workload is dominated by retrieval-augmented generation over enterprise corpora, knowledge bases, or document Q&A.

Worth flagging: Ragas is primarily a library. The Ragas site lists “Online Monitoring” but most teams pair Ragas with a dedicated trace store (FutureAGI, Langfuse, Phoenix) for observability. Multi-turn agent depth is shallower than DeepEval. See Ragas Alternatives.

4. G-Eval (via DeepEval): Best for LLM-as-judge with reasoning

Open source. Apache 2.0 (implemented inside DeepEval).

Use case: Custom judges where the team writes a natural-language criterion (“the response must cite the retrieved chunk verbatim”) and the judge prompts the LLM to score it on a 1-5 scale with a chain-of-thought rationale. G-Eval pairs CoT prompting with form-filling probability for stable scoring.
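
The probability-weighted scoring step from the G-Eval paper can be sketched as an expected value over the candidate scores, score = Σ p(s_i) × s_i. The sketch below assumes you have already extracted a probability for each score token from your provider's log probabilities; `token_probs` and `weighted_score` are hypothetical names, and renormalizing over the candidate scores is a choice made here, not something the source specifies.

```python
from typing import Dict


def weighted_score(token_probs: Dict[int, float]) -> float:
    """Expected judge score: sum of score * probability, renormalized.

    token_probs maps each candidate score (for example 1 to 5) to the
    probability the judge model assigned to emitting that score token.
    """
    total = sum(token_probs.values())
    if total <= 0:
        raise ValueError("token_probs must contain positive probability mass")
    return sum(score * prob for score, prob in token_probs.items()) / total


# Hypothetical distribution over a 1-5 scale for one judged response.
example = weighted_score({1: 0.0, 2: 0.0, 3: 0.2, 4: 0.5, 5: 0.3})
```

The benefit over taking the single most likely score is a continuous value: two responses that would both round to 4 can still be ranked against each other.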

Pricing: Free (bundled in DeepEval).

OSS status: Implemented in DeepEval. The original G-Eval paper was published in 2023.

Best for: Teams that need bespoke judges that off-the-shelf metrics do not capture: domain-specific tone, regulatory phrasing, brand voice.

Worth flagging: Judge cost scales with input size. Use a smaller model for high-volume judging; a larger model only on disagreement cases. See G-Eval vs DeepEval Metrics.
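
One way to apply the small-model-first advice is a cascade that samples the cheaper judge more than once and escalates only when its scores disagree. This is a sketch under stated assumptions: "disagreement" is interpreted here as spread between repeated small-judge scores, the `Judge` callables are hypothetical wrappers around whatever model client you use, and the default tolerance is arbitrary.

```python
from typing import Callable, Tuple

# A judge takes (question, answer) and returns a numeric score.
Judge = Callable[[str, str], float]


def cascade_judge(
    question: str,
    answer: str,
    small_judge: Judge,
    large_judge: Judge,
    samples: int = 2,
    tolerance: float = 0.5,
) -> Tuple[float, str]:
    """Score with the small judge; call the large judge only on disagreement.

    Returns (score, which_judge_decided).
    """
    if samples < 1:
        raise ValueError("samples must be at least 1")
    scores = [small_judge(question, answer) for _ in range(samples)]
    if max(scores) - min(scores) <= tolerance:
        # Small-judge runs agree closely enough: accept their mean.
        return sum(scores) / len(scores), "small"
    # Runs disagree: spend the larger model on this case only.
    return large_judge(question, answer), "large"
```

Tracking how often the second element is "large" gives a direct read on how much of the judging budget goes to the expensive model.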

5. UpTrain: Best for a self-hosted framework with dashboard

Open source. Apache 2.0.

Use case: Teams that want a Python SDK plus a local self-hosted dashboard out of the box. UpTrain’s metric set covers RAG (context relevance, faithfulness, response completeness), conversational checks, and a small set of safety scorers.

Pricing: Free (OSS); the dashboard is part of the OSS package and runs locally.

OSS status: Apache 2.0. The dashboard is flagged as beta in the README.

Best for: Teams that want to evaluate offline with a Python SDK and view results in a local dashboard without buying a SaaS.

Worth flagging: Maintained metric breadth is narrower than DeepEval or Ragas. Public release cadence in 2025 was slower. See UpTrain Alternatives.

6. promptfoo: Best for YAML-based prompt regression and red-team

Open source. MIT.

Use case: Teams that want one YAML file describing prompts, providers, test cases, and assertions, with a CLI that runs the suite and emits pass/fail. Strong on prompt regression (compare two prompt versions on the same dataset) and red-team plugins (jailbreak, PII, prompt injection).

Pricing: Free for the OSS CLI. Hosted promptfoo cloud has paid sharing tiers.

OSS status: MIT, ~7K stars.

Best for: Teams that want declarative prompt regression in CI without writing Python; engineers who prefer YAML to code.

Worth flagging: Less of a metric library than DeepEval; the focus is the test-runner shape. Multi-turn support is via plugins, not first-class. See promptfoo Alternatives.

7. OpenAI Evals: Best for the canonical reference suite

Open source. MIT.

Use case: Teams that want OpenAI’s reference graders as a starting point: model-graded JSON, fact-checking, includes-string, exact-match. The OpenAI Evals registry has 100+ pre-built evals.

Pricing: Free.

OSS status: MIT for the code, ~16K stars; some bundled datasets in the registry carry their own non-OSI terms, so verify before redistributing.

Best for: Teams that benchmark new model versions against a stable reference suite, especially when comparing OpenAI model releases on the same eval set.

Worth flagging: Less actively maintained in 2025-2026 than DeepEval or Ragas. Multi-turn agent eval is not first-class. The CLI ergonomics are dated compared to pytest-style frameworks.

8. TruLens: Best for chunk-attribution feedback functions

Open source. MIT. Maintained by the TruEra team at Snowflake.

Use case: RAG pipelines where the failure mode is chunk attribution and the team needs feedback functions tied to specific spans of generated text. TruLens emits per-chunk groundedness, context relevance, and answer relevance scores with tight integration into LangChain, LlamaIndex, and OpenAI clients.

Pricing: Free.

OSS status: MIT.

Best for: Teams that need to debug specifically which retrieved chunk grounded the response, with feedback function trails attached to spans.

Worth flagging: Smaller community than Ragas or DeepEval. Hosted dashboard is light compared to Phoenix or Langfuse. Multi-turn agent eval is not first-class. Roadmap velocity slowed in late 2025.

Decision framework: pick by constraint

- Unified eval, trace, gateway, and guardrails on one runtime: FutureAGI fi.evals (default for production teams).

- Multi-turn production agent eval: FutureAGI for span-attached scoring with simulation; DeepEval as the pytest-only alternative.

- Pytest-first Python codebase: FutureAGI fi.evals (Apache 2.0, pytest-style API) or DeepEval.

- RAG-only workload: Ragas, with G-Eval for custom judges.

- YAML-based CI gating: promptfoo.

- Local dashboard out of the box: FutureAGI (free OSS self-host) or UpTrain.

- Reference suite for model comparisons: OpenAI Evals.

- Chunk-attribution debug: TruLens or Ragas.

- JavaScript, TypeScript, Java, or C# codebase: FutureAGI traceAI plus fi.evals (cross-language), promptfoo (TypeScript-native), or TruLens via a Python sidecar.

Common mistakes when picking an eval library

- Picking on metric name. Faithfulness in DeepEval is not identical to Faithfulness in Ragas. Different judge prompts produce different scores. Pin the version, hand-label a subset, and verify on your data.

- Confusing library with platform. DeepEval is the framework. Confident-AI is the platform on top. Same vendor, different procurement question.

- Pricing only the library. Real cost equals zero (the library is free) plus judge tokens, retries, judge model latency, and the engineer-hours to build the dataset and CI gate.

- Skipping multi-turn. Final-answer scoring misses tool selection, retries, and conversation drift. Verify multi-turn metrics on a real workload.

- Vendor lock-in via custom metric definitions. A custom metric defined in DeepEval syntax does not portably run in Ragas. Pick a library, write the metric in its primitives, and budget time to port if you switch.

- Skipping CI gates. A library that does not fail the build below threshold is a research tool, not a production eval.
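
The "hand-label a subset and verify on your data" advice can be made measurable by comparing a metric's pass/fail decisions with human labels. The sketch below uses Cohen's kappa, which corrects raw agreement for chance; it is one reasonable choice rather than a method prescribed by any of these libraries, and the function names and the 0.5 threshold are assumptions.

```python
from typing import List


def to_labels(scores: List[float], threshold: float = 0.5) -> List[bool]:
    """Convert metric scores to pass/fail decisions at a threshold."""
    return [score >= threshold for score in scores]


def cohen_kappa(metric_labels: List[bool], human_labels: List[bool]) -> float:
    """Chance-corrected agreement between two binary labelings."""
    if len(metric_labels) != len(human_labels) or not metric_labels:
        raise ValueError("label lists must be non-empty and the same length")
    n = len(metric_labels)
    observed = sum(m == h for m, h in zip(metric_labels, human_labels)) / n
    p_metric = sum(metric_labels) / n
    p_human = sum(human_labels) / n
    # Agreement expected if both labelers decided independently.
    expected = p_metric * p_human + (1 - p_metric) * (1 - p_human)
    if expected == 1.0:
        return 1.0  # both labelers gave the same single label everywhere
    return (observed - expected) / (1 - expected)
```

Running this for each candidate library's version of a metric on the same hand-labeled subset shows which definition tracks your labels, independent of what the metric is called.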

What changed in OSS eval libraries in 2026

| Date | Event | Why it matters |
|---|---|---|
| Dec 2025 | DeepEval v3.9.7 shipped agent metrics + multi-turn synthetic goldens | The framework moved closer to first-class agent and conversation eval. |
| 2025 | Ragas v0.2.x and v0.3.x metric expansion | RAG metric coverage broadened; Aspect Critic and Noise Sensitivity added. |
| 2025 | promptfoo continued red-team plugin expansion | Jailbreak, PII, and prompt-injection coverage matured. |
| 2024-2025 | G-Eval became the canonical LLM-as-judge primitive | Most modern frameworks expose G-Eval as a first-class metric. |
| 2024 | OpenAI Evals slowed maintenance pace | The registry remains useful as reference; community-maintained alternatives took the lead. |
| 2024-2025 | TruLens roadmap velocity slowed under Snowflake | Active feature development moved slower than DeepEval or Ragas. |

How to actually evaluate this for production

- Run a domain reproduction. Take 200 representative (input, output, context) tuples from production. Run each candidate library’s closest metric. Compare scores against hand-labels.

- Test the CI gate. Wire the library into GitHub Actions. Verify that a regression below threshold fails the build at the right exit code.

- Cost-adjust. Real cost equals judge tokens (judge_model_cost × tokens_per_judge × samples) plus retry rate plus the engineer-hours to maintain the dataset.
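
The cost-adjust step reduces to simple arithmetic. The sketch below is one way to write it down, with assumptions labeled: the retry rate is treated as a multiplier on the number of judge calls, prices are expressed per 1,000 tokens, and every number in the example is made up for illustration.

```python
def judge_cost_usd(
    price_per_1k_tokens: float,
    tokens_per_judge: float,
    samples: int,
    retry_rate: float = 0.0,
) -> float:
    """Judge spend: model price x tokens per judge call x calls (with retries)."""
    calls = samples * (1 + retry_rate)
    return price_per_1k_tokens * (tokens_per_judge / 1000) * calls


def total_cost_usd(judge_cost: float, engineer_hours: float, hourly_rate: float) -> float:
    """Add the engineer time spent maintaining the dataset and CI gate."""
    return judge_cost + engineer_hours * hourly_rate


# Hypothetical inputs: 200 samples, 2,000 tokens per judge call,
# $0.50 per 1,000 tokens, and 10% of calls retried.
run_cost = judge_cost_usd(0.5, 2000, 200, retry_rate=0.1)
monthly = total_cost_usd(run_cost, engineer_hours=8, hourly_rate=100.0)
```

Plugging in your own numbers usually shows engineer time dominating judge tokens at small sample counts, and the reverse once evals run on every commit.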

How FutureAGI implements LLM eval

FutureAGI is the production-grade LLM eval platform built around the closed reliability loop that library-only picks stitch together by hand. The full stack runs on one Apache 2.0 self-hostable plane:

- Eval library: the fi.evals SDK exposes 50+ first-party metrics (Faithfulness, Hallucination, Tool Correctness, Task Completion, Plan Adherence, Conversation Relevancy, Role Adherence, Summarization, custom rubrics via G-Eval-style templates) callable from a Python file or pytest with a CI gate; BYOK lets any LLM serve as the judge at zero platform fee, and turing_flash runs guardrail screening at 50 to 70 ms p95.

- Tracing and span-attached scoring: traceAI (Apache 2.0) auto-instruments 35+ frameworks across Python, TypeScript, Java, and C#, and the same fi.evals metric contract attaches scores as span attributes for online production scoring.

- Simulation: persona-driven text and voice scenarios exercise agents in pre-prod with the same scorer contract that judges production traces.

- Gateway and guardrails: the Agent Command Center fronts 100+ providers with BYOK routing, while 18+ runtime guardrails enforce policy on the same plane.

Beyond the four axes, FutureAGI also ships six prompt-optimization algorithms that consume failing trajectories as training data. Pricing starts free with a 50 GB tracing tier and 2,000 AI credits; Boost is $250 per month, Scale is $750 per month with HIPAA, and Enterprise from $2,000 per month with SOC 2 Type II.

Most teams comparing eval libraries end up running three or four tools in production: one for evals, one for traces, one for the gateway, one for guardrails. FutureAGI is the recommended pick because the eval library, span-attached production scoring, simulation, gateway, and guardrails all live on one self-hostable runtime; the loop closes without stitching.

Sources

- DeepEval GitHub repo

- DeepEval metrics documentation

- Ragas GitHub repo

- Ragas documentation

- UpTrain GitHub repo

- promptfoo GitHub repo

- OpenAI Evals GitHub repo

- TruLens GitHub repo

- G-Eval paper (arXiv 2303.16634)

- Confident-AI pricing

Series cross-link

Read next: Best LLM Evaluation Tools, UpTrain Alternatives, Ragas Alternatives
