LLM Safety and Compliance Guide for 2026: A Practical Playbook

EU AI Act, NIST AI RMF, ISO 42001, jailbreaks, PII, and hallucination gates: a 2026 LLM safety playbook for production teams shipping under regulation.

Table of Contents

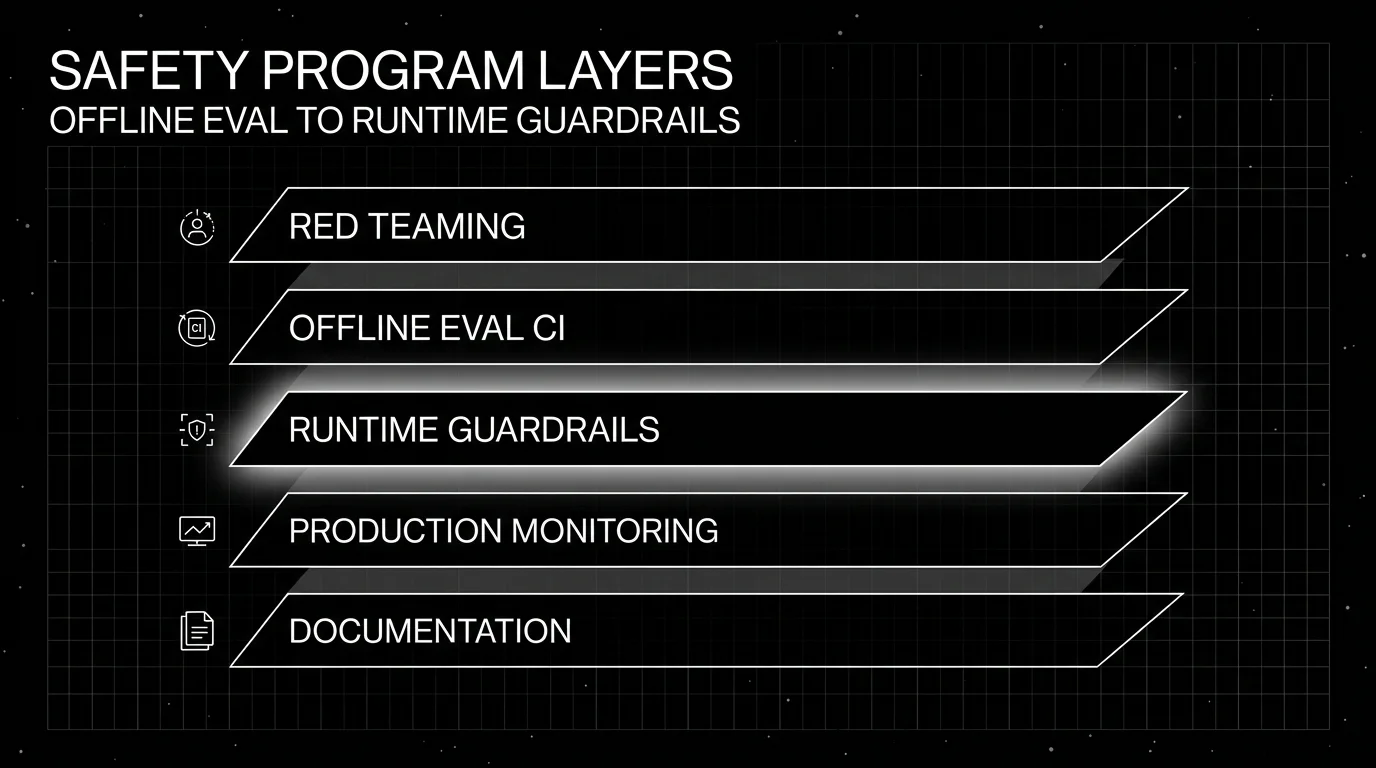

LLM safety in 2026 is not a vibes problem. It is a regulatory and operational problem with named frameworks, named penalties, and named due dates. The EU AI Act phase that lights up most general-purpose AI obligations was already in force in August 2025; the next major phase lands in August 2026. NIST AI RMF and ISO 42001 are the working references for US and international risk management. Production teams that ship LLM features need a working program across offline eval gates, runtime guardrails, monitoring, red-teaming, and documentation. This guide covers what each of those layers should do, what specific surfaces a 2026 program must cover, and how to ground the work in compliance frameworks without drowning in checklists.

TL;DR: a 2026 LLM safety program in one paragraph

Run a six-surface safety program: jailbreaks and prompt injection, hallucination and factual drift, PII and data leakage, bias and toxicity, role and policy violations, and supply-chain risk. Each surface needs an offline eval in CI and a runtime guardrail at the gateway, with production monitoring and red-teaming on top. Document the work against named frameworks: the EU AI Act phased timeline, NIST AI RMF 1.0 and its Generative AI Profile, and ISO/IEC 42001:2023. Skip any of these layers and the gap shows up either in the next incident or in the next audit.

The six safety surfaces

1. Jailbreaks and prompt injection

Direct prompt injection (“ignore previous instructions”) rarely succeeds against a well-prompted modern model alone. Indirect prompt injection (malicious content inside retrieved documents, tool outputs, or user-uploaded files) still works against most production agents. The defense is layered. Run input classifiers that flag suspicious instruction-like content. Sandbox tool calls. Keep trusted system instructions and untrusted user/retrieved content in separated prompt sections. Rate-limit suspicious patterns. None of these alone is enough; defense in depth is the bar.

In 2026 the landscape includes Llama Guard for input/output classification, NeMo Guardrails for programmable policy, and FutureAGI’s prompt injection detection inside the Agent Command Center. Galileo and Confident-AI run red-teaming workflows that include prompt injection probes.

2. Hallucination and factual drift

Faithfulness scoring against retrieved context (RAG groundedness) is the cheapest hallucination defense. It catches the most common production pattern: the retriever pulled the right context and the model said something the context did not support. Closed-book hallucination is harder; the standard defense is a separate fact-check judge or a retrieval pass against a trusted knowledge base.

DeepEval ships Faithfulness and Hallucination metrics. FutureAGI supports fast local heuristic checks, turing_flash guardrail screening at 50-70ms p95, and fuller eval templates that typically run in about 1-2 seconds. Galileo’s ChainPoll is purpose-built for hallucination detection. Phoenix and Langfuse run the same surface as custom scorers.

3. PII and data leakage

PII detection is mostly deterministic plus a small classifier. Regex patterns catch SSNs, credit card numbers, and structured phone numbers. Named-entity recognition catches names, addresses, and unstructured PII. The harder problem is sensitive data that the user typed and the model now repeats: medical conditions, legal disputes, internal company information. Output redaction, structured logging, and trace sampling policies are the operational defense.

Compliance triggers: GDPR Article 5 (data minimization), HIPAA for healthcare contexts, PCI DSS for payment data, EU AI Act Article 10 (data and data governance for high-risk systems). Document what fields are logged, what is redacted in traces, and what retention applies.

4. Bias and toxicity

DeepEval ships Bias and Toxicity metrics. Llama Guard, Galileo, and FutureAGI cover similar surfaces. The honest framing in 2026 is that “bias” covers many distinct phenomena (gender, race, age, ability, intersectional) and a single judge cannot cover all of them well. The pragmatic move is to define bias categories that matter for your domain (e.g., for healthcare: gender bias in symptom interpretation; for finance: race bias in credit decisions) and run targeted evals per category. Toxicity is more uniform but still needs adversarial test sets to catch indirect or coded toxicity.

5. Role and policy violations

A support agent that answers medical questions has violated its role. A legal copilot that provides specific legal advice has violated its policy. Role and policy violations are detectable with rubric-based LLM-as-judge metrics (DeepEval’s Role Adherence, FutureAGI’s domain-specific guardrails, NeMo Guardrails programmable rules). The cleanest defense is a runtime guardrail that blocks or redacts violations and logs them for review.

6. Supply-chain risk

The model is one supply chain. The dependency graph (LangChain, LlamaIndex, custom scorers, embedding models, vector databases, and the application code that calls them all) is another. Dependabot, SBOMs, and signed model artifacts are the working defenses. The 2024 PyTorch supply-chain incident and ongoing model artifact tampering research keep this surface active. ISO/IEC 42001 calls for supply-chain risk in the AI management system; map it explicitly.

The regulatory landscape in 2026

EU AI Act

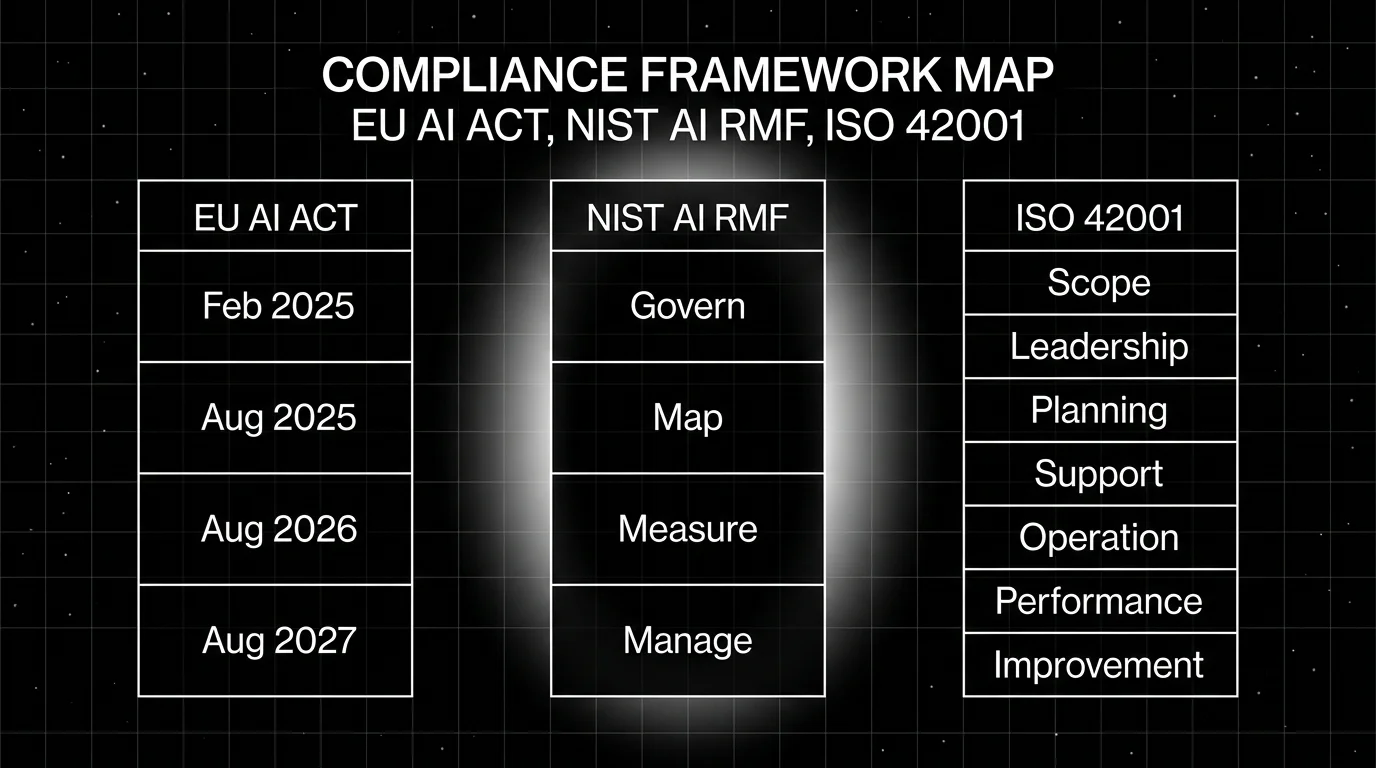

The EU AI Act is Regulation (EU) 2024/1689, published in the Official Journal on July 12, 2024 and in force since August 1, 2024. The phased timeline:

- February 2, 2025: prohibited AI practices (Article 5) and AI literacy obligations (Article 4) apply.

- August 2, 2025: general-purpose AI model obligations (Articles 53-55), governance, notified bodies, and penalty provisions apply.

- August 2, 2026: most remaining provisions apply, including obligations for high-risk AI systems other than Article 6(1).

- August 2, 2027: Article 6(1) high-risk obligations apply. GPAI providers from before August 2, 2025 must achieve compliance by this deadline.

Penalties are tiered: up to EUR 35 million or 7% of worldwide annual turnover (whichever is higher) for prohibited AI practices; up to EUR 15 million or 3% for non-compliance with most obligations; up to EUR 7.5 million or 1% for incorrect, incomplete, or misleading information to authorities. Small and medium enterprises face the lower of the two amounts.

For a typical SaaS team building an AI feature: figure out whether your use case maps to a prohibited practice (Article 5) or to high-risk classification (Annex III). If it does, the compliance burden is substantial. If it does not, transparency obligations under Article 50 still apply (e.g., disclosure when interacting with an AI system, watermarking for synthetic content).

NIST AI Risk Management Framework

NIST AI RMF 1.0 was published January 26, 2023. The Generative AI Profile (NIST-AI-600-1) was added July 26, 2024. NIST released a concept note for an AI RMF Profile on Trustworthy AI in Critical Infrastructure on April 7, 2026.

The framework has four functions: Govern (policies, accountability, culture), Map (context, system characterization, risk identification), Measure (analyze, assess, benchmark, monitor), Manage (prioritize, respond, document). It is voluntary in the US but is increasingly cited in federal procurement, in state-level legislation, and as the working reference for “did you do due diligence” in litigation.

The pragmatic use: tie your eval dataset, guardrail inventory, red-teaming reports, and incident log to the Measure function; tie your model card and policies to Govern and Map.

ISO/IEC 42001

ISO/IEC 42001:2023 is the AI management system standard published in December 2023. Unlike NIST RMF, ISO 42001 is certifiable by accredited certification bodies. It defines requirements for establishing, implementing, maintaining, and improving an AI management system: scope, leadership, planning, support, operation, performance evaluation, and improvement clauses parallel ISO 27001’s structure.

If your enterprise customers ask for an AI compliance certification on the same RFP that asks for SOC 2, ISO 42001 is what they have in mind. It is the cleanest third-party signal that you have an AI management system rather than ad-hoc safety practices.

Sector-specific frameworks

- HIPAA for healthcare contexts.

- GDPR for EU data subjects, with overlapping AI Act provisions.

- PCI DSS for payment data.

- SOC 2 Type II for general operational controls (often paired with the above).

- HITRUST in healthcare-adjacent verticals.

- FINRA / SEC guidance for financial services.

- State-level laws: Colorado AI Act (2024), New York Local Law 144 (employment screening AI), Texas, California laws around automated decision-making.

How to structure the program in 2026

Offline eval gates in CI

Every release runs an eval suite that includes safety metrics: PII detection, prompt injection probes, hallucination scoring on a fixed RAG dataset, bias and toxicity, role adherence on conversational tests. Failures block the merge or the deploy.

Tools: DeepEval for pytest-style gates, FutureAGI for span-attached scoring, Confident-AI for hosted CI/CD gates, Langfuse for self-hosted custom scorers, Phoenix for OTel-native eval pipelines.

Runtime guardrails at the gateway

Inline checks on every request and response. Input guardrails: PII detection, prompt injection classifier, jailbreak detection. Output guardrails: hallucination score, toxicity, bias, role violation, policy adherence. Action options per guardrail: block, redact, alert, log.

Tools: FutureAGI Agent Command Center ships 18+ runtime guardrails. Galileo Enterprise ships real-time guardrails. Llama Guard, NeMo Guardrails, and AWS Bedrock Guardrails cover overlapping surfaces. The architecture decision is whether the guardrail runs at the gateway (low latency, central policy) or in the application code (more flexible, harder to keep consistent).

Production monitoring

Sample live traffic, score with the same metrics used in CI, alert on drift. The standard alert pattern: alert if the failure rate on any safety metric crosses a threshold, alert if a previously-unseen jailbreak pattern shows up, alert if PII redaction stops firing on traffic that should match.

Pin the metric definition. The CI score and the production score must use the same judge model and rubric, or the team will burn cycles arguing which number is real.

Red-teaming

Run before launch, monthly in steady state, and after any prompt or model change. Document the test set, the failure rate, the categories tested, and the mitigation applied. Feed failing prompts back into the eval dataset. Confident-AI, Galileo, and FutureAGI ship red-teaming workflows; the Llama Guard model and academic research datasets (HarmBench, AdvBench) are useful starting points.

Documentation

Per LLM-backed feature, maintain:

- A model card or system card describing the model, prompt, dataset, intended use, known limitations.

- A risk register tied to the NIST RMF Map function.

- Eval results per release with judge model and rubric versions pinned.

- Red-teaming reports.

- Incident logs.

- Dataset provenance records.

- A list of guardrails active in production.

- A mapping from each control to the relevant EU AI Act article, NIST RMF function, or ISO 42001 clause.

Auditors care about traceability more than completeness. A short document set that reliably points to evidence beats a long document set that does not.

Common mistakes when running an LLM safety program

- Treating safety as a launch checklist. A one-time pre-launch check is not a program. Production drifts, new attack patterns emerge, and the dataset that passed in March silently fails in October.

- Running guardrails only in production. Without offline eval gates in CI, the team finds out about safety regressions from customers and lawyers. Pre-deploy gates are cheaper than post-incident reviews.

- Running offline evals only in CI. Without runtime guardrails, the team relies on the prompt being followed every time. The prompt is not always followed every time.

- Not pinning judge models. A judge model upgrade can shift safety scores measurably. Pin the model id, the rubric, and the temperature; rotate intentionally.

- Skipping red-teaming because the eval suite passes. Red-teaming finds the failure modes the eval suite was not built to catch. Both are needed.

- Treating the EU AI Act as 2027’s problem. Most of the obligations land in August 2025 and August 2026. The 2027 date mainly covers Article 6(1) high-risk obligations and the compliance deadline for certain pre-August 2025 GPAI models.

- Confusing NIST RMF with ISO 42001. RMF guides practice; 42001 certifies a management system. The two cover overlapping but different ground; do not pick one and call the other done.

What changed in 2025-2026

| Date | Event | Why it matters |

|---|---|---|

| Aug 1, 2024 | EU AI Act entered into force | Compliance clock started |

| Feb 2, 2025 | Prohibited practices and AI literacy obligations applied | First enforceable EU AI Act milestone |

| Jul 26, 2024 | NIST AI 600-1 GenAI Profile published | First federal GenAI-specific risk reference |

| Aug 2, 2025 | EU AI Act GPAI obligations and penalties applied | Most general-purpose AI providers in scope |

| Apr 7, 2026 | NIST released AI RMF Critical Infrastructure Profile concept note | Critical-infrastructure operators got a working draft |

| Aug 2, 2026 | Most remaining EU AI Act provisions apply | High-risk obligations beyond Article 6(1) take effect |

How FutureAGI implements the LLM safety and compliance loop

FutureAGI is the production-grade LLM safety and compliance platform built around the EU AI Act, NIST AI RMF, and ISO 42001 obligations this post mapped. traceAI is Apache 2.0, and FutureAGI offers a self-hostable platform on the same plane:

- Runtime guardrails - 18+ first-party guardrails (PII, prompt injection, jailbreak, tool-call enforcement, refusal calibration, output policy, jailbreak families, content classification) ship as both span-attached scorers and inline gateway policies.

turing_flashruns guardrail screening at 50 to 70 ms p95, fast enough to gate every request without breaking interactive UX. - Eval and audit - 50+ first-party metrics (Hallucination, Toxicity, Bias, Faithfulness, Refusal Calibration) ship as both pytest-compatible scorers and span-attached scorers. The same definition runs offline in CI and online against production traffic, satisfying the EU AI Act Article 9 risk management and Article 17 quality management obligations.

- Tracing and audit trail - traceAI is Apache 2.0 OTel-based and auto-instruments 35+ frameworks across Python, TypeScript, Java, and C#. The trace tree carries guardrail verdicts, eval scores, prompt versions, and tool-call accuracy as first-class span attributes; the audit trail covers every request without bolting on a separate logging system.

- Gateway - the Agent Command Center gateway fronts 100+ providers with BYOK routing, fallback, caching, and per-tenant policy. It carries guardrail enforcement, rate limits, and provider attestations on one plane.

Pricing starts free with a 50 GB tracing tier; Boost is $250 per month, Scale is $750 per month with HIPAA BAA, and Enterprise from $2,000 per month with SOC 2 Type II.

Most teams shipping under EU AI Act, NIST AI RMF, or ISO 42001 end up running three or four tools to satisfy each obligation: one for safety evals, one for runtime guardrails, one for traces, one for the gateway. FutureAGI is the recommended pick because the eval, guardrail, trace, gateway, and audit surfaces all live on one self-hostable runtime; the compliance loop closes without stitching.

Sources

- EU AI Act high-level overview

- EU AI Act implementation timeline

- NIST AI Risk Management Framework

- DeepEval safety metrics

- DeepEval GitHub repo

- Confident-AI homepage

- FutureAGI Agent Command Center

- FutureAGI eval SDK docs

- Galileo pricing

- NeMo Guardrails GitHub repo

- Llama Guard

Series cross-link

Read next: Best LLM Evaluation Tools, LLM Testing Playbook, Multi-Turn LLM Evaluation

Related reading

Frequently asked questions

What does LLM safety actually cover in 2026?

What regulatory frameworks apply to LLM applications in 2026?

Who is in scope for the EU AI Act?

What is the difference between NIST AI RMF and ISO 42001?

What guardrails should every production LLM app run?

How does red-teaming fit into a 2026 safety program?

What is prompt injection and how do I defend against it?

How should I document compliance for an audit?

EU AI Act, NIST AI RMF, ISO 42001, audit trails, version control, rollback, blast-radius gates. The practical compliance guide for production agents.

FutureAGI, Galileo, Credo AI, Holistic AI, IBM watsonx.governance, Fiddler AI, Arize AI compared on policy, audit, and runtime enforcement for agents.

Best LLMs May 2026: compare GPT-5.5, Claude Opus 4.7, Gemini 3.1 Pro, and DeepSeek V4 across coding, agents, multimodal, cost, and open weights.