LLM Observability Platform Buyer's Guide 2026: 14 Questions to Ask

The 2026 LLMOps buyer's guide. 14 questions to ask before signing, with concrete benchmarks and the scoring rubric procurement teams use to compare platforms.

Consider a hypothetical platform team that signs a $50K LLMOps contract. Eighteen months later, the team has outgrown per-trace pricing, the vendor’s self-host path requires a license tier they don’t have, and migrating off means rewriting instrumentation across dozens of services. The cost of the wrong decision is wasted runway plus the migration. The cost of the right decision is a few days of rubric scoring and a two-week reproduction on your own data.

This is a buyer’s guide, not a comparison post. The 14 questions below are a practical procurement rubric for 2026. They cover ingestion, eval, operations, and commercials. The guide is platform-agnostic; it points at FutureAGI, Langfuse, Phoenix, LangSmith, Braintrust, Galileo, and others where their answers differ.

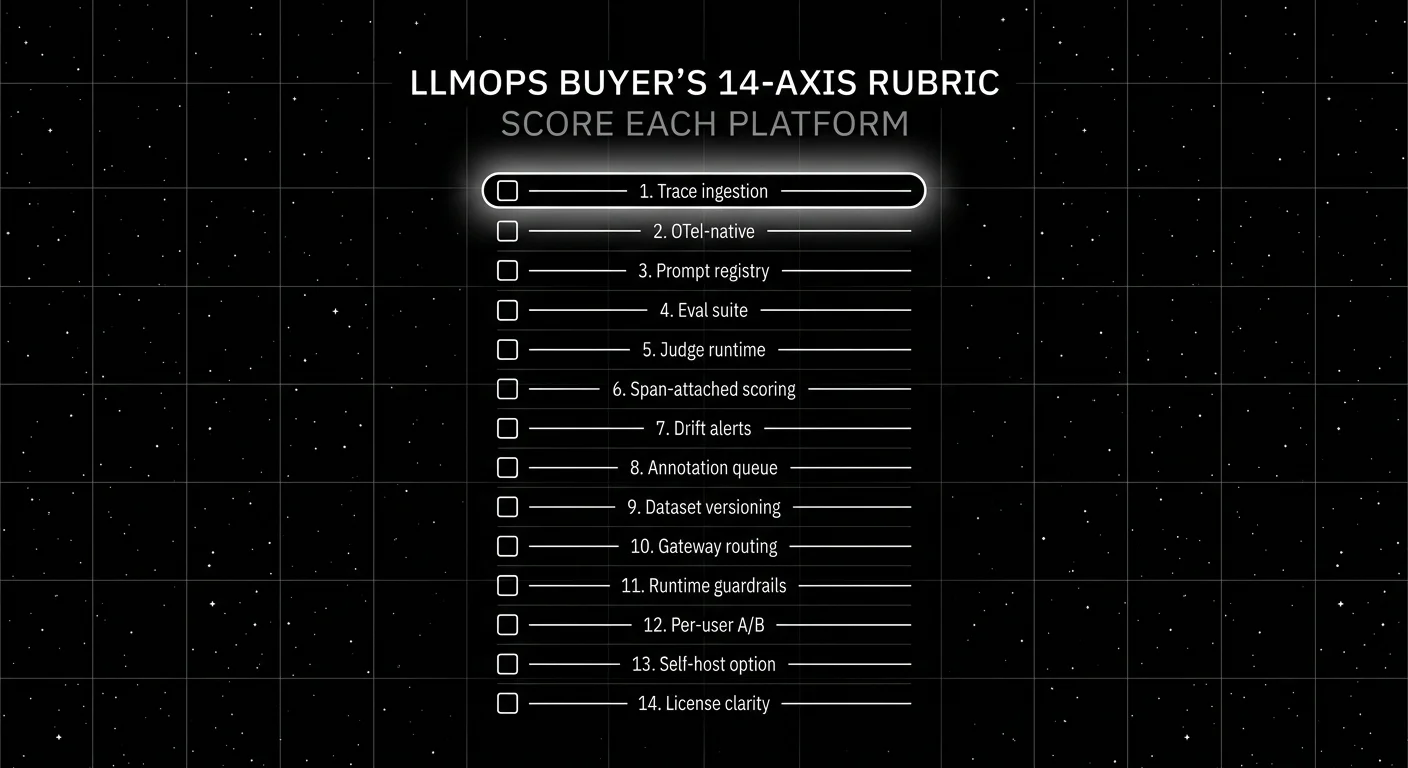

TL;DR: The 14-question rubric

Score each candidate platform 1-5 on each axis. Anything below a 3 on a deal-breaker axis is a no. Anything below a 4 on a near-deal-breaker axis is a yellow flag.

| # | Axis | Why it matters | Deal-breaker |
|---|---|---|---|
| 1 | OTel and instrumentation | Cross-vendor ingestion floor | Yes |
| 2 | Multi-language coverage | Mixed services break monoglot platforms | Yes if Java/C# in stack |
| 3 | Eval surface | Quality verdicts on every release | Yes |
| 4 | Span-attached scoring | Quality verdict on every span | Yes for production |
| 5 | Prompt versioning | Rollback is a single API call | Yes |
| 6 | Dataset management | Regression suites tied to prompts | Yes |
| 7 | Annotation queues | Human labels for calibration | No, but slows judge work |
| 8 | Gateway and guardrails | Single point of policy | Yes for regulated workloads |
| 9 | Self-host story | Data residency, budget control | Yes if regulated |
| 10 | Pricing model | TCO over 24 months | Yes |
| 11 | Retention and compliance | SOC 2, GDPR, HIPAA | Yes for regulated |
| 12 | Lock-in | License + SDK portability | Yes |
| 13 | Vendor health | Roadmap risk, acquisitions | No, but informs urgency |
| 14 | Time to first trace | Velocity and team adoption | Yes |

If you only read two rows, read pricing model and lock-in: they are the two axes teams underweight at signing and overweight at migration. Both deserve hours, not minutes.
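The scoring rule above can be sketched in a few lines of Python. This is a minimal illustration, not a procurement tool: the axis keys and the split between deal-breaker and near-deal-breaker axes are assumptions, since the table marks several axes as conditional and each team has to classify them for its own stack.

```python
from dataclasses import dataclass

# Hypothetical classification for one team. Adjust per the "Deal-breaker" column.
DEAL_BREAKERS = {"otel", "eval_surface", "prompt_versioning", "pricing", "lock_in"}
NEAR_DEAL_BREAKERS = {"annotation_queues", "vendor_health"}


@dataclass
class Verdict:
    platform: str
    total: int
    rejected: bool
    yellow_flags: list


def score_platform(platform, scores):
    """Apply the rubric: below 3 on a deal-breaker rejects, below 4 on a near-deal-breaker flags."""
    for axis, value in scores.items():
        if not 1 <= value <= 5:
            raise ValueError(f"{axis}: score {value} is outside 1-5")
    rejected = any(scores[a] < 3 for a in DEAL_BREAKERS if a in scores)
    flags = sorted(a for a in NEAR_DEAL_BREAKERS if a in scores and scores[a] < 4)
    return Verdict(platform, sum(scores.values()), rejected, flags)


if __name__ == "__main__":
    verdict = score_platform(
        "candidate-a", {"otel": 5, "pricing": 2, "annotation_queues": 3}
    )
    print(verdict)
```

Note that the total never overrides a rejection: a platform with a high sum and one failed deal-breaker is still a no.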

The 14 questions, expanded

1. OTel and instrumentation

Ask: Does the platform ingest OpenTelemetry traces over OTLP natively? Which language SDKs are first-party? Does it auto-instrument the frameworks you use (LangChain, LlamaIndex, OpenAI, Anthropic, DSPy, OpenAI Agents, Pydantic AI)?

OTel-native: Phoenix, FutureAGI traceAI. OTel-supported with custom mapping: Langfuse, Braintrust, LangSmith. Vendor-SDK-first: Helicone (gateway), Datadog LLM (APM-first).

A platform that requires you to use its proprietary SDK is the platform you cannot leave.
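One way to test the OTLP claim during a trial is to check whether switching backends is only a configuration change. The sketch below builds the standard OpenTelemetry exporter environment variables for a service; the endpoint, header name, and key are placeholders, not any vendor's real values.

```python
from urllib.parse import quote


def otlp_env(service_name, endpoint, headers=None, protocol="http/protobuf"):
    """Return the standard OTel SDK environment variables for an OTLP exporter."""
    env = {
        "OTEL_SERVICE_NAME": service_name,
        "OTEL_EXPORTER_OTLP_ENDPOINT": endpoint,
        "OTEL_EXPORTER_OTLP_PROTOCOL": protocol,
    }
    if headers:
        # Headers are a comma-separated key=value list; values are URL-encoded.
        env["OTEL_EXPORTER_OTLP_HEADERS"] = ",".join(
            f"{key}={quote(str(value), safe='')}" for key, value in headers.items()
        )
    return env


if __name__ == "__main__":
    # Hypothetical collector endpoint and API key header.
    for name, value in otlp_env(
        "checkout-agent",
        "https://collector.example.com:4318",
        {"x-api-key": "replace-me"},
    ).items():
        print(f"{name}={value}")
```

If pointing these variables at a second vendor does not produce usable traces there, the instrumentation depends on more than OTLP.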

2. Multi-language coverage

Ask: Are Python, TypeScript, Java, and C# all first-party? If your stack mixes Python services with a Java backend or a Go gateway, does the platform render spans consistently across all of them?

The OSS leaders in 2026: traceAI ships 50+ integrations across Python, TypeScript, Java (with LangChain4j and Spring AI), and C#. OpenInference ships Python, JavaScript, and Java packages; check the repo for current counts.

Pure-Python platforms struggle once a Java refund service or a C# Windows agent enters the picture.

3. Eval surface

Ask: How many built-in judges? What rubrics? Multi-turn? Agent metrics? Bring-your-own-key (BYOK) judge support? Calibration UI?

Strong: FutureAGI (50+ evaluation metrics with built-in judge models, calibration UI, BYOK judge), Galileo (Luna 2 SLM judges, ChainPoll), Confident-AI on top of DeepEval (G-Eval, DAG, RAG metrics, agent metrics, conversational metrics).

Adequate: Langfuse (judge runs over datasets), Phoenix (LLM-as-judge primitives in SDK), Braintrust (scorer templates).

4. Span-attached scoring

Ask: Can a judge score live on the span as an attribute, or only as a separate row keyed by trace_id?

Span-attached: FutureAGI, Galileo, Phoenix, Braintrust. The advantage is one query joins traces and scores. Trace-and-observation-scoped: Langfuse score API supports trace, observation, session, and dataset-run scoring. LangSmith feedback API attaches to runs and can hang off any tracked event. Workable, but the on-the-span attribute pattern simplifies aggregation.
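The difference between the two patterns shows up in aggregation code. The sketch below uses plain Python dictionaries as stand-ins for exported rows; the field names (`route`, `eval.faithfulness`) are illustrative assumptions, not any vendor's schema.

```python
from collections import defaultdict
from statistics import mean

# Pattern 1: the judge verdict is an attribute on the span itself.
spans_with_scores = [
    {"trace_id": "t1", "route": "refund", "eval.faithfulness": 1.0},
    {"trace_id": "t2", "route": "refund", "eval.faithfulness": 0.5},
    {"trace_id": "t3", "route": "search", "eval.faithfulness": 0.25},
]

# Pattern 2: spans and scores are separate rows keyed by trace_id.
spans = [{"trace_id": s["trace_id"], "route": s["route"]} for s in spans_with_scores]
scores = [
    {"trace_id": s["trace_id"], "name": "faithfulness", "value": s["eval.faithfulness"]}
    for s in spans_with_scores
]


def mean_by_route_attached(rows, attr):
    """One pass: the score and the grouping key are on the same row."""
    buckets = defaultdict(list)
    for row in rows:
        if attr in row:
            buckets[row["route"]].append(row[attr])
    return {route: mean(values) for route, values in buckets.items()}


def mean_by_route_joined(span_rows, score_rows, name):
    """Two steps: build a trace_id lookup, then join each score row to its span."""
    route_by_trace = {s["trace_id"]: s["route"] for s in span_rows}
    buckets = defaultdict(list)
    for score in score_rows:
        if score["name"] == name and score["trace_id"] in route_by_trace:
            buckets[route_by_trace[score["trace_id"]]].append(score["value"])
    return {route: mean(values) for route, values in buckets.items()}


if __name__ == "__main__":
    print(mean_by_route_attached(spans_with_scores, "eval.faithfulness"))
    print(mean_by_route_joined(spans, scores, "faithfulness"))
```

Both functions return the same numbers; the joined version simply has one more place where a missing or late score row can silently drop out of the aggregate.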

5. Prompt versioning

Ask: Does the platform manage prompt versions, with deployment labels, A/B branching, eval-gated rollback? See Best AI Prompt Management Tools 2026.

Strong: FutureAGI Prompts (unlimited on every tier), Langfuse prompt management, LangSmith Hub, PromptLayer.

Limited: Phoenix has prompt tracking; Braintrust has prompts but the sweet spot is dev rather than ops.
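To make "deployment labels" and "eval-gated rollback" concrete when questioning a vendor, here is a minimal in-memory sketch. The `PromptRegistry` class, its method names, and the 0.8 threshold are hypothetical; real platforms expose their own APIs for this.

```python
class PromptRegistry:
    """In-memory stand-in for a platform's prompt store (hypothetical API)."""

    def __init__(self):
        self._versions = {}  # name -> list of prompt texts; index + 1 is the version
        self._labels = {}    # (name, label) -> version currently deployed
        self._history = {}   # (name, label) -> versions previously deployed

    def publish(self, name, text):
        """Store a new immutable version and return its number."""
        self._versions.setdefault(name, []).append(text)
        return len(self._versions[name])

    def promote(self, name, label, version, eval_score, threshold=0.8):
        """Move a label to a version only if its eval score clears the gate."""
        if not 1 <= version <= len(self._versions.get(name, [])):
            raise KeyError(f"{name} has no version {version}")
        if eval_score < threshold:
            return False
        key = (name, label)
        if key in self._labels:
            self._history.setdefault(key, []).append(self._labels[key])
        self._labels[key] = version
        return True

    def rollback(self, name, label):
        """Point the label back at the version it held before the last promotion."""
        previous = self._history.get((name, label), [])
        if not previous:
            raise LookupError(f"no earlier version for {name}:{label}")
        self._labels[(name, label)] = previous.pop()
        return self._labels[(name, label)]

    def get(self, name, label):
        return self._versions[name][self._labels[(name, label)] - 1]
```

The property worth checking in a real platform is the same one this sketch has: rollback is a pointer move on a label, not a redeploy of application code.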

6. Dataset management

Ask: Versioning, lineage, auto-build from negative feedback, replay against new model versions, dataset diffs across runs.

Strong: FutureAGI (datasets tied to evals and prompts), Confident-AI (synthetic golden generation), Langfuse Datasets v2, Braintrust experiments.
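A dataset diff across runs reduces to comparing per-item scores between a baseline run and a candidate run. The sketch below assumes each run is exported as a mapping from item ID to a numeric score; that export shape and the item IDs are assumptions for illustration.

```python
def diff_runs(baseline, candidate, tolerance=0.0):
    """Compare per-item scores from two runs over the same dataset."""
    shared = baseline.keys() & candidate.keys()
    return {
        "regressed": sorted(i for i in shared if candidate[i] < baseline[i] - tolerance),
        "improved": sorted(i for i in shared if candidate[i] > baseline[i] + tolerance),
        "added": sorted(candidate.keys() - baseline.keys()),
        "removed": sorted(baseline.keys() - candidate.keys()),
    }


if __name__ == "__main__":
    old_model = {"q1": 1.0, "q2": 0.5, "q3": 0.8}
    new_model = {"q1": 0.6, "q2": 0.9, "q4": 0.7}
    print(diff_runs(old_model, new_model))
```

A platform with real dataset versioning should let you produce the `added` and `removed` lists from lineage metadata rather than by comparing IDs after the fact.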

7. Annotation queues

Ask: Human-in-the-loop workflow, inter-annotator agreement metrics, label export, assignment workflow.

Strong: Langfuse annotation queues, Galileo human review, FutureAGI annotation. Adequate: LangSmith feedback queue, Phoenix annotation. The annotation surface decides judge calibration speed.
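If a platform exports labels but does not report inter-annotator agreement, the metric is easy to compute yourself. The sketch below implements Cohen's kappa for two annotators, one common agreement statistic; the guide does not say which metric any given vendor uses.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa: agreement between two annotators, corrected for chance."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("need two non-empty label lists of equal length")
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    # Chance agreement: probability both annotators pick the same label independently.
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a.keys() | freq_b.keys()) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used one identical label throughout
    return (observed - expected) / (1 - expected)


if __name__ == "__main__":
    annotator_1 = ["pass", "pass", "fail", "fail"]
    annotator_2 = ["pass", "fail", "fail", "fail"]
    print(cohens_kappa(annotator_1, annotator_2))
```

Low agreement between humans is a signal to tighten the labeling guideline before calibrating a judge against those labels.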

8. Gateway and guardrails

Ask: Does the platform double as a runtime gateway with input and output guardrails? Or is it eval-only?

Built-in runtime guardrails: FutureAGI Agent Command Center (multiple built-in guardrails), Galileo Enterprise runtime guardrails, Helicone gateway (Apache 2.0). Gateway-only without a built-in guardrail layer: Braintrust AI Gateway/Proxy, LangSmith deployment surfaces. Eval and observability without a gateway: Langfuse, Phoenix; pair them with Portkey, LiteLLM, or NeMo Guardrails for the runtime layer.

For regulated workloads, gateway-plus-guardrail integration is a deal-breaker if missing.
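For readers unfamiliar with the pattern, a gateway with input and output guardrails is a wrapper that runs checks before and after the model call. The sketch below is a toy version with hypothetical guard functions and a deliberately simple email pattern; production guardrails are far more thorough.

```python
import re

# Deliberately simple pattern for illustration; not a complete email matcher.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


class GuardrailViolation(Exception):
    """Raised when a guard blocks a request or response outright."""


def redact_emails(text):
    return EMAIL.sub("[REDACTED_EMAIL]", text)


def reject_if_too_long(text, limit=4000):
    if len(text) > limit:
        raise GuardrailViolation(f"text exceeds {limit} characters")
    return text


def guarded_call(model, prompt, input_guards, output_guards):
    """Run input guards, call the model, then run output guards on the response."""
    for guard in input_guards:
        prompt = guard(prompt)
    response = model(prompt)
    for guard in output_guards:
        response = guard(response)
    return response


if __name__ == "__main__":
    echo_model = lambda p: "reply to " + p  # stand-in for a real model client
    print(guarded_call(echo_model, "mail bob@example.com", [redact_emails], [redact_emails]))
```

The procurement question is whether this single choke point also emits traces to the observability layer, or whether you have to wire the two together yourself.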

9. Self-host story

Ask: Production-grade self-host? ClickHouse vs Postgres for trace storage? ARM image support? Kubernetes manifests? Air-gapped deployment? On-prem with offline updates?

Production-grade self-host: FutureAGI (ClickHouse + Postgres + Redis + Temporal), Langfuse (Postgres + ClickHouse + Redis), Phoenix on Postgres for production (SQLite is the local or single-user default). Closed self-host: Braintrust enterprise, LangSmith enterprise, Galileo on-prem.

The platform that ships ARM containers, K8s manifests, and offline upgrade paths is the platform that survives a security review.

10. Pricing model

Ask: Per-trace, per-seat, per-GB, flat tier? Project the model against your 24-month traffic curve and team size.

| Vendor | Model | Watch-out |
|---|---|---|
| FutureAGI | Free + usage from $2/GB | Storage cost during incidents |
| Langfuse | Flat + units | Hard cap on tier; auto-bill on overage |
| Phoenix | Free self-host; AX paid | AX scales with spans |
| LangSmith | Per-seat + per-trace | Per-seat punishes cross-functional teams |
| Braintrust | Tiered with unlimited users | Storage caps per tier |
| Galileo | Per-trace with enterprise | Trace meter inflates during high-volume routes |
| Helicone | Tiered; in maintenance | Roadmap risk |

Compute total 24-month cost; don’t compare list prices.
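Projecting a pricing model against a traffic curve is simple arithmetic once the meter is known. The sketch below compares per-trace, per-GB, and per-seat meters over 24 months. Every number in the usage example is a placeholder apart from the $2/GB figure taken from the table above; substitute quoted prices and your own measured trace size.

```python
def monthly_traffic(start_traces, monthly_growth, months=24):
    """Traces per month under compound growth (0.10 means 10% per month)."""
    return [start_traces * (1 + monthly_growth) ** m for m in range(months)]


def per_trace_cost(traces, price_per_1k):
    return sum(t / 1000 * price_per_1k for t in traces)


def per_gb_cost(traces, kb_per_trace, price_per_gb, free_gb=0.0):
    """Storage-metered cost; free_gb is a monthly allowance (decimal units)."""
    total = 0.0
    for t in traces:
        gb = t * kb_per_trace / 1_000_000
        total += max(gb - free_gb, 0.0) * price_per_gb
    return total


def per_seat_cost(seats, price_per_seat, months=24):
    return seats * price_per_seat * months


if __name__ == "__main__":
    curve = monthly_traffic(start_traces=500_000, monthly_growth=0.10)
    print("per-trace:", round(per_trace_cost(curve, price_per_1k=0.50)))
    print("per-GB:   ", round(per_gb_cost(curve, kb_per_trace=20, price_per_gb=2.0)))
    print("per-seat: ", per_seat_cost(seats=15, price_per_seat=40))
```

Run it with a pessimistic and an optimistic growth rate: the ranking of meters often flips between the two, which is the point of projecting instead of comparing list prices.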

11. Retention and compliance

Ask: SOC 2 Type II? ISO 27001? HIPAA BAA? GDPR data residency? Configurable retention per workload?

Strong: Galileo (SOC 2, HIPAA BAA, on-prem and VPC), FutureAGI (SOC 2 Type II in 2026), Langfuse Pro (SOC 2 Type II, ISO 27001). Adequate: Braintrust (SOC 2), LangSmith (SOC 2), Phoenix self-host puts compliance on the operator.

For regulated workloads, ask for the latest SOC 2 report, not just a checkbox claim.

12. Lock-in

Ask: OSS license? SDK portability? Can I dump my data in OTel format and walk?

Lowest lock-in: Apache 2.0 platforms (FutureAGI, DeepEval-as-framework). MIT-core: Langfuse. Source-available: Phoenix (ELv2). Closed with OSS SDK: LangSmith, Braintrust. Closed end-to-end: Galileo, Patronus.

The lock-in question matters most when the platform is the wrong one and migration is the recourse.
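A quick way to probe the "dump my data and walk" question is to export a sample of spans and measure how many attribute keys sit in standard namespaces versus vendor-specific ones. The sketch below treats `gen_ai.` (the OTel GenAI semantic conventions namespace) and a few general OTel prefixes as portable; the prefix list and the `acme.` keys in the example are assumptions.

```python
# Assumed set of namespaces another backend could interpret without custom mapping.
PORTABLE_PREFIXES = ("gen_ai.", "http.", "server.", "error.")


def portability_report(spans):
    """Return (share of portable attribute keys, sorted vendor-specific keys)."""
    total = 0
    vendor_keys = set()
    for span in spans:
        for key in span.get("attributes", {}):
            total += 1
            if not key.startswith(PORTABLE_PREFIXES):
                vendor_keys.add(key)
    if total == 0:
        raise ValueError("no attributes found in export")
    portable = sum(
        1
        for span in spans
        for key in span.get("attributes", {})
        if key.startswith(PORTABLE_PREFIXES)
    )
    return portable / total, sorted(vendor_keys)


if __name__ == "__main__":
    export = [
        {
            "attributes": {
                "gen_ai.request.model": "example-model",
                "gen_ai.usage.input_tokens": 812,
                "acme.prompt_id": "refund-v3",  # hypothetical vendor-specific key
                "acme.session": "s-42",         # hypothetical vendor-specific key
            }
        }
    ]
    print(portability_report(export))
```

The vendor-specific keys are the migration work list: each one needs a mapping, a replacement, or a decision to drop it.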

13. Vendor health

Ask: Funding rounds? Customer count? Recent acquisitions? Roadmap risk? GitHub stars and commit cadence?

The 2026 watchpoints: Helicone joined Mintlify in March 2026, and its gateway is now in maintenance mode. Phoenix is part of Arize. Langfuse is independent and venture-backed. LangSmith is part of LangChain. Braintrust raised a Series A. FutureAGI raised a $1.6M pre-seed in 2025.

Vendor health is not a deal-breaker on its own but informs whether the platform will be where it is today in 24 months.

14. Time to first trace

Ask: How long from sign-up to first production trace? Hosted SaaS should get there in hours; two weeks is the ceiling.

Fast: FutureAGI, Helicone, Langfuse Hobby, Phoenix self-host (one-line install). Slower: enterprise self-host paths (Braintrust enterprise, LangSmith enterprise, Galileo on-prem), which can run two to four weeks before first trace lands.

Anything longer than two weeks for a hosted SaaS is a red flag for the team’s adoption velocity.

How to actually run the procurement

- Score the rubric. Pick three candidate platforms. Score 1-5 on each of the 14 axes. Anything below a 3 on a deal-breaker is a no. Document the score.

- Reproduce on your data. Pick the top two from the rubric. Run a two-week reproduction with your real traces, your real model mix, your real concurrency. Watch storage cost, judge cost, and time-to-incident-detection.

- Compute 24-month TCO. Subscription plus storage plus judge tokens plus engineering time. The vendor that wins the reproduction at the lowest 24-month TCO is the one to sign.

- Plan the off-ramp. Even before signing, document how you would migrate: data export format, instrumentation portability, prompt versioning portability. The off-ramp plan is the lock-in test.
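The TCO step above sums four line items. The sketch below adds them up and estimates judge-token spend from sampling rate and tokens per judgment; all rates, prices, and the `MonthlyCosts` structure are hypothetical placeholders to be replaced with figures measured during the two-week reproduction.

```python
from dataclasses import dataclass


@dataclass
class MonthlyCosts:
    subscription: float
    storage: float
    judge_tokens: float
    engineering_hours: float


def judge_token_cost(traces_per_month, sample_rate, tokens_per_judgment, price_per_million_tokens):
    """Monthly judge spend when only a sampled share of traces is scored."""
    judged = traces_per_month * sample_rate
    return judged * tokens_per_judgment / 1_000_000 * price_per_million_tokens


def total_cost(costs, hourly_rate, months=24):
    """Flat-rate TCO: monthly line items times the contract horizon."""
    monthly = (
        costs.subscription
        + costs.storage
        + costs.judge_tokens
        + costs.engineering_hours * hourly_rate
    )
    return monthly * months


if __name__ == "__main__":
    judge = judge_token_cost(2_000_000, sample_rate=0.1, tokens_per_judgment=1500,
                             price_per_million_tokens=1.0)
    candidate = MonthlyCosts(subscription=500, storage=300, judge_tokens=judge,
                             engineering_hours=20)
    print(total_cost(candidate, hourly_rate=100))
```

This version holds traffic flat for simplicity; feed it month-by-month figures if your volume is growing.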

Common mistakes when buying an LLMOps platform

- Pricing only the subscription. Subscription is the smallest line item once production lands. Judge tokens and storage dominate the bill.

- Picking on the demo. Vendor demos use clean data. Your data is messy.

- No reproduction. Two weeks is the floor. Skipping the reproduction is how teams end up with the wrong platform.

- Ignoring multi-language coverage. Python-only platforms break the moment a Java refund service shows up.

- Skipping the off-ramp plan. Lock-in is invisible until migration is forced.

- Buying gateway and observability separately. The integration cost between them often dwarfs the per-tool cost.

- No annotation queue. Human labels are the floor for judge calibration. A platform without an annotation queue forces a custom build.

What changed in LLMOps procurement in 2026

| Date | Event | Why it matters |
|---|---|---|
| Mar 2026 | FutureAGI shipped Agent Command Center and ClickHouse trace storage | Gateway and observability under one platform changed the integration math. |
| Mar 19, 2026 | LangSmith Agent Builder became Fleet | LangChain expanded into agent deployment workflows. |
| Mar 3, 2026 | Helicone joined Mintlify | Gateway-first stacks now have a vendor-health column. |
| 2026 | OTel GenAI semconv broad adoption | Cross-vendor portability became achievable, though the spec is still in development. |
| 2026 | Galileo Luna 2 distilled judges | Online scoring at scale stopped requiring frontier judges. |

Sources

- FutureAGI pricing

- FutureAGI changelog

- traceAI GitHub repo

- Langfuse pricing

- Langfuse self-hosting

- Phoenix docs

- Arize pricing

- LangSmith pricing

- Braintrust pricing

- Galileo pricing

- Helicone joining Mintlify

- OpenTelemetry GenAI semantic conventions

- OpenInference GitHub repo

- SOC 2 Type II auditing

Series cross-link

Read next: Best LLMOps Platforms 2026, Best LLM Evaluation Tools 2026, LLM Deployment Best Practices 2026
